Laplace Variational Inference for Dirichlet Process Mixtures of Marked Poisson Point Processes

Pith reviewed 2026-05-12 04:19 UTC · model grok-4.3

The pith

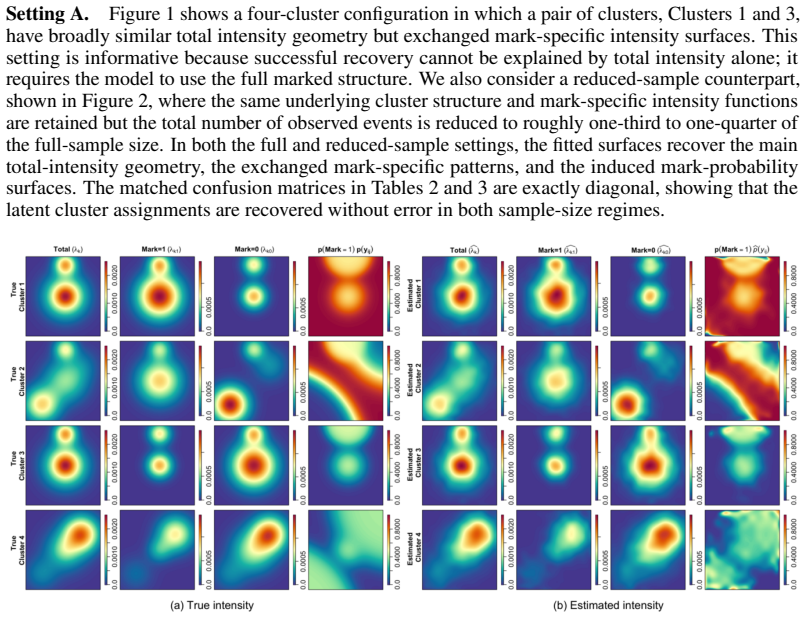

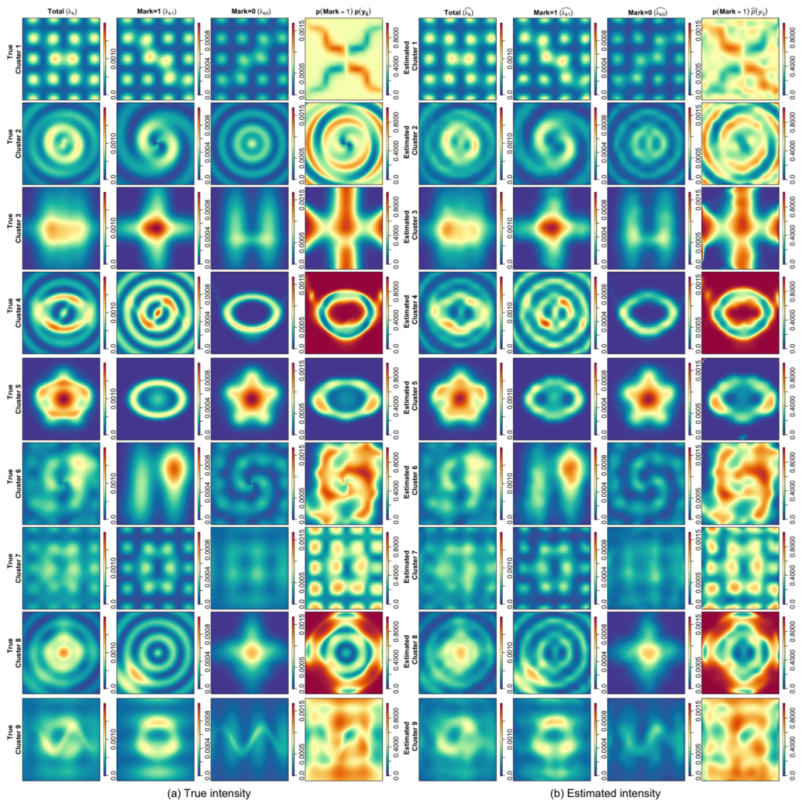

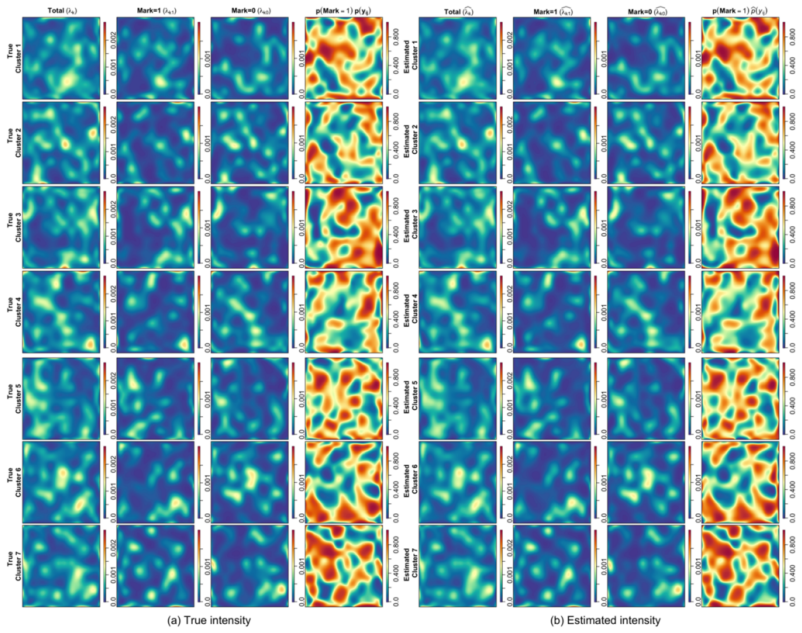

A Bayesian nonparametric model for marked Poisson point processes uses Dirichlet process mixtures and a constrained Laplace variational algorithm to jointly infer clusters, their number, and continuous mark-specific intensity surfaces.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Dirichlet process mixtures of marked Poisson point processes, equipped with a squared-link intensity representation and a variational Bayes algorithm that applies constrained Laplace approximation to the nonconjugate basis-coefficient block, jointly recover latent cluster structure, the unknown number of clusters, and continuous mark-specific intensity surfaces from replicated observations.

What carries the argument

The constrained Laplace approximation for the nonconjugate basis-coefficient block, cast as a constrained optimization problem to enforce validity of the squared-link intensity.
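As a hedged sketch of what a constrained Laplace step typically looks like: the symbols $f$, $\mathcal{C}$, $\hat{\beta}$, and $H$ below are illustrative assumptions rather than the paper's notation, and the paper's actual constraint set is not stated on this page. Here $f$ stands for the expected log joint density viewed as a function of the coefficient block $\beta$ with the other variational factors held fixed, and $\mathcal{C}$ for the feasible set.

```latex
\hat{\beta} = \arg\max_{\beta \in \mathcal{C}} f(\beta), \qquad
H = -\nabla^2 f(\beta)\big|_{\beta = \hat{\beta}}, \qquad
q(\beta) \approx \mathcal{N}\!\left(\hat{\beta},\, H^{-1}\right)
```

In this generic form the Gaussian factor is centered at the constrained mode and its covariance is the inverse curvature there, so the quality of the approximation depends on the mode-finding step returning the intended maximizer.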

If this is right

- Replicated marked point process datasets can be clustered without fixing the number of groups in advance.

- Mark-specific intensity surfaces are recovered continuously over the domain rather than on a discrete grid.

- The variational procedure scales to larger collections of replicated processes than full MCMC sampling.

- Clustering and intensity estimation occur in one joint step instead of separate procedures.
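Not fixing the number of groups in advance is commonly achieved through a stick-breaking representation of the Dirichlet process. The following is a minimal sketch, assuming a truncated stick-breaking construction; the truncation level `T`, the concentration `alpha`, and the function name are illustrative and not taken from the paper.

```python
import numpy as np

def stick_breaking_weights(v):
    """Map stick fractions v_1..v_{T-1} in (0, 1) to T mixture weights summing to 1."""
    v = np.asarray(v, dtype=float)
    # Length of stick remaining before each break: 1, (1-v1), (1-v1)(1-v2), ...
    remaining = np.concatenate(([1.0], np.cumprod(1.0 - v)))
    # The last component takes whatever stick is left (fraction 1 of the remainder).
    return np.concatenate((v, [1.0])) * remaining

rng = np.random.default_rng(0)
alpha = 1.0   # hypothetical DP concentration parameter
T = 20        # hypothetical truncation level
v = rng.beta(1.0, alpha, size=T - 1)
weights = stick_breaking_weights(v)

# Components with non-negligible weight act as the "occupied" clusters.
effective_clusters = int(np.sum(weights > 0.01))
```

Under this construction the truncation level is only an upper bound; the number of components that end up with appreciable weight is driven by the data and the concentration parameter.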

Where Pith is reading between the lines

- The constrained-optimization device for squared-link coefficients may transfer to other Bayesian nonparametric models that encounter similar nonconjugacy.

- The framework could be extended to non-Poisson marked point processes provided their likelihoods can be expressed in a similarly tractable squared-link form.

- Applications in spatial ecology or event data analysis would gain from the automatic determination of cluster number alongside continuous surface estimates.

Load-bearing premise

The squared-link intensity representation produces tractable continuous-domain likelihood terms without gridding or thinning, and the constrained Laplace approximation yields a valid mode-finding optimization whose guarantees hold under the stated model assumptions.
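To illustrate why a squared link can give continuous-domain likelihood terms without a grid, here is a minimal sketch under stated assumptions: a one-dimensional domain [0, 1], a monomial basis, and a single unmarked process. None of these choices come from the paper, and all names are hypothetical. With intensity `(phi(x)^T beta)^2`, the integral of the intensity is the quadratic form `beta^T G beta`, where `G` is the Gram matrix of the basis.

```python
import numpy as np

def basis(x, K):
    """Monomial basis 1, x, ..., x^(K-1) at points x in [0, 1] (illustrative choice)."""
    return np.vander(np.asarray(x, dtype=float), K, increasing=True)

def gram_matrix(K):
    """Closed form of the integral of phi_j * phi_k over [0, 1]: 1 / (j + k + 1)."""
    idx = np.arange(K)
    return 1.0 / (idx[:, None] + idx[None, :] + 1.0)

def poisson_loglik(points, beta, gram):
    """Poisson process log-likelihood with intensity (phi(x)^T beta)^2 on [0, 1]."""
    f = basis(points, len(beta)) @ beta
    return np.sum(np.log(f ** 2)) - beta @ gram @ beta

beta = np.array([2.0, -1.0, 3.0])    # hypothetical coefficients
points = np.array([0.1, 0.5, 0.9])   # hypothetical event locations
gram = gram_matrix(len(beta))

# Closed-form integral of the intensity versus a midpoint-rule check.
closed_form = beta @ gram @ beta
midpoints = (np.arange(100_000) + 0.5) / 100_000
numeric = np.mean((basis(midpoints, len(beta)) @ beta) ** 2)

# Flipping the sign of beta leaves the likelihood unchanged (sign ambiguity).
ll = poisson_loglik(points, beta, gram)
ll_flipped = poisson_loglik(points, -beta, gram)
```

The numerical integral is included only to confirm the closed form; the likelihood evaluation itself never touches a grid. A marked version would presumably keep one coefficient vector per mark, which is again an assumption about the model's structure.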

What would settle it

The approximation would be shown to be unreliable if, on synthetic marked point process data with known clusters and intensities, the variational cluster assignments or recovered intensity surfaces deviated substantially from those obtained by long-run MCMC sampling.
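One way to quantify agreement between two sets of cluster assignments in such a test is the adjusted Rand index. The sketch below is a plain NumPy implementation of that standard index; the label arrays are hypothetical, and reducing an MCMC run to a single partition would need a separate point-estimate step that is not shown here.

```python
import numpy as np

def adjusted_rand_index(labels_a, labels_b):
    """Adjusted Rand index between two partitions of the same items."""
    a = np.unique(np.asarray(labels_a).ravel(), return_inverse=True)[1]
    b = np.unique(np.asarray(labels_b).ravel(), return_inverse=True)[1]
    # Contingency table: table[i, j] counts items in cluster i of a and j of b.
    table = np.zeros((a.max() + 1, b.max() + 1), dtype=np.int64)
    np.add.at(table, (a, b), 1)

    def pairs(n):
        return n * (n - 1) / 2.0

    sum_cells = pairs(table).sum()
    sum_rows = pairs(table.sum(axis=1)).sum()
    sum_cols = pairs(table.sum(axis=0)).sum()
    expected = sum_rows * sum_cols / pairs(len(a))
    max_index = 0.5 * (sum_rows + sum_cols)
    if max_index == expected:
        return 1.0  # degenerate case, e.g. both partitions put everything in one cluster
    return (sum_cells - expected) / (max_index - expected)

# Hypothetical assignments: same grouping under different label names.
vb_labels = [0, 0, 1, 1]
mcmc_labels = [1, 1, 0, 0]
agreement = adjusted_rand_index(vb_labels, mcmc_labels)
disagreement = adjusted_rand_index([0, 0, 1, 1], [0, 1, 0, 1])
```

The index is invariant to relabeling, which matters here because cluster labels from variational and MCMC fits have no shared ordering.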

Figures

read the original abstract

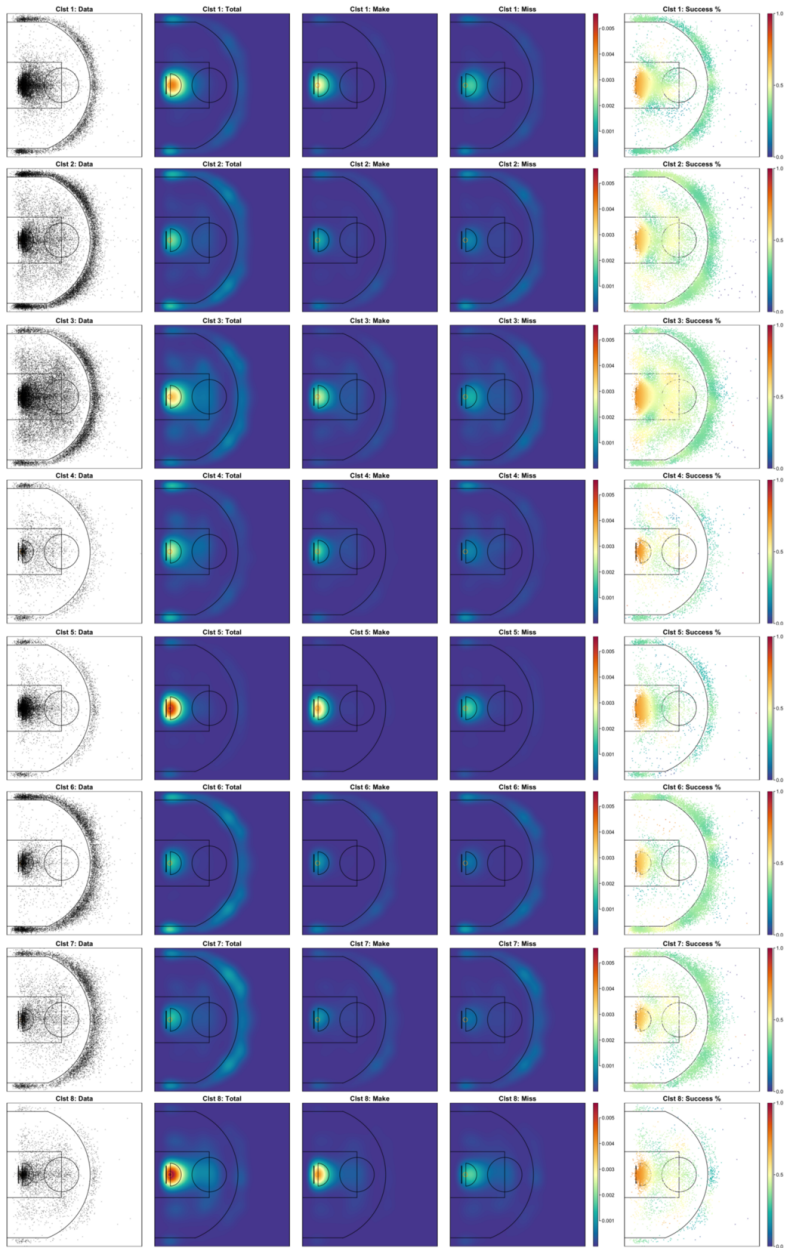

Marked point process data arise when events occur in a space with event-level marks. We study clustering of replicated marked Poisson point processes and introduce Dirichlet process mixtures of marked Poisson point processes, a Bayesian nonparametric model that jointly infers latent cluster structure, the number of clusters, and continuous mark-specific intensity surfaces. We use a squared link intensity representation to obtain tractable continuous domain likelihood terms without gridding or thinning. For posterior inference, we develop an efficient variational Bayes algorithm with a constrained Laplace approximation for the nonconjugate basis-coefficient block. The resulting coefficient update is formulated as a constrained optimization problem, which avoids the sign ambiguity and nodal-line issue of squared-link models. We further establish theoretical guarantees for mode finding optimization. We demonstrate the performance of the proposed model and algorithm through synthetic experiments and real-data analysis.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces Dirichlet process mixtures of marked Poisson point processes as a Bayesian nonparametric model for clustering replicated marked point process data. It jointly infers latent cluster assignments, the number of clusters, and continuous mark-specific intensity surfaces. A squared-link intensity representation is used to obtain closed-form likelihood terms over the continuous domain without gridding or thinning. Posterior inference employs a variational Bayes algorithm that applies a constrained Laplace approximation to the nonconjugate basis-coefficient block; the resulting update is cast as a constrained optimization problem that resolves sign ambiguity and nodal-line issues, with accompanying theoretical guarantees for mode finding. The approach is illustrated on synthetic experiments and real-data examples.

Significance. If the derivations and empirical results hold, the work supplies a coherent, computationally tractable framework for nonparametric clustering of marked point processes that avoids common discretization artifacts and handles the nonconjugacy of the intensity coefficients in a principled way. The explicit treatment of the constrained Laplace step and the convex-analysis guarantees for the mode-finding subproblem are methodological strengths that could support reliable inference in spatial and event-data applications.

major comments (1)

- [§4.2] §4.2 (Constrained Laplace approximation): the proof that the penalized objective remains strictly convex under the chosen basis expansion and the DP concentration parameter should be stated explicitly, because this convexity is load-bearing for the claim that the constrained optimizer reliably locates the global mode of the variational objective.

minor comments (3)

- [§3 and §4] The notation for the mark-specific intensity surfaces (e.g., the basis expansion coefficients) is introduced in §3 but reused without redefinition in the ELBO derivation of §4; a short table of symbols would improve readability.

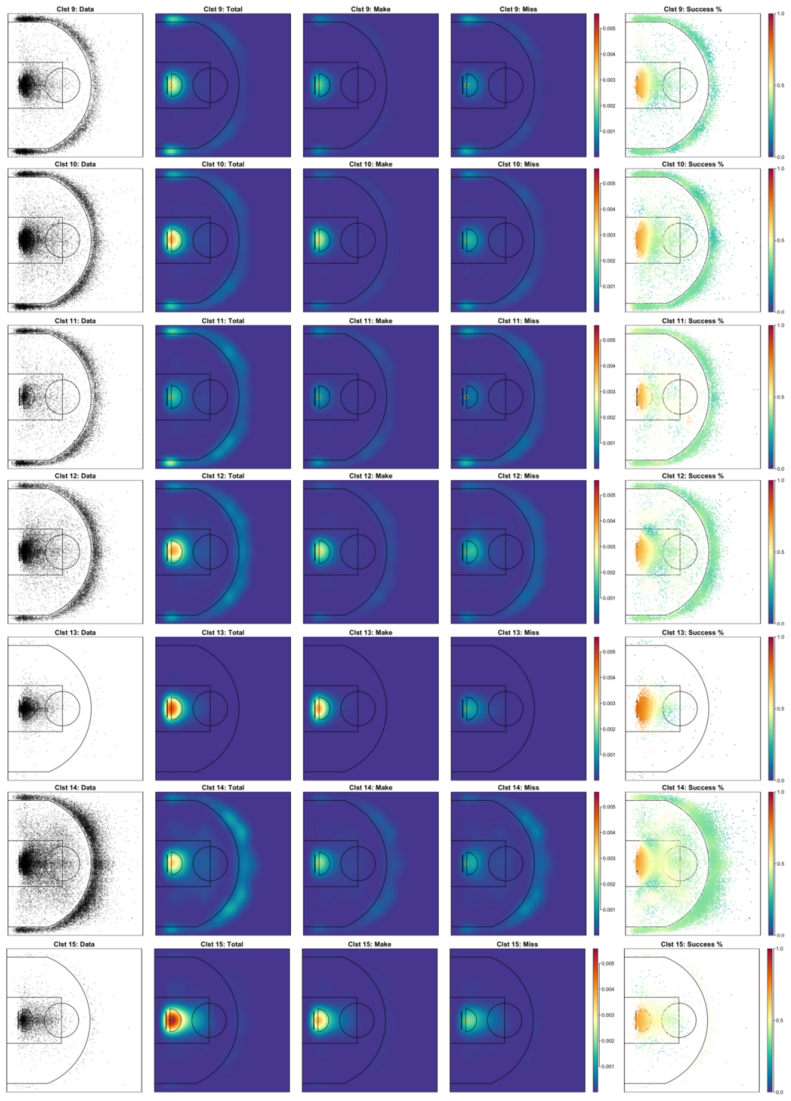

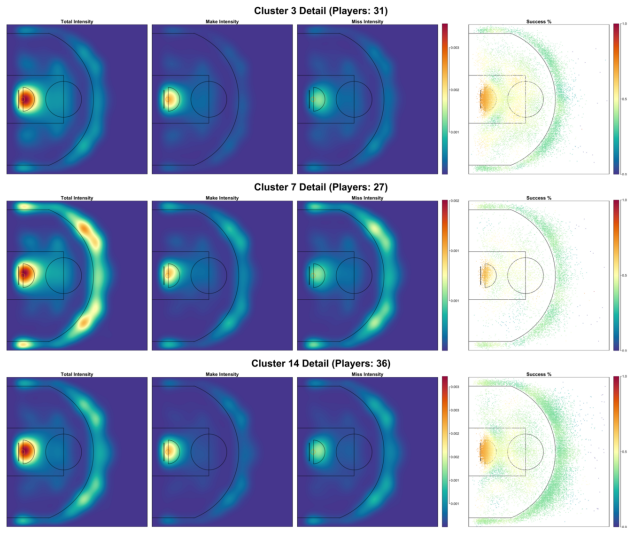

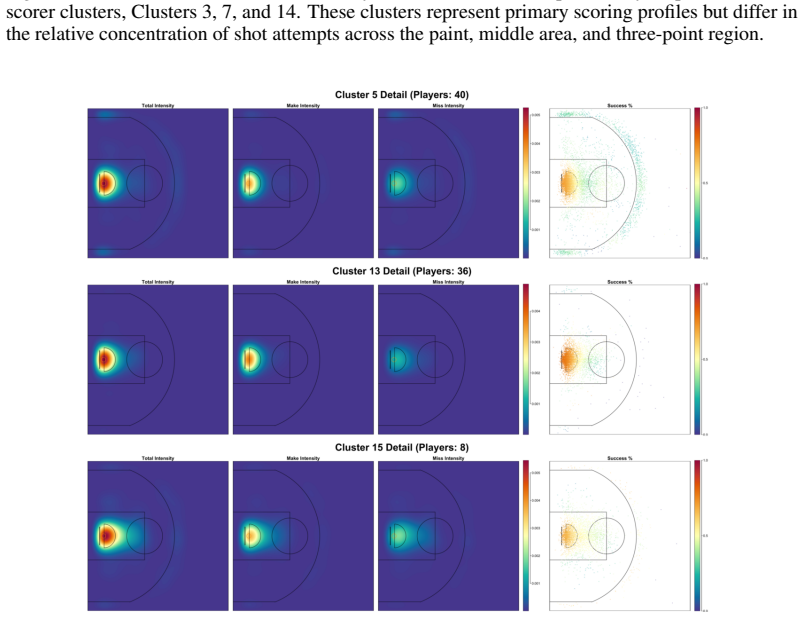

- [Figure 3] Figure 3 (real-data intensity surfaces): the color scale and contour lines are not labeled with numerical values, making it difficult to assess the magnitude of the estimated intensities.

- [§5.1] The synthetic-data simulation protocol in §5.1 should report the exact values of the Dirichlet-process concentration parameter and the basis dimension used to generate the ground-truth intensities.

Simulated Author's Rebuttal

We thank the referee for their careful reading of the manuscript and for the positive assessment and recommendation of minor revision. We address the single major comment below.

- Referee: [§4.2] §4.2 (Constrained Laplace approximation): the proof that the penalized objective remains strictly convex under the chosen basis expansion and the DP concentration parameter should be stated explicitly, because this convexity is load-bearing for the claim that the constrained optimizer reliably locates the global mode of the variational objective.

Authors: We agree that an explicit statement of the convexity result would improve clarity in §4.2. In the revised version we will add a self-contained paragraph (or short subsection) that states and proves strict convexity of the penalized objective under the chosen basis expansion and for the relevant range of the DP concentration parameter. This addition will directly underpin the claim that the constrained optimizer locates the global mode.

Revision: yes
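One way the requested convexity statement could be laid out, as a sketch under assumptions that are not taken from the paper: a squared-link intensity $\lambda(x) = (\phi(x)^\top \beta)^2$, nonnegative weights $w_i$ and $c$ derived from the current cluster responsibilities, a quadratic penalty with symmetric positive semidefinite matrix $Q$, the Gram matrix $\Phi = \int \phi(x)\phi(x)^\top dx$, and a feasible set on which $\phi(x_i)^\top \beta > 0$ at every observed point $x_i$.

```latex
g(\beta) = -2\sum_i w_i \log\!\big(\phi(x_i)^\top \beta\big)
           + c\,\beta^\top \Phi\, \beta
           + \tfrac{1}{2}\,\beta^\top Q\, \beta,
\qquad
\nabla^2 g(\beta) = 2\sum_i w_i\,
    \frac{\phi(x_i)\,\phi(x_i)^\top}{\big(\phi(x_i)^\top \beta\big)^2}
    + 2c\,\Phi + Q .
```

Each term of the Hessian is positive semidefinite and the feasible set is an intersection of half-spaces, so $g$ is convex there. It is strictly convex when $Q \succ 0$, or when $c > 0$ and the basis functions are linearly independent so that $\Phi \succ 0$. Under these assumptions the DP concentration parameter would enter only indirectly, through $w_i$ and $c$; whether this matches the paper's objective is something the promised revision would need to confirm.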

Circularity Check

No significant circularity detected

The derivation chain is self-contained. The model is constructed by combining a Dirichlet process prior with marked Poisson point processes and a squared-link intensity representation chosen for tractability; this is an explicit modeling decision rather than a reduction of one quantity to another by definition. The variational Bayes algorithm with constrained Laplace approximation is developed from standard variational inference and convex optimization applied to the nonconjugate block, with mode-finding guarantees following from standard arguments on the penalized objective. No load-bearing step reduces to a fitted input renamed as prediction, a self-citation chain, or an ansatz smuggled from prior work by the same authors. The central claims rest on independent constructions whose assumptions are stated and externally verifiable.

