Recognition: no theorem link

MiXR: Harvesting and Recomposing Geometry from Real-World Objects for In-Situ 3D Design

Pith reviewed 2026-05-12 03:37 UTC · model grok-4.3

The pith

MiXR lets users harvest real-world geometry segments in XR and assemble them by direct manipulation before generative AI synthesizes the final model.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

MiXR enables in-situ compositional modeling that lets users create new 3D models by harvesting geometry from their environment. Users extract segments from captured objects and assemble new artifacts through direct 3D manipulation, while generative AI synthesizes a coherent model from the user-defined composition. This hybrid workflow allows users to define spatial structure explicitly while delegating geometric refinement to generative models, enabling them to specify spatial intent that is difficult to express through verbal prompts alone.

What carries the argument

The hybrid workflow of extracting geometry segments from real-world captures, assembling them via direct 3D manipulation in XR, and passing the resulting spatial composition to generative AI for coherent synthesis.

If this is right

- Users gain the ability to specify precise spatial relationships that text or image prompts struggle to convey accurately.

- Final designs match intended targets more closely than those produced by generative composition alone.

- Participants report feeling greater control over the modeling process.

- Cognitive workload decreases because the system separates structural decisions from surface refinement.

- The method supports in-situ work by letting users work directly with geometry taken from the surrounding environment.

Where Pith is reading between the lines

- The same harvest-and-recompose pattern could support iterative refinement loops where users adjust segments and re-trigger synthesis until the result matches their intent.

- Extending the system to preserve surface textures or material properties from the original captures would make the output more suitable for fabrication pipelines.

- The workflow suggests a general template for other creative domains where humans supply spatial constraints and AI supplies missing geometric detail.

- Integration with physical scanning devices could reduce the gap between captured reality and the final digital model.

Load-bearing premise

Generative AI can reliably synthesize coherent, artifact-free 3D models from arbitrary user-specified spatial compositions extracted from real-world captures.

What would settle it

A controlled replication in which participants assemble the same set of harvested segments and the generative model repeatedly produces disconnected geometry, surface artifacts, or spatial arrangements that ignore the user-specified layout.

Figures

read the original abstract

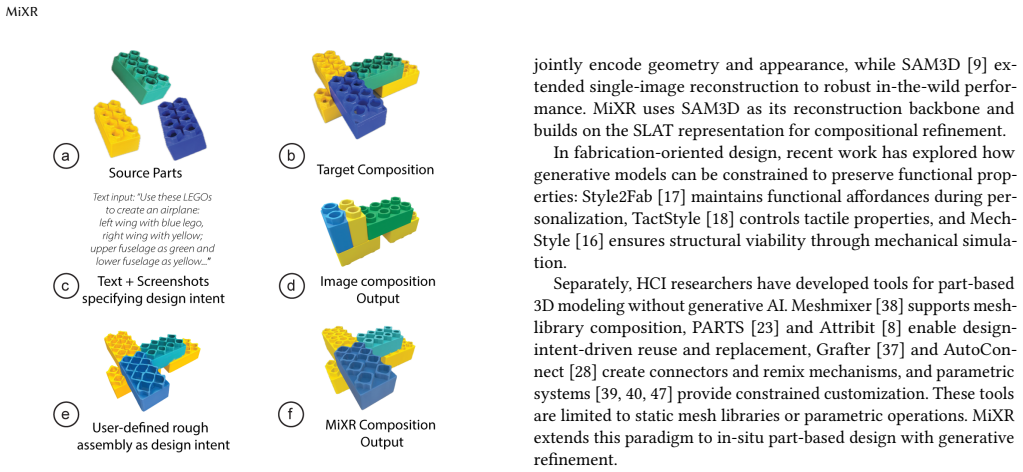

Recent developments in 3D generative AI enable users to create bespoke 3D models from text or image prompts. However, these approaches provide limited control over spatial structure, making them ill suited for tasks requiring precise geometric composition. We present MiXR, an XR system for in-situ compositional modeling that enables users to create new 3D models by harvesting geometry from their environment. Users extract segments from captured objects and assemble new artifacts through direct 3D manipulation, while generative AI synthesizes a coherent model from the user-defined composition. This hybrid workflow allows users to define spatial structure explicitly while delegating geometric refinement to generative models, enabling them to specify spatial intent that is difficult to express through verbal prompts alone. In a controlled user study ($N=12$), participants using MiXR rated their designs as significantly closer to the target, felt more in control, and experienced lower cognitive workload compared to a generative composition baseline.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript presents MiXR, an XR system for in-situ 3D compositional modeling. Users capture real-world objects, harvest geometric segments, assemble them via direct 3D manipulation, and use generative AI to synthesize a coherent final model from the user-defined spatial composition. The hybrid approach aims to give explicit control over structure while delegating refinement to AI. A controlled user study (N=12) reports that MiXR participants rated designs significantly closer to targets, felt more in control, and experienced lower cognitive workload than a generative composition baseline.

Significance. If the empirical findings hold after fuller reporting, MiXR could meaningfully advance in-situ 3D design tools by addressing the spatial-control limitations of pure generative AI methods. The direct-manipulation harvesting interface combined with synthesis offers a practical workflow for XR prototyping and creative tasks, with potential impact in HCI, design, and AR/VR applications.

major comments (3)

- [Abstract / User Study] Abstract and User Study section: the central claim that MiXR yields 'significantly closer' designs, 'more in control' ratings, and 'lower cognitive workload' is presented without any statistical details (p-values, effect sizes, exact metrics, exclusion criteria, or full methods). This omission directly weakens evaluation of the primary empirical result.

- [Evaluation / User Study] Evaluation section: no quantitative validation is provided on generative synthesis quality (geometric consistency, artifact frequency, or fidelity to user-specified spatial constraints). Observed benefits could therefore be attributable to the harvesting interface alone rather than the hybrid pipeline, which is load-bearing for the claimed advantage over the baseline.

- [System Design] System description: the mapping from user-assembled compositions to generative-model inputs is described at a high level only, with no technical specifics on prompt construction, constraint enforcement, or handling of invalid assemblies. This limits assessment of reproducibility and failure modes.

minor comments (2)

- [Figures] Figure captions should explicitly label workflow steps (capture, harvest, assemble, synthesize) to improve readability.

- [Related Work] Add a short related-work subsection contrasting MiXR with recent 3D generative interfaces that also support spatial constraints.

Simulated Author's Rebuttal

We thank the referee for their constructive and detailed review. We address each major comment below, agreeing where revisions are needed to improve clarity and rigor, and outlining specific changes to the manuscript.

read point-by-point responses

-

Referee: [Abstract / User Study] Abstract and User Study section: the central claim that MiXR yields 'significantly closer' designs, 'more in control' ratings, and 'lower cognitive workload' is presented without any statistical details (p-values, effect sizes, exact metrics, exclusion criteria, or full methods). This omission directly weakens evaluation of the primary empirical result.

Authors: We agree that the abstract omits key statistical details, which limits immediate assessment of the claims. The full user study section reports the quantitative results, but we will revise the abstract to incorporate the primary statistical findings (p-values, effect sizes, and key metrics) and expand the user study section to explicitly detail exclusion criteria, exact questionnaire items, and full analysis methods. revision: yes

-

Referee: [Evaluation / User Study] Evaluation section: no quantitative validation is provided on generative synthesis quality (geometric consistency, artifact frequency, or fidelity to user-specified spatial constraints). Observed benefits could therefore be attributable to the harvesting interface alone rather than the hybrid pipeline, which is load-bearing for the claimed advantage over the baseline.

Authors: The controlled study compares the full MiXR hybrid workflow against a generative composition baseline that lacks the harvesting and direct 3D assembly steps, thereby evaluating the combined benefit of explicit spatial control plus synthesis. We acknowledge the absence of isolated quantitative metrics on synthesis quality (e.g., constraint fidelity or artifact rates) as a gap. We will add a post-hoc analysis of the generated outputs, including objective measures of geometric consistency and adherence to user-specified spatial constraints, to better isolate the generative component's contribution. revision: partial

-

Referee: [System Design] System description: the mapping from user-assembled compositions to generative-model inputs is described at a high level only, with no technical specifics on prompt construction, constraint enforcement, or handling of invalid assemblies. This limits assessment of reproducibility and failure modes.

Authors: We agree that greater technical specificity would aid reproducibility and understanding of edge cases. In the revised manuscript we will expand the system section with concrete details on prompt construction from user assemblies, the mechanisms used to encode spatial constraints for the generative model, and the strategies employed for detecting and mitigating invalid or incomplete assemblies. revision: yes

Circularity Check

No circularity: empirical user study with independent evaluation

full rationale

The paper describes an XR system for 3D modeling and evaluates it through a controlled user study (N=12) comparing participant ratings of design closeness, control, and workload against a generative baseline. No equations, fitted parameters, derivation chains, or self-referential reductions appear in the provided text. Claims rest on direct empirical measurements rather than any mathematical construction that collapses to inputs by definition. Self-citations, if present for prior generative AI work, are not load-bearing for the central result and do not create a uniqueness theorem or ansatz that forces the outcome.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption Generative AI models can produce coherent 3D geometry from partial user-specified compositions

- domain assumption Users can accurately extract and manipulate 3D segments from captured real-world objects in XR

invented entities (1)

-

MiXR system

no independent evidence

Reference graph

Works this paper leans on

-

[1]

Shm Garanganao Almeda, JD Zamfirescu-Pereira, Kyu Won Kim, Pradeep Mani Rathnam, and Bjoern Hartmann. 2024. Prompting for discovery: Flex- ible sense-making for ai art-making with dreamsheets. InProceedings of the 2024 CHI Conference on Human Factors in Computing Systems. 1–17

work page 2024

-

[2]

Tyler Angert, Miroslav Suzara, Jenny Han, Christopher Pondoc, and Hariharan Subramonyam. 2023. Spellburst: A node-based interface for exploratory creative coding with natural language prompts. InProceedings of the 36th Annual ACM Symposium on User Interface Software and Technology. 1–22

work page 2023

-

[3]

Oğuz Arslan, Artun Akdoğan, and Mustafa Doga Dogan. 2025. TinkerXR: In-Situ, Reality-Aware CAD and 3D Printing Interface for Novices. InProceedings of the ACM Symposium on Computational Fabrication. 1–19

work page 2025

- [4]

- [5]

-

[6]

Stephen Brade, Bryan Wang, Mauricio Sousa, Sageev Oore, and Tovi Gross- man. 2023. Promptify: Text-to-image generation through interactive prompt exploration with large language models. InProceedings of the 36th Annual ACM Symposium on User Interface Software and Technology. 1–14

work page 2023

-

[7]

Virginia Braun and Victoria Clarke. 2006. Using thematic analysis in psychology. Qualitative research in psychology3, 2 (2006), 77–101

work page 2006

-

[8]

Siddhartha Chaudhuri, Evangelos Kalogerakis, Stephen Giguere, and Thomas Funkhouser. 2013. Attribit: Content Creation with Semantic Attributes. InPro- ceedings of the 26th Annual ACM Symposium on User Interface Software and Tech- nology(St. Andrews, Scotland, United Kingdom)(UIST ’13). Association for Com- puting Machinery, New York, NY, USA, 193–202. doi...

-

[9]

Xingyu Chen, Fu-Jen Chu, Pierre Gleize, Kevin J Liang, Alexander Sax, Hao Tang, Weiyao Wang, Michelle Guo, Thibaut Hardin, Xiang Li, et al. 2025. Sam 3d: 3dfy anything in images.arXiv preprint arXiv:2511.16624(2025)

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[10]

Gene Chou, Yuval Bahat, and Felix Heide. 2023. Diffusion-sdf: Conditional generative modeling of signed distance functions. InProceedings of the IEEE/CVF international conference on computer vision. 2262–2272

work page 2023

-

[11]

John Joon Young Chung and Eytan Adar. 2023. Promptpaint: Steering text-to- image generation through paint medium-like interactions. InProceedings of the 36th Annual ACM Symposium on User Interface Software and Technology. 1–17

work page 2023

-

[12]

Hai Dang, Frederik Brudy, George Fitzmaurice, and Fraser Anderson. 2023. World- smith: Iterative and expressive prompting for world building with a generative ai. InProceedings of the 36th Annual ACM Symposium on User Interface Software and Technology. 1–17

work page 2023

-

[13]

Matt Deitke, Dustin Schwenk, Jordi Salvador, Luca Weihs, Oscar Michel, Eli VanderBilt, Ludwig Schmidt, Kiana Ehsani, Aniruddha Kembhavi, and Ali Farhadi

-

[14]

InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition

Objaverse: A Universe of Annotated 3D Objects. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 13142–13153

-

[15]

Mustafa Doga Dogan, Patrick Baudisch, Hrvoje Benko, Michael Nebeling, Huaishu Peng, Valkyrie Savage, and Stefanie Mueller. 2022. Fabricate It or Render It? Digital Fabrication vs. Virtual Reality for Creating Objects Instantly. InExtended Abstracts of the 2022 CHI Conference on Human Factors in Computing Systems. Association for Computing Machinery, 5. do...

-

[16]

Mustafa Doga Dogan, Eric J Gonzalez, Karan Ahuja, Ruofei Du, Andrea Colaço, Johnny Lee, Mar Gonzalez-Franco, and David Kim. 2024. Augmented object intelligence with xr-objects. InProceedings of the 37th Annual ACM Symposium on User Interface Software and Technology. 1–15

work page 2024

-

[17]

Faraz Faruqi, Amira Abdel-Rahman, Leandra Tejedor, Martin Nisser, Jiaji Li, Vrushank Phadnis, Varun Jampani, Neil Gershenfeld, Megan Hofmann, and Stefanie Mueller. 2025. MechStyle: Augmenting Generative AI with Mechanical Simulation to Create Stylized and Structurally Viable 3D Models. InProceedings of the ACM Symposium on Computational Fabrication. 1–15

work page 2025

-

[18]

Faraz Faruqi, Ahmed Katary, Tarik Hasic, Amira Abdel-Rahman, Nayeemur Rahman, Leandra Tejedor, Mackenzie Leake, Megan Hofmann, and Stefanie Mueller. 2023. Style2Fab: Functionality-Aware Segmentation for Fabricating Personalized 3D Models with Generative AI. InProceedings of the 36th Annual ACM Symposium on User Interface Software and Technology. 1–13

work page 2023

-

[19]

Faraz Faruqi, Maxine Perroni-Scharf, Jaskaran Singh Walia, Yunyi Zhu, Shuyue Feng, Donald Degraen, and Stefanie Mueller. 2025. TactStyle: Generating Tactile Textures with Generative AI for Digital Fabrication. InProceedings of the 2025 CHI Conference on Human Factors in Computing Systems. 1–16

work page 2025

- [20]

-

[21]

Jun Gao, Tianchang Shen, Zian Wang, Wenzheng Chen, Kangxue Yin, Daiqing Li, Or Litany, Zan Gojcic, and Sanja Fidler. 2022. GET3D: A Generative Model of High Quality 3D Textured Shapes Learned from Images. InAdvances In Neural Information Processing Systems

work page 2022

-

[22]

2025.Gemini 3: A Family of Highly Capable Multimodal Models

Gemini Team, Google. 2025.Gemini 3: A Family of Highly Capable Multimodal Models. Technical Report. Google DeepMind. https://storage.googleapis.com/ deepmind-media/Model-Cards/Gemini-3-Pro-Model-Card.pdf

work page 2025

-

[23]

Hyojun Go, Byeongjun Park, Jiho Jang, Jin-Young Kim, Soonwoo Kwon, and Changick Kim. 2025. SplatFlow: Multi-View Rectified Flow Model for 3D Gauss- ian Splatting Synthesis. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR). 21524–21536

work page 2025

-

[24]

Megan Hofmann, Gabriella Hann, Scott E. Hudson, and Jennifer Mankoff. 2018. Greater than the Sum of Its PARTs: Expressing and Reusing Design Intent in 3D Models. InProceedings of the 2018 CHI Conference on Human Factors in Computing Systems(Montreal QC, Canada)(CHI ’18). Association for Computing Machinery, New York, NY, USA, 1–12. doi:10.1145/3173574.3173875

- [25]

-

[26]

Erzhen Hu, Mingyi Li, Jungtaek Hong, Xun Qian, Alex Olwal, David Kim, Seongkook Heo, and Ruofei Du. 2025. Thing2Reality: Enabling Spontaneous Creation of 3D Objects from 2D Content using Generative AI in XR Meetings. In Proceedings of the 38th Annual ACM Symposium on User Interface Software and Technology. 1–16

work page 2025

-

[27]

Alexander Kirillov, Eric Mintun, Nikhila Ravi, Hanzi Mao, Chloe Rolland, Laura Gustafson, Tete Xiao, Spencer Whitehead, Alexander C Berg, Wan-Yen Lo, et al

-

[28]

InProceedings of the IEEE/CVF international conference on computer vision

Segment anything. InProceedings of the IEEE/CVF international conference on computer vision. 4015–4026

-

[29]

Roman Klokov, Edmond Boyer, and Jakob Verbeek. 2020. Discrete point flow net- works for efficient point cloud generation. InEuropean Conference on Computer Vision. Springer, 694–710

work page 2020

- [30]

-

[31]

Brian Lee, Savil Srivastava, Ranjitha Kumar, Ronen Brafman, and Scott R Klem- mer. 2010. Designing with interactive example galleries. InProceedings of the SIGCHI conference on human factors in computing systems. 2257–2266

work page 2010

- [32]

-

[33]

Zisu Li, Jiawei Li, Zeyu Xiong, Shumeng Zhang, Faraz Faruqi, Stefanie Mueller, Chen Liang, Xiaojuan Ma, and Mingming Fan. 2025. InteRecon: Towards Re- constructing Interactivity of Personal Memorable Items in Mixed Reality. In Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems. 1–19

work page 2025

-

[34]

Chen-Hsuan Lin, Jun Gao, Luming Tang, Towaki Takikawa, Xiaohui Zeng, Xun Huang, Karsten Kreis, Sanja Fidler, Ming-Yu Liu, and Tsung-Yi Lin. 2023. Magic3d: High-resolution text-to-3d content creation. InProceedings of the IEEE/CVF Con- ference on Computer Vision and Pattern Recognition. 300–309

work page 2023

-

[35]

Tianqi Liu, Guangcong Wang, Shoukang Hu, Liao Shen, Xinyi Ye, Yuhang Zang, Zhiguo Cao, Wei Li, and Ziwei Liu. 2025. MVSGaussian: Fast Generalizable Gaussian Splatting Reconstruction from Multi-View Stereo. InComputer Vision – ECCV 2024, Aleš Leonardis, Elisa Ricci, Stefan Roth, Olga Russakovsky, Torsten Sattler, and Gül Varol (Eds.). Springer Nature Switz...

work page 2025

-

[36]

Damien Masson, Sylvain Malacria, Géry Casiez, and Daniel Vogel. 2024. Direct- gpt: A direct manipulation interface to interact with large language models. In Proceedings of the 2024 CHI Conference on Human Factors in Computing Systems. 1–16

work page 2024

-

[37]

Yuxuan Mu, Xinxin Zuo, Chuan Guo, Yilin Wang, Juwei Lu, Xiaofeng Wu, Song- cen Xu, Peng Dai, Youliang Yan, and Li Cheng. 2025. GSD: View-Guided Gaussian Splatting Diffusion for 3D Reconstruction. InComputer Vision – ECCV 2024, Aleš Leonardis, Elisa Ricci, Stefan Roth, Olga Russakovsky, Torsten Sattler, and Gül Varol (Eds.). Springer Nature Switzerland, Ch...

work page 2025

-

[38]

Savvas Petridis, Nicholas Diakopoulos, Kevin Crowston, Mark Hansen, Keren Henderson, Stan Jastrzebski, Jeffrey V Nickerson, and Lydia B Chilton. 2023. Anglekindling: Supporting journalistic angle ideation with large language models. InProceedings of the 2023 CHI conference on human factors in computing systems. 1–16

work page 2023

-

[39]

Thijs Jan Roumen, Willi Müller, and Patrick Baudisch. 2018. Grafter: Remixing 3D- Printed Machines. InProceedings of the 2018 CHI Conference on Human Factors in Computing Systems(Montreal QC, Canada)(CHI ’18). Association for Computing Machinery, New York, NY, USA, 1–12. doi:10.1145/3173574.3173637 Faruqi et al

-

[40]

Ryan Schmidt and Karan Singh. 2010. Meshmixer: An Interface for Rapid Mesh Composition. InACM SIGGRAPH 2010 Talks. Association for Computing Ma- chinery, New York, NY, USA. doi:10.1145/1837026.1837034

-

[41]

Adriana Schulz, Ariel Shamir, David I. W. Levin, Pitchaya Sitthi-amorn, and Wojciech Matusik. 2014. Design and Fabrication by Example.ACM Trans. Graph. 33, 4, Article 62 (jul 2014), 11 pages. doi:10.1145/2601097.2601127

-

[42]

Maria Shugrina, Ariel Shamir, and Wojciech Matusik. 2015. Fab Forms: Cus- tomizable Objects for Fabrication with Validity and Geometry Caching.ACM Trans. Graph.34, 4, Article 100 (jul 2015), 12 pages. doi:10.1145/2766994

-

[43]

Evgeny Stemasov, Simon Demharter, Max Rädler, Jan Gugenheimer, and Enrico Rukzio. 2024. pARam: Leveraging Parametric Design in Extended Reality to Support the Personalization of Artifacts for Personal Fabrication. InProceedings of the CHI Conference on Human Factors in Computing Systems (CHI ’24). Association for Computing Machinery, New York, NY, USA, 1–...

-

[44]

Evgeny Stemasov, Jessica Hohn, Maurice Cordts, Anja Schikorr, Enrico Rukzio, and Jan Gugenheimer. 2023. BrickStARt: Enabling In-situ Design and Tangible Exploration for Personal Fabrication using Mixed Reality.Proceedings of the ACM on Human-Computer Interaction7, ISS (Oct. 2023), 64–92. doi:10.1145/3626465

-

[45]

Blair Subbaraman, Shenna Shim, and Nadya Peek. 2023. Forking a sketch: How the openprocessing community uses remixing to collect, annotate, tune, and extend creative code. InProceedings of the 2023 ACM Designing Interactive Systems Conference. 326–342

work page 2023

- [46]

-

[47]

Ryo Suzuki, Parastoo Abtahi, Chen Zhu-Tian, Mustafa Doga Dogan, Andrea Colaco, Eric J Gonzalez, Karan Ahuja, and Mar Gonzalez-Franco. 2025. Pro- grammable reality.Frontiers in Virtual Reality6 (2025), 1649785

work page 2025

-

[48]

Jiaxiang Tang, Zhaoxi Chen, Xiaokang Chen, Tengfei Wang, Gang Zeng, and Ziwei Liu. 2024. Lgm: Large multi-view gaussian model for high-resolution 3d content creation. InEuropean Conference on Computer Vision. Springer, 1–18

work page 2024

-

[49]

Tom Veuskens, Florian Heller, and Raf Ramakers. 2021. CODA: A Design Assis- tant to Facilitate Specifying Constraints and Parametric Behavior in CAD Models. InGraphics Interface 2021. https://openreview.net/forum?id=1dLDPJeafRZ

work page 2021

-

[50]

Zhijie Wang, Yuheng Huang, Da Song, Lei Ma, and Tianyi Zhang. 2024. Promptcharm: Text-to-image generation through multi-modal prompting and refinement. InProceedings of the 2024 CHI Conference on Human Factors in Com- puting Systems. 1–21

work page 2024

-

[51]

Christian Weichel, Manfred Lau, David Kim, Nicolas Villar, and Hans Gellersen

-

[52]

MixFab: a mixed-reality environment for personal fabrication.CHI ’14 Proceedings of the SIGCHI Conference on Human Factors in Computing Systems (April 2014), 3855–3864. doi:10.1145/2556288.2557090

-

[53]

Jianfeng Xiang, Zelong Lv, Sicheng Xu, Yu Deng, Ruicheng Wang, Bowen Zhang, Dong Chen, Xin Tong, and Jiaolong Yang. 2025. Structured 3d latents for scalable and versatile 3d generation. InProceedings of the IEEE/CVF conference on computer vision and pattern recognition. 21469–21480

work page 2025

-

[54]

Jiale Xu, Weihao Cheng, Yiming Gao, Xintao Wang, Shenghua Gao, and Ying Shan. 2024. Instantmesh: Efficient 3d mesh generation from a single image with sparse-view large reconstruction models.arXiv preprint arXiv:2404.07191(2024)

work page internal anchor Pith review arXiv 2024

-

[55]

J Diego Zamfirescu-Pereira, Richmond Y Wong, Bjoern Hartmann, and Qian Yang. 2023. Why Johnny can’t prompt: how non-AI experts try (and fail) to design LLM prompts. InProceedings of the 2023 CHI conference on human factors in computing systems. 1–21

work page 2023

-

[56]

Lei Zhang, Jin Pan, Jacob Gettig, Steve Oney, and Anhong Guo. 2024. Vrcopilot: Authoring 3d layouts with generative ai models in vr. InProceedings of the 37th Annual ACM Symposium on User Interface Software and Technology. 1–13

work page 2024

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.