Recognition: no theorem link

Zoom, Don't Wander: Why Regional Search Outperforms Pareto Reasoning and Global Optimization in Budget-Constrained SBSE

Pith reviewed 2026-05-12 03:40 UTC · model grok-4.3

The pith

In budget-constrained SBSE, regional zooming outperforms Pareto and global optimization.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

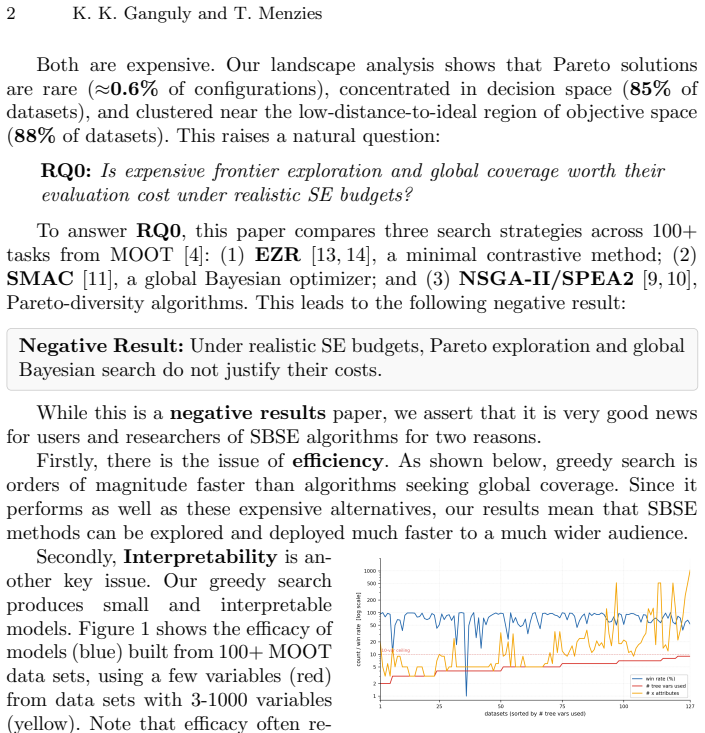

Pareto-optimal solutions form a tiny, tight island concentrated in a compact region of decision space across the studied tasks. A minimal greedy zoom method, EZR, therefore runs three orders of magnitude faster than Pareto and global Bayesian methods, achieves higher statistical ranks, wins or ties in 84-89% of datasets on equal budget, and still wins or ties in 79-81% at one-fifth budget, while matching or exceeding Pareto methods on IGD and HV coverage metrics despite never explicitly seeking the frontier.

What carries the argument

EZR, the minimal greedy zoom method that iteratively narrows search to promising regions identified from initial samples rather than exploring globally or maintaining a full Pareto front.

If this is right

- SBSE practitioners can obtain superior or equal performance with far smaller evaluation budgets by replacing global search with regional zoom.

- The resulting models are small and interpretable, reducing reliance on black-box optimization.

- Global and Pareto methods waste evaluations outside the optimal island in the studied tasks.

- Competitive coverage metrics like IGD and HV can be reached without explicitly maintaining or targeting the Pareto front.

Where Pith is reading between the lines

- The observed clustering of optima suggests many SE problems may have low effective dimensionality that zooming exploits efficiently.

- Similar regional focus could be tested in other constrained optimization settings outside software engineering where evaluation budgets are tight.

- A hybrid that begins with zooming and adds occasional wider sampling might handle cases where the compact-island structure does not hold.

Load-bearing premise

Pareto-optimal solutions form a tiny, tight island concentrated in a compact region of decision space across the studied SE optimization tasks.

What would settle it

An SE optimization task in which Pareto-optimal solutions are spread across a wide area of decision space, causing the zooming method to miss better solutions that global methods locate within the same budget.

Figures

read the original abstract

Traditional Search-Based Software Engineering (SBSE) assumes global search and full Pareto exploration are essential. We offer the following negative result based on a study of over 100 Software Engineering (SE) optimization tasks: "zooming" into promising regions is far more effective than Pareto and global exploration under constrained evaluation budgets. Our minimal greedy zoom method, EZR, runs three orders of magnitude faster than Pareto and global Bayesian methods, achieving higher statistical ranks and winning or tying in 84-89\% of datasets on equal budget. Even at one-fifth the evaluation budget, EZR wins or ties in 79-81\% of datasets. Surprisingly, despite never explicitly seeking a frontier, EZR matches or outperforms Pareto methods on their own coverage metrics (IGD, HV) at equal budgets. The explanation for this widespread failure is structural: across the datasets studied, Pareto-optimal solutions form a tiny, tight island concentrated in a compact region of decision space. Methods that wander waste their budgets outside this island. Beyond efficiency, zooming yields small, interpretable models, thus addressing concerns about black-box AI. By replacing global wandering with greedy zooming, we make SBSE much faster, more explicable, and hence accessible to a wider audience. SBSE practitioners and researchers should zoom, not wander.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper claims that traditional SBSE assumptions favoring global search and full Pareto exploration are misguided under constrained evaluation budgets. Based on experiments across over 100 SE optimization tasks, the authors introduce a minimal greedy zooming method (EZR) that runs three orders of magnitude faster than Pareto and global Bayesian optimizers, achieves higher statistical ranks, wins or ties in 84-89% of datasets at equal budget (and 79-81% at one-fifth budget), and matches or exceeds Pareto methods on IGD and HV metrics despite not targeting the frontier. They explain this via the structural observation that Pareto-optimal solutions form a tiny, tight island in a compact region of decision space, rendering global wandering wasteful, and note that zooming also yields small, interpretable models.

Significance. If the empirical results hold after detailed verification of experimental design, this has substantial significance for SBSE by providing large-scale evidence that regional greedy search can outperform established global and Pareto approaches in budget-limited settings, potentially improving efficiency and accessibility. The scale (>100 tasks) and multi-metric evaluation (including Pareto-favored IGD/HV) are strengths, as is the post-hoc structural insight into solution compactness, which could inform future algorithm design and address interpretability concerns in AI for software engineering.

minor comments (3)

- The abstract provides headline performance numbers but omits key methodological details such as task selection criteria, exact statistical tests for ranks and win/tie rates, baseline implementation specifics, and any controls for selection bias; these should be summarized briefly in the abstract or prominently in the introduction for readers to assess the claims.

- The structural explanation that Pareto-optimal solutions form a 'tiny, tight island' is presented post-hoc; consider adding a dedicated subsection in the discussion or results that tests this observation more formally (e.g., via metrics of solution-space concentration) rather than as retrospective interpretation.

- Clarify the precise definition and implementation of the EZR 'minimal greedy zoom' procedure early in the methods section, including any hyperparameters or stopping criteria, to ensure reproducibility.

Simulated Author's Rebuttal

We thank the referee for their positive and accurate summary of our work, as well as the recommendation for minor revision. The assessment correctly captures the core negative result, the scale of the experiments, and the structural explanation regarding solution compactness. Since the report lists no specific major comments, we have no point-by-point rebuttals to provide.

Circularity Check

No significant circularity detected

full rationale

The paper presents an empirical negative result from experiments on over 100 SE optimization tasks: the EZR greedy zoom method outperforms Pareto and global Bayesian optimizers in runtime (three orders of magnitude) and win/tie rates (84-89% at equal budget, 79-81% at 1/5 budget) while matching IGD/HV metrics. The structural claim that Pareto fronts occupy a compact region is offered as a post-hoc observation from the data rather than a prior modeling assumption or fitted parameter. No equations, derivations, or self-citations reduce the central performance claims to tautological inputs; the work is self-contained via direct experimental comparison against external baselines.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

-

[1]

Anthony J. Bagnall, Victor J. Rayward-Smith, and Ian M Whittley. The next release problem. Inf. Soft. Tech., 43(14):883–890, 2001

work page 2001

-

[2]

Evosuite: automatic test suite generation for object-oriented software

Gordon Fraser and Andrea Arcuri. Evosuite: automatic test suite generation for object-oriented software. InProc. Found. Soft. Eng., pages 416–419, 2011

work page 2011

-

[3]

Au- tomated parameter optimization of classification techniques for defect prediction models

Chakkrit Tantithamthavorn, Shane McIntosh, Ahmed E Hassan, and Kenichi Matsumoto. Au- tomated parameter optimization of classification techniques for defect prediction models. In Proc. ICSE, pages 321–332. ACM, 2016

work page 2016

-

[4]

Moot: a repository of many multi-objective optimization tasks

Tim Menzies, Tao Chen, Yulong Ye, Kishan Kumar Ganguly, Amirali Rayegan, Srinath Srini- vasan, and Andre Lustosa. Moot: a repository of many multi-objective optimization tasks. arXiv preprint arXiv:2511.16882, 2025

-

[5]

Mark Harman, S Afshin Mansouri, and Yuanyuan Zhang. Search-based software engineering: Trends, techniques and applications.ACM Computing Surveys (CSUR), 45(1):11, 2012

work page 2012

-

[6]

Lev Sorokin, Damir Safin, and Shiva Nejati. Can search-based testing with pareto optimization effectively cover failure-revealing test inputs?Empirical Software Engineering, 30(1):26, 2025

work page 2025

-

[7]

J. Chen and M. Li. The weights can be harmful: Pareto search versus weighted search in multi- objective search-based software engineering.ACM Trans. Soft. Eng. Methodol., 32:1–40, 2023

work page 2023

-

[8]

M. Li, T. Chen, and X. Yao. How to evaluate solutions in pareto-based search-based software engineering: A critical review and methodological guidance.IEEE TSE, 48(5):1771–1799, 2022

work page 2022

-

[9]

A fast and elitist multiobjective genetic algorithm: Nsga-ii.IEEE Trans

Kalyanmoy Deb, Amrit Pratap, Sameer Agarwal, and TAMT Meyarivan. A fast and elitist multiobjective genetic algorithm: Nsga-ii.IEEE Trans. Evo. Comp., 6(2):182–197, 2002

work page 2002

-

[10]

SPEA2: Improving the strength Pareto evolutionary algorithm

Eckart Zitzler, Marco Laumanns, and Lothar Thiele. SPEA2: Improving the strength Pareto evolutionary algorithm. Technical Report TIK-Report 103, ETH Zurich, 2001

work page 2001

-

[11]

Frank Hutter, Holger H. Hoos, and Kevin Leyton-Brown. Sequential model-based optimization for general algorithm configuration. InProc. LION, pages 507–523. Springer, 2011. Zoom, Don’t Wander 15

work page 2011

- [12]

-

[13]

Minimal Data, Maximum Clarity: A Heuristic for Explaining Optimization

Amirali Rayegan and Tim Menzies. Minimal data, maximum clarity: A heuristic for explaining optimization.arXiv preprint arXiv:2509.08667, 2025

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[14]

How low can you go? The data-light SE challenge

Tim Menzies and Kishan Kumar Ganguly. How low can you go? The data-light SE challenge. InArxiv, 2026

work page 2026

-

[15]

Cynthia Rudin. Stop explaining black box machine learning models for high stakes decisions and use interpretable models instead.Nature machine intelligence, 1(5):206–215, 2019

work page 2019

-

[16]

Vivek Nair, Zhe Yu, Tim Menzies, Norbert Siegmund, and Sven Apel. Finding faster configu- rations using FLASH.IEEE Transactions on Software Engineering, 46(7):794–811, 2020

work page 2020

-

[17]

Heuristics for systems engineering cost estimation.IEEE Sys

Ricardo Valerdi. Heuristics for systems engineering cost estimation.IEEE Sys. J., 2010

work page 2010

-

[18]

Mark Easterby-Smith. The design, analysis and interpretation of repertory grids.International Journal of Man-Machine Studies, 13(1):3–24, 1980

work page 1980

-

[19]

Springer Science & Business Media, 2012

Ching-Lai Hwang and Kwangsun Yoon.Multiple attribute decision making: methods and applications a state-of-the-art survey. Springer Science & Business Media, 2012

work page 2012

-

[20]

Milan Zeleny.Multiple Criteria Decision Making. McGraw-Hill, New York, 1982

work page 1982

-

[21]

Miqing Li, Tao Chen, and Xin Yao. How to evaluate solutions in pareto-based search-based software engineering? a critical review and methodological guidance.IEEE TSE, 2020

work page 2020

-

[22]

T. E. Colanzi, W. K. G. Assun¸ c˜ ao, S. R. Vergilio, P. R. Farah, G. Guizzo, et al. The symposium on search-based software engineering: Past, present and future.Information and Software Technology, 127:106372, 2020

work page 2020

-

[23]

J. Bergstra, R. Bardenet, Y. Bengio, and B. K´ egl. Algorithms for hyper-parameter optimization. InNIPS, 2011

work page 2011

-

[24]

Awad, Neeratyoy Mallik, and Frank Hutter

Noor H. Awad, Neeratyoy Mallik, and Frank Hutter. DEHB: evolutionary hyberband for scal- able, robust and efficient hyperparameter optimization.CoRR, abs/2105.09821, 2021

-

[25]

Andrea Arcuri and Lionel Briand. A practical guide for using statistical tests to assess random- ized algorithms in software engineering. InInt. Conf. Soft. Eng., pages 1–10. IEEE, 2011

work page 2011

- [26]

-

[27]

Promisetune: Unveiling causally promising and explainable configuration tuning

Pengzhou Chen and Tao Chen. Promisetune: Unveiling causally promising and explainable configuration tuning. InProc. of the 48th IEEE/ACM Int. Conf. Softw. Eng., 2026

work page 2026

-

[28]

The case for compact ai.Commun

Tim Menzies. The case for compact ai.Commun. ACM, 68(8):6–7, 2025

work page 2025

-

[29]

Andre Lustosa and Tim Menzies. isneak: Partial ordering as heuristics for model- based rea- soning in software engineering.IEEE Access, 12:142915–142929, 2024

work page 2024

-

[30]

Lohith Senthilkumar and Tim Menzies. Can large language models improve se active learning via warm-starts?arXiv preprint arXiv:2501.00125, 2024

-

[31]

Andre Lustosa and Tim Menzies. Less noise, more signal: Drr for better optimizations of se tasks.arXiv preprint arXiv:2503.21086, 2025

-

[32]

V. Nair, A. Agrawal, J. Chen, W. Fu, G. Mathew, T. Menzies, L. L. Minku, M. Wagner, and Z. Yu. Data-driven search-based software engineering. InMSR, 2018

work page 2018

-

[33]

Using bad learners to find good configurations

Vivek Nair, Tim Menzies, Norbert Siegmund, and Sven Apel. Using bad learners to find good configurations. InProc. Found. Soft. Eng., pages 257–267, 2017

work page 2017

-

[34]

Accuracy can lie: On the impact of surrogate model in configuration tuning.IEEE Trans

Pengzhou Chen, Jingzhi Gong, and Tao Chen. Accuracy can lie: On the impact of surrogate model in configuration tuning.IEEE Trans. Softw. Eng., 51(2):548–580, 2025

work page 2025

-

[35]

Andre Lustosa and Tim Menzies. Learning from very little data: On the value of landscape analysis for predicting software project health.ACM Trans. Soft. Eng. Methodol., 33(3), 2024

work page 2024

-

[36]

Jianfeng Chen, Vivek Nair, and Tim Menzies. Beyond evolutionary algorithms for search-based software engineering.Information and Software Technology, 95:281–294, 2018

work page 2018

-

[37]

Student dropout analysis and prediction dataset, 2025

Abdullah0a. Student dropout analysis and prediction dataset, 2025. Kaggle

work page 2025

-

[38]

Fifa world cup 2022: Complete dataset, 2025

die9origephit. Fifa world cup 2022: Complete dataset, 2025. Kaggle

work page 2022

-

[39]

Ibm hr analytics employee attrition & performance, 2025

Pavansubhasht. Ibm hr analytics employee attrition & performance, 2025. Kaggle

work page 2025

- [40]

- [41]

-

[42]

Medical data and hospital readmissions, 2025

dansbecker. Medical data and hospital readmissions, 2025. Kaggle

work page 2025

- [43]

-

[44]

Animal crossing new horizons: Nookplaza dataset, 2021

jessicali9530. Animal crossing new horizons: Nookplaza dataset, 2021. Kaggle

work page 2021

-

[45]

Marketing analytics – marketing data, 2022

Jack Daoud. Marketing analytics – marketing data, 2022. Kaggle

work page 2022

-

[46]

Car price dataset – cleaned, 2025

syedfaizanalii. Car price dataset – cleaned, 2025. Kaggle

work page 2025

-

[47]

A. J. Scott and M. Knott. A cluster analysis method for grouping means in the analysis of variance.Biometrics, 30:507–512, 1974

work page 1974

-

[48]

Janez Demˇ sar. Statistical comparisons of classifiers over multiple data sets.Journal of Machine learning research, 7(Jan):1–30, 2006

work page 2006

-

[49]

The effect of offspring population size on NSGA-II: A preliminary study

Max Hort and Federica Sarro. The effect of offspring population size on NSGA-II: A preliminary study. InProc. GECCO, pages 1–2. ACM, 2021

work page 2021

-

[50]

Kalyanmoy Deb and Himanshu Jain. An evolutionary many-objective optimization algorithm using reference-point-based nondominated sorting approach.IEEE TSE, 18(4):577–601, 2014

work page 2014

-

[51]

Qingfu Zhang and Hui Li. MOEA/D: A multiobjective evolutionary algorithm based on de- composition.IEEE Transactions on Evolutionary Computation, 11(6):712–731, 2007

work page 2007

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.