Recognition: 2 theorem links

FreeMOCA: Memory-Free Continual Learning for Malicious Code Analysis

Pith reviewed 2026-05-15 05:30 UTC · model grok-4.3

The pith

Adaptive layer-wise interpolation between task optima enables memory-free continual learning for malware detection.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

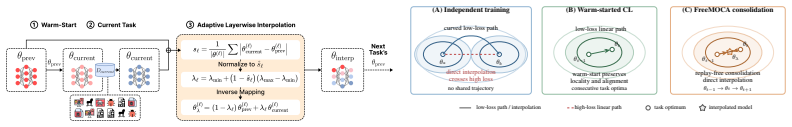

FreeMOCA achieves memory-free continual learning for malicious code analysis by adaptively interpolating, layer by layer, between consecutive task optima in parameter space. It relies on the low-loss connectivity of warm-started solutions to avoid catastrophic forgetting without storing or replaying past samples. Evaluated in both Class-IL and Domain-IL settings on the EMBER and AZ benchmarks, it outperforms 11 baselines in Class-IL and achieves the best retention.

What carries the argument

adaptive layer-wise interpolation between consecutive task updates, which exploits low-loss paths connecting warm-started task optima
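To make the mechanism concrete, here is a minimal NumPy sketch of layer-wise interpolation between two consecutive task optima. It assumes parameters are stored as a dict of arrays keyed by layer name and that the per-layer coefficients are supplied by the caller. The helper name layerwise_interpolate and the example coefficients are hypothetical; the review notes that the paper's rule for computing the coefficients is not described here, so this is not FreeMOCA's implementation.

```python
import numpy as np


def layerwise_interpolate(prev_params, new_params, alphas):
    """Blend two parameter sets one layer at a time (hypothetical helper).

    For each layer l: merged_l = alpha_l * prev_l + (1 - alpha_l) * new_l.
    alpha_l = 1 keeps the previous task's weights for that layer;
    alpha_l = 0 keeps the weights fine-tuned on the new task.
    """
    if prev_params.keys() != new_params.keys():
        raise ValueError("both parameter sets must have the same layers")
    merged = {}
    for name, prev in prev_params.items():
        a = float(alphas[name])
        if not 0.0 <= a <= 1.0:
            raise ValueError(f"alpha for layer {name!r} must be in [0, 1], got {a}")
        merged[name] = a * prev + (1.0 - a) * new_params[name]
    return merged


# Toy usage with made-up weights and made-up per-layer coefficients.
theta_prev = {"fc1": np.ones((2, 2)), "head": np.zeros(2)}
theta_new = {"fc1": np.zeros((2, 2)), "head": np.ones(2)}
merged = layerwise_interpolate(theta_prev, theta_new, {"fc1": 0.75, "head": 0.1})
print(float(merged["fc1"][0, 0]), float(merged["head"][0]))  # 0.75 0.9
```

Allowing one coefficient per layer is what separates this from plain weight averaging: a single scalar would treat the feature extractor and the classifier head identically.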

If this is right

- Continual updates to malware classifiers become feasible without storing previous samples or incurring high compute costs for full retraining.

- Detectors maintain high accuracy on both new and old threat categories, closing exploitable blind spots.

- The method scales to large benchmarks like EMBER and AZ while reducing forgetting compared to replay-based approaches.

- Both class-incremental and domain-incremental scenarios benefit, supporting evolving threat landscapes.

Where Pith is reading between the lines

- This geometric interpolation strategy might apply to other continual learning problems where task optima are similarly connected, such as in image classification or natural language processing.

- If the low-loss path property holds more generally, it could reduce dependence on data replay across security and other dynamic domains.

- Further tests on additional malware datasets or real-time streaming scenarios would help validate the scalability.

Load-bearing premise

Warm-started task optima in the model parameter space are connected by low-loss paths that permit effective interpolation.
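One standard way to probe this premise, following the linear-mode-connectivity literature, is to evaluate the loss at evenly spaced points on the straight line between two solutions and report the largest excess over the straight-line interpolation of the endpoint losses. The sketch below assumes flat NumPy parameter vectors and a caller-supplied loss_fn (a hypothetical interface). In the analysis the referee requests, loss_fn would evaluate the model on held-out data from the earlier task; the 1-D toy losses here are only illustrative stand-ins.

```python
import numpy as np


def loss_barrier(loss_fn, theta_a, theta_b, num_points=21):
    """Largest excess loss on the straight line from theta_a to theta_b.

    barrier = max over alpha of
        L((1 - alpha) * theta_a + alpha * theta_b)
        - ((1 - alpha) * L(theta_a) + alpha * L(theta_b))
    Returns the barrier plus the sampled alphas and path losses (for plotting).
    """
    alphas = np.linspace(0.0, 1.0, num_points)
    loss_a, loss_b = loss_fn(theta_a), loss_fn(theta_b)
    path = np.array([loss_fn((1.0 - a) * theta_a + a * theta_b) for a in alphas])
    chord = (1.0 - alphas) * loss_a + alphas * loss_b
    return float(np.max(path - chord)), alphas, path


# Toy 1-D losses standing in for "prior-task loss as a function of the weights".
def convex_loss(theta):
    return float(np.sum(theta ** 2))


def double_well_loss(theta):
    # Two minima at -1 and +1 separated by a bump of height 1 at 0.
    return float(np.sum((theta ** 2 - 1.0) ** 2))


theta_a, theta_b = np.array([-1.0]), np.array([1.0])
barrier_convex, _, _ = loss_barrier(convex_loss, theta_a, theta_b)
barrier_well, _, _ = loss_barrier(double_well_loss, theta_a, theta_b)
print(barrier_convex, barrier_well)  # 0.0 1.0
```

A barrier near zero is what "connected by a low-loss path" means operationally; a large positive barrier measured on prior-task data would undercut the premise.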

What would settle it

An experiment where applying the layer-wise interpolation between two consecutive task optima causes a large drop in accuracy on the first task's test set, comparable to or worse than naive fine-tuning.
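The falsification test can be rehearsed on a toy problem before running it on real detectors. The sketch below is entirely hypothetical: two synthetic 2-D Gaussian tasks stand in for consecutive malware tasks, a NumPy logistic regression stands in for the detector, and a single global coefficient of 0.5 stands in for FreeMOCA's layer-wise coefficients. The learning rate and step count are arbitrary. It warm-starts task-2 training from the task-1 solution, then compares first-task accuracy of the fine-tuned weights against the interpolated weights.

```python
import numpy as np

rng = np.random.default_rng(0)


def make_task(center, n=400):
    """Two Gaussian classes at -center and +center (synthetic stand-in data)."""
    c = np.asarray(center, dtype=float)
    X = np.vstack([rng.normal(-c, 1.0, size=(n, 2)), rng.normal(c, 1.0, size=(n, 2))])
    y = np.concatenate([np.zeros(n), np.ones(n)])
    return X, y


def add_bias(X):
    return np.hstack([X, np.ones((len(X), 1))])


def train_logreg(X, y, w_init, lr=0.1, steps=500):
    """Full-batch gradient descent on the logistic loss, starting from w_init."""
    Xb, w = add_bias(X), w_init.copy()
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-Xb @ w))
        w -= lr * Xb.T @ (p - y) / len(y)
    return w


def accuracy(w, X, y):
    return float(np.mean((add_bias(X) @ w > 0) == (y == 1)))


# Task 1 separates the classes along x1, task 2 along x2 (a crude domain shift).
X1, y1 = make_task([2.0, 0.0])
X2, y2 = make_task([0.0, 2.0])

w_task1 = train_logreg(X1, y1, np.zeros(3))
w_finetuned = train_logreg(X2, y2, w_task1)  # warm start from the task-1 solution
w_interp = 0.5 * w_task1 + 0.5 * w_finetuned  # single global coefficient

for name, w in [("task-1 model", w_task1), ("fine-tuned", w_finetuned), ("interpolated", w_interp)]:
    print(f"{name:>13}: task-1 acc {accuracy(w, X1, y1):.3f}, task-2 acc {accuracy(w, X2, y2):.3f}")
```

The premise would be in trouble if the interpolated row showed task-1 accuracy no better than the fine-tuned row. With real models, the same comparison would use the first task's held-out test set and the actual interpolated and fine-tuned checkpoints.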


Original abstract

As over 200 million new malware samples are identified each year, antivirus systems must continuously adapt to the evolving threat landscape. However, retraining solely on new samples leads to catastrophic forgetting and exploitable blind spots, while retraining on the entire dataset incurs substantial computational cost. We propose FreeMOCA, a memory- and compute-efficient continual learning framework for malicious code analysis that preserves prior knowledge via adaptive layer-wise interpolation between consecutive task updates, leveraging the fact that warm-started task optima are connected by low-loss paths in parameter space. We evaluate FreeMOCA in both class-incremental (Class-IL) and domain-incremental (Domain-IL) settings on large-scale Windows (EMBER) and Android (AZ) malware benchmarks. FreeMOCA achieves substantial gains in Class-IL, outperforming 11 baselines on both EMBER and AZ benchmarks. It also significantly reduces forgetting, achieving the best retention across baselines, and improving accuracy by up to 42% and 37% on EMBER and AZ, respectively. These results demonstrate that warm-started interpolation in parameter space provides a scalable and effective alternative to replay for continual malware detection. Code is available at: https://github.com/IQSeC-Lab/FreeMOCA.
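The abstract reports retention and reduced forgetting without defining the metric on this page. A definition commonly used in the continual-learning literature, average forgetting, is sketched below; it is an assumption that the paper uses exactly this metric. Here acc[i][j] is the accuracy on task j after training on task i, and the example matrix is made up.

```python
import numpy as np


def average_forgetting(acc):
    """Average forgetting over all tasks except the last.

    acc[i, j] is accuracy on task j measured after training on task i.
    Forgetting of task j = best accuracy it reached before the final task
    minus its accuracy after the final task.
    """
    acc = np.asarray(acc, dtype=float)
    T = acc.shape[0]
    if acc.shape != (T, T):
        raise ValueError("acc must be a square T x T matrix")
    if T < 2:
        return 0.0
    drops = [acc[j:T - 1, j].max() - acc[T - 1, j] for j in range(T - 1)]
    return float(np.mean(drops))


# Made-up 3-task accuracy matrix (rows: after task i; columns: task j).
acc = [[0.90, 0.00, 0.00],
       [0.60, 0.95, 0.00],
       [0.50, 0.70, 0.90]]
print(average_forgetting(acc))  # ≈ 0.325
```

Lower is better; a method with strong retention keeps the final row close to the best accuracy each task ever reached.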

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes FreeMOCA, a memory- and compute-efficient continual learning framework for malicious code analysis. It preserves prior knowledge by performing adaptive layer-wise interpolation between consecutive task optima in parameter space, based on the assumption that warm-started optima are connected by low-loss paths. The method is evaluated in Class-IL and Domain-IL settings on the EMBER (Windows) and AZ (Android) malware benchmarks, claiming to outperform 11 baselines, achieve the best retention, and deliver accuracy gains of up to 42% on EMBER and 37% on AZ.

Significance. If the low-loss connectivity premise holds for malware models, FreeMOCA offers a practical, replay-free alternative for handling the high volume of new malware samples, which is valuable for resource-constrained antivirus systems. The open-sourced code is a positive factor for reproducibility. The result would be significant for continual learning in security domains if the central assumption is empirically supported.

major comments (2)

- [Method section (around the interpolation description)] The central claim in the method description rests on the premise that warm-started task optima are connected by low-loss paths in parameter space, enabling interpolation to avoid forgetting. No empirical verification is provided, such as loss curves or barrier analysis along the interpolation path on held-out prior-task data for the EMBER and AZ feature distributions and model architectures. This check is load-bearing because domain shifts in malware data can induce barriers that would invalidate the interpolation step.

- [Evaluation and results section] The quantitative results section reports outperformance over 11 baselines and specific accuracy/retention gains but provides no error bars, statistical significance tests, or full details on baseline implementations and the exact adaptive interpolation procedure. Without these, the robustness of the claimed 42% and 37% improvements cannot be assessed.

minor comments (2)

- [Abstract] The abstract could briefly clarify how layer-wise adaptation is computed (e.g., the selection criterion for interpolation weights).

- [Results tables and figures] Ensure all tables include standard deviations or confidence intervals and that figure captions fully describe the plotted quantities.

Simulated Author's Rebuttal

We thank the referee for the detailed and constructive feedback on our paper. We address the major comments point by point below, agreeing to incorporate additional analyses and details to enhance the manuscript's rigor.

Point-by-point responses

- Referee: [Method section (around the interpolation description)] The central claim in the method description rests on the premise that warm-started task optima are connected by low-loss paths in parameter space, enabling interpolation to avoid forgetting. No empirical verification is provided, such as loss curves or barrier analysis along the interpolation path on held-out prior-task data for the EMBER and AZ feature distributions and model architectures. This check is load-bearing because domain shifts in malware data can induce barriers that would invalidate the interpolation step.

Authors: We concur that empirical support for the low-loss path assumption is important, particularly given potential domain shifts in malware distributions. Although the original manuscript adopts this premise from prior continual learning literature, we will add a new subsection with barrier analysis and loss curves along interpolation paths, using held-out data from previous tasks on both the EMBER and AZ datasets. This analysis will show whether significant barriers arise for the model architectures used. revision: yes

- Referee: [Evaluation and results section] The quantitative results section reports outperformance over 11 baselines and specific accuracy/retention gains but provides no error bars, statistical significance tests, or full details on baseline implementations and the exact adaptive interpolation procedure. Without these, the robustness of the claimed 42% and 37% improvements cannot be assessed.

Authors: We agree that including error bars, statistical tests, and more implementation details will improve the reliability assessment of our results. In the revised version, we will report mean accuracies with standard deviations over 5 random seeds, include paired t-tests or similar for significance against baselines, provide expanded descriptions of how each baseline was implemented (with references to their original papers and our adaptations), and detail the exact adaptive layer-wise interpolation procedure, including how the interpolation coefficients are computed per layer. revision: yes
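A minimal sketch of the promised reporting, using only the Python standard library: mean and sample standard deviation over seeds, plus a paired t statistic on per-seed differences (seed i of the method paired with seed i of a baseline). The helper names and the accuracy values are hypothetical placeholders, not results from the paper. A p-value additionally requires the Student t distribution, for example via scipy.stats.ttest_rel, which this sketch does not call.

```python
import math
import statistics


def mean_std(values):
    """Mean and sample standard deviation (n - 1 denominator)."""
    return statistics.mean(values), statistics.stdev(values)


def paired_t_statistic(a, b):
    """Paired t statistic for matched runs a[i], b[i]; returns (t, df = n - 1)."""
    if len(a) != len(b) or len(a) < 2:
        raise ValueError("need two equal-length sequences with at least 2 entries")
    diffs = [x - y for x, y in zip(a, b)]
    sd = statistics.stdev(diffs)
    if sd == 0:
        raise ValueError("differences have zero variance; t is undefined")
    return statistics.mean(diffs) / (sd / math.sqrt(len(diffs))), len(diffs) - 1


# Placeholder per-seed accuracies for 5 seeds (illustrative only).
method = [0.81, 0.83, 0.80, 0.82, 0.84]
baseline = [0.78, 0.79, 0.79, 0.77, 0.80]
m, s = mean_std(method)
t, df = paired_t_statistic(method, baseline)
print(f"method: {m:.3f} ± {s:.3f}; paired t = {t:.2f} (df = {df})")
```

Pairing by seed removes seed-to-seed variance shared by both methods, which is why a paired test is preferred over comparing two independent means here.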

Circularity Check

No circularity: method applies external connectivity property without self-referential reduction


The paper frames its core mechanism as leveraging a property reported in prior work, namely that warm-started task optima are connected by low-loss paths, and then applies adaptive layer-wise interpolation for continual learning on malware benchmarks. No equations, fitted parameters, or self-citations are shown that reduce the claimed predictions or gains to the inputs by construction. The derivation remains an application of an external result to the EMBER/AZ tasks, with the reported empirical outperformance serving as independent validation rather than a tautological output.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: warm-started task optima are connected by low-loss paths in parameter space.

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean: washburn_uniqueness_aczel (tag: unclear)

  Relation between the paper passage and the cited Recognition theorem is unclear.

  Paper passage: "FreeMOCA leverages Linear Mode Connectivity (LMC), which suggests that different solutions can be connected through low-loss paths... warm-started task optima are connected by low-loss paths in parameter space."

- IndisputableMonolith/Foundation/RealityFromDistinction.lean: reality_from_one_distinction (tag: unclear)

  Relation between the paper passage and the cited Recognition theorem is unclear.

  Paper passage: "Proposition 1 (Local Linear Connectivity)... second-order Taylor expansion around θ_{t−1}"
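The statement of Proposition 1 is elided in the quoted passage, so it is not reproduced here. For orientation only, the generic second-order Taylor expansion that such an argument starts from is shown below. It assumes a previous-task loss L_{t−1}, the update Δ = θ_t − θ_{t−1}, and a step fraction λ ∈ [0, 1]; this is a textbook identity, not the paper's proposition.

```latex
\mathcal{L}_{t-1}\!\left(\theta_{t-1} + \lambda\Delta\right)
  \approx \mathcal{L}_{t-1}(\theta_{t-1})
  + \lambda\,\nabla\mathcal{L}_{t-1}(\theta_{t-1})^{\top}\Delta
  + \frac{\lambda^{2}}{2}\,\Delta^{\top} H_{t-1}\,\Delta,
\qquad \Delta = \theta_t - \theta_{t-1},
\quad H_{t-1} = \nabla^{2}\mathcal{L}_{t-1}(\theta_{t-1}).
```

If θ_{t−1} is approximately a stationary point of the previous task's loss, the gradient term is close to zero, and the increase in that loss along the path is governed by the curvature term (λ²/2) Δᵀ H_{t−1} Δ. That term stays small when the update moves mostly along low-curvature directions or when λ is small.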

What do these tags mean?

- matches: The paper's claim is directly supported by a theorem in the formal canon.

- supports: The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.

- extends: The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.

- uses: The paper appears to rely on the theorem as machinery.

- contradicts: The paper's claim conflicts with a theorem or certificate in the canon.

- unclear: Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.

Reference graph

Works this paper leans on

- [1] Samuel Ainsworth, Jonathan Hayase, and Siddhartha Srinivasa. Git Re-Basin: Merging models modulo permutation symmetries. In International Conference on Learning Representations (ICLR), 2023.

- [2] Kevin Allix, Tegawendé F. Bissyandé, Jacques Klein, and Yves Le Traon. AndroZoo: Collecting millions of Android apps for the research community. In International Conference on Mining Software Repositories (MSR), 2016.

- [3] Hyrum S. Anderson and Phil Roth. EMBER: An open dataset for training static PE malware machine learning models. arXiv:1804.04637, 2018.

- [4] Daniel Arp, Michael Spreitzenbarth, Malte Hübner, Hugo Gascon, and Konrad Rieck. Drebin: Effective and explainable detection of Android malware in your pocket. In Network and Distributed System Security Symposium (NDSS), 2014.

- [5] AV-TEST. Malware statistics and trends report. https://www.av-test.org/en/statistics/malware/, 2025.

- [6] Ali Behrouz, Meisam Razaviyayn, Peilin Zhong, and Vahab Mirrokni. Nested learning: The illusion of deep learning architectures. In Advances in Neural Information Processing Systems (NeurIPS), 2025.

- [7] Prashant Bhat, Bahram Zonooz, and Elahe Arani. Task-aware information routing from common representation space in lifelong learning. In International Conference on Learning Representations (ICLR), 2023.

- [8] Léon Bottou, Frank E. Curtis, and Jorge Nocedal. Optimization methods for large-scale machine learning. SIAM Review, 2018.

- [10] Dongkyu Cho, Taesup Moon, Rumi Chunara, Kyunghyun Cho, and Sungmin Cha. Forget forgetting: Continual learning in a world of abundant memory, 2026. URL https://arxiv.org/abs/2502.07274.

- [11] Theo Chow, Mario D'Onghia, Lorenz Linhardt, Zeliang Kan, Daniel Arp, Lorenzo Cavallaro, and Fabio Pierazzi. Beyond the TESSERACT: Trustworthy dataset curation for sound evaluations of Android malware classifiers. In IEEE Conference on Secure and Trustworthy Machine Learning (SaTML), 2026.

- [12] Natalia Díaz-Rodríguez, Vincenzo Lomonaco, David Filliat, and Davide Maltoni. Don't forget, there is more than forgetting: New metrics for continual learning. arXiv preprint arXiv:1810.13166, 2018.

- [13] Thang Doan, Seyed Iman Mirzadeh, and Mehrdad Farajtabar. Continual learning beyond a single model. In Conference on Lifelong Learning Agents (CoLLAs), 2023.

- [14] Felix Draxler, Kambis Veschgini, Manfred Salmhofer, and Fred Hamprecht. Essentially no barriers in neural network energy landscape. In International Conference on Machine Learning (ICML), 2018.

- [15] Jonathan Frankle, Gintare Karolina Dziugaite, Daniel Roy, and Michael Carbin. Linear mode connectivity and the lottery ticket hypothesis. In International Conference on Machine Learning (ICML), 2020.

- [16] Robert M. French. Catastrophic forgetting in connectionist networks. Trends in Cognitive Sciences, 1999.

- [17] Timur Garipov, Pavel Izmailov, Dmitrii Podoprikhin, Dmitry P. Vetrov, and Andrew G. Wilson. Loss surfaces, mode connectivity, and fast ensembling of DNNs. In Advances in Neural Information Processing Systems (NeurIPS), 2018.

- [18] Md Ahsanul Haque, Md Mahmuduzzaman Kamol, Suresh Kumar Amalapuram, Vladik Kreinovich, and Mohammad Saidur Rahman. CITADEL: A semi-supervised active learning framework for malware detection under continuous distribution drift. arXiv preprint arXiv:2511.11979, 2025.

- [19] Md Ahsanul Haque, Ismail Hossain, Md Mahmuduzzaman Kamol, Md Jahangir Alam, Suresh Kumar Amalapuram, Sajedul Talukder, and Mohammad Saidur Rahman. LAMDA: A longitudinal Android malware benchmark for concept drift analysis. In International Conference on Learning Representations (ICLR), 2026.

- [20] Sergey Ioffe and Christian Szegedy. Batch Normalization: Accelerating deep network training by reducing internal covariate shift. In International Conference on Machine Learning (ICML), 2015.

- [21] Yujin Jo and Taesup Kim. Memory-free continual learning with null space adaptation for zero-shot vision-language models. In International Conference on Learning Representations (ICLR), 2026.

- [23] James Kirkpatrick, Razvan Pascanu, Neil Rabinowitz, Joel Veness, Guillaume Desjardins, Andrei A. Rusu, Kieran Milan, John Quan, Tiago Ramalho, Agnieszka Grabska-Barwinska, et al. Overcoming catastrophic forgetting in neural networks. Proceedings of the National Academy of Sciences (PNAS), 2017.

- [24] Eduard Kovacs. FireEye MalwareGuard uses machine learning to detect malware. https://www.securityweek.com/fireeye-malwareguard-uses-machine-learning-detect-malware/, 2018.

- [25] Jędrzej Kozal, Jan Wasilewski, Bartosz Krawczyk, and Michał Woźniak. Continual learning with weight interpolation. In IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2024.

- [26] Timothée Lesort, Thomas George, and Irina Rish. Continual learning in deep networks: An analysis of the last layer. arXiv preprint arXiv:2106.01834, 2021.

- [27] Zhizhong Li and Derek Hoiem. Learning without forgetting. IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI), 2017.

- [28] Lute Lillo and Nick Cheney. Activation function design sustains plasticity in continual learning. In International Conference on Learning Representations (ICLR), 2026.

- [29] Ziming Liu, Yixuan Wang, Sachin Vaidya, Fabian Ruehle, James Halverson, Marin Soljačić, Thomas Y. Hou, and Max Tegmark. KAN: Kolmogorov-Arnold networks. arXiv preprint arXiv:2404.19756, 2024.

- [30] James Martens. Deep learning via Hessian-free optimization. In International Conference on Machine Learning (ICML), 2010.

- [31] Brendan McMahan, Eider Moore, Daniel Ramage, Seth Hampson, and Blaise Aguera y Arcas. Communication-efficient learning of deep networks from decentralized data. In Artificial Intelligence and Statistics (AISTATS), 2017.

- [32] Seyed Iman Mirzadeh, Mehrdad Farajtabar, Dilan Gorur, Razvan Pascanu, and Hassan Ghasemzadeh. Linear mode connectivity in multitask and continual learning. arXiv preprint arXiv:2010.04495, 2020.

- [33] Seyed Iman Mirzadeh, Mehrdad Farajtabar, Dilan Gorur, Razvan Pascanu, and Hassan Ghasemzadeh. Linear mode connectivity in multitask and continual learning. In International Conference on Learning Representations (ICLR), 2021.

- [34] Behnam Neyshabur, Hanie Sedghi, and Chiyuan Zhang. What is being transferred in transfer learning? In Advances in Neural Information Processing Systems (NeurIPS), 2020.

- [35] Jimin Park, AHyun Ji, Minji Park, Mohammad Saidur Rahman, and Se Eun Oh. MalCL: Leveraging GAN-based generative replay to combat catastrophic forgetting in malware classification. In AAAI Conference on Artificial Intelligence, 2025.

- [36] Fuli Qiao and Mehrdad Mahdavi. Learn more, but bother less: Parameter efficient continual learning. In Advances in Neural Information Processing Systems (NeurIPS), 2024.

- [37] Maithra Raghu, Chiyuan Zhang, Jon Kleinberg, and Samy Bengio. Transfusion: Understanding transfer learning for medical imaging. In Advances in Neural Information Processing Systems (NeurIPS), 2019.

- [38] Mohammad Saidur Rahman, Scott Coull, and Matthew Wright. On the limitations of continual learning for malware classification. In Conference on Lifelong Learning Agents (CoLLAs), 2022.

- [39] Mohammad Saidur Rahman, Scott Coull, Qi Yu, and Matthew Wright. MADAR: Efficient continual learning for malware analysis with distribution-aware replay. In Conference on Applied Machine Learning in Information Security (CAMLIS), 2025.

- [40] Sylvestre-Alvise Rebuffi, Alexander Kolesnikov, Georg Sperl, and Christoph H. Lampert. iCaRL: Incremental classifier and representation learning. In Conference on Computer Vision and Pattern Recognition (CVPR), 2017.

- [41] Weijieying Ren, Xinlong Li, Lei Wang, Tianxiang Zhao, and Wei Qin. Analyzing and reducing catastrophic forgetting in parameter efficient tuning. arXiv preprint arXiv:2402.18865, 2024.

- [42] David Rolnick, Arun Ahuja, Jonathan Schwarz, Timothy Lillicrap, and Gregory Wayne. Experience replay for continual learning. In Advances in Neural Information Processing Systems (NeurIPS), 2019.

- [43] Ahmed Sabbah, Radi Jarrar, Samer Zein, and David Mohaisen. Understanding concept drift with deprecated permissions in Android malware detection. IEEE Transactions on Dependable and Secure Computing (TDSC), 2026.

- [44] Minhyuk Seo, Hyunseo Koh, and Jonghyun Choi. Budgeted online continual learning by adaptive layer freezing and frequency-based sampling. In International Conference on Learning Representations (ICLR), 2025.

- [45] Timothy Tadros, Giri P. Krishnan, Ramyaa Ramyaa, and Maxim Bazhenov. Sleep-like unsupervised replay reduces catastrophic forgetting in artificial neural networks. Nature Communications, 2022.

- [46] Antti Tarvainen and Harri Valpola. Mean teachers are better role models: Weight-averaged consistency targets improve semi-supervised deep learning results. In Advances in Neural Information Processing Systems (NeurIPS), 2017.

- [47] Norman Tatro, Pin-Yu Chen, Payel Das, Igor Melnyk, Prasanna Sattigeri, and Rongjie Lai. Optimizing mode connectivity via neuron alignment. In Advances in Neural Information Processing Systems (NeurIPS), 2020.

- [48] Gido M. van de Ven, Hava T. Siegelmann, and Andreas S. Tolias. Brain-inspired replay for continual learning with artificial neural networks. Nature Communications, 2020.

- [49] Gido M. van de Ven, Hava T. Siegelmann, and Andreas S. Tolias. Brain-inspired replay for continual learning with artificial neural networks. Nature Communications, 2020.

- [50] VirusTotal. VirusTotal – Stats. https://www.virustotal.com/gui/stats, 2025.

- [51] Qin Wang, Olga Fink, Luc Van Gool, and Dengxin Dai. Continual test-time domain adaptation. In IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2022.

- [52] Zheng Wang, Wanhao Yu, Li Yang, and Sen Lin. Rethinking continual learning with progressive neural collapse. In International Conference on Learning Representations (ICLR), 2026.

- [53] Haitao Wen, Haoyang Cheng, Heqian Qiu, Lanxiao Wang, Lili Pan, and Hongliang Li. Optimizing mode connectivity for class incremental learning. In International Conference on Machine Learning (ICML), 2023.

- [54] Jason Yosinski, Jeff Clune, Yoshua Bengio, and Hod Lipson. How transferable are features in deep neural networks? In Advances in Neural Information Processing Systems (NeurIPS), 2014.

- [55] Friedemann Zenke, Ben Poole, and Surya Ganguli. Continual learning through synaptic intelligence. Journal of Machine Learning Research (JMLR), 2017.

- [56] Haiyan Zhao, Tianyi Zhou, Guodong Long, Jing Jiang, and Chengqi Zhang. Does continual learning equally forget all parameters? In International Conference on Machine Learning (ICML), 2023.

- [57] Zhipeng Zhou, Ziqiao Meng, Pengcheng Wu, Peilin Zhao, and Chunyan Miao. Exploring tradeoffs through mode connectivity for multi-task learning. In Neural Information Processing Systems (NeurIPS), 2026.

A. Discussion and Limitations. FreeMOCA is designed for a specific CL regime: sequential tasks with observable boundaries and sufficient representational conti...
