
MVB-Grasp: Minimum-Volume-Box Filtering of Diffusion-based Grasps for Frontal Manipulation

Pith reviewed 2026-05-12 03:21 UTC · model grok-4.3

The pith

A minimum-volume bounding box filter raises diffusion grasp success from 24.7% to 59.3% in simulated frontal manipulation on a workspace-constrained robot arm.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

MVB-Grasp adds an MVBB geometric filter that exploits oriented bounding-box face normals to reject infeasible grasps in linear time, together with a re-scoring function that blends discriminator scores and face-alignment geometry at a calibrated weight of α = 0.85. The combination yields a 2.4× higher success rate than vanilla GraspGen on the Z1 arm (59.3% vs. 24.7% over 81 MuJoCo episodes).

What carries the argument

The MVBB-based geometric filter, which approximates the minimum-volume bounding box with a PCA-oriented box to obtain object face normals and uses them to discard grasps misaligned with accessible frontal directions.
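A minimal sketch of the PCA box fit described above, in Python with NumPy. It assumes the object's points have already been segmented from the depth image; the function name `pca_obb` and its return layout are hypothetical, not taken from the paper's code. A PCA-oriented box approximates, but does not always equal, the true minimum-volume box.

```python
import numpy as np


def pca_obb(points: np.ndarray):
    """Fit a PCA-oriented bounding box to an (N, 3) point cloud (hypothetical helper).

    Returns the box center, the 3x3 matrix of principal axes (one per column),
    the half-extents along each axis, and the six outward face normals.
    """
    centroid = points.mean(axis=0)
    centered = points - centroid
    # Eigenvectors of the covariance matrix give the principal directions.
    cov = np.cov(centered, rowvar=False)
    _, eigvecs = np.linalg.eigh(cov)      # eigenvalues come back in ascending order
    axes = eigvecs[:, ::-1]               # reorder so the largest-variance axis is first
    # Express the points in the principal frame and measure extents there.
    local = centered @ axes
    lo, hi = local.min(axis=0), local.max(axis=0)
    center = centroid + axes @ ((lo + hi) / 2.0)
    half_extents = (hi - lo) / 2.0
    # Each principal axis contributes two opposite face normals.
    normals = np.concatenate([axes.T, -axes.T], axis=0)   # shape (6, 3)
    return center, axes, half_extents, normals
```

Because the extents are measured in the principal frame, the fitted box follows the object's orientation rather than the camera or world axes, which is what makes its face normals usable as approach-direction candidates.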

If this is right

- Pre-trained diffusion grasp generators can be deployed on new low-cost manipulators without retraining by adding only the MVBB filter and re-scoring step.

- The O(N) filtering step keeps the method fast enough for real-time use alongside YOLO detection and IK planning.

- Systematic variation of object distance, lateral offset, and pitch in simulation supplies a concrete protocol for measuring embodiment-specific grasp performance.

- Real-world confirmation on the physical Z1 arm shows the same reliability gains without additional model changes.
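The O(N) claim follows from comparing each of the N candidates against a constant number of box faces (six). The sketch below illustrates one way such a filter could work; the grasp representation (one unit approach vector per candidate), the z-up world frame, the "faces toward the robot" accessibility rule, and both thresholds are assumptions for illustration, not values reported in the paper.

```python
import numpy as np


def mvbb_filter(approach_dirs, face_normals, to_robot,
                max_upward=0.3, min_alignment=np.cos(np.deg2rad(30.0))):
    """Sketch of a linear-time face-alignment filter (hypothetical rules and thresholds).

    approach_dirs: (N, 3) unit vectors along which each gripper moves toward the object.
    face_normals:  (6, 3) outward unit normals of the object's bounding box.
    to_robot:      (3,) unit vector from the object toward the robot base.
    Returns a boolean keep-mask and a per-grasp alignment score in [-1, 1].
    """
    a = np.asarray(approach_dirs, dtype=float)
    n = np.asarray(face_normals, dtype=float)
    # Accessible faces: facing the robot and not the bottom face resting on the table.
    accessible = (n @ np.asarray(to_robot, dtype=float) > 0.0) & (n[:, 2] > -0.7)
    n_acc = n[accessible]
    # An aligned grasp travels along a face's inward normal (-n).
    # (N, 3) @ (3, k) -> (N, k) with k <= 6 faces, so the cost is O(N).
    if len(n_acc):
        alignment = (a @ -n_acc.T).max(axis=1)
    else:
        alignment = np.full(len(a), -1.0)
    # Reject grasps that move upward, i.e. approach from under the table plane.
    from_below = a[:, 2] > max_upward
    keep = (~from_below) & (alignment >= min_alignment)
    return keep, alignment
```

Returning the alignment score alongside the mask lets the same quantity feed a later re-scoring step without recomputing dot products.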

Where Pith is reading between the lines

- The same face-normal prior could be combined with collision or reachability checks to further cut failures on other constrained arms.

- Recalibrating the 0.85 blend weight for different robots or camera placements might extend the gains beyond the tested Z1 setup.

- If MVBB fitting proves stable across object categories, the method offers a lightweight way to adapt any diffusion grasp model to new kinematic limits.

Load-bearing premise

That the minimum-volume bounding box face normals reliably mark the grasp directions that remain reachable for the specific objects and the Z1 arm's frontal workspace constraints.

What would settle it

Repeating the 81-episode MuJoCo protocol on objects whose true graspable faces deviate from MVBB normals, or on a robot whose approach directions differ, and checking whether the 59.3% success rate collapses back toward the unfiltered baseline.
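The 81-episode count is consistent with a full factorial grid of three levels for each of the four varied factors (3 × 3 × 3 × 3 = 81): the pitch levels 0° and ±45° appear in the Table VI fragment that survives in the reference graph, and its success rates are all multiples of 1/9, which would fit nine episodes per object and pitch. That grid is an inference, not something the paper states, and the distance and lateral values below are hypothetical placeholders. A sketch of how such a protocol could be enumerated and scored per object:

```python
from collections import defaultdict
from dataclasses import dataclass
from itertools import product

# Object set from the paper; the numeric levels are hypothetical placeholders.
OBJECTS = ("cylinder", "asymmetric_box", "waterbottle")
DISTANCES_M = (0.35, 0.45, 0.55)   # hypothetical forward distances to the object
LATERAL_M = (-0.10, 0.0, 0.10)     # hypothetical lateral offsets
PITCH_DEG = (-45.0, 0.0, 45.0)     # pitch levels seen in the Table VI fragment


@dataclass(frozen=True)
class Episode:
    obj: str
    distance_m: float
    lateral_m: float
    pitch_deg: float


def build_protocol():
    """Full factorial grid: 3 objects x 3 distances x 3 offsets x 3 pitches = 81 episodes."""
    return [Episode(*levels) for levels in product(OBJECTS, DISTANCES_M, LATERAL_M, PITCH_DEG)]


def success_by_object(results):
    """results: iterable of (Episode, bool). Returns per-object and overall success in percent."""
    hits, totals = defaultdict(int), defaultdict(int)
    for episode, succeeded in results:
        totals[episode.obj] += 1
        hits[episode.obj] += int(succeeded)
    per_object = {obj: 100.0 * hits[obj] / totals[obj] for obj in totals}
    overall = 100.0 * sum(hits.values()) / sum(totals.values())
    return per_object, overall
```

For each method, one would run every `Episode` in simulation and pass the (episode, outcome) pairs to `success_by_object`, which yields the per-object breakdown alongside the aggregate rate.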

Figures

read the original abstract

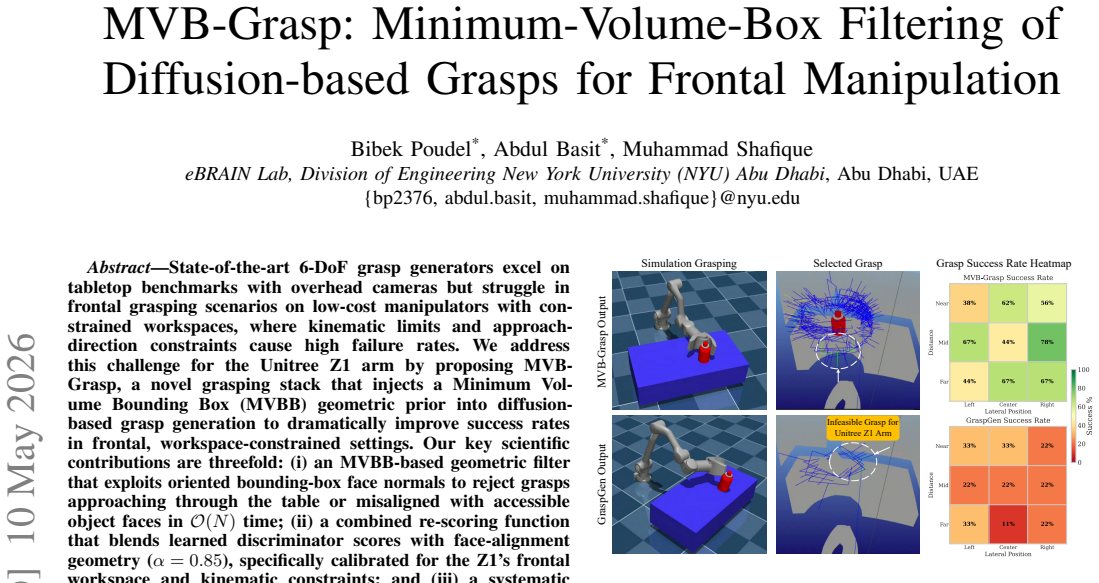

State-of-the-art 6-DoF grasp generators excel on tabletop benchmarks with overhead cameras but struggle in frontal grasping scenarios on low-cost manipulators with constrained workspaces, where kinematic limits and approach-direction constraints cause high failure rates. We address this challenge for the Unitree Z1 arm by proposing MVB-Grasp, a novel grasping stack that injects a Minimum Volume Bounding Box (MVBB) geometric prior into diffusion-based grasp generation to dramatically improve success rates in frontal, workspace-constrained settings. Our key scientific contributions are threefold: (i) an MVBB-based geometric filter that exploits oriented bounding-box face normals to reject grasps approaching through the table or misaligned with accessible object faces in O(N) time; (ii) a combined re-scoring function that blends learned discriminator scores with face-alignment geometry (α = 0.85), specifically calibrated for the Z1's frontal workspace and kinematic constraints; and (iii) a systematic MuJoCo evaluation protocol measuring grasp success across object types, distances, lateral positions, and pitch orientations to validate embodiment-specific performance. We implement MVB-Grasp on a Unitree Z1 arm with an Intel RealSense D405 camera, integrating YOLOv8 object detection, GraspGen for candidate generation, Principal Component Analysis (PCA)-based MVBB fitting, and inverse-kinematics trajectory planning. Experiments across 81 MuJoCo episodes (cylinder, asymmetric box, waterbottle) demonstrate that MVB-Grasp achieves 59.3% success versus 24.7% for vanilla GraspGen, a 2.4× improvement, by filtering geometrically infeasible candidates and prioritizing face-aligned grasps suited to the Z1's frontal approach constraints. Real-world trials confirm that the MVBB prior substantially improves grasp reliability on constrained, low-cost manipulators without requiring model retraining.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript presents MVB-Grasp, a post-processing stack that augments diffusion-based grasp generation (GraspGen) with a Minimum-Volume Bounding Box (MVBB) geometric filter and a blended re-scoring function (α=0.85) to improve frontal grasping success on the Unitree Z1 arm under workspace constraints. The central empirical claim is a 2.4× improvement in grasp success (59.3% vs. 24.7% for vanilla GraspGen) across 81 MuJoCo episodes on three object classes (cylinder, asymmetric box, waterbottle), supported by real-world hardware trials using YOLOv8 detection, PCA-based MVBB fitting, and IK planning.

Significance. If the results hold under more rigorous controls, the work demonstrates a practical, training-free way to adapt learned 6-DoF grasp generators to specific robot embodiments and approach constraints by injecting classical geometric priors. This hybrid approach could be broadly useful for low-cost manipulators where kinematic limits cause high failure rates in frontal scenarios.

major comments (3)

- [Experiments] Experiments section: aggregate success rates of 59.3% vs. 24.7% are reported without per-object breakdowns, standard deviations across random seeds, or an ablation that removes the MVBB geometric term. This leaves open whether the observed gain is driven by the filter or by other unstated factors in the 81-episode protocol.

- [Method (MVBB geometric filter)] MVBB filter description: for the cylinder and waterbottle, PCA-derived MVBB face normals are principal-axis approximations that need not align with actual surface normals or the Z1's frontal approach vectors; the paper provides no validation that the filter correctly rejects table-penetrating grasps or retains viable side grasps on these non-box objects.

- [Method (combined re-scoring)] Re-scoring function: the blending parameter α=0.85 is stated as calibrated for the Z1 but no calibration procedure, sensitivity analysis, or justification for this specific value is given, raising the risk that the reported improvement is tied to the particular test scenarios rather than a robust prior.
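Before per-seed results exist, a binomial confidence interval gives one rough sense of how much the aggregate numbers can move. Assuming each method was run on all 81 episodes, the reported rates correspond to 48/81 (59.3%) and 20/81 (24.7%) successes. The sketch computes 95% Wilson score intervals for both; it treats episodes as independent trials and ignores that both methods face the same scenarios, for which a paired test such as McNemar's on per-episode outcomes would be more appropriate.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96):
    """Wilson score interval for a binomial proportion (z = 1.96 gives roughly 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    z2 = z * z
    denom = 1.0 + z2 / trials
    center = (p + z2 / (2.0 * trials)) / denom
    half = z * math.sqrt(p * (1.0 - p) / trials + z2 / (4.0 * trials * trials)) / denom
    return max(0.0, center - half), min(1.0, center + half)


# Counts inferred from the reported percentages, assuming 81 episodes per method.
mvb = wilson_interval(48, 81)       # 48/81 = 59.26%
vanilla = wilson_interval(20, 81)   # 20/81 = 24.69%
print(f"MVB-Grasp: {mvb[0]:.3f} to {mvb[1]:.3f}; GraspGen: {vanilla[0]:.3f} to {vanilla[1]:.3f}")
```

Under these assumptions the two intervals do not overlap, which suggests the gap is not an artifact of 81 episodes alone; it says nothing, however, about which component of the pipeline produces the gap, which is what the requested ablation would address.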

minor comments (2)

- [Experiments] The evaluation protocol (distances, lateral positions, pitch orientations) is summarized but could be tabulated or pseudocoded for exact reproducibility.

- [Method] The re-scoring equation should be written explicitly rather than described in prose.
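One possible explicit form, assuming a convex combination in which α weights the discriminator score d_i of candidate i and (1 − α) weights a face-alignment term g_i computed from the candidate's unit approach direction â_i and the outward normals n̂_f of the accessible box faces F_acc. The prose does not say which term α multiplies or how g_i is normalized, so both choices here are assumptions.

```latex
s_i = \alpha \, d_i + (1 - \alpha) \, g_i, \qquad
g_i = \max_{f \in \mathcal{F}_{\mathrm{acc}}} \left( -\hat{\mathbf{a}}_i \cdot \hat{\mathbf{n}}_f \right), \qquad
\alpha = 0.85
```

Under this reading g_i lies in [−1, 1]; if the discriminator score lives on a different scale, the effective weighting differs from the nominal 0.85/0.15 split, which is one more reason to state the normalization alongside the equation.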

Simulated Author's Rebuttal

We thank the referee for the constructive feedback. We address each major comment below and will revise the manuscript accordingly to improve clarity and rigor.



Referee: [Experiments] Experiments section: aggregate success rates of 59.3% vs. 24.7% are reported without per-object breakdowns, standard deviations across random seeds, or an ablation that removes the MVBB geometric term. This leaves open whether the observed gain is driven by the filter or by other unstated factors in the 81-episode protocol.

Authors: We agree that aggregate results alone are insufficient. The revised manuscript will report per-object success rates for cylinder, asymmetric box, and waterbottle; include standard deviations computed over at least five random seeds; and add an ablation study that disables the MVBB geometric filter while keeping the re-scoring function. These additions will isolate the filter's contribution and address potential confounding factors in the evaluation protocol. revision: yes


Referee: [Method (MVBB geometric filter)] MVBB filter description: for the cylinder and waterbottle, PCA-derived MVBB face normals are principal-axis approximations that need not align with actual surface normals or the Z1's frontal approach vectors; the paper provides no validation that the filter correctly rejects table-penetrating grasps or retains viable side grasps on these non-box objects.

Authors: The MVBB filter operates on the oriented bounding-box faces obtained via PCA to identify accessible frontal approach directions compatible with the Z1 workspace, rather than requiring exact surface-normal alignment. We will add a dedicated validation subsection with qualitative examples and quantitative counts of rejected table-penetrating grasps versus retained side grasps for the cylinder and waterbottle, drawn from the existing MuJoCo episodes. revision: yes
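A quick numerical check bears on this exchange. For a rotationally symmetric object such as the cylinder, the two radial principal directions have equal variance, so PCA does not determine the box's orientation about the symmetry axis. The sketch samples an idealized cylinder surface (hypothetical dimensions, in meters) and inspects the covariance eigenvalues with NumPy.

```python
import numpy as np


def cylinder_surface(radius=0.03, height=0.20, n_theta=64, n_z=21):
    """Evenly spaced points on the lateral surface of an upright cylinder."""
    theta = np.linspace(0.0, 2.0 * np.pi, n_theta, endpoint=False)
    z = np.linspace(-height / 2.0, height / 2.0, n_z)
    t, zz = np.meshgrid(theta, z)
    return np.column_stack([radius * np.cos(t).ravel(), radius * np.sin(t).ravel(), zz.ravel()])


pts = cylinder_surface()
eigvals, eigvecs = np.linalg.eigh(np.cov(pts, rowvar=False))   # ascending eigenvalues
# The two radial eigenvalues coincide (both about radius**2 / 2), so any orthonormal
# pair in the x-y plane is an equally valid choice of principal axes: the box's side-face
# normals are then fixed by sampling noise rather than by the object's shape.
print("eigenvalues:", eigvals)
print("radial ratio:", eigvals[1] / eigvals[0])
```

On real, noisy point clouds the ratio will be near but not exactly 1, and the chosen side-face normals can swing between frames, which is why counts of retained side grasps on the cylinder and waterbottle would be informative.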


Referee: [Method (combined re-scoring)] Re-scoring function: the blending parameter α=0.85 is stated as calibrated for the Z1 but no calibration procedure, sensitivity analysis, or justification for this specific value is given, raising the risk that the reported improvement is tied to the particular test scenarios rather than a robust prior.

Authors: The value α=0.85 was selected via preliminary grid search on a small set of Z1-specific trials to maximize success under frontal constraints. The revised methods section will describe this calibration procedure, include a sensitivity plot of success rate versus α over [0.6, 0.95], and discuss robustness across the tested object classes and distances. revision: yes
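A sketch of the α sweep described in this response, assuming the blended score s = α·d + (1 − α)·g with α on the discriminator term, execution of the single top-ranked candidate per scenario, and precomputed success labels standing in for re-running MuJoCo episodes (in practice each α would need fresh rollouts). The function names are hypothetical. If α is chosen on the same episodes used for reporting, the resulting success rate is optimistically biased, so a held-out split would make the sensitivity plot more convincing.

```python
import numpy as np


def rescore(disc, align, alpha):
    """Assumed blend: alpha weights the discriminator score, (1 - alpha) the alignment term."""
    return alpha * np.asarray(disc, dtype=float) + (1.0 - alpha) * np.asarray(align, dtype=float)


def sweep_alpha(scenarios, alphas):
    """scenarios: list of (disc, align, success) arrays with one entry per candidate grasp.

    For each alpha, executes the top-ranked candidate in every scenario and
    returns the fraction of scenarios whose chosen candidate succeeded.
    """
    rates = {}
    for alpha in alphas:
        wins = 0
        for disc, align, success in scenarios:
            best = int(np.argmax(rescore(disc, align, alpha)))
            wins += bool(success[best])
        rates[round(float(alpha), 2)] = wins / len(scenarios)
    return rates
```

Sweeping `np.linspace(0.6, 0.95, 8)` covers the stated range in steps of 0.05; plotting the returned rates against α gives the sensitivity curve the revision promises.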

Circularity Check

No circularity: geometric prior and calibrated hyperparameter are independent of diffusion outputs; success rates are measured empirically


The paper presents an engineering method (an MVBB face-normal filter plus α-blended re-scoring) applied as post-processing to an external diffusion grasp generator (GraspGen). The filter operates on PCA-derived bounding-box geometry in O(N) time, and the value α = 0.85 is stated as calibrated for the Z1 embodiment. Neither step is derived from the diffusion model scores, and the reported 59.3% success rate does not reduce to the input grasp candidates by construction. Experimental results are obtained directly from 81 MuJoCo episodes and real-world trials rather than from any first-principles prediction or self-referential equation. No self-citations, uniqueness theorems, or ansatzes are invoked to justify the core claims.

Axiom & Free-Parameter Ledger

free parameters (1)

- alpha = 0.85

axioms (2)

- Domain assumption: oriented bounding box face normals indicate accessible approach directions for frontal grasping.

- Domain assumption: the diffusion model generates candidate grasps that can be filtered geometrically without loss of viable options.

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean, theorem washburn_uniqueness_aczel. Relation between the paper passage and the cited Recognition theorem: unclear.

  Passage: "an MVBB-based geometric filter that exploits oriented bounding-box face normals to reject grasps... combined re-scoring function that blends learned discriminator scores with face-alignment geometry (alpha=0.85)"

- IndisputableMonolith/Foundation/AbsoluteFloorClosure.lean, theorem reality_from_one_distinction. Relation between the paper passage and the cited Recognition theorem: unclear.

  Passage: "Experiments across 81 MuJoCo episodes demonstrate that MVB-Grasp achieves 59.3% success versus 24.7% for vanilla GraspGen"

What do these tags mean?

- matches: The paper's claim is directly supported by a theorem in the formal canon.
- supports: The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.
- extends: The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.
- uses: The paper appears to rely on the theorem as machinery.
- contradicts: The paper's claim conflicts with a theorem or certificate in the canon.
- unclear: Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.

Reference graph

Works this paper leans on

- [1] R. Newbury et al., “Deep learning approaches to grasp synthesis: A review,” 2023.

TABLE VI ORIENTATION ROBUSTNESS: EFFECT OF OBJECT PITCH FOR ASYMMETRIC OBJECTS.

| Object | Pitch | Method | Succ. [%] | #Cand. | #MVB |
|---|---|---|---|---|---|
| Cylinder | 0° | GraspGen | 33.3 | 45.2 | – |
| Cylinder | 0° | MVB | 88.9 | 45.2 | 43.1 |
| Cylinder | 45° | GraspGen | 11.1 | 26.0 | – |
| Cylinder | 45° | MVB | 100.0 | 26.0 | 22.4 |
| Cylinder | −45° | GraspGen | 33.3 | 33.5 | – |
| Cylinder | −45° | MVB | 66.7 | 33.5 | 27.4 |
| BoxA... | | | | | |

- [2] A. ten Pas et al., “Grasp pose detection in point clouds,” 2017.
- [3] M. Sundermeyer et al., “Contact-graspnet: Efficient 6-dof grasp generation in cluttered scenes,” 2021.
- [4] H.-S. Fang et al., “Anygrasp: Robust and efficient grasp perception in spatial and temporal domains,” 2023.
- [5] A. Murali et al., “Graspgen: A diffusion-based framework for 6-dof grasping with on-generator training,” 2025.
- [6] I. Lenz et al., “Deep learning for detecting robotic grasps,” 2014.
- [7] J. Redmon et al., “Real-time grasp detection using convolutional neural networks,” 2015.
- [8] D. Morrison et al., “Closing the loop for robotic grasping: A real-time, generative grasp synthesis approach,” 2018.
- [9] J. Mahler et al., “Dex-net 2.0: Deep learning to plan robust grasps with synthetic point clouds and analytic grasp metrics,” 2017.
- [10] A. Mousavian et al., “6-dof graspnet: Variational grasp generation for object manipulation,” 2019.
- [11] K. Huebner et al., “Minimum volume bounding box decomposition for shape approximation in robot grasping,” in 2008 IEEE International Conference on Robotics and Automation, 2008, pp. 1628–1633.
- [12] S. Geidenstam et al., “Learning of 2d grasping strategies from box-based 3d object approximations,” in Robotics: Science and Systems V. The MIT Press, 07 2010.
- [13] S. Gui et al., “Goalgrasp: Grasping goals in partially occluded scenarios without grasp training,” 2025.
- [14] S. Lin et al., “Robot grasping based on object shape approximation and lightgbm,” Multimedia Tools and Applications, vol. 83, pp. 1–17, 06 2023.
- [15] V. D. Cong et al., “Improving robotic grasping accuracy through oriented bounding box detection with yolov11-obb,” Heliyon, vol. 11, no. 12, p. e43512, 2025.
- [16] H. Ma et al., “Generalizing 6-dof grasp detection via domain prior knowledge,” 2024.
- [17] S. Chen et al., “Efficient heatmap-guided 6-dof grasp detection in cluttered scenes,” 2024.

- [18]
