
Cross-Domain Lossy Compression via Constrained Minimum Entropy Coupling

Pith reviewed 2026-05-12 04:23 UTC · model grok-4.3

The pith

Common randomness makes intermediate representations unnecessary for optimal cross-domain lossy compression via minimum entropy coupling.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Under common randomness, the rate-constrained minimum entropy coupling problem (MEC-B) is equivalent to a deterministic coupling formulation, allowing the intermediate representation to be removed without loss of optimality. For Bernoulli sources, closed-form expressions are derived with and without classification constraints. A neural restoration framework using quantization, entropy modeling, distribution matching, and classification regularization demonstrates the approach, with experiments on MNIST super-resolution and SVHN denoising showing that higher rates improve classification accuracy and yield more informative reconstructions.

What carries the argument

Rate-constrained minimum entropy coupling (MEC-B) under common randomness, which reduces the problem to an equivalent deterministic coupling between the degraded source and the target distribution.

If this is right

- Closed-form expressions give the exact optimal coupling for Bernoulli sources both with and without classification constraints.

- In the neural implementation, higher available rate directly improves classification accuracy on the target task.

- The same framework produces reconstructions that better match the target domain statistics in super-resolution and denoising settings.
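To make the Bernoulli case concrete, here is a minimal sketch (not the paper's derivation) of the unconstrained problem only. Every coupling of Bernoulli(p) and Bernoulli(q) is fixed by one number, t = P(X=1, Y=1), which must lie in [max(0, p+q-1), min(p, q)]. Because I(X;Y) is convex in t for fixed marginals, the maximum sits at one of the two endpoints (the Fréchet–Hoeffding bounds). The function names are hypothetical, and no rate or classification constraint is modeled.

```python
import math

def mutual_information(joint):
    """I(X;Y) in bits for a joint pmf given as a nested list joint[x][y]."""
    px = [sum(row) for row in joint]
    py = [sum(col) for col in zip(*joint)]
    mi = 0.0
    for x, row in enumerate(joint):
        for y, pxy in enumerate(row):
            if pxy > 0.0:  # zero-mass cells contribute nothing
                mi += pxy * math.log2(pxy / (px[x] * py[y]))
    return mi

def bernoulli_coupling(p, q, t):
    """Joint pmf with P(X=1)=p, P(Y=1)=q, P(X=1,Y=1)=t (hypothetical helper)."""
    return [[1.0 - p - q + t, q - t],
            [p - t, t]]

def best_unconstrained_coupling(p, q):
    """Compare the two endpoint couplings and return the better value of t."""
    lo, hi = max(0.0, p + q - 1.0), min(p, q)
    return max((lo, hi),
               key=lambda t: mutual_information(bernoulli_coupling(p, q, t)))

p, q = 0.3, 0.4
t_star = best_unconstrained_coupling(p, q)
print("best t:", t_star)
print("I(X;Y) in bits:", mutual_information(bernoulli_coupling(p, q, t_star)))
```

Checking only the endpoints is what makes a closed form plausible here; adding a rate or classification constraint can move the optimum into the interior of the interval.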

Where Pith is reading between the lines

- The removal of the intermediate representation could simplify training in other cross-domain settings if the common-randomness condition can be approximated by shared noise.

- Testing the same deterministic-coupling reduction on non-Bernoulli discrete sources or quantized continuous sources would show how far the closed-form insight generalizes.

- The classification-regularized coupling might be useful for privacy-preserving compression where the decoder must avoid leaking source-domain details.
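For non-Bernoulli discrete sources, one starting point is the greedy minimum entropy coupling heuristic from the literature this paper cites. The sketch below is that generic heuristic, not the paper's method: it ignores the rate and classification constraints entirely, and the function names are hypothetical.

```python
import math

def greedy_coupling(p, q, tol=1e-12):
    """Greedy heuristic: repeatedly pair the largest remaining masses of p and q."""
    p, q = list(p), list(q)
    joint = [[0.0] * len(q) for _ in p]
    while max(p) > tol and max(q) > tol:
        i = max(range(len(p)), key=p.__getitem__)  # largest remaining mass in p
        j = max(range(len(q)), key=q.__getitem__)  # largest remaining mass in q
        mass = min(p[i], q[j])
        joint[i][j] += mass
        p[i] -= mass  # at least one of these two becomes exactly zero,
        q[j] -= mass  # so the loop ends after at most len(p) + len(q) steps
    return joint

def entropy_bits(probs):
    """Shannon entropy in bits of a flat list of probabilities."""
    return -sum(v * math.log2(v) for v in probs if v > 0.0)

p = [0.5, 0.3, 0.2]
q = [0.6, 0.4]
joint = greedy_coupling(p, q)
joint_entropy = entropy_bits([v for row in joint for v in row])
print("joint entropy (bits):", joint_entropy)
print("independent coupling (bits):", entropy_bits(p) + entropy_bits(q))
```

Comparing the greedy joint entropy against the independent coupling gives a quick sense of how much dependence the marginals allow before any constraints are added.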

Load-bearing premise

That maximizing coupling strength under a logarithmic-loss-style objective is the right way to measure performance in cross-domain reconstruction tasks.
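For context, the link between logarithmic loss and coupling strength rests on two textbook identities (stated here as background, not quoted from the paper). When the decoder's output is scored as a soft estimate $\hat{P}(\cdot \mid y)$ under log loss, the best achievable expected loss is the conditional entropy; with both marginals fixed, maximizing $I(X;Y)$ is therefore the same as minimizing the joint entropy $H(X,Y)$.

```latex
\begin{aligned}
\min_{\hat{P}} \; \mathbb{E}\!\left[\log \frac{1}{\hat{P}(X \mid Y)}\right]
  &= H(X \mid Y) = H(X) - I(X;Y), \\
H(X,Y) &= H(X) + H(Y) - I(X;Y).
\end{aligned}
```

Whether this information-based score tracks perceptual or task quality in a given cross-domain setting is exactly the premise in question.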

What would settle it

A source-target pair and rate where the deterministic coupling formulation produces strictly lower mutual information or lower classification accuracy than a method that retains an explicit intermediate representation.

Figures

read the original abstract

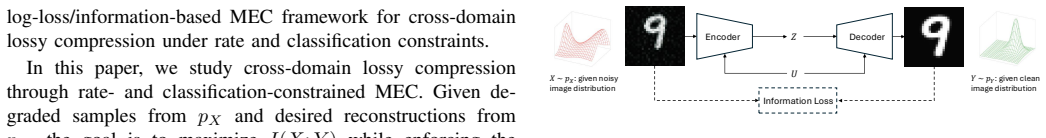

This paper studies cross-domain lossy compression through the lens of minimum entropy coupling (MEC) with rate and classification constraints. In this setting, an encoder observes samples from a degraded source domain, while the decoder is required to generate outputs following a prescribed target distribution and to preserve information relevant to a downstream classification task. Motivated by logarithmic-loss distortion, we adopt an information-based objective that maximizes the coupling strength between the source and reconstruction, rather than minimizing a sample-wise distortion. Under common randomness, we formulate a rate-constrained MEC problem (MEC-B) and show that the intermediate representation can be removed without loss of optimality, yielding an equivalent deterministic coupling formulation. For Bernoulli sources, closed-form expressions are derived with and without classification constraints. In addition, we implement a neural restoration framework using quantization, entropy modeling, distribution matching, and classification regularization. Experiments on MNIST super-resolution and SVHN denoising show that increasing the available rate improves classification accuracy and yields more informative reconstructions.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper claims to advance cross-domain lossy compression by formulating it as a constrained minimum entropy coupling (MEC) problem. Under common randomness, it introduces a rate-constrained MEC-B formulation and shows that the intermediate representation can be removed without loss of optimality to yield an equivalent deterministic coupling. Closed-form expressions are derived for Bernoulli sources with and without classification constraints. A neural implementation using quantization, entropy modeling, distribution matching, and classification regularization is presented, with experiments on MNIST super-resolution and SVHN denoising demonstrating that higher rates improve classification accuracy and reconstruction informativeness.

Significance. If the equivalence result and Bernoulli closed-forms hold, this provides a theoretically grounded information-theoretic approach to cross-domain compression that jointly handles rate constraints, target marginal matching, and preservation of classification-relevant mutual information. The closed-forms offer concrete, verifiable benchmarks for simple sources, while the neural framework suggests extensibility to images. This could influence task-oriented and domain-adaptive compression research, though empirical significance would increase with stronger baseline comparisons.

major comments (2)

- [§3.2] §3.2 (equivalence of MEC-B to deterministic coupling): The argument that the intermediate representation can be removed without loss of optimality must explicitly confirm that the rate bound, the target marginal, and the classification mutual information I(Y;Z) are all preserved. The current reasoning appears to rely on properties of the common randomness that may fail when its support is finite or when the joint entropy terms interact with the classification constraint, which would invalidate the subsequent simplifications used for the Bernoulli cases.

- [§4] §4 (Bernoulli closed-forms): The derivations of the coupling probabilities (with and without classification constraints) need to show how the rate constraint is enforced in the optimization; if the expressions optimize only the coupling strength objective, they may violate the rate bound for some parameter values, undermining the claim that they solve the full MEC-B problem.

minor comments (3)

- [Abstract] The abstract states the main results but omits any equation or reference to the closed-form expressions, which would allow readers to immediately gauge the contribution.

- [§5] Experimental details in §5 (network architecture, quantization levels, entropy model specifics, and training hyperparameters) are insufficient for reproducibility; adding these would strengthen the empirical claims.

- [Preliminaries] Notation for the common randomness variable and the distinction between source and target domains should be introduced with a clear table or diagram in the preliminaries to avoid later confusion.

Simulated Author's Rebuttal

We thank the referee for the thorough review and insightful comments on our manuscript. We address each major comment point by point below, providing clarifications and committing to revisions where the concerns identify gaps in explicitness or detail.


- Referee: [§3.2] §3.2 (equivalence of MEC-B to deterministic coupling): The argument that the intermediate representation can be removed without loss of optimality must explicitly confirm that the rate bound, the target marginal, and the classification mutual information I(Y;Z) are all preserved. The current reasoning appears to rely on properties of the common randomness that may fail when its support is finite or when the joint entropy terms interact with the classification constraint, which would invalidate the subsequent simplifications used for the Bernoulli cases.

  Authors: We appreciate this observation, which correctly identifies that our current write-up in §3.2 could be more explicit. The equivalence proof relies on the fact that, under the common-randomness model, the deterministic coupling preserves the joint distribution properties needed for the MEC-B objective; specifically, the rate I(X;Z) is unchanged by the data-processing inequality, the target marginal is enforced by construction in the coupling, and I(Y;Z) is preserved because the classification constraint is applied to the same marginal on Z. Nevertheless, to address potential issues with finite-support common randomness and interactions with the classification term, we will revise §3.2 to include an explicit lemma verifying preservation of all three quantities (rate bound, target marginal, and I(Y;Z)) and add a short remark on the support condition. This will also strengthen the foundation for the Bernoulli derivations.

  Revision: yes

- Referee: [§4] §4 (Bernoulli closed-forms): The derivations of the coupling probabilities (with and without classification constraints) need to show how the rate constraint is enforced in the optimization; if the expressions optimize only the coupling strength objective, they may violate the rate bound for some parameter values, undermining the claim that they solve the full MEC-B problem.

  Authors: We agree that the current presentation of the closed-forms in §4 could more clearly demonstrate enforcement of the rate constraint. The derivations solve the Lagrangian of the MEC-B problem, where the multiplier associated with the rate bound is chosen so that the resulting coupling probabilities satisfy I(X;Z) ≤ R for the admissible range of parameters; boundary cases where the constraint is active are handled by clipping the coupling strength. To eliminate any ambiguity, we will expand the derivations in the revised manuscript to include the explicit Lagrangian, the KKT conditions used to obtain the closed forms, and a short verification (via substitution) that the rate bound holds for the reported parameter values. This will confirm that the expressions solve the full constrained problem rather than an unconstrained relaxation.

  Revision: yes
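The "clipping" and "verification by substitution" steps mentioned in the rebuttal can be pictured on a toy Bernoulli pair. The sketch below assumes, purely for illustration, that the rate cap applies directly to I(X;Y); the paper states its constraint on the intermediate representation, so this is a simplification and not the authors' derivation. Coupling strength is raised from the independent coupling t = pq toward the endpoint t = min(p, q). Along that segment I(X;Y) is convex and zero at t = pq, hence nondecreasing, so bisection finds the largest admissible t. All names are hypothetical.

```python
import math

def mi_bits(p, q, t):
    """I(X;Y) in bits for Bernoulli marginals p, q and t = P(X=1, Y=1)."""
    joint = {(0, 0): 1.0 - p - q + t, (0, 1): q - t,
             (1, 0): p - t, (1, 1): t}
    px = {0: 1.0 - p, 1: p}
    py = {0: 1.0 - q, 1: q}
    return sum(v * math.log2(v / (px[x] * py[y]))
               for (x, y), v in joint.items() if v > 0.0)

def clipped_coupling(p, q, rate_bits, iters=60):
    """Largest t in [p*q, min(p, q)] with I(X;Y) <= rate_bits (toy rate cap)."""
    lo, hi = p * q, min(p, q)
    if mi_bits(p, q, hi) <= rate_bits:
        return hi  # constraint inactive: the full-strength coupling is admissible
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if mi_bits(p, q, mid) <= rate_bits:
            lo = mid  # mid is admissible, search for a stronger coupling
        else:
            hi = mid  # mid violates the cap, back off
    return lo  # lo was admissible at every step

p, q = 0.3, 0.4
for rate in (0.05, 0.2, 1.0):
    t = clipped_coupling(p, q, rate)
    # Verification by substitution: recompute the rate at the returned t.
    print(f"R={rate:.2f}  t={t:.4f}  I(X;Y)={mi_bits(p, q, t):.4f}")
```

When the cap is active the returned coupling sits on the boundary I(X;Y) = R; when it is inactive the function simply returns the unconstrained endpoint.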

Circularity Check

No circularity: derivation chain self-contained with independent closed-form results


The paper formulates the rate-constrained MEC-B problem under common randomness and claims to prove that the intermediate representation can be removed without loss of optimality to obtain an equivalent deterministic coupling. It then derives closed-form expressions for Bernoulli sources both with and without classification constraints. No equations, fitted parameters, or self-citations are exhibited in the abstract or description that would reduce any claimed result to a tautology by construction, a renamed fit, or a load-bearing self-reference. The Bernoulli expressions are presented as derived rather than statistically forced, and the central equivalence is asserted as a mathematical step rather than a definitional identity. The derivation therefore remains self-contained against external benchmarks.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: common randomness is shared between encoder and decoder.
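One familiar way this assumption is realized in practice is subtractive dithered quantization, where encoder and decoder draw the same dither from a shared seed. The sketch below only illustrates shared randomness; it is not the paper's construction, and the seed, step size, and function names are hypothetical.

```python
import random

STEP = 0.5  # quantizer step size (hypothetical value)

def shared_dither(seed, n, step=STEP):
    """Dither sequence that both sides can regenerate from the shared seed."""
    rng = random.Random(seed)
    return [rng.uniform(-step / 2, step / 2) for _ in range(n)]

def encode(xs, seed, step=STEP):
    """Encoder: add the dither, then quantize to integer indices."""
    us = shared_dither(seed, len(xs), step)
    return [round((x + u) / step) for x, u in zip(xs, us)]

def decode(indices, seed, step=STEP):
    """Decoder: regenerate the same dither and subtract it after dequantizing."""
    us = shared_dither(seed, len(indices), step)
    return [k * step - u for k, u in zip(indices, us)]

xs = [0.12, -1.7, 3.14159, 0.0]
codes = encode(xs, seed=1234)
ys = decode(codes, seed=1234)
max_err = max(abs(x - y) for x, y in zip(xs, ys))
print("indices:", codes)
print("max reconstruction error:", max_err)
```

Only the integer indices are transmitted; the dither itself never crosses the channel, which is the sense in which the randomness is "common".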

Reference graph

Works this paper leans on

- [1] T. M. Cover, Elements of Information Theory. John Wiley & Sons, 1999.

- [2] Z. Wang, A. C. Bovik, H. R. Sheikh, and E. P. Simoncelli, “Image quality assessment: from error visibility to structural similarity,” IEEE Transactions on Image Processing, vol. 13, no. 4, pp. 600–612, 2004.

- [3] H. Liu, G. Zhang, J. Chen, and A. Khisti, “Cross-domain lossy compression as entropy constrained optimal transport,” IEEE Journal on Selected Areas in Information Theory, vol. 3, no. 3, pp. 513–527, 2022.

- [4] N. Nguyen, T. Nguyen, and B. Bose, “Cross-domain lossy compression via rate- and classification-constrained optimal transport,” in Proceedings of the International Conference on Learning Representations, 2026.

- [5] M. R. Ebrahimi, J. Chen, and A. Khisti, “Minimum entropy coupling with bottleneck,” in Advances in Neural Information Processing Systems, vol. 37, 2024.

- [6] Y. Blau and T. Michaeli, “The perception-distortion tradeoff,” in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018, pp. 6228–6237.

- [7] Y. Blau and T. Michaeli, “Rethinking lossy compression: The rate-distortion-perception tradeoff,” in International Conference on Machine Learning, 2019, pp. 675–685.

- [8] E. Agustsson, M. Tschannen, F. Mentzer, R. Timofte, and L. V. Gool, “Generative adversarial networks for extreme learned image compression,” in Proceedings of the IEEE International Conference on Computer Vision, 2019, pp. 221–231.

- [9] I. Goodfellow, J. Pouget-Abadie, M. Mirza, B. Xu, D. Warde-Farley, S. Ozair, A. Courville, and Y. Bengio, “Generative adversarial nets,” vol. 27, 2014, pp. 2672–2680.

- [10] M. Arjovsky, S. Chintala, and L. Bottou, “Wasserstein generative adversarial networks,” in International Conference on Machine Learning, 2017, pp. 214–223.

- [11] M. Tschannen, E. Agustsson, and M. Lucic, “Deep generative models for distribution-preserving lossy compression,” in Advances in Neural Information Processing Systems, 2018, pp. 5929–5940.

- [12] A. B. L. Larsen, S. K. Sønderby, H. Larochelle, and O. Winther, “Autoencoding beyond pixels using a learned similarity metric,” in Proceedings of The 33rd International Conference on Machine Learning, ser. Proceedings of Machine Learning Research, vol. 48. New York, New York, USA: PMLR, 20–22 Jun 2016, pp. 1558–1566.

- [13] D. Liu, H. Zhang, and Z. Xiong, “On the classification-distortion-perception tradeoff,” in Advances in Neural Information Processing Systems, vol. 32, 2019.

- [14] Y. Zhang, “A rate-distortion-classification approach for lossy image compression,” Digital Signal Processing, vol. 141, p. 104163, Sep. 2023.

- [15] Y. Wang, Y. Wu, S. Ma, and Y.-J. Angela Zhang, “Task-oriented lossy compression with data, perception, and classification constraints,” IEEE Journal on Selected Areas in Communications, vol. 43, no. 7, pp. 2635–2650, 2025.

- [16] N. Nguyen, T. Nguyen, and B. Bose, “Rate-distortion-classification representation theory for Bernoulli sources,” in Proceedings of the IEEE International Symposium on Information Theory (ISIT), 2026, accepted. Available as arXiv:2601.11919.

- [17] N. Nguyen, T. Nguyen, T. Nguyen, and B. Bose, “Universal rate-distortion-classification representations for lossy compression,” in 2025 IEEE Information Theory Workshop, 2025, pp. 1–6.

- [18] T. A. Courtade and R. D. Wesel, “Multiterminal source coding with an entropy-based distortion measure,” in 2011 IEEE International Symposium on Information Theory Proceedings. IEEE, 2011, pp. 2040–2044.

- [19] T. A. Courtade and T. Weissman, “Multiterminal source coding under logarithmic loss,” IEEE Transactions on Information Theory, vol. 60, no. 1, pp. 740–761, 2013.

- [20] Y. Y. Shkel and S. Verdú, “A single-shot approach to lossy source coding under logarithmic loss,” IEEE Transactions on Information Theory, vol. 64, no. 1, pp. 129–147, 2017.

- [21] M. Vidyasagar, “A metric between probability distributions on finite sets of different cardinalities and applications to order reduction,” IEEE Transactions on Automatic Control, vol. 57, no. 10, pp. 2464–2477, 2012.

- [22] F. Cicalese, L. Gargano, and U. Vaccaro, “Minimum-entropy couplings and their applications,” IEEE Transactions on Information Theory, vol. 65, no. 6, pp. 3436–3451, 2019.

- [23] M. Kovačević, I. Stanojević, and V. Šenk, “On the entropy of couplings,” Information and Computation, vol. 242, pp. 369–382, 2015.

- [24] M. Kocaoglu, S. Shakkottai, A. G. Dimakis, C. Caramanis, and S. Vishwanath, “Entropic causal inference,” in Proceedings of the Thirty-First AAAI Conference on Artificial Intelligence, 2017, pp. 1156–1162.

- [25] M. Kocaoglu, A. G. Dimakis, S. Vishwanath, and B. Hassibi, “Entropic causality and greedy minimum entropy coupling,” in Proceedings of the 2017 IEEE International Symposium on Information Theory (ISIT), 2017, pp. 1465–1469.

- [26] S. Compton, D. Katz, B. Qi, K. Greenewald, and M. Kocaoglu, “Minimum-entropy coupling approximation guarantees beyond the majorization barrier,” in International Conference on Artificial Intelligence and Statistics. PMLR, 2023, pp. 10445–10469.

- [27] M. Boudiaf, J. Rony, I. M. Ziko, E. Granger, M. Pedersoli, P. Piantanida, and I. B. Ayed, “A unifying mutual information view of metric learning: Cross-entropy vs. pairwise losses,” in Proceedings of the European Conference on Computer Vision. Springer, 2020, pp. 145–160.

- [28] J. Ballé, D. Minnen, S. Singh, S. J. Hwang, and N. Johnston, “Variational image compression with a scale hyperprior,” in International Conference on Learning Representations, 2018.

- [29] D. Barber and F. Agakov, “The IM algorithm: a variational approach to information maximization,” Advances in Neural Information Processing Systems, vol. 16, no. 320, p. 201, 2004.

- [30] X. Chen, Y. Duan, R. Houthooft, J. Schulman, I. Sutskever, and P. Abbeel, “InfoGAN: Interpretable representation learning by information maximizing generative adversarial nets,” Advances in Neural Information Processing Systems, vol. 29, 2016.

- [31] J. Ziv, “On universal quantization,” IEEE Transactions on Information Theory, vol. 31, no. 3, pp. 344–347, 1985.

- [32] L. Theis and E. Agustsson, “On the advantages of stochastic encoders,” in Neural Compression Workshop at International Conference on Learning Representations, 2021.

- [33] A. El Gamal and Y.-H. Kim, Network Information Theory. Cambridge University Press, 2011.

- [34] C. T. Li and A. El Gamal, “Strong functional representation lemma and applications to coding theorems,” IEEE Transactions on Information Theory, vol. 64, no. 11, pp. 6967–6978, 2018.

- [35] S. Boyd and L. Vandenberghe, Convex Optimization. Cambridge, UK: Cambridge University Press, 2004.

- [36] R. B. Nelsen, An Introduction to Copulas, 2nd ed. Springer, 2006, Fréchet–Hoeffding bounds and comonotone/antimonotone couplings (Sec. 2.5).

- [37] P. Embrechts, A. McNeil, and D. Straumann, “Modelling dependence with copulas and applications to risk management,” in Handbook of Heavy Tailed Distributions in Finance. Elsevier, 2001, pp. 329–384.

APPENDIX A. Proof of Theorem 1

Recall the formulation in Definition 1:

$$I_{\text{MEC-B-R}}(p_X, p_Y, R) = \max_{p_{U,X,Z,Y} \in \mathcal{M}(p_X, p_Y)} I(X;Y) \quad \text{s.t.} \quad H(Z \mid U) \le R,$$

where $\mathcal{M}(p_X, p_Y)$...
