Recognition: 2 theorem links

· Lean TheoremLakestream: A Consistent and Brokerless Data Plane for Large Foundation Model Training

Pith reviewed 2026-05-12 02:42 UTC · model grok-4.3

The pith

Lakestream provides a brokerless object-store data plane with transactional global batches for foundation model training.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The central discovery is a brokerless data plane called Lakestream that realizes training data consistency through Transactional Global Batch semantics built on lakehouse-style storage, including atomic all-rank batch visibility and checkpoint-aligned lifecycle management, realized via the Decentralized Adaptive Commit algorithm that sustains ingestion throughput without inter-producer communication, as shown in evaluations where it exceeds colocated dataloader throughput with isolation and outperforms Kafka in ingestion and latency on 64-GPU workloads.

What carries the argument

Transactional Global Batch (TGB) semantics that extend ACID lakehouse storage with training-specific properties like atomic batch visibility and end-to-end exactly-once recovery, carried by the Decentralized Adaptive Commit (DAC) algorithm that inlines producer state in manifests and ties reclamation to checkpoints.

If this is right

- Training jobs can isolate failures without affecting data consistency or requiring restarts of the entire pipeline.

- Ingestion throughput stays stable as the training manifest grows over many steps.

- Exactly-once recovery prevents duplicate data consumption across distributed ranks.

- Commodity object stores can replace both storage and coordination functions previously handled by brokers.

Where Pith is reading between the lines

- This could allow training frameworks to rely less on dedicated messaging infrastructure.

- Similar batch semantics might apply to other large-scale data processing pipelines beyond model training.

- The design suggests that object stores can handle coordination tasks efficiently if algorithms adapt to their semantics.

Load-bearing premise

The Transactional Global Batch semantics and Decentralized Adaptive Commit algorithm can be implemented on real object stores at scale with no hidden performance costs or correctness issues.

What would settle it

A test at larger scale, such as 512 GPUs over extended training steps, that reveals either violation of atomic batch visibility or ingestion throughput falling below that of Kafka.

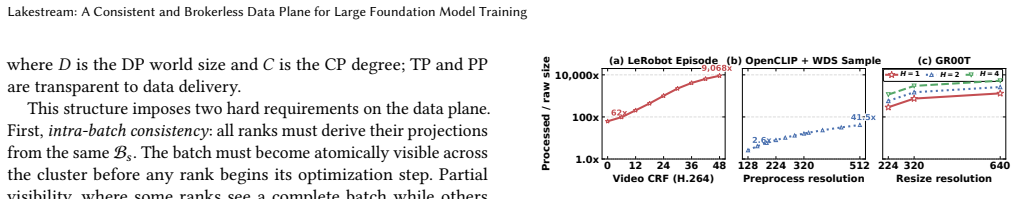

Figures

read the original abstract

Modern Large Foundation Model (LFM) training has transformed the data pipeline from a static ingestion layer into a dynamic component that must co-evolve with the training process. Existing systems are ill-equipped: colocated dataloaders offer no failure isolation, while message queue-based disaggregated dataloaders operate on a record/offset abstraction that cannot express the batch-level semantics required by distributed training. We present Lakestream, a brokerless, object-store-native training data plane with three key properties. First, it introduces the Transactional Global Batch (TGB), which builds on lakehouse-style ACID storage semantics and extends them with training-specific consistency, including atomic all-rank batch visibility, a globally ordered step sequence, checkpoint-aligned lifecycle management, and end-to-end exactly-once recovery. Second, it realizes recovery and retention directly in the storage layer, by inlining producer state in the manifest and tying reclamation to distributed checkpoint state. Third, its Decentralized Adaptive Commit (DAC) algorithm sustains stable ingestion throughput as the manifest grows, without any inter-producer communication. Evaluations on large-scale multimodal pre-training and SFT workloads using 64 GPUs show that Lakestream outperforms colocated dataloader throughput while providing full failure isolation, outperforms Apache Kafka in ingestion throughput, and achieves lower consumer read latency than Kafka.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper presents Lakestream, a brokerless data plane for large foundation model training that is native to object stores. It introduces Transactional Global Batch (TGB) semantics extending lakehouse ACID properties with training-specific guarantees (atomic all-rank visibility, globally ordered steps, checkpoint-aligned lifecycle, exactly-once recovery) and realizes them via inlined producer state and the Decentralized Adaptive Commit (DAC) algorithm, which avoids inter-producer communication while sustaining ingestion as the manifest grows. On 64-GPU multimodal pre-training and SFT workloads, Lakestream is reported to exceed colocated dataloader throughput with full failure isolation, exceed Apache Kafka ingestion throughput, and deliver lower consumer read latency than Kafka.

Significance. If the TGB/DAC design and reported performance hold under realistic long-running conditions, the work would offer a meaningful alternative to both colocated loaders (which lack isolation) and broker-based queues (which lack batch-level training semantics) for disaggregated data planes at LFM scale.

major comments (2)

- [Evaluation] Evaluation section: the headline claims rest on 64-GPU experiments, yet no details are supplied on cluster configuration, dataset sizes, checkpoint intervals, baseline implementations (colocated dataloader and Kafka versions), number of runs, or error bars. Without these, it is impossible to determine whether the reported throughput and latency advantages are reproducible or load-bearing.

- [DAC algorithm and Evaluation] DAC algorithm description and Evaluation: the paper asserts that DAC sustains stable ingestion 'as the manifest grows' without inter-producer communication, but the 64-GPU tests do not include long-running runs in which manifest size increases by orders of magnitude, nor measurements of per-operation object-store round-trips, consistency overhead, or reclamation cost under realistic checkpoint intervals. This leaves the weakest assumption (efficient scaling of manifest operations on real object stores) untested at the scales motivating the work.

minor comments (2)

- [Abstract] Abstract and §1: the phrase 'outperforms colocated dataloader throughput while providing full failure isolation' is stated without quantifying the isolation mechanism or the exact failure model exercised in the experiments.

- [Introduction] Notation: TGB and DAC are introduced as new entities; a short table contrasting their properties against record/offset queues and colocated loaders would improve readability.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback. We address each major comment below and commit to revisions that strengthen the evaluation and clarify the DAC claims without overstating the current experimental scope.

read point-by-point responses

-

Referee: [Evaluation] Evaluation section: the headline claims rest on 64-GPU experiments, yet no details are supplied on cluster configuration, dataset sizes, checkpoint intervals, baseline implementations (colocated dataloader and Kafka versions), number of runs, or error bars. Without these, it is impossible to determine whether the reported throughput and latency advantages are reproducible or load-bearing.

Authors: We agree that the Evaluation section requires substantially more detail to support reproducibility and to allow readers to assess the reported advantages. In the revised manuscript we will expand this section with the cluster configuration (GPU model, interconnect, storage backend), exact dataset sizes for the multimodal pre-training and SFT workloads, checkpoint intervals used, precise baseline implementations and versions for both the colocated dataloader and Apache Kafka, the number of runs performed, and error bars or other statistical measures on all throughput and latency figures. revision: yes

-

Referee: [DAC algorithm and Evaluation] DAC algorithm description and Evaluation: the paper asserts that DAC sustains stable ingestion 'as the manifest grows' without inter-producer communication, but the 64-GPU tests do not include long-running runs in which manifest size increases by orders of magnitude, nor measurements of per-operation object-store round-trips, consistency overhead, or reclamation cost under realistic checkpoint intervals. This leaves the weakest assumption (efficient scaling of manifest operations on real object stores) untested at the scales motivating the work.

Authors: The referee is correct that the 64-GPU runs, while showing stable ingestion, do not yet demonstrate manifest growth over orders of magnitude or provide the requested per-operation metrics. The DAC algorithm description in the paper relies on local inlined state and adaptive commit to avoid inter-producer communication; we will add a new subsection with analytical bounds on manifest-operation cost together with concrete measurements of object-store round-trips, consistency overhead, and reclamation cost collected under realistic checkpoint intervals. Where new long-running experiments are feasible we will include them; otherwise we will clearly state the current experimental limits while strengthening the supporting analysis. revision: partial

Circularity Check

No circularity: claims rest on novel system design and direct empirical comparison

full rationale

The paper defines TGB semantics and the DAC algorithm as new constructs, then reports throughput and latency measurements on 64-GPU multimodal workloads against colocated dataloaders and Kafka. No equations, fitted parameters, self-definitional quantities, or load-bearing self-citations appear in the abstract or evaluation summary. The central performance claims are therefore independent of the inputs and rest on external benchmarks rather than reducing to the design by construction.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Object stores can provide lakehouse-style ACID storage semantics sufficient for training batch consistency

invented entities (2)

-

Transactional Global Batch (TGB)

no independent evidence

-

Decentralized Adaptive Commit (DAC)

no independent evidence

Lean theorems connected to this paper

-

IndisputableMonolith/Foundation/RealityFromDistinction.leanreality_from_one_distinction unclearWe present Lakestream, a brokerless, object-store-native training data plane with three key properties. First, it introduces the Transactional Global Batch (TGB)... Third, its Decentralized Adaptive Commit (DAC) algorithm sustains stable ingestion throughput as the manifest grows, without any inter-producer communication.

-

IndisputableMonolith/Cost/FunctionalEquation.leanwashburn_uniqueness_aczel unclearThe DAC algorithm... derives a closed-form lower bound on T from each constraint (Equations 7–8) and taking their maximum.

Reference graph

Works this paper leans on

- [1]

-

[2]

Tyler Akidau, Robert Bradshaw, Craig Chambers, Slava Chernyak, Rafael J Dagum, Sam Knight, Frances Perry, Reiner Schmidt, and Sam Whittle. 2015. The dataflow model: a practical approach to balancing correctness, latency, and Lakestream: A Consistent and Brokerless Data Plane for Large Foundation Model Training cost in massive-scale, unbounded, out-of-orde...

work page 2015

-

[3]

Michael Armbrust, Tathagata Das, Liwen Sun, Burak Yavuz, Shixiong Zhu, Mukul Murthy, Joseph Torres, Herman van Hovell, Adrian Ionescu, Bogdan Ghit, Mad- hukara Bhat, Reynold Xin, Ali Ghodsi, Ion Stoica, and Matei Zaharia. 2020. Delta Lake: High-Performance ACID Table Storage over Cloud Object Stores. Proceedings of the VLDB Endowment (PVLDB)13, 12 (2020),...

work page 2020

-

[4]

Michael Armbrust, Tathagata Das, Joseph Torres, Burak Yavuz, Shixiong Liao, Yin Huai, Hossein Hosseini, Matei Zaharia, and Reynold Xin. 2018. Structured streaming: A declarative api for real-time applications in apache spark. InPro- ceedings of the 2018 International Conference on Management of Data (SIGMOD). Association for Computing Machinery, New York,...

work page 2018

-

[5]

Andrew Audibert, Yang Chen, Dan Graur, Ana Klimovic, Jiri Simsa, and Chan- dramohan A. Thekkath. 2023. tf.data service: A Case for Disaggregating ML Input Data Processing. InProceedings of the 2023 ACM Symposium on Cloud Computing (SoCC). Association for Computing Machinery, New York, NY, USA, 358–375

work page 2023

-

[6]

AutoMQ Team. 2024. AutoMQ: Cloud-Native Streaming with Offloaded Storage. https://www.automq.com

work page 2024

-

[7]

Shuai Bai, Yuxuan Cai, Ruizhe Chen, Keqin Chen, Xionghui Chen, Zesen Cheng, Lianghao Deng, Wei Ding, Chang Gao, Chunjiang Ge, Wenbin Ge, Zhifang Guo, Qidong Huang, Jie Huang, Fei Huang, Binyuan Hui, Shutong Jiang, Zhaohai Li, Mingsheng Li, Mei Li, Kaixin Li, Zicheng Lin, Junyang Lin, Xuejing Liu, Jiawei Liu, Chenglong Liu, Yang Liu, Dayiheng Liu, Shixuan ...

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[8]

Maximilian Böther, Xiaozhe Yao, Tolga Kerimoglu, Dan Graur, Viktor Gsteiger, and Ana Klimovic. 2026. Mixtera: A Data Plane for Foundation Model Training. Proc. ACM Manag. Data4, 1 (April 2026). doi:10.1145/3786668

-

[9]

Remi Cadene, Simon Alibert, Alexander Soare, Quentin Gallouedec, Adil Zoui- tine, Steven Palma, Pepijn Kooijmans, Michel Aractingi, Mustafa Shukor, Dana Aubakirova, Martino Russi, Francesco Capuano, Caroline Pascal, Jade Choghari, Jess Moss, and Thomas Wolf. 2024. LeRobot: State-of-the-art Machine Learning for Real-World Robotics in PyTorch. https://githu...

work page 2024

-

[10]

Paris Carbone, Asterios Katsifodimos, Stephan Ewen, Volker Markl, Seif Haridi, and Kostas Kostas. 2015. Apache Flink™: Stream processing at scale.ACM SIGMOD Record44, 4 (2015), 28–39

work page 2015

-

[11]

DeepSeek-AI. 2025. DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning. arXiv:2501.12948

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[12]

Dan Graur, Damien Aymon, Dan Kluser, Tanguy Albrici, Chandramohan A. Thekkath, and Ana Klimovic. 2022. Cachew: Machine Learning Input Data Processing as a Service. InProceedings of the 2022 USENIX Annual Technical Conference (USENIX ATC). USENIX Association, Berkeley, CA, USA, 689–706

work page 2022

-

[13]

Dan Graur, Oto Mraz, Muyu Li, Sepehr Pourghannad, Chandramohan A. Thekkath, and Ana Klimovic. 2024. Pecan: Cost-Efficient ML Data Preprocessing with Automatic Transformation Ordering and Hybrid Placement. InProceed- ings of the 2024 USENIX Annual Technical Conference (USENIX ATC). USENIX Association, Berkeley, CA, USA, 649–665

work page 2024

-

[14]

Gabriel Ilharco, Mitchell Wortsman, Nicholas Carlini, Rohan Taori, Achal Dave, Vaishaal Shankar, Hongseok Namkoong, John Miller, Hannaneh Hajishirzi, Ali Farhadi, and Ludwig Schmidt. 2021. OpenCLIP. doi:10.5281/zenodo.5143773

-

[15]

Uber Technologies Inc. 2018. Petastorm: Open source library to enable training deep learning models from Apache Parquet datasets. https://github.com/uber/ petastorm

work page 2018

-

[16]

Van Jacobson. 1988. Congestion Avoidance and Control. InSymposium Proceed- ings on Communications Architectures and Protocols (SIGCOMM ’88). Association for Computing Machinery, New York, NY, USA, 314–329. doi:10.1145/52324.52356

-

[17]

Taeyoon Kim, Youngbin Jeong, Myeongjae Jang, and Jong-Geun Lee. 2023. Fu- sionFlow: Accelerating Data Preprocessing for Machine Learning with CPU-GPU Cooperation.Proceedings of the VLDB Endowment (PVLDB)17, 3 (2023), 488–502

work page 2023

-

[18]

Jay Kreps, Neha Narkhede, and Jun Rao. 2011. Kafka: A Distributed Messaging System for Log Processing. InProceedings of the 4th International Workshop on Networking Meets Databases (NetDB). Association for Computing Machinery, New York, NY, USA, 1–7

work page 2011

-

[19]

Lance Format. 2025. Lance. https://github.com/lance-format/lance/

work page 2025

-

[20]

Chengshu Li, Ruohan Zhang, Josiah Wong, Cem Gokmen, Sanjana Srivastava, Roberto Martín-Martín, Chen Wang, Gabrael Levine, Michael Lingelbach, Jiankai Sun, Mona Anvari, Minjune Hwang, Manasi Sharma, Arman Aydin, Dhruva Bansal, Samuel Hunter, Kyu-Young Kim, Alan Lou, Caleb R Matthews, Ivan Villa-Renteria, Jerry Huayang Tang, Claire Tang, Fei Xia, Silvio Sav...

work page 2023

-

[21]

Jiahao Li, Biao Cao, Jielong Jian, Cheng Li, Sen Han, Yiduo Wang, Yufei Wu, Kang Chen, Zhihui Yin, Qiushi Chen, Jiwei Xiong, Jie Zhao, Fengyuan Liu, Yan Xing, Liguo Duan, Miao Yu, Ran Zheng, Feng Wu, and Xianjun Meng. 2025. Mantle: Efficient Hierarchical Metadata Management for Cloud Object Storage Services. InProceedings of the ACM SIGOPS 31st Symposium ...

-

[22]

Matteo Merli, Sijie Guo, Penghui Li, Hang Chen, and Neng Lu. 2025. Ursa: A Lakehouse-Native Data Streaming Engine for Kafka.Proceedings of the VLDB Endowment (PVLDB)18, 12 (2025), 5184–5196

work page 2025

-

[23]

Jayashree Mohan, Amar Phanishayee, Janardhan Kulkarni, and Vijay Chi- dambaram. 2021. CoorDL: Co-ordinated Data Loading for Deep Learning. In Proceedings of the 2021 USENIX Annual Technical Conference (USENIX ATC). USENIX Association, Berkeley, CA, USA, 305–319

work page 2021

-

[24]

Philipp Moritz, Robert Nishihara, Stephanie Wang, Alexey Tumanov, Richard Liaw, Eric Liang, Melih Elibol, Zongheng Yang, William Paul, Michael I. Jordan, and Ion Stoica. 2018. Ray: A Distributed Framework for Emerging AI Applications. InProceedings of the 13th USENIX Symposium on Operating Systems Design and Implementation (OSDI). USENIX Association, Berk...

work page 2018

-

[25]

MosaicML. 2023. StreamingDataset: A high-performance dataset for deep learn- ing. https://github.com/mosaicml/streaming

work page 2023

-

[26]

Murray, Jiří Šimša, Ana Klimovic, and Ihor Indyk

Derek G. Murray, Jiří Šimša, Ana Klimovic, and Ihor Indyk. 2021. tf.data: a machine learning data processing framework.Proc. VLDB Endow.14, 12 (July 2021), 2945–2958. doi:10.14778/3476311.3476374

-

[27]

NVIDIA, :, Johan Bjorck, Fernando Castañeda, Nikita Cherniadev, Xingye Da, Runyu Ding, Linxi "Jim" Fan, Yu Fang, Dieter Fox, Fengyuan Hu, Spencer Huang, Joel Jang, Zhenyu Jiang, Jan Kautz, Kaushil Kundalia, Lawrence Lao, Zhiqi Li, Zongyu Lin, Kevin Lin, Guilin Liu, Edith Llontop, Loic Magne, Ajay Mandlekar, Avnish Narayan, Soroush Nasiriany, Scott Reed, Y...

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[28]

Christiano, Jan Leike, and Ryan Lowe

Long Ouyang, Jeffrey Wu, Xu Jiang, Diogo Almeida, Carroll Wainwright, Pamela Mishkin, Chong Zhang, Sandhini Agarwal, Katarina Slama, Alex Ray, John Schul- man, Jacob Hilton, Fraser Kelton, Luke Miller, Maddie Simens, Amanda Askell, Peter Welinder, Paul F. Christiano, Jan Leike, and Ryan Lowe. 2022. Training language models to follow instructions with huma...

work page 2022

-

[29]

Adam Paszke, Sam Gross, Francisco Massa, Adam Lerer, James Bradbury, Gre- gory Chanan, Trevor Killeen, Zeming Lin, Natalia Gimelshein, Luca Antiga, Alban Desmaison, Andreas Kopf, Edward Yang, Zachary DeVito, Martin Raison, Alykhan Tejani, Sasank Chilamkurthy, Benoit Steiner, Lu Fang, Junjie Bai, and Soumith Chintala. 2019. PyTorch: An Imperative Style, Hi...

work page 2019

-

[30]

PyTorch Team. 2025. torch.distributed.checkpoint Documentation. https:// pytorch.org/docs/stable/distributed.checkpoint.html

work page 2025

-

[31]

Matthew Rocklin. 2015. Dask: Parallel computation with blocked algorithms and task scheduling. InProceedings of the 14th Python in Science Conference, Vol. 130. SciPy, Austin, TX, USA, 136

work page 2015

-

[32]

Zhihong Shao, Peiyi Wang, Qihao Zhu, Runxin Xu, Junxiao Song, Mingchuan Zhang, Y. K. Li, Y. Wu, and Daya Guo. 2024. DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models. arXiv:2402.03300

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[33]

Jun Song, Jingyi Ding, Irshad Kandy, Yanghao Lin, Zhongjia Wei, Zilong Zhou, Zhiwei Peng, Jixi Shan, Hongyue Mao, Xiuqi Huang, Xun Song, Cheng Chen, Yanjia Li, Tianhao Yang, Wei Jia, Xiaohong Dong, Kang Lei, Rui Shi, Pengwei Zhao, and Wei Chen. 2025. Magnus: A Holistic Approach to Data Management for Large-Scale Machine Learning Workloads.Proc. VLDB Endow...

-

[34]

The Apache Software Foundation. 2015. Apache Pulsar. https://pulsar.apache. org

work page 2015

-

[35]

The Apache Software Foundation. 2025. Apache Iceberg. https://iceberg.apache. org/

work page 2025

-

[36]

Taegeon Um, Goeun Byun, Hwarim Choi, Mincheol Han, and Hyuck Park. 2023. FastFlow: Accelerating Deep Learning Model Training with Smart Offloading of Input Data Pipeline.Proceedings of the VLDB Endowment (PVLDB)16, 11 (2023), 1086–1099

work page 2023

-

[37]

Xin Wang, Taein Kwon, Mahdi Rad, Bowen Pan, Ishani Chakraborty, Sean Andrist, Dan Bohus, Ashley Feniello, Bugra Tekin, Felipe Vieira Frujeri, Neel Joshi, and Marc Pollefeys. 2023. HoloAssist: an Egocentric Human Interaction Dataset for Interactive AI Assistants in the Real World. https://openaccess.thecvf. com/content/ICCV2023/html/Wang_HoloAssist_an_Egoc...

work page 2023

-

[38]

WarpStream Labs. 2025. WarpStream: A Cloud-Native, Zero-Disk Apache Kafka Alternative. https://www.warpstream.com

work page 2025

-

[39]

Matei Zaharia, Mosharaf Chowdhury, Tathagata Das, Ankur Dave, Justin Ma, Murphy McCauley, Michael J Franklin, Scott Shenker, and Ion Stoica. 2012. Re- silient distributed datasets: A fault-tolerant abstraction for in-memory cluster computing. In9th USENIX Symposium on Networked Systems Design and Imple- mentation (NSDI 12). USENIX Association, Berkeley, C...

work page 2012

-

[40]

Juntao Zhao, Qi Lu, Wei Jia, Borui Wan, Lei Zuo, Junda Feng, Jianyu Jiang, Yangrui Chen, Shuaishuai Cao, Jialing He, Kaihua Jiang, Yuanzhe Hu, Shibiao Nong, Yanghua Peng, Haibin Lin, and Chuan Wu. 2026. MegaScale-Data: Scaling Dataloader for Multisource Large Foundation Model Training. https://arxiv.org/ abs/2504.09844

work page internal anchor Pith review Pith/arXiv arXiv 2026

-

[41]

Mark Zhao, Emanuel Adamiak, and Christos Kozyrakis. 2024. Cedar: Optimized and Unified Machine Learning Input Data Pipelines.Proceedings of the VLDB Endowment (PVLDB)18, 2 (2024), 488–502

work page 2024

-

[42]

Yanli Zhao, Andrew Gu, Rohan Varma, Liang Luo, Chien-Chin Huang, Min Xu, Less Wright, Hamid Shojanazeri, Myle Ott, Sam Shleifer, Alban Desmaison, Can Balioglu, Pritam Damania, Bernard Nguyen, Geeta Chauhan, Yuchen Hao, Ajit Mathews, and Shen Li. 2023. PyTorch FSDP: Experiences on Scaling Fully Sharded Data Parallel.Proc. VLDB Endow.16, 12 (2023), 3848–386...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.