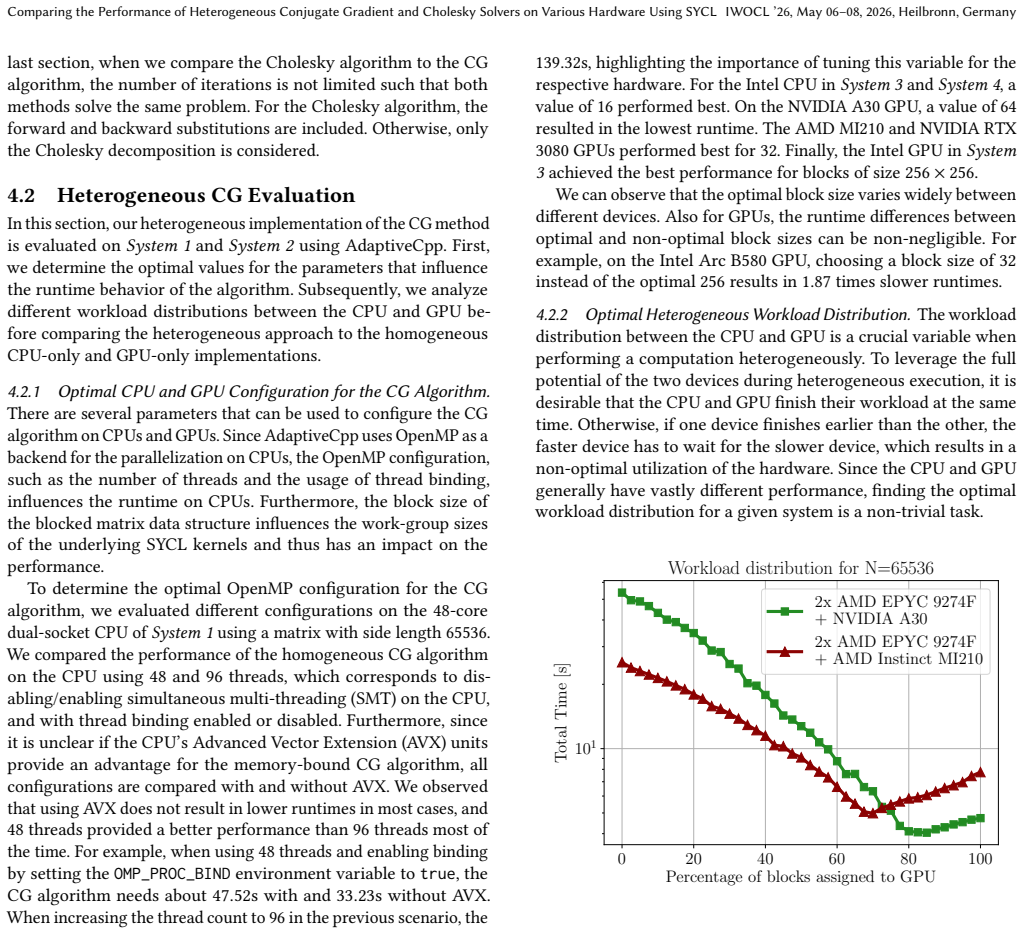

Heterogeneous solvers up to 32% faster than GPU-only for big matrices

Splitting CG and Cholesky work across CPU and GPU with SYCL beats pure-GPU code on NVIDIA, AMD and Intel systems.

Distributed, Parallel, and Cluster Computing

Covers fault-tolerance, distributed algorithms, stability, parallel computation, and cluster computing. Roughly includes material in ACM Subject Classes C.1.2, C.1.4, C.2.4, D.1.3, D.4.5, D.4.7, E.1.

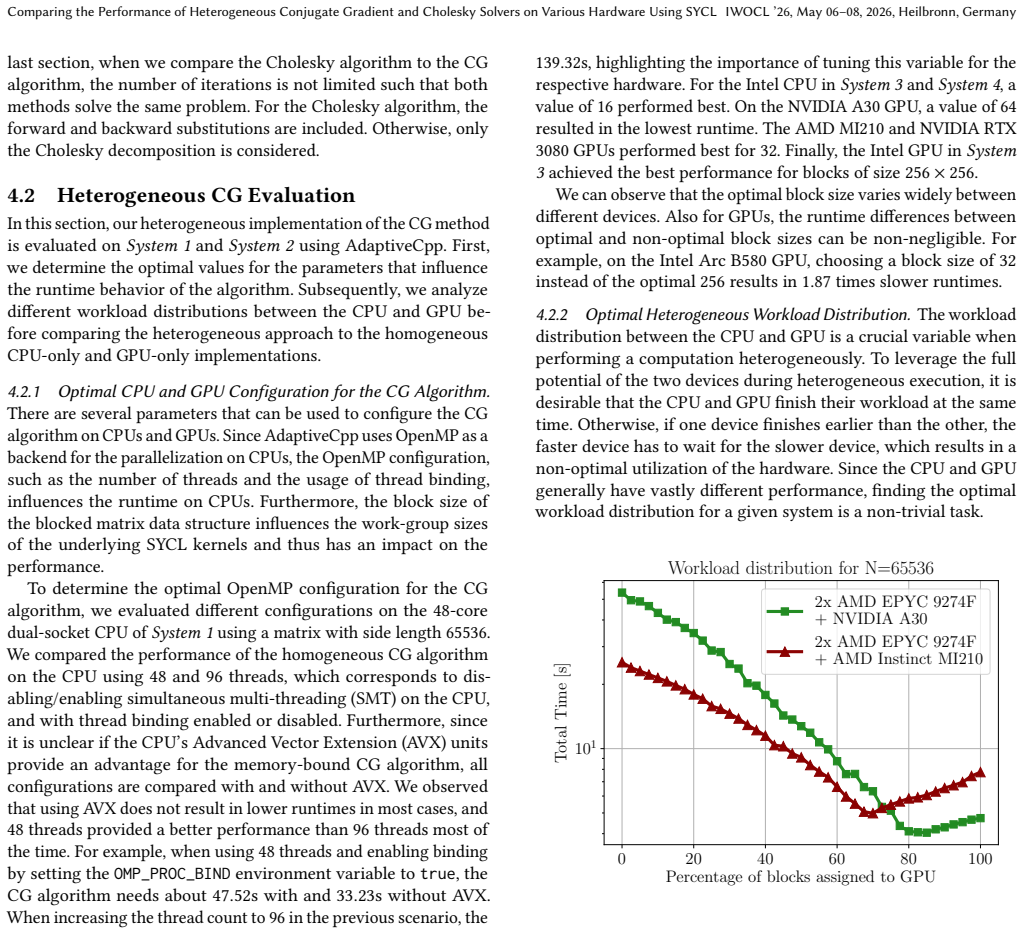

NCCLZ: Compression-Enabled GPU Collectives with Decoupled Quantization and Entropy Coding

Placing quantization at the interface and entropy coding inside NCCL primitives allows overlap with communication on scientific and training

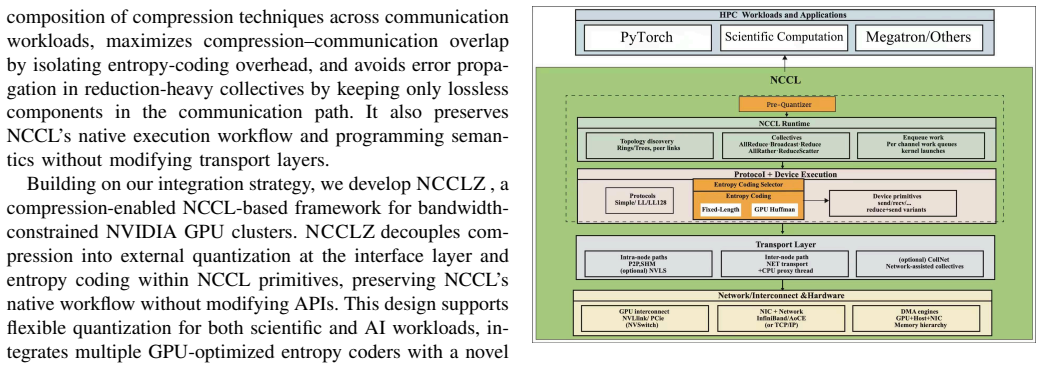

Attention heads differ in granularity needs, allowing targeted block sizing to recover accuracy lost by uniform methods without slowing down

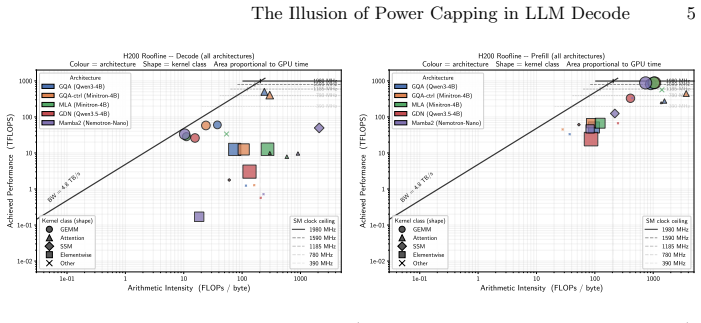

Decode draws 137-300 W on 700 W GPUs as memory saturates first; clock locking recovers 32% energy instead.

Trade-offs in Decentralized Agentic AI Discovery Across the Compute Continuum

Benchmarks on 4096 nodes show how Chord, Pastry and Kademlia perform in stationary and churn conditions across edge to cloud

GraphFlash: Enabling Fast and Elastic Graph Processing on Serverless Infrastructure

Shared storage and targeted optimizations let graph workloads scale without fixed clusters.

Three mechanisms cut position-seeking costs during concurrent searches and updates

State Twins: An Off-Chain Substrate for Agentic Reasoning over Decentralized Finance Protocols

Formalizing AMM pools as dynamical systems yields replicas that support instant forking and replay while bounding divergence from the live chain.

GriNNder: Breaking the Memory Capacity Wall in Full-Graph GNN Training with Storage Offloading

GriNNder achieves up to 9.78x speedup on single GPU setups comparable to distributed systems

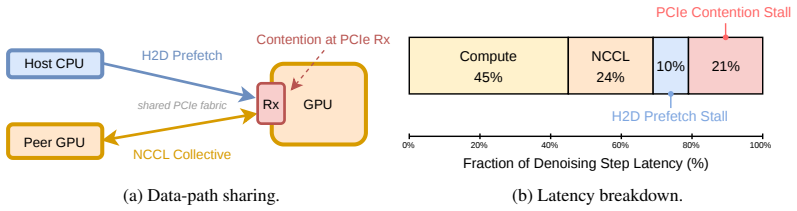

Communication-aware runtime hides prefetch latency behind compute and collectives on PCIe nodes, exposing a tunable memory-speed tradeoff.

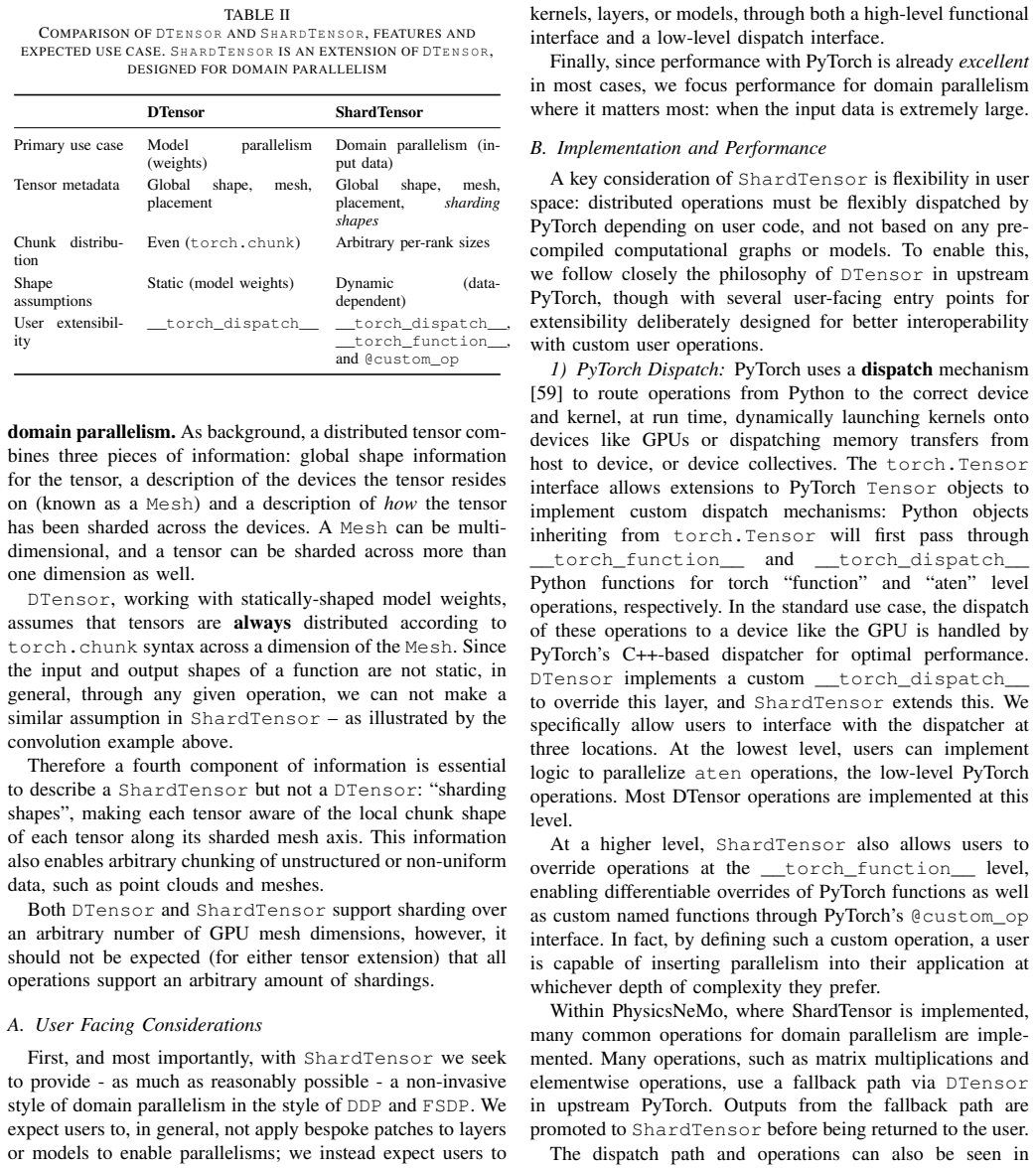

A portable format encodes operations, dependencies, timing, and constraints to support simulators and hardware co-design across vendors.

Byzantine Consensus in Directed Graphs with Message Authentication

With message authentication, exact agreement works in synchronous cases and approximate agreement in asynchronous cases precisely when the d

ReCoVer: Resilient LLM Pre-Training System via Fault-Tolerant Collective and Versatile Workload

Constant microbatch counts per iteration deliver 2.23x higher throughput than restart methods when 256 GPUs fail during the run.

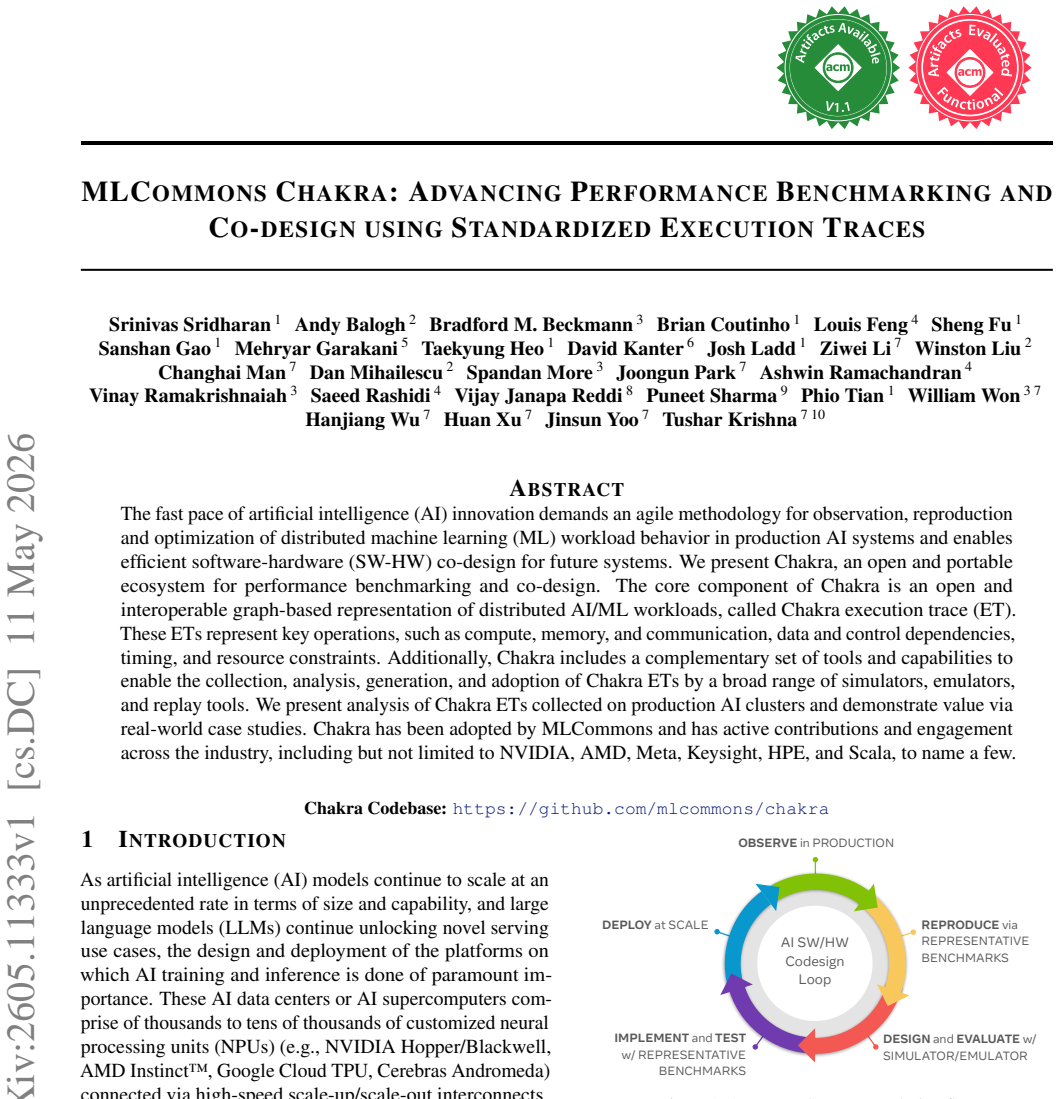

ShardTensor: Domain Parallelism for Scientific Machine Learning

Domain parallelism shards input data so models can train and infer on larger problems without hardware caps or accuracy loss.

Closer in the Gap: Towards Portable Performance on RISC-V Vector Processors

Microbenchmarks on real hardware show predication and stride loads limit current compilers, with GCC ahead except in matrix multiplies.

An Uncertainty-Aware Resilience Micro-Agent for Causal Observability in the Computing Continuum

Causal graphs and uncertainty checks let it repair at 62% accuracy in 3ms or escalate when unsure, preventing harm in ambiguous edge faults.

Surviving Partial Rank Failures in Wide Expert-Parallel MoE Inference

Targeted repairs for reachability and coverage replace full-instance downtime with short pauses that restore near-normal speed in under a 60

Accelerating Compound LLM Training Workloads with Maestro

Section graphs let each workload part pick its own settings; wavefront scheduling overlaps execution despite input-driven changes.

Privacy-preserving Chunk Scheduling in a BitTorrent Implementation of Federated Learning

FLTorrent keeps attribution near neighborhood levels with 6-10% overhead for large models over 100-500 peers.

It splits power decisions from task placement and coordinates them through queue states to handle mobility and varying loads.

FractalSortCPU: Bandwidth-Efficient Compressed Radix Sort on CPU

Compressed histograms and fully parallel key updates remove bucketing and pre-processing for 512 MB to 32 GB datasets.

FractalSortCPU: Bandwidth-Efficient Compressed Radix Sort on CPU

Histogram compression with parallel updates skips bucketing and preprocessing, lowering latency for database sorting tasks.

Amortized Asynchronous Byzantine Reliable Broadcast with Optimal Resilience

After initial rounds build guarantees, each new broadcast needs one round and O(n |m|) total cost while keeping n/3 fault tolerance.

Amortized Asynchronous Byzantine Reliable Broadcast with Optimal Resilience

Multi-round structure shares setup so later broadcasts need one round each while keeping resilience at f < n/3 for large messages.

Onboard filters send only candidate events while a cloud model marks exact pothole boundaries across distributed vehicle fleets.

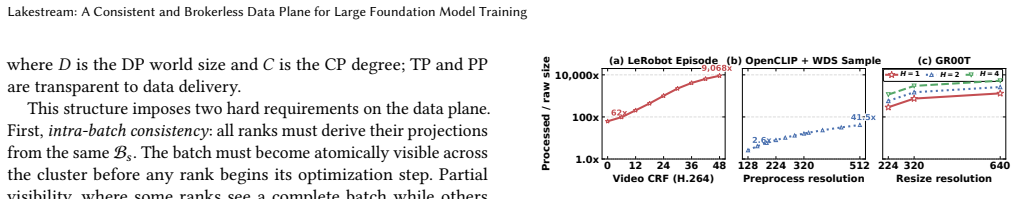

Lakestream: A Consistent and Brokerless Data Plane for Large Foundation Model Training

Object-store approach adds atomic visibility and recovery while exceeding Kafka in speed and isolation on large workloads.

Population Protocols over Ordered Agents

The immediate-observation variant restricted to order predicates exactly matches this language class with links to logic and automata.

Multi-Tier Labeling and Physics-Informed Learning for Orbital Anomaly Detection at Scale

A transformer trained on 232M TLE records reaches 55% maneuver recall and 63% decay recall, serving as a high-recall triage tool.

Cloud Performance Decomposition for Long-Term Performance Engineering: A Case Study

Hybrid and automatic methods uncover hidden weekly and quarterly cycles in serverless data, cutting latency variability by 60% on AWS.

Adaptive DNN Partitioning and Offloading in Heterogeneous Edge-Cloud Continuum

Periodic network checks let layers move between Raspberry Pi, laptop and cloud to beat fixed partitions on latency as well

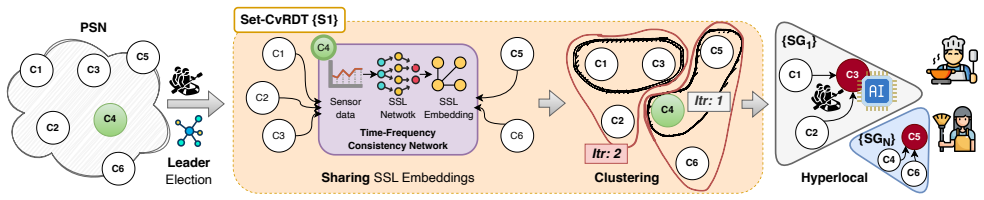

PoHAR: Understanding Hyperlocal Human Activities with Pollution Sensor Networks

Low-cost pollution monitors share data conflict-free, cluster affected nodes without labels, and classify hyperlocal activities locally in 2

ATLAS: Efficient Out-of-Core Inference for Billion-Scale Graph Neural Networks

Broadcast-based streaming replaces gather operations to handle graphs and features too large for memory using sequential disk reads.

From Detection to Recovery: Operational Analysis on LLM Pre-training with 504 GPUs

63-node B200 cluster data shows combined signals detect issues early with low false positives while NFS saturation limits checkpoint speed

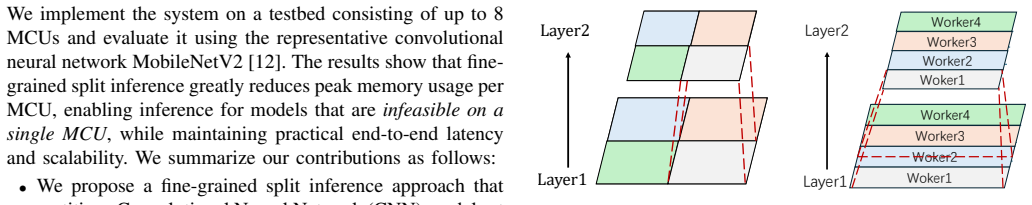

Split CNN Inference on Networked Microcontrollers

Distributing weights and activations across devices cuts peak RAM per unit while keeping latency practical.

Light Cone Consistency: Toward a Unified Theory of Consistency in Message-Passing Systems

Every active system must relax causal closure, fork resolution, or timeliness to escape the impossibility triangle.

MegaScale-Omni: A Hyper-Scale, Workload-Resilient System for MultiModal LLM Training in Production

Decoupled encoder and LLM parallelism plus adaptive balancing maintain high efficiency as data lengths and modality mixes vary in production

TS-Verkle: A TypeScript Native Verkle Library With On-chain Verifier

TypeScript library and Solidity verifier show unoptimized Verkle setups exceed Merkle costs despite smaller proofs.

Learning from historical archives turns massive observations into task-adaptive compressed representations.

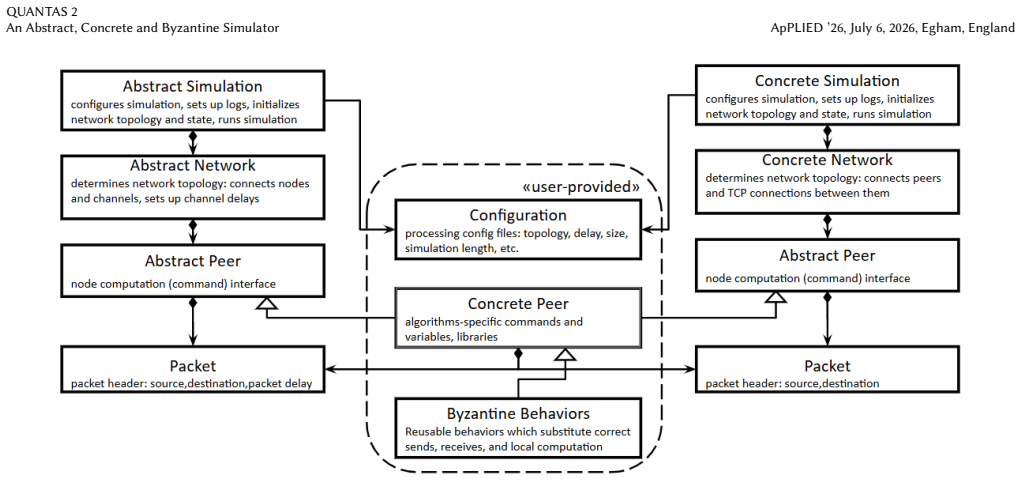

QUANTAS 2: An Abstract, Concrete and Byzantine Simulator

QUANTAS 2 adds concrete network mode and composable fault strategies so researchers can explore then validate without rewriting anything.

MARLaaS: Multi-Tenant Asynchronous Reinforcement Learning as a Service

LoRA sharing and decoupled async stages let 32 tasks run together while cutting end-to-end time 85 percent.

Unleashing Scalable Context Parallelism for Foundation Models Pre-Training via FCP

Flexible communication and bin-packing balance short and long sequences, lifting attention MFU by 1.13x to 2.21x.

Dooly: Configuration-Agnostic, Redundancy-Aware Profiling for LLM Inference Simulation

One inference pass labels dimensions to build a reusable database, holding simulation error to 5-8% MAPE on time metrics.

Stencil Computations on Cerebras Wafer-Scale Engine

CStencil uses on-chip SRAM and mesh interconnect to remove memory bottlenecks that limit GPUs for scientific workloads.

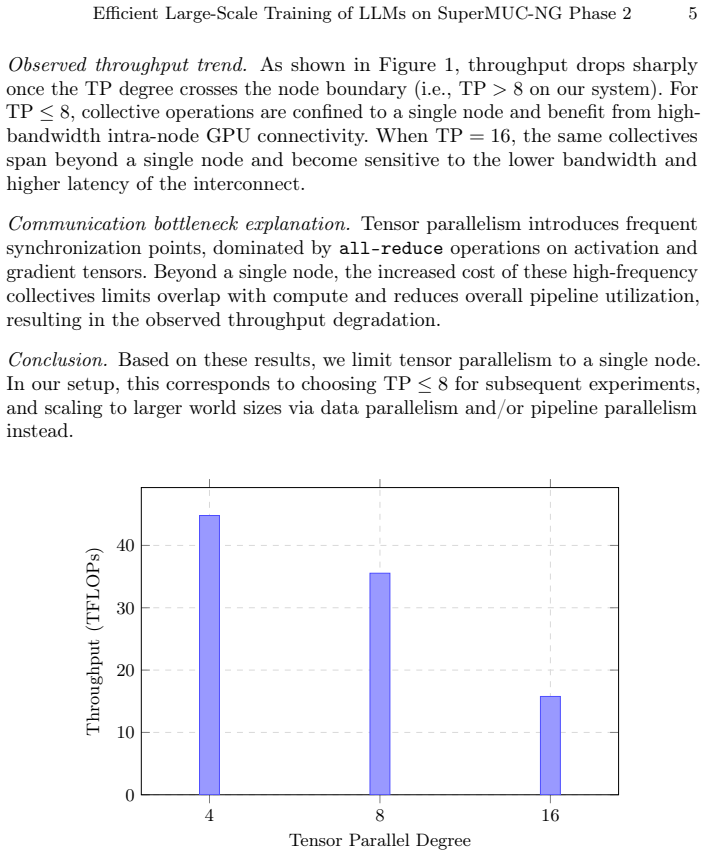

A Scalable Recipe on SuperMUC-NG Phase 2: Efficient Large-Scale Training of Language Models

Tensor, pipeline and sharded data parallelism yield 93% weak scaling on 128 nodes using unmodified software.

Stencil Computations on Tenstorrent Wormhole

Axpy version uses less energy on large inputs while profiling isolates PCIe and setup costs as the main gap.

HexiSeq: Accommodating Long Context Training of LLMs over Heterogeneous Hardware

Asymmetric partitioning of sequences and heads to match device capabilities delivers gains on both real and simulated heterogeneous setups.

Deadline-Driven Hierarchical Agentic Resource Sharing for AI Services and RAN Functions in AI-RAN

An LLM placement layer plus fast convex allocation meets deadlines while the critic blocks costly migrations.

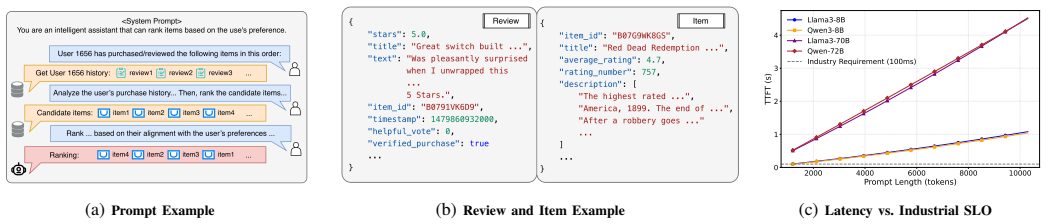

RcLLM: Accelerating Generative Recommendation via Beyond-Prefix KV Caching

Beyond-prefix block caching plus selective attention lets LLMs serve real-time personalized outputs from long prompts

MERBIT: A GPU-Based SpMV Method for Iterative Workloads

Merge-path and bit-fields balance nonzeros and memory traffic for repeated graph matrix work.

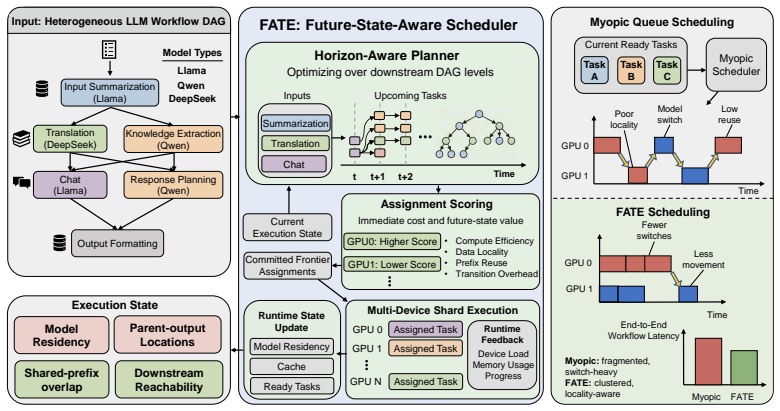

FATE: Future-State-Aware Scheduling for Heterogeneous LLM Workflows

By scoring placements on both immediate cost and induced downstream state, FATE beats round-robin and classical DAG methods on real and test

On Similarity of Computational Kernels in our Codes and Proxies

New scores based on resource patterns automate checks on whether benchmarks represent real HPC codes on CPU and GPU systems.

Regulating Branch Parallelism in LLM Serving

Extra output branches are admitted only when their added latency fits the current batch slack, keeping SLOs above 95%.

CCL-Bench 1.0: A Trace-Based Benchmark for LLM Infrastructure

A benchmark that records full execution traces exposes why some configurations underperform despite better overlap or hardware.

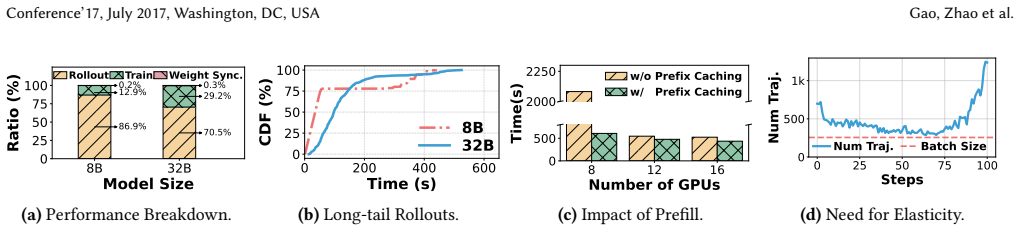

ROSE: Rollout On Serving GPUs via Cooperative Elasticity for Agentic RL

ROSE safely reuses spare capacity in production clusters for faster multi-turn agent training without breaking service guarantees

ADELIA: Automatic Differentiation for Efficient Laplace Inference Approximations

Structure-exploiting multi-GPU backward pass cuts energy 5-8x and enables reliable runs on models with 1.9 million latent variables.

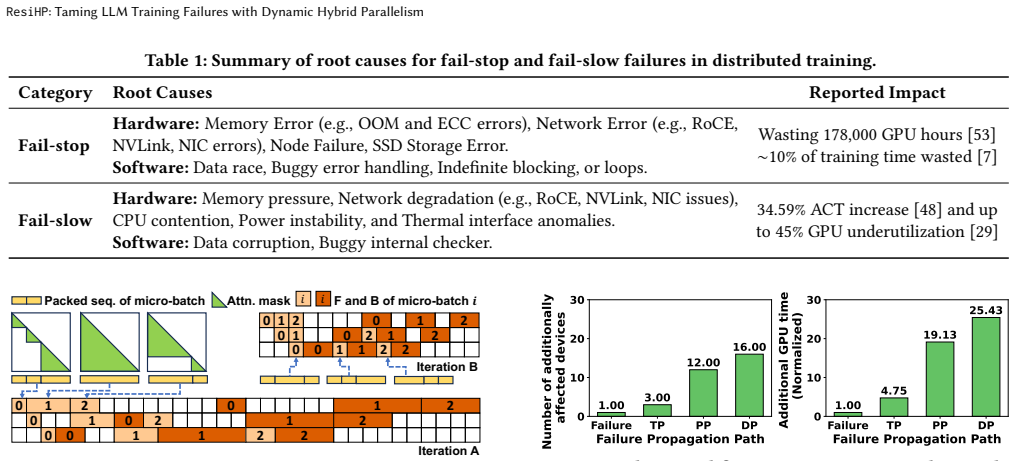

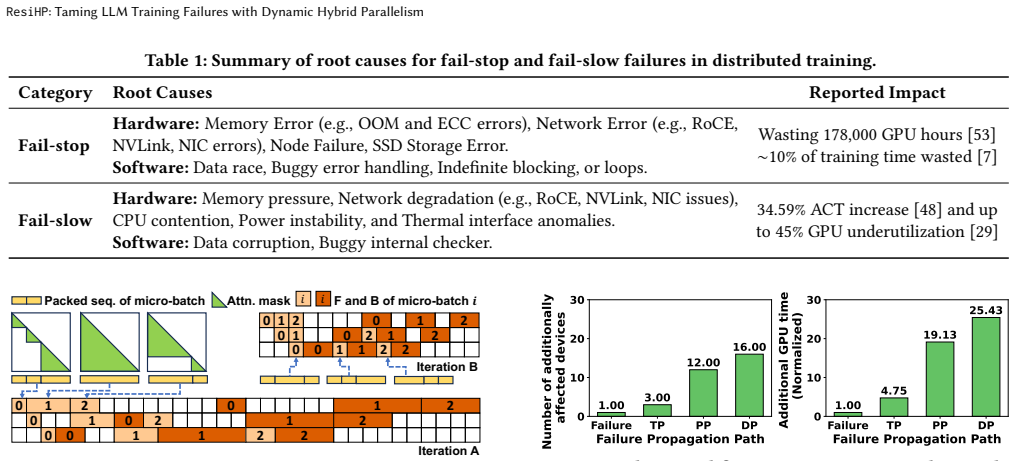

ResiHP: Taming LLM Training Failures with Dynamic Hybrid Parallelism

Workload-aware detection plus dynamic group resizing keeps hybrid-parallel training efficient when devices slow or drop out.

ResiHP: Taming LLM Training Failures with Dynamic Hybrid Parallelism

Workload predictor separates real slowdowns from data variation, then scheduler resizes parallelism groups for 1.04-4.39x higher speed on 16

TACO: A Toolsuite for the Verification of Threshold Automata

Implements three decidable model checkers and two semi-decision procedures to check fault-tolerant algorithms automatically.

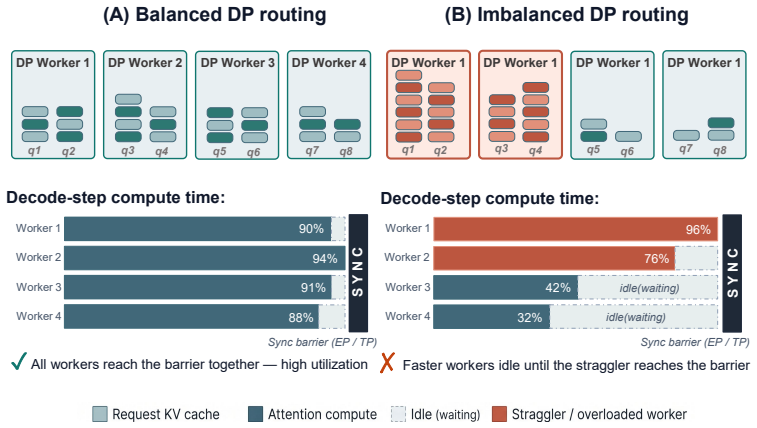

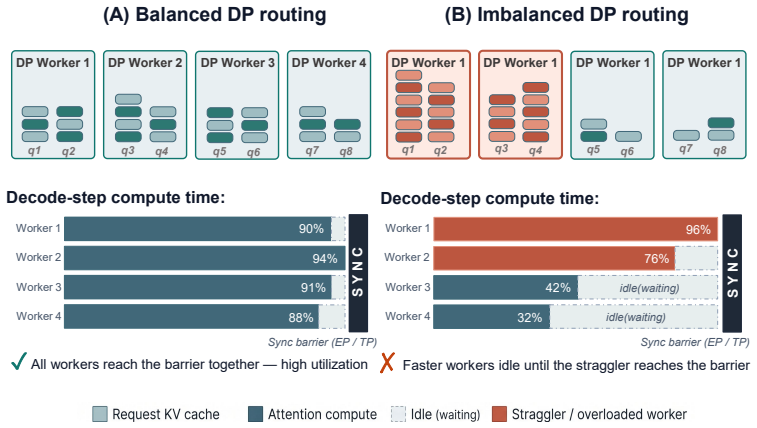

Asymmetric scoring of request assignments prevents the slowest worker from setting the pace at every decode step.

Piecewise-linear F-scores guide sticky assignments inside millisecond budgets and raise cluster throughput on production traces.

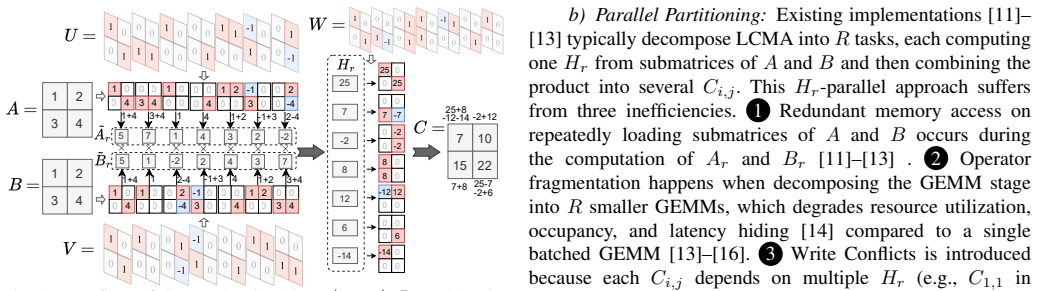

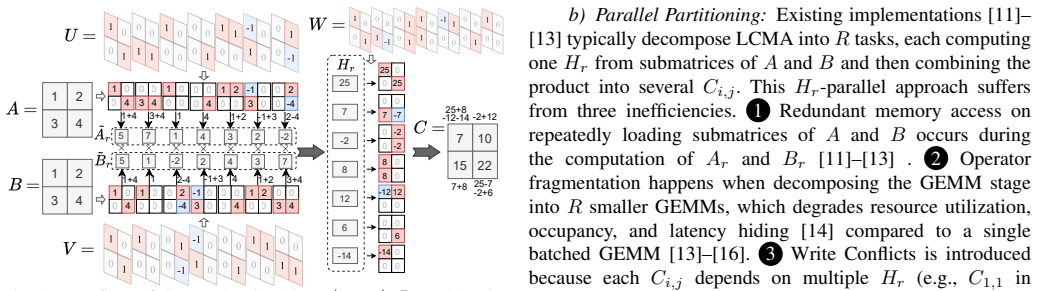

FalconGEMM: Surpassing Hardware Peaks with Lower-Complexity Matrix Multiplication

FalconGEMM selects and optimizes these algorithms to deliver 8-18 percent gains over cuBLAS on GPUs and CPUs for LLM tasks.

FalconGEMM: Surpassing Hardware Peaks with Lower-Complexity Matrix Multiplication

The framework automates LCMA selection and group-parallel execution to beat cuBLAS and AlphaTensor on GPUs and CPUs.

Relay Buffer Independent Communication over Pooled HBM for Efficient MoE Inference on Ascend

On pooled high-bandwidth memory, placing tokens straight into remote expert windows trims dispatch latency and widens practical serving head

Relay Buffer Independent Communication over Pooled HBM for Efficient MoE Inference on Ascend

Pooled HBM on Ascend lets dispatch and combine skip intermediate storage, lowering latency and widening feasible schedules.

A Privacy-Preserving Machine Learning Framework for Edge Intelligence: An Empirical Analysis

Real tests find DP matches plaintext speeds with accuracy tradeoffs, while SMC depends on bandwidth and FHE slows by 1000 times.

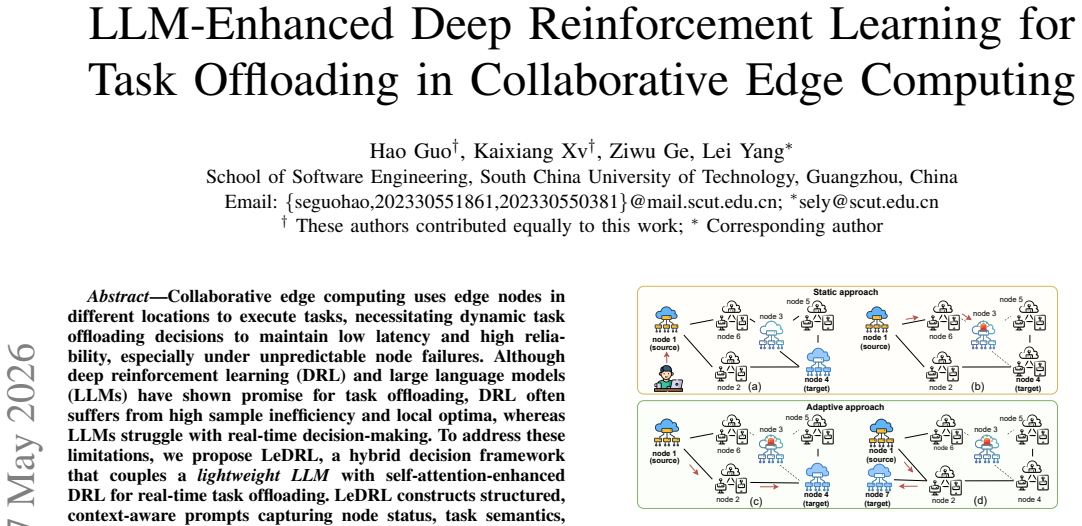

LLM-Enhanced Deep Reinforcement Learning for Task Offloading in Collaborative Edge Computing

Structured prompts and outcome reflection let the language model guide reinforcement learning to manage unpredictable node failures on low-p

Irminsul: MLA-Native Position-Independent Caching for Agentic LLM Serving

Irminsul hashes content directly and rotates only a 64-dim key slice to deliver 63% prefill energy savings on agentic traffic.

A Scalable Digital Twin Framework for Energy Optimization in Data Centers

IoT sensors and LSTM forecasts support real-time energy management and better PUE in tested setups.

EdgeServing: Deadline-Aware Multi-DNN Serving at the Edge

A stability score and early-exit choices expand the scheduler's options to lower deadline misses and P95 latency on shared GPUs.

Nitsum: Serving Tiered LLM Requests with Adaptive Tensor Parallelism

Nitsum reconfigures parallelism degree and GPU splits at runtime to meet mixed latency and throughput targets on fixed hardware.

Toward a Risk Assessment Framework for Institutional DeFi: A Nine-Dimension Approach

Extending prior taxonomies with composability, comprehension debt, and temporal dynamics captures systemic events that six-dimension methods

Piper: Efficient Large-Scale MoE Training via Resource Modeling and Pipelined Hybrid Parallelism

By quantifying memory, compute and communication needs, Piper selects pipelined schedules that cut idle time on large HPC clusters.

Communication Offloading on SmartNIC DPUs: A Quantitative Approach

Buddy engine frees host CPU cycles in five applications but reveals 625x DRAM traffic spike without cache-access hardware.

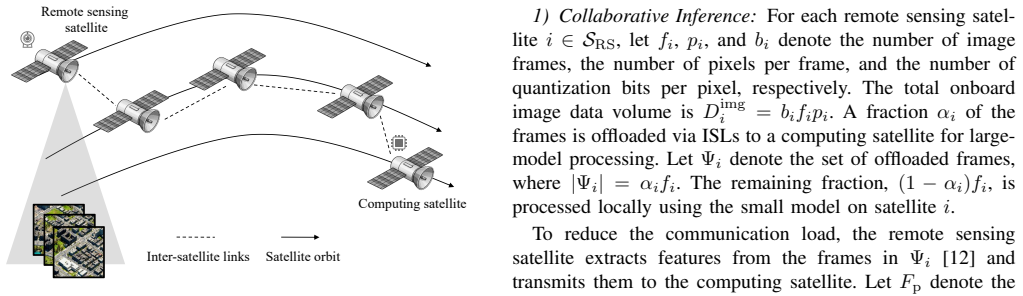

Delay-Aware Large-Small Model Collaboration over LEO Satellite Networks

Small models run locally on remote sensing satellites while large models process on computing satellites using smart offloading and routing.

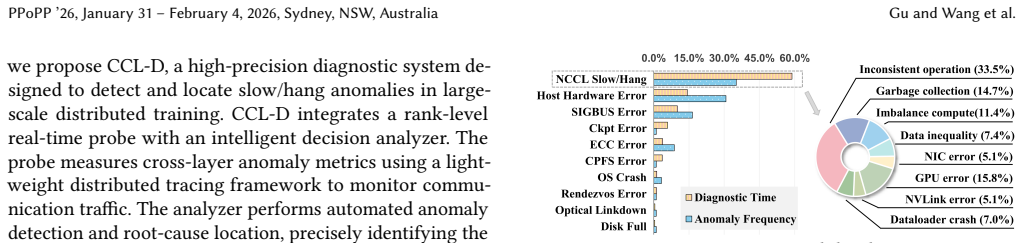

CCL-D: A High-Precision Diagnostic System for Slow and Hang Anomalies in Large-Scale Model Training

The probe and analyzer combination reduces diagnosis time from hours or days to minutes by monitoring cross-layer metrics in real time.

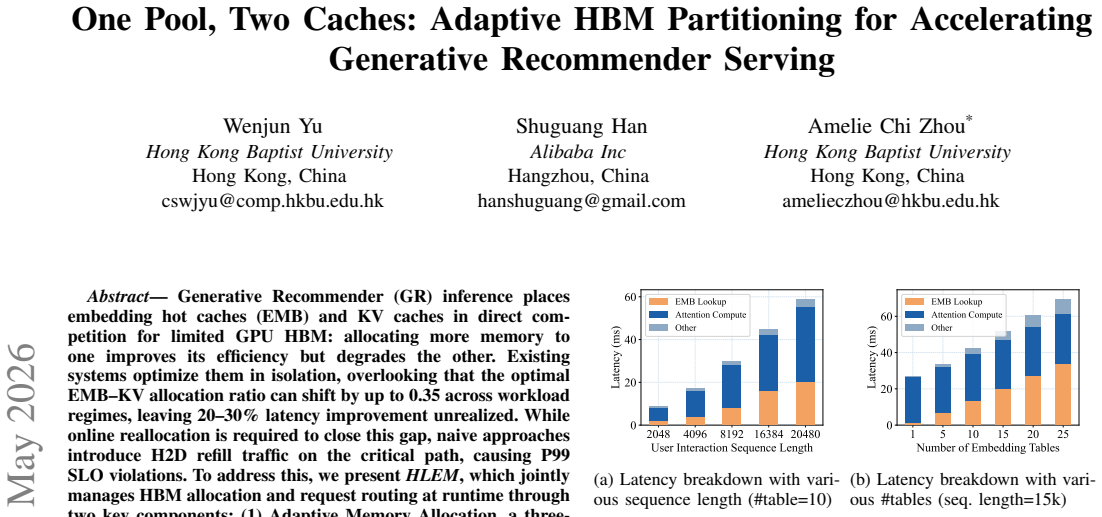

One Pool, Two Caches: Adaptive HBM Partitioning for Accelerating Generative Recommender Serving

PPO controller tracks optimal embedding-KV cache ratio at 32 μs latency, delivering 93-99% SLOs on 32-node production clusters without H2D b

Coral: Cost-Efficient Multi-LLM Serving over Heterogeneous Cloud GPUs

Joint optimization of allocation and strategies adapts to demand shifts for higher goodput when hardware is limited.

Ten assumptions fail under time-varying contacts and resource limits, requiring a new architecture for edge-space-cloud execution

ClusterLess: Deadline-Aware Serverless Workflow Orchestration on Federated Edge Clusters

A coordination layer across federated clusters lifts deadline satisfaction from under 50% to over 90% for concurrent serverless workloads.

phys-MCP: A Control Plane for Heterogeneous Physical Neural Networks

It registers diverse material-based computers as standard resources while preserving their speed, reset behavior, and plasticity for edge-to
