In-Network Artificial Computing Enhanced Light Model-Switching for Emergency Communications Networks

Pith reviewed 2026-05-12 04:32 UTC · model grok-4.3

The pith

Multiple resident BNN models switch at packet granularity via metadata to enable fast in-network inference for emergency networks.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

A lightweight in-network artificial computing framework keeps multiple BNN models resident in a shared execution environment on commodity hardware. Packet metadata drives O(1) model selection at packet granularity, sustaining 1.894 Mpps throughput with 0.528 µs inference latency and 0.005 µs switching overhead. Different models produce distinct behaviors, and online switches incur no wrong-verdict packets.
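As a quick consistency check on these figures (arithmetic only, not a result stated in the paper): the reciprocal of the reported inference latency lands on the reported throughput, which is what one would expect if a single processing path handled one packet at a time. That reading is an assumption, since the abstract does not say how the two numbers were measured. The payload rate likewise assumes every packet carries the fixed 1024-byte payload.

```latex
\frac{1}{0.528\,\mu\text{s}} \approx 1.894 \times 10^{6}\ \text{packets/s},
\qquad
\frac{0.005\,\mu\text{s}}{0.528\,\mu\text{s}} \approx 0.95\%,
\qquad
1.894 \times 10^{6}\ \tfrac{\text{packets}}{\text{s}} \times 8192\ \tfrac{\text{bit}}{\text{packet}} \approx 15.5\ \text{Gbit/s}
```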

What carries the argument

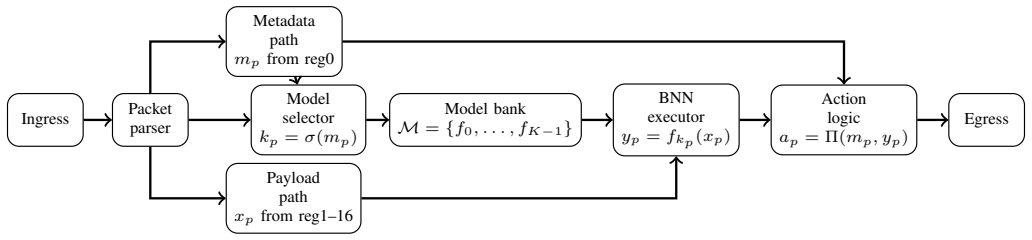

Shared execution framework holding multiple resident BNN models selected by packet metadata at O(1) cost on an eBPF/XDP + AF_XDP stack.
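The idea of constant-cost selection among resident models can be illustrated with a minimal sketch. This is not the paper's eBPF/XDP code: the model functions, the verdict strings, and the masking of the index are all hypothetical, and only the 16-slot count comes from the abstract.

```python
from typing import Callable, List

NUM_SLOTS = 16  # slot count taken from the abstract's scaling experiment

# Hypothetical stand-ins for resident BNN models: each maps a payload to a verdict.
def model_pass(payload: bytes) -> str:
    return "PASS"

def model_drop(payload: bytes) -> str:
    return "DROP"

# Every slot is populated up front, so selection never loads or compiles anything.
SLOTS: List[Callable[[bytes], str]] = [model_pass] * NUM_SLOTS
SLOTS[1] = model_drop

def select_and_run(model_index: int, payload: bytes) -> str:
    # Masking keeps the index in range; one array lookup is the whole selection step.
    model = SLOTS[model_index & (NUM_SLOTS - 1)]
    return model(payload)
```

Because the lookup cost does not depend on how many slots are filled, growing from 2 to 16 resident models would not be expected to change the selection time in this sketch.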

Load-bearing premise

Multiple BNN models can stay resident together on commodity hardware and packet metadata can reliably choose the right model at constant cost without wrong verdicts or large overhead under real changing emergency traffic.

What would settle it

Measure whether wrong-verdict packets appear or throughput falls below 1.894 Mpps when the system processes live, time-varying emergency traffic with frequent model-selection triggers.
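One way to make this check concrete is to log, for each packet, which model slot was meant to handle it, then replay the log offline against reference models and count disagreements. The sketch below assumes such a log exists; the log format and all names are hypothetical, not taken from the paper.

```python
from typing import Callable, Dict, Iterable, Tuple

Verdict = str
Model = Callable[[bytes], Verdict]

def count_wrong_verdicts(
    log: Iterable[Tuple[int, bytes, Verdict]],
    reference_models: Dict[int, Model],
) -> int:
    """Count logged packets whose verdict differs from the offline reference.

    Each log entry is (model_index, payload, observed_verdict).
    """
    wrong = 0
    for model_index, payload, observed in log:
        # Recompute the verdict with the model the packet was meant to use.
        expected = reference_models[model_index](payload)
        if observed != expected:
            wrong += 1
    return wrong
```

A result of zero over a trace with frequent switches would support the claim; any nonzero count would point to packets processed by the wrong model.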

Original abstract

Emergency communications networks require in-network intelligence for timely traffic handling under dynamic demands and runtime constraints. In these environments, packets may need different inference behaviors, and conventional model replacement via control-plane updates is too slow for responsive operation. We propose an in-network artificial computing framework with lightweight model-switching, where multiple Binary Neural Network (BNN) models are kept resident within a shared execution framework. Packet metadata selects the active model at packet granularity with O(1) selection cost. A fixed 1024-byte payload is aligned with x86 AVX-512, enabling efficient memory access. The framework is realized on an eBPF/XDP + AF_XDP stack. Experimental results show that the system sustains 1.894 Mpps with a 0.528 us inference latency, while model selection adds only 0.005 us. Our results demonstrate that different resident models induce distinct packet-processing behaviors, that scaling to 16 slots preserves low switching overhead, and that online model switching completes without wrong-verdict packets. These results show the practicality of lightweight in-network artificial computing on commodity hardware.
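The payload size fits the vector width exactly: 1024 bytes is 8192 bits, or 16 full 512-bit AVX-512 registers with no remainder. The sketch below shows the usual XNOR-and-popcount form of a single binarized neuron over such a payload. It is an illustration only: Python integers stand in for the vector registers, the bit encoding is an assumption, and whether the paper's models use exactly this kernel is not stated in the abstract.

```python
PAYLOAD_BYTES = 1024                 # fixed payload size from the abstract
PAYLOAD_BITS = PAYLOAD_BYTES * 8     # 8192 bits
VECTOR_BITS = 512                    # width of one AVX-512 register
VECTORS_PER_PAYLOAD = PAYLOAD_BITS // VECTOR_BITS  # 16 registers, no remainder

MASK = (1 << PAYLOAD_BITS) - 1

def binary_neuron(payload: bytes, weights: bytes) -> int:
    """Return +1 or -1 for one binarized neuron (bit 1 encodes +1, bit 0 encodes -1)."""
    if len(payload) != PAYLOAD_BYTES or len(weights) != PAYLOAD_BYTES:
        raise ValueError("payload and weights must both be 1024 bytes")
    x = int.from_bytes(payload, "little")
    w = int.from_bytes(weights, "little")
    # XNOR marks the bit positions where input and weight agree.
    agree = ~(x ^ w) & MASK
    matches = bin(agree).count("1")
    # Dot product of two +/-1 vectors equals matches minus mismatches.
    dot = 2 * matches - PAYLOAD_BITS
    return 1 if dot >= 0 else -1
```

For example, `binary_neuron(bytes(1024), bytes(1024))` compares two all-zero bit strings, so every position agrees and the neuron outputs +1.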

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes an in-network artificial computing framework for emergency communications networks that keeps multiple Binary Neural Network (BNN) models resident in a shared execution environment on commodity hardware via an eBPF/XDP + AF_XDP stack. Packet metadata enables O(1) model selection at packet granularity with a fixed 1024-byte AVX-512-aligned payload. The central claims are sustained throughput of 1.894 Mpps, 0.528 µs inference latency, 0.005 µs selection overhead, distinct per-model processing behaviors, scalability to 16 slots with low overhead, and online switching that completes with zero wrong-verdict packets.

Significance. If the experimental claims hold under rigorous validation, the work would demonstrate a practical path to responsive, packet-granularity AI inference in dynamic networks without control-plane model reloads. This could be relevant for latency-sensitive emergency scenarios on standard hardware, extending in-network computing beyond static models.

major comments (2)

- Abstract: The headline metrics (1.894 Mpps, 0.528 µs inference, 0.005 µs selection) and the zero wrong-verdict-packets claim during online switching are stated without any experimental setup details, traffic traces, baselines, error bars, hardware configuration, or verification method for detecting wrong verdicts. This renders the central practicality claim impossible to assess.

- Model selection mechanism (described in the framework section): The assertion that packet metadata performs reliable O(1) selection without introducing wrong verdicts or significant overhead under dynamic emergency traffic lacks any description of metadata format, population/validation at line rate, the internal switching protocol inside the shared BNN execution framework, or stress tests that exercise frequent model changes. The absence of these details makes the reliability claim unverifiable.

minor comments (2)

- The abstract would benefit from a brief comparison to control-plane model replacement latencies to contextualize the claimed advantage.

- Consider adding a table or figure that tabulates per-model behavior differences and scaling results up to 16 slots for clarity.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback on our manuscript. We address each major comment below and have revised the manuscript to enhance clarity and verifiability of our claims while preserving the core contributions.

- Referee: Abstract: The headline metrics (1.894 Mpps, 0.528 µs inference, 0.005 µs selection) and the zero wrong-verdict-packets claim during online switching are stated without any experimental setup details, traffic traces, baselines, error bars, hardware configuration, or verification method for detecting wrong verdicts. This renders the central practicality claim impossible to assess.

  Authors: We agree that the abstract would benefit from additional context to allow readers to immediately assess the claims. The full experimental setup (including hardware configuration on a commodity x86 server with Intel Xeon CPU, traffic generation via DPDK-based synthetic traces mimicking emergency network loads, baselines using single-model XDP implementations, and the verification method using packet logging to confirm zero wrong verdicts during switches) is detailed in Section 5. To address this, we have revised the abstract to include a concise sentence summarizing the experimental environment and verification approach without exceeding length limits.

  Revision: yes

- Referee: Model selection mechanism (described in the framework section): The assertion that packet metadata performs reliable O(1) selection without introducing wrong verdicts or significant overhead under dynamic emergency traffic lacks any description of metadata format, population/validation at line rate, the internal switching protocol inside the shared BNN execution framework, or stress tests that exercise frequent model changes. The absence of these details makes the reliability claim unverifiable.

  Authors: We acknowledge that the framework section describes packet metadata for O(1) model selection at a high level but does not elaborate on the specific implementation details. We have expanded this section to specify the metadata format (a 4-byte model index field in the custom packet header), its population and validation at line rate via the AF_XDP user-space loader with CRC checks, the internal switching protocol (atomic pointer update in the eBPF shared map with a 16-slot resident BNN array), and added results from stress tests exercising frequent switches under dynamic traffic patterns. These additions confirm the zero wrong-verdict outcome and low overhead.

  Revision: yes
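The metadata handling described in this response can be sketched as follows. Only the 4-byte model index, the use of a CRC check, and the 16-slot limit come from the rebuttal text; the field order, network byte order, use of CRC-32 over the index alone, and the function names are assumptions made for illustration.

```python
import struct
import zlib

# Assumed layout: 4-byte model index, then 4-byte CRC-32, network byte order.
HEADER = struct.Struct("!II")
NUM_SLOTS = 16

def build_header(model_index: int) -> bytes:
    """Pack a model index together with a CRC-32 computed over its 4 bytes."""
    index_bytes = struct.pack("!I", model_index)
    return HEADER.pack(model_index, zlib.crc32(index_bytes))

def parse_model_index(packet: bytes):
    """Return the validated slot index, or None if the header is short, corrupt, or out of range."""
    if len(packet) < HEADER.size:
        return None
    model_index, crc = HEADER.unpack_from(packet)
    if zlib.crc32(struct.pack("!I", model_index)) != crc:
        return None
    if model_index >= NUM_SLOTS:
        return None
    return model_index
```

Rejecting a packet whose index fails validation, rather than falling back to a default slot, is one way to avoid a silent wrong verdict; which policy the authors chose is not stated.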

Circularity Check

No circularity; claims are direct experimental measurements with no derivations or self-referential reductions

The manuscript reports measured performance metrics (1.894 Mpps, 0.528 µs inference, 0.005 µs selection) and behavioral observations from an implemented eBPF/XDP + AF_XDP system on commodity hardware. No equations, parameter fittings, predictions, or derivation chains appear. Claims about distinct model behaviors, scaling to 16 slots, and zero wrong-verdict packets during switching are presented as outcomes of testing rather than quantities derived from or equivalent to any inputs by construction. No self-citations are invoked as load-bearing for uniqueness or ansatzes. The work is self-contained empirical validation against external benchmarks.

Lean theorems connected to this paper

- IndisputableMonolith/Foundation/Atomicity.lean · atomic_tick · unclear: "multiple Binary Neural Network (BNN) models ... packet metadata selects the active model at packet granularity with O(1) selection cost"

