A Stability Benchmark of Generative Regularizers for Inverse Problems

Pith reviewed 2026-05-12 03:53 UTC · model grok-4.3

The pith

Generative diffusion priors for inverse problems in imaging are not universally stable and can underperform compared to optimization-based methods in imperfect settings.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The central discovery is that while generative priors can provide state-of-the-art reconstructions in some settings, they fall short or become problematic in others, particularly regarding robustness to out-of-distribution data and inaccuracies in the forward operator or noise model, as revealed through numerical tests of convergent regularization and related properties.

What carries the argument

A set of numerical stability tests covering convergent regularization, out-of-distribution robustness, and robustness to forward operator or noise model inaccuracies, used to compare generative regularizers against variational methods.
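
For orientation, the first of these notions can be written in the classical form used in regularization theory. The notation below is assumed here for illustration and may differ from the paper's: $A$ is the forward operator, $y = A x^\dagger$ the exact data for a suitably selected solution $x^\dagger$, $y^\delta$ the noisy data at noise level $\delta$, $R_\alpha$ the reconstruction map, and $\alpha(\delta)$ the parameter choice rule.

```latex
\[
  \|y^\delta - y\| \le \delta, \quad y = A x^\dagger
  \quad\Longrightarrow\quad
  \lim_{\delta \to 0} \bigl\| R_{\alpha(\delta)}(y^\delta) - x^\dagger \bigr\| = 0 .
\]
```

Informally, as the noise vanishes, the reconstructions should converge to a solution of the noise-free problem. A numerical test of this property would typically track the reconstruction error over a decreasing sequence of noise levels.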

Load-bearing premise

The chosen numerical test cases and metrics are representative of the imperfect conditions in actual scientific and medical imaging applications.

What would settle it

A finding that generative priors consistently outperform variational methods and remain stable across all tested conditions of out-of-distribution data and model inaccuracies would contradict the paper's conclusion that they can fall short or be problematic in certain settings.

Original abstract

Generative (diffusion) priors demonstrate remarkable performance in addressing inverse problems in imaging. Yet, for scientific and medical imaging, it is crucial that reconstruction techniques remain stable and reliable under imperfect settings. Typical definitions of stability encompass the notion of "convergent regularization", robustness to out-of-distribution data, and to inaccuracies in the forward operator or noise model. We evaluate these properties numerically. Furthermore, we benchmark generative approaches against modern optimization-based methods inspired by the widely used variational techniques. Our results give insights for which settings and applications generative priors can deliver state-of-the-art reconstructions, and on those in which they fall short or may even be problematic.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript presents a numerical benchmark evaluating the stability properties of generative (primarily diffusion-based) regularizers for inverse problems in imaging. It assesses convergent regularization, robustness to out-of-distribution (OOD) data, and sensitivity to inaccuracies in the forward operator or noise model, while comparing these approaches against modern optimization-based variational methods. The central claim is that the results yield actionable insights into the settings where generative priors achieve state-of-the-art performance and where they fall short or become problematic, particularly for scientific and medical imaging applications.

Significance. If the benchmark design is representative, the work would be significant for guiding the adoption of generative priors in high-stakes inverse problems, where stability under imperfect conditions is essential. The explicit numerical comparisons to variational baselines and focus on multiple stability notions (convergent regularization, OOD, model mismatch) provide a useful empirical reference point that is currently lacking in the literature.

major comments (3)

- [§4 and Table 2] §4 (Experimental Setup) and Table 2: The central claim that the results provide insights for real scientific/medical deployments rests on the representativeness of the chosen forward operators, noise models, and OOD shifts. However, the paper provides no explicit quantification of perturbation magnitudes or structures (e.g., coil sensitivities, beam hardening, or motion artifacts) that would be typical in practice; if the tested mismatches remain small and synthetic, the observed stability rankings may not generalize and could reverse under realistic conditions.

- [§3.2 and §3.3] §3.2 (OOD Robustness) and §3.3 (Forward Operator Inaccuracies): The definitions of OOD shifts and operator perturbations lack precise metrics (e.g., distribution distance or perturbation norm) and do not include statistical significance testing or error bars on the reported reconstruction metrics. This makes it difficult to assess whether differences between generative and variational methods are robust or merely artifacts of the specific test cases.

- [§5] §5 (Discussion): The claim that generative priors 'may even be problematic' in certain settings is not sufficiently supported by the numerical evidence, as the paper does not explore failure modes under larger or structured mismatches that are common in real deployments; additional experiments with realistic operator errors would be needed to substantiate this part of the conclusion.
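
One possible way to report the size of a forward-operator mismatch, as requested in the comments above, is the relative Frobenius norm of the perturbation. The sketch below is illustrative only: the helper name `relative_operator_mismatch` and the toy 2×2 matrices are assumptions made for this example, not taken from the manuscript, which may define perturbation magnitudes differently.

```python
import math


def frobenius_norm(matrix):
    """Frobenius norm of a dense matrix given as a list of rows."""
    return math.sqrt(sum(entry * entry for row in matrix for entry in row))


def relative_operator_mismatch(nominal, perturbed):
    """Return ||perturbed - nominal||_F / ||nominal||_F (hypothetical helper)."""
    same_rows = len(nominal) == len(perturbed)
    if not same_rows or any(len(a) != len(b) for a, b in zip(nominal, perturbed)):
        raise ValueError("operators must have the same shape")
    difference = [
        [p - n for n, p in zip(row_n, row_p)]
        for row_n, row_p in zip(nominal, perturbed)
    ]
    denominator = frobenius_norm(nominal)
    if denominator == 0.0:
        raise ValueError("nominal operator must be nonzero")
    return frobenius_norm(difference) / denominator


# Toy example with assumed values: identity operator with a small diagonal error.
A_nominal = [[1.0, 0.0], [0.0, 1.0]]
A_perturbed = [[1.1, 0.0], [0.0, 0.9]]
mismatch = relative_operator_mismatch(A_nominal, A_perturbed)
print(f"relative mismatch: {mismatch:.3f}")
```

Reporting a number of this kind next to each experiment would let readers judge whether the tested mismatches are small or comparable to those seen in practice.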

minor comments (3)

- [Throughout] Notation for the generative prior and regularization parameters is introduced inconsistently across sections; a single table summarizing all symbols and their definitions would improve readability.

- [Introduction] The abstract and introduction cite the importance of stability but do not reference prior benchmark papers on regularization stability (e.g., works on convergent regularization theory); adding these would better situate the contribution.

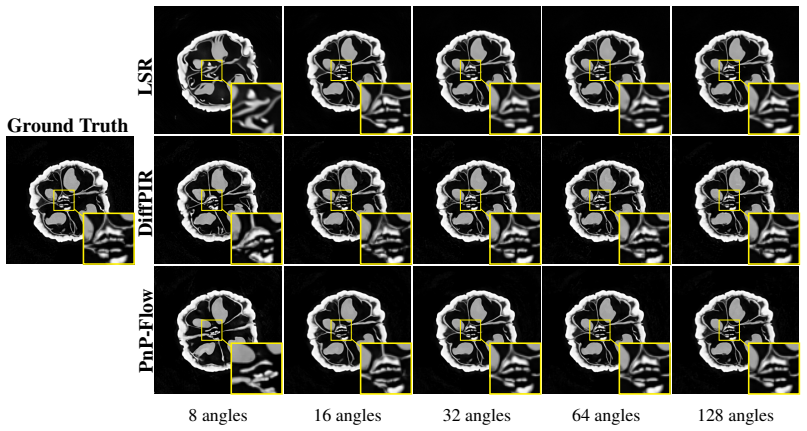

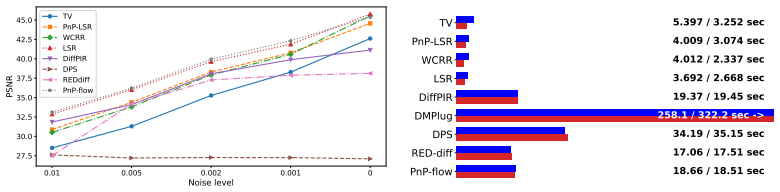

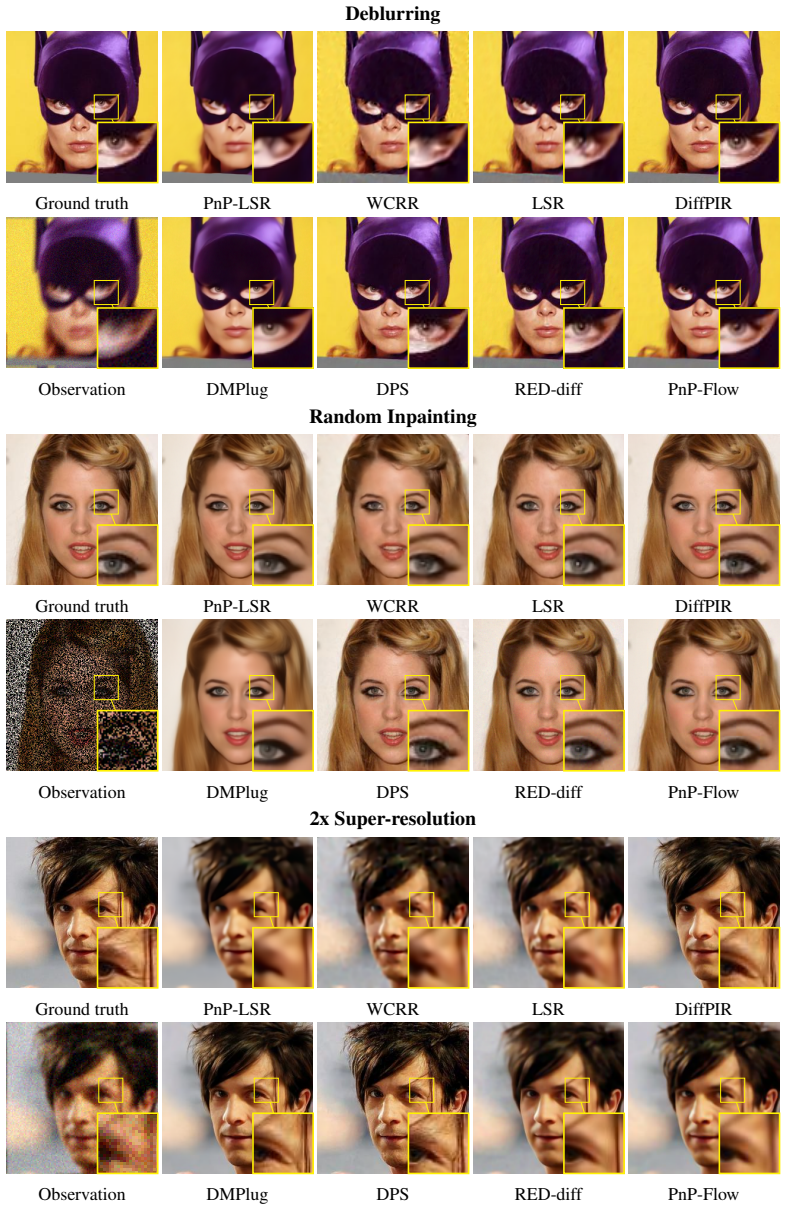

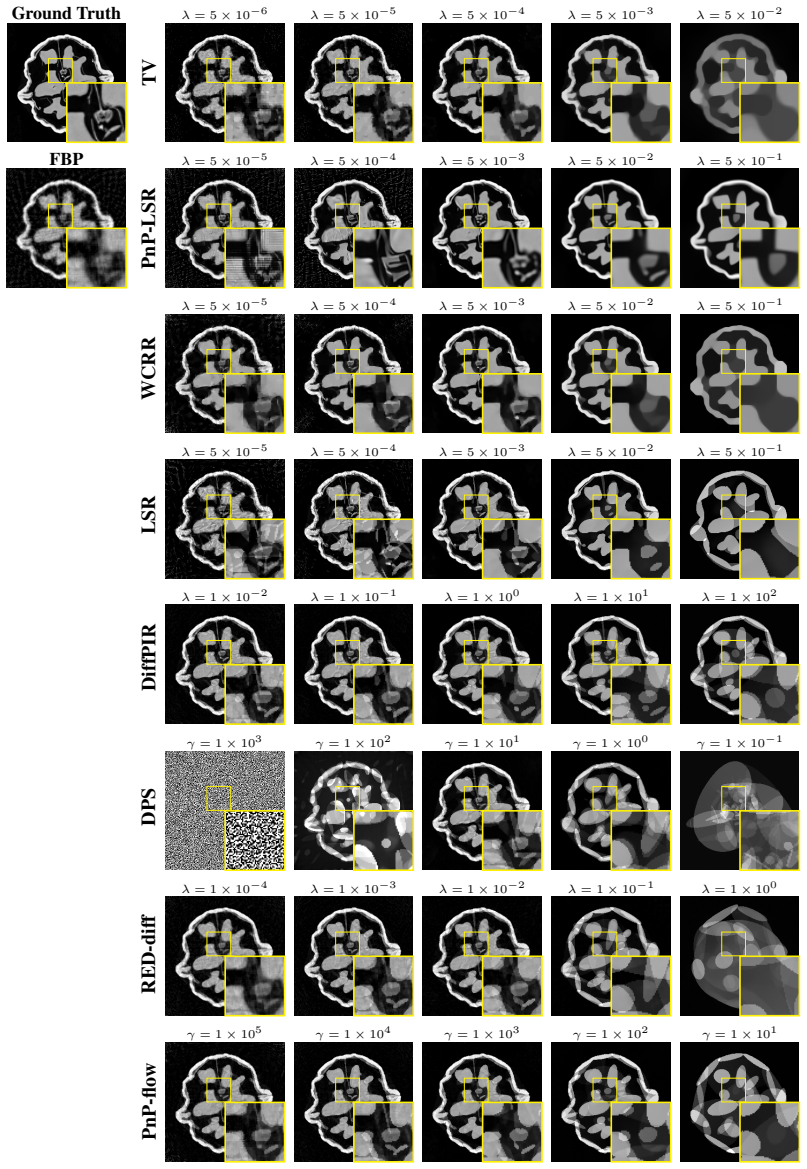

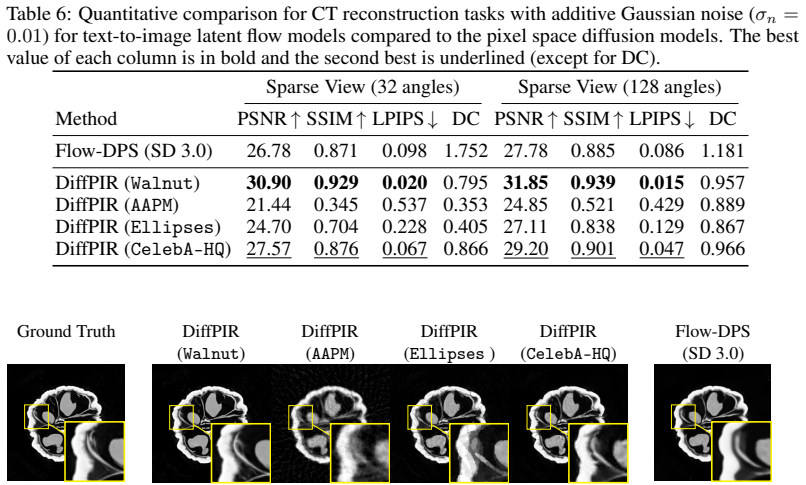

- [Figures] Figure captions for the reconstruction examples are too brief and do not indicate the specific forward operator or noise level used in each panel.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback. We address each major comment below, indicating planned revisions where appropriate to strengthen the manuscript while remaining faithful to the scope of our benchmark study.

- Referee: [§4 and Table 2] §4 (Experimental Setup) and Table 2: The central claim that the results provide insights for real scientific/medical deployments rests on the representativeness of the chosen forward operators, noise models, and OOD shifts. However, the paper provides no explicit quantification of perturbation magnitudes or structures (e.g., coil sensitivities, beam hardening, or motion artifacts) that would be typical in practice; if the tested mismatches remain small and synthetic, the observed stability rankings may not generalize and could reverse under realistic conditions.

  Authors: We agree that explicit quantification of perturbation magnitudes would better support claims of relevance to real deployments. In the revision we will add precise metrics (e.g., relative L2 norms for operator mismatches and a chosen distributional distance for OOD shifts) together with references to typical artifact magnitudes reported in the MRI and CT literature. Our benchmark deliberately employs controlled synthetic perturbations on public datasets to ensure reproducibility and isolate effects; fully realistic structured artifacts would require clinical data outside the present scope.

  Revision: partial

- Referee: [§3.2 and §3.3] §3.2 (OOD Robustness) and §3.3 (Forward Operator Inaccuracies): The definitions of OOD shifts and operator perturbations lack precise metrics (e.g., distribution distance or perturbation norm) and do not include statistical significance testing or error bars on the reported reconstruction metrics. This makes it difficult to assess whether differences between generative and variational methods are robust or merely artifacts of the specific test cases.

  Authors: We will revise Sections 3.2 and 3.3 to supply explicit quantitative definitions, including the normalized perturbation norm for forward-operator inaccuracies and a distributional distance for OOD shifts. Error bars computed across multiple random seeds will be added to all reported metrics, and we will include statistical significance tests (e.g., paired Wilcoxon tests) to substantiate the observed differences between generative and variational approaches.

  Revision: yes

- Referee: [§5] §5 (Discussion): The claim that generative priors 'may even be problematic' in certain settings is not sufficiently supported by the numerical evidence, as the paper does not explore failure modes under larger or structured mismatches that are common in real deployments; additional experiments with realistic operator errors would be needed to substantiate this part of the conclusion.

  Authors: The statement reflects the comparative instabilities we observed under the controlled mismatches tested. We will revise the discussion to qualify the claim more explicitly as an indication under synthetic conditions and to stress the need for caution in high-stakes applications. While we agree that larger or structured real-world mismatches merit further study, the current benchmark already demonstrates settings in which variational methods exhibit greater robustness; we will strengthen the caveats rather than add new experiments.

  Revision: partial

- Additional experiments involving realistic clinical artifacts (e.g., motion, beam hardening, or actual coil-sensitivity maps on patient data) cannot be performed, as the study is restricted to public benchmark datasets to maintain controlled and reproducible evaluation.
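
The error bars and paired significance tests promised in the responses above could look roughly like the following sketch. It uses only the Python standard library and swaps in an exact paired sign-flip permutation test as a stand-in for the paired Wilcoxon test the authors mention (with SciPy available, `scipy.stats.wilcoxon` would be the closer counterpart). The function names and the PSNR values are made up for illustration and are not results from the paper.

```python
import itertools
import statistics


def mean_and_std(values):
    """Sample mean and sample standard deviation across seeds."""
    return statistics.mean(values), statistics.stdev(values)


def paired_sign_flip_p_value(scores_a, scores_b):
    """Two-sided exact sign-flip permutation test on paired differences.

    Under the null hypothesis that both methods perform equally, each paired
    difference is equally likely to carry either sign. All 2**n sign patterns
    are enumerated, so this is only practical for a small number of pairs.
    """
    if len(scores_a) != len(scores_b):
        raise ValueError("both methods need one score per seed")
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    observed = abs(sum(diffs))
    extreme = 0
    total = 0
    for signs in itertools.product((1, -1), repeat=len(diffs)):
        total += 1
        flipped = abs(sum(s * d for s, d in zip(signs, diffs)))
        if flipped >= observed - 1e-12:  # small tolerance for float rounding
            extreme += 1
    return extreme / total


# Hypothetical PSNR values (dB) over five seeds; NOT taken from the paper.
psnr_variational = [30.0, 31.0, 29.0, 30.5, 30.5]
psnr_generative = [29.0, 30.0, 28.5, 29.0, 29.5]

mean_var, std_var = mean_and_std(psnr_variational)
mean_gen, std_gen = mean_and_std(psnr_generative)
p_value = paired_sign_flip_p_value(psnr_variational, psnr_generative)

print(f"variational: {mean_var:.2f} +/- {std_var:.2f} dB")
print(f"generative:  {mean_gen:.2f} +/- {std_gen:.2f} dB")
print(f"two-sided p-value: {p_value:.4f}")
```

With only five seeds, the smallest two-sided p-value this test can return is 2/32, which illustrates why the number of seeds matters when claiming that a difference between methods is significant.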

Circularity Check

No circularity: empirical benchmark with direct numerical comparisons

The paper is a numerical benchmark study that evaluates stability properties of generative priors versus optimization-based methods through direct simulations on chosen test cases. No derivations, first-principles predictions, or fitted parameters are presented that reduce to inputs by construction. Conclusions rest on comparative empirical results for convergent regularization, OOD robustness, and operator/noise inaccuracies, with no self-citation chains or ansatzes invoked as load-bearing steps. The analysis is self-contained against external benchmarks.

