Towards an End-To-End System for Real-Time Gesture Recognition from Surface Vibrations

Pith reviewed 2026-05-12 03:30 UTC · model grok-4.3

The pith

A compact end-to-end pipeline using piezoelectric sensors and small 1D-CNNs turns ordinary desk vibrations into accurate gesture commands.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

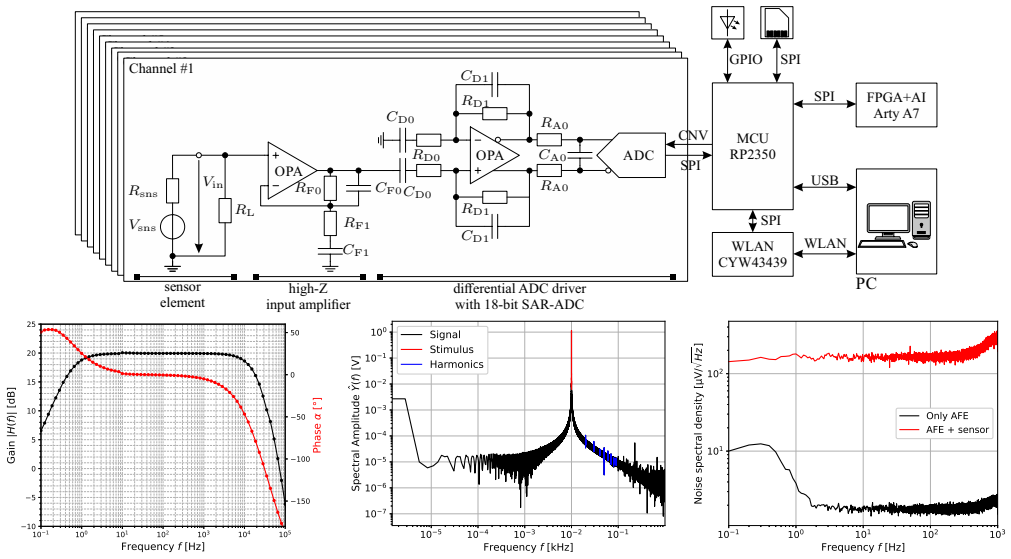

The authors present a sensor system and configurable pipeline that processes continuous vibration recordings into model-ready data and trains compact 1D-CNNs; after hyperparameter search they identify a specific preprocessing and model combination that delivers high gesture-recognition accuracy across data splits, with strong results in user-independent leave-one-subject-out validation on their 15-participant, six-gesture dataset.

What carries the argument

The argument rests on the configurable data-to-model pipeline, which chains variable signal-preprocessing steps with depthwise separable 1D-CNNs and carries the end-to-end flow from raw sensor streams to gesture predictions.
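To make the "compact" part of this concrete, the sketch below compares the parameter count of a standard 1D convolution with that of a depthwise separable one (a per-channel depthwise convolution followed by a 1x1 pointwise convolution). The layer sizes are hypothetical and chosen only for illustration; the paper's actual 8,722-parameter architecture is not described on this page, so nothing here should be read as its layer layout.

```python
def conv1d_params(c_in, c_out, kernel_size, bias=True):
    """Parameter count of a standard 1D convolution layer."""
    return c_in * c_out * kernel_size + (c_out if bias else 0)


def depthwise_separable_conv1d_params(c_in, c_out, kernel_size, bias=True):
    """Parameter count of a depthwise conv followed by a 1x1 pointwise conv."""
    # Depthwise: one kernel of length `kernel_size` per input channel.
    depthwise = c_in * kernel_size + (c_in if bias else 0)
    # Pointwise: a 1x1 convolution that mixes channels.
    pointwise = c_in * c_out + (c_out if bias else 0)
    return depthwise + pointwise


# Hypothetical layer sizes (assumption, not taken from the paper).
standard = conv1d_params(c_in=16, c_out=32, kernel_size=9)
separable = depthwise_separable_conv1d_params(c_in=16, c_out=32, kernel_size=9)
print(standard, separable)
```

For this illustrative layer the separable variant needs roughly a sixth to a seventh of the parameters, which is the kind of saving that makes very small models plausible on embedded hardware.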

If this is right

- Gesture commands could be added to existing furniture without visible sensors or design changes.

- The low parameter count of the winning model supports real-time inference on embedded hardware.

- Strong user-independent results indicate the learned features generalize beyond the training participants.

- The modular preprocessing framework allows systematic testing of alternative filters and windowing choices.

Where Pith is reading between the lines

- The same vibration-sensing approach might extend to other flat surfaces such as tables or counters if signal propagation remains comparable.

- Integration into smart-home platforms could let users control lights or appliances by tapping on any desk surface.

- Continuous streaming evaluation would be needed to confirm the pipeline handles overlapping or spontaneous gestures in daily life.

Load-bearing premise

The vibrations produced by the six gestures stay distinct and repeatable enough on a standard office desk that the collected dataset captures the variations seen in actual use.

What would settle it

Running the same pipeline on a fresh group of users or on desks made of different materials and observing whether leave-one-subject-out accuracy falls well below the levels reported in the paper.

Original abstract

Sensing surface vibrations promises unobtrusive interaction for smart home systems by enabling gesture recognition on existing everyday surfaces without disturbing living-space design. Existing approaches typically address only parts of the processing chain, such as sensing hardware or offline gesture recognition, rather than providing an end-to-end system from surface-mounted sensors to the evaluation of the prediction model. This paper presents a custom sensor system and a configurable data-to-model pipeline for gesture recognition on a standard office desk. Our hardware enables low-noise sensing of the vibrations using piezoelectric sensors. Building on a modular signal-processing framework, we model the full chain from continuous recordings through variable pre-processing to a model-ready dataset, and process the resulting data with compact depthwise separable 1D-CNNs. We conduct a joint search over pre-processing and model hyperparameters and identify a configuration with 8,722 parameters that uses band-pass filtering, fixed-length windows, and min-max normalization. On a self-recorded dataset with 15 participants performing six gestures, this configuration achieves high accuracies across different data splitting methods, including strong user-independent performance in a leave-one-subject-out cross-validation.
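The three preprocessing steps named in the abstract (band-pass filtering, fixed-length windows, min-max normalization) can be sketched as follows. This is a minimal illustration, not the authors' pipeline. The filter is a generic second-order band-pass in the widely used audio-EQ "cookbook" biquad form, and the sampling rate, cut-off frequencies, window length, and hop size are all assumed values. Sliding windows with a hop are likewise an assumption about how "fixed-length windows" are cut.

```python
import math


def bandpass_biquad(samples, fs, f_low, f_high):
    """Second-order band-pass filter (cookbook biquad, unity peak gain)."""
    f0 = math.sqrt(f_low * f_high)      # geometric centre frequency
    q = f0 / (f_high - f_low)           # quality factor from the bandwidth
    w0 = 2.0 * math.pi * f0 / fs
    alpha = math.sin(w0) / (2.0 * q)
    a0 = 1.0 + alpha
    b0, b2 = alpha / a0, -alpha / a0    # b1 is zero for this filter type
    a1, a2 = -2.0 * math.cos(w0) / a0, (1.0 - alpha) / a0
    out, x1, x2, y1, y2 = [], 0.0, 0.0, 0.0, 0.0
    for x in samples:
        y = b0 * x + b2 * x2 - a1 * y1 - a2 * y2
        out.append(y)
        x1, x2, y1, y2 = x, x1, y, y1   # shift the delay line
    return out


def fixed_length_windows(samples, length, hop):
    """Cut a recording into windows of `length` samples, `hop` samples apart."""
    return [samples[i:i + length]
            for i in range(0, len(samples) - length + 1, hop)]


def min_max_normalize(window):
    """Scale one window to the range [0, 1]; constant windows map to zeros."""
    lo, hi = min(window), max(window)
    span = hi - lo
    if span == 0.0:
        return [0.0 for _ in window]
    return [(v - lo) / span for v in window]


def preprocess(samples, fs, f_low, f_high, length, hop):
    """Band-pass filter, window, and normalize a continuous recording."""
    filtered = bandpass_biquad(samples, fs, f_low, f_high)
    return [min_max_normalize(w)
            for w in fixed_length_windows(filtered, length, hop)]


# Synthetic stand-in for a sensor stream: a DC offset plus a 50 Hz tone.
fs = 1000.0  # assumed sampling rate in Hz
stream = [0.5 + math.sin(2.0 * math.pi * 50.0 * n / fs) for n in range(1000)]
windows = preprocess(stream, fs, f_low=20.0, f_high=200.0, length=256, hop=128)
print(len(windows), len(windows[0]))
```

Normalizing each window separately, as done here, removes amplitude differences between windows; whether the paper normalizes per window or across the dataset is not stated on this page.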

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper presents a custom piezoelectric sensor hardware system for capturing surface vibrations on an office desk together with a modular end-to-end signal-processing pipeline that feeds into compact depthwise-separable 1D-CNN models. A joint hyperparameter search over preprocessing steps and model architecture identifies a final 8,722-parameter configuration using band-pass filtering, fixed-length windows, and min-max normalization. On a self-recorded dataset of 15 participants performing six gestures, this configuration is reported to deliver high accuracies under multiple data-splitting regimes, including strong user-independent results in leave-one-subject-out cross-validation.

Significance. If the reported generalization performance is shown to be free of selection bias, the work would provide a concrete demonstration of an unobtrusive, low-cost gesture interface that operates on unmodified everyday surfaces. The modular pipeline, emphasis on real-time constraints, and use of a very small CNN are positive features that could support deployment in smart-home settings. The significance is currently limited by the need to verify that the user-independent claim rests on properly nested validation.

Major comments (1)

- [Experimental evaluation / LOSO cross-validation description] The manuscript states that a joint search over preprocessing and model hyperparameters was performed to select the final configuration (band-pass filtering, fixed-length windows, min-max normalization, 8,722-parameter depthwise-separable 1D-CNN). It is not stated whether this search was repeated independently inside each left-out-subject fold of the leave-one-subject-out cross-validation. If the search used the full dataset or any data from the held-out participant, the reported LOSO accuracies are no longer an unbiased estimate of user-independent performance and the central claim of “strong user-independent performance” is undermined. This issue is load-bearing for the primary empirical result.

Minor comments (2)

- [Abstract] The abstract asserts “high accuracies” and “strong user-independent performance” without supplying concrete numerical values, confidence intervals, or baseline comparisons; readers must reach the results section to obtain any quantitative assessment.

- [Data collection / Dataset description] Reproducibility would benefit from explicit statements of the exact sensor placement protocol, gesture execution instructions given to participants, and the total number of samples per gesture per subject.

Simulated Author's Rebuttal

We thank the referee for their detailed and constructive review. The concern regarding proper nesting of the hyperparameter search within the leave-one-subject-out cross-validation is well-taken and directly impacts the strength of our user-independent performance claims. We address this point below.

Point-by-point responses

Referee: The manuscript states that a joint search over preprocessing and model hyperparameters was performed to select the final configuration (band-pass filtering, fixed-length windows, min-max normalization, 8,722-parameter depthwise-separable 1D-CNN). It is not stated whether this search was repeated independently inside each left-out-subject fold of the leave-one-subject-out cross-validation. If the search used the full dataset or any data from the held-out participant, the reported LOSO accuracies are no longer an unbiased estimate of user-independent performance and the central claim of “strong user-independent performance” is undermined. This issue is load-bearing for the primary empirical result.

Authors: We agree with the referee that the current description leaves the validation procedure ambiguous and that a non-nested search undermines the unbiased nature of the LOSO results. In the original experiments, the joint hyperparameter search over preprocessing steps and model architecture was performed once on the full dataset to identify the reported 8,722-parameter configuration; this fixed configuration was then evaluated in LOSO. We acknowledge this introduces potential selection bias. In the revised manuscript we will (1) explicitly state the original procedure, (2) implement and report a properly nested validation in which the entire hyperparameter search is repeated independently inside each LOSO training fold (using only the 14 subjects), and (3) update all accuracy figures, tables, and claims of “strong user-independent performance” to reflect the nested results. If the new numbers differ materially we will present both the original and revised figures with transparent discussion.

Revision: yes
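The nested procedure discussed in this exchange can be sketched as below, under loud assumptions: the data are a three-subject toy set, the "model" is a nearest-class-mean rule on a single feature, and the only hyperparameter is a choice between two hypothetical preprocessing options (`"raw"` and `"abs"`). None of these names or values come from the paper. The point is the structure: for each held-out subject, the configuration is selected using only the remaining subjects, and the held-out subject is touched once, for the final score.

```python
def fit(config, train):
    """Nearest-class-mean model on a 1-D feature; `config` picks the transform."""
    transform = abs if config == "abs" else (lambda v: v)
    sums, counts = {}, {}
    for samples in train.values():
        for value, label in samples:
            sums[label] = sums.get(label, 0.0) + transform(value)
            counts[label] = counts.get(label, 0) + 1
    means = {label: sums[label] / counts[label] for label in sums}
    # The returned model predicts the label whose class mean is closest.
    return lambda value: min(means, key=lambda lab: abs(means[lab] - transform(value)))


def accuracy(model, samples):
    """Fraction of (value, label) pairs the model classifies correctly."""
    return sum(model(v) == y for v, y in samples) / len(samples)


def nested_loso(data, configs):
    """Leave-one-subject-out evaluation with per-fold configuration search."""
    results = {}
    for held_out in data:
        outer_train = {s: d for s, d in data.items() if s != held_out}

        def inner_score(config):
            # Inner LOSO over the training subjects only.
            scores = []
            for val in outer_train:
                inner_train = {s: d for s, d in outer_train.items() if s != val}
                scores.append(accuracy(fit(config, inner_train), outer_train[val]))
            return sum(scores) / len(scores)

        best = max(configs, key=inner_score)
        model = fit(best, outer_train)          # refit on all training subjects
        results[held_out] = (best, accuracy(model, data[held_out]))
    return results


def toy_subject(scale):
    """Hypothetical subject: class 0 near +/-1, class 1 near +/-3, scaled."""
    return [(scale, 0), (-scale, 0), (3.0 * scale, 1), (-3.0 * scale, 1)]


data = {"A": toy_subject(1.0), "B": toy_subject(1.1), "C": toy_subject(0.9)}
results = nested_loso(data, ["raw", "abs"])
print(results)
```

With 15 participants, the outer loop would run 15 times and each inner search would see 14 subjects, which matches the authors' description. The cost is that the full search is repeated once per fold, and different folds may select different configurations.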

Circularity Check

No significant circularity; empirical results rest on held-out evaluation.

Full rationale

The paper describes a hardware sensor system, modular preprocessing pipeline, depthwise-separable 1D-CNN, and joint hyperparameter search, followed by accuracy reporting on a self-recorded 15-participant dataset under multiple splitting strategies including LOSO cross-validation. No equations, derivations, or fitted quantities are present that reduce any reported accuracy to a self-definitional or constructionally equivalent input. The hyperparameter search identifies one configuration whose performance is then measured on held-out subject folds; even if the search procedure itself is not nested inside every fold (a methodological concern), this does not constitute any of the enumerated circularity patterns such as self-definition, renaming a known result, or load-bearing self-citation. The central claim therefore remains an independent empirical measurement against external data splits rather than a tautological restatement of its own inputs.

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean: washburn_uniqueness_aczel (unclear)

  Relation between the paper passage and the cited Recognition theorem: unclear.

  Passage: “We conduct a joint search over pre-processing and model hyperparameters and identify a configuration with 8,722 parameters that uses band-pass filtering, fixed-length windows, and min-max normalization.”

- IndisputableMonolith/Foundation/RealityFromDistinction.lean: reality_from_one_distinction (unclear)

  Relation between the paper passage and the cited Recognition theorem: unclear.

  Passage: “On a self-recorded dataset with 15 participants performing six gestures this configuration achieves high accuracies across different data splitting methods, including strong user-independent performance in a leave-one-subject-out cross-validation.”

What do these tags mean?

- matches: The paper's claim is directly supported by a theorem in the formal canon.

- supports: The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.

- extends: The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.

- uses: The paper appears to rely on the theorem as machinery.

- contradicts: The paper's claim conflicts with a theorem or certificate in the canon.

- unclear: Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.
