Multifidelity Gaussian process regression for solving nonlinear partial differential equations

Pith reviewed 2026-05-12 03:45 UTC · model grok-4.3

The pith

A cokriging kernel-learning method extracts non-stationary kernels from low-fidelity simulations to build high-fidelity Gaussian process solvers for nonlinear PDEs.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

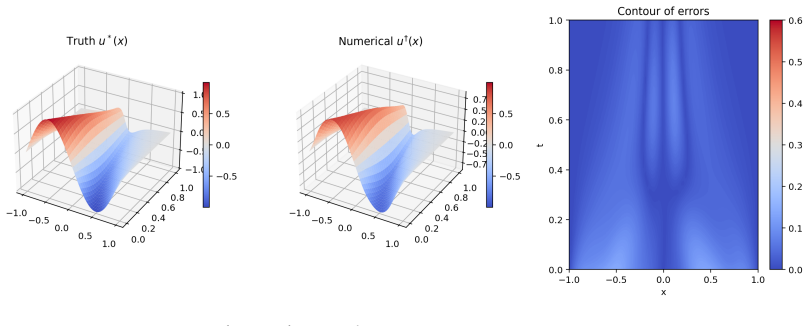

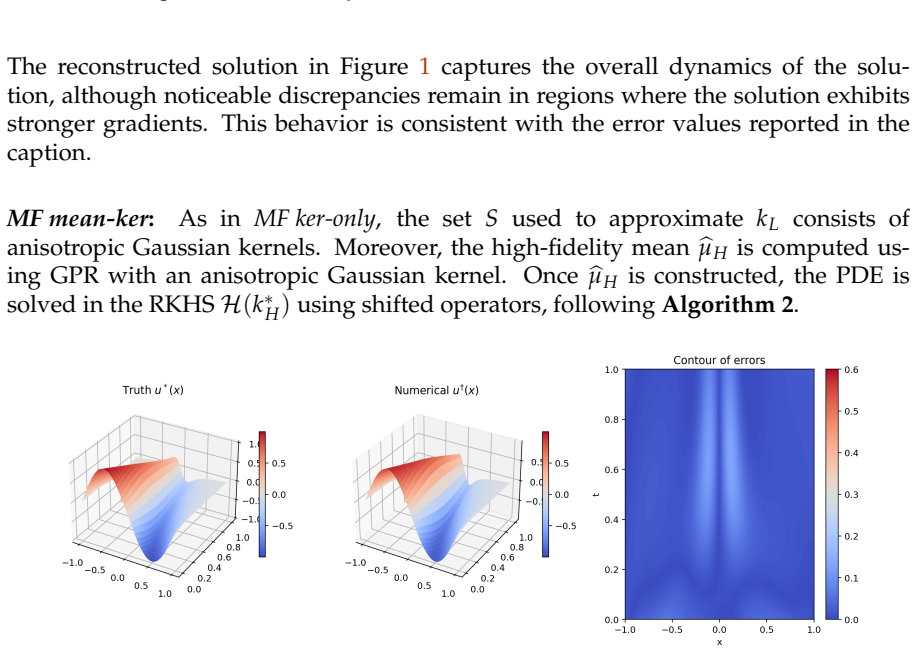

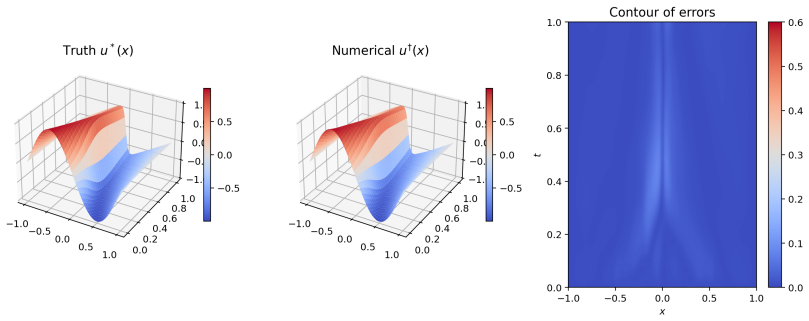

The authors claim that a two-step construction supplies the ingredients a Gaussian process needs to solve nonlinear partial differential equations. First, a differentiable non-stationary kernel is fitted to an empirical kernel computed from low-fidelity simulations. Second, a high-fidelity kernel and its mean are derived through the multifidelity cokriging framework. The resulting solver is demonstrated on Burgers' equation.

What carries the argument

The two-step cokriging procedure that fits a non-stationary kernel to a low-fidelity empirical kernel and transfers it to a high-fidelity kernel and mean for use inside a physics-informed Gaussian process.
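The first step of this procedure can be illustrated with a minimal sketch. It assumes a 1D grid, an ensemble of low-fidelity snapshots, a Gibbs kernel with a hypothetical linear length-scale function `l(x) = a + b * x`, and a plain grid search standing in for whatever optimizer the paper uses. All function names are illustrative and are not taken from the paper.

```python
import numpy as np

def empirical_kernel(samples):
    """Empirical mean and covariance of an ensemble of shape (n_runs, n_points)."""
    mean = samples.mean(axis=0)
    centered = samples - mean
    cov = centered.T @ centered / (samples.shape[0] - 1)
    return mean, cov

def gibbs_kernel_1d(x, a, b, sigma2=1.0):
    """1D Gibbs kernel with assumed length scale l(x) = a + b * x (must stay positive)."""
    ell = a + b * x
    ell_sq_sum = ell[:, None] ** 2 + ell[None, :] ** 2
    prefactor = np.sqrt(2.0 * ell[:, None] * ell[None, :] / ell_sq_sum)
    sq_dist = (x[:, None] - x[None, :]) ** 2
    return sigma2 * prefactor * np.exp(-sq_dist / ell_sq_sum)

def fit_gibbs_to_empirical(x, cov, a_grid, b_grid):
    """Pick (a, b) minimizing the Frobenius distance to the empirical kernel."""
    sigma2 = float(np.mean(np.diag(cov)))  # variance read off the empirical diagonal
    best = None
    for a in a_grid:
        for b in b_grid:
            if np.any(a + b * x <= 0.0):
                continue  # skip candidates with non-positive length scales
            err = np.linalg.norm(gibbs_kernel_1d(x, a, b, sigma2) - cov)
            if best is None or err < best[0]:
                best = (err, a, b)
    return best[1], best[2], sigma2

# Synthetic stand-in for low-fidelity simulations: draws from a known Gibbs kernel.
rng = np.random.default_rng(0)
x = np.linspace(0.0, 1.0, 20)
true_cov = gibbs_kernel_1d(x, a=0.1, b=0.2)
chol = np.linalg.cholesky(true_cov + 1e-6 * np.eye(x.size))  # jitter for stability
samples = rng.standard_normal((500, x.size)) @ chol.T

mean_lf, cov_lf = empirical_kernel(samples)
a_hat, b_hat, sigma2_hat = fit_gibbs_to_empirical(
    x, cov_lf, a_grid=[0.05, 0.1, 0.2], b_grid=[0.0, 0.2, 0.4]
)
print("fitted (a, b, sigma2):", a_hat, b_hat, sigma2_hat)
```

A gradient-based optimizer would be the natural replacement for the grid search once the kernel is differentiable in its hyperparameters, which is the property the paper requires of the fitted kernel.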

Load-bearing premise

Empirical kernels extracted from low-fidelity simulations contain transferable information that, when processed through cokriging, produces a high-fidelity kernel and mean suitable for accurate Gaussian process PDE solving.

What would settle it

If, on Burgers' equation and with an identical high-fidelity data budget, the multifidelity method does not achieve lower solution error than a single-fidelity Gaussian process, the central claim is falsified.
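One way such a comparison could be scored is sketched here. The relative discrete L2 error is an assumed metric (the page does not say which error measure the paper reports), and the arrays are synthetic placeholders, not results from the paper.

```python
import numpy as np

def relative_l2_error(u_pred, u_ref):
    """Relative discrete L2 error of a predicted solution against a reference."""
    u_pred = np.asarray(u_pred, dtype=float)
    u_ref = np.asarray(u_ref, dtype=float)
    return np.linalg.norm(u_pred - u_ref) / np.linalg.norm(u_ref)

def multifidelity_helps(u_multi, u_single, u_ref):
    """True if the multifidelity solution has strictly lower error than the baseline."""
    return relative_l2_error(u_multi, u_ref) < relative_l2_error(u_single, u_ref)

# Placeholder data only: a reference profile and two perturbed copies of it.
grid = np.linspace(0.0, 1.0, 50)
u_ref = np.sin(np.pi * grid)
u_multi = u_ref + 0.01 * np.cos(3.0 * grid)   # hypothetical multifidelity output
u_single = u_ref + 0.05 * np.cos(3.0 * grid)  # hypothetical single-fidelity output
print(multifidelity_helps(u_multi, u_single, u_ref))
```

For the test to be fair, both solvers would need the same high-fidelity collocation points and the same reference solution.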

Original abstract

Solving nonlinear partial differential equations (PDEs) using kernel methods offers a compelling alternative to traditional numerical solvers. However, the performance of these methods strongly depends on the choice of kernel. In this work, as the available information is inherently multifidelity, we propose a kernel learning approach based on cokriging, leveraging empirical information from multifidelity simulations. In the first step, we fit a differentiable non-stationary kernel to an empirical kernel obtained from low-fidelity simulations. In the second step, we derive a high-fidelity kernel with estimated hyperparameters, and construct a corresponding high-fidelity mean using the multifidelity framework. These components can then be used within a Gaussian process framework for solving PDEs. Finally, we demonstrate the performance of the proposed physics-informed method on the Burgers' equation.
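For orientation, the second step can be written in the standard autoregressive cokriging form of Kennedy and O'Hagan. This is a sketch under that assumption, not a transcription of the paper's equations. The symbols $u_L$, $u_H$, $\delta$, $\rho$, $m_L$, $m_\delta$, $k_L$, and $k_\delta$ are notation introduced here for illustration.

```latex
\begin{aligned}
u_H(x) &= \rho\, u_L(x) + \delta(x), \qquad \delta \ \text{independent of}\ u_L,\\
m_H(x) &= \rho\, m_L(x) + m_\delta(x),\\
k_H(x, x') &= \rho^{2}\, k_L(x, x') + k_\delta(x, x').
\end{aligned}
```

Under this model the low-fidelity kernel fitted in the first step enters the high-fidelity kernel scaled by $\rho^{2}$, while the discrepancy kernel $k_\delta$ accounts for structure the low-fidelity simulations miss.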

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes a two-step cokriging procedure for learning kernels in a multifidelity Gaussian process framework to solve nonlinear PDEs. Low-fidelity simulations are used to construct an empirical kernel to which a differentiable non-stationary kernel is fit; hyperparameters are then transferred to define a high-fidelity kernel and mean function that are inserted into a physics-informed GP solver. The approach is illustrated on Burgers' equation.
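The transfer from low to high fidelity summarized above can be sketched numerically. The sketch assumes an autoregressive cokriging model with a scalar scale factor `rho` and an additive discrepancy term, and it estimates `rho` by ordinary least squares on paired values, which is a simplification and may not be the estimator used in the paper. The names `estimate_rho`, `high_fidelity_kernel`, and `high_fidelity_mean` are hypothetical.

```python
import numpy as np

def estimate_rho(u_low, u_high):
    """Least-squares scale factor rho minimizing ||u_high - rho * u_low||."""
    u_low = np.asarray(u_low, dtype=float)
    u_high = np.asarray(u_high, dtype=float)
    return float(u_low @ u_high / (u_low @ u_low))

def high_fidelity_kernel(K_low, K_disc, rho):
    """Assumed cokriging combination: K_high = rho^2 * K_low + K_disc."""
    return rho ** 2 * K_low + K_disc

def high_fidelity_mean(m_low, m_disc, rho):
    """Assumed cokriging mean: m_high = rho * m_low + m_disc."""
    return rho * m_low + m_disc

# Synthetic paired data at shared points (placeholders, not from the paper).
x = np.linspace(0.0, 1.0, 5)
u_low = np.sin(np.pi * x) + 0.5
u_high = 1.5 * u_low
rho = estimate_rho(u_low, u_high)

# Placeholder kernel matrices: squared-exponential low-fidelity kernel
# and a small diagonal discrepancy kernel.
K_low = np.exp(-((x[:, None] - x[None, :]) ** 2) / (2.0 * 0.3 ** 2))
K_disc = 0.01 * np.eye(x.size)
K_high = high_fidelity_kernel(K_low, K_disc, rho)
m_high = high_fidelity_mean(u_low, np.zeros_like(x), rho)
print("rho:", rho)
```

Because both summands are positive semidefinite, the combined matrix stays a valid covariance for any real `rho`.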

Significance. If the empirical transfer from low- to high-fidelity kernels proves reliable, the method supplies a practical, data-driven route to kernel construction for physics-informed GPs when high-fidelity data are scarce. This could reduce reliance on hand-crafted kernels and improve accuracy for nonlinear PDEs, adding a useful heuristic tool to the scientific machine-learning literature.

minor comments (3)

- The abstract summarizes the procedure but omits any equations, error metrics, or quantitative results; adding a brief statement of the observed accuracy on Burgers' equation would improve readability.

- Notation for the empirical kernel, the fitted non-stationary kernel, and the derived high-fidelity mean should be introduced once and used consistently; cross-references to the relevant equations would help readers follow the two-step construction.

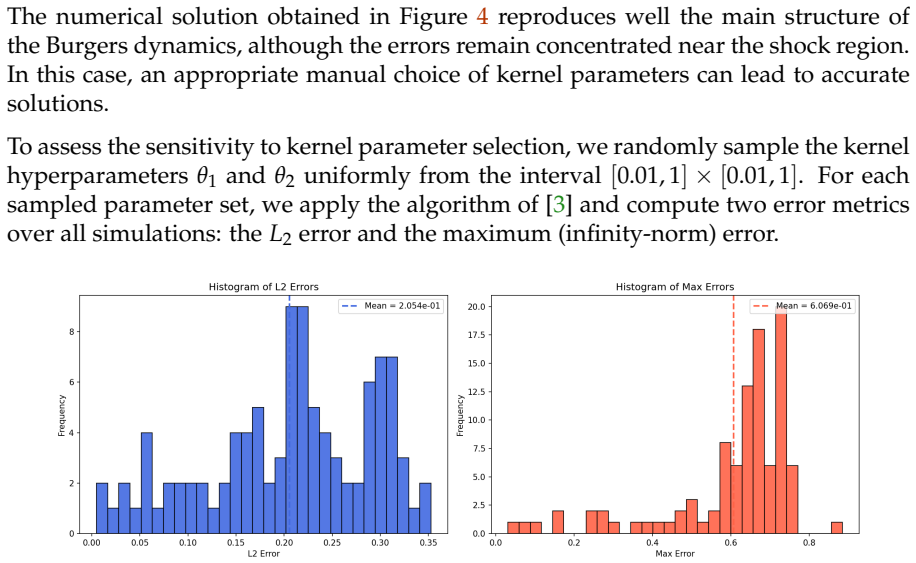

- The single numerical example on Burgers' equation is consistent with the stated procedure, but the manuscript would benefit from a short discussion of how sensitive the final GP solution is to the choice of low-fidelity resolution or the number of multifidelity samples.

Simulated Author's Rebuttal

We thank the referee for their careful summary of our work and for the positive recommendation of minor revision. The report raises no specific major comments or criticisms, so we have nothing to rebut point by point. We will incorporate any minor editorial suggestions in the revised manuscript.

Circularity Check

No significant circularity detected in derivation chain

full rationale

The paper describes an empirical two-step cokriging procedure: fitting a differentiable non-stationary kernel to an empirical kernel extracted from low-fidelity simulations, then deriving a high-fidelity kernel and mean via the multifidelity framework for subsequent use in a physics-informed GP PDE solver. This construction is presented as a heuristic that leverages external multifidelity data and standard GP components. It does not reduce any claimed prediction or result to its own fitted inputs by definition, nor does it rely on uniqueness theorems supported only by self-citation or on ansatzes smuggled in from prior work. The single numerical demonstration on Burgers' equation is consistent with the stated procedure without internal reduction. The central claim therefore remains independent of its inputs on the paper's own terms.

Axiom & Free-Parameter Ledger

free parameters (1)

- high-fidelity kernel hyperparameters

axioms (2)

- domain assumption: Gaussian processes with suitable kernels can solve nonlinear PDEs

- domain assumption: Low-fidelity simulation data yields an empirical kernel that is informative for high-fidelity modeling

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel · unclear
  Matched passage: "we fit a differentiable non-stationary kernel to an empirical kernel obtained from low-fidelity simulations... $k_H^{*} = \rho^{2} k_{\mathrm{opt}} + k_d$"

- IndisputableMonolith/Foundation/AlexanderDuality.lean · alexander_duality_circle_linking · unclear
  Matched passage: "non-stationary Gibbs kernel... $\Sigma_x = \mathrm{diag}(\ell_1(x), \ell_2(x))$"
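The non-stationary Gibbs kernel quoted in the second link can be sketched in two dimensions. The sketch assumes the Paciorek and Schervish form of a non-stationary squared-exponential kernel, with the diagonal matrix $\Sigma_x$ used directly as the local kernel matrix. The particular functions `l1` and `l2`, the variance `sigma2`, and whether the paper treats the diagonal entries as length scales or squared length scales are assumptions made here.

```python
import numpy as np

def sigma_diag(X):
    """Hypothetical positive functions (l1(x), l2(x)) forming the diagonal of Sigma_x."""
    l1 = 0.05 + 0.10 * X[:, 0] ** 2      # grows away from x1 = 0
    l2 = 0.20 + 0.05 * np.cos(X[:, 1])   # stays within [0.15, 0.25]
    return np.stack([l1, l2], axis=1)

def nonstationary_gibbs_kernel(X, Y, sigma2=1.0):
    """Non-stationary squared-exponential kernel with diagonal local matrices.

    k(x, y) = sigma2 * |S_x|^(1/4) |S_y|^(1/4) |(S_x + S_y)/2|^(-1/2)
              * exp(-(x - y)^T ((S_x + S_y)/2)^(-1) (x - y))
    """
    SX = sigma_diag(X)                               # (n, 2)
    SY = sigma_diag(Y)                               # (m, 2)
    avg = 0.5 * (SX[:, None, :] + SY[None, :, :])    # (n, m, 2) averaged diagonals
    diff = X[:, None, :] - Y[None, :, :]             # (n, m, 2) pairwise differences
    quad = np.sum(diff ** 2 / avg, axis=-1)          # quadratic form, diagonal case
    det_x = np.prod(SX, axis=1)
    det_y = np.prod(SY, axis=1)
    det_avg = np.prod(avg, axis=-1)
    prefactor = (det_x[:, None] * det_y[None, :]) ** 0.25 / np.sqrt(det_avg)
    return sigma2 * prefactor * np.exp(-quad)

# Evaluate on a few points in the unit square.
pts = np.array([[0.0, 0.0], [0.5, 0.2], [1.0, 1.0]])
K = nonstationary_gibbs_kernel(pts, pts)
print(K)
```

The determinant prefactor is what keeps the kernel positive semidefinite when the local matrices vary with position, and it equals one on the diagonal so that `sigma2` remains the pointwise variance.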

Reference graph

Works this paper leans on

-

[1]

A. Argyriou, C. A. Micchelli, and M. Pontil. When is there a representer theo- rem? Vector versus matrix regularizers.The Journal of Machine Learning Research, 10:2507–2529, 2009

work page 2009

-

[2]

S. C. Brenner and L. R. Scott.The mathematical theory of finite element methods. Springer, 2008

work page 2008

-

[3]

Y. Chen, B. Hosseini, H. Owhadi, and A. M. Stuart. Solving and learning nonlin- ear PDEs with Gaussian processes.Journal of Computational Physics, 447:110668, 2021

work page 2021

-

[4]

J. Cockayne, C. Oates, T. Sullivan, and M. Girolami. Bayesian probabilistic nu- merical methods.SIAM Review, 61(4):756–789, 2019

work page 2019

-

[5]

Cressie.Statistics for spatial data

N. Cressie.Statistics for spatial data. Wiley, 1993

work page 1993

-

[6]

N. Doumèche, F. Bach, G. Biau, and C. Boyer. Physics-informed kernel learning. Journal of Machine Learning Research, 26(124):1–39, 2025

work page 2025

-

[7]

F.-Z. El-Boukkouri, J. Garnier, and O. Roustant. General reproducing properties in RKHS with application to derivative and integral operators.arXiv preprint arXiv:2503.15922, 2025

-

[8]

L. C. Evans.Partial differential equations, volume 19. American mathematical soci- ety, 2022

work page 2022

-

[9]

G. E. Fasshauer.Meshfree approximation methods with Matlab (With Cd-rom), vol- ume 6. World Scientific Publishing Company, 2007. 24

work page 2007

-

[10]

A. I. J. Forrester, A. Sóbester, and A. J. Keane. Multi-fidelity optimization via surrogate modelling.Proceedings of the Royal Society A, 463(2088):3251–3269, 2007

work page 2088

-

[11]

M. N. Gibbs.Bayesian Gaussian processes for regression and classification. PhD thesis, University of Cambridge, UK, 1998

work page 1998

-

[12]

C. Grossmann, H.-G. Roos, and M. Stynes.Numerical Treatment of Partial Differen- tial Equations: translated and revised by Martin Stynes. Springer, 2007

work page 2007

-

[13]

E. Hopf. The partial differential equationu t +uu x =µu xx.Communications on Pure and Applied Mathematics, 3(3):201–230, 1950

work page 1950

-

[14]

G. E. Karniadakis, I. G. Kevrekidis, L. Lu, P . Perdikaris, S. Wang, and L. Yang. Physics-informed machine learning.Nature Reviews Physics, 3(6):422–440, 2021

work page 2021

-

[15]

M. C. Kennedy and A. O’Hagan. Predicting the output from a complex computer code when fast approximations are available.Biometrika, 87(1):1–13, 2000

work page 2000

-

[16]

Le Gratiet.Multi-fidelity Gaussian process regression for computer experiments

L. Le Gratiet.Multi-fidelity Gaussian process regression for computer experiments. PhD thesis, Université Paris-Diderot, 2013

work page 2013

- [17]

-

[18]

C. J. Paciorek and M. J. Schervish. Spatial modelling using a new class of nonsta- tionary covariance functions.Environmetrics: The official journal of the International Environmetrics Society, 17(5):483–506, 2006

work page 2006

-

[19]

B. Peherstorfer, K. Willcox, and M. Gunzburger. Survey of multifidelity methods in uncertainty propagation, inference, and optimization.SIAM Review, 60(3):550– 591, 2018

work page 2018

- [20]

-

[21]

A. Quarteroni and A. Valli.Numerical approximation of partial differential equations. Springer, 1994

work page 1994

- [22]

-

[23]

M. Seydao˘ glu, U. Erdo˘ gan, and T. Özi¸ s. Numerical solution of Burgers’ equation with high order splitting methods.Journal of Computational and Applied Mathemat- ics, 291:410–421, 2016

work page 2016

-

[24]

M. L. Stein.Interpolation of spatial data. Springer, 1999

work page 1999

-

[25]

A. M. Stuart. Inverse problems: A Bayesian perspective.Acta Numerica, 19:451– 559, 2010

work page 2010

-

[26]

Z. Wang, W. Xing, R. Kirby, and S. Zhe. Physics informed deep kernel learning. InInternational conference on artificial intelligence and statistics, pages 1206–1218. PMLR, 2022. 25

work page 2022

-

[27]

Wendland.Scattered Data Approximation

H. Wendland.Scattered Data Approximation. Cambridge University Press, 2004

work page 2004

-

[28]

C. Williams and C. Rasmussen. Gaussian Processes for Machine Learning, MIT Press.Cambridge, MA, 2006

work page 2006

- [29]

-

[30]

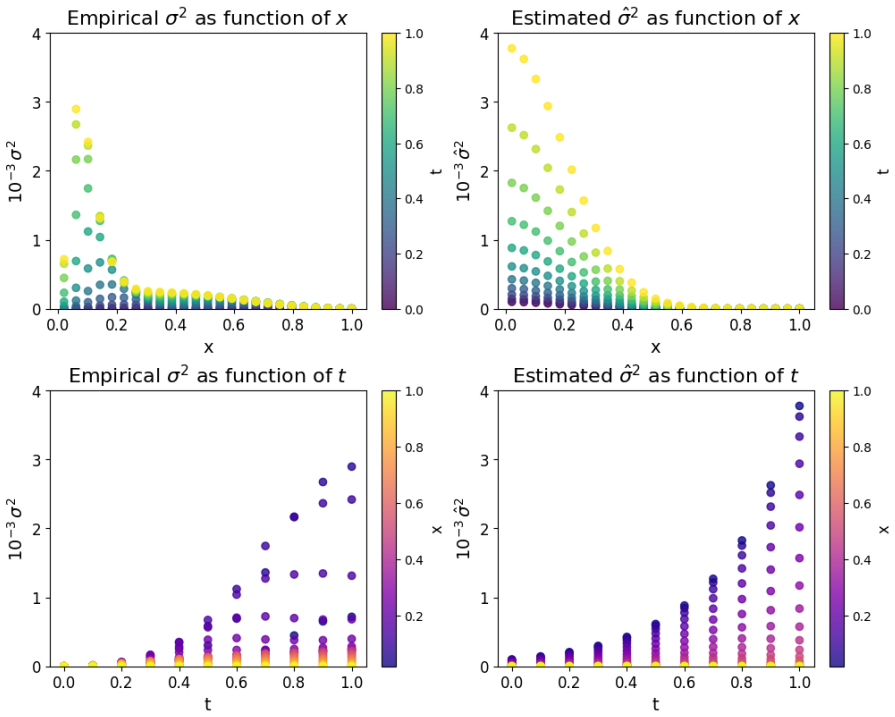

X. Yang, X. Zhu, and J. Li. When bifidelity meets cokriging: An efficient physics- informed multifidelity method.SIAM Journal on Scientific Computing, 42(1):A220– A249, 2020. A Additional numerical results for the Burgers’ equa- tion In this appendix, we report additional numerical results obtained for the Burgers’ equation in the multifidelity setting. A...

work page 2020
