Indirect Comparisons For Health Technology Assessment: A Practical Methodological Guide And Tips With Insights From The French Transparency Commission

Pith reviewed 2026-05-12 04:29 UTC · model grok-4.3

The pith

Indirect treatment comparisons yield reliable results for health technology assessments when their methods align with the strength of available evidence and the validity of their assumptions.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The central claim is that robust and reliable indirect treatment comparisons require methods that are consistent with the validity of their assumptions and the strength of the available evidence. This paper provides a practical methodological guide and tips drawn from expert insights and reviews of French Transparency Commission opinions to help researchers and assessors make appropriate choices in network meta-analyses, population-adjusted comparisons, and comparisons involving non-randomized studies.

What carries the argument

Expert-developed practical recommendations informed by reviews of HTA guidelines and HAS opinions, focusing on anticipation of needs, assessment of similarity and transitivity, network adaptation, and effective sample size reporting.
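To make the role of similarity and transitivity concrete, here is a minimal sketch of an anchored (Bucher-type) indirect comparison of A versus C through a common comparator B. This is a standard textbook technique, not a method taken from the paper, and the function name `bucher_indirect` and all input values are hypothetical. The estimate is only meaningful if the A-vs-B and C-vs-B trials are similar enough in their effect modifiers, which is exactly the assumption the guidance asks assessors to check.

```python
import math


def bucher_indirect(d_ab, se_ab, d_cb, se_cb, z=1.96):
    """Anchored indirect estimate of A vs C via common comparator B.

    d_ab, d_cb: relative effects of A vs B and C vs B on an additive scale
                (for example a log hazard ratio), with standard errors.
    Returns the point estimate, its standard error, and a normal-theory CI.
    """
    d_ac = d_ab - d_cb                        # the effects versus B cancel out
    se_ac = math.sqrt(se_ab**2 + se_cb**2)    # variances of independent trials add
    ci = (d_ac - z * se_ac, d_ac + z * se_ac)
    return d_ac, se_ac, ci


# Hypothetical inputs: HR 0.70 for A vs B, HR 0.85 for C vs B.
d_ac, se_ac, ci = bucher_indirect(math.log(0.70), 0.10, math.log(0.85), 0.12)
print(f"HR A vs C: {math.exp(d_ac):.2f} "
      f"(95% CI {math.exp(ci[0]):.2f} to {math.exp(ci[1]):.2f})")
```

Because the two variances add, the indirect estimate is always less precise than either of the direct comparisons it is built from.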

If this is right

- Early planning for indirect comparisons during trial design helps address potential confounding factors.

- Adapting the evidence network structure to the specific decision context improves relevance of network meta-analysis results.

- Careful interpretation of effective sample size in population-adjusted comparisons prevents overconfidence in adjusted estimates.

- Comparisons between single-arm studies and external control arms may be appropriate when randomized evidence is lacking, with the applicable scenario depending on whether a subsequent randomized study is feasible.
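The effective sample size point in the list above can be illustrated with a short sketch. It assumes the commonly used Kish approximation, ESS = (sum of weights)^2 / (sum of squared weights), which this page does not itself state. The function name and the weight vectors are hypothetical and only show how a few extreme weights shrink the ESS.

```python
def effective_sample_size(weights):
    """Kish approximation to the effective sample size of a weighted sample."""
    total = sum(weights)
    sum_sq = sum(w * w for w in weights)
    if sum_sq == 0:
        raise ValueError("at least one weight must be non-zero")
    return total * total / sum_sq


# Hypothetical weights for 100 patients.
equal_weights = [1.0] * 100                 # no reweighting needed
skewed_weights = [10.0] * 5 + [0.1] * 95    # five patients dominate the match

ess_equal = effective_sample_size(equal_weights)
ess_skewed = effective_sample_size(skewed_weights)
print(f"ESS with equal weights:  {ess_equal:.1f}")
print(f"ESS with skewed weights: {ess_skewed:.1f}")
```

In the skewed case the adjusted estimate rests on roughly seven patients' worth of information out of 100 enrolled, which is why a reported ESS far below the original sample size signals poor population overlap.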

Where Pith is reading between the lines

- These recommendations might reduce variability in how different countries assess the same indirect evidence.

- Testing the tips in simulated datasets could reveal which ones most improve decision accuracy.

- Combining the guidance with emerging real-world evidence standards could further strengthen indirect comparisons in future assessments.

Load-bearing premise

The assumption that a panel of experts reviewing prior guidelines and authority opinions can generate practical tips that are generalizable and effective without direct empirical testing of those tips.

What would settle it

Observing that health technology assessment decisions based on indirect comparisons following this guidance lead to different outcomes than those not following it, or that recommended methods produce biased estimates in validation studies using known true effects.
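A validation study with known true effects could, in its simplest form, look like the following sketch. It is an illustrative toy model, not a design proposed by the paper: every parameter value is hypothetical, and the linear effect-modifier model is an assumption. It shows how an unadjusted anchored comparison is biased when an effect modifier is distributed differently across the two trials.

```python
def trial_effect(delta, gamma, prevalence):
    """Average relative effect versus B in a trial population.

    delta: effect in patients without the modifier.
    gamma: additional effect in patients with the modifier.
    prevalence: share of trial patients who carry the modifier.
    """
    return delta + gamma * prevalence


# Hypothetical true parameters (additive scale, for example log hazard ratios).
delta_a, gamma_a = -0.50, -0.30   # A works better in patients with the modifier
delta_c, gamma_c = -0.40, 0.00    # C is unaffected by the modifier
p_ab, p_cb = 0.80, 0.30           # modifier prevalence differs between trials

# Unadjusted anchored comparison uses each trial's own population.
indirect_ac = (trial_effect(delta_a, gamma_a, p_ab)
               - trial_effect(delta_c, gamma_c, p_cb))

# True A vs C effect if both were evaluated in the C-vs-B trial population.
true_ac = (trial_effect(delta_a, gamma_a, p_cb)
           - trial_effect(delta_c, gamma_c, p_cb))

bias = indirect_ac - true_ac      # equals gamma_a * (p_ab - p_cb)
print(f"indirect: {indirect_ac:.2f}, true: {true_ac:.2f}, bias: {bias:.2f}")
```

Under this model the bias vanishes only when the modifier is balanced across trials or has no effect on A, which is the transitivity condition in miniature.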

Original abstract

Context: Indirect treatment comparisons (ITC) are essential when direct head-to-head evidence is unavailable. Their reliability depends on rigorous methodological choices and careful assessment of underlying assumptions. Appropriate methodological choices can help address challenges such as cross-country variations in treatment practices, ethical constraints, and evolving treatment landscapes during trial conduct. This opinion and perspective paper provides practical guidance to strengthen the quality, robustness and accuracy of ITCs in the context of health technology assessment (HTA) in France.

Methods: A panel of experts in ITCs and French market access environment developed the present strategic guidance, informed by previous work reviewing HTA methodological guidelines and complemented by a systematic review of Transparency Committee opinions from the French National Authority for Health (HAS).

Results: Key considerations include early anticipation of ITCs, justification of potential confounding factors, and rigorous assessment of similarity and transitivity in randomized trial-based comparisons. In network meta-analysis, the structure of the evidence network should be adapted to the specific decision context. Population-Adjusted Indirect Comparisons require careful reporting and interpretation of the effective sample size. When evidence relies on non-randomized clinical trials, comparisons between single-arm studies and external control arms may be appropriate under different scenarios, depending on the feasibility of conducting subsequent randomized studies.

Conclusions: Robust and reliable ITCs require methods consistent with the validity of their assumptions and the strength of the available evidence. This practical guidance supports the development of rigorous ITCs to inform decision-making in complex medical contexts where direct comparisons are not feasible.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript is an opinion and perspective paper that provides practical methodological guidance for indirect treatment comparisons (ITCs) in health technology assessment (HTA), focusing on the French context. It is based on expert panel insights informed by a review of HTA guidelines and a systematic review of opinions from the French National Authority for Health (HAS) Transparency Committee. The paper claims that robust and reliable ITCs require methods consistent with the validity of their assumptions and the strength of the available evidence, offering tips on early anticipation, confounding justification, similarity and transitivity checks, network adaptation in NMA, ESS reporting, and scenarios for single-arm versus external control comparisons.

Significance. This guidance is significant for improving the quality of ITCs in HTA submissions where direct evidence is lacking. The synthesis of expert consensus and regulatory opinions offers context-specific advice that can help address challenges like cross-country variations and ethical constraints. Credit is due for the systematic review of HAS opinions and the emphasis on aligning methods with assumption validity, which supports better-informed decision-making. As an untested set of recommendations, its value lies in its practicality rather than proven efficacy, but it serves as a useful reference for practitioners.

Simulated Author's Rebuttal

We thank the referee for their positive and constructive review of our manuscript. We appreciate the recognition of its practical value as an opinion and perspective piece synthesizing expert insights and HAS Transparency Committee opinions to guide robust ITCs in French HTA submissions. The recommendation to accept is encouraging, and we have no major comments to address point by point.

Circularity Check

No significant circularity

The paper is explicitly an opinion and perspective piece that synthesizes expert-panel insights from prior HTA guidelines and a systematic review of HAS Transparency Committee opinions. Its central claim is the general principle that robust ITCs require methodological choices consistent with assumption validity and evidence strength; the body supplies practical tips as expert advice rather than as empirically tested interventions or derivations. No internal equations, fitted parameters, predictions, self-definitional loops, or load-bearing self-citations appear in the argument structure. The guidance draws on external sources without reducing any result to its own inputs by construction.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption: Expert panel review of HTA guidelines and HAS opinions yields reliable practical guidance for ITCs

Lean theorems connected to this paper

- IndisputableMonolith/Foundation/AbsoluteFloorClosure.lean · reality_from_one_distinction · unclear · "Robust and reliable ITCs require methods consistent with the validity of their assumptions and the strength of the available evidence."

- IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel · unclear · "Key considerations include early anticipation of ITCs, justification of potential confounding factors, and rigorous assessment of similarity and transitivity"

Reference graph

Works this paper leans on

-

[1]

FDA. Considerations for the Design and Conduct of Externally Controlled Trials for Drug and Biological Products [Internet]. 2023. Available from: https://www.fda.gov/regulatory - information/search-fda-guidance-documents/considerations-design-and-conduct-externally- controlled-trials-drug-and-biological-products

work page 2023

-

[2]

Hoaglin DC, Hawkins N, Jansen JP, Scott DA, Itzler R, Cappelleri JC, et al. Conducting indirect- treatment-comparison and network -meta-analysis studies: report of the ISPOR Task Force on Indirect Treatment Comparisons Good Research Practices: part 2. Value Health. 2011 Jun;14(4):429–37. doi:10.1016/j.jval.2011.01.011 PubMed PMID: 21669367

-

[3]

Enriching single -arm clinical trials with external controls: possibilities and pitfalls

Lambert J, Lengliné E, Porcher R, Thiébaut R, Zohar S, Chevret S. Enriching single -arm clinical trials with external controls: possibilities and pitfalls. Blood Advances. 2022 Dec 19;bloodadvances.2022009167. doi:10.1182/bloodadvances.2022009167

-

[4]

Are Target Trial Emulations the Gold Standard for Observational Studies? Epidemiology

Pearce N, Vandenbroucke JP. Are Target Trial Emulations the Gold Standard for Observational Studies? Epidemiology. 2023 Sep;34(5):614. doi:10.1097/EDE.0000000000001636

-

[5]

Rapid access to innovative medicinal products while ensuring relevant health technology assessment

Vanier A, Fernandez J, Kelley S, Alter L, Semenzato P, Alberti C, et al. Rapid access to innovative medicinal products while ensuring relevant health technology assessment. Position of the French National Authority for Health. BMJ EBM. 2023 Feb 14;bmjebm -2022-112091. doi:10.1136/bmjebm-2022-112091

-

[6]

real -world evidence framework [Internet]

NICE. real -world evidence framework [Internet]. 2022 Jun 23. Available from: www.nice.org.uk/corporate/ecd9

work page 2022

-

[7]

Real-World Database Studies in Oncology: A Call for Standards

Ramsey SD, Onar-Thomas A, Wheeler SB. Real-World Database Studies in Oncology: A Call for Standards. JCO. 2024 Mar 20;42(9):977–80. doi:10.1200/JCO.23.02399

-

[8]

Monnereau M, Jarne A, Benoist A, Fradet C, Perol M, Filleron T, et al. Methodological Advances and Challenges in Indirect Treatment Comparisons: A Review of International Guidelines and HAS TC Case Studies [Internet]. arXiv; 2025 [cited 2025 Jun 16]. Available from: http://arxiv.org/abs/2506.11587 doi:10.48550/arXiv.2506.11587

-

[9]

Twenty years of network meta-analysis: Continuing controversies and recent developments

Ades AE, Welton NJ, Dias S, Phillippo DM, Caldwell DM. Twenty years of network meta-analysis: Continuing controversies and recent developments. Research Synthesis Methods. 2024;15(5):702–

work page 2024

-

[10]

doi:10.1002/jrsm.1700

-

[11]

Practical Guideline for Quantitative Evidence Synthesis: Direct and Indirect Comparisons

HTA CG. Practical Guideline for Quantitative Evidence Synthesis: Direct and Indirect Comparisons. 2024

work page 2024

-

[12]

Beaver JA, Pazdur R. “Dangling” Accelerated Approvals in Oncology. N Engl J Med. 2021 May 6;384(18):e68. doi:10.1056/NEJMp2104846 PubMed PMID: 33882220

-

[13]

Methodological Guideline for Quantitative Evidence Synthesis: Direct and Indirect Comparisons

HTA CG. Methodological Guideline for Quantitative Evidence Synthesis: Direct and Indirect Comparisons. 2024

work page 2024

-

[14]

EUnetHTA. EUnetHTA 21 - Individual Practical Guideline Document D4.3.1: DIRECT AND INDIRECT COMPARISONS [Internet]. EUnetHTA; 2022 Feb. Report No. Available from: https://www.eunethta.eu/wp-content/uploads/2022/12/EUnetHTA-21-D4.3.1-Direct-and-indirect- comparisons-v1.0.pdf

work page 2022

-

[15]

Research C for DE and. Real -World Evidence: Considerations Regarding Non -Interventional Studies for Drug and Biological Products [Internet]. FDA; 2024 [cited 2024 Jul 23]. Available from: https://www.fda.gov/regulatory-information/search-fda-guidance-documents/real-world-evidence- considerations-regarding-non-interventional-studies-drug-and-biological-products

work page 2024

-

[16]

Haute Autorité de Santé [Internet]. [cited 2025 Apr 11]. KYMRIAH (tisagenlecleucel) - LDGCB. Available from: https://www.has-sante.fr/jcms/p_3262259/fr/kymriah-tisagenlecleucel-ldgcb

work page 2025

-

[17]

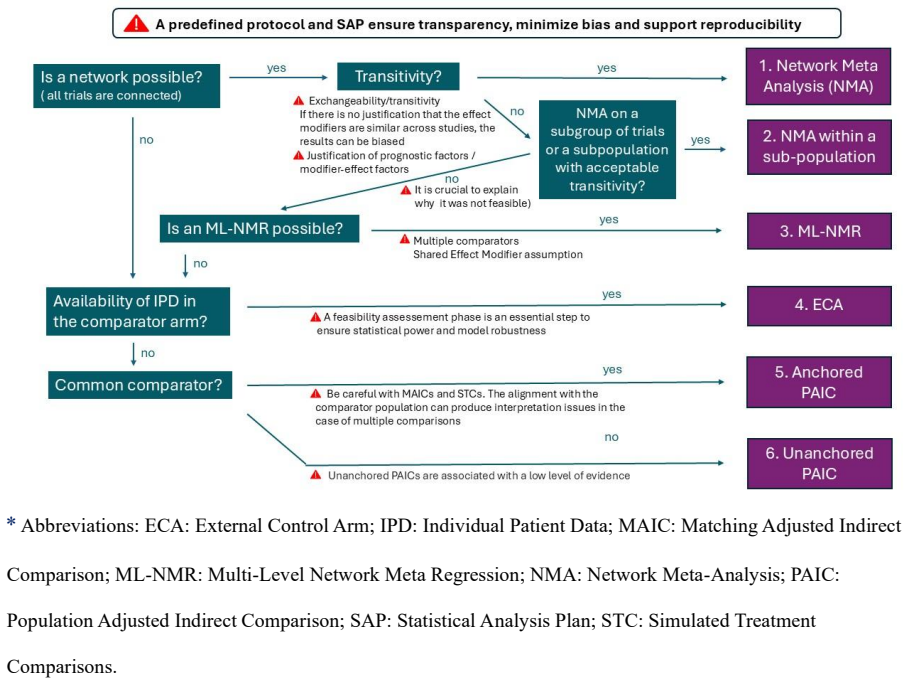

Haute Autorité de Santé [Internet]. [cited 2025 Apr 11]. YESCARTA (axicabtagène ciloleucel). Available from: https://www.has-sante.fr/jcms/p_3262244/fr/yescarta-axicabtagene-ciloleucel Figures 9 * Abbreviations: ECA: External Control Arm; IPD: Individual Patient Data; MAIC: Matching Adjusted Indirect Comparison; ML-NMR: Multi-Level Network Meta Regression...

work page 2025
