xApp Empowered Resource Management for Non-Terrestrial Users in 5G O-RAN Networks

Pith reviewed 2026-05-12 04:02 UTC · model grok-4.3

The pith

A DDQN xApp in O-RAN proactively optimizes UAV handovers, cutting handover frequency by up to 54.6 percent relative to greedy approaches while keeping outage probability negligible.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

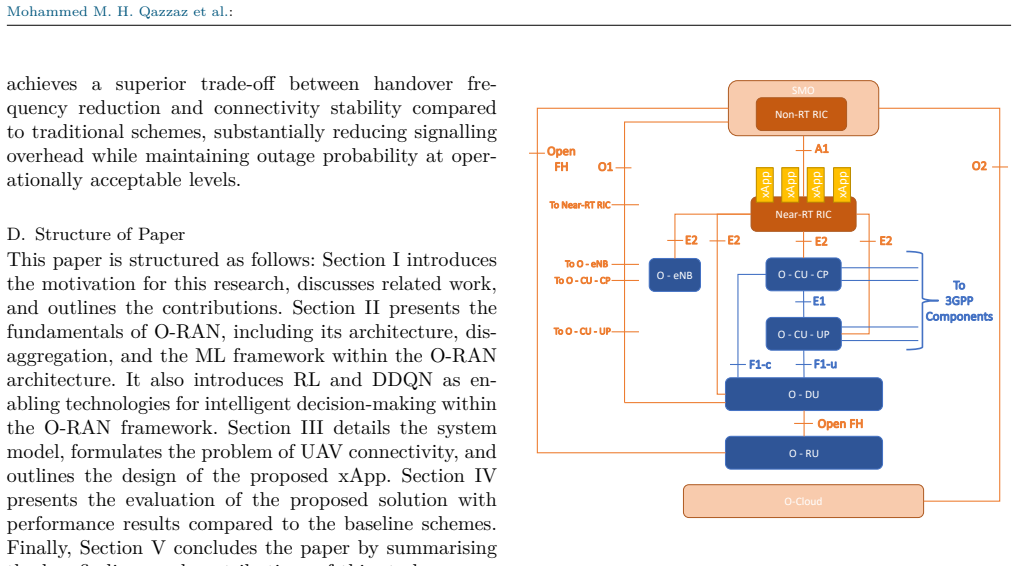

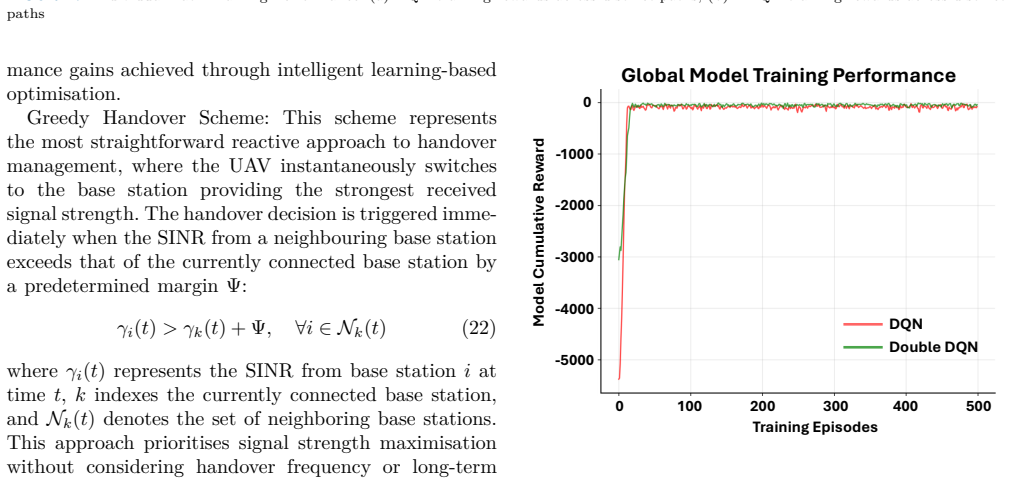

This paper introduces a proactive Unmanned Aerial Vehicle (UAV) mobility management xApp for Open Radio Access Network (O-RAN) Near Real-Time Radio Intelligent Controller (Near-RT RIC) environments, employing Double Deep Q-Network (DDQN) reinforcement learning (RL) enhanced with transfer learning to optimise handover decisions for UAVs operating along predetermined flight trajectories. Unlike reactive approaches that respond to signal degradation, the proposed framework anticipates network conditions and minimises both outage probability and handover frequency through predictive optimisation. The system leverages centralised weight averaging to consolidate knowledge from multiple flight scenarios into a global model capable of generalising to previously unseen operational environments without extensive retraining.

What carries the argument

DDQN reinforcement learning agent with transfer learning and centralized weight averaging, running as an xApp in the Near-RT RIC to predict and optimize UAV handover decisions.
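As a rough illustration of the "double" part of DDQN, the sketch below computes the bootstrap target for a single transition: the online network chooses the next action and the target network scores it. This is the standard Double DQN rule, not code from the paper; the function name `ddqn_target`, the reward value, and the three-cell example are hypothetical.

```python
from typing import Sequence


def ddqn_target(
    reward: float,
    next_q_online: Sequence[float],
    next_q_target: Sequence[float],
    gamma: float = 0.99,
    done: bool = False,
) -> float:
    """Double DQN bootstrap target for one transition.

    The online network selects the next action (argmax); the target
    network supplies the value of that selected action.
    """
    if done:
        # Terminal transition: nothing to bootstrap from.
        return reward
    best_action = max(range(len(next_q_online)), key=lambda a: next_q_online[a])
    return reward + gamma * next_q_target[best_action]


# Hypothetical example: three candidate cells (actions) at the next waypoint.
q_online = [1.0, 2.5, 2.0]  # online network prefers cell 1
q_target = [1.2, 1.8, 3.0]  # target network alone would prefer cell 2
y = ddqn_target(reward=-0.1, next_q_online=q_online, next_q_target=q_target, gamma=0.9)
print(round(y, 3))  # -0.1 + 0.9 * 1.8 = 1.52
```

Decoupling selection from evaluation in this way is what limits the over-estimation that a single-network max would introduce.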

Load-bearing premise

UAVs follow predetermined flight trajectories and the learned model generalizes to previously unseen operational environments without extensive retraining.

What would settle it

Field tests in which UAVs deviate from trained trajectories or enter new environments: the claim fails if outage probability then rises above negligible levels or the handover reduction disappears.

Original abstract

This paper introduces a proactive Unmanned Aerial Vehicle (UAV) mobility management xApp for Open Radio Access Network (O-RAN) Near Real-Time Radio Intelligent Controller (Near-RT RIC) environments, employing Double Deep Q-Network (DDQN) reinforcement learning (RL) enhanced with transfer learning to optimise handover decisions for UAVs operating along predetermined flight trajectories. Unlike reactive approaches that respond to signal degradation, the proposed framework anticipates network conditions and minimises both outage probability and handover frequency through predictive optimisation. The system leverages centralised weight averaging to consolidate knowledge from multiple flight scenarios into a global model capable of generalising to previously unseen operational environments without extensive retraining. A comprehensive evaluation demonstrates that the proposed framework achieves a favourable trade-off between handover frequency and connectivity reliability, reducing handover events by up to 54.6% compared to greedy approaches while maintaining outage probability at practically negligible levels. The results validate the effectiveness of intelligent learning-based approaches for UAV mobility management in next-generation O-RAN architectures, thereby contributing to seamless integration of aerial user equipment into cellular networks.
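To make the headline metric concrete, here is a minimal sketch of how a relative handover reduction could be computed from two serving-cell sequences along the same trajectory. The helper names and the cell-ID sequences are hypothetical illustrations, not data or code from the paper.

```python
from typing import Sequence


def count_handovers(serving_cells: Sequence[int]) -> int:
    """Count changes of serving cell between consecutive time steps."""
    return sum(1 for prev, cur in zip(serving_cells, serving_cells[1:]) if prev != cur)


def handover_reduction_pct(baseline: Sequence[int], proposed: Sequence[int]) -> float:
    """Relative reduction in handover count versus a baseline, in percent."""
    base = count_handovers(baseline)
    if base == 0:
        raise ValueError("baseline has no handovers; reduction is undefined")
    return 100.0 * (base - count_handovers(proposed)) / base


# Hypothetical serving-cell IDs per time step (not data from the paper).
greedy = [1, 2, 1, 2, 3, 3, 4, 3, 4, 4]   # 7 handovers, including ping-pong
learned = [1, 1, 1, 2, 2, 3, 3, 4, 4, 4]  # 3 handovers
print(round(handover_reduction_pct(greedy, learned), 1))  # 57.1
```

A reduction figure of this kind is only meaningful alongside the outage probability measured on the same runs, since holding on to a cell for longer trades handovers against link quality.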

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes a proactive UAV mobility management xApp for O-RAN Near-RT RIC that uses Double Deep Q-Network (DDQN) reinforcement learning augmented by transfer learning and centralized weight averaging across multiple predetermined flight trajectories. The central claim is that this yields a global policy capable of generalizing to unseen environments without retraining, delivering up to 54.6% fewer handover events than greedy baselines while keeping outage probability negligible.

Significance. If the generalization and performance claims hold under broader conditions, the work would offer a practical contribution to integrating aerial users into 5G O-RAN by shifting from reactive to predictive handover control. The use of weight averaging for knowledge consolidation is a positive technical choice that could reduce retraining overhead in deployed xApps.
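The weight-averaging step can be sketched as a uniform, element-wise mean over per-scenario parameters, in the spirit of federated averaging. This is an assumption about the mechanism, since the summary does not say whether scenarios are weighted equally; the `Weights` type, the layer names, and the numbers are all hypothetical.

```python
from typing import Dict, List

# Hypothetical representation: layer name -> flattened parameter list.
Weights = Dict[str, List[float]]


def average_weights(models: List[Weights]) -> Weights:
    """Uniform element-wise average of per-scenario model weights."""
    if not models:
        raise ValueError("need at least one model")
    keys = models[0].keys()
    for m in models:
        if m.keys() != keys:
            raise ValueError("all models must share the same layer names")
        for name in keys:
            if len(m[name]) != len(models[0][name]):
                raise ValueError(f"layer {name!r} has mismatched sizes")
    n = len(models)
    return {
        name: [sum(vals) / n for vals in zip(*(m[name] for m in models))]
        for name in keys
    }


# Two hypothetical agents trained on different flight scenarios.
scenario_a = {"dense.w": [0.25, -0.5], "dense.b": [0.0]}
scenario_b = {"dense.w": [0.75, 0.0], "dense.b": [1.0]}
global_model = average_weights([scenario_a, scenario_b])
print(global_model)  # {'dense.w': [0.5, -0.25], 'dense.b': [0.5]}
```

Averaging only makes sense when every agent shares one architecture, which is why the sketch rejects mismatched layer names or sizes.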

major comments (3)

- [Abstract / Evaluation] Abstract and evaluation description: the headline result of a 54.6% handover reduction with negligible outage is presented without any reported simulation parameters (UAV speeds, altitudes, channel models, number of runs, or statistical significance tests), baseline implementation details, or sensitivity analysis. This directly undermines the ability to assess whether the reported trade-off is robust or reproducible.

- [Abstract] Abstract: the claim that centralized weight averaging produces a model that 'generalises to previously unseen operational environments without extensive retraining' is load-bearing for the deployment argument, yet the evaluation only tests held-out trajectories drawn from the same family of flight paths and channel conditions. No experiments address genuine distribution shifts (different urban geometries, altitude bands, or propagation statistics), so the generalization result cannot be extrapolated beyond the training distribution.

- [Abstract / System Model] The proactive prediction step relies on the assumption that UAVs follow predetermined trajectories; if this assumption is relaxed to realistic dynamic paths, the reported handover-outage trade-off may no longer hold, but no such stress test is described.
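The kind of reporting the first comment asks for could look like the sketch below, which summarises a metric over independent runs as a mean with a normal-approximation 95% confidence interval. The function name and the per-run handover counts are hypothetical; with only a handful of runs, a Student-t quantile would be more appropriate than the assumed z = 1.96.

```python
import math
import statistics
from typing import Sequence, Tuple


def mean_with_ci(samples: Sequence[float], z: float = 1.96) -> Tuple[float, float]:
    """Return (mean, half-width) of a normal-approximation confidence interval."""
    if len(samples) < 2:
        raise ValueError("need at least two runs to estimate spread")
    mean = statistics.mean(samples)
    # Standard error of the mean uses the sample standard deviation.
    half_width = z * statistics.stdev(samples) / math.sqrt(len(samples))
    return mean, half_width


# Hypothetical handover counts from five independent simulation runs.
handovers_per_run = [12, 14, 13, 15, 11]
mean, half_width = mean_with_ci(handovers_per_run)
print(f"{mean:.1f} +/- {half_width:.2f} handovers per flight")
```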

minor comments (2)

- [Proposed Framework] Notation for the DDQN components (target network, experience replay, etc.) should be introduced with explicit equations rather than left implicit in the algorithm description.

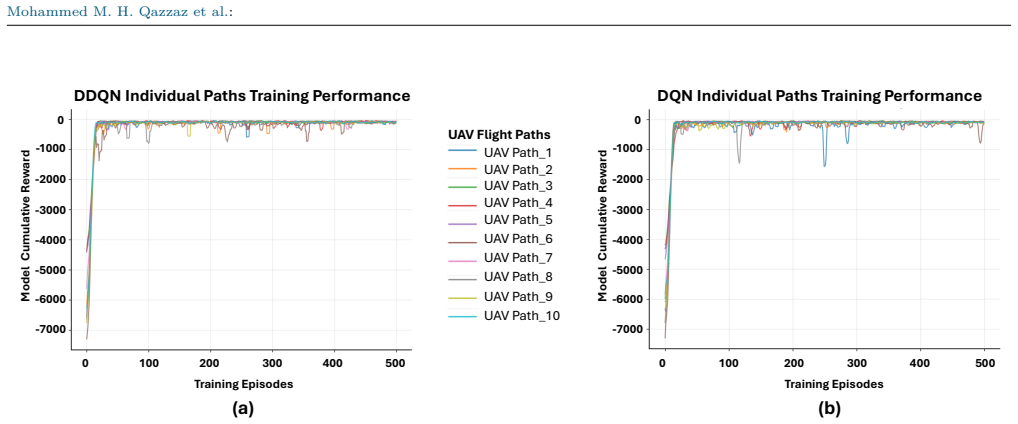

- [Evaluation] Figure captions and axis labels in the performance plots need to specify the exact baseline algorithms being compared and the number of Monte-Carlo runs used for averaging.
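For reference, the explicit notation requested in the first minor comment is usually written as below in the standard Double DQN formulation. The symbols are assumptions made here, not taken from the manuscript: online parameters $\theta$, target parameters $\theta^{-}$, replay buffer $\mathcal{D}$, discount factor $\gamma$, and $K$ per-scenario models with uniform averaging.

```latex
% Double DQN target: the online network selects, the target network evaluates.
y_t = r_t + \gamma \, Q\!\left(s_{t+1},\ \arg\max_{a'} Q(s_{t+1}, a'; \theta);\ \theta^{-}\right)

% Loss minimised over mini-batches sampled from the experience replay buffer.
L(\theta) = \mathbb{E}_{(s_t, a_t, r_t, s_{t+1}) \sim \mathcal{D}}
            \left[ \bigl( y_t - Q(s_t, a_t; \theta) \bigr)^{2} \right]

% Centralised weight averaging over K scenario-specific models (uniform weights assumed).
\bar{\theta} = \frac{1}{K} \sum_{k=1}^{K} \theta_k
```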

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed comments. We address each major comment point by point below, providing clarifications and indicating revisions made to strengthen the manuscript.

Point-by-point responses

- Referee: [Abstract / Evaluation] Abstract and evaluation description: the headline result of a 54.6% handover reduction with negligible outage is presented without any reported simulation parameters (UAV speeds, altitudes, channel models, number of runs, or statistical significance tests), baseline implementation details, or sensitivity analysis. This directly undermines the ability to assess whether the reported trade-off is robust or reproducible.

  Authors: The full manuscript details the simulation parameters, UAV speeds, altitudes, 3GPP-based channel models, number of independent runs, baseline implementations (including greedy and other RL variants), and sensitivity analysis in the Evaluation section. To improve accessibility and reproducibility, we have revised the abstract to include a concise summary of key parameters and added explicit reporting of statistical significance and sensitivity results to the evaluation description.

  Revision: yes

- Referee: [Abstract] Abstract: the claim that centralized weight averaging produces a model that 'generalises to previously unseen operational environments without extensive retraining' is load-bearing for the deployment argument, yet the evaluation only tests held-out trajectories drawn from the same family of flight paths and channel conditions. No experiments address genuine distribution shifts (different urban geometries, altitude bands, or propagation statistics), so the generalization result cannot be extrapolated beyond the training distribution.

  Authors: Our experiments demonstrate that centralized weight averaging combined with transfer learning enables generalization to held-out trajectories from the same family of predetermined flight paths and channel conditions without retraining. This is the scope of the claim supported by the results. We agree that genuine out-of-distribution shifts (e.g., novel urban geometries or propagation statistics) are not evaluated. We have revised the abstract and added a dedicated limitations paragraph to precisely delineate the generalization scope and note the need for further adaptation mechanisms in broader settings.

  Revision: partial

- Referee: [Abstract / System Model] The proactive prediction step relies on the assumption that UAVs follow predetermined trajectories; if this assumption is relaxed to realistic dynamic paths, the reported handover-outage trade-off may no longer hold, but no such stress test is described.

  Authors: The framework targets UAV mobility management under predetermined trajectories, a common and practical setting for applications such as delivery routes or surveillance. The DDQN agent with transfer learning leverages trajectory knowledge for proactive decisions. We have added an explicit discussion in the System Model section on this modeling assumption, its rationale, and potential extensions (e.g., via online adaptation or additional sensing) for fully dynamic paths, while noting that the current results are conditioned on the stated assumption.

  Revision: yes

Circularity Check

No significant circularity; claims rest on external simulation benchmarks

full rationale

The paper's derivation chain consists of a standard DDQN reinforcement learning setup augmented by centralized weight averaging for transfer learning, with performance metrics (e.g., 54.6% handover reduction) obtained via comparative simulation against greedy baselines rather than any self-referential definition or fitted parameter renamed as a prediction. No equations reduce the reported trade-off to the model's own inputs by construction, no load-bearing self-citations are invoked to justify uniqueness or ansatzes, and the generalization statement is presented as an empirical outcome of held-out trajectory evaluation rather than a definitional property. The framework is therefore self-contained against its stated external benchmarks.

Axiom & Free-Parameter Ledger

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel · unclear

  The proposed framework achieves a favourable trade-off between handover frequency and connectivity reliability, reducing handover events by up to 54.6% compared to greedy approaches while maintaining outage probability at practically negligible levels.

- IndisputableMonolith/Foundation/DimensionForcing.lean · reality_from_one_distinction · unclear

  The system leverages centralised weight averaging to consolidate knowledge from multiple flight scenarios into a global model

