
ObjView-Bench: Rethinking Difficulty and Deployment for Object-Centric View Planning

Pith reviewed 2026-05-12 04:21 UTC · model grok-4.3

The pith

Disentangling object self-occlusion, saturation difficulty, and planning difficulty shows that budget and reachability constraints reorder view planner rankings and failure modes.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

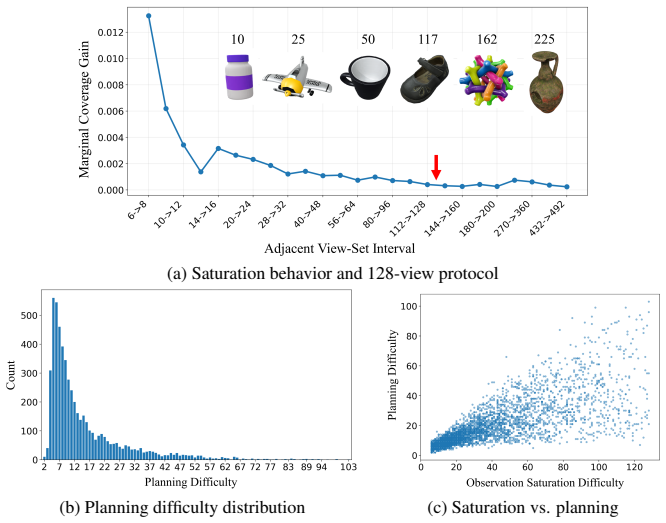

We introduce ObjView-Bench, an evaluation framework for object-centric view planning. First, we disentangle three quantities: omnidirectional self-occlusion as an object-side attribute, observation saturation difficulty, and protocol-dependent planning difficulty defined through a set-cover formulation. This separation supports controlled dataset construction, analysis of slow-saturation objects, and a case study showing that planning difficulty-aware sampling can improve learned view planners. Second, we design deployment-oriented evaluation protocols that reveal how budget regimes and reachable-view constraints alter method behavior. Across classical, learned, and hybrid planners, ObjView-Bench shows that difficulty, budget, and reachability constraints substantially change method rankings and failure modes.

What carries the argument

ObjView-Bench, an evaluation framework that separates omnidirectional self-occlusion as an object attribute, observation saturation difficulty, and set-cover-based planning difficulty while adding deployment protocols for budgets and reachability constraints.
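The first two of these quantities are named here but not defined. As a minimal sketch, assume each candidate view on a discretized omnidirectional sphere comes with a precomputed set of visible surface-sample indices; the `visibility` lists, the function names, and both definitions below are hypothetical, and the paper's exact formulations may differ. Self-occlusion is then read as the fraction of samples that no view sees. Set-cover planning difficulty is read as the size of the smallest subset of allowed views that covers everything those views can see, with `reachable` standing in for the protocol choice.

```python
from itertools import combinations
from typing import Optional, Sequence, Set


def self_occlusion(num_points: int, visibility: Sequence[Set[int]]) -> float:
    """Fraction of surface samples that no candidate view observes.

    Hypothetical definition: visibility[v] holds the indices of surface samples
    visible from candidate view v on a dense omnidirectional view sphere.
    """
    seen = set().union(*visibility)
    return 1.0 - len(seen) / num_points


def planning_difficulty(visibility: Sequence[Set[int]],
                        reachable: Optional[Sequence[int]] = None) -> int:
    """Size of a minimum set of allowed views covering every sample those views can see.

    `reachable` restricts the candidate views (a protocol choice); None allows all views.
    Exact brute-force search, exponential in the number of views: toy instances only.
    """
    views = list(range(len(visibility))) if reachable is None else list(reachable)
    target = set().union(*(visibility[v] for v in views))
    if not target:
        return 0
    for k in range(1, len(views) + 1):
        for combo in combinations(views, k):
            if set().union(*(visibility[v] for v in combo)) >= target:
                return k
    return len(views)  # not reached: the full allowed set always covers the target


# Toy object with 7 surface samples; sample 6 is never visible.
vis = [{0, 1, 2}, {2, 3}, {3, 4, 5}, {0, 5}, {1, 4}]
print(self_occlusion(7, vis))                            # about 0.143 (1/7)
print(planning_difficulty(vis))                          # 2 (views 0 and 2)
print(planning_difficulty(vis, reachable=[0, 1, 3, 4]))  # 3
```

On this toy object, removing views 2 from the reachable set raises the minimum cover from two views to three, which is the sense in which the difficulty is protocol-dependent. Brute force is used only for readability; realistic candidate sets would call for an integer-programming or approximation solver.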

If this is right

- Difficulty, budget, and reachability constraints substantially change method rankings and failure modes across classical, learned, and hybrid planners.

- Planning difficulty-aware sampling can improve the performance of learned view planners.

- Controlled dataset construction becomes possible for analyzing slow-saturation objects.

- Conclusions from idealized view-planning tests may not reliably predict performance in realistic reconstruction settings.

- Deployment-oriented protocols expose how reachable-view limits alter planner behavior.
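"Slow-saturation objects" are mentioned without a formula. A minimal sketch, assuming saturation difficulty is the number of views needed before coverage reaches a fraction `tau` of its final value along a fixed acquisition order; the 0.95 threshold, the ordering, the function names, and the toy objects are all hypothetical.

```python
from typing import List, Sequence, Set


def coverage_curve(num_points: int, visibility: Sequence[Set[int]],
                   order: Sequence[int]) -> List[float]:
    """Cumulative fraction of surface samples seen after each view in `order`."""
    seen: Set[int] = set()
    curve: List[float] = []
    for v in order:
        seen |= visibility[v]
        curve.append(len(seen) / num_points)
    return curve


def views_to_saturate(curve: Sequence[float], tau: float = 0.95) -> int:
    """Smallest number of views whose coverage reaches `tau` times the final coverage.

    Assumes a non-empty curve and 0 < tau <= 1 (hypothetical threshold).
    """
    final = curve[-1]
    for k, c in enumerate(curve, start=1):
        if c >= tau * final:
            return k
    return len(curve)


slow = [{0, 1, 2, 3, 4, 5}, {6}, {7}, {8}, {9}]  # long tail of small, hard-to-see regions
fast = [set(range(10)), {0}]                     # almost everything visible at once
print(views_to_saturate(coverage_curve(10, slow, range(5))))  # 5
print(views_to_saturate(coverage_curve(10, fast, range(2))))  # 1
```

Both toy objects end at full coverage, yet the first needs every view to get there; that long tail is what separates saturation difficulty from self-occlusion, which is zero for both.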

Where Pith is reading between the lines

- The set-cover formulation for planning difficulty could be reused to analyze other robotics tasks that involve selecting views or measurements under coverage constraints.

- Real robot deployments could test whether the disentangled difficulty measures predict actual reconstruction quality better than standard benchmarks.

- Hybrid planners might be tuned specifically for high-reachability or tight-budget regimes once the separate effects are measured.

- Similar disentanglement of object properties from protocol difficulty could help evaluation in related areas such as active sensing or map exploration.

Load-bearing premise

The proposed separation into omnidirectional self-occlusion, observation saturation difficulty, and set-cover planning difficulty, together with the deployment protocols, meaningfully reflects real object-centric view planning challenges.

What would settle it

If physical robot experiments show that method rankings and failure modes stay the same across varying budgets, reachability constraints, and object difficulties, the claim that these factors substantially alter performance would be falsified.
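One way to make "rankings stay the same" testable is a rank-correlation statistic between protocols. The sketch below computes Kendall's tau-a (no tie correction) over planner scores; the planner names and numbers are placeholders, not results from the paper. A tau near +1 across budgets and reachability settings would point toward the falsifying outcome, while values near or below 0 indicate reordered rankings.

```python
from itertools import combinations
from typing import Dict


def kendall_tau_a(scores_a: Dict[str, float], scores_b: Dict[str, float]) -> float:
    """Kendall's tau-a between the rankings induced by two score dicts.

    +1 means identical ordering, -1 fully reversed. Tied pairs count as
    neither concordant nor discordant (no tie correction).
    """
    if set(scores_a) != set(scores_b):
        raise ValueError("both protocols must score the same set of methods")
    methods = sorted(scores_a)
    if len(methods) < 2:
        raise ValueError("need at least two methods to compare rankings")
    concordant = discordant = 0
    for m, n in combinations(methods, 2):
        sign = (scores_a[m] - scores_a[n]) * (scores_b[m] - scores_b[n])
        if sign > 0:
            concordant += 1
        elif sign < 0:
            discordant += 1
    n_pairs = len(methods) * (len(methods) - 1) // 2
    return (concordant - discordant) / n_pairs


# Placeholder scores (e.g. final coverage) under two hypothetical protocols.
ideal = {"planner_A": 0.90, "planner_B": 0.85, "planner_C": 0.80}
constrained = {"planner_A": 0.60, "planner_B": 0.70, "planner_C": 0.75}
print(kendall_tau_a(ideal, ideal))        # 1.0
print(kendall_tau_a(ideal, constrained))  # -1.0
```

If SciPy is available, `scipy.stats.kendalltau` computes the tie-corrected tau-b variant by default.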


Original abstract

Object-centric view planning is a core component of active geometric 3D reconstruction in robotics, yet existing evaluations often conflate object complexity, planning difficulty, budget assumptions, and physical reachability constraints. As a result, conclusions drawn from idealized view-planning evaluations may not reliably predict performance under realistic reconstruction settings. We introduce ObjView-Bench, an evaluation framework for rethinking difficulty and deployment in object-centric view planning. First, we disentangle three quantities underlying view-planning evaluation: omnidirectional self-occlusion as an object-side attribute, observation saturation difficulty, and protocol-dependent planning difficulty defined through a set-cover formulation. This separation supports controlled dataset construction, analysis of slow-saturation objects, and a case study showing that planning difficulty-aware sampling can improve learned view planners. Second, we design deployment-oriented evaluation protocols that reveal how budget regimes and reachable-view constraints alter method behavior. Across classical, learned, and hybrid planners, ObjView-Bench shows that difficulty, budget, and reachability constraints substantially change method rankings and failure modes.
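To illustrate how a budget regime and a reachable-view constraint can interact, here is a sketch that substitutes a simple greedy coverage planner for illustration; it is not one of the classical, learned, or hybrid planners the paper evaluates. The function name, the `allowed` parameter (a hypothetical reachable-view subset), and the toy visibility sets are assumptions.

```python
from typing import Optional, Sequence, Set


def greedy_coverage(num_points: int, visibility: Sequence[Set[int]], budget: int,
                    allowed: Optional[Sequence[int]] = None) -> float:
    """Coverage fraction reached by a greedy max-new-samples planner within `budget` views.

    `allowed` is a hypothetical reachable-view subset; None means every view is reachable.
    """
    candidates = list(range(len(visibility))) if allowed is None else list(allowed)
    seen: Set[int] = set()
    for _ in range(budget):
        # Pick the candidate revealing the most not-yet-seen samples (first one on ties).
        best = max(candidates, key=lambda v: len(visibility[v] - seen), default=None)
        if best is None or not (visibility[best] - seen):
            break  # no candidates left, or nothing new to gain
        seen |= visibility[best]
        candidates.remove(best)
    return len(seen) / num_points


vis = [{0, 1, 2}, {2, 3}, {3, 4, 5}, {0, 5}, {1, 4}]
for budget in (1, 2, 3):
    full = greedy_coverage(6, vis, budget)
    reach = greedy_coverage(6, vis, budget, allowed=[0, 1, 3, 4])
    print(budget, full, reach)
```

With a budget of two views, the unrestricted planner reaches all 6 samples, while the same planner limited to views 0, 1, 3 and 4 stops at 4 of 6. Under a generous budget the gap closes, so whether reachability matters depends on the budget regime.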

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces ObjView-Bench, an evaluation framework for object-centric view planning in active 3D reconstruction. It disentangles three quantities: omnidirectional self-occlusion (object-side attribute), observation saturation difficulty, and protocol-dependent planning difficulty defined via a set-cover formulation. The work also proposes deployment-oriented protocols incorporating budget regimes and reachable-view constraints, and demonstrates across classical, learned, and hybrid planners that these factors alter method rankings and failure modes.

Significance. If the disentanglement is cleanly implemented and the set-cover scores correlate with actual planner performance under geometric constraints, the benchmark could improve evaluation practices and method selection for realistic robotics applications. The empirical demonstration of ranking shifts under controlled protocols is a strength, as is the focus on slow-saturation objects and planning-aware sampling for learned planners.

major comments (1)

- [Abstract and §3] Planning difficulty definition: the protocol-dependent planning difficulty is defined through a set-cover formulation on views. This discrete combinatorial abstraction does not encode continuous SE(3) visibility, collision avoidance, or non-myopic objectives used by learned and hybrid planners. Without explicit validation that set-cover scores correlate with observed runtimes or success rates once full geometry is restored, the reported changes in rankings and failure modes risk being artifacts of the abstraction rather than genuine effects of difficulty, budget, or reachability.

minor comments (1)

- [Abstract] The phrase 'substantially change method rankings' would benefit from a brief quantitative example or effect size to ground the claim before the full results.

Simulated Author's Rebuttal

We thank the referee for their thoughtful review and constructive criticism. We respond to the major comment point-by-point below and outline the revisions we will make to address the concerns raised.

Point-by-point responses

- Referee: [Abstract and §3] Planning difficulty definition: the protocol-dependent planning difficulty is defined through a set-cover formulation on views. This discrete combinatorial abstraction does not encode continuous SE(3) visibility, collision avoidance, or non-myopic objectives used by learned and hybrid planners. Without explicit validation that set-cover scores correlate with observed runtimes or success rates once full geometry is restored, the reported changes in rankings and failure modes risk being artifacts of the abstraction rather than genuine effects of difficulty, budget, or reachability.

Authors: We appreciate the referee's concern regarding the validity of the set-cover abstraction as a measure of planning difficulty. The set-cover formulation is used as a discrete, protocol-dependent proxy to quantify the minimum number of views needed to cover the object under idealized conditions, which allows us to disentangle planning difficulty from object-specific geometric properties like self-occlusion. This enables the construction of controlled datasets where we can vary planning difficulty independently. Our empirical evaluations of the planners (classical, learned, and hybrid) are performed in full simulation environments that incorporate continuous SE(3) poses, visibility checks, and collision avoidance. The reported changes in rankings and failure modes are observed directly from these full-geometry runs under varying budget regimes and reachable-view constraints. To strengthen the link between the set-cover scores and actual planner performance, we will include in the revised manuscript an explicit correlation analysis demonstrating that objects with higher set-cover difficulty are associated with increased planning effort (e.g., more views selected or higher failure rates) for the evaluated methods, even when full geometry is used. This will confirm that the observed effects are not artifacts of the abstraction.

Revision: yes

Circularity Check

No circularity: empirical benchmark with independent definitions

Full rationale

The paper defines three difficulty quantities (omnidirectional self-occlusion, observation saturation, and set-cover planning difficulty) explicitly from domain first principles, introduces deployment protocols, and reports observed changes in planner rankings across classical/learned/hybrid methods. No derivation step reduces by construction to a fitted parameter, self-citation, or renamed input; the set-cover formulation is a stated modeling choice whose correlation with real behavior is left as an empirical question rather than assumed tautological. The work is self-contained against external benchmarks and planners.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: view planning difficulty can be defined through a set-cover formulation.
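For concreteness, here is one standard integer-programming form of this assumption, written with our own symbols: candidate views V, a protocol-dependent reachable subset R ⊆ V, the set C(v) of surface samples visible from view v, and binary selection variables x_v. The paper's exact objective and constraints may differ.

```latex
\begin{aligned}
d(\mathcal{R}) \;=\; \min_{x}\;& \sum_{v \in \mathcal{R}} x_v \\
\text{s.t.}\;& \sum_{v \in \mathcal{R}\,:\, p \in C(v)} x_v \;\ge\; 1
  \qquad \forall\, p \in U(\mathcal{R}) = \bigcup_{v \in \mathcal{R}} C(v), \\
& x_v \in \{0, 1\} \qquad \forall\, v \in \mathcal{R}.
\end{aligned}
```

Covering U(R) rather than every surface sample keeps the program feasible when reachability hides part of the object; samples outside U(R) then belong to the occlusion side of the ledger rather than to planning difficulty.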

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean: washburn_uniqueness_aczel (unclear). Linked claim: protocol-dependent planning difficulty defined through a set-cover formulation.

- IndisputableMonolith/Foundation/AbsoluteFloorClosure.lean: absolute_floor_iff_bare_distinguishability (unclear). Linked claim: omnidirectional self-occlusion as an object-side attribute, observation saturation difficulty.

