Recognition: no theorem link

When Are Trade-Off Functions Testable from Finite Samples?

Pith reviewed 2026-05-12 04:19 UTC · model grok-4.3

The pith

Finite Vapnik-Chervonenkis dimension of the set class makes trade-off function testing possible from finite samples when optimal rejection regions are attainable.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Within the framework in which the Neyman-Pearson optimal rejection regions for any pair (P, Q) are attainable up to null sets by a prescribed class S of measurable sets, finite Vapnik-Chervonenkis dimension of S is both necessary and sufficient for the existence of nontrivial finite-sample tests of whether the trade-off function lies above a benchmark curve f0 or below a weaker benchmark f1. The constructed test controls type I error nonasymptotically without assuming attainability, while power holds uniformly over attainable alternatives satisfying an explicit separation condition. Inverting the test yields simultaneous confidence bands for the whole trade-off curve. In the monotonelikeli-h

What carries the argument

The class S of measurable sets that attain the Neyman-Pearson rejection regions for (P, Q), whose finite Vapnik-Chervonenkis dimension governs testability.

If this is right

- Type I error remains controlled nonasymptotically even without attainability.

- Power is uniform over all attainable alternatives that meet an explicit separation condition.

- Inversion of the test produces simultaneous confidence bands for the entire trade-off curve.

- Local separation rates are derived in the monotone likelihood-ratio model with matching lower bounds up to logarithmic factors.

- Approximate attainability extends the guarantees to univariate log-concave distributions by approximating rejection regions with unions of intervals.

Where Pith is reading between the lines

- Similar finite-sample guarantees may hold for other functionals whose optimal estimators belong to finite-VC classes, such as certain ROC curves or divergence measures.

- Practical implementations become feasible for common models whose rejection regions are intervals or half-spaces, both of which have finite VC dimension.

- The necessity result implies that fully nonparametric inference on trade-off functions will require either asymptotic approximations or explicit structural relaxations.

- The same attainability-plus-VC lens could be applied to testing problems with dependent observations or with multiple distributions.

Load-bearing premise

The best rejection regions that achieve the fundamental type-I versus type-II error frontier for the two distributions must be exactly realizable, up to null sets, by sets belonging to the fixed class S.

What would settle it

A class S with infinite Vapnik-Chervonenkis dimension for which a test nevertheless achieves uniform finite-sample power over all attainable alternatives separated by a fixed positive amount would falsify the necessity claim.

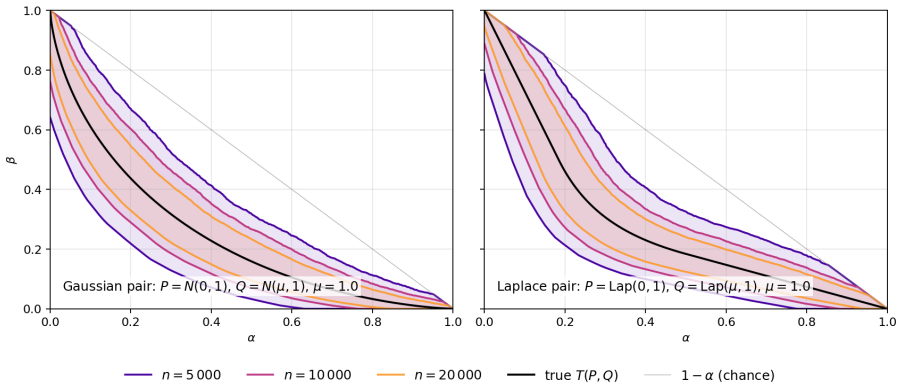

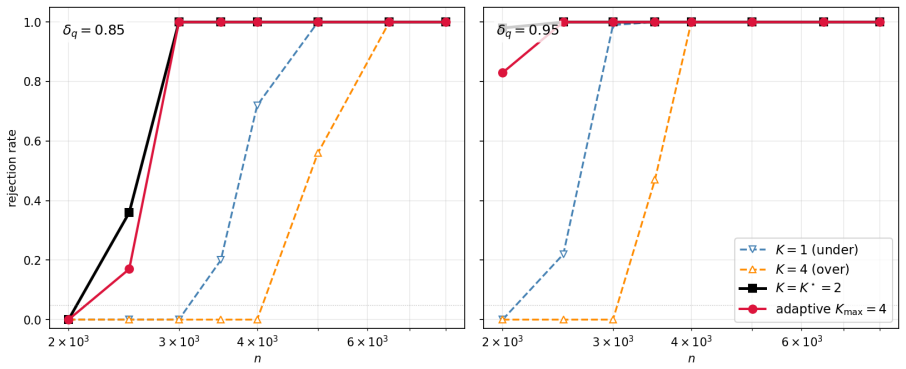

Figures

read the original abstract

We study finite-sample inference for the trade-off function of two unknown probability distributions, the function that traces the optimal type I/type II error frontier in binary testing. Given samples from distributions $P$ and $Q$, we consider the problem of testing whether their trade-off function lies above a benchmark curve $f_0$ or falls below a weaker benchmark $f_1$. Without structural restrictions, this problem is impossible uniformly over nonparametric classes. We identify a sharp condition under which it becomes possible. The key structural assumption is that the Neyman--Pearson rejection regions for $(P,Q)$ are attainable, up to null sets, by a prescribed class $S$ of measurable sets. Within this exact attainability framework, finite Vapnik--Chervonenkis dimension of $S$ is both sufficient and necessary for nontrivial finite-sample testing. We construct a test with nonasymptotic error guarantees: type I error control is valid without assuming attainability, while power holds uniformly over attainable alternatives satisfying an explicit separation condition. By inverting the test, we also obtain simultaneous confidence bands for the whole trade-off curve. Finally, we study the sharpness and robustness of the procedure. In the monotone likelihood-ratio model, we derive local separation rates and prove matching lower bounds up to logarithmic factors. We also allow approximate, rather than exact, attainability; this extension yields finite-sample guarantees for univariate log-concave distributions by approximating their rejection regions with unions of intervals.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper studies finite-sample inference for the trade-off function of two unknown distributions P and Q, which traces the optimal type I/II error frontier. Under the structural assumption that Neyman-Pearson rejection regions are attainable (up to null sets) by a prescribed class S of measurable sets, the authors prove that finite Vapnik-Chervonenkis dimension of S is both necessary and sufficient for nontrivial testing. They construct a test with nonasymptotic type I error control (valid without attainability) and power under an explicit separation condition for attainable alternatives, invert the test to obtain simultaneous confidence bands for the full curve, derive local separation rates in the monotone likelihood-ratio case with matching lower bounds up to logs, and extend to approximate attainability to cover univariate log-concave distributions via interval unions.

Significance. If the nonasymptotic guarantees and necessity result hold, the work supplies a sharp, VC-theoretic characterization of when trade-off functions are testable from finite samples, bridging empirical process theory with hypothesis testing for optimal frontiers. The unconditional type I control, inversion to confidence bands, and robustness extension to approximate attainability (with concrete application to log-concave laws) are particular strengths; the matching rates in the MLR case further align with known minimax behavior.

minor comments (2)

- The abstract and introduction would benefit from a brief explicit statement of the separation condition (e.g., in terms of the difference between the trade-off function and the benchmark) to make the power guarantee immediately accessible without reference to later sections.

- Notation for the trade-off function and the class S could be introduced with a short display equation early in the paper to avoid any ambiguity when the attainability assumption is first stated.

Simulated Author's Rebuttal

We thank the referee for their careful reading, accurate summary of our contributions, and positive recommendation to accept the manuscript. We are pleased that the referee highlights the nonasymptotic type I control, the VC-theoretic necessity result, the inversion to confidence bands, and the extension to approximate attainability for log-concave distributions.

Circularity Check

No significant circularity

full rationale

The paper derives that finite VC dimension of the attaining class S is necessary and sufficient for nontrivial finite-sample testing of the trade-off function under exact attainability. Sufficiency is obtained by constructing a test via uniform deviation bounds over S (standard empirical process theory) that controls type I error unconditionally and achieves power under an explicit separation condition; necessity follows from a standard shattering construction showing indistinguishability when VC dimension is infinite. These steps invoke the Neyman-Pearson lemma and VC theory as external results, with no reduction of target quantities to fitted parameters, self-definitional loops, or load-bearing self-citations within the paper. The nonasymptotic guarantees, inversion to confidence bands, and extensions to approximate attainability (e.g., log-concave distributions) remain independent of the paper's own inputs.

Axiom & Free-Parameter Ledger

axioms (2)

- standard math Standard uniform convergence properties of VC classes

- standard math Existence and optimality of Neyman-Pearson rejection regions

Reference graph

Works this paper leans on

-

[1]

Dong, Jinshuo and Roth, Aaron and Su, Weijie J. , title =. Journal of the Royal Statistical Society Series B: Statistical Methodology , volume =. 2022 , month =. doi:10.1111/rssb.12454 , url =

-

[2]

General-Purpose f -DP Estimation and Auditing in a Black-Box Setting , author=. 2025 , eprint=

work page 2025

-

[3]

Proceedings of the 41st International Conference on Machine Learning , pages =

Privacy Preserving Adaptive Experiment Design , author =. Proceedings of the 41st International Conference on Machine Learning , pages =. 2024 , editor =

work page 2024

-

[4]

Dwork, Cynthia , title =. 2006 , isbn =. doi:10.1007/11787006_1 , booktitle =

-

[5]

Theoreticalfoundations of conformal prediction

Theoretical Foundations of Conformal Prediction. arXiv e-prints , keywords =. doi:10.48550/arXiv.2411.11824 , archivePrefix =. 2411.11824 , primaryClass =

-

[6]

RAPPOR: Randomized Aggregatable Privacy-Preserving Ordinal Response , year =

Erlingsson, \'. RAPPOR: Randomized Aggregatable Privacy-Preserving Ordinal Response , year =. doi:10.1145/2660267.2660348 , booktitle =

- [7]

-

[8]

Proceedings of the 31st International Conference on Neural Information Processing Systems , pages =

Ding, Bolin and Kulkarni, Janardhan and Yekhanin, Sergey , title =. Proceedings of the 31st International Conference on Neural Information Processing Systems , pages =. 2017 , isbn =

work page 2017

-

[9]

Abowd, John M. , title =. 2018 , isbn =. doi:10.1145/3219819.3226070 , booktitle =

-

[10]

Advances in Neural Information Processing Systems , volume=

(Nearly) optimal algorithms for private online learning in full-information and bandit settings , author=. Advances in Neural Information Processing Systems , volume=

-

[11]

Advances in Neural Information Processing Systems , volume=

Differentially private contextual linear bandits , author=. Advances in Neural Information Processing Systems , volume=

-

[12]

arXiv preprint arXiv:2207.03445 , year=

Differentially private stochastic linear bandits:(almost) for free , author=. arXiv preprint arXiv:2207.03445 , year=

-

[13]

International Conference on Machine Learning , pages=

Robust and private stochastic linear bandits , author=. International Conference on Machine Learning , pages=. 2023 , organization=

work page 2023

-

[14]

Proceedings of the 2016 ACM SIGSAC conference on computer and communications security , pages=

Deep learning with differential privacy , author=. Proceedings of the 2016 ACM SIGSAC conference on computer and communications security , pages=

work page 2016

-

[15]

Differential privacy preservation in deep learning: Challenges, opportunities and solutions , author=. IEEE Access , volume=. 2019 , publisher=

work page 2019

-

[16]

Harvard data science review , volume=

Deep learning with gaussian differential privacy , author=. Harvard data science review , volume=

-

[17]

and Ren, Bocheng and Zou, Deqing and Dong, Mianxiong and Zhang, Shunli , journal=

Feng, Jun and Yang, Laurence T. and Ren, Bocheng and Zou, Deqing and Dong, Mianxiong and Zhang, Shunli , journal=. Tensor Recurrent Neural Network With Differential Privacy , year=

-

[18]

Bu, Zhiqi and Mao, Jialin and Xu, Shiyun , booktitle =. Scalable and Efficient Training of Large Convolutional Neural Networks with Differential Privacy , url =

-

[19]

A Randomized Approach to Tight Privacy Accounting , url =

Wang, Jiachen (Tianhao) and Mahloujifar, Saeed and Wu, Tong and Jia, Ruoxi and Mittal, Prateek , booktitle =. A Randomized Approach to Tight Privacy Accounting , url =

-

[20]

Privacy Auditing with One (1) Training Run , url =

Steinke, Thomas and Nasr, Milad and Jagielski, Matthew , booktitle =. Privacy Auditing with One (1) Training Run , url =

-

[21]

Ding, Zeyu and Wang, Yuxin and Wang, Guanhong and Zhang, Danfeng and Kifer, Daniel , title =. 2018 , isbn =. doi:10.1145/3243734.3243818 , booktitle =

-

[22]

Proceedings of the forty-sixth annual ACM symposium on Theory of computing , pages=

Efficient density estimation via piecewise polynomial approximation , author=. Proceedings of the forty-sixth annual ACM symposium on Theory of computing , pages=

-

[23]

2018 56th Annual Allerton Conference on Communication, Control, and Computing (Allerton) , pages=

Property testing for differential privacy , author=. 2018 56th Annual Allerton Conference on Communication, Control, and Computing (Allerton) , pages=. 2018 , organization=

work page 2018

- [24]

-

[25]

Tight Auditing of Differentially Private Machine Learning , booktitle =

Milad Nasr and Jamie Hayes and Thomas Steinke and Borja Balle and Florian Tram. Tight Auditing of Differentially Private Machine Learning , booktitle =. 2023 , isbn =

work page 2023

-

[26]

Auditing Differential Privacy Guarantees Using Density Estimation , author=. 2024 , eprint=

work page 2024

-

[27]

Yifan Yao and Jinhao Duan and Kaidi Xu and Yuanfang Cai and Zhibo Sun and Yue Zhang , keywords =. A survey on large language model (LLM) security and privacy: The Good, The Bad, and The Ugly , journal =. 2024 , issn =. doi:https://doi.org/10.1016/j.hcc.2024.100211 , url =

-

[28]

Proceedings of the Twenty-Fifth Annual ACM-SIAM Symposium on Discrete Algorithms , pages =

Chan, Siu-On and Diakonikolas, Ilias and Valiant, Gregory and Valiant, Paul , title =. Proceedings of the Twenty-Fifth Annual ACM-SIAM Symposium on Discrete Algorithms , pages =. 2014 , isbn =

work page 2014

-

[29]

Diakonikolas, Ilias and Kane, Daniel M. , booktitle=. A New Approach for Testing Properties of Discrete Distributions , year=

- [30]

-

[31]

Journal of the Royal Statistical Society Series A: Statistics in Society , volume=

Sampling and Bayes’ inference in scientific modelling and robustness , author=. Journal of the Royal Statistical Society Series A: Statistics in Society , volume=. 1980 , publisher=

work page 1980

-

[32]

Pattern recognition letters , volume=

An introduction to ROC analysis , author=. Pattern recognition letters , volume=. 2006 , publisher=

work page 2006

-

[33]

Equality of Opportunity in Supervised Learning , author=. 2016 , eprint=

work page 2016

- [34]

-

[35]

and Ko, Justin and Swetter, Susan M

Esteva, Andre and Kuprel, Brett and Novoa, Roberto A. and Ko, Justin and Swetter, Susan M. and Blau, Helen M. and Thrun, Sebastian , title =. Nature , volume =. 2017 , doi =

work page 2017

-

[36]

International Journal of Environmental Research and Public Health , VOLUME =

Haider, Mughees and Yousaf, Saira and Zaib, Asifa and Sarfraz, Azza and Sarfraz, Zouina and Cherrez-Ojeda, Ivan , TITLE =. International Journal of Environmental Research and Public Health , VOLUME =. 2022 , NUMBER =

work page 2022

-

[37]

Archives of Neurology , volume =

Tapiola, Tero and Alafuzoff, Irina and Herukka, Sanna-Kaisa and Parkkinen, Leena and Hartikainen, Paula and Soininen, Hilkka and Pirttil\"a, Tiina , title =. Archives of Neurology , volume =. 2009 , doi =

work page 2009

-

[38]

Measures of complexity: festschrift for alexey chervonenkis , pages=

On the uniform convergence of relative frequencies of events to their probabilities , author=. Measures of complexity: festschrift for alexey chervonenkis , pages=. 2015 , publisher=

work page 2015

-

[39]

Advances in neural information processing systems , volume=

A general agnostic active learning algorithm , author=. Advances in neural information processing systems , volume=

-

[40]

Ingster, Yu. I. , title =. Theory of Probability & Its Applications , volume =. 2001 , doi =

work page 2001

-

[41]

Tolerant property testing and distance approximation , journal =

Michal Parnas and Dana Ron and Ronitt Rubinfeld , keywords =. Tolerant property testing and distance approximation , journal =. 2006 , issn =. doi:https://doi.org/10.1016/j.jcss.2006.03.002 , url =

- [42]

-

[43]

Batu, T. and Fortnow, L. and Rubinfeld, R. and Smith, W.D. and White, P. , booktitle=. Testing that distributions are close , year=

-

[44]

A Coincidence-Based Test for Uniformity Given Very Sparsely Sampled Discrete Data , year=

Paninski, Liam , journal=. A Coincidence-Based Test for Uniformity Given Very Sparsely Sampled Discrete Data , year=

-

[45]

Calibrating Noise to Sensitivity in Private Data Analysis

Dwork, Cynthia and McSherry, Frank and Nissim, Kobbi and Smith, Adam. Calibrating Noise to Sensitivity in Private Data Analysis. Theory of Cryptography. 2006

work page 2006

-

[46]

The algorithmic foundations of differential privacy , author=. Foundations and trends. 2014 , publisher=

work page 2014

-

[47]

Collision-based testers are optimal for uniformity and closeness , author=. Chic. J. Theor. Comput. Sci , volume=

-

[48]

Cl\'emen. Concentration inequalities for two-sample rank processes with application to bipartite ranking , volume=. Electronic Journal of Statistics , publisher=. doi:10.1214/21-ejs1907 , number=

-

[49]

Electronic Journal of Statistics , number =

Stephan Cl. Electronic Journal of Statistics , number =. 2025 , doi =

work page 2025

-

[50]

Proceedings of The 27th International Conference on Artificial Intelligence and Statistics , pages =

On Ranking-based Tests of Independence , author =. Proceedings of The 27th International Conference on Artificial Intelligence and Statistics , pages =. 2024 , editor =

work page 2024

-

[51]

Proceedings of Thirty Fifth Conference on Learning Theory , pages =

The Price of Tolerance in Distribution Testing , author =. Proceedings of Thirty Fifth Conference on Learning Theory , pages =. 2022 , editor =

work page 2022

-

[52]

Annual international conference on the theory and applications of cryptographic techniques , pages=

Our data, ourselves: Privacy via distributed noise generation , author=. Annual international conference on the theory and applications of cryptographic techniques , pages=. 2006 , organization=

work page 2006

-

[53]

Exploring the Gap Between Tolerant and Non-Tolerant Distribution Testing , year=

Chakraborty, Sourav and Fischer, Eldar and Ghosh, Arijit and Mishra, Gopinath and Sen, Sayantan , journal=. Exploring the Gap Between Tolerant and Non-Tolerant Distribution Testing , year=

-

[54]

and Nikishkin, Vladimir , booktitle=

Diakonikolas, Ilias and Kane, Daniel M. and Nikishkin, Vladimir , booktitle=. Optimal Algorithms and Lower Bounds for Testing Closeness of Structured Distributions , year=

-

[55]

IEEE/ACM Transactions on Computational Biology and Bioinformatics , volume=

Maximum-scoring segment sets , author=. IEEE/ACM Transactions on Computational Biology and Bioinformatics , volume=. 2004 , publisher=

work page 2004

-

[56]

International Computing and Combinatorics Conference , pages=

Computing maximum-scoring segments in almost linear time , author=. International Computing and Combinatorics Conference , pages=. 2006 , organization=

work page 2006

-

[57]

arXiv preprint arXiv:2106.13851 , year=

Approximate maximum halfspace discrepancy , author=. arXiv preprint arXiv:2106.13851 , year=

-

[58]

43rd International Colloquium on Automata, Languages, and Programming (ICALP 2016) , pages=

Tight Hardness Results for Maximum Weight Rectangles , author=. 43rd International Colloquium on Automata, Languages, and Programming (ICALP 2016) , pages=. 2016 , organization=

work page 2016

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.