Fixed-Point Neural Optimal Transport without Implicit Differentiation

Pith reviewed 2026-05-12 03:31 UTC · model grok-4.3

The pith

A single neural network solves optimal transport by reformulating the c-transform as a proximal fixed-point problem, enforcing dual feasibility exactly without adversarial training or implicit differentiation.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Parameterizing a single potential in the Kantorovich dual and reformulating the associated c-transform as a proximal fixed-point problem yields a stable single-network framework for neural optimal transport in which dual feasibility is enforced exactly through proximal optimality conditions rather than adversarial training, gradients can be computed without implicit differentiation, and stochastic gradient descent is shown to converge.

What carries the argument

The proximal fixed-point reformulation of the c-transform, which replaces the infimum with an iterative proximal step that enforces dual feasibility exactly once the iteration has converged.
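For orientation, the construction can be written out for one concrete cost. The block below is a sketch that assumes the quadratic cost $c(x,y)=\tfrac12\|x-y\|^2$ and the sign convention $\varphi(x)+\varphi^{c}(y)\le c(x,y)$; the paper's own cost, conventions, and proximal operator may differ. The last equivalence also assumes that $x \mapsto \tfrac12\|x-y\|^2-\varphi(x)$ is convex, so that the stationary point is the minimizer.

```latex
% Kantorovich dual with a single potential \varphi and its c-transform
\sup_{\varphi}\; \int \varphi(x)\,d\mu(x) + \int \varphi^{c}(y)\,d\nu(y),
\qquad
\varphi^{c}(y) = \inf_{x}\,\bigl[\,c(x,y) - \varphi(x)\,\bigr].

% Feasibility holds by construction, because \varphi^{c}(y) is an infimum over x
\varphi(x) + \varphi^{c}(y) \le c(x,y) \quad \text{for all } x, y.

% Assumed quadratic cost: first-order optimality of the infimum is a fixed-point equation
x^{\star} - y - \nabla\varphi(x^{\star}) = 0
\;\Longleftrightarrow\;
x^{\star} = y + \nabla\varphi(x^{\star})
\;\Longleftrightarrow\;
x^{\star} = \operatorname{prox}_{-\varphi}(y).
```

Read this way, "exact feasibility" is a property of the exact infimum; how closely an iterative solve reproduces it is the question the referee report takes up.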

If this is right

- Both forward and backward transport maps are recovered simultaneously from the single trained potential.

- The framework extends directly to class-conditional optimal transport.

- Stochastic gradient descent is guaranteed to converge for the resulting objective.

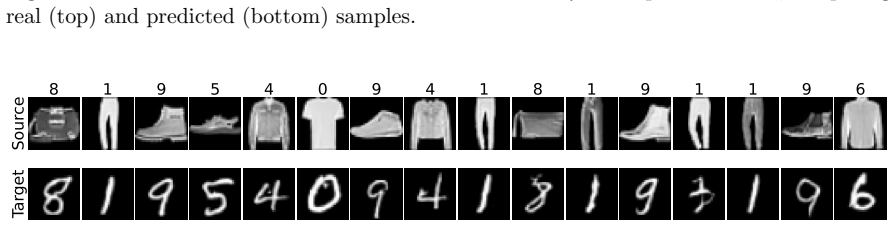

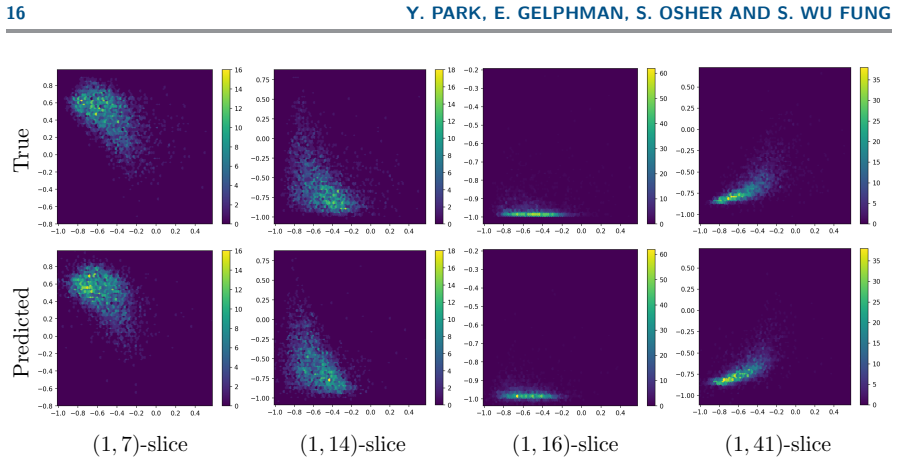

- Experiments confirm strong transport accuracy together with better stability and lower computational and memory cost than adversarial baselines on Gaussian benchmarks, physical datasets, and image translation tasks.
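The first consequence in the list above, recovering both maps from one potential, can be illustrated on a toy problem. The sketch below is not the paper's code: it assumes the quadratic cost, a 1-D Gaussian source and target, and a hand-picked closed-form potential in place of a trained network. All names (`grad_phi`, `forward_map`, `backward_map`) are hypothetical.

```python
import numpy as np

# Toy setting (assumed, not from the paper): quadratic cost in 1-D,
# source N(0, 1), target N(m, s^2). The optimal map is T(x) = m + s * x.
m, s = 2.0, 1.5

def grad_phi(x):
    # Gradient of the hypothetical potential phi(x) = (1 - s) * x**2 / 2 - m * x,
    # chosen so that x - grad_phi(x) equals the known optimal map.
    return (1.0 - s) * x - m

def forward_map(x):
    # Forward map for the quadratic cost: T(x) = x - grad_phi(x).
    return x - grad_phi(x)

def backward_map(y, n_iter=60):
    # Backward map from the c-transform minimizer: iterate x <- y + grad_phi(x).
    # For this potential the iteration contracts with factor |1 - s| = 0.5.
    x = np.array(y, dtype=float)
    for _ in range(n_iter):
        x = y + grad_phi(x)
    return x

y = np.linspace(-3.0, 7.0, 11)
x_back = backward_map(y)

# Pushing the recovered preimages forward should reproduce y.
round_trip_error = np.max(np.abs(forward_map(x_back) - y))
```

In this toy case the fixed point has the closed form `(y - m) / s`, which makes the round trip easy to check; with a neural potential no closed form is available and only the iteration remains.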

Where Pith is reading between the lines

- Using only one network could reduce memory usage enough to scale neural optimal transport to problems where storing multiple networks becomes prohibitive.

- Reliable fixed-point solves might allow the same proximal structure to be reused for other dual variational problems that involve transforms analogous to the c-transform.

- Avoiding adversarial training could produce transport maps that remain stable under moderate distribution shifts in the source or target measures.

Load-bearing premise

That the proximal fixed-point reformulation of the c-transform can be solved accurately enough in practice to enforce dual feasibility exactly, and that gradients computed without implicit differentiation remain faithful to the true optimal transport objective.
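One standard route to a gradient that skips implicit differentiation is an envelope-type argument. The derivation below is a sketch under the assumption that the potential $\varphi_\theta$ is differentiable in both $x$ and $\theta$ and that the minimizer $x^\star(\theta)$ is unique and differentiable; whether the paper argues exactly this way is not confirmed by the text above.

```latex
% \varphi_\theta: potential with parameters \theta; x^\star(\theta): minimizer defining \varphi_\theta^{c}(y)
\varphi_\theta^{c}(y) = c\bigl(x^\star(\theta), y\bigr) - \varphi_\theta\bigl(x^\star(\theta)\bigr),
\qquad
\nabla_x c(x^\star, y) - \nabla_x \varphi_\theta(x^\star) = 0 .

% Chain rule: the term carrying \partial x^\star / \partial\theta is multiplied by the optimality condition
\frac{d}{d\theta}\,\varphi_\theta^{c}(y)
= \underbrace{\bigl[\nabla_x c(x^\star,y) - \nabla_x\varphi_\theta(x^\star)\bigr]^{\top}}_{=\,0}
  \frac{\partial x^\star}{\partial\theta}
\;-\; \partial_\theta \varphi_\theta(x)\big|_{x = x^\star}
= -\,\partial_\theta \varphi_\theta(x)\big|_{x = x^\star}.
```

The cancellation relies on the bracket being exactly zero. At an inexact iterate $x_k \neq x^\star$ the bracket is nonzero, which is where a bias could enter.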

What would settle it

Training produces transport maps whose pushforward of the source measure deviates from the target by more than numerical tolerance on the marginal constraints, or the no-implicit-differentiation gradients yield measurably different convergence behavior than full differentiation through the fixed-point iterations on a small-scale problem.
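A small-scale version of the second check can be sketched in a few lines. The example below assumes the quadratic cost and a hypothetical one-parameter potential $\varphi_\theta(x)=\theta\sin x$ with $|\theta|<1$ (so the fixed-point map is a contraction); it compares the gradient obtained by treating the fixed point as a constant against a central finite difference of the c-transform value. It stands in for, and is much simpler than, differentiating through the iterations of a trained network.

```python
import numpy as np

def solve_fixed_point(y, theta, n_iter=200):
    # Iterate x <- y + theta * cos(x); a contraction with factor at most |theta| < 1.
    x = float(y)
    for _ in range(n_iter):
        x = y + theta * np.cos(x)
    return x

def c_transform(y, theta):
    # Value of min_x 0.5 * (x - y)**2 - theta * sin(x), evaluated at the fixed point.
    x = solve_fixed_point(y, theta)
    return 0.5 * (x - y) ** 2 - theta * np.sin(x)

def envelope_grad(y, theta):
    # Differentiate only the explicit theta-dependence of -theta * sin(x),
    # treating the fixed point x as a constant (no implicit differentiation).
    x = solve_fixed_point(y, theta)
    return -np.sin(x)

# Hypothetical test point and parameter value.
y, theta, h = 0.7, 0.4, 1e-5

# Central finite difference of the full c-transform value, which does
# account for the dependence of the fixed point on theta.
fd_grad = (c_transform(y, theta + h) - c_transform(y, theta - h)) / (2 * h)
gap = abs(fd_grad - envelope_grad(y, theta))
```

When the inner solve is run to convergence, as here, `gap` should be at the level of finite-difference error. Reducing `n_iter` to a handful of steps is a quick way to see how much disagreement early termination introduces in this toy setting.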

Original abstract

We propose an implicit neural formulation of optimal transport that eliminates adversarial min-max optimization and multi-network architectures commonly used in existing approaches. Our key idea is to parameterize a single potential in the Kantorovich dual and reformulate the associated c-transform as a proximal fixed-point problem. This yields a stable single-network framework in which dual feasibility is enforced exactly through proximal optimality conditions rather than adversarial training. Despite the inner fixed-point computation, gradients can be computed without differentiating through the fixed-point iterations, enabling efficient training without requiring implicit differentiation. We further establish convergence of stochastic gradient descent. The resulting framework is efficient, scalable, and broadly applicable: it simultaneously recovers forward and backward transport maps and naturally extends to class-conditional settings. Experiments on high-dimensional Gaussian benchmarks, physical datasets, and image translation tasks demonstrate strong transport accuracy together with improved training stability and favorable computational and memory efficiency.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes an implicit neural formulation of optimal transport that parameterizes a single Kantorovich potential and reformulates the c-transform as a proximal fixed-point problem. The resulting single-network architecture enforces dual feasibility exactly via proximal optimality conditions (rather than adversarial training), computes gradients without implicit differentiation, recovers forward and backward maps, and extends to class-conditional settings; the authors also prove SGD convergence. Experiments on high-dimensional Gaussians, physical datasets, and image translation demonstrate strong accuracy, stability, and efficiency.

Significance. If the central claims hold, particularly that finite proximal iterations enforce exact dual feasibility and that the non-implicit gradient is unbiased for the Kantorovich objective, this would be a meaningful advance: it simplifies neural OT to a stable single-network framework without min-max optimization or multi-network setups, while providing a convergence guarantee. The ability to recover both transport maps and handle conditional settings is a practical strength. However, the lack of detailed error bounds or gradient derivations in the abstract leaves the practical validity open.

Major comments (3)

- [§3] (proximal fixed-point construction): the claim that dual feasibility is enforced exactly relies on the proximal optimality conditions, but with finite iterations the residual error means the computed potential is only approximately c-concave; the dual objective is then no longer guaranteed to be a valid lower bound. A quantitative bound on the duality gap as a function of iteration count is needed.

- [§4] (gradient computation without implicit differentiation): the shortcut that avoids differentiating through the fixed-point iterations appears to omit the implicit dependence of the solution on network parameters. Without a derivation showing that the resulting direction is still the true gradient of the dual objective (or an analysis of the bias), it is unclear whether SGD converges to a stationary point of the original OT problem.

- [Theorem on SGD convergence] The stated convergence result assumes exact fixed-point solutions at each step. The proof must be extended (or an additional assumption stated) to cover the approximation error from early termination of the proximal iterations; otherwise the theorem does not apply to the implemented algorithm.
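The first and third comments can be made concrete with two standard facts. The block below is a sketch that assumes the fixed-point map $T_y$ for a given $y$ is a contraction with factor $q\in[0,1)$; nothing in the text above confirms that the paper's map satisfies this in general.

```latex
% Assumption: T_y is a contraction with factor q \in [0,1), and x^\star = T_y(x^\star)
\|x_k - x^\star\| \le q^{k}\,\|x_0 - x^\star\|,
\qquad
\|x_k - x^\star\| \le \frac{\|T_y(x_k) - x_k\|}{1-q}.

% Any iterate gives an upper estimate of the c-transform, since \varphi^{c}(y) is an infimum
\varphi^{c}(y) \;\le\; c(x_k, y) - \varphi(x_k) \quad \text{for every } k .
```

The second line is the reason a truncated solve can break the lower-bound property: plugging $x_k$ into the dual objective replaces $\varphi^{c}(y)$ by something at least as large, so the computed dual value may overshoot the true one.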

Minor comments (2)

- [§2] Notation for the proximal operator and c-transform should be introduced with explicit definitions before the fixed-point equation to improve readability.

- [Experiments] The experimental section would benefit from an ablation on the number of proximal iterations versus transport accuracy and duality gap to quantify the practical impact of early termination.
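The ablation requested in the second minor comment could look roughly like the following on a toy problem. This sketch assumes the quadratic cost and a hypothetical potential $\varphi(x)=\theta\sin x$ with $|\theta|<1$; it tabulates the fixed-point residual and the overestimate of the c-transform value against the iteration count. It says nothing about the paper's actual experiments.

```python
import numpy as np

y, theta = 0.7, 0.4  # hypothetical values; |theta| < 1 makes the map a contraction

def objective(x):
    # Inner objective whose minimum over x defines the c-transform value.
    return 0.5 * (x - y) ** 2 - theta * np.sin(x)

# Reference solution from a long run of the fixed-point iteration.
x_ref = y
for _ in range(500):
    x_ref = y + theta * np.cos(x_ref)

rows = []
x = y  # start the iteration at the query point
for k in range(1, 13):
    x = y + theta * np.cos(x)
    residual = abs(y + theta * np.cos(x) - x)    # fixed-point residual after k steps
    value_gap = objective(x) - objective(x_ref)  # overestimate of the c-transform value
    rows.append((k, residual, value_gap))

for k, residual, value_gap in rows:
    print(f"k={k:2d}  residual={residual:.2e}  value_gap={value_gap:.2e}")
```

In this setting the residual shrinks geometrically and the value gap stays nonnegative up to rounding, matching the expectation that a truncated solve overestimates the c-transform.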

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed comments on our manuscript. We address each major comment point by point below, providing clarifications and committing to revisions that strengthen the theoretical foundations and practical applicability of the work.

- Referee: [§3] (proximal fixed-point construction): the claim that dual feasibility is enforced exactly relies on the proximal optimality conditions, but with finite iterations the residual error means the computed potential is only approximately c-concave; the dual objective is then no longer guaranteed to be a valid lower bound. A quantitative bound on the duality gap as a function of iteration count is needed.

  Authors: We agree that a finite number of proximal iterations yields an approximately c-concave potential and thus only an approximate lower bound on the dual objective. The proximal optimality condition enforces exact feasibility only in the limit. In the revised manuscript we will derive and insert a quantitative bound on the duality gap that exploits the contraction property of the proximal mapping for the c-transform; the gap decreases exponentially in the iteration count. This bound will be stated in §3 and validated numerically in the experiments.

  Revision: yes

- Referee: [§4] (gradient computation without implicit differentiation): the shortcut that avoids differentiating through the fixed-point iterations appears to omit the implicit dependence of the solution on network parameters. Without a derivation showing that the resulting direction is still the true gradient of the dual objective (or an analysis of the bias), it is unclear whether SGD converges to a stationary point of the original OT problem.

  Authors: The gradient shortcut follows from the envelope theorem applied at the proximal fixed point: once the optimality condition is satisfied, the implicit dependence on the parameters cancels and the gradient of the dual objective reduces to an explicit expression that does not require differentiating through the iterations. We will add a self-contained derivation (including the precise statement of the envelope theorem used) to the appendix, confirming that the computed direction is unbiased for the Kantorovich dual and that SGD therefore targets its stationary points.

  Revision: yes

- Referee: [Theorem on SGD convergence] The stated convergence result assumes exact fixed-point solutions at each step. The proof must be extended (or an additional assumption stated) to cover the approximation error from early termination of the proximal iterations; otherwise the theorem does not apply to the implemented algorithm.

  Authors: The current theorem statement assumes exact fixed-point solutions. We will revise the theorem to incorporate a bounded residual assumption (the proximal iteration is terminated when the residual is at most ε) and extend the proof to show that the convergence guarantee continues to hold with an additive O(ε) term in the final bound. The revised statement and proof will appear in the main text and appendix, respectively, making the result directly applicable to the finite-iteration algorithm used throughout the paper.

  Revision: yes
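The bounded-residual stopping rule proposed in the last response can be sketched on a toy problem. The example below assumes the quadratic cost and a hypothetical potential $\varphi_\theta(x)=\theta\sin x$ with $|\theta|<1$; it stops the iteration once the residual is at most ε and compares the resulting error in the envelope-style gradient $-\sin(x)$ with the standard contraction estimate ε/(1 − |θ|). It illustrates only this one ingredient, not the revised theorem or its proof.

```python
import numpy as np

def solve_to_tolerance(y, theta, eps, max_iter=1000):
    # Stop once the fixed-point residual |T(x) - x| is at most eps,
    # where T(x) = y + theta * cos(x). Returns the iterate and the step count.
    x = float(y)
    for k in range(max_iter):
        x_next = y + theta * np.cos(x)
        if abs(x_next - x) <= eps:
            return x, k
        x = x_next
    return x, max_iter

y, theta = 0.7, 0.4  # hypothetical values with |theta| < 1
x_exact, _ = solve_to_tolerance(y, theta, eps=1e-15)  # near-exact reference

results = []
for eps in (1e-1, 1e-2, 1e-3, 1e-4):
    x_eps, n_steps = solve_to_tolerance(y, theta, eps)
    # Error in the envelope-style gradient -sin(x) caused by stopping early.
    grad_error = abs(np.sin(x_eps) - np.sin(x_exact))
    # Contraction estimate: |x - x*| <= residual / (1 - q), with q = |theta|.
    bound = eps / (1.0 - abs(theta))
    results.append((eps, n_steps, grad_error, bound))
```

In this toy case the gradient error scales linearly with ε, which is the behavior an additive O(ε) term would need; whether the same holds for a neural potential depends on contraction and smoothness properties that the text above does not specify.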

Circularity Check

No significant circularity; derivation introduces independent proximal fixed-point construction

The paper's core contribution parameterizes a single potential in the Kantorovich dual and recasts the c-transform as a proximal fixed-point problem whose optimality conditions are asserted to enforce dual feasibility. This reformulation is presented as a novel modeling choice rather than a re-derivation of prior fitted quantities or self-cited results. No load-bearing step reduces by construction to an input parameter, a self-citation chain, or a renamed empirical pattern; the gradient shortcut and SGD convergence claims are derived from the fixed-point properties without definitional equivalence to the network outputs. The framework remains self-contained against external OT benchmarks.

Axiom & Free-Parameter Ledger

Axioms (2)

- [standard math] Kantorovich duality applies to the optimal transport problem under consideration.

- [domain assumption] The c-transform can be reformulated as a proximal fixed-point problem whose solution satisfies dual feasibility exactly.
