Recognition: no theorem link

Forecast-aware Gaussian Splatting for Predictive 3D Representation in Language-Guided Pick-and-Place Manipulation

Pith reviewed 2026-05-13 02:19 UTC · model grok-4.3

The pith

Forecasting task-completed 3D states with Gaussian Splatting enables better action selection for language-guided robotic pick-and-place.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

By building a predictive 3D representation of the task-completed state, Forecast-GS allows robots to evaluate candidate actions for feasibility and consistency with language instructions under partial observations.

What carries the argument

Forecast-aware Gaussian Splatting (Forecast-GS), which generates a forecasted 3D model of the scene once the task is complete to support action ranking and selection.

Load-bearing premise

The forecast of the task-completed 3D state can be reliably used to determine if a candidate action will produce a feasible and task-consistent result despite incomplete observations.

What would settle it

An experiment showing that actions chosen by the forecast method frequently fail to produce the predicted final 3D state, or that the method rejects actions that would actually succeed.

Figures

read the original abstract

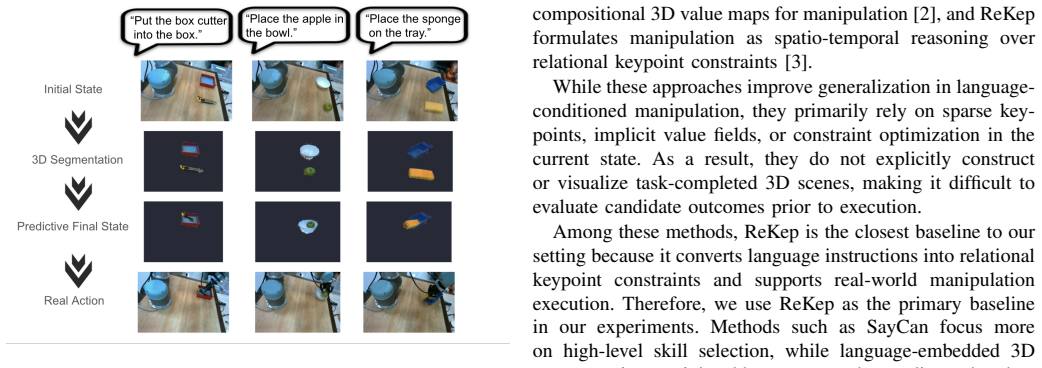

We introduce Forecast-aware Gaussian Splatting (Forecast-GS), a predictive 3D representation framework for language-conditioned robotic manipulation. While recent manipulation systems have made progress by grounding language instructions into robot affordances, value maps, or relational keypoint constraints, they usually reason over the current scene and do not explicitly model the task-completed state. This limitation is critical when success depends on satisfying spatial and semantic goals under partial observations, where the robot must evaluate whether a candidate action leads to a feasible task-consistent outcome. We validate Forecast-GS on real-world pick-and-place manipulation tasks, including Cutter-to-Box, Apple-to-Bowl, and Sponge-to-Tray. For each task, we conduct 25 real-world trials under varied initial object configurations using the same robot platform and sensing setup. Forecast-GS with automatic candidate selection achieves success rates of 21/25, 23/25, and 16/25 on the three tasks, respectively, outperforming the ReKep baseline, which achieves 15/25, 19/25, and 10/25. A diagnostic human-assisted setting further improves success rates to 23/25, 24/25, and 19/25, suggesting that candidate generation is effective while automatic ranking remains imperfect. These results suggest that explicitly forecasting task-completed 3D states enables more reliable action evaluation, while the gap between automatic and human-assisted selection indicates that robust final-state ranking remains an important challenge for fully autonomous manipulation. Overall, Forecast-GS provides an interpretable bridge between language understanding, 3D perception, and robotic manipulation planning.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces Forecast-aware Gaussian Splatting (Forecast-GS), a predictive 3D representation that explicitly forecasts task-completed states to improve action evaluation in language-guided pick-and-place manipulation under partial observations. It contrasts this with current-scene methods like ReKep and validates the approach via 25 real-world trials per task on Cutter-to-Box, Apple-to-Bowl, and Sponge-to-Tray, reporting success rates of 21/25, 23/25, and 16/25 (vs. baseline 15/25, 19/25, 10/25), with human-assisted selection reaching 23/25, 24/25, 19/25.

Significance. If the forecasting mechanism is robust and generalizable, Forecast-GS could provide a useful bridge between language grounding, 3D perception, and planning by enabling explicit goal-state prediction, which is particularly relevant for manipulation tasks where success depends on spatial-semantic consistency. The real-world trial results with a fixed robot platform and sensing setup offer concrete evidence of improved success rates over a named baseline, and the diagnostic gap to human-assisted selection usefully isolates candidate generation as effective while highlighting ranking as a remaining challenge.

major comments (2)

- [Experimental validation] The reported success rates (e.g., 21/25 vs. 15/25) lack accompanying statistical tests, confidence intervals, or error analysis on the forecast predictions themselves, which is load-bearing for confidently attributing the gains to Forecast-GS rather than trial variability or implementation specifics.

- [Abstract and Methods] The abstract and validation sections provide no details on the method for generating forecasts or adapting Gaussian Splatting for predictive task-completed states, leaving the core technical mechanism insufficiently specified to evaluate reproducibility or novelty.

minor comments (2)

- [null] Clarify the exact criteria used for automatic candidate selection and ranking in the Forecast-GS pipeline, as this directly affects interpretation of the automatic vs. human-assisted gap.

- [Abstract] The abstract could explicitly note the total number of trials (75) and the fixed sensing/robot setup earlier for improved readability.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback and the recommendation of minor revision. We address each major comment below and will incorporate revisions to strengthen the manuscript.

read point-by-point responses

-

Referee: [Experimental validation] The reported success rates (e.g., 21/25 vs. 15/25) lack accompanying statistical tests, confidence intervals, or error analysis on the forecast predictions themselves, which is load-bearing for confidently attributing the gains to Forecast-GS rather than trial variability or implementation specifics.

Authors: We agree that statistical support would strengthen the attribution of performance gains. In the revised version, we will add binomial confidence intervals for all reported success rates and apply McNemar's test to evaluate the statistical significance of differences versus the ReKep baseline. For error analysis on the forecasts, we will include a new subsection with qualitative examples of predicted versus actual task-completed states and quantitative metrics (e.g., Chamfer distance on reconstructed point clouds) to better isolate the forecasting contribution from other factors. revision: yes

-

Referee: [Abstract and Methods] The abstract and validation sections provide no details on the method for generating forecasts or adapting Gaussian Splatting for predictive task-completed states, leaving the core technical mechanism insufficiently specified to evaluate reproducibility or novelty.

Authors: The core technical details on forecast generation (language-conditioned future-state prediction via a learned dynamics model) and the adaptation of Gaussian Splatting (optimizing splats to represent both current and forecasted states with temporal consistency losses) are fully specified in Section 3 of the manuscript. However, we acknowledge that the abstract and validation sections could better highlight these elements for accessibility. We will revise the abstract to include a concise description of the forecasting mechanism and add explicit cross-references in the validation section to the relevant methodological components, improving reproducibility without changing the technical content. revision: partial

Circularity Check

No significant circularity in derivation chain

full rationale

The paper presents Forecast-GS as a new predictive 3D representation method for language-guided manipulation and supports its claims exclusively through empirical real-world trials (success rates of 21/25, 23/25, 16/25 vs. baseline 15/25, 19/25, 10/25). No equations, first-principles derivations, or predictive steps are described that reduce by construction to fitted parameters, self-definitions, or self-citation chains. The central argument rests on external performance comparisons under partial observations, which remain falsifiable and independent of the method's internal formulation.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

-

[1]

Do As I Can, Not As I Say: Grounding Language in Robotic Affordances

M. Ahn, A. Brohan, N. Brown, Y . Chebotar, O. Cortes, B. David, C. Finn, C. Fu, K. Gopalakrishnan, K. Hausmanet al., “Do as i can, not as i say: Grounding language in robotic affordances,” arXiv preprint arXiv:2204.01691, 2022. [Online]. Available: https: //arxiv.org/abs/2204.01691

work page internal anchor Pith review Pith/arXiv arXiv 2022

-

[2]

V oxposer: Composable 3d value maps for robotic manipulation with language models,

W. Huang, C. Wang, R. Zhang, Y . Li, J. Wu, and L. Fei-Fei, “V oxposer: Composable 3d value maps for robotic manipulation with language models,” inProceedings of the 7th Conference on Robot Learning (CoRL), 2023

work page 2023

-

[3]

W. Huang, C. Wang, Y . Li, R. Zhang, and L. Fei-Fei, “Rekep: Spatio- temporal reasoning of relational keypoint constraints for robotic manip- ulation,”arXiv preprint arXiv:2409.01652, 2024

-

[4]

Nerf: Representing scenes as neural radiance fields for view synthesis,

B. Mildenhall, P. P. Srinivasan, M. Tancik, J. T. Barron, R. Ramamoorthi, and R. Ng, “Nerf: Representing scenes as neural radiance fields for view synthesis,”Communications of the ACM, vol. 65, no. 1, pp. 99–106, 2021

work page 2021

-

[5]

Lerf: Language embedded radiance fields,

J. Kerr, C. M. Kim, K. Goldberg, A. Kanazawa, and M. Tancik, “Lerf: Language embedded radiance fields,” inProceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2023

work page 2023

-

[6]

Distilled feature fields enable few-shot language-guided manipulation,

W. Shen, G. Yang, A. Yu, J. Wong, L. P. Kaelbling, and P. Isola, “Distilled feature fields enable few-shot language-guided manipulation,” inProceedings of the 7th Conference on Robot Learning (CoRL), 2023

work page 2023

-

[7]

Language embedded radiance fields for zero-shot task-oriented grasping,

A. Rashid, S. Sharma, C. M. Kim, J. Kerr, L. Y . Chen, A. Kanazawa, and K. Goldberg, “Language embedded radiance fields for zero-shot task-oriented grasping,” inProceedings of the 7th Conference on Robot Learning (CoRL), 2023

work page 2023

-

[8]

Decomposing nerf for editing via feature field distillation,

S. Kobayashi, E. Matsumoto, and V . Sitzmann, “Decomposing nerf for editing via feature field distillation,” inAdvances in Neural Information Processing Systems, S. Koyejo, S. Mohamed, A. Agarwal, D. Belgrave, K. Cho, and A. Oh, Eds., vol. 35. Curran Associates, Inc., 2022, pp. 23 311–23 330. [Online]. Available: https://proceedings.neurips.cc/paper files...

work page 2022

-

[9]

3d gaussian splatting for real-time radiance field rendering,

B. Kerbl, G. Kopanas, T. Leimk ¨uhler, and G. Drettakis, “3d gaussian splatting for real-time radiance field rendering,”ACM Transactions on Graphics, vol. 42, no. 4, July 2023. [Online]. Available: https://repo-sam.inria.fr/fungraph/3d-gaussian-splatting/

work page 2023

-

[10]

J. Yu, K. Hari, K. Srinivas, K. El-Refai, A. Rashid, C. M. Kim, J. Kerr, R. Cheng, M. Z. Irshad, A. Balakrishna, T. Kollar, and K. Goldberg, “Language-embedded gaussian splats (legs): Incrementally building room-scale representations with a mobile robot,” 2024. [Online]. Available: https://arxiv.org/abs/2409.18108

-

[11]

Feature 3dgs: Supercharging 3d gaussian splatting to enable distilled feature fields,

S. Zhou, H. Chang, S. Jiang, Z. Fan, Z. Zhu, D. Xu, P. Chari, S. You, Z. Wang, and A. Kadambi, “Feature 3dgs: Supercharging 3d gaussian splatting to enable distilled feature fields,” 2024. [Online]. Available: https://arxiv.org/abs/2312.03203

-

[12]

Feature splatting: Language-driven physics-based scene synthesis and editing,

R.-Z. Qiu, G. Yang, W. Zeng, and X. Wang, “Feature splatting: Language-driven physics-based scene synthesis and editing,” 2024. [Online]. Available: https://arxiv.org/abs/2404.01223

-

[13]

Langsplat: 3d language gaussian splatting,

M. Qin, W. Li, J. Zhou, H. Wang, and H. Pfister, “Langsplat: 3d language gaussian splatting,” 2024. [Online]. Available: https: //arxiv.org/abs/2312.16084

-

[14]

Gaussiangrasper: 3d language gaussian splatting for open-vocabulary robotic grasping,

Y . Zheng, X. Chen, Y . Zheng, S. Gu, R. Yang, B. Jin, P. Li, C. Zhong, Z. Wang, L. Liuet al., “Gaussiangrasper: 3d language gaussian splatting for open-vocabulary robotic grasping,”arXiv preprint arXiv:2403.09637, 2024

-

[15]

Detecting twenty-thousand classes using image-level supervision,

X. Zhou, R. Girdhar, A. Joulin, P. Kr ¨ahenb¨uhl, and I. Misra, “Detecting twenty-thousand classes using image-level supervision,” inEuropean conference on computer vision. Springer, 2022, pp. 350–368

work page 2022

-

[16]

DINOv2: Learning Robust Visual Features without Supervision

M. Oquab, T. Darcet, T. Moutakanni, H. V o, M. Szafraniec, V . Khalidov, P. Fernandez, D. Haziza, F. Massa, A. El-Noubyet al., “Dinov2: Learning robust visual features without supervision,”arXiv preprint arXiv:2304.07193, 2023

work page internal anchor Pith review Pith/arXiv arXiv 2023

-

[17]

Learning transferable visual models from natural language supervi- sion,

A. Radford, J. W. Kim, C. Hallacy, A. Ramesh, G. Goh, S. Agarwal, G. Sastry, A. Askell, P. Mishkin, J. Clark, G. Krueger, and I. Sutskever, “Learning transferable visual models from natural language supervi- sion,” inProceedings of the International Conference on Machine Learning (ICML). PMLR, 2021, pp. 8748–8763

work page 2021

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.