A Data-Consistent Approach to Ensemble Filtering

Pith reviewed 2026-05-13 00:51 UTC · model grok-4.3

The pith

A deterministic spectral update on whitened residuals replaces stochastic perturbations in ensemble filters and improves calibration in low-ensemble chaotic systems.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

QPCA-EnDCF is a deterministic ensemble data-consistent filter that whitens the forecast-observation residual covariance, computes its empirical eigendecomposition, retains only the rank-κ principal components selected by a cutoff gap, and maps the resulting increment back to state space through an empirical gain. The analysis separates population and finite-ensemble quantities to obtain a bias-variance decomposition showing that stochastic EnKF variants carry an O(1/N) variance term from observation perturbations while QPCA-EnDCF carries an O(1/N) term from eigenspace estimation that is modulated by the retained rank and gap stability.

What carries the argument

The QPCA-EnDCF update: whitening of the residual covariance, empirical eigendecomposition restricted to a rank-κ subspace chosen by spectral cutoff, followed by mapping through an empirical gain operator.
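The steps named above can be sketched in NumPy for the analysis mean only. This is one illustrative reading, not the paper's algorithm: whitening by a Cholesky factor of the observation-error covariance `R`, the largest-gap rule in `select_rank_by_gap`, the gain formula, and every function and variable name are assumptions introduced here.

```python
import numpy as np


def select_rank_by_gap(eigvals):
    """Hypothetical cutoff rule: keep the components above the largest eigenvalue gap."""
    if eigvals.size == 1:
        return 1
    gaps = eigvals[:-1] - eigvals[1:]      # eigvals must be sorted in descending order
    return int(np.argmax(gaps)) + 1


def qpca_mean_update(X, Y, y_obs, R, kappa=None):
    """Rank-kappa analysis-mean update in whitened observation space (a sketch).

    X: (n, N) forecast ensemble, Y: (m, N) predicted observations,
    y_obs: (m,) observation, R: (m, m) observation-error covariance.
    """
    N = X.shape[1]
    L = np.linalg.cholesky(R)              # R = L L^T, so applying L^{-1} whitens
    Yw = np.linalg.solve(L, Y)
    yw = np.linalg.solve(L, y_obs)
    resid = yw[:, None] - Yw               # whitened forecast-observation residuals

    x_mean = X.mean(axis=1)
    Xa = X - x_mean[:, None]
    Ya = Yw - Yw.mean(axis=1, keepdims=True)
    # yw is constant across members, so the residual covariance equals cov(Yw).
    C_rr = Ya @ Ya.T / (N - 1)
    C_xr = Xa @ Ya.T / (N - 1)             # state / whitened-observation cross-covariance

    eigvals, eigvecs = np.linalg.eigh(C_rr)
    order = np.argsort(eigvals)[::-1]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    if kappa is None:
        kappa = select_rank_by_gap(eigvals)
    U, lam = eigvecs[:, :kappa], eigvals[:kappa]

    # Empirical gain restricted to the retained subspace; after whitening the
    # observation-noise covariance is the identity, hence the "+ 1.0".
    K = (C_xr @ U) / (lam + 1.0) @ U.T
    return x_mean + K @ resid.mean(axis=1), kappa
```

Under these assumptions, passing `kappa` equal to the number of observations reduces the sketch to the usual unperturbed Kalman-type mean update, which gives a convenient sanity check; smaller `kappa` discards the trailing eigen-directions of the whitened residual covariance.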

If this is right

- Stochastic EnKF variants incur an O(1/N) variance contribution from observation perturbations that is irreducible at any fixed ensemble size: it shrinks only as N grows.

- QPCA-EnDCF replaces that term with projector-estimation variability whose magnitude depends explicitly on the retained rank and the cutoff gap.

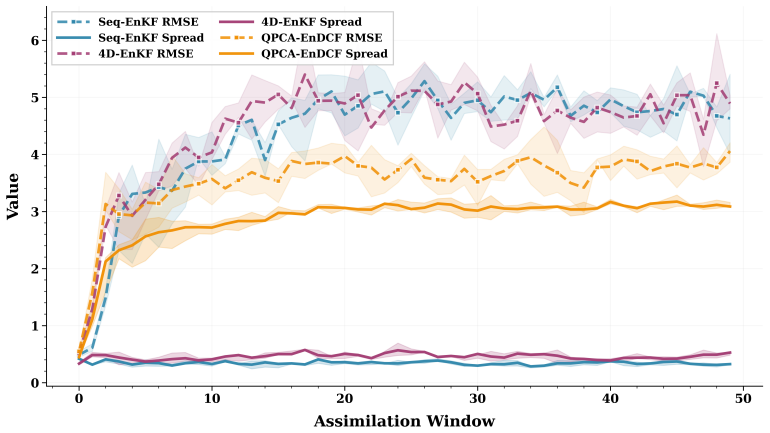

- In strongly undersampled regimes the method yields improved spread-skill behavior and rank-histogram reliability while often lowering RMSE relative to both sequential and four-dimensional stochastic EnKF.

- The bias-variance decomposition supplies an explicit trade-off between retained rank and estimation variability that can guide cutoff selection.
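One standard way to see where a perturbation term of this order comes from is to hold the gain fixed and average the perturbed-observation update over the ensemble. The symbols below ($K$ for the gain, $R$ for the observation-error covariance, $\varepsilon_j$ for independent perturbations that are not recentered) are notation assumed for this sketch, and the paper's own derivation may differ.

```latex
\bar{x}^{a}_{\mathrm{stoch}}
  = \bar{x}^{f} + K\bigl(y + \bar{\varepsilon} - \bar{y}^{f}\bigr),
\qquad
\bar{\varepsilon} = \frac{1}{N}\sum_{j=1}^{N}\varepsilon_j,
\quad \varepsilon_j \sim \mathcal{N}(0, R),
\qquad
\operatorname{Cov}\bigl(K\bar{\varepsilon}\bigr) = \frac{1}{N}\,K R K^{\top}.
```

A deterministic update has no $\bar{\varepsilon}$ term, so this contribution vanishes; per the claims above, what takes its place in QPCA-EnDCF is the variability of the estimated rank-κ projector.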

Where Pith is reading between the lines

- The same whitening-plus-restricted-projection idea could be applied to other ensemble-based data-assimilation schemes that currently rely on perturbed observations.

- Because the method operates entirely in observation space before the empirical gain step, it may extend naturally to nonlinear observation operators provided a suitable linearization or tangent-linear map is available.

- The dependence of performance on the cutoff gap suggests that adaptive gap detection could further stabilize results across changing dynamical regimes.

Load-bearing premise

The empirical eigendecomposition of the whitened residual covariance stays stable under finite-ensemble sampling so that restricting the update to the chosen rank-κ subspace reduces net analysis error without adding unaccounted bias when mapped to state space.

What would settle it

A controlled Lorenz-96 experiment in which the empirical eigenvectors of the whitened residual matrix shift substantially when the ensemble size is doubled, causing the restricted QPCA-EnDCF analysis error to exceed that of the stochastic EnKF baseline.
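A test of this kind needs a Lorenz-96 integrator and a measure of how far the leading eigenspace moves between ensembles of size N and 2N. The following is a minimal sketch of those two pieces. The forcing value 8.0 is a commonly used chaotic setting assumed here, not a configuration confirmed by the paper, and the helper names are hypothetical.

```python
import numpy as np


def lorenz96_rhs(x, forcing=8.0):
    """Lorenz-96 tendency: dx_i/dt = (x_{i+1} - x_{i-2}) * x_{i-1} - x_i + F."""
    return (np.roll(x, -1) - np.roll(x, 2)) * np.roll(x, 1) - x + forcing


def rk4_step(x, dt, forcing=8.0):
    """Advance the state by one classical fourth-order Runge-Kutta step."""
    k1 = lorenz96_rhs(x, forcing)
    k2 = lorenz96_rhs(x + 0.5 * dt * k1, forcing)
    k3 = lorenz96_rhs(x + 0.5 * dt * k2, forcing)
    k4 = lorenz96_rhs(x + dt * k3, forcing)
    return x + dt * (k1 + 2.0 * k2 + 2.0 * k3 + k4) / 6.0


def leading_subspace(residuals, kappa):
    """Top-kappa eigenvectors of the empirical covariance of (m, N) residuals."""
    cov = np.cov(residuals)                # rows are variables, columns are members
    _, vecs = np.linalg.eigh(cov)          # eigenvalues returned in ascending order
    return vecs[:, ::-1][:, :kappa]


def sin_theta_distance(U, V):
    """Frobenius norm of the sines of the principal angles between span(U) and span(V)."""
    kappa = U.shape[1]
    overlap = np.linalg.norm(U.T @ V, "fro") ** 2   # sum of squared cosines
    return float(np.sqrt(max(kappa - overlap, 0.0)))
```

Comparing `leading_subspace` for whitened residuals from an N-member and a 2N-member ensemble with `sin_theta_distance` gives a number between 0 (identical subspaces) and the square root of κ (orthogonal subspaces); a large value alongside a worse analysis error than the stochastic baseline would be the failure signature described above.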

Original abstract

Ensemble filtering of chaotic, partially observed systems is often performed with ensembles far smaller than the state dimension resulting in empirical covariances that are low rank. Subsequently, stochastic observation perturbations can degrade both accuracy and probabilistic calibration. We develop a data-consistent perspective on ensemble filtering and introduce the Quantity-of-Interest Principal Component Analysis Ensemble Data Consistent Filter (QPCA-EnDCF), which is a deterministic method that replaces perturbed observations with a spectrally regularized update in observation space. The method whitens forecast--observation residuals, computes an empirical eigendecomposition of the residual covariance, and restricts the correction to a rank-$\kappa$ subspace before mapping the increment back to state space through an empirical gain. We establish a theoretical framework that separates population and finite-ensemble objects and yields a bias--variance decomposition for the analysis mean. The analysis shows that stochastic EnKF variants incur an irreducible $\mathcal{O}(1/N)$ variance contribution from observation perturbations, whereas QPCA-EnDCF replaces this term with projector-estimation variability that is also $\mathcal{O}(1/N)$ but depends on the retained rank and the cutoff gap through eigenspace stability. Numerical experiments on the Lorenz--96 system in strongly undersampled regimes demonstrate that QPCA-EnDCF substantially improves spread--skill behavior, temporal tracking between spread and error, and rank-histogram reliability relative to sequential and four-dimensional stochastic EnKF. Under the baseline configuration, these calibration gains are accompanied by lower RMSE.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces the Quantity-of-Interest Principal Component Analysis Ensemble Data Consistent Filter (QPCA-EnDCF), a deterministic ensemble filtering method. It whitens forecast-observation residuals, computes their empirical eigendecomposition, restricts the update to a rank-κ subspace selected by spectral gap, and maps the increment back to state space via an empirical gain. A theoretical framework separates population-level and finite-ensemble objects to derive a bias-variance decomposition for the analysis mean, showing that stochastic EnKF incurs irreducible O(1/N) variance from observation perturbations while QPCA-EnDCF replaces this with O(1/N) projector-estimation variability controlled by retained rank and cutoff gap. Numerical experiments on Lorenz-96 in strongly undersampled regimes report improved spread-skill, temporal tracking, rank-histogram reliability, and often lower RMSE relative to sequential and 4D stochastic EnKF.

Significance. If the bias-variance decomposition is rigorous and the rank-κ projector remains stable, the work offers a principled deterministic alternative to stochastic perturbation methods in ensemble data assimilation for high-dimensional systems. The explicit separation of population and finite-ensemble terms, together with the reproducible Lorenz-96 experiments demonstrating calibration gains, provides a concrete advance that could be adopted in operational settings where ensemble size is limited.

major comments (3)

- [§3] §3 (theoretical framework), bias-variance decomposition: the claim that projector-estimation variability is O(1/N) and yields a net reduction requires quantitative control on eigenspace perturbation (e.g., via Davis-Kahan or Wedin bounds applied to the whitened residual covariance); without such bounds the comparison to the irreducible O(1/N) term in stochastic EnKF remains formal rather than guaranteed in undersampled regimes.

- [§4.2] §4.2 (numerical experiments), rank-κ selection: the cutoff-gap rule for choosing κ is load-bearing for the reported calibration improvements, yet no ablation or sensitivity study to the gap threshold or to ensemble fluctuations is presented; if the gap is sensitive, the claimed superiority in rank-histogram reliability and spread-skill may not hold across realizations.

- [§3.3] §3.3 and §4.1, empirical gain mapping: the stability assumption on the empirical eigendecomposition of the whitened residual covariance under finite N is stated but not accompanied by a perturbation analysis or diagnostic; violation of this assumption could introduce unaccounted bias when the rank-κ projector is mapped back to state space, undermining the central data-consistency claim.

minor comments (3)

- [§2] Notation for the whitened residual covariance and the projector P_κ should be introduced with a single consistent symbol set across the theoretical and algorithmic sections to avoid reader confusion.

- [§4] Figure captions for the Lorenz-96 rank histograms and spread-skill plots should explicitly state the ensemble size N, observation density, and the exact value of κ used in the baseline configuration.

- [§2.3] The implementation details for the empirical gain (e.g., regularization or pseudoinverse handling) are only sketched; a short pseudocode or explicit formula would improve reproducibility.

Simulated Author's Rebuttal

We thank the referee for the careful reading and constructive comments. We address each major comment below, indicating planned revisions to strengthen the manuscript.

Referee: [§3] §3 (theoretical framework), bias-variance decomposition: the claim that projector-estimation variability is O(1/N) and yields a net reduction requires quantitative control on eigenspace perturbation (e.g., via Davis-Kahan or Wedin bounds applied to the whitened residual covariance); without such bounds the comparison to the irreducible O(1/N) term in stochastic EnKF remains formal rather than guaranteed in undersampled regimes.

Authors: We agree that the comparison would benefit from explicit quantitative bounds. In the revision we will apply the Davis-Kahan sin θ theorem to the whitened residual covariance, bounding the eigenspace perturbation in terms of the operator-norm deviation (O_p(1/√N)) and the spectral gap at the cutoff. Squaring this bound yields an explicit O(1/N) expression for projector variability controlled by κ and the gap, allowing a sharper statement that the term is smaller than the stochastic EnKF perturbation variance when the gap condition holds. revision: yes
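For reference, a bound of this type is commonly stated in the Yu-Wang-Samworth variant of the Davis-Kahan theorem, written here for the leading κ eigenvectors. The symbols $C$ (population whitened residual covariance), $\hat{C}$ (its ensemble estimate), and $\lambda_\kappa$ (the κ-th largest eigenvalue of $C$) are notation assumed for this sketch; whether the paper's moment conditions deliver the stated rate is not verified here.

```latex
\bigl\|\sin\Theta(\hat{U}_\kappa, U_\kappa)\bigr\|_{F}
  \;\le\;
  \frac{2\sqrt{\kappa}\,\|\hat{C} - C\|_{\mathrm{op}}}{\lambda_\kappa - \lambda_{\kappa+1}},
\qquad
\|\hat{C} - C\|_{\mathrm{op}} = O_p\!\bigl(N^{-1/2}\bigr)
\;\Longrightarrow\;
\bigl\|\sin\Theta(\hat{U}_\kappa, U_\kappa)\bigr\|_{F}^{2}
  = O_p\!\left(\frac{\kappa}{N\,(\lambda_\kappa - \lambda_{\kappa+1})^{2}}\right).
```

The right-hand side makes the dependence on retained rank and cutoff gap explicit: the bound grows with κ and degrades as the gap closes.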

Referee: [§4.2] §4.2 (numerical experiments), rank-κ selection: the cutoff-gap rule for choosing κ is load-bearing for the reported calibration improvements, yet no ablation or sensitivity study to the gap threshold or to ensemble fluctuations is presented; if the gap is sensitive, the claimed superiority in rank-histogram reliability and spread-skill may not hold across realizations.

Authors: The referee is correct that robustness of the gap-based rule requires verification. We will add an ablation study in the revised §4.2 that varies the gap threshold over a range of values and reports RMSE, spread-skill ratio, and rank-histogram uniformity statistics averaged over multiple independent realizations for each ensemble size. This will demonstrate that the reported calibration gains remain stable under moderate changes in the threshold and across ensemble draws. revision: yes
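The calibration statistics named in this response can be computed along the following lines. This is a minimal sketch for a single scalar variable tracked over time; the function names and array layout are assumptions, and ties between ensemble members and the truth are ignored.

```python
import numpy as np


def rank_histogram(ensemble, truth):
    """Counts of the truth's rank within the ensemble at each time.

    ensemble: (T, N) scalar ensemble values, truth: (T,) verifying values.
    Returns N + 1 bin counts; a flat histogram indicates a reliable ensemble.
    """
    n_members = ensemble.shape[1]
    ranks = np.sum(ensemble < truth[:, None], axis=1)   # members below the truth
    return np.bincount(ranks, minlength=n_members + 1)


def spread_skill_ratio(ensemble, truth):
    """RMS ensemble spread divided by the RMSE of the ensemble mean."""
    spread = np.sqrt(np.mean(np.var(ensemble, axis=1, ddof=1)))
    rmse = np.sqrt(np.mean((ensemble.mean(axis=1) - truth) ** 2))
    return spread / rmse
```

A ratio well below 1 points to an overconfident ensemble and a U-shaped histogram tells the same story, so reporting both across gap thresholds and independent realizations would show whether the calibration gains are robust.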

Referee: [§3.3] §3.3 and §4.1, empirical gain mapping: the stability assumption on the empirical eigendecomposition of the whitened residual covariance under finite N is stated but not accompanied by a perturbation analysis or diagnostic; violation of this assumption could introduce unaccounted bias when the rank-κ projector is mapped back to state space, undermining the central data-consistency claim.

Authors: We will expand §3.3 with a brief perturbation argument for the empirical whitened covariance using standard matrix concentration and perturbation results, confirming that the deviation from the population operator is O_p(1/√N) under the moment conditions already used in the bias-variance analysis. In §4.1 we will add diagnostics (observed spectral gaps and condition numbers of the whitened residuals) from the Lorenz-96 runs to support that the stability assumption holds in the reported regimes. revision: partial
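The diagnostics mentioned here could be as simple as the sketch below, which reports the gap at the cutoff and the conditioning of the retained eigenvalues for a given whitened residual covariance. The exact definitions the authors intend are not stated, so the three quantities and the function name are assumptions.

```python
import numpy as np


def spectral_diagnostics(C, kappa):
    """Gap and conditioning of the retained block of a symmetric PSD matrix C."""
    lam = np.linalg.eigvalsh(C)[::-1]                  # descending eigenvalues
    next_lam = lam[kappa] if kappa < lam.size else 0.0
    gap = lam[kappa - 1] - next_lam                    # gap at the rank-kappa cutoff
    return {
        "gap": float(gap),
        "relative_gap": float(gap / lam[0]),
        "retained_condition_number": float(lam[0] / lam[kappa - 1]),
    }
```

Tracking these values over assimilation cycles would show whether the cutoff gap stays well separated from zero in the reported regimes.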

Circularity Check

No circularity: derivation separates population and finite-ensemble objects

The paper establishes a bias-variance decomposition by explicitly distinguishing population-level quantities from their finite-ensemble empirical counterparts. The resulting O(1/N) variance rates are derived without fitting the QPCA-EnDCF parameters (retained rank and cutoff gap), even though the magnitude of the QPCA-EnDCF term depends on them. No claimed prediction reduces to a fitted input by construction, no self-citation supplies a load-bearing uniqueness theorem, and no ansatz is smuggled through prior work. The numerical Lorenz-96 experiments function as external validation of spread-skill and rank-histogram improvements rather than tautological confirmation of the decomposition. The central claims therefore remain self-contained against the stated separation of scales.

Axiom & Free-Parameter Ledger

free parameters (1)

- retained rank κ

axioms (1)

- Domain assumption: forecast-observation residuals admit a stable empirical eigendecomposition whose leading subspace yields a beneficial deterministic correction when mapped back to state space.

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel · unclear

  "The method whitens forecast–observation residuals, computes an empirical eigendecomposition of the residual covariance, and restricts the correction to a rank-κ subspace before mapping the increment back to state space through an empirical gain... bias–variance decomposition for the analysis mean... O(1/N) variance contribution from observation perturbations, whereas QPCA-EnDCF replaces this term with projector-estimation variability that is also O(1/N) but depends on the retained rank and the cutoff gap through eigenspace stability" (Thm 6.1, Def 4.3, Cor 6.1).

- IndisputableMonolith/Foundation/DimensionForcing.lean · alexander_duality_circle_linking · unclear

  "Numerical experiments on the Lorenz–96 system... spread–skill behavior, temporal tracking... rank-histogram reliability"

