Unified Operator Framework for Functional and Multivariate Regression

Pith reviewed 2026-05-13 01:21 UTC · model grok-4.3

The pith

Classical linear regression models, from multivariate multiple regression to function-on-function regression, arise as special cases of one integral operator by varying the input and output measures.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

We develop a unified operator framework for scalar, multivariate, and functional regression based on integral operators defined with respect to general measures. Within this framework, classical regression models, including scalar-on-function, function-on-scalar, function-on-function, and multivariate multiple regression, arise as special cases corresponding to different choices of input and output measures. The standard regression taxonomy can be expressed as a single operator under varying measures. Discrete representations correspond to exact operator evaluations under discrete measures and converge to the continuous operator as the observation grid is refined. Estimation under the discrete-measure formulation reduces to standard multivariate regression, with statistical properties governed by classical results.

What carries the argument

The integral operator defined with respect to general input and output measures, which recovers each regression type as a special case through measure selection.
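The abstract does not write the operator out. One way to make it concrete, where the kernel $\beta$, the spaces $S$ and $T$, and the measures $\mu$ and $\nu$ are assumed notation rather than the paper's own, is:

```latex
% Assumed notation: (S, \mu) is the input measure space, (T, \nu) the output
% measure space, and \beta is a kernel in L^2(\mu \otimes \nu).
(\mathcal{B}x)(t) = \int_S \beta(s,t)\, x(s)\, \mathrm{d}\mu(s), \qquad t \in T .

% Under a discrete input measure \mu_m = \sum_{j=1}^{m} w_j \delta_{s_j}
% the integral is a finite weighted sum:
(\mathcal{B}_m x)(t) = \sum_{j=1}^{m} w_j\, \beta(s_j,t)\, x(s_j) .
```

Read this way, counting measures on $\{1,\dots,p\}$ and $\{1,\dots,q\}$ would give multivariate multiple regression with coefficient matrix $\beta(j,k)$; Lebesgue measure on the input with a point mass on the output would give scalar-on-function regression; a finite input support with Lebesgue measure on the output would give function-on-scalar regression; and Lebesgue measure on both sides would give function-on-function regression.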

If this is right

- Scalar-on-function, function-on-scalar, and function-on-function regressions emerge simply by selecting appropriate input and output measures on the same operator.

- Discretized functional data can be modeled exactly as multivariate regression under a discrete measure, with no loss of information relative to the operator view.

- Statistical guarantees for estimators in the framework follow directly from classical multivariate regression theory.

- The competitive performance of vectorized multivariate regression in linear functional settings is explained as exact recovery of the discrete-measure operator.
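The second and fourth points can be illustrated with a minimal NumPy sketch of scalar-on-function regression on a grid. Everything here is an assumed toy setup (the grid, the equal weights `w`, the coefficient function `beta`, and the simulated curves), not the paper's simulation design.

```python
import numpy as np

rng = np.random.default_rng(0)

# Assumed toy setup: m midpoint grid points on [0, 1] with equal weights.
m, n = 20, 200
s = (np.arange(m) + 0.5) / m          # observation grid
w = np.full(m, 1.0 / m)               # masses of the discrete input measure
beta = np.sin(2 * np.pi * s)          # hypothetical coefficient function

# Each row of X holds one input curve evaluated on the grid.
# (i.i.d. noise is used for simplicity; real curves would be smoother.)
X = rng.normal(size=(n, m))

# Discrete-measure operator evaluation: sum_j w_j * beta(s_j) * x_i(s_j).
y = X @ (w * beta) + 0.01 * rng.normal(size=n)

# Vectorized multivariate regression: ordinary least squares on grid values.
b_hat, *_ = np.linalg.lstsq(X, y, rcond=None)

# The OLS coefficients estimate w_j * beta(s_j); dividing by w_j recovers beta.
beta_hat = b_hat / w
max_err = float(np.max(np.abs(beta_hat - beta)))
print(f"max |beta_hat - beta| on the grid: {max_err:.3f}")
```

In this sketch the regression coefficients and the kernel values differ only by the known weights, which is one way to read the claim that vectorized regression estimates the discrete-measure operator directly.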

Where Pith is reading between the lines

- Software for regression could be unified around a single estimation routine that accepts measure specifications instead of maintaining separate functional and multivariate code paths.

- The same measure-variation principle may apply to nonlinear or nonparametric extensions of regression without requiring entirely new operator theories.

- Connections between functional data analysis and kernel methods become interpretable as different measure choices on related operators.
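As a rough sketch of the first point, a single routine could take the input measure as an argument. The function `fit_operator` and its signature are hypothetical, invented here for illustration, and only the input measure is handled; an output measure would matter only if the loss were weighted by it.

```python
import numpy as np

def fit_operator(X, Y, in_weights):
    """Hypothetical unified routine: least-squares fit of Y ~ (X * in_weights) @ B.

    X: (n, p) inputs on the input support.
    Y: (n, q) outputs on the output support.
    in_weights: (p,) masses of the discrete input measure.
    Returns B, where B[j, k] estimates the kernel value beta(s_j, t_k).
    """
    Xw = X * in_weights                      # absorb the measure into the design
    B, *_ = np.linalg.lstsq(Xw, Y, rcond=None)
    return B

rng = np.random.default_rng(1)
n, p, q = 300, 8, 3
X = rng.normal(size=(n, p))
B_true = rng.normal(size=(p, q))             # assumed ground-truth kernel values

# Multivariate multiple regression: counting measure, every weight equals 1.
Y_mv = X @ B_true
B_mv = fit_operator(X, Y_mv, np.ones(p))

# Function-on-function regression on a grid: equal quadrature weights 1/p.
w = np.full(p, 1.0 / p)
Y_ff = (X * w) @ B_true
B_ff = fit_operator(X, Y_ff, w)

print(np.allclose(B_mv, B_true), np.allclose(B_ff, B_true))
```

The two calls differ only in the weight vector, which is the design idea the bullet describes; the data are noise-free so that recovery can be checked directly.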

Load-bearing premise

That discrete representations correspond to exact operator evaluations under discrete measures and converge to the continuous operator as the observation grid is refined, without additional regularity conditions that would break the equivalence for typical functional data.

What would settle it

A dataset or simulation in which the fitted operator from vectorized multivariate regression on discretized functional observations deviates systematically from the operator obtained by direct functional estimation on the same data, even as the grid is refined.
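One ingredient of such a check is to track how far the discrete-measure evaluation sits from the continuous integral as the grid is refined. The sketch below does this for a single smooth pair; the functions `beta` and `x`, the midpoint grid, and the helper `discrete_operator` are illustrative assumptions, and a full test would compare fitted operators rather than known ones.

```python
import numpy as np

def discrete_operator(beta, x, m):
    """Evaluate sum_j w_j * beta(s_j) * x(s_j) on a midpoint grid of size m."""
    s = (np.arange(m) + 0.5) / m
    w = np.full(m, 1.0 / m)
    return float(np.sum(w * beta(s) * x(s)))

beta = lambda s: s          # hypothetical smooth coefficient function
x = lambda s: s ** 2        # hypothetical smooth input curve
exact = 0.25                # integral of s * s**2 over [0, 1]

grid_sizes = [5, 20, 80]
errors = [abs(discrete_operator(beta, x, m) - exact) for m in grid_sizes]
for m, e in zip(grid_sizes, errors):
    print(f"m={m:3d}  |discrete - continuous| = {e:.2e}")
```

A systematic gap that failed to shrink with `m` in an analogous experiment on estimated operators would be the kind of evidence described above.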

Original abstract

We develop a unified operator framework for scalar, multivariate, and functional regression based on integral operators defined with respect to general measures. Within this framework, classical regression models, including scalar-on-function, function-on-scalar, function-on-function, and multivariate multiple regression, arise as special cases corresponding to different choices of input and output measures. We establish three main results. First, we show that the standard regression taxonomy can be expressed as a single operator under varying measures. Second, we demonstrate that discrete representations correspond to exact operator evaluations under discrete measures and converge to the continuous operator as the observation grid is refined. Third, we show that estimation under the discrete-measure formulation reduces to standard multivariate regression, with statistical properties governed by classical results. A simulation study illustrates these principles, highlighting the roles of discretization, conditioning, and estimation. Overall, the proposed framework clarifies the relationship between functional and multivariate regression and provides a meaningful interpretation of discretized modeling approaches as operator estimation under different measure specifications. This perspective also explains why vectorized multivariate regression is often competitive with functional methods in linear settings: it directly estimates the discrete-measure representation of the underlying operator.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

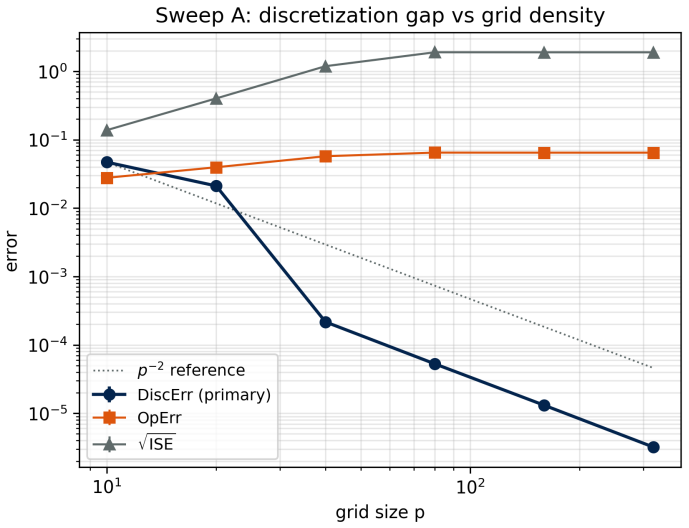

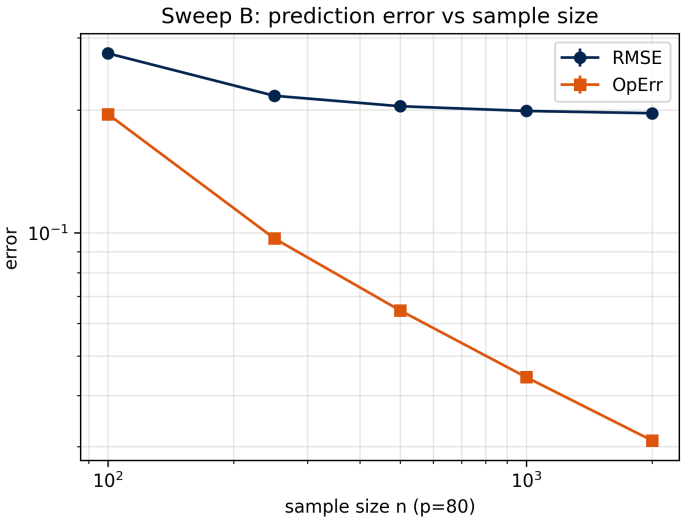

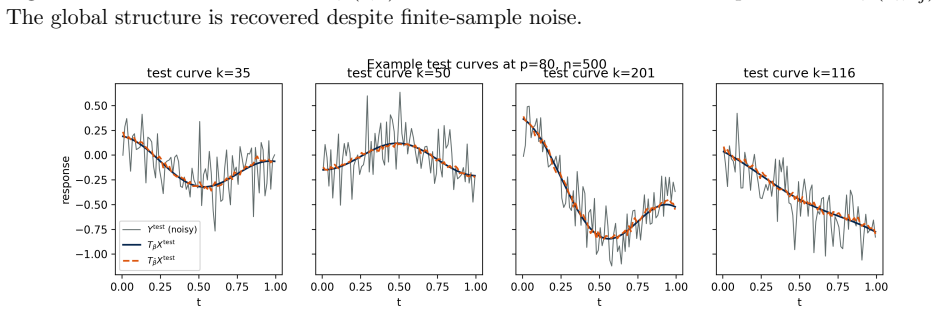

Summary. The paper develops a unified operator framework for scalar, multivariate, and functional regression using integral operators defined with respect to general measures. Classical models (scalar-on-function, function-on-scalar, function-on-function, multivariate multiple regression) arise as special cases by varying input/output measures. Three main results are claimed: (1) the standard regression taxonomy is expressible as a single operator under different measures; (2) discrete representations are exact operator evaluations under discrete measures and converge to the continuous operator as the observation grid is refined; (3) estimation under the discrete-measure formulation reduces to standard multivariate regression whose statistical properties follow from classical results. A simulation study illustrates discretization, conditioning, and estimation effects.

Significance. If the convergence and reduction results hold under appropriate conditions, the framework offers a coherent theoretical bridge between functional data analysis and multivariate regression, explaining the competitive performance of vectorized approaches in linear settings as direct estimation of the discrete-measure operator. It provides a measure-theoretic interpretation of discretization that could inform modeling choices across both literatures.

major comments (1)

- [statement of the second main result (abstract and corresponding section)] The second main result (discrete representations correspond to exact operator evaluations under discrete measures and converge to the continuous operator as the grid is refined) is load-bearing for the third result on classical statistical properties. The abstract states this convergence without specifying regularity conditions on the functions or measures (e.g., sample-path continuity, domination to interchange limit and integral, or membership in a specific function space such as L2). Typical functional data can violate such conditions, so the claimed equivalence and the reduction to multivariate regression may fail for non-smooth cases; the manuscript must either state the precise conditions under which the limit holds or demonstrate robustness under weaker assumptions.
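A toy case shows why some integrability condition is needed for the limit. It is chosen purely for illustration and is not taken from the manuscript: with `beta(s) = x(s) = s**-0.5` on (0, 1], both functions are integrable but not square-integrable, so the continuous integral of their product diverges.

```python
import numpy as np

def discrete_integral(m):
    """Midpoint-grid sum of beta(s) * x(s) with beta(s) = x(s) = s**-0.5."""
    s = (np.arange(m) + 0.5) / m
    w = np.full(m, 1.0 / m)
    f = s ** -0.5            # integrable on (0, 1] but not in L^2
    return float(np.sum(w * f * f))

values = [discrete_integral(m) for m in (10, 100, 1000, 10000)]
for m, v in zip((10, 100, 1000, 10000), values):
    print(f"m={m:6d}  discrete sum = {v:.3f}")
```

Each grid sum is still a well-defined evaluation under its discrete measure, but the sums grow roughly like log m instead of settling, so there is no continuous operator value for them to converge to.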

Simulated Author's Rebuttal

We thank the referee for the careful review and the valuable suggestion to clarify the regularity conditions supporting the convergence result. We address the major comment below and will revise the manuscript accordingly.

Point-by-point responses

Referee: [statement of the second main result (abstract and corresponding section)] The second main result (discrete representations correspond to exact operator evaluations under discrete measures and converge to the continuous operator as the grid is refined) is load-bearing for the third result on classical statistical properties. The abstract states this convergence without specifying regularity conditions on the functions or measures (e.g., sample-path continuity, domination to interchange limit and integral, or membership in a specific function space such as L2). Typical functional data can violate such conditions, so the claimed equivalence and the reduction to multivariate regression may fail for non-smooth cases; the manuscript must either state the precise conditions under which the limit holds or demonstrate robustness under weaker assumptions.

Authors: We agree that the conditions for convergence should be stated explicitly. The framework is developed in the L^2 setting with respect to the input and output measures, which are taken to be finite (typically probability measures). Under these assumptions, the discrete-measure operator is the exact evaluation of the integral operator, and the convergence to the continuous operator holds in the operator norm as the discrete measure converges weakly to the continuous measure and the grid is refined. We will revise the abstract and the relevant section to state these conditions clearly. We will also add a brief discussion noting that, for data with lower regularity, the convergence may hold in a weaker topology or after suitable smoothing, while the reduction to multivariate regression remains valid at the discrete level regardless. This revision addresses the concern directly.

Revision: yes

Circularity Check

No circularity: framework re-expresses known models as operator special cases without self-referential definitions or fitted predictions

Full rationale

The paper's three main results are presented as demonstrations that standard regression models arise as special cases of a single integral operator under different input/output measures, that discrete representations match exact evaluations under discrete measures, and that estimation then reduces to classical multivariate regression. No equations or steps in the provided abstract or description reduce any claimed result to a fitted parameter, self-definition, or self-citation chain by construction. The discrete-to-continuous convergence is asserted as a property of the framework rather than presupposed in its definition. The derivation chain is therefore self-contained as a re-expression and unification, with statistical properties imported from external classical results rather than generated internally.

Axiom & Free-Parameter Ledger

axioms (2)

- standard math: Integral operators are well-defined with respect to general (including discrete) measures on the input and output spaces.

- domain assumption: The regression map is a bounded linear operator between the appropriate L^2 spaces.
