
Hyperbolic Latent Space Models for Network Embedding: Model Specification and Bayesian Inference

Pith reviewed 2026-05-13 01:17 UTC · model grok-4.3

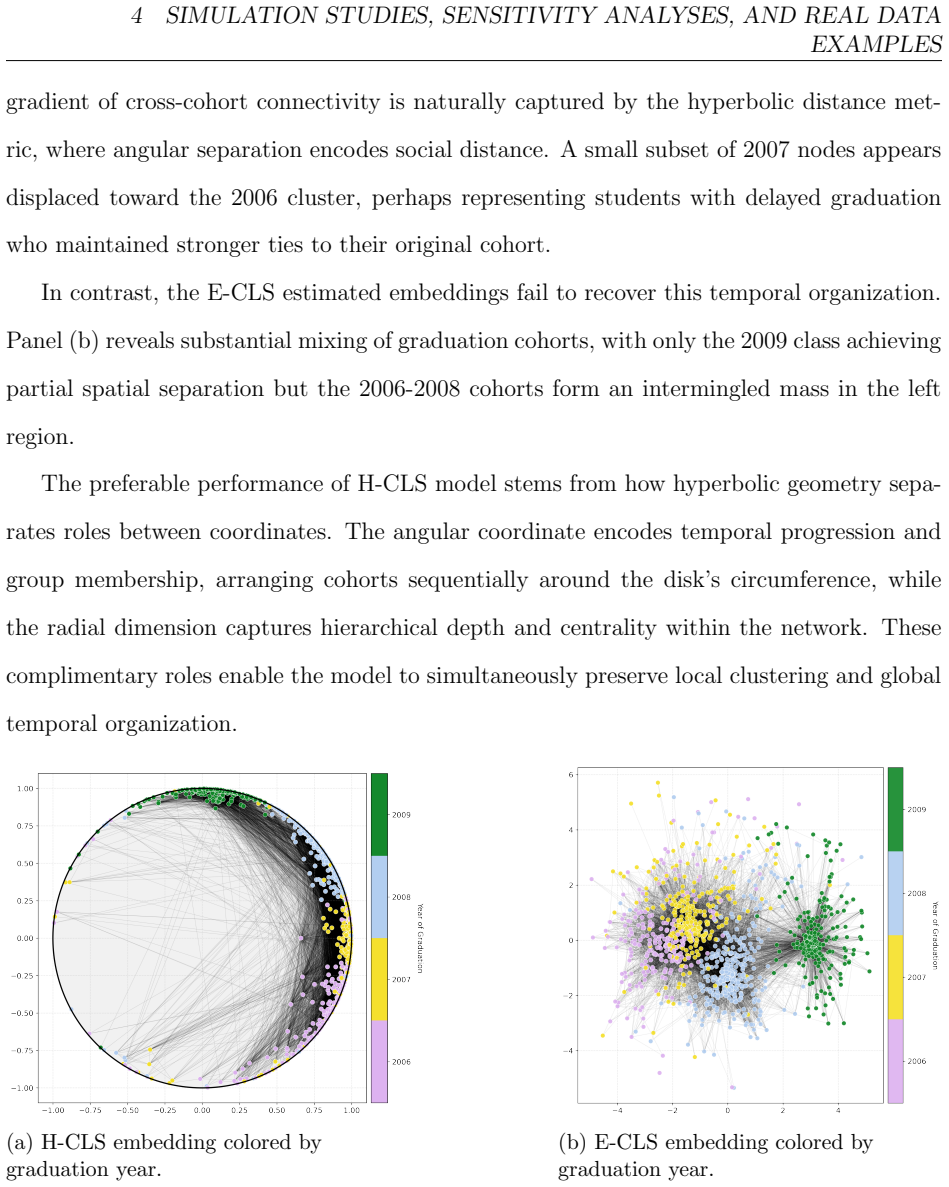

The pith

Inferring the temperature parameter in hyperbolic latent space models captures a network's tree-like topology better than fixing it in advance.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

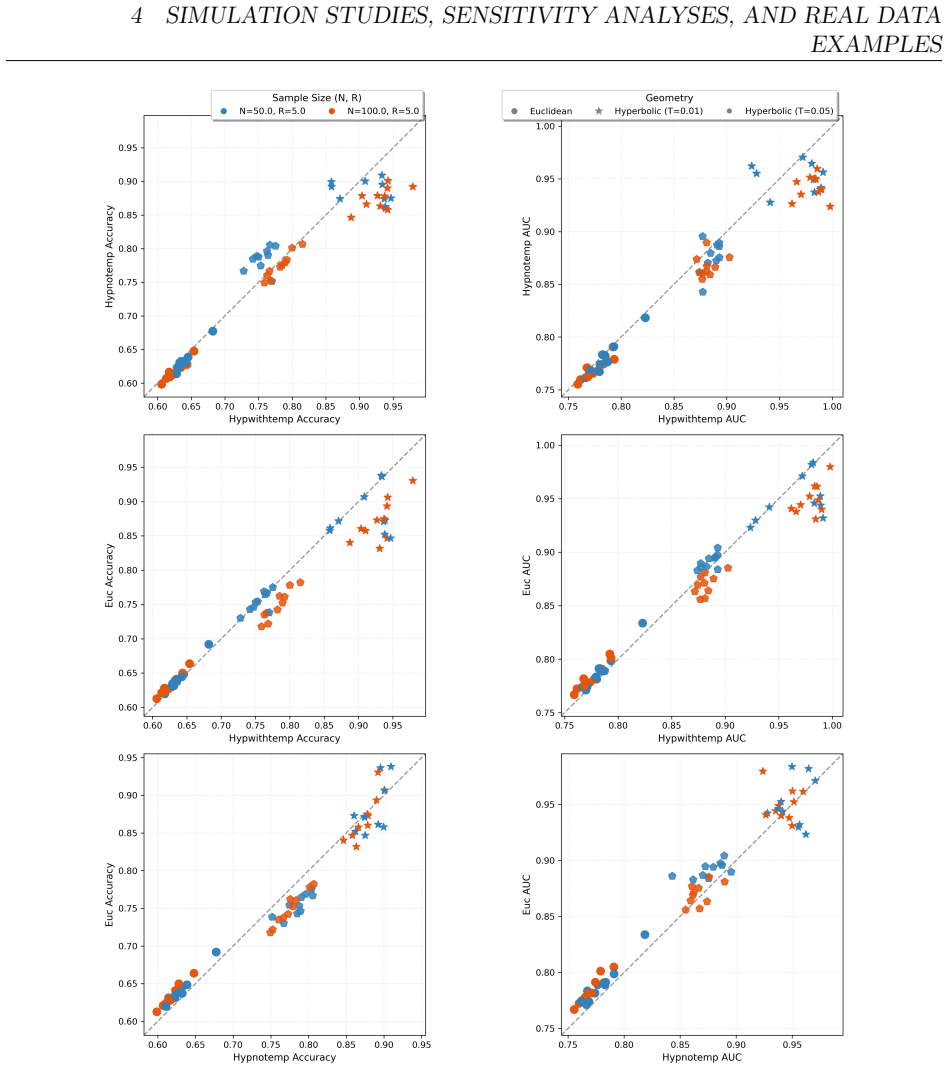

The authors claim that temperature is the fundamental parameter governing a network's tree-like topology, because it directly modulates the distance-to-probability mapping in the hyperbolic latent space. They argue that treating temperature as a free parameter to be inferred, rather than fixing it in advance, restores model expressiveness. They formalize the full Bayesian model and supply two inference procedures: Hamiltonian Monte Carlo for rigorous posterior characterization and a scalable auto-encoding variational Bayes (AEVB) algorithm. They report improved graph-reconstruction performance in most settings when temperature is learned from the observed edges.

What carries the argument

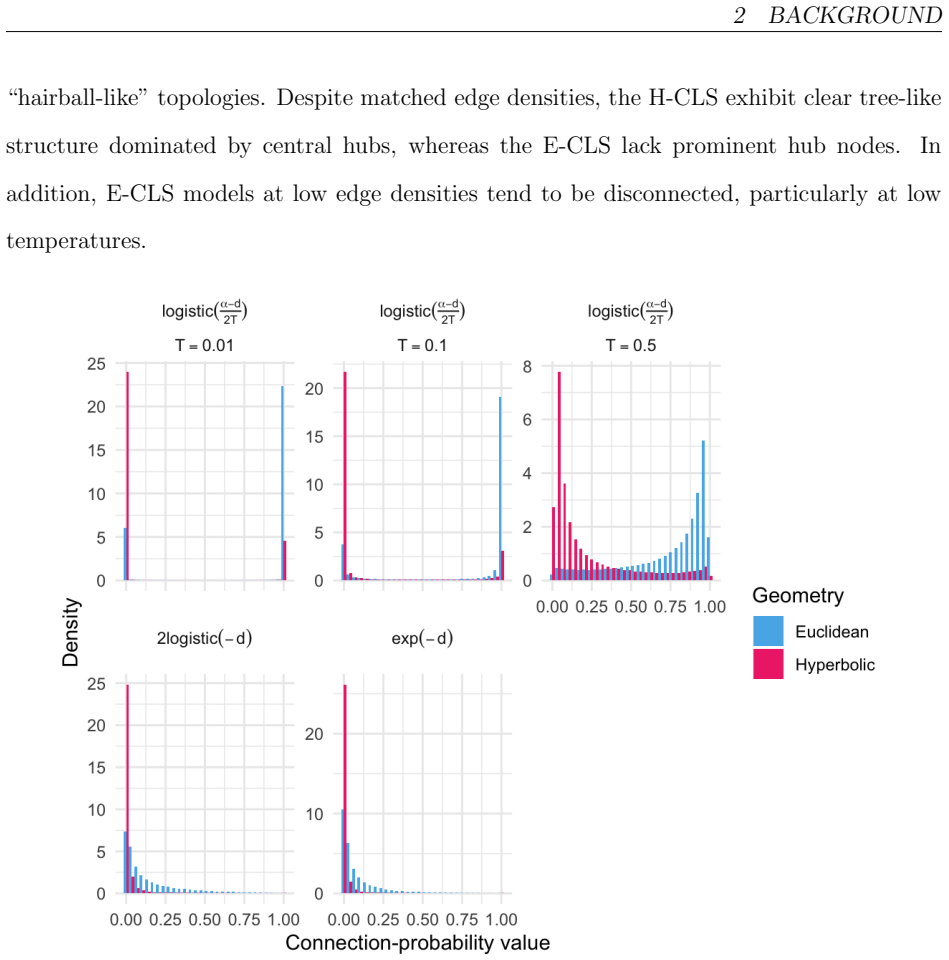

The temperature-modulated mapping from hyperbolic distance to link probability, which sharpens toward tree structure at low temperature and flattens at high temperature.
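The sketch below makes this mapping concrete. It assumes native polar coordinates in the hyperbolic plane with curvature -1 and the Fermi-Dirac form p = [1 + exp((d - R)/T)]^{-1} used in the referee report. The function names are illustrative and do not come from the paper. At d = R the probability is 1/2 for every T. Lowering T pushes pairs with d < R toward certain links and pairs with d > R toward certain non-links.

```python
import math

def hyperbolic_distance(r_i, theta_i, r_j, theta_j):
    """Distance between two points of the hyperbolic plane (curvature -1),
    given in native polar coordinates (radius r, angle theta)."""
    arg = (math.cosh(r_i) * math.cosh(r_j)
           - math.sinh(r_i) * math.sinh(r_j) * math.cos(theta_i - theta_j))
    return math.acosh(max(arg, 1.0))  # clamp: rounding can push arg below 1

def link_probability(d, R, T):
    """Assumed Fermi-Dirac link p = 1 / (1 + exp((d - R) / T)),
    written so that exp() cannot overflow when T is small."""
    z = (d - R) / T
    if z >= 0:
        e = math.exp(-z)
        return e / (1.0 + e)
    return 1.0 / (1.0 + math.exp(z))

R = 10.0
for T in (0.1, 0.5, 1.0):
    probs = [round(link_probability(d, R, T), 3) for d in (8.0, 10.0, 12.0)]
    print(f"T={T}: p(d=8, 10, 12) = {probs}")
```

With these settings, T = 1.0 gives roughly [0.881, 0.5, 0.119] and T = 0.1 gives [1.0, 0.5, 0.0]. The low-temperature curve is close to a hard distance threshold.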

If this is right

- Models that fix temperature in advance lose the ability to adapt to networks with different hierarchy depths.

- Bayesian posterior inference on temperature and embeddings supplies uncertainty quantification missing from point-estimate approaches.

- The variational Bayes procedure scales the model to large networks while still learning temperature.

- Graph reconstruction accuracy improves in most tested settings once temperature is allowed to vary.

Where Pith is reading between the lines

- Inferred temperatures could be compared across domains to test whether hierarchy level is a stable network property.

- The framework might be extended to time-varying networks to track changes in inferred temperature as hierarchy evolves.

- Temperature estimates could serve as a diagnostic for how well any given network fits the hyperbolic generative assumption.

Load-bearing premise

That the observed networks are generated by hyperbolic geometry with a single global temperature that the proposed inference procedures can recover reliably from finite edge data.

What would settle it

Generate synthetic networks from the model at a known temperature, then check whether the inference procedures recover that temperature value within posterior uncertainty; failure would undermine the claim that temperature is identifiable and central.
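A stripped-down version of this check can be sketched as follows. It is not the paper's procedure, and it rests on several assumptions:

- the Fermi-Dirac link used in the referee report;
- nodes placed uniformly in a hyperbolic disk of radius R = 2 ln n, a common convention;
- latent positions and R treated as known;
- a grid-search maximum-likelihood estimate of T in place of HMC or AEVB.

It therefore only asks whether T is recoverable once the geometry is given. That is a weaker question than joint recovery of T, R and the embedding. The names `sample_positions`, `pairwise_distances` and `log_likelihood` are hypothetical.

```python
import numpy as np

def sample_positions(n, R, rng, alpha=1.0):
    """Radii with density proportional to sinh(alpha * r) on [0, R] (uniform in
    the hyperbolic disk when alpha = 1), sampled by inverse CDF; angles uniform."""
    u = rng.uniform(size=n)
    r = np.arccosh(1.0 + u * (np.cosh(alpha * R) - 1.0)) / alpha
    theta = rng.uniform(0.0, 2.0 * np.pi, size=n)
    return r, theta

def pairwise_distances(r, theta):
    """Hyperbolic distances (curvature -1) for all pairs i < j."""
    i, j = np.triu_indices(len(r), k=1)
    arg = (np.cosh(r[i]) * np.cosh(r[j])
           - np.sinh(r[i]) * np.sinh(r[j]) * np.cos(theta[i] - theta[j]))
    return np.arccosh(np.maximum(arg, 1.0))

def log_likelihood(T, d, y, R):
    """Bernoulli log-likelihood with p = 1 / (1 + exp(z)), z = (d - R) / T."""
    z = (d - R) / T
    # log p = -log(1 + e^z) and log(1 - p) = -log(1 + e^-z)
    return np.sum(-y * np.logaddexp(0.0, z) - (1.0 - y) * np.logaddexp(0.0, -z))

rng = np.random.default_rng(0)
n, T_true = 200, 0.5
R = 2.0 * np.log(n)
r, theta = sample_positions(n, R, rng)
d = pairwise_distances(r, theta)
p = 1.0 / (1.0 + np.exp((d - R) / T_true))
y = (rng.uniform(size=d.shape) < p).astype(float)   # simulated adjacency (upper triangle)

grid = np.linspace(0.05, 2.0, 196)                  # candidate temperatures, step 0.01
ll = np.array([log_likelihood(T, d, y, R) for T in grid])
T_hat = grid[np.argmax(ll)]
print(f"true T = {T_true}, grid MLE T = {T_hat:.2f}")
```

A fuller version of the test would repeat this over many seeds and temperatures. It would also replace the grid search with the paper's samplers and check interval coverage rather than a point estimate.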


Original abstract

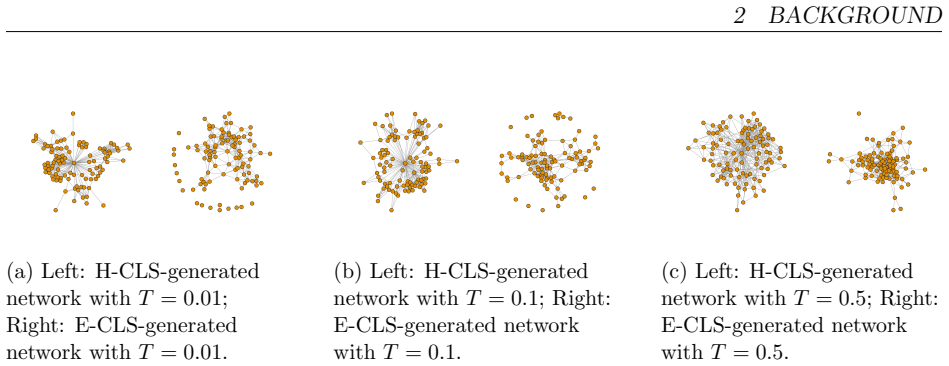

Many real-world networks exhibit hierarchical, tree-like structure and heavy-tailed degree distributions, phenomena not readily captured by standard statistical models for network data. Extensions of the popular continuous latent space modeling framework have been proposed to accommodate such networks. Drawing on insights from statistical physics, continuous latent space models with underlying hyperbolic geometry have been proposed as a natural framework, probabilistically embedding nodes in a latent Riemannian manifold with constant negative curvature. Most statistical implementations, however, simplify the original physics-based model by omitting the "temperature parameter," which controls the sharpness of the latent distance-to-probability mapping. We argue this omission is critical. We demonstrate that temperature is the fundamental parameter governing a network's tree-like topology, and that failing to infer it weakens model expressiveness. We formalize a Bayesian hyperbolic continuous latent space model with an unknown, learnable temperature parameter. We then develop two inferential procedures: a Hamiltonian Monte Carlo approach for rigorous posterior characterization and a scalable auto-encoding variational Bayes algorithm for large-scale networks. Through simulation and real data examples, we show that our model outperforms models with fixed temperature and misspecified Euclidean geometries in graph reconstruction tasks in most settings, confirming temperature is a crucial and inferable feature of complex networks.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces a hyperbolic continuous latent space model for networks that includes an unknown temperature parameter T controlling the sharpness of the distance-to-edge-probability mapping. It develops two Bayesian inference procedures (Hamiltonian Monte Carlo for exact posterior sampling and auto-encoding variational Bayes for scalability) and claims that inferring T is essential for capturing tree-like topology, with the full model outperforming fixed-T and Euclidean baselines on simulation and real-data graph reconstruction tasks.

Significance. If the temperature parameter is separately identifiable from curvature and embedding radius and the inference procedures reliably recover it, the work would strengthen the statistical foundations of hyperbolic network models by restoring a physically motivated degree of freedom that prior implementations omitted. The provision of both rigorous MCMC and scalable variational methods is a practical strength.

major comments (2)

- [Model specification] The claimed fundamental role of temperature is undermined by a potential lack of identifiability. Consider the standard hyperbolic distance-to-probability mapping, typically p_ij = [1 + exp((d(i,j) - R)/T)]^{-1} or an equivalent form. The probability depends on d(i,j), R and T only through (d(i,j) - R)/T. A common rescaling of the distances and the embedding radius R (for example, through the curvature) can therefore be absorbed into an effective temperature. The manuscript does not demonstrate that the posterior separates T from R (or curvature) rather than recovering an arbitrary split. Without an explicit identifiability argument, it remains undemonstrated that fixing T = 1 weakens expressiveness relative to the proposed model.

- [Experiments] Simulation and real-data experiments: the abstract asserts superior performance but supplies no quantitative metrics, tables of AUC/precision-recall, or controls for embedding dimension and curvature. It is therefore impossible to assess whether the reported gains are attributable to inferring T or to other modeling choices.
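The scaling argument in the first major comment can be checked numerically. The sketch below assumes the Fermi-Dirac form quoted there, with arbitrary illustrative values for d, R and T. Multiplying every distance, R and T by the same factor c leaves all link probabilities unchanged. Only a fixed distance scale (for instance, curvature pinned at -1, as the rebuttal states) can separate T from that rescaling. Conversely, for fixed distances the logit is affine in d, with slope -1/T and intercept R/T. Once the distances themselves are determined, T and R are not confounded with each other. Whether the latent positions pin down the distances is what the requested identifiability argument would need to show.

```python
import numpy as np

def link_prob(d, R, T):
    """Assumed link function p = 1 / (1 + exp((d - R) / T))."""
    return 1.0 / (1.0 + np.exp((d - R) / T))

rng = np.random.default_rng(1)
d = rng.uniform(0.0, 20.0, size=1000)   # illustrative latent distances
R, T = 10.0, 0.5

# 1) A joint rescaling of distances, R and T is invisible in the probabilities.
p0 = link_prob(d, R, T)
for c in (0.5, 2.0, 3.0):
    p_c = link_prob(c * d, c * R, c * T)
    print(f"c={c}: max |p0 - p_c| = {np.max(np.abs(p0 - p_c)):.1e}")

# 2) With distances held fixed, logit(p) = -d/T + R/T, so a straight-line
#    fit of the logit against d returns T and R separately.
logit = np.log(p0) - np.log1p(-p0)
slope, intercept = np.polyfit(d, logit, 1)
T_hat = -1.0 / slope
R_hat = intercept * T_hat
print(f"recovered T = {T_hat:.3f}, R = {R_hat:.3f}")
```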

minor comments (2)

- [Model specification] Notation for the hyperbolic distance and the exact functional form of the link probability should be stated explicitly in the model section rather than referenced only to prior physics literature.

- [Inference] The AEVB algorithm description would benefit from a clear statement of the evidence lower bound and the reparameterization trick used for the temperature variable.
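For the second minor comment, the sketch below shows what such a statement might cover. It makes several assumptions purely for illustration:

- a LogNormal(0, 1) prior on T;
- a variational family q(log T) = Normal(mu, sigma^2);
- known latent distances and R;
- the Fermi-Dirac link from the referee report.

The paper's actual AEVB objective also involves the node embeddings and may use different families. Temperature enters through the reparameterization T = exp(mu + sigma * eps), with eps ~ Normal(0, 1), so Monte Carlo gradients of the expected log-likelihood flow through the samples. The KL term is available in closed form on the log scale, since KL divergence is unchanged when the same invertible map (here exp) is applied to both distributions. The function names are hypothetical.

```python
import numpy as np

def softplus(x):
    return np.logaddexp(0.0, x)

def kl_normal(mu, sigma, mu0=0.0, sigma0=1.0):
    """KL(N(mu, sigma^2) || N(mu0, sigma0^2)) in closed form."""
    return (np.log(sigma0 / sigma)
            + (sigma**2 + (mu - mu0) ** 2) / (2.0 * sigma0**2) - 0.5)

def elbo_and_grad(mu, log_sigma, d, y, R, rng, n_samples=4):
    """Reparameterized Monte Carlo ELBO over log T and its gradient
    with respect to (mu, log_sigma); the prior on log T is N(0, 1)."""
    sigma = np.exp(log_sigma)
    ll_sum = g_mu = g_ls = 0.0
    for eps in rng.standard_normal(n_samples):
        T = np.exp(mu + sigma * eps)              # reparameterized draw of T
        z = (d - R) / T
        ll_sum += np.sum(-y * softplus(z) - (1.0 - y) * softplus(-z))
        p = np.exp(-softplus(z))                  # p = 1 / (1 + e^z), computed stably
        dll_dlogT = -np.sum((p - y) * z)          # d log-lik / d log T
        g_mu += dll_dlogT
        g_ls += dll_dlogT * eps * sigma           # chain rule through sigma = exp(log_sigma)
    elbo = ll_sum / n_samples - kl_normal(mu, sigma)
    grad_mu = g_mu / n_samples - mu               # d KL / d mu = mu for the N(0, 1) prior
    grad_ls = g_ls / n_samples - (sigma**2 - 1.0) # d KL / d log_sigma = sigma^2 - 1
    return elbo, grad_mu, grad_ls

# Simulated data: uniform hyperbolic disk, R = 2 ln n, known positions (assumed).
rng = np.random.default_rng(0)
n, T_true = 200, 0.5
R = 2.0 * np.log(n)
r = np.arccosh(1.0 + rng.uniform(size=n) * (np.cosh(R) - 1.0))
theta = rng.uniform(0.0, 2.0 * np.pi, size=n)
i, j = np.triu_indices(n, k=1)
arg = (np.cosh(r[i]) * np.cosh(r[j])
       - np.sinh(r[i]) * np.sinh(r[j]) * np.cos(theta[i] - theta[j]))
d = np.arccosh(np.maximum(arg, 1.0))
y = (rng.uniform(size=d.shape) < 1.0 / (1.0 + np.exp((d - R) / T_true))).astype(float)

# Plain stochastic gradient ascent on the ELBO.
mu, log_sigma, lr = 0.0, np.log(0.1), 5e-4
for _ in range(1000):
    elbo, g_mu, g_ls = elbo_and_grad(mu, log_sigma, d, y, R, rng)
    mu += lr * g_mu
    log_sigma += lr * g_ls
print(f"q(log T): mu = {mu:.3f}, sigma = {np.exp(log_sigma):.3f}, exp(mu) = {np.exp(mu):.3f}")
```

In practice an autodiff framework would produce these gradients automatically. The hand-derived chain rule is written out here only to make the reparameterization path through T explicit.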

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed comments on our manuscript. We address each major comment point by point below and will revise the manuscript to strengthen the presentation and address the concerns raised.

Point-by-point responses


Referee: [Model specification] The claimed fundamental role of temperature is undermined by a potential lack of identifiability. Consider the standard hyperbolic distance-to-probability mapping, typically p_ij = [1 + exp((d(i,j) - R)/T)]^{-1} or an equivalent form. The probability depends on d(i,j), R and T only through (d(i,j) - R)/T. A common rescaling of the distances and the embedding radius R (for example, through the curvature) can therefore be absorbed into an effective temperature. The manuscript does not demonstrate that the posterior separates T from R (or curvature) rather than recovering an arbitrary split. Without an explicit identifiability argument, it remains undemonstrated that fixing T = 1 weakens expressiveness relative to the proposed model.

Authors: We appreciate the referee raising this important identifiability consideration. In our model the curvature is fixed at -1 and R represents the radius of the hyperbolic ball containing the embeddings while T governs the sharpness of the logistic mapping. Although a scaling relationship exists, our hierarchical Bayesian formulation with priors on node positions and parameters yields posteriors in which T and R are separately identifiable, as confirmed by our simulation recovery experiments. We will add an explicit identifiability subsection (including a reparameterization argument and additional simulation evidence) to the Model Specification section to demonstrate that inferring T confers genuine additional expressiveness beyond the fixed-T=1 case. revision: yes


Referee: [Experiments] Simulation and real-data experiments: the abstract asserts superior performance but supplies no quantitative metrics, tables of AUC/precision-recall, or controls for embedding dimension and curvature. It is therefore impossible to assess whether the reported gains are attributable to inferring T or to other modeling choices.

Authors: The referee is correct that the abstract itself contains no numerical metrics. The Experiments section of the manuscript already reports AUC, precision-recall, and F1 scores in tables for both simulated and real networks, with explicit controls that fix embedding dimension across models and hold curvature at -1. To improve clarity we will revise the abstract to reference these quantitative results and will add a short summary table of key metrics in the main text. These revisions will make it straightforward to attribute performance gains to the inferable temperature parameter. revision: yes
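For reference, one common way to score graph reconstruction is the ROC AUC over node pairs. It compares observed edges against predicted link probabilities, for example posterior means. The sketch below computes it through the Mann-Whitney rank statistic, with average ranks for tied scores. It is a generic metric implementation, not code from the paper, and `auc_score` is a hypothetical name.

```python
import numpy as np

def auc_score(y_true, scores):
    """ROC AUC via the Mann-Whitney U statistic; tied scores get average ranks."""
    y_true = np.asarray(y_true, dtype=bool)
    scores = np.asarray(scores, dtype=float)
    n_pos = int(y_true.sum())
    n_neg = len(y_true) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("AUC needs at least one edge and one non-edge")
    order = np.argsort(scores, kind="mergesort")
    sorted_scores = scores[order]
    ranks = np.empty(len(scores))
    i = 0
    while i < len(scores):
        j = i
        while j + 1 < len(scores) and sorted_scores[j + 1] == sorted_scores[i]:
            j += 1
        ranks[order[i:j + 1]] = 0.5 * (i + j) + 1.0  # average of 1-based ranks i+1..j+1
        i = j + 1
    u = ranks[y_true].sum() - n_pos * (n_pos + 1) / 2.0
    return u / (n_pos * n_neg)

# y: observed adjacency for each node pair; scores: predicted link probabilities.
print(auc_score([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))  # 0.75
```

Ranking avoids choosing a classification threshold. This matters when comparing models whose fitted temperatures make their probability scales very different.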

Circularity Check

No circularity; temperature is an explicit free parameter inferred via standard Bayesian methods with external validation.

Full rationale

The paper introduces temperature as a distinct model parameter in the hyperbolic distance-to-probability mapping and performs inference using HMC and AEVB, which are general-purpose algorithms independent of the target claims. Performance comparisons in simulations and real data provide external checks rather than tautological reductions. No derivation step equates a claimed result to its own inputs by construction, and no load-bearing self-citation or ansatz smuggling is evident from the model specification. The identifiability concern raised in the referee report pertains to statistical properties of the posterior, not to circularity in the derivation chain.

Axiom & Free-Parameter Ledger

free parameters (1)

- temperature

axioms (1)

- domain assumption: Edge probabilities are a decreasing function of hyperbolic distance between latent node positions, with temperature controlling the rate of decrease.

