Recognition: no theorem link

Evaluating Structured Documentation as a Tool for Reflexivity in Dataset Development

Pith reviewed 2026-05-13 01:15 UTC · model grok-4.3

The pith

Structured documentation frameworks like datasheets engage little with major reflexivity themes from the literature.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

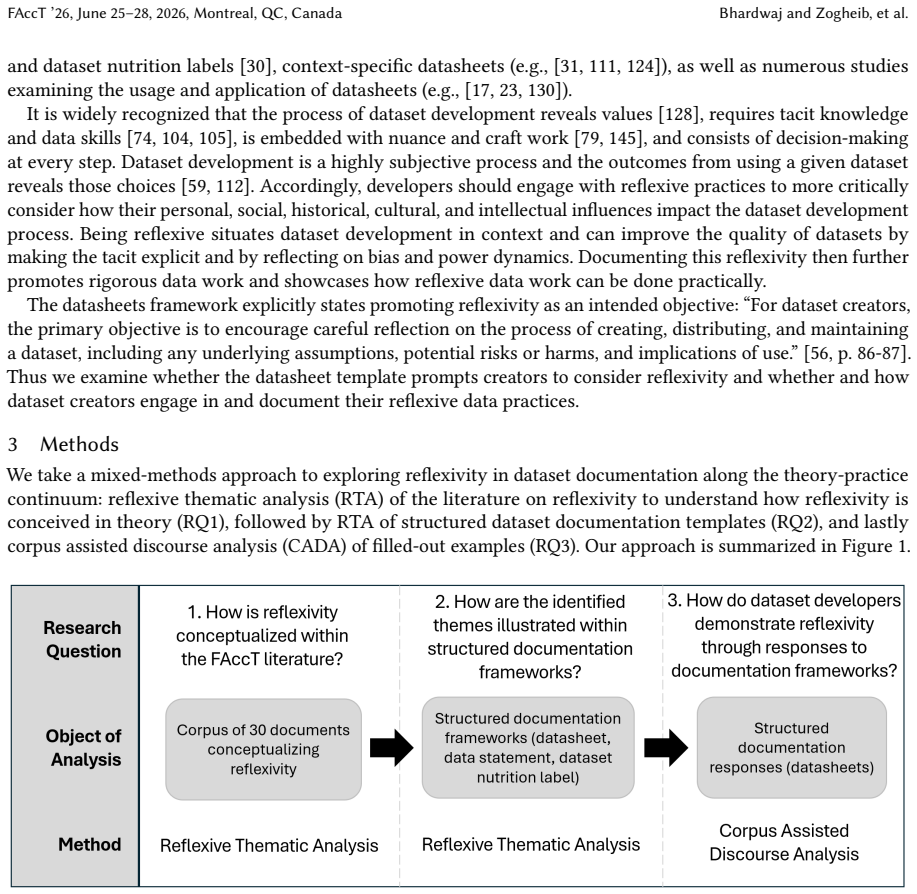

Through mixed-method thematic analysis of reflexivity literature and corpus-assisted discourse analysis of frameworks and applications, the paper establishes a general lack of engagement with major reflexivity themes in both the design of structured dataset documentation and in how those frameworks are applied in published work.
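Corpus-assisted discourse analysis usually pairs frequency counts with keyword-in-context (KWIC) concordances. An analyst can then read every occurrence of a term such as "positionality" in its surrounding text. The sketch below shows these two generic operations in plain Python. It is illustrative only. The tokenizer, the hypothetical helpers `kwic` and `per_thousand`, and the sample sentence are assumptions, not the paper's pipeline or corpus. NLTK's `nltk.text.Text.concordance` is a ready-made alternative.

```python
import re

# Hypothetical tokenizer for this sketch: runs of word characters, allowing
# internal hyphens/apostrophes (e.g. "value-laden"). Real studies often use
# NLTK or spaCy tokenizers instead.
TOKEN_RE = re.compile(r"\w+(?:[-']\w+)*")


def tokenize(text):
    """Split text into word tokens, dropping punctuation."""
    return TOKEN_RE.findall(text)


def kwic(text, keyword, window=4):
    """Keyword-in-context: one (left, hit, right) triple per case-insensitive
    match, with up to `window` tokens of context on each side."""
    tokens = tokenize(text)
    target = keyword.lower()
    lines = []
    for i, tok in enumerate(tokens):
        if tok.lower() == target:
            left = " ".join(tokens[max(0, i - window):i])
            right = " ".join(tokens[i + 1:i + 1 + window])
            lines.append((left, tok, right))
    return lines


def per_thousand(text, keyword):
    """Normalized frequency of `keyword` per 1,000 tokens (0.0 for empty text)."""
    tokens = tokenize(text)
    if not tokens:
        return 0.0
    target = keyword.lower()
    hits = sum(tok.lower() == target for tok in tokens)
    return 1000 * hits / len(tokens)


if __name__ == "__main__":
    # Hypothetical documentation snippet, not drawn from the paper's corpus.
    sample = ("Describe any positionality statement. Reflect on how your "
              "positionality shaped annotation choices.")
    for left, hit, right in kwic(sample, "positionality", window=2):
        print(f"{left:>15} [{hit}] {right}")
    print(round(per_thousand(sample, "positionality"), 1))
```

Normalizing per 1,000 tokens lets frequencies be compared across documents of very different lengths. A datasheet template and a full dataset paper are one such pair.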

What carries the argument

A codebook of reflexivity topics derived from the literature. Through thematic and discourse analysis, it is used to assess how far documentation frameworks and their published applications incorporate those topics.
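The following is a deliberately simplified sketch of applying a codebook. Each topic has a list of indicator stems, and a text is tagged with every topic whose stems begin one of its words. The topic names, stems, and example questions are all hypothetical. Keyword matching also stands in for the human, interpretive coding that thematic analysis involves, so this is not the paper's codebook or procedure. At most, it suggests how a first-pass filter might surface passages for a human coder.

```python
import re
from collections import Counter

# HYPOTHETICAL codebook: topic -> indicator stems. Illustrative only; these are
# not the topics or definitions in the paper's codebook.
CODEBOOK = {
    "positionality": ["positionality", "background", "identity", "perspective"],
    "power": ["power", "marginalized", "stakeholder", "harm"],
    "values": ["value", "assumption", "choice", "decision"],
}


def code_text(text, codebook=CODEBOOK):
    """Return the set of topics for which some token starts with an indicator stem."""
    tokens = re.findall(r"[a-z]+", text.lower())
    return {
        topic
        for topic, stems in codebook.items()
        if any(tok.startswith(stem) for tok in tokens for stem in stems)
    }


def topic_coverage(documents, codebook=CODEBOOK):
    """Fraction of documents in which each topic is coded at least once."""
    counts = Counter()
    for doc in documents:
        counts.update(code_text(doc, codebook))  # each topic counted once per doc
    n = len(documents)
    return {topic: (counts[topic] / n if n else 0.0) for topic in codebook}


# Hypothetical documentation questions, not quoted from any framework.
questions = [
    "Who created the dataset and on behalf of which entity?",
    "What assumptions shaped the labeling decisions?",
    "How might the data cause harm to marginalized groups?",
]
print(topic_coverage(questions))
```

Stem matching over-codes easily. For example, "default value" would be tagged as a values topic. A human-coded study instead records each coded passage so that such false positives can be reviewed.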

If this is right

- Framework creators should revise datasheets and similar tools to include questions that explicitly prompt reflexivity on positionality and power.

- Dataset developers can apply the provided codebook and extended questions to surface value-laden choices during documentation.

- Published applications of documentation frameworks can serve as better models if they address reflexivity themes more directly.

- The FAccT community can use the gap identified to prioritize reflexivity in future dataset work.

Where Pith is reading between the lines

- Adding reflexivity prompts might change how developers document datasets in practice, which could be tested by comparing before-and-after documentation quality.

- The finding points to a possible mismatch between stated goals of documentation tools and their actual effects on critical reflection.

- Extending the analysis to documentation in non-academic settings such as industry datasets could reveal whether the lack is specific to research publications.

Load-bearing premise

The chosen reflexivity themes from the literature and the selected sample of frameworks plus published applications are representative enough to support a claim of general lack beyond the cases examined.

What would settle it

A broader survey that identifies frequent, detailed engagement with multiple reflexivity themes such as positionality and value conflicts across a larger set of published dataset documentations would undermine the general-lack finding.


Original abstract

It is prominently recognized that dataset development in machine learning is a value-laden process from problem formulation to data processing, use, and reuse. Structured documentation frameworks such as datasheets, data statements, and dataset nutrition labels have been created to aid developers in documenting how their datasets were produced and, according to the creators of the frameworks, to facilitate reflexivity in dataset development. While reflexivity is a stated goal, it is unclear whether and to what extent these structured dataset documentation frameworks incorporate concepts from reflexivity literature (at FAccT and elsewhere) and whether the use of the frameworks demonstrates reflexivity. Here, we adopt mixed-method thematic analysis and corpus-assisted discourse analysis to explore how reflexivity is incorporated in structured documentation frameworks and their responses. We demonstrate empirically that there is a general lack of engagement with major themes of reflexivity in both dataset documentation frameworks and published applications of these frameworks. We present a codebook of major reflexivity topics, recommend actionable strategies, and propose a set of extended datasheet questions to more effectively incorporate these topics into structured documentation frameworks and in the FAccT literature.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper claims that structured dataset documentation frameworks (e.g., datasheets, data statements, dataset nutrition labels), despite their stated goal of facilitating reflexivity in ML dataset development, show a general lack of engagement with major reflexivity themes drawn from FAccT and related literature. This holds both for the frameworks themselves and for published applications of the frameworks. The authors use mixed-method thematic analysis and corpus-assisted discourse analysis to derive a codebook of reflexivity topics, empirically demonstrate the gap, and propose actionable strategies plus a set of extended datasheet questions to address it.

Significance. If the sampling and analysis support the generalization to a 'general lack,' the work would be significant for the FAccT and responsible AI communities by providing empirical evidence of a disconnect between the reflexive intent of documentation frameworks and their actual content and usage. The codebook, recommendations, and concrete extended questions offer practical value for improving future frameworks and dataset practices. The mixed-methods design and focus on actionable outputs are strengths that could help translate the findings into impact.

major comments (2)

- [Methods] Methods section: The paper does not provide sufficient detail on the inclusion criteria, search strategy, time periods, venues, or keywords used to select the dataset documentation frameworks and the corpus of published applications. Given that the central empirical claim is a 'general lack' across the field (rather than within a convenience sample), the representativeness of the chosen frameworks and applications must be explicitly justified and documented to support the generalization.

- [Analysis and Results] Analysis and Results: The thematic analysis would be strengthened by including inter-coder reliability metrics, the full codebook with definitions and examples of application to framework text and published uses, and a clear mapping from the reflexivity literature themes to the coded categories. Without these, it is difficult to assess whether the evidence robustly supports the 'general lack' finding or whether alternative theme selections could alter the conclusion.

minor comments (2)

- [Abstract] Abstract: Consider adding a brief statement of the number of frameworks examined and the size of the application corpus to immediately convey the empirical scope.

- [Discussion] Discussion: A dedicated limitations subsection would help by addressing potential selection biases in the reflexivity themes, frameworks, and corpus, as well as the generalizability of the proposed extended questions.

Simulated Author's Rebuttal

We thank the referee for their constructive and detailed feedback. The comments highlight important areas for improving transparency and rigor, and we will incorporate revisions to address them fully.

Point-by-point responses

- Referee: [Methods] Methods section: The paper does not provide sufficient detail on the inclusion criteria, search strategy, time periods, venues, or keywords used to select the dataset documentation frameworks and the corpus of published applications. Given that the central empirical claim is a 'general lack' across the field (rather than within a convenience sample), the representativeness of the chosen frameworks and applications must be explicitly justified and documented to support the generalization.

Authors: We agree that more explicit documentation of our sampling process is required to support the generalization. In the revised manuscript, we will expand the Methods section to detail the inclusion criteria, search strategy, time periods, venues, and keywords used to identify the documentation frameworks and the corpus of published applications. We will also add a justification of representativeness, explaining how the selected frameworks represent the major approaches in the literature and how the applications corpus provides broad coverage, while noting the boundaries of the sample. Revision: yes.

- Referee: [Analysis and Results] Analysis and Results: The thematic analysis would be strengthened by including inter-coder reliability metrics, the full codebook with definitions and examples of application to framework text and published uses, and a clear mapping from the reflexivity literature themes to the coded categories. Without these, it is difficult to assess whether the evidence robustly supports the 'general lack' finding or whether alternative theme selections could alter the conclusion.

Authors: We agree these additions will strengthen the presentation of the analysis. In the revision, we will report inter-coder reliability metrics (including Cohen's kappa) from the thematic coding process. The complete codebook with definitions and examples drawn from both framework texts and published applications will be provided in an appendix. We will also add a mapping table that explicitly connects the reflexivity themes identified in the FAccT and related literature to our coded categories. These changes will allow readers to evaluate the robustness of the 'general lack' finding more directly. Revision: yes.
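For reference, Cohen's kappa corrects raw agreement between two coders for the agreement expected by chance: kappa = (p_o - p_e) / (1 - p_e). Here p_o is the observed proportion of agreement, and p_e is the chance agreement implied by each coder's marginal label frequencies. The sketch below computes it for two coders' nominal labels. The function name is hypothetical, and the "present"/"absent" codings are invented for illustration, not taken from the paper. scikit-learn's `sklearn.metrics.cohen_kappa_score` computes the same statistic.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders assigning nominal labels to the same items."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("need two non-empty label sequences of equal length")
    n = len(coder_a)
    # Observed agreement: share of items given the same label by both coders.
    p_o = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: sum over labels of the product of both coders' marginal rates.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    p_e = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / (n * n)
    if p_e == 1.0:
        # Both coders used one identical label throughout: kappa is undefined.
        return float("nan")
    return (p_o - p_e) / (1 - p_e)


# Hypothetical codings of 10 text segments for a single theme.
a = ["present", "present", "absent", "absent", "absent",
     "present", "absent", "absent", "present", "absent"]
b = ["present", "absent", "absent", "absent", "absent",
     "present", "absent", "present", "present", "absent"]
print(round(cohens_kappa(a, b), 3))
```

In this example the coders agree on 8 of 10 segments, so p_o = 0.8. Chance agreement is p_e = (4·4 + 6·6)/100 = 0.52, which gives kappa = 0.28/0.48 ≈ 0.58. That is noticeably lower than the raw 80% agreement.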

Circularity Check

No circularity: empirical thematic analysis of external frameworks and literature


The paper conducts a mixed-method thematic analysis and corpus-assisted discourse analysis of selected dataset documentation frameworks and published applications, drawing reflexivity themes from FAccT and related literature. The central empirical claim of a general lack of engagement is presented as an observation from this external evaluation rather than as a derivation that reduces to self-defined inputs, fitted parameters, or self-citation chains. No equations, predictions, or uniqueness theorems are invoked; the methodology relies on codebook development and analysis of independently sourced materials. The representativeness concern raised under the load-bearing premise is a question of sampling validity, not a circular reduction of the result to its own construction.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: reflexivity concepts from the FAccT literature can be operationalized into discrete themes suitable for thematic analysis of documentation frameworks.

Reference graph

Works this paper leans on

-

[1]

[n. d.]. https://alliedmedia.org/projects/our-data-bodies-odb

-

[2]

2021. Call For Datasets and Benchmarks - NeurIPS. https://web.archive.org/web/20210407213644/https://neurips.cc/Conferences/2021/CallForDatasetsBenchmarks

-

[3]

2022. Call For Datasets and Benchmarks - NeurIPS. https://web.archive.org/web/20220521130452/https://neurips.cc/Conferences/2022/CallForDatasetsBenchmarks

-

[4]

2023. Call For Datasets and Benchmarks - NeurIPS. https://web.archive.org/web/20230325021706/https://neurips.cc/Conferences/2023/CallForDatasetsBenchmarks

-

[5]

2024. Call For Datasets and Benchmarks - NeurIPS. https://neurips.cc/Conferences/2024/CallForDatasetsBenchmarks

-

[6]

Gustaf Ahdritz, Nazim Bouatta, Sachin Kadyan, Lukas Jarosch, Dan Berenberg, Ian Fisk, Andrew Martin Watkins, Stephen Ra, Richard Bonneau, and Mohammed AlQuraishi. 2023. OpenProteinSet: Training data for structural biology at scale. Advances in Neural Information Processing Systems

-

[7]

Danielle Allard and Tami Oliphant. 2024. With a Little Help from Our Friends: Applying a Critical Friends Orientation to Critical Literature Reviews. Proceedings of the Association for Information Science and Technology 61, 1 (2024), 13–24. doi:10.1002/pra2.1004

-

[8]

Doris Allhutter and Bettina Berendt. 2020. Deconstructing FAT: using memories to collectively explore implicit assumptions, values and context in practices of debiasing and discrimination-awareness. In Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency (FAT* ’20). Association for Computing Machinery, New York, NY, USA, 687. do...

-

[9]

Clyde Ancarno. 2020. Corpus-Assisted Discourse Studies. In The Cambridge Handbook of Discourse Studies, Alexandra Georgakopoulou and Anna De Fina (Eds.). Cambridge University Press, Cambridge, 165–185

-

[10]

Gabriele Bammer. 2017. Toolkits for transdisciplinary research. https://i2insights.org/2017/07/25/toolkits-for-transdisciplinarity/

-

[11]

Shaowen Bardzell and Jeffrey Bardzell. 2011. Towards a feminist HCI methodology: social science, feminism, and HCI. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems (CHI ’11). Association for Computing Machinery, New York, NY, USA, 675–684. doi:10.1145/1978942.1979041

-

[12]

Björn Barz and Joachim Denzler. 2021. WikiChurches: A Fine-Grained Dataset of Architectural Styles with Real-World Challenges. Advances in Neural Information Processing Systems

-

[13]

Christoph Becker. 2023. Insolvent: How to Reorient Computing for Just Sustainability. MIT Press

-

[14]

Bilel Benbouzid. 2023. Fairness in machine learning from the perspective of sociology of statistics: How machine learning is becoming scientific by turning its back on metrological realism. In Proceedings of the 2023 ACM Conference on Fairness, Accountability, and Transparency (FAccT ’23). Association for Computing Machinery, New York, NY, USA, 35–43. doi:...

-

[15]

Emily M. Bender, Timnit Gebru, Angelina McMillan-Major, and Shmargaret Shmitchell. 2021. On the Dangers of Stochastic Parrots: Can Language Models Be Too Big? In Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency (FAccT ’21). Association for Computing Machinery, New York, NY, USA, 610–623. doi:10.1145/3442188.3445922

-

[16]

Eshta Bhardwaj, Harshit Gujral, Siyi Wu, Ciara Zogheib, Tegan Maharaj, and Christoph Becker. 2024. Machine Learning Data Practices through a Data Curation Lens: An Evaluation Framework. In 2024 ACM Conference on Fairness, Accountability, and Transparency. Association for Computing Machinery, New York, NY, USA, 1055–1067. doi:10.1145/3630106.3658955

-

[17]

Eshta Bhardwaj, Harshit Gujral, Siyi Wu, Ciara Zogheib, Tegan Maharaj, and Christoph Becker. 2024. The State of Data Curation at NeurIPS: An Assessment of Dataset Development Practices in the Datasets and Benchmarks Track. Advances in Neural Information Processing Systems 37 (2024), 53626–53648

-

[18]

Steven Bird, Ewan Klein, and Edward Loper. 2009. Natural language processing with Python. O’Reilly, Cambridge.

FAccT ’26, June 25–28, 2026, Montreal, QC, Canada Bhardwaj and Zogheib, et al

-

[19]

Florian Bordes, Shashank Shekhar, Mark Ibrahim, Diane Bouchacourt, Pascal Vincent, and Ari S. Morcos. 2023. PUG: Photorealistic and Semantically Controllable Synthetic Data for Representation Learning. In Thirty-seventh Conference on Neural Information Processing Systems Datasets and Benchmarks Track

-

[20]

Pierre Bourdieu. 2000. Pascalian meditations. Stanford University Press, Stanford, Calif

-

[21]

Pierre Bourdieu. 2004. Science of Science and Reflexivity. University of Chicago Press, Chicago, IL

- [22]

-

[23]

Karen L. Boyd. 2021. Datasheets for Datasets help ML Engineers Notice and Understand Ethical Issues in Training Data. Proceedings of the ACM on Human-Computer Interaction 5, CSCW2 (2021), 1–27. doi:10.1145/3479582

-

[24]

Virginia Braun and Victoria Clarke. 2019. Reflecting on reflexive thematic analysis. Qualitative Research in Sport, Exercise and Health 11, 4 (2019), 589–597. doi:10.1080/2159676X.2019.1628806

-

[25]

Virginia Braun and Victoria Clarke. 2021. Can I use TA? Should I use TA? Should I not use TA? Comparing reflexive thematic analysis and other pattern-based qualitative analytic approaches. Counselling and Psychotherapy Research 21, 1 (2021), 37–47. doi:10.1002/capr.12360

-

[26]

Samuel V. Bruton, Alicia L. Macchione, Mitch Brown, and Mohammad Hosseini. 2025. Citation Ethics: An Exploratory Survey of Norms and Behaviors. Journal of academic ethics 23, 2 (2025), 329–346. doi:10.1007/s10805-024-09539-2

-

[27]

David Byrne. 2022. A worked example of Braun and Clarke’s approach to reflexive thematic analysis. Quality & Quantity 56, 3 (2022), 1391–1412. doi:10.1007/s11135-021-01182-y

-

[28]

Scott Allen Cambo and Darren Gergle. 2022. Model Positionality and Computational Reflexivity: Promoting Reflexivity in Data Science. In CHI Conference on Human Factors in Computing Systems. ACM, New Orleans LA USA, 1–19. doi:10.1145/3491102.3501998

-

[29]

Fiona Campbell, Andrea C. Tricco, Zachary Munn, Danielle Pollock, Ashrita Saran, Anthea Sutton, Howard White, and Hanan Khalil. 2023. Mapping reviews, scoping reviews, and evidence and gap maps (EGMs): the same but different— the “Big Picture” review family. Systematic Reviews 12, 1 (2023), 45. doi:10.1186/s13643-023-02178-5

-

[31]

Kasia S. Chmielinski, Sarah Newman, Matt Taylor, Josh Joseph, Kemi Thomas, Jessica Yurkofsky, and Yue Chelsea Qiu. 2022. The Dataset Nutrition Label (2nd Gen): Leveraging Context to Mitigate Harms in Artificial Intelligence. doi:10.48550/arXiv.2201.03954

-

[32]

Charlotte J. Connolly, Daniel M. Hueholt, and Melissa A. Burt. 2025. Datasheets for Earth Science Datasets. Bulletin of the American Meteorological Society 106, 4 (April 2025), E642–E648. doi:10.1175/BAMS-D-24-0203.1

-

[33]

Payton Croskey, Fabian Offert, Jennifer Jacobs, and Kai M. Thaler. 2025. Liberatory Collections and Ethical AI: Reimagining AI Development from Black Community Archives and Datasets. In Proceedings of the 2025 ACM Conference on Fairness, Accountability, and Transparency (FAccT ’25). Association for Computing Machinery, New York, NY, USA, 900–913. doi:10.11...

- [34]

-

[35]

Sarah R. Davies and Constantin Holmer. 2024. Care, collaboration, and service in academic data work: biocuration as ‘academia otherwise’. Information, Communication & Society 27, 4 (2024), 683–701. doi:10.1080/1369118X.2024.2315285

-

[36]

Suzanne Day. 2012. A Reflexive Lens: Exploring Dilemmas of Qualitative Methodology Through the Concept of Reflexivity. Qualitative Sociology Review 8, 1 (2012), 60–85. doi:10.18778/1733-8077.8.1.04

-

[37]

Siddharth Peter De Souza and Linnet Taylor. 2025. Rebooting the global consensus: Norm entrepreneurship, data governance and the inalienability of digital bodies. Big Data & Society 12, 2 (2025), 20539517251330191. doi:10.1177/20539517251330191

-

[38]

Matt Deitke, Ruoshi Liu, Matthew Wallingford, Huong Ngo, Oscar Michel, Aditya Kusupati, Alan Fan, Christian Laforte, Vikram Voleti, Samir Yitzhak Gadre, Eli VanderBilt, Aniruddha Kembhavi, Carl Vondrick, Georgia Gkioxari, Kiana Ehsani, Ludwig Schmidt, and Ali Farhadi. 2023. Objaverse-XL: A Universe of 10M+ 3D Objects. Advances in Neural Information Proces...

-

[39]

Melissa Dell, Jacob Carlson, Tom Bryan, Emily Silcock, Abhishek Arora, Zejiang Shen, Luca D’Amico-Wong, Quan Le, Pablo Querubin, and Leander Heldring. 2023. American Stories: A Large-Scale Structured Text Dataset of Historical U.S. Newspapers. Advances in Neural Information Processing Systems

-

[40]

Emily Denton, Alex Hanna, Razvan Amironesei, Andrew Smart, and Hilary Nicole. 2021. On the genealogy of machine learning datasets: A critical history of ImageNet. Big Data & Society 8, 2 (2021), 20539517211035955. doi:10.1177/20539517211035955

-

[41]

Nadine Desrochers, Adèle Paul-Hus, and Jen Pecoskie. 2017. Five decades of gratitude: A meta-synthesis of acknowledgments research. Journal of the Association for Information Science and Technology 68, 12 (2017), 2821–2833. doi:10.1002/asi.23903

-

[42]

Jwala Dhamala, Tony Sun, Varun Kumar, Satyapriya Krishna, Yada Pruksachatkun, Kai-Wei Chang, and Rahul Gupta. 2021. BOLD: Dataset and Metrics for Measuring Biases in Open-Ended Language Generation. In Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency (FAccT ’21). Association for Computing Machinery, New York, NY, USA, 862...

-

[43]

Nicholas Diakopoulos. 2016. Accountability in algorithmic decision making. Commun. ACM 59, 2 (2016), 56–62. doi:10.1145/2844110

-

[44]

Catherine D’Ignazio and Lauren Klein. 2020. 7. Show Your Work. In Data Feminism. https://data-feminism.mitpress.mit.edu/pub/0vgzaln4/release/3

-

[45]

Mary Dixon-Woods, Debbie Cavers, Shona Agarwal, Ellen Annandale, Antony Arthur, Janet Harvey, Ron Hsu, Savita Katbamna, Richard Olsen, Lucy Smith, Richard Riley, and Alex J. Sutton. 2006. Conducting a critical interpretive synthesis of the literature on...

-

[46]

Catherine D’Ignazio and Lauren Klein. 2020. Data Feminism. MIT Press

-

[47]

Catherine D’Ignazio and Lauren Klein. 2023. Introducing Data Feminism. In Women’s Empowerment and Its Limits: Interdisciplinary and Transnational Perspectives Toward Sustainable Progress, Elisa Fornalé and Federica Cristani (Eds.). Springer International Publishing, 139–151. https://doi.org/10.1007/978-3-031-29332-0_8

- [48]

-

[49]

Kim V. L. England. 1994. Getting Personal: Reflexivity, Positionality, and Feminist Research. The Professional Geographer 46, 1 (1994), 80–89. doi:10.1111/j.0033-0124.1994.00080.x

-

[50]

Linda Finlay. 2002. Negotiating the swamp: the opportunity and challenge of reflexivity in research practice. Qualitative Research 2, 2 (2002), 209–230. doi:10.1177/146879410200200205

-

[51]

Kate Flemming. 2010. Synthesis of quantitative and qualitative research: an example using Critical Interpretive Synthesis. Journal of Advanced Nursing 66, 1 (2010), 201–217. doi:10.1111/j.1365-2648.2009.05173.x

-

[52]

Louise Folkes. 2023. Moving beyond ‘shopping list’ positionality: Using kitchen table reflexivity and in/visible tools to develop reflexive qualitative research. Qualitative Research 23, 5 (2023), 1301–1318. doi:10.1177/14687941221098922

-

[53]

Simon Frieder, Luca Pinchetti, Alexis Chevalier, Ryan-Rhys Griffiths, Tommaso Salvatori, Thomas Lukasiewicz, Philipp Christian Petersen, and Julius Berner. 2023. Mathematical Capabilities of ChatGPT. Advances in Neural Information Processing Systems

-

[54]

Samir Yitzhak Gadre, Gabriel Ilharco, Alex Fang, Jonathan Hayase, Georgios Smyrnis, Thao Nguyen, Ryan Marten, Mitchell Wortsman, Dhruba Ghosh, Jieyu Zhang, Eyal Orgad, Rahim Entezari, Giannis Daras, Sarah M. Pratt, Vivek Ramanujan, Yonatan Bitton, Kalyani Marathe, Stephen Mussmann, Richard Vencu, Mehdi Cherti, Ranjay Krishna, Pang Wei Koh, Olga Saukh, Ale...

-

[55]

Jingxian Gan and Yong Qi. 2021. Selection of the Optimal Number of Topics for LDA Topic Model—Taking Patent Policy Analysis as an Example. Entropy 23, 10 (2021), 1301. doi:10.3390/e23101301

-

[56]

Anatol Garioud, Nicolas Gonthier, Loic Landrieu, Apolline De Wit, Marion Valette, Marc Poupée, Sebastien Giordano, and Boris Wattrelos. 2023. FLAIR : a Country-Scale Land Cover Semantic Segmentation Dataset From Multi-Source Optical Imagery. In Thirty-seventh Conference on Neural Information Processing Systems Datasets and Benchmarks Track

-

[57]

Timnit Gebru, Jamie Morgenstern, Briana Vecchione, Jennifer Wortman Vaughan, Hanna Wallach, Hal Daumé III, and Kate Crawford. 2018. Datasheets for Datasets. arXiv:1803.09010 [cs]. doi:10.48550/arXiv.1803.09010. http://arxiv.org/abs/1803.09010

-

[59]

Timnit Gebru, Jamie Morgenstern, Briana Vecchione, Jennifer Wortman Vaughan, Hanna Wallach, Hal Daumé III, and Kate Crawford. 2021. Datasheets for datasets. Commun. ACM 64, 12 (2021), 86–92. doi:10.1145/3458723

-

[61]

Mathew Gillings and Andrew Hardie. 2023. The interpretation of topic models for scholarly analysis: An evaluation and critique of current practice. Digital Scholarship in the Humanities 38, 2 (2023), 530–543

-

[62]

Gonzalez Zelay and Carlos Vladimiro. 2019. Towards Explaining the Effects of Data Preprocessing on Machine Learning. In 2019 IEEE 35th International Conference on Data Engineering (ICDE). 2086–2090. doi:10.1109/ICDE.2019.00245

-

[63]

David S. A. Guttormsen and Fiona Moore. 2023. ‘Thinking About How We Think’: Using Bourdieu’s Epistemic Reflexivity to Reduce Bias in International Business Research. Management International Review 63, 4 (2023), 531–559. doi:10.1007/s11575-023-00507-3

-

[64]

Donna Haraway. 1988. Situated Knowledges: The Science Question in Feminism and the Privilege of Partial Perspective. Feminist Studies 14, 3 (1988), 575. doi:10.2307/3178066

-

[65]

Sandra Harding. 1992. After the Neutrality Ideal: Science, Politics, and "Strong Objectivity". Social Research 59, 3 (1992), 567–587. https://www.jstor.org/stable/40970706

-

[66]

Sandra Harding. 1992. Rethinking Standpoint Epistemology: What Is "Strong Objectivity?". The Centennial Review 36, 3 (1992), 437–470. https://www.jstor.org/stable/23739232

-

[67]

Sheikh Md Shakeel Hassan, Arthur Feeney, Akash Dhruv, Jihoon Kim, Youngjoon Suh, Jaiyoung Ryu, Yoonjin Won, and Aparna Chandramowlishwaran. 2023. BubbleML: A Multiphase Multiphysics Dataset and Benchmarks for Machine Learning. Advances in Neural Information Processing Systems

-

[68]

Amy K. Heger, Liz B. Marquis, Mihaela Vorvoreanu, Hanna Wallach, and Jennifer Wortman Vaughan. 2022. Understanding Machine Learning Practitioners’ Data Documentation Perceptions, Needs, Challenges, and Desiderata. Proceedings of the ACM on Human-Computer Interaction 6, CSCW2 (2022), 1–29. doi:10.1145/3555760

-

[69]

Anna Heinke, LingLing Huang, Kyongmi U. Simpkins, Fritz Gerald P. Kalaw, Apoorva Karsolia, Kiratjit Singh, Sanjay Soundarajan, Camille Nebeker, Sally L. Baxter, Cecilia S. Lee, Aaron Y. Lee, Bhavesh Patel, and the AI-READI Consortium. 2025. Dataset Documentation for Responsible AI: Analysis of Suitability and Usage for Health Datasets. doi:10.1101/2025.11...

-

[70]

David J. Hess. 2013. Neoliberalism and the History of STS Theory: Toward a Reflexive Sociology. Social Epistemology 27, 2 (2013), 177–193. doi:10.1080/02691728.2013.793754

-

[71]

Sharlene Nagy Hesse-Bibber and Deborah Piatelli. 2012. The Feminist Practice of Holistic Reflexivity. In Handbook of Feminist Research: Theory and Praxis. SAGE Publications, Inc., 557–582. https://doi.org/10.4135/9781483384740.n27

-

[72]

Simon David Hirsbrunner, Michael Tebbe, and Claudia Müller-Birn. 2024. From critical technical practice to reflexive data science. Convergence: The International Journal of Research into New Media Technologies 30, 1 (2024), 190–215. doi:10.1177/13548565221132243

-

[73]

Rink Hoekstra and Simine Vazire. 2021. Aspiring to greater intellectual humility in science. Nature Human Behaviour 5, 12 (2021), 1602–1607. doi:10.1038/s41562-021-01203-8

-

[74]

Thibaut Horel, Lorenzo Masoero, Raj Agrawal, Daria Roithmayr, and Trevor Campbell. 2021. The CPD Data Set: Personnel, Use of Force, and Complaints in the Chicago Police Department. Advances in Neural Information Processing Systems

-

[75]

Rodrigo Hormazabal, Changyoung Park, Soonyoung Lee, Sehui Han, Yeonsik Jo, Jaewan Lee, Ahra Jo, Seung Hwan Kim, Jaegul Choo, Moontae Lee, and Honglak Lee. 2022. CEDe: A collection of expert-curated datasets with atom-level entity annotations for Optical Chemical Structure Recognition. Advances in Neural Information Processing Systems

-

[76]

Zhe Huang, Liang Wang, Giles Blaney, Christopher Slaughter, Devon McKeon, Ziyu Zhou, Robert Jacob, and Michael C. Hughes. The Tufts fNIRS Mental Workload Dataset & Benchmark for Brain-Computer Interfaces that Generalize. Advances in Neural Information Processing Systems

-

[78]

Ben Hutchinson, Andrew Smart, Alex Hanna, Emily Denton, Christina Greer, Oddur Kjartansson, Parker Barnes, and Margaret Mitchell. 2021. Towards Accountability for Machine Learning Datasets: Practices from Software Engineering and Infrastructure. In Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency (FAccT ’21). Association for Computing Machinery, New York, NY, USA, 560–575. doi:10.1145/3442188.3445918

-

[80]

Othering & Belonging Institute. 2025. Power Analysis. https://belonging.berkeley.edu/transformative-research-toolkit/power-analysis
