Adaptive Calibration in Non-Stationary Environments

Pith reviewed 2026-05-13 01:43 UTC · model grok-4.3

The pith

Online algorithms achieve calibration errors that adapt to the unknown degree of non-stationarity in the outcome sequence.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The authors introduce a family of algorithms that combine epoch-based scheduling with a non-uniform partition of the prediction space that allocates finer bins near the underlying mean outcome. For any unknown C in [0,T], the algorithms attain Õ(√T + (TC)^{1/3}) ℓ1 calibration error and Õ((1+C)^{1/3}) ℓ2 and pseudo KL calibration error. The bounds are achieved without prior knowledge of C; they match the optimal stationary rates at C=0 and recover the fully adversarial guarantees at C=T.
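To make the interpolation concrete, the following minimal sketch evaluates the two rate expressions with constants and logarithmic factors dropped. The function names `l1_rate` and `l2_rate` are illustrative assumptions and do not come from the paper.

```python
import math

def l1_rate(T: int, C: float) -> float:
    """sqrt(T) + (T*C)^(1/3): the stated l1 rate, ignoring constants and logs."""
    return math.sqrt(T) + (T * C) ** (1.0 / 3.0)

def l2_rate(C: float) -> float:
    """(1 + C)^(1/3): the stated l2 / pseudo KL rate, ignoring constants and logs."""
    return (1.0 + C) ** (1.0 / 3.0)

if __name__ == "__main__":
    T = 10**6
    # Sweep C from the stationary extreme (0) to the adversarial extreme (T).
    for C in (0, 1, 1000, T):
        print(f"C={C:>8}  l1~{l1_rate(T, C):10.1f}  l2~{l2_rate(C):8.2f}")
```

Because (TC)^{1/3} ≤ √T exactly when C ≤ √T, the drift term only starts to dominate the ℓ1 rate once C exceeds √T. At C = T the expression becomes √T + T^{2/3}, which is dominated by T^{2/3}.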

What carries the argument

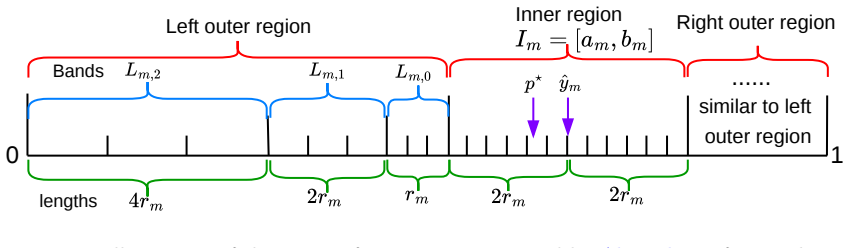

Epoch-based scheduling combined with a non-uniform partition of the prediction space that places finer resolution near the ground-truth mean outcome.

If this is right

- The same algorithms attain the optimal stationary calibration rates without any modification when the environment happens to be i.i.d.

- They recover the best known adversarial calibration guarantees when the environment is fully non-stationary.

- The adaptive rates hold simultaneously for the ℓ1, ℓ2, and pseudo KL calibration error measures.

- No advance knowledge of the non-stationarity measure C is required for the bounds to hold.
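For readers unfamiliar with the error measures named above, here is a minimal sketch of the standard binned ℓ1 and ℓ2 calibration errors for binary outcomes. It assumes the common textbook definitions (group rounds by predicted value, then compare the predicted value with the observed outcomes in that group); the paper's exact normalization may differ, and pseudo KL is omitted because this page does not define it. The function name `calibration_errors` is hypothetical.

```python
from collections import defaultdict

def calibration_errors(preds, outcomes):
    """Return (l1, l2) calibration error over a finite set of predicted values.

    l1 = sum_p | sum_{t: p_t = p} (y_t - p) |
    l2 = sum_p n_p * (mean outcome given p - p)^2
    """
    count = defaultdict(int)    # n_p: number of rounds predicting p
    total = defaultdict(float)  # sum of outcomes on rounds predicting p
    for p, y in zip(preds, outcomes):
        count[p] += 1
        total[p] += y
    l1 = sum(abs(total[p] - count[p] * p) for p in count)
    l2 = sum(count[p] * (total[p] / count[p] - p) ** 2 for p in count)
    return l1, l2

if __name__ == "__main__":
    preds = [0.5, 0.5, 0.5, 0.5, 1.0, 1.0]
    outcomes = [1, 0, 1, 0, 1, 0]
    print(calibration_errors(preds, outcomes))
```

In the example, the forecast 0.5 is perfectly calibrated (half of its rounds have outcome 1), while the forecast 1.0 is right only half the time, so all of the error comes from that second bin.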

Where Pith is reading between the lines

- The same epoch-plus-non-uniform-partition idea could be tested on drifting concept problems in supervised learning where only a modest total variation budget is available.

- One could check whether the cube-root dependence on C extends to other proper scoring rules or to multi-dimensional outcome spaces.

- In practice the method may allow deployed predictors to maintain low calibration error over long periods even when gradual covariate shift occurs, without periodic full retraining.

Load-bearing premise

Non-stationarity is fully captured by a single scalar C equal to the minimal total ℓ1 deviation of the sequence of mean outcomes from some fixed value, and epoch scheduling with non-uniform bins suffices to adapt without knowing C ahead of time.
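Read literally, this premise defines C as the minimum over a fixed value μ of Σ_t |μ_t − μ|, where μ_t is the mean outcome at round t. A sum of absolute deviations is minimized at a median, so C can be evaluated in simulation as below. This is a sketch under that reading: the helper name `nonstationarity` is hypothetical, and the means μ_t are of course unknown to the online learner, so the computation is useful only for analysis or synthetic experiments.

```python
import statistics

def nonstationarity(means):
    """C = min over mu of sum_t |mu_t - mu|, attained at a median of the means."""
    center = statistics.median(means)
    return sum(abs(m - center) for m in means)

if __name__ == "__main__":
    stationary = [0.5] * 100             # i.i.d. case: every mean is identical
    shifted = [0.25] * 90 + [0.75] * 10  # 10 rounds deviate by 0.5 each
    print(nonstationarity(stationary), nonstationarity(shifted))
```

A stationary sequence gives C = 0, while a sequence whose mean departs from its typical value on a few rounds gives a C proportional to the number and size of those departures.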

What would settle it

A concrete outcome sequence whose mean drift yields a small C yet forces every algorithm using the described epoch schedule and partition to exceed the claimed (TC)^{1/3} term by more than a constant factor.

Original abstract

Making calibrated online predictions is a central challenge in modern AI systems. Much of the existing literature focuses on fully adversarial environments where outcomes may be arbitrary, leading to conservative algorithms that can perform suboptimally in more benign settings, such as when outcomes are nearly stationary. This gap raises a natural question: can we design online prediction algorithms whose calibration error automatically adapts to the degree of non-stationarity in the environment, smoothly interpolating between i.i.d. and adversarial regimes? We answer this question in the affirmative and develop a suite of algorithms that achieve adaptive calibration guarantees under multiple calibration measures. Specifically, with $T$ being the number of rounds and $C\in[0,T]$ being an unknown non-stationary measure defined as the minimal $\ell_1$ deviation of the mean outcomes, our algorithms attain $\widetilde{O}(\sqrt{T}+(TC)^{\frac{1}{3}})$ for $\ell_1$ calibration error and $\widetilde{O}((1+C)^{\frac{1}{3}})$ for both $\ell_2$ and pseudo KL calibration error. These bounds match the optimal rates in the stationary case ($C=0$) and recover known guarantees in the fully adversarial regime ($C=T$). Our approach builds on and extends prior work [Hu et al., 2026, Luo et al., 2025], introducing an epoch-based scheduling together with a novel non-uniform partition of the prediction space that allocates finer resolution near the underlying ground truth.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper develops online prediction algorithms for calibration that adapt to an unknown non-stationarity measure C (minimal ℓ1 deviation of mean outcomes). Using epoch-based scheduling and a non-uniform partition of the prediction space, the algorithms attain Õ(√T + (T C)^{1/3}) ℓ1 calibration error and Õ((1+C)^{1/3}) for both ℓ2 and pseudo-KL calibration error. These rates match the optimal stationary-case bounds when C=0 and recover adversarial guarantees when C=T. The approach extends prior results from Hu et al. (2026) and Luo et al. (2025).

Significance. If the constructions are fully rigorous, the adaptive interpolation between regimes without knowledge of C would be a meaningful advance, as most prior calibration work is either i.i.d.-optimal or fully adversarial. The epoch scheduling and tight boundary-case matching are strengths; the paper also supplies explicit rates under multiple calibration measures.

major comments (2)

- [Abstract / main algorithmic construction] Abstract and approach section: the non-uniform partition is stated to 'allocate finer resolution near the underlying ground truth.' No online mechanism is described for selecting or adapting the partition without knowledge of the mean outcomes (or a proxy for them). If the partition must be fixed in advance, it presupposes information unavailable in the online setting and renders the 'without knowledge of C' guarantee non-implementable. This is load-bearing for the central adaptive claim.

- [Epoch scheduling and partition analysis] Epoch-scheduling argument: the claimed Õ(√T + (T C)^{1/3}) bound for ℓ1 error relies on the interaction between epoch length and the non-uniform bins. Without an explicit derivation showing how the partition choice is independent of the unknown means, it is unclear whether the bound holds or reduces to a quantity that implicitly depends on fitted parameters from Luo et al. (2025).

minor comments (1)

- Notation for pseudo-KL calibration error is introduced without an explicit definition or comparison to standard KL; a short paragraph clarifying the distinction would aid readability.

Simulated Author's Rebuttal

We thank the referee for the careful reading and constructive comments on our work. The points raised about the non-uniform partition and the epoch-scheduling analysis are important for clarifying the online implementability and rigor of our adaptive guarantees. We address each major comment below.

-

Referee: [Abstract / main algorithmic construction] Abstract and approach section: the non-uniform partition is stated to 'allocate finer resolution near the underlying ground truth.' No online mechanism is described for selecting or adapting the partition without knowledge of the mean outcomes (or a proxy for them). If the partition must be fixed in advance, it presupposes information unavailable in the online setting and renders the 'without knowledge of C' guarantee non-implementable. This is load-bearing for the central adaptive claim.

Authors: We agree that the abstract phrasing could lead to confusion and will revise it. The non-uniform partition is a fixed, predetermined construction over the prediction space [0,1] that does not require knowledge of the mean outcomes, C, or any proxy. It uses a static scheme (detailed in Section 3 of the manuscript) with bin widths that decrease in a data-independent manner to allocate finer resolution in regions that improve calibration bounds across regimes. This is fully online-implementable from the start and does not presuppose unavailable information. The description 'near the underlying ground truth' is an interpretive summary of its effect under low non-stationarity, not a claim that the partition adapts to the unknown means. We will expand the approach section with an explicit algorithmic description of the partition construction to eliminate any ambiguity.

revision: yes

-

Referee: [Epoch scheduling and partition analysis] Epoch-scheduling argument: the claimed Õ(√T + (T C)^{1/3}) bound for ℓ1 error relies on the interaction between epoch length and the non-uniform bins. Without an explicit derivation showing how the partition choice is independent of the unknown means, it is unclear whether the bound holds or reduces to a quantity that implicitly depends on fitted parameters from Luo et al. (2025).

Authors: The epoch schedule is completely independent of C and the unknown means, using a fixed doubling schedule that requires no fitting. The ℓ1 bound derivation (in the proof of Theorem 1) explicitly accounts for the interaction by bounding the per-epoch calibration error via the non-uniform bin widths and the total variation C accumulated across epochs; the partition enters the analysis only through its fixed, predetermined widths and does not introduce any dependence on fitted parameters from prior work. Our extension of Luo et al. (2025) uses their base regret bounds but substitutes the new partition and epoch analysis without implicit fitting. We will add a self-contained proof sketch in the main text (or expanded appendix) that isolates the independence of the partition choice from the means.

revision: yes
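The "fixed doubling schedule" mentioned in this response can be pictured with a short sketch. The details here are assumptions made for illustration only: epochs of length 1, 2, 4, ..., with the final epoch truncated at T. The paper's actual starting length, and what the algorithm resets at each epoch boundary, are not stated on this page. The function name `doubling_epochs` is hypothetical.

```python
def doubling_epochs(T):
    """Split rounds 0..T-1 into epochs of length 1, 2, 4, ... (last one truncated).

    Returns a list of (start, end) pairs with end exclusive. The schedule
    depends only on T, not on C or on the observed outcomes.
    """
    epochs = []
    start, length = 0, 1
    while start < T:
        end = min(start + length, T)
        epochs.append((start, end))
        start, length = end, 2 * length
    return epochs

if __name__ == "__main__":
    print(doubling_epochs(10))
    print(len(doubling_epochs(10**6)))
```

Under these assumptions the number of epochs grows only logarithmically in T, which is why a per-epoch overhead typically costs no more than a logarithmic factor of the kind absorbed by the Õ notation.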

Circularity Check

No significant circularity in derivation chain

The paper introduces epoch-based scheduling and a non-uniform partition of the prediction space as algorithmic innovations to achieve the stated adaptive calibration bounds that interpolate between stationary (C=0) and adversarial (C=T) regimes. These bounds are presented as consequences of the analysis of the new algorithms, which extend but do not reduce to prior results by self-citation or redefinition. No load-bearing step equates a derived quantity to an input parameter by construction, nor renames a fitted value as a prediction. The non-uniform partition is described as a fixed design element within the algorithm rather than a quantity derived from the target bounds themselves. The derivation remains self-contained against the external benchmarks of matching known rates at the extremes of C.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption: Non-stationarity of the environment is captured by C, the minimal ℓ1 deviation of the mean outcomes.

Reference graph

Works this paper leans on

- [1] J. Błasiok, P. Gopalan, L. Hu, and P. Nakkiran. A unifying theory of distance from calibration. In Proceedings of the 55th Annual ACM Symposium on Theory of Computing, pages 1727--1740, 2023.

- [2] L. Chen, H. Luo, and C.-Y. Wei. Impossible tuning made possible: A new expert algorithm and its applications. In Conference on Learning Theory, 2021.

- [3]

- [4] A. P. Dawid. The well-calibrated Bayesian. Journal of the American Statistical Association, 77(379):605--610, 1982.

- [5] M. Fishelson, R. Kleinberg, P. Okoroafor, R. P. Leme, J. Schneider, and Y. Teng. Full swap regret and discretized calibration. arXiv preprint arXiv:2502.09332, 2025.

- [6] D. P. Foster and S. Hart. "Calibeating": Beating forecasters at their own game. Theoretical Economics, 18(4):1441--1474, 2023.

- [7] D. P. Foster and R. V. Vohra. Asymptotic calibration. Biometrika, 85(2):379--390, 1998.

- [8] N. Haghtalab, M. Qiao, K. Yang, and E. Zhao. Truthfulness of calibration measures. In Advances in Neural Information Processing Systems, 2024.

- [9]

- [10]

- [11] L. Hu, H. Luo, S. Senapati, and V. Sharan. Efficient swap multicalibration of elicitable properties. In Conference on Learning Theory, 2026.

- [12] T. Jin, J. Liu, C. Rouyer, W. Chang, C.-Y. Wei, and H. Luo. No-regret online reinforcement learning with adversarial losses and transitions. In Advances in Neural Information Processing Systems, 2023.

- [13] S. M. Kakade and D. P. Foster. Deterministic calibration and Nash equilibrium. Journal of Computer and System Sciences, 74(1):115--130, 2008.

- [14] B. Kleinberg, R. P. Leme, J. Schneider, and Y. Teng. U-calibration: Forecasting for an unknown agent. In Conference on Learning Theory, 2023.

- [15] J. Liu, H. Luo, Z. Zhang, and L. J. Ratliff. Online learning for uninformed Markov games: Empirical Nash-value regret and non-stationarity adaptation. In Conference on Learning Theory, 2026.

- [16] H. Luo, S. Senapati, and V. Sharan. Optimal multiclass U-calibration error and beyond. In Advances in Neural Information Processing Systems, 2024.

- [17] H. Luo, S. Senapati, and V. Sharan. Simultaneous swap regret minimization via KL-calibration. In Advances in Neural Information Processing Systems, 2025.

- [18] M. Qiao and G. Valiant. Stronger calibration lower bounds via sidestepping. In Proceedings of the 53rd Annual ACM SIGACT Symposium on Theory of Computing, 2021.

- [19] M. Qiao and L. Zheng. On the distance from calibration in sequential prediction. In Conference on Learning Theory, 2024.
