Recognition: 2 theorem links

· Lean TheoremRainbow Deep Q-Learning with Kinematics-Aware Design for Cooperative Delta and 3-RRS Parallel Robot Insertion

Pith reviewed 2026-05-13 06:10 UTC · model grok-4.3

The pith

Tuning the 3-RRS robot geometry to enlarge its singularity-free workspace lets Rainbow DQN learn reliable cooperative peg-in-hole insertions.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

By first optimizing the 3-RRS geometry to maximize the singularity-free workspace and improve conditioning, the cooperative Delta-plus-3-RRS system exposes a larger safe region in which the Rainbow DQN policy can explore, allowing it to learn insertion behaviors that succeed reliably while respecting kinematic limits.

What carries the argument

The kinematics-aware design-optimization stage that tunes 3-RRS geometry to maximize singularity-free workspace, which enlarges the safe exploration region for the subsequent Rainbow DQN training on the 12-dimensional state MDP.

If this is right

- The co-designed system achieves stable policy convergence in the high-fidelity kinematic simulator.

- The learned policy performs reliable insertions on the five-dimensional task manifold.

- Constraint violations drop compared with a vanilla DQN agent and a classical sampling-based planner.

- The two-stage curriculum supports effective training of the shaped-reward MDP.

Where Pith is reading between the lines

- The same pre-optimization of parallel-robot geometry could be tested on other cooperative manipulator pairs to check whether it consistently aids RL convergence.

- If the kinematic simulator matches real dynamics, the policy may transfer to hardware with only light fine-tuning rather than full retraining.

- Adding joint-velocity limits or compliance terms to the state could reveal whether the current kinematic focus is sufficient when dynamics become non-negligible.

Load-bearing premise

That optimizing the 3-RRS geometry to maximize its singularity-free workspace will meaningfully enlarge the safe region available for reinforcement learning exploration and produce better policies.

What would settle it

Training the identical Rainbow DQN without the geometry optimization step and measuring whether convergence speed, insertion success rate, and constraint-violation count remain unchanged, or transferring the learned policy to physical hardware and observing a sharp drop in reliable insertions.

Figures

read the original abstract

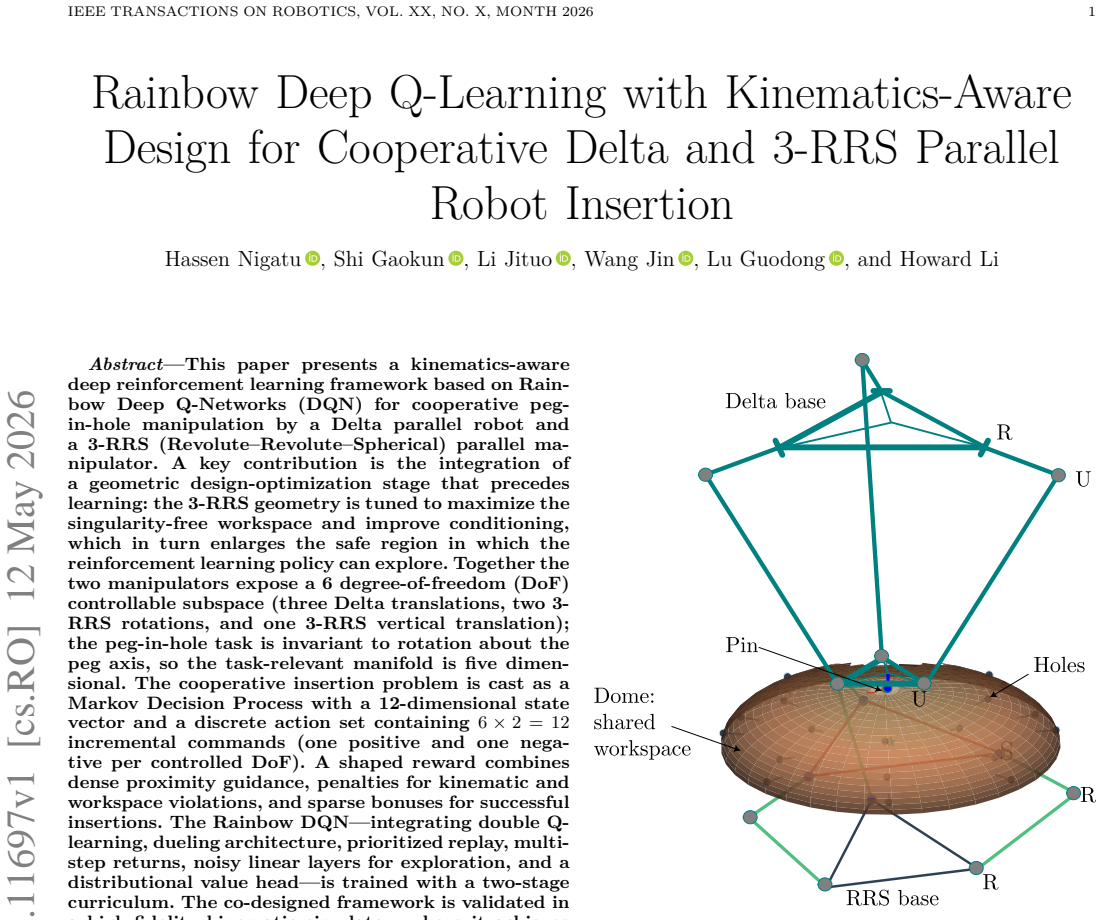

This paper presents a kinematics-aware deep reinforcement learning framework based on Rainbow Deep Q-Networks (DQN) for cooperative peg-in-hole manipulation by a Delta parallel robot and a 3-RRS (Revolute--Revolute--Spherical) parallel manipulator. A key contribution is the integration of a geometric design-optimization stage that precedes learning: the 3-RRS geometry is tuned to maximize the singularity-free workspace and improve conditioning, which in turn enlarges the safe region in which the reinforcement learning policy can explore. Together the two manipulators expose a 6~degree-of-freedom (DoF) controllable subspace (three Delta translations, two 3-RRS rotations, and one 3-RRS vertical translation); the peg-in-hole task is invariant to rotation about the peg axis, so the task-relevant manifold is five dimensional. The cooperative insertion problem is cast as a Markov Decision Process with a 12-dimensional state vector and a discrete action set containing $6 \times 2 = 12$ incremental commands (one positive and one negative per controlled DoF). A shaped reward combines dense proximity guidance, penalties for kinematic and workspace violations, and sparse bonuses for successful insertions. The Rainbow DQN -- integrating double Q-learning, dueling architecture, prioritized replay, multi-step returns, noisy linear layers for exploration, and a distributional value head -- is trained with a two-stage curriculum. The co-designed framework is validated in a high-fidelity kinematic simulator, where it achieves stable policy convergence, reliable insertions, and reduced constraint violations compared against a vanilla DQN agent and a classical sampling-based planner.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript presents a kinematics-aware Rainbow DQN framework for cooperative peg-in-hole insertion using a Delta parallel robot and a 3-RRS parallel manipulator. A geometric optimization stage tunes the 3-RRS to maximize singularity-free workspace before RL training; the task is formulated as an MDP with a 12-dimensional state, 12 discrete incremental actions, and a shaped reward combining proximity, kinematic penalties, and sparse success bonuses. Rainbow DQN (with double Q, dueling, prioritized replay, multi-step returns, noisy layers, and distributional head) is trained via two-stage curriculum. Validation occurs in a high-fidelity kinematic simulator, reporting stable convergence, reliable insertions, and fewer constraint violations versus vanilla DQN and a sampling-based planner.

Significance. If the kinematic simulation results prove robust under more complete dynamics, the work would usefully demonstrate how mechanism design optimization can enlarge the feasible region for RL exploration in parallel-robot cooperative tasks, extending Rainbow DQN to a 6-DoF hybrid system with a 5-DoF task manifold. The explicit separation of design and learning stages, together with the curriculum, provides a reproducible template that could be tested on other parallel mechanisms.

major comments (2)

- [Abstract and validation experiments] Abstract and validation experiments: the central claim of reliable insertions and reduced constraint violations rests on results from a purely kinematic simulator. Peg-in-hole insertion is contact-rich; the shaped reward penalizes only kinematic/workspace violations with no force, friction, or compliance terms, so the reported superiority over baselines may not survive when contact dynamics are present. This modeling gap directly affects transferability of the policy-convergence and reliability claims.

- [MDP formulation and reward section] MDP formulation and reward section: the assumption that maximizing the singularity-free workspace of the 3-RRS automatically enlarges the safe exploration region for RL is stated but not quantified; no ablation shows the performance delta attributable to the optimized geometry versus an unoptimized 3-RRS under identical RL training.

minor comments (2)

- [Abstract and results] The abstract and results paragraphs do not report the number of independent training runs, statistical tests, or variance measures supporting the convergence and insertion-success claims.

- [MDP formulation] Notation for the 12-dimensional state vector and the exact mapping of the six controlled DoFs (three Delta translations, two 3-RRS rotations, one vertical translation) should be tabulated for clarity.

Simulated Author's Rebuttal

We thank the referee for the constructive comments, which help clarify the scope and limitations of our work. We address each major point below and indicate the revisions we will incorporate.

read point-by-point responses

-

Referee: [Abstract and validation experiments] Abstract and validation experiments: the central claim of reliable insertions and reduced constraint violations rests on results from a purely kinematic simulator. Peg-in-hole insertion is contact-rich; the shaped reward penalizes only kinematic/workspace violations with no force, friction, or compliance terms, so the reported superiority over baselines may not survive when contact dynamics are present. This modeling gap directly affects transferability of the policy-convergence and reliability claims.

Authors: We agree that the purely kinematic simulator is a significant modeling gap for a contact-rich task. The shaped reward and reported metrics are indeed limited to kinematic constraints, and we cannot claim that the observed superiority will necessarily hold under full dynamics. The kinematics-aware design stage still provides value by enlarging the singularity-free region, which would remain relevant to avoid lock-ups even in dynamic models. We will revise the abstract to explicitly qualify results as obtained in kinematic simulation, add a limitations subsection discussing the absence of force/friction modeling, and tone down transferability claims while highlighting the framework as a reproducible template for future dynamic extensions. revision: partial

-

Referee: [MDP formulation and reward section] MDP formulation and reward section: the assumption that maximizing the singularity-free workspace of the 3-RRS automatically enlarges the safe exploration region for RL is stated but not quantified; no ablation shows the performance delta attributable to the optimized geometry versus an unoptimized 3-RRS under identical RL training.

Authors: The referee is correct that no direct ablation isolating the geometric optimization's contribution is provided. The design stage follows standard practice in parallel-robot literature for improving workspace conditioning, but we did not run the identical Rainbow DQN training on an unoptimized 3-RRS geometry. We will expand the relevant section with additional references to workspace-optimization benefits in RL contexts and explicitly note the missing quantitative delta as a limitation. A full ablation would require new experiments; we will therefore add only textual clarification and rationale rather than new results. revision: partial

Circularity Check

No significant circularity in the co-design and RL pipeline

full rationale

The paper presents a sequential, non-circular derivation: an independent geometric optimization stage first tunes the 3-RRS parameters to enlarge the singularity-free workspace, after which the Rainbow DQN policy is trained in the resulting configuration. The MDP formulation, shaped reward (proximity terms plus kinematic penalties), and curriculum are defined directly from task geometry and simulator constraints without any fitted parameter being relabeled as a prediction or any self-citation serving as a load-bearing uniqueness theorem. Validation metrics are obtained from explicit simulator rollouts rather than by algebraic identity with the design inputs. No step reduces to its own inputs by construction.

Axiom & Free-Parameter Ledger

free parameters (2)

- reward shaping weights

- action increment sizes

axioms (2)

- domain assumption The peg-in-hole task is invariant to rotation about the peg axis, so the task-relevant manifold is five dimensional.

- domain assumption The high-fidelity kinematic simulator accurately models real robot behavior for policy validation.

Lean theorems connected to this paper

-

IndisputableMonolith/Foundation/AlexanderDuality.leanalexander_duality_circle_linking unclear?

unclearRelation between the paper passage and the cited Recognition theorem.

geometric optimization of the 3-RRS geometry is tuned to maximize the singularity-free workspace... objective is to maximize the area of the singularity-free orientation workspace max Aw = ∬_Ω dθx dθy, Ω = {(θx,θy) | σ_min(J) ≥ 0.15}

-

IndisputableMonolith/Cost/FunctionalEquation.leanwashburn_uniqueness_aczel unclear?

unclearRelation between the paper passage and the cited Recognition theorem.

shaped reward combines dense proximity guidance, penalties for kinematic and workspace violations, and sparse bonuses for successful insertions

What do these tags mean?

- matches

- The paper's claim is directly supported by a theorem in the formal canon.

- supports

- The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.

- extends

- The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.

- uses

- The paper appears to rely on the theorem as machinery.

- contradicts

- The paper's claim conflicts with a theorem or certificate in the canon.

- unclear

- Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.

Reference graph

Works this paper leans on

-

[1]

R. S. Sutton and A. G. Barto,Reinforcement Learning: An Introduction. MIT Press, 2018

work page 2018

-

[2]

Multi-robotsystemsandsoftrobotics,

X.Chenetal.,“Multi-robotsystemsandsoftrobotics,”Sensors, vol. 25, p. 1353, 2025

work page 2025

-

[3]

Peg-in-hole robotic assembly: A survey,

S. Sunet al., “Peg-in-hole robotic assembly: A survey,”IEEE Trans. Automation Science & Eng., 2023

work page 2023

-

[4]

Human-level control through deep reinforce- ment learning,

V. Mnihet al., “Human-level control through deep reinforce- ment learning,”Nature, vol. 518, pp. 529–533, 2015

work page 2015

-

[5]

Drl for robotics: Real-world successes,

C. Tanget al., “Drl for robotics: Real-world successes,” arXiv:2408.03539, 2024

-

[6]

Multi-agent actor-critic for mixed cooperative- competitive environments,

R. Loweet al., “Multi-agent actor-critic for mixed cooperative- competitive environments,”Proc. NeurIPS, 2017

work page 2017

-

[7]

Decentralised q-learning for multi- agent markov decision processes,

K. Keval and V. Borkar, “Decentralised q-learning for multi- agent markov decision processes,”arXiv:2311.12613, 2023

-

[8]

Edge-enabled digital twin for multi-robot collision avoidance,

D. Mtoweet al., “Edge-enabled digital twin for multi-robot collision avoidance,”Sensors, vol. 25, p. 4666, 2025

work page 2025

-

[9]

The delta parallel robot: Kinematics so- lutions,

R. L. Williams II, “The delta parallel robot: Kinematics so- lutions,” 2016, available at: https://people.ohio.edu/williams/ html/PDF/DeltaKin.pdf

work page 2016

-

[10]

Device for the movement and positioning of an element in space,

R. Clavel, “Device for the movement and positioning of an element in space,”U.S. Patent, no. 4,976,582, 1990

work page 1990

-

[11]

The 3-rrs wrist: A new spherical parallel manipulator,

R. Di Gregorio, “The 3-rrs wrist: A new spherical parallel manipulator,”Journal of Mechanical Design, vol. 126, pp. 850– 855, 2004

work page 2004

-

[12]

Kinematic performance of a 3-rrs parallel mech- anism,

D. Guoet al., “Kinematic performance of a 3-rrs parallel mech- anism,”Robotica, vol. 38, pp. 1252–1271, 2020

work page 2020

-

[13]

Inverse kinematics of the 3-rrs parallel platform,

J. Liet al., “Inverse kinematics of the 3-rrs parallel platform,” inProc. ICRA, 2001, pp. 2506–2511

work page 2001

-

[14]

Position kinematics of a 3-rrs parallel manipu- lator,

H. Tetiket al., “Position kinematics of a 3-rrs parallel manipu- lator,” 2015

work page 2015

-

[15]

Asynchronous multi-agent rl for real-time coopera- tive exploration,

A. Team, “Asynchronous multi-agent rl for real-time coopera- tive exploration,”arXiv, 2025

work page 2025

-

[16]

Learning to communicate with deep multi- agent reinforcement learning,

J. Foersteret al., “Learning to communicate with deep multi- agent reinforcement learning,” inProc. NeurIPS, 2016

work page 2016

-

[17]

Delta-like parallel robot for peg-in-hole assem- bly,

P. Chenet al., “Delta-like parallel robot for peg-in-hole assem- bly,”Journal of Mechanisms and Robotics, vol. 17, p. 021014, 2024

work page 2024

-

[18]

Domainrandomizationforsim-to-realtransfer,

J.Tobinetal.,“Domainrandomizationforsim-to-realtransfer,” inProc. IROS, 2017, pp. 23–30

work page 2017

-

[19]

Y. Bengioet al., “Curriculum learning,” inProc. ICML, 2009, pp. 41–48

work page 2009

-

[20]

Curriculum design for dual-arm peg-in-hole assembly,

G. Germanoet al., “Curriculum design for dual-arm peg-in-hole assembly,”Machines, vol. 12, p. 1385, 2024

work page 2024

-

[21]

Reverse curriculum generation for rl,

C. Florensaet al., “Reverse curriculum generation for rl,” in Proc. CoRL, 2017

work page 2017

-

[22]

Singularity analysis of 3-rrs parallel manipulator,

H. Tetik and G. Kiper, “Singularity analysis of 3-rrs parallel manipulator,” inAdvances in Mechanism and Machine Science, 2018, pp. 349–356

work page 2018

-

[23]

Design of 3-rrs parallel ankle rehabilitation robot,

P. Zhaoet al., “Design of 3-rrs parallel ankle rehabilitation robot,”Applied Sciences, vol. 12, p. 6125, 2022

work page 2022

-

[24]

A comprehensive survey on safe reinforcement learning,

J. García and F. Fernández, “A comprehensive survey on safe reinforcement learning,”JMLR, vol. 16, pp. 1437–1480, 2015

work page 2015

-

[25]

L. Brunkeet al., “Safe learning in robotics,”Annual Review of Control, Robotics, and Autonomous Systems, vol. 5, pp. 411– 444, 2022

work page 2022

-

[26]

Collision avoidance algorithms for multi-robot systems,

H. Yeet al., “Collision avoidance algorithms for multi-robot systems,”Autonomous Robots, 2025

work page 2025

-

[27]

Rainbow: Combining improvements in deep reinforcement learning,

M. Hesselet al., “Rainbow: Combining improvements in deep reinforcement learning,”Proc. AAAI, 2018

work page 2018

-

[28]

Deep reinforcement learning with double Q-learning,

H. van Hasselt, A. Guez, and D. Silver, “Deep reinforcement learning with double Q-learning,” inProceedings of the Thirti- eth AAAI Conference on Artificial Intelligence, ser. AAAI’16. AAAI Press, Feb 2016, pp. 2094–2100

work page 2016

-

[29]

Dueling network architectures for deep reinforcementlearning,

Z. Wang, T. Schaul, M. Hessel, H. van Hasselt, M. Lanctot, and N. de Freitas, “Dueling network architectures for deep reinforcementlearning,”inProceedingsofthe33rdInternational Conference on Machine Learning, ser. Proceedings of Machine Learning Research, M. F. Balcan and K. Q. Weinberger, Eds., vol. 48. New York, New York, USA: PMLR, 20–22 Jun 2016, pp. ...

work page 2016

-

[30]

T. Schaul, J. Quan, I. Antonoglou, and D. Silver, “Prioritized experience replay,”CoRR, vol. abs/1511.05952, 2015. [Online]. Available: http://arxiv.org/abs/1511.05952

work page Pith review arXiv 2015

-

[31]

Prioritized experience replay,

T. Schaulet al., “Prioritized experience replay,”Proc. ICLR, 2016

work page 2016

-

[32]

Noisy Networks for Exploration

M. Fortunato, M. G. Azar, B. Piot, J. Menick, I. Osband, A. Graves, V. Mnih, R. Munos, D. Hassabis, O. Pietquin, C. Blundell, and S. Legg, “Noisy networks for exploration,” CoRR, vol. abs/1706.10295, 2017. [Online]. Available: http: //arxiv.org/abs/1706.10295

-

[33]

A distributional perspective on reinforcement learning,

M. G. Bellemare, W. Dabney, and R. Munos, “A distributional perspective on reinforcement learning,” inProceedings of the 34th International Conference on Machine Learning (ICML 2017), ser. Proceedings of Machine Learning Research, D. Pre- cup and Y. W. Teh, Eds., vol. 70. Sydney, Australia: PMLR, 6–11 Aug 2017, pp. 449–458

work page 2017

-

[34]

Viki-r: Multi-agent cooperation via rl,

S. A. Lab, “Viki-r: Multi-agent cooperation via rl,” arXiv:2506.09049, 2025

-

[35]

Learning safe control for multi-robot systems,

K. Garget al., “Learning safe control for multi-robot systems,” Robotics and Autonomous Systems, 2024

work page 2024

-

[36]

Safe multi-agent rl for multi-robot control,

X. Liet al., “Safe multi-agent rl for multi-robot control,”Arti- ficial Intelligence, vol. 322, p. 103905, 2023

work page 2023

-

[37]

Hierarchical distributed policies for multi-robot transport,

Y. Naitoet al., “Hierarchical distributed policies for multi-robot transport,”arXiv:2404.02362, 2024

-

[38]

Learning from delayed rewards,

C. J. C. H. Watkins, “Learning from delayed rewards,” Ph.D. dissertation, King’s College, University of Cambridge, Cam- bridge, United Kingdom, 1989

work page 1989

-

[39]

C. J. C. H. Watkins and P. Dayan, “Q-learning,”Machine Learning, vol. 8, no. 3-4, pp. 279–292, 1992

work page 1992

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.