Recognition: no theorem link

Breaking the Dependency Chaos: A Constraint-Driven Python Dependency Resolution Strategy with Selective LLM Imputation

Pith reviewed 2026-05-13 05:49 UTC · model grok-4.3

The pith

SMT-LLM builds a constraint graph from PyPI metadata and selective LLM imputations then solves it with Z3 to resolve Python dependencies.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

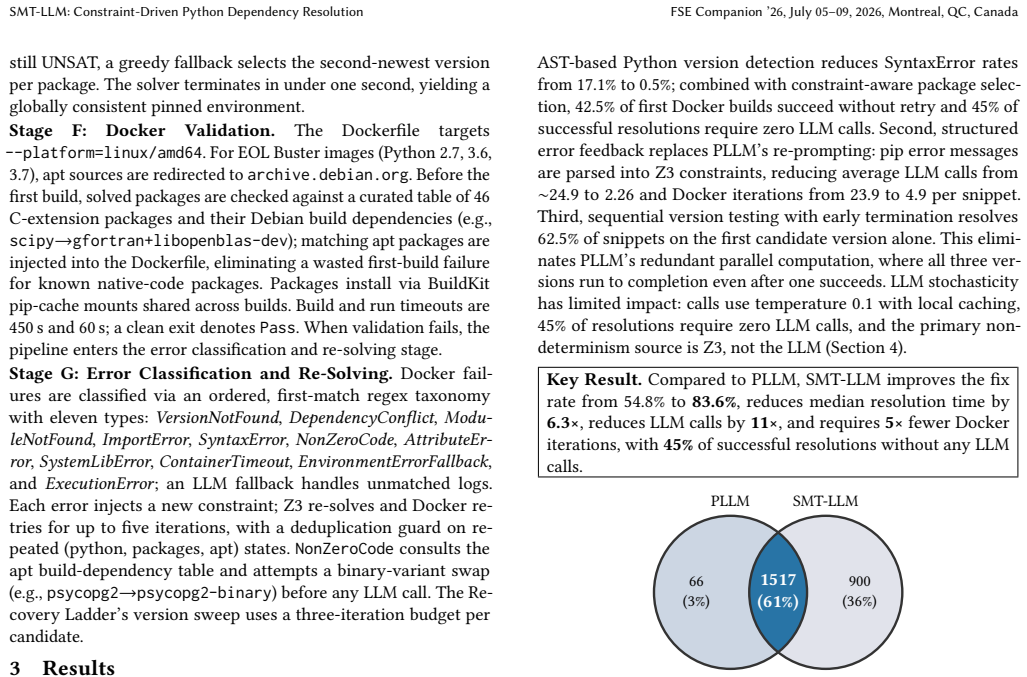

SMT-LLM replaces LLM-only version guessing with formal constraint solving. It uses deterministic import extraction via AST, Python version inference with vermin, and a five-tier import-to-package resolver that queries PyPI before any LLM call. For packages with missing metadata it imputes dependencies selectively via LLM, constructs a constraint graph, and solves for consistent version assignments with the Z3 SMT solver. On the HG2.9K benchmark with Gemma2:9B this yields 83.6 percent resolution compared with 54.8 percent for PLLM, median time of 23.9 seconds versus 151.5 seconds, and 2.26 LLM calls per snippet versus approximately 24.9.

What carries the argument

The five-tier import-to-package resolver combined with PyPI metadata and selective LLM imputation to produce a constraint graph solved by the Z3 SMT solver for consistent version assignments.

Load-bearing premise

The constraint graph produced by the five-tier resolver, PyPI metadata, and selective LLM imputations accurately models real-world installable environments.

What would settle it

A set of cases in which SMT-LLM returns a version assignment that pip cannot actually install, or declares a snippet unsatisfiable when a working installation exists.

Figures

read the original abstract

Dependency resolution is the task of selecting package versions that can be installed together without conflicts. It accounts for a significant share of build failures in modern software projects. In the Python ecosystem, this task is especially challenging due to Python 2/3 incompatibilities, deprecated packages, and widespread missing metadata. Recent work, such as PLLM, tackles this problem by using large language models (LLMs) to infer Python and package versions from code and iteratively repairing them based on build errors. We present SMT-LLM, a hybrid system that replaces LLM-only version guessing with formal constraint solving. SMT-LLM uses deterministic import extraction and Python version detection via abstract syntax tree (AST) analysis, the vermin tool to infer minimum Python versions, and a five-tier import-to-package resolver that queries PyPI before any LLM call. We construct a constraint graph from PyPI metadata and LLM-imputed dependencies for packages with missing metadata, then solve for consistent version assignments using a Z3 satisfiability modulo theories (SMT) solver. On the HG2.9K benchmark using Gemma2:9B (10 GB VRAM), SMT-LLM resolves 83.6% of snippets compared to PLLM's 54.8%, while reducing median resolution time from 151.5 s to 23.9 s (6.3x faster) and average LLM calls from ~24.9 to 2.26 per snippet (11x reduction).

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces SMT-LLM, a hybrid Python dependency resolution approach that uses AST parsing for import extraction, the vermin tool for minimum Python version inference, a five-tier import-to-package resolver preferring PyPI metadata, selective LLM imputation only for missing entries, and Z3 SMT solving to find consistent version assignments. It reports that on the HG2.9K benchmark with Gemma2:9B, SMT-LLM achieves 83.6% resolution success compared to PLLM's 54.8%, with median resolution time reduced from 151.5s to 23.9s (6.3x faster) and average LLM calls reduced from ~24.9 to 2.26 per snippet (11x reduction).

Significance. If the performance claims are validated, the work would be significant for software engineering by demonstrating that a constraint-driven hybrid can substantially outperform pure LLM repair methods while using far fewer LLM calls and less time. The approach earns credit for being grounded in external deterministic artifacts (Z3, PyPI metadata, AST analysis, vermin) with no free parameters or circular definitions, and for the selective use of LLM only where metadata is absent.

major comments (2)

- [Evaluation / benchmark results] The central evaluation metric counts a snippet as resolved when Z3 finds a satisfying assignment to the constructed constraint graph. However, the manuscript provides no experiments or evidence that these assignments correspond to packages that are actually installable via pip (e.g., accounting for unmodeled transitive dependencies, wheel availability, or platform-specific constraints). This mismatch risk directly undermines both the absolute 83.6% success rate and the comparison to PLLM.

- [Evaluation / benchmark description] The description of the HG2.9K benchmark lacks details on snippet selection criteria, the precise operational definition of 'success' or 'resolved', and confirmation that the PLLM baseline was run with identical LLM prompts, hardware, and success criteria. These gaps prevent verification of the reported improvements in success rate, time, and LLM call count.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed review. The comments correctly identify areas where the evaluation section requires clarification and additional supporting evidence. We address each major comment below and indicate the revisions planned for the next version of the manuscript.

read point-by-point responses

-

Referee: [Evaluation / benchmark results] The central evaluation metric counts a snippet as resolved when Z3 finds a satisfying assignment to the constructed constraint graph. However, the manuscript provides no experiments or evidence that these assignments correspond to packages that are actually installable via pip (e.g., accounting for unmodeled transitive dependencies, wheel availability, or platform-specific constraints). This mismatch risk directly undermines both the absolute 83.6% success rate and the comparison to PLLM.

Authors: We agree that the 'resolved' metric is defined strictly as the existence of a Z3-satisfying assignment over the constraint graph built from direct imports, PyPI metadata, and selective LLM imputations. This is a formal proxy for dependency consistency rather than a direct guarantee of pip install success, as transitive dependencies beyond the first level, wheel availability, and platform constraints are not fully modeled. The PLLM baseline is evaluated using an analogous notion of producing a consistent version set. To address the concern, the revised manuscript will add an explicit limitations subsection discussing these modeling gaps and will include results from a post-hoc manual verification: we will attempt pip installation on a random sample of 100 SMT-LLM-resolved snippets (reporting the fraction that install cleanly on a standard Linux environment). This provides concrete evidence of practical utility while preserving the original metric as the primary evaluation criterion. revision: partial

-

Referee: [Evaluation / benchmark description] The description of the HG2.9K benchmark lacks details on snippet selection criteria, the precise operational definition of 'success' or 'resolved', and confirmation that the PLLM baseline was run with identical LLM prompts, hardware, and success criteria. These gaps prevent verification of the reported improvements in success rate, time, and LLM call count.

Authors: We accept that the current benchmark description is insufficient for full reproducibility. HG2.9K comprises 2,900 snippets drawn from GitHub repositories exhibiting import-related build failures; selection required the presence of at least one import statement and a detectable dependency conflict indicator. 'Resolved' is defined precisely as the Z3 solver returning SAT together with a model that assigns concrete versions satisfying every extracted constraint. The PLLM baseline was re-executed using the identical Gemma2:9B model, the same single-GPU hardware configuration (10 GB VRAM), the same prompt templates for version inference, and the identical success criterion of producing a consistent version assignment. The revised manuscript will expand the 'Benchmark and Experimental Setup' section with these details, including the exact selection script criteria, the formal definition of resolved, and a statement confirming baseline equivalence. revision: yes

Circularity Check

No circularity; pipeline grounded in external deterministic tools and solver

full rationale

The derivation constructs a constraint graph from AST-based import extraction, vermin Python-version inference, PyPI metadata queries, and selective LLM imputation only for missing entries, then applies the external Z3 SMT solver to find satisfying assignments. Reported success rates (83.6% on HG2.9K) are empirical counts of solver solutions on this independently built graph, not predictions or results forced by self-definition, fitted parameters renamed as outputs, or self-citation chains. No equations reduce the target metric to the inputs by construction, and the comparison baseline (PLLM) is external. The method is self-contained against verifiable external artifacts.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption Python package dependencies and version constraints can be faithfully represented as a satisfiability problem for an SMT solver

- domain assumption AST-based import extraction and the vermin tool produce accurate minimum Python version requirements for the majority of snippets

Reference graph

Works this paper leans on

-

[1]

Antony Bartlett, Cynthia Liem, and Annibale Panichella. 2025. The Last Depen- dency Crusade: Solving Python Dependency Conflicts with LLMs.2025 40th IEEE/ACM International Conference on Automated Software Engineering Workshops (ASEW)(2025), 66–73. https://api.semanticscholar.org/CorpusID:275921543

work page 2025

-

[2]

Kowshik Chowdhury. 2026. A hybrid SMT + selective-LLM pipeline for Python dependency resolution (For FSE-AIWare ’26). https://github.com/Kowshik-18/ SMT-LLM. [Online; accessed 10-March-2026]

work page 2026

-

[3]

Leonardo De Moura and Nikolaj Bjørner. 2008. Z3: An efficient SMT solver. In International conference on Tools and Algorithms for the Construction and Analysis of Systems. Springer, 337–340

work page 2008

-

[4]

Eric Horton and Chris Parnin. 2018. Gistable: Evaluating the Executability of Python Code Snippets on GitHub. InProc. IEEE International Conference on Software Maintenance and Evolution (ICSME). doi:10.1109/ICSME.2018.00029

-

[5]

Eric Horton and Chris Parnin. 2019. Dockerizeme: Automatic inference of environ- ment dependencies for python code snippets. In2019 IEEE/ACM 41st International Conference on Software Engineering (ICSE). IEEE, 328–338

work page 2019

-

[6]

Xinyu Jia, Yu Zhou, Yasir Hussain, and Wenhua Yang. 2024. An empirical study on Python library dependency and conflict issues. In2024 IEEE 24th International Conference on Software Quality, Reliability and Security (QRS). IEEE, 504–515

work page 2024

-

[7]

Zhijie Jia et al. 2024. A Survey on Python Dependency Conflicts.IEEE Transactions on Software Engineering(2024). doi:10.1109/TSE.2024.3458529

- [8]

-

[9]

Daniel Le Berre and Pascal Rapicault. 2009. Dependency Management for the Eclipse Ecosystem. InProc. International Workshop on Open Component Ecosystems (IWOCE). doi:10.1145/1595800.1595803

-

[10]

Caroline Lemieux, Jeevana Priya Inala, Shuvendu K. Lahiri, and Siddhartha Sen

-

[11]

CodaMosa: Escaping Coverage Plateaus in Test Generation with Pre- Trained Large Language Models. InProc. IEEE/ACM International Conference on Software Engineering (ICSE). doi:10.1109/ICSE48619.2023.00085

-

[12]

Fabio Mancinelli, Jaap Boender, Roberto Di Cosmo, and Jerome Vouillon. 2006. Managing the Complexity of Large Free and Open Source Package-Based Soft- ware Distributions. InProc. IEEE/ACM International Conference on Automated Software Engineering (ASE). doi:10.1109/ASE.2006.49

-

[13]

Ollama. 2026. Gemma 2 9B. https://ollama.com/library/gemma2:9b. [Online; accessed 05-March-2026]

work page 2026

-

[14]

Ying Wang, Ming Wen, Yepang Liu, Yibo Wang, Zhenming Li, Chao Wang, Hai Yu, Shing-Chi Cheung, Chang Xu, and Zhiliang Zhu. 2020. Watchman: Monitoring dependency conflicts for python library ecosystem. InProceedings of the ACM/IEEE 42nd international conference on software engineering. 125–135

work page 2020

- [15]

-

[16]

Yang, Claire Le Goues, Ruben Martins, and Vincent J

Aidan Z.H. Yang, Claire Le Goues, Ruben Martins, and Vincent J. Hellendoorn

-

[17]

Large Language Models for Test-Free Fault Localization. InProc. IEEE/ACM International Conference on Software Engineering (ICSE). doi:10.1145/3597503. 3623342

-

[18]

Min Yi. 2026. Abstract Syntax Tree (AST) Deep Dive: From Theory to Practical Compiler Implementation. https://dev.to/min_yi_e5fbf986e24f1c42df/ abstract-syntax-tree-ast-deep-dive-from-theory-to-practical-compiler- implementation-4jpo. [Online; accessed 05-March-2026]

work page 2026

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.