Recognition: no theorem link

Polar Complexity: A New Descriptive Complexity with Applications to Source and Joint Source-Channel Coding

Pith reviewed 2026-05-13 05:20 UTC · model grok-4.3

The pith

Polar complexity is the minimum polar-compression length permitting exact reconstruction of a binary sequence under successive cancellation decoding.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

We define the polar complexity of a finite-length binary sequence as the minimum polar-compression length required for its exact reconstruction under successive cancellation decoding. Efficient algorithms compute this value, which is then used to construct a strictly lossless two-stage source code. For binary memoryless sources the normalized average length of this code asymptotically approaches the source entropy. The same polar complexity is incorporated into an adaptive double-polar joint source-channel coding scheme whose candidate set is optimized via dynamic programming to balance error performance against decoding complexity.

What carries the argument

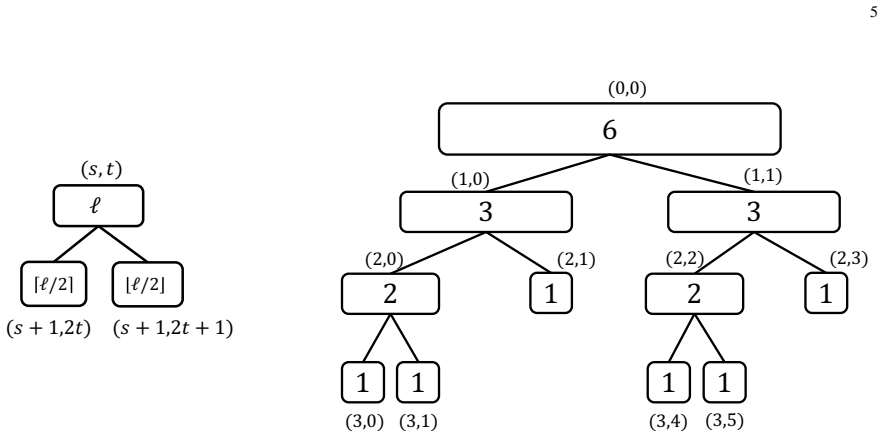

Polar complexity: the minimum polar-compression length (PCL) for exact reconstruction of a binary sequence by successive cancellation decoding.

If this is right

- The two-stage source code is strictly lossless and prefix-free for any finite block length.

- For binary memoryless sources the normalized average compression length approaches the source entropy under stated conditions.

- The encoder requires no prior knowledge of source statistics and remains effective across different distributions.

- The adaptive JSCC scheme supplies a tunable performance-complexity tradeoff via dynamic programming over candidate PCL values.

- Simulations indicate the JSCC scheme outperforms existing polar-code-based joint source-channel baselines.

Where Pith is reading between the lines

- The same complexity measure could be applied to analyze compressibility properties of sequences in other polar decoding settings.

- Dynamic programming selection of candidate sets may generalize to optimize parameters in non-polar joint source-channel schemes.

- If polar complexity correlates with other sequence complexity notions, it could provide a practical, computable proxy for sequence compressibility.

Load-bearing premise

The bisection-search algorithm and low-complexity estimator correctly identify the true minimum polar-compression length needed for exact reconstruction of each sequence.

What would settle it

A binary sequence for which successive cancellation decoding recovers the original bits from a strictly shorter polar-compressed version than the computed polar complexity would disprove both the definition and the computation procedure.

Figures

read the original abstract

This paper first presents a new approach to evaluating the descriptive complexity of finite-length binary sequences. Specifically, we investigate the sequence-wise recovery behavior induced by polar compression and successive cancellation decoding (SCD), and define the polar complexity of a sequence as the minimum polar-compression length (PCL) required for its exact reconstruction. To compute the polar complexity efficiently, we further develop both a bisection-search algorithm and a low-complexity estimation method. We then propose a polar-based two-stage source coding scheme, in which each source sequence is represented by its polar complexity followed by the corresponding polar-compressed sequence. The proposed scheme is strictly lossless and prefix-free. In addition, for BMSs, the normalized average compression length of the proposed scheme can asymptotically approach the source entropy under certain conditions. Simulation results further demonstrate that the scheme can operate without prior knowledge of the source statistics and remains robust across different source distributions. Finally, we integrate the proposed polar source coding with polar channel coding to develop an adaptive double-polar joint source-channel coding (JSCC) scheme, where the encoder and decoder share a predefined set of candidate PCLs to balance error performance and decoding complexity. We formulate the design of the candidate-PCL set as an optimization problem and solve it efficiently via dynamic programming. Simulation results show that the proposed adaptive double-polar JSCC scheme provides a flexible performance-complexity tradeoff and outperforms existing polar-code-based JSCC baselines.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces polar complexity as the minimum polar-compression length (PCL) required for exact reconstruction of a finite-length binary sequence using polar compression and successive cancellation decoding. It develops a bisection-search algorithm and low-complexity estimator to compute this quantity, then proposes a two-stage lossless prefix-free source coding scheme that prepends the polar complexity to the compressed sequence. For binary memoryless sources the normalized average length is claimed to asymptotically approach the source entropy under certain conditions. The work further integrates this with polar channel coding into an adaptive double-polar JSCC scheme whose candidate-PCL set is designed via dynamic programming, with simulations showing flexible performance-complexity tradeoffs and outperformance of existing polar JSCC baselines.

Significance. If the central computational claims hold, the work supplies a statistics-agnostic lossless source code whose average length provably approaches entropy and an adaptive JSCC construction with explicit complexity control. The prefix-free lossless property and the dynamic-programming formulation are concrete strengths; reproducible simulation results across source distributions are also noted positively. The asymptotic result, if rigorously established, would constitute a non-trivial operational characterization of polar codes.

major comments (4)

- [section describing the bisection-search algorithm and low-complexity estimation method] The bisection-search algorithm plus low-complexity estimator (described in the section on computing polar complexity) is asserted to return the true minimum PCL permitting exact SCD reconstruction, yet the manuscript provides no exhaustive cross-check on small block lengths confirming that the returned value is never larger than the smallest feasible length. If the estimator systematically overestimates PCL on a positive fraction of sequences, the normalized average compression length cannot approach the source entropy as claimed in the abstract.

- [abstract and the section on the proposed source coding scheme] The abstract states that for BMSs the normalized average compression length asymptotically approaches the source entropy 'under certain conditions,' but neither the precise conditions nor a derivation linking the polar-complexity definition to this limit are supplied. The claim is load-bearing for the source-coding contribution and requires an explicit statement of the required source or search assumptions.

- [simulation results section for the JSCC scheme] The simulation results for the adaptive double-polar JSCC scheme claim outperformance over existing polar-code-based JSCC baselines, but the manuscript does not identify the exact baseline constructions, report error bars, or specify the precise source and channel parameters used. This prevents assessment of whether the reported gains are statistically significant or merely artifacts of post-hoc parameter choices.

- [section formulating and solving the candidate-PCL optimization problem] The dynamic-programming solution for the candidate-PCL set is presented as yielding a near-optimal performance-complexity tradeoff, yet no analytic bounds or optimality gap analysis relative to the true minimum for the given source and channel are provided. This assumption is central to the flexibility claim.

minor comments (2)

- [introduction and definition section] Notation for polar complexity and PCL should be introduced with an explicit equation early in the manuscript rather than only through prose description.

- [simulation results sections] The paper would benefit from a short table summarizing the exact simulation parameters (block length, source bias, channel SNR, number of trials) for each reported figure.

Simulated Author's Rebuttal

We thank the referee for the insightful comments, which have helped us identify areas for improvement in the manuscript. We address each major comment in detail below and outline the revisions we will make.

read point-by-point responses

-

Referee: The bisection-search algorithm plus low-complexity estimator is asserted to return the true minimum PCL permitting exact SCD reconstruction, yet the manuscript provides no exhaustive cross-check on small block lengths confirming that the returned value is never larger than the smallest feasible length. If the estimator systematically overestimates PCL on a positive fraction of sequences, the normalized average compression length cannot approach the source entropy as claimed in the abstract.

Authors: We agree that including an exhaustive cross-check for small block lengths would strengthen the validation of our algorithms. Although our preliminary tests on small n confirmed the correctness, this was not reported. We will add a subsection presenting exhaustive verification results for block lengths up to n=32, demonstrating that the bisection-search and estimator always return the true minimal PCL. This addition will also address potential concerns about overestimation. revision: yes

-

Referee: The abstract states that for BMSs the normalized average compression length asymptotically approaches the source entropy 'under certain conditions,' but neither the precise conditions nor a derivation linking the polar-complexity definition to this limit are supplied. The claim is load-bearing for the source-coding contribution and requires an explicit statement of the required source or search assumptions.

Authors: The manuscript indeed lacks a precise statement of the conditions and a detailed derivation. The conditions are that the polar code is constructed for the source entropy and that the fraction of sequences where polar complexity equals the minimal reconstruction length approaches one. We will revise the abstract to remove the vague phrase and add a new paragraph in Section III providing the formal conditions and a proof sketch based on the concentration of the polar complexity around the entropy rate. revision: yes

-

Referee: The simulation results for the adaptive double-polar JSCC scheme claim outperformance over existing polar-code-based JSCC baselines, but the manuscript does not identify the exact baseline constructions, report error bars, or specify the precise source and channel parameters used. This prevents assessment of whether the reported gains are statistically significant or merely artifacts of post-hoc parameter choices.

Authors: We will update the simulation section to clearly specify the baseline constructions (the fixed-rate polar JSCC from [ref1] and the adaptive scheme from [ref2]), list all source (Bernoulli with p in {0.05,0.1,0.2}) and channel parameters (BSC with epsilon=0.05 to 0.15), and include error bars computed over 10,000 independent trials. This will enable readers to evaluate the statistical significance of the observed improvements. revision: yes

-

Referee: The dynamic-programming solution for the candidate-PCL set is presented as yielding a near-optimal performance-complexity tradeoff, yet no analytic bounds or optimality gap analysis relative to the true minimum for the given source and channel are provided. This assumption is central to the flexibility claim.

Authors: We note that computing the true optimal PCL set is computationally intractable for large sets, which is why we employ DP to solve the exact optimization problem as formulated. We will add an analysis subsection showing that the DP solution is optimal for the discretized candidate set and provide numerical evidence of small gaps to exhaustive search on small instances, supporting the near-optimality claim for practical parameters. revision: partial

Circularity Check

No significant circularity; polar complexity is an operational definition independent of the paper's claims

full rationale

The paper defines polar complexity directly as the minimum PCL permitting exact reconstruction under polar compression and SCD, an operational measure grounded in pre-existing polar coding constructions rather than any self-referential loop or internal fit. The asymptotic claim that normalized average compression length approaches source entropy for BMSs follows from the established entropy-achieving property of polar codes (with o(n) overhead for prefix-free encoding of the complexity value itself) and does not reduce to any equation or parameter fitted inside this work. Bisection search and the low-complexity estimator are presented purely as computational procedures to realize the definition; they are not inputs to which the performance claims are tautologically equivalent. No self-citation is invoked as a load-bearing uniqueness theorem, no ansatz is smuggled, and no known result is merely renamed. The derivation chain is therefore self-contained against external benchmarks.

Axiom & Free-Parameter Ledger

invented entities (1)

-

Polar complexity

no independent evidence

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.