From Clever Hans to Scientific Discovery: Interpreting EEG Foundational Transformers with LRP

Pith reviewed 2026-05-13 06:18 UTC · model grok-4.3

The pith

LRP on EEG foundation models can verify decisions and generate new biological hypotheses.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

We find that LRP can both verify EEG-FM decisions and surface novel, biologically plausible hypotheses from them. In motor imagery, it unmasks 'Clever Hans' behavior where models prioritize task-correlated ocular signals over the intended motor correlates. In a naturalistic paradigm for affect prediction, it reveals a recurring reliance on a central electrode cluster, suggesting a candidate sensorimotor signature of arousal.

What carries the argument

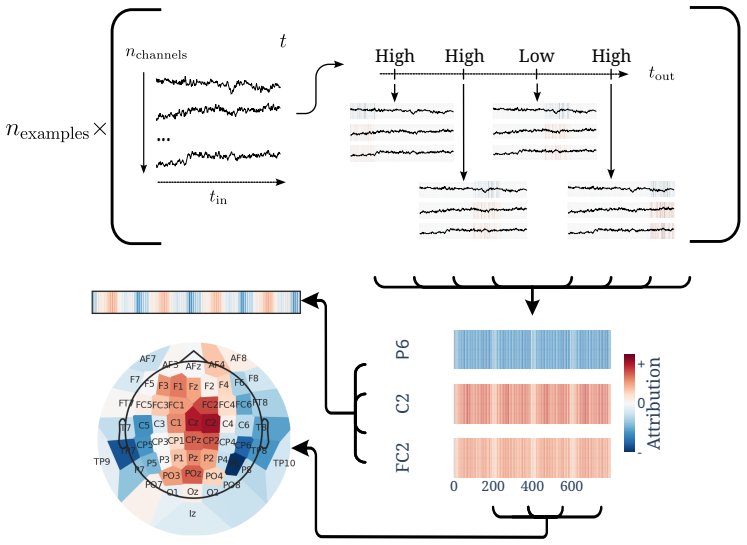

Attention-aware Layer-wise Relevance Propagation (LRP) extended from CNNs to Transformer architectures for attributing relevance in EEG foundation model predictions.
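To make the idea of relevance propagation concrete, here is a minimal sketch of the basic LRP-epsilon rule for a single dense layer. It is not the attention-aware variant the paper uses and not the authors' code; the function name `lrp_epsilon_dense` and all numbers are hypothetical and chosen only for illustration.

```python
def lrp_epsilon_dense(activations, weights, bias, relevance_out, eps=1e-6):
    """Redistribute output relevance to the inputs of one dense layer.

    activations:   input activations a_i
    weights:       weights[i][j] connects input i to output j
    bias:          biases b_j
    relevance_out: relevance R_j already assigned to each output
    """
    n_in, n_out = len(activations), len(bias)
    # Forward pre-activations z_j = sum_i a_i * w_ij + b_j
    z = [sum(activations[i] * weights[i][j] for i in range(n_in)) + bias[j]
         for j in range(n_out)]
    # Stabiliser: move z_j slightly away from zero without flipping its sign
    z_stab = [zj + eps * (1.0 if zj >= 0 else -1.0) for zj in z]
    # Each input receives relevance in proportion to its contribution a_i * w_ij
    return [sum(activations[i] * weights[i][j] / z_stab[j] * relevance_out[j]
                for j in range(n_out))
            for i in range(n_in)]


# Hypothetical toy layer: 3 inputs, 2 outputs, zero bias
activations = [1.0, 2.0, -1.0]
weights = [[0.5, -1.0], [0.25, 0.5], [0.5, 0.5]]
bias = [0.0, 0.0]
relevance_out = [1.0, 0.5]

relevance_in = lrp_epsilon_dense(activations, weights, bias, relevance_out)
```

With zero bias and a small `eps`, the input relevances sum to approximately the output relevance, which is the conservation property that makes LRP heatmaps readable as a decomposition of the prediction.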

If this is right

- LRP can detect when EEG models are using spurious correlations like ocular artifacts rather than intended neural features.

- It can propose new candidate brain signatures, such as central clusters for arousal in affect tasks.

- The method supports both validation of model behavior and exploratory discovery in neuroscience.

- As EEG foundation models advance, LRP's role in interpretation and hypothesis generation will become more significant.

Where Pith is reading between the lines

- Applying LRP could help refine EEG experimental designs by identifying which signals models actually use.

- This approach might be adapted to other brain imaging techniques to extract insights from their models.

- If the hypotheses hold, they could lead to targeted studies on sensorimotor involvement in emotional arousal.

Load-bearing premise

LRP heatmaps accurately reflect biologically meaningful brain signals rather than being influenced by model artifacts or ambiguities in EEG interpretation.

What would settle it

Observing whether LRP heatmaps align with known neurophysiological patterns, such as activation in motor cortex areas during motor imagery tasks when those are the true correlates.
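One simple way to operationalize such a check is to compare how much relevance falls on electrode groups of interest. The sketch below is an illustration under stated assumptions, not the paper's analysis: the relevance values are invented, the helper `relevance_share` is hypothetical, and the grouping uses standard 10-20 labels (Fp1/Fp2 sit closest to the eyes, C3/Cz/C4 lie over sensorimotor cortex).

```python
def relevance_share(relevance_by_channel, channel_group):
    """Fraction of total absolute relevance that falls on a group of channels."""
    total = sum(abs(r) for r in relevance_by_channel.values())
    if total == 0:
        return 0.0
    in_group = sum(abs(r) for ch, r in relevance_by_channel.items()
                   if ch in channel_group)
    return in_group / total


# Hypothetical per-channel relevance for one motor-imagery prediction
relevance = {
    "Fp1": 0.30, "Fp2": 0.25,            # frontal-polar, prone to ocular artifacts
    "C3": 0.10, "Cz": 0.05, "C4": 0.10,  # over sensorimotor cortex
    "O1": 0.10, "O2": 0.10,
}

FRONTAL_POLAR = {"Fp1", "Fp2"}
SENSORIMOTOR = {"C3", "Cz", "C4"}

ocular_share = relevance_share(relevance, FRONTAL_POLAR)
motor_share = relevance_share(relevance, SENSORIMOTOR)
```

In this invented example the frontal-polar share exceeds the sensorimotor share, the kind of pattern that would prompt a closer look for Clever Hans behavior.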

Original abstract

Emerging foundation models (FMs) in electroencephalography (EEG) promise a path to scale deep learning in diagnostics and brain-computer interfaces despite data scarcity, yet their opaque nature remains a barrier to wider adoption. We investigate attention-aware Layer-wise Relevance Propagation (LRP) as a post-hoc attribution method for EEG-FMs, extending LRP's use on convolutional neural network (CNN)-based EEG models to the Transformer architectures that current FMs are based on. We find that LRP can both verify EEG-FM decisions and surface novel, biologically plausible hypotheses from them. In motor imagery, it unmasks 'Clever Hans' behavior where models prioritize task-correlated ocular signals over the intended motor correlates. In a naturalistic paradigm for affect prediction, it reveals a recurring reliance on a central electrode cluster, suggesting a candidate sensorimotor signature of arousal. Though heatmap interpretation remains ambiguous in this complex domain, the results position LRP as a tool for both verification and exploration of EEG-FMs, a role that will grow in both importance and discovery potential as the underlying models mature.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper extends attention-aware Layer-wise Relevance Propagation (LRP) to transformer-based EEG foundation models. It claims that LRP both verifies model decisions by detecting Clever Hans behavior (e.g., reliance on ocular artifacts in motor imagery) and generates novel hypotheses (e.g., a recurring central electrode cluster as a candidate sensorimotor signature of arousal in affect prediction). The work positions LRP as a verification and exploration tool for EEG-FMs despite ambiguities in heatmap interpretation.

Significance. If the LRP attributions can be shown to reliably isolate task-relevant neural sources rather than artifacts, the work would provide a practical post-hoc method for interpreting opaque EEG foundation models, potentially accelerating their use in BCI and diagnostics while enabling scientific discovery from model internals. It builds on prior LRP applications to CNN EEG models by addressing transformer architectures.

major comments (3)

- [Affect prediction experiment subsection] Affect prediction results: The recurring central electrode cluster is presented as a candidate sensorimotor signature of arousal, but without quantitative overlap metrics against established markers (e.g., frontal alpha asymmetry or mu-rhythm desynchronization), statistical tests across subjects, or controls for volume conduction/reference effects. This makes the biological plausibility claim load-bearing yet under-supported.

- [Methods, LRP extension] LRP application to transformers: No ablation or sensitivity analysis is provided for the LRP rules on attention layers, leaving open whether attributions reflect stable task-relevant signals or propagation instabilities specific to the transformer architecture.

- [Motor imagery results] Motor imagery results: Identification of ocular artifacts as Clever Hans behavior is plausible, yet the section lacks baseline comparisons, error bars, or explicit data exclusion rules to quantify the effect size and rule out inter-subject variability.
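The overlap metrics and corrected subject-level tests asked for in the first comment could take roughly the following shape. This is a sketch under assumptions, not the paper's analysis: the electrode sets and p-values are invented, both helper functions are hypothetical, and in practice the p-values would come from a paired test such as `scipy.stats.wilcoxon`.

```python
def dice_overlap(set_a, set_b):
    """Dice coefficient between two sets of electrode labels."""
    if not set_a and not set_b:
        return 1.0
    return 2 * len(set_a & set_b) / (len(set_a) + len(set_b))


def benjamini_hochberg(p_values, alpha=0.05):
    """Return one boolean per p-value: True if rejected at FDR level alpha."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank k whose sorted p-value satisfies p_(k) <= k/m * alpha
    max_k = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            max_k = rank
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= max_k:
            rejected[idx] = True
    return rejected


# Hypothetical electrode sets: an LRP-derived cluster vs. a reference marker
lrp_cluster = {"C1", "Cz", "C2", "CPz"}
mu_marker = {"C3", "Cz", "C4", "CPz"}
overlap = dice_overlap(lrp_cluster, mu_marker)

# Hypothetical p-values from four separate comparisons
p_values = [0.001, 0.04, 0.03, 0.20]
significant = benjamini_hochberg(p_values)
```

Here only the smallest p-value survives the correction, which illustrates why uncorrected per-comparison tests can overstate how consistent a cluster is.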

minor comments (2)

- [Abstract and Results] The abstract and results would benefit from explicit statements on the number of subjects, cross-validation scheme, and any preprocessing steps that could influence electrode cluster findings.

- [Methods] Notation for attention-aware LRP modifications could be clarified with a small equation or pseudocode to distinguish it from standard LRP.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback, which has helped us clarify and strengthen the manuscript. We address each major comment below and indicate the revisions made.

-

Referee: [Affect prediction experiment subsection] Affect prediction results: The recurring central electrode cluster is presented as a candidate sensorimotor signature of arousal, but without quantitative overlap metrics against established markers (e.g., frontal alpha asymmetry or mu-rhythm desynchronization), statistical tests across subjects, or controls for volume conduction/reference effects. This makes the biological plausibility claim load-bearing yet under-supported.

Authors: We agree that additional quantitative support improves the claim. In the revised manuscript we have added Dice overlap metrics between the central cluster and established markers (frontal alpha asymmetry, mu-rhythm desynchronization) as well as subject-level statistical tests (Wilcoxon signed-rank with FDR correction) confirming consistency. For volume conduction and reference effects we have expanded the Discussion to explicitly acknowledge these confounds and note that electrode-level LRP cannot fully disambiguate them without source localization; we therefore frame the finding as a candidate hypothesis rather than a definitive signature. These changes make the biological plausibility argument more rigorous while preserving its exploratory nature. revision: partial

-

Referee: [Methods, LRP extension] LRP application to transformers: No ablation or sensitivity analysis is provided for the LRP rules on attention layers, leaving open whether attributions reflect stable task-relevant signals or propagation instabilities specific to the transformer architecture.

Authors: We acknowledge the value of such analysis. We have added a dedicated sensitivity subsection in Methods that ablates the LRP rules applied to attention layers (comparing epsilon, gamma, and composite rules) and reports attribution stability via cosine similarity and rank correlation across rule variants. The key electrode clusters and Clever Hans patterns remain consistent, indicating that the reported attributions are not driven by propagation instabilities. revision: yes

-

Referee: [Motor imagery results] Motor imagery results: Identification of ocular artifacts as Clever Hans behavior is plausible, yet the section lacks baseline comparisons, error bars, or explicit data exclusion rules to quantify the effect size and rule out inter-subject variability.

Authors: We have revised the motor imagery section to include (i) baseline relevance maps obtained from label-shuffled and random-initialized models, (ii) error bars showing mean and standard deviation of ocular relevance across subjects, and (iii) explicit exclusion criteria based on EOG amplitude thresholds (>100 µV). These additions allow direct quantification of the effect size and demonstrate that the ocular bias exceeds baseline levels in the majority of subjects. revision: yes
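The stability measures and the exclusion rule named in the responses above can be sketched as follows. This is an illustration under assumptions rather than the authors' pipeline: the attribution vectors and EOG samples are invented, the helper names are hypothetical, and treating the 100 µV threshold as a limit on peak absolute amplitude is one reading of the stated criterion.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two attribution vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def ranks(values):
    """1-based ranks; tied values share their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        mean_rank = (pos + end) / 2 + 1
        for k in range(pos, end + 1):
            result[order[k]] = mean_rank
        pos = end + 1
    return result


def spearman(u, v):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    ru, rv = ranks(u), ranks(v)
    mean_u, mean_v = sum(ru) / len(ru), sum(rv) / len(rv)
    centered_u = [r - mean_u for r in ru]
    centered_v = [r - mean_v for r in rv]
    return cosine_similarity(centered_u, centered_v)


def keep_trial(eog_microvolts, threshold_uv=100.0):
    """Keep a trial only if its peak absolute EOG amplitude stays within the threshold."""
    return max(abs(x) for x in eog_microvolts) <= threshold_uv


# Hypothetical per-electrode relevance under two LRP rule variants
epsilon_map = [0.40, 0.10, 0.30, 0.20]
gamma_map = [0.35, 0.15, 0.32, 0.18]
cos_sim = cosine_similarity(epsilon_map, gamma_map)
rank_corr = spearman(epsilon_map, gamma_map)

# Hypothetical EOG samples (in microvolts) for two trials
clean_trial_kept = keep_trial([12.0, -35.5, 80.0])
noisy_trial_kept = keep_trial([20.0, -140.0, 60.0])
```

High cosine similarity together with high rank correlation indicates that both the magnitudes and the ordering of electrode relevance survive a change of propagation rule.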

Circularity Check

No circularity: LRP application is an independent post-hoc analysis on external EEG datasets

The paper applies the pre-existing LRP attribution technique to transformer-based EEG foundation models on motor-imagery and affect-prediction datasets. No equations, parameters, or predictions are defined in terms of the target interpretations; the Clever Hans detection and central-electrode observation are empirical observations from heatmap inspection rather than quantities fitted to or derived from the same data by construction. No self-citation chains, uniqueness theorems, or ansatzes are invoked as load-bearing premises. The derivation chain is therefore self-contained against external benchmarks and does not reduce to its inputs.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption: LRP attributions are faithful to the model's internal computations for transformer architectures

Reference graph

Works this paper leans on

-

[1]

Dingkun Liu et al. EEG Foundation Models: Progresses, Benchmarking, and Open Problems. Feb. 5, 2026. DOI: 10.48550/arXiv.2601.17883. arXiv:2601.17883 [cs]. Pre-published.

-

[2]

Weibang Jiang, Liming Zhao, and Bao-liang Lu. “Large Brain Model for Learning Generic Representations with Tremendous EEG Data in BCI”. In: The Twelfth International Conference on Learning Representations. Oct. 13, 2023.

-

[3]

Jiquan Wang et al. CBraMod: A Criss-Cross Brain Foundation Model for EEG Decoding. Apr. 13, 2025. DOI: 10.48550/arXiv.2412.07236. arXiv:2412.07236 [eess]. Pre-published.

-

[4]

Nicholas S. Card et al. “An Accurate and Rapidly Calibrating Speech Neuroprosthesis”. In: New England Journal of Medicine 391.7 (Aug. 14, 2024), pp. 609–618. ISSN: 0028-4793. DOI: 10.1056/NEJMoa2314132.

-

[5]

Sebastian Bach et al. “On Pixel-Wise Explanations for Non-Linear Classifier Decisions by Layer-Wise Relevance Propagation”. In: PLOS ONE 10.7 (July 10, 2015), e0130140. ISSN: 1932-6203. DOI: 10.1371/journal.pone.0130140.

-

[6]

Grégoire Montavon et al. “Explaining Nonlinear Classification Decisions with Deep Taylor Decomposition”. In: Pattern Recognition 65 (May 1, 2017), pp. 211–222. ISSN: 0031-3203. DOI: 10.1016/j.patcog.2016.11.008.

-

[7]

Grégoire Montavon et al. “Layer-Wise Relevance Propagation: An Overview”. In: Explainable AI: Interpreting, Explaining and Visualizing Deep Learning. Ed. by Wojciech Samek et al. Vol. 11700. Cham: Springer International Publishing, 2019, pp. 193–209. ISBN: 978-3-030-28953-9 978-3-030-28954-6. DOI: 10.1007/978-3-030-28954-6_10.

-

[8]

Reduan Achtibat et al. “AttnLRP: Attention-Aware Layer-Wise Relevance Propagation for Transformers”. In: Proceedings of the 41st International Conference on Machine Learning. International Conference on Machine Learning. PMLR, July 8, 2024, pp. 135–168.

-

[9]

Yann LeCun et al. “Gradient-Based Learning Applied to Document Recognition”. In: Proceedings of the IEEE 86.11 (Nov. 1998), pp. 2278–2324. ISSN: 1558-2256. DOI: 10.1109/5.726791.

-

[10]

Ashish Vaswani et al. “Attention Is All You Need”. In: Advances in Neural Information Processing Systems. Ed. by I. Guyon et al. Vol. 30. Curran Associates, Inc., 2017.

-

[11]

Weibang Jiang et al. “NeuroLM: A Universal Multi-task Foundation Model for Bridging the Gap between Language and EEG Signals”. In: The Thirteenth International Conference on Learning Representations. Oct. 4, 2024.

-

[12]

Chaoqi Yang, M. Westover, and Jimeng Sun. “BIOT: Biosignal Transformer for Cross-data Learning in the Wild”. In: Advances in Neural Information Processing Systems 36 (Dec. 15, 2023), pp. 78240–78260.

-

[13]

Xujia Wang et al. “EEG-DINO: Learning EEG Foundation Models via Hierarchical Self-distillation”. In: Medical Image Computing and Computer Assisted Intervention – MICCAI 2025. Ed. by James C. Gee et al. Cham: Springer Nature Switzerland, 2026, pp. 196–205. ISBN: 978-3-032-04927-8. DOI: 10.1007/978-3-032-04927-8_19.

-

[14]

Yann LeCun. “A Path towards Autonomous Machine Intelligence Version 0.9.2, 2022-06-27”. In: Open Review 62.1 (2022), pp. 1–62.

-

[15]

Zijian Dong et al. “Brain-JEPA: Brain Dynamics Foundation Model with Gradient Positioning and Spatiotemporal Masking”. In: Advances in Neural Information Processing Systems 37 (Dec. 16, 2024), pp. 86048–86073.

-

[16]

Akhil Kondepudi et al. Health System Learning Achieves Generalist Neuroimaging Models. Version 1. Nov. 23, 2025. DOI: 10.48550/arXiv.2511.18640. arXiv:2511.18640 [cs]. Pre-published.

-

[17]

Irene Sturm et al. “Interpretable Deep Neural Networks for Single-Trial EEG Classification”. In: Journal of Neuroscience Methods 274 (Dec. 1, 2016), pp. 141–145. ISSN: 0165-0270. DOI: 10.1016/j.jneumeth.2016.10.008.

-

[18]

Dongdong Zhou et al. “Interpretable Sleep Stage Classification Based on Layer-Wise Relevance Propagation”. In: IEEE Transactions on Instrumentation and Measurement 73 (2024), pp. 1–10. ISSN: 1557-9662. DOI: 10.1109/TIM.2024.3370799.

-

[19]

Ali Nouri and Zahra Tabanfar. “Detection of ADHD Disorder in Children Using Layer-Wise Relevance Propagation and Convolutional Neural Network: An EEG Analysis”. In: Frontiers in Biomedical Technologies (Dec. 26, 2023). ISSN: 2345-5837. DOI: 10.18502/fbt.v11i1.14507.

-

[20]

Faiq Ahmad Khan et al. “Explainable Fuzzy Deep Learning for Prediction of Epileptic Seizures Using EEG”. In: IEEE Transactions on Fuzzy Systems 32.10 (Oct. 2024), pp. 5428–5437. ISSN: 1941-0034. DOI: 10.1109/TFUZZ.2024.3434709.

-

[21]

Ahmad Almadhor et al. “An Interpretable XAI Deep EEG Model for Schizophrenia Diagnosis Using Feature Selection and Attention Mechanisms”. In: Frontiers in Oncology 15 (July 22, 2025), p. 1630291. ISSN: 2234-943X. DOI: 10.3389/fonc.2025.1630291. PMID: 40766336.

-

[22]

Hyeonyeong Nam, Jun-Mo Kim, and Tae-Eui Kam. “Feature Selection Based on Layer-Wise Relevance Propagation for EEG-based MI Classification”. In: 2023 11th International Winter Conference on Brain-Computer Interface (BCI). Feb. 2023, pp. 1–3. DOI: 10.1109/BCI57258.2023.10078676.

-

[23]

Akshay Sujatha Ravindran and Jose Contreras-Vidal. “An Empirical Comparison of Deep Learning Explainability Approaches for EEG Using Simulated Ground Truth”. In: Scientific Reports 13.1 (Oct. 18, 2023), p. 17709. ISSN: 2045-2322. DOI: 10.1038/s41598-023-43871-8.

-

[24]

Armin W. Thomas et al. “Analyzing Neuroimaging Data Through Recurrent Deep Learning Models”. In: Frontiers in Neuroscience 13 (Dec. 10, 2019). ISSN: 1662-453X. DOI: 10.3389/fnins.2019.01321.

-

[25]

Simon M. Hofmann et al. “Towards the Interpretability of Deep Learning Models for Multi-Modal Neuroimaging: Finding Structural Changes of the Ageing Brain”. In: NeuroImage 261 (Nov. 1, 2022), p. 119504. ISSN: 1053-8119. DOI: 10.1016/j.neuroimage.2022.119504.

-

[26]

Fabian Eitel et al. “Uncovering Convolutional Neural Network Decisions for Diagnosing Multiple Sclerosis on Conventional MRI Using Layer-Wise Relevance Propagation”. In: NeuroImage: Clinical 24 (Jan. 1, 2019), p. 102003. ISSN: 2213-1582. DOI: 10.1016/j.nicl.2019.102003.

-

[27]

Moritz Böhle et al. “Layer-Wise Relevance Propagation for Explaining Deep Neural Network Decisions in MRI-Based Alzheimer’s Disease Classification”. In: Frontiers in Aging Neuroscience 11 (July 31, 2019). ISSN: 1663-4365. DOI: 10.3389/fnagi.2019.00194.

-

[28]

Armin W. Thomas, Christopher Ré, and Russell A. Poldrack. “Interpreting Mental State Decoding with Deep Learning Models”. In: Trends in Cognitive Sciences 26.11 (Nov. 1, 2022), pp. 972–986. ISSN: 1364-6613, 1879-307X. DOI: 10.1016/j.tics.2022.07.003. PMID: 36223760.

-

[29]

Armin Thomas, Christopher Ré, and Russell Poldrack. “Self-Supervised Learning of Brain Dynamics from Broad Neuroimaging Data”. In: Advances in Neural Information Processing Systems 35 (Dec. 6, 2022), pp. 21255–21269.

-

[30]

Gerhard Dirlich et al. “Cardiac Field Effects on the EEG”. In: Electroencephalography and Clinical Neurophysiology 102.4 (Apr. 1, 1997), pp. 307–315. ISSN: 0013-4694. DOI: 10.1016/S0013-4694(96)96506-2.

-

[31]

Ary L. Goldberger et al. “PhysioBank, PhysioToolkit, and PhysioNet: Components of a New Research Resource for Complex Physiologic Signals”. In: Circulation 101.23 (June 13, 2000), E215–220. ISSN: 1524-4539. DOI: 10.1161/01.cir.101.23.e215. PMID: 10851218.

-

[32]

Gerwin Schalk et al. EEG Motor Movement/Imagery Dataset. physionet.org, 2009. DOI: 10.13026/C28G6P.

-

[33]

James A. Russell. “A Circumplex Model of Affect”. In: Journal of Personality and Social Psychology 39.6 (1980), pp. 1161–1178. ISSN: 1939-1315. DOI: 10.1037/h0077714.

-

[34]

Antonin Fourcade et al. “Linking Brain–Heart Interactions to Emotional Arousal in Immersive Virtual Reality”. In: Psychophysiology 61.12 (2024), e14696. ISSN: 1469-8986. DOI: 10.1111/psyp.14696.

-

[35]

Antonin Fourcade et al. “AffectTracker: Real-time Continuous Rating of Affective Experience in Immersive Virtual Reality”. In: Frontiers in Virtual Reality 6 (Aug. 26, 2025). ISSN: 2673-4192. DOI: 10.3389/frvir.2025.1567854.

-

[36]

Eric Larson et al. MNE-Python. Zenodo, June 6, 2023. DOI: 10.5281/zenodo.8262486.

- [37]

- [38]

-

[39]

Samira Abnar and Willem Zuidema. Quantifying Attention Flow in Transformers. May 31, 2020. DOI: 10.48550/arXiv.2005.00928. arXiv:2005.00928 [cs]. Pre-published.

-

[40]

Scott M Lundberg and Su-In Lee. “A Unified Approach to Interpreting Model Predictions”. In: Advances in Neural Information Processing Systems. Vol. 30. Curran Associates, Inc., 2017.

-

[41]

Christopher J. Anders et al. Software for Dataset-wide XAI: From Local Explanations to Global Insights with Zennit, CoRelAy, and ViRelAy. Feb. 28, 2023. DOI: 10.48550/arXiv.2106.13200. arXiv:2106.13200 [cs]. Pre-published.

-

[42]

Ilya Loshchilov and Frank Hutter. “Decoupled Weight Decay Regularization”. In: International Conference on Learning Representations. Sept. 27, 2018.

-

[43]

Ilya Loshchilov and Frank Hutter. SGDR: Stochastic Gradient Descent with Warm Restarts. May 3, 2017. DOI: 10.48550/arXiv.1608.03983. arXiv:1608.03983 [cs]. Pre-published.

-

[44]

Gao Huang et al. Deep Networks with Stochastic Depth. July 28, 2016. DOI: 10.48550/arXiv.1603.09382. arXiv:1603.09382 [cs]. Pre-published.

-

[45]

Herbert Ramoser, Johannes Muller-Gerking, and Gert Pfurtscheller. “Optimal Spatial Filtering of Single Trial EEG during Imagined Hand Movement”. In: IEEE Transactions on Rehabilitation Engineering 8.4 (Dec. 2000), pp. 441–446. ISSN: 1558-0024. DOI: 10.1109/86.895946.

-

[46]

Benjamin Blankertz et al. “Optimizing Spatial Filters for Robust EEG Single-Trial Analysis”. In: IEEE Signal Processing Magazine 25.1 (2008), pp. 41–56. ISSN: 1558-0792. DOI: 10.1109/MSP.2008.4408441.

-

[47]

Simon M Hofmann et al. “Decoding Subjective Emotional Arousal from EEG during an Immersive Virtual Reality Experience”. In: eLife 10 (Oct. 28, 2021). Ed. by Alexander Shackman, Chris I Baker, and Peter König, e64812. ISSN: 2050-084X. DOI: 10.7554/eLife.64812.

-

[48]

Evan M. Gordon et al. “A Somato-Cognitive Action Network Alternates with Effector Regions in Motor Cortex”. In: Nature 617.7960 (May 2023), pp. 351–359. ISSN: 1476-4687. DOI: 10.1038/s41586-023-05964-2.

-

[49]

Sebastian Lapuschkin et al. “Unmasking Clever Hans Predictors and Assessing What Machines Really Learn”. In: Nature Communications 10.1 (Mar. 11, 2019), p. 1096. ISSN: 2041-1723. DOI: 10.1038/s41467-019-08987-4.

-

[50]

Giada Lettieri et al. “Emotionotopy in the Human Right Temporo-Parietal Cortex”. In: Nature Communications 10 (Dec. 5, 2019), p. 5568. ISSN: 2041-1723. DOI: 10.1038/s41467-019-13599-z. PMID: 31804504.

-

[51]

Hans H. Kornhuber and Lüder Deecke. “Hirnpotentialänderungen bei Willkürbewegungen und passiven Bewegungen des Menschen: Bereitschaftspotential und reafferente Potentiale”. In: Pflüger’s Archiv für die gesamte Physiologie des Menschen und der Tiere 284.1 (Mar. 1, 1965), pp. 1–17. ISSN: 1432-2013. DOI: 10.1007/BF00412364.

-

[52]

Lauren K Gerbrandt, William R Goff, and Dennis B. Smith. “Distribution of the Human Average Movement Potential”. In: Electroencephalography and Clinical Neurophysiology 34.5 (May 1, 1973), pp. 461–474. ISSN: 0013-4694. DOI: 10.1016/0013-4694(73)90064-3.

-

[53]

Laura Alice Santos de Oliveira et al. “Preparing to Grasp Emotionally Laden Stimuli”. In: PLOS ONE 7.9 (Sept. 14, 2012), e45235. ISSN: 1932-6203. DOI: 10.1371/journal.pone.0045235.

-

[54]

Daniel Senkowski et al. “Emotional Facial Expressions Modulate Pain-Induced Beta and Gamma Oscillations in Sensorimotor Cortex”. In: Journal of Neuroscience 31.41 (Oct. 12, 2011), pp. 14542–14550. ISSN: 0270-6474, 1529-2401. DOI: 10.1523/JNEUROSCI.6002-10.2011. PMID: 21994371.

-

[55]

Sander Koelstra et al. “DEAP: A Database for Emotion Analysis; Using Physiological Signals”. In: IEEE Transactions on Affective Computing 3.1 (Jan. 2012), pp. 18–31. ISSN: 1949-3045. DOI: 10.1109/T-AFFC.2011.15.

-

[56]

Ren Li et al. “The Perils and Pitfalls of Block Design for EEG Classification Experiments”. In: IEEE Transactions on Pattern Analysis and Machine Intelligence 43.1 (Jan. 2021), pp. 316–333. ISSN: 1939-3539. DOI: 10.1109/TPAMI.2020.2973153.

-

[57]

Wojciech Samek et al. “Evaluating the Visualization of What a Deep Neural Network Has Learned”. In: IEEE Transactions on Neural Networks and Learning Systems 28.11 (Nov. 2017), pp. 2660–2673. ISSN: 2162-2388. DOI: 10.1109/TNNLS.2016.2599820.

-

[58]

Bruno Aristimunha et al. EEG Foundation Challenge: From Cross-Task to Cross-Subject EEG Decoding. June 23, 2025. DOI: 10.48550/arXiv.2506.19141. arXiv:2506.19141 [eess]. Pre-published.

-

[59]

Jan C. Brammer. “Biopeaks: A Graphical User Interface for Feature Extraction from Heart- and Breathing Biosignals”. In: Journal of Open Source Software 5.54 (Oct. 27, 2020), p. 2621. ISSN: 2475-9066. DOI: 10.21105/joss.02621.

-

[60]

Dominique Makowski et al. “NeuroKit2: A Python Toolbox for Neurophysiological Signal Processing”. In: Behavior Research Methods 53.4 (Aug. 1, 2021), pp. 1689–1696. ISSN: 1554-3528. DOI: 10.3758/s13428-020-01516-y.

-

[61]

Christian Szegedy et al. Rethinking the Inception Architecture for Computer Vision. Dec. 11, 2015. DOI: 10.48550/arXiv.1512.00567. arXiv:1512.00567 [cs]. Pre-published.

-

[62]

Fabian Pedregosa et al. “Scikit-Learn: Machine Learning in Python”. In: Journal of Machine Learning Research 12.85 (2011), pp. 2825–2830. ISSN: 1533-7928.

-

[63]

Raven User Guide — Technical Documentation. URL: https://docs.mpcdf.mpg.de/doc/computing/raven-user-guide.html#system-overview. Accessed: July 24, 2025.

-

[64]

Takuya Akiba et al. “Optuna: A next-Generation Hyperparameter Optimization Framework”. In: Proceedings of the 25th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining. 2019, pp. 2623–2631.

-

[65]

Shuhei Watanabe. Tree-Structured Parzen Estimator: Understanding Its Algorithm Components and Their Roles for Better Empirical Performance. Version 3. May 26, 2023. DOI: 10.48550/arXiv.2304.11127. arXiv:2304.11127 [cs]. Pre-published.

-

[66]

Viper-GPU User Guide — Technical Documentation. URL: https://docs.mpcdf.mpg.de/doc/computing/viper-gpu-user-guide.html. Accessed: July 24, 2025.

A Data acquisition and cohort details

AffectiveVR. The recording setup for EEG and physiological data in a naturalistic setting shares similarities to [34]. Stimuli and emotional feedback mechanism are identical to t...
