Generative Transfer for Entropic Optimal Transport with Unknown Costs

Pith reviewed 2026-05-13 05:06 UTC · model grok-4.3

The pith

A generative framework transfers entropic optimal transport couplings to new marginals using samples from a reference coupling under an unknown shared cost.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

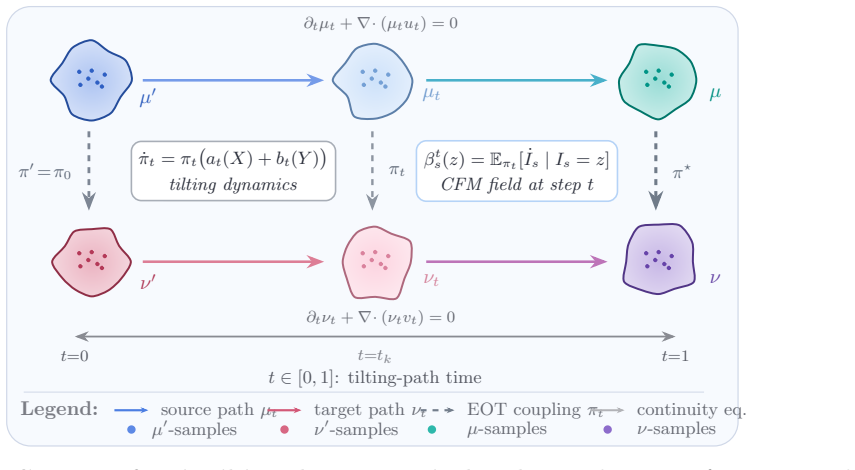

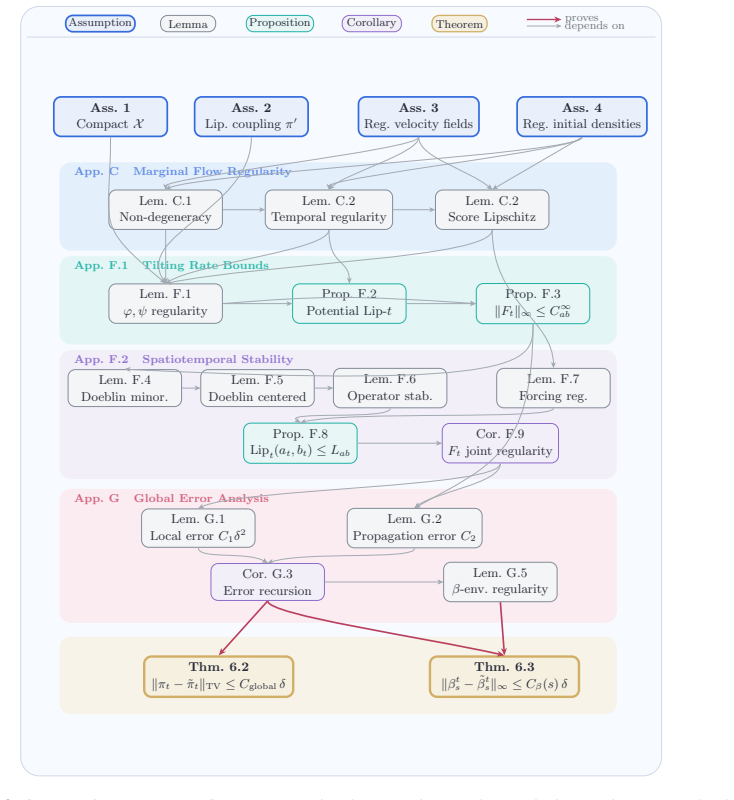

Given samples from a reference optimal coupling under a latent cost, an iterative path-wise tilting algorithm evolves the coupling toward new marginals. The updates yield covariance-type equations for the transport vector fields; integrated with conditional flow matching, these fields generate the target EOT plan, with a global convergence rate of O(δ) in W1 distance.

What carries the argument

The iterative path-wise tilting algorithm that evolves the coupling jointly with a marginal transport path, producing infinitesimal updates via covariance-type evolution equations for transport vector fields.
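The marginal constraint behind such an update can be made explicit. The sketch below starts from the additive log-density update $\dot\pi_t = \pi_t\,(a_t(X)+b_t(Y))$ quoted further down this page. The marginal path $(\mu_t,\nu_t)$, the existence of densities, and differentiation under the integral are assumptions introduced here for illustration, not statements taken from the paper.

$$
\partial_t \mu_t(x)
= \int \partial_t \pi_t(x,y)\,dy
= \int \pi_t(x,y)\,\bigl(a_t(x)+b_t(y)\bigr)\,dy
= \mu_t(x)\Bigl(a_t(x) + \mathbb{E}_{\pi_t}\bigl[b_t(Y)\mid X=x\bigr]\Bigr)
$$

$$
\partial_t \log \mu_t(x) = a_t(x) + \mathbb{E}_{\pi_t}\bigl[b_t(Y)\mid X=x\bigr],
\qquad
\partial_t \log \nu_t(y) = b_t(y) + \mathbb{E}_{\pi_t}\bigl[a_t(X)\mid Y=y\bigr]
$$

Under these assumptions the pair $(a_t, b_t)$ is pinned down by the prescribed marginal path only up to a constant shift $(a_t + c,\; b_t - c)$, since the update depends on the sum $a_t(X)+b_t(Y)$. Whether the paper's covariance-type equations take exactly this form is not stated on this page.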

If this is right

- The generated coupling converges to the target EOT plan in W1 distance at rate O(δ).

- The joint evolution of coupling and transport path permits mass to move outside the support of the reference samples.

- Sample-level learning rules derived from the tilting dynamics integrate with conditional flow matching to yield a practical paired-data sampler.

- Global convergence guarantees apply to the full iterative process.
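For readers unfamiliar with conditional flow matching, the regression target and the sampling loop can be sketched on a toy problem. This is generic CFM with straight-line paths, not the paper's sampler: the shifted-Gaussian data, the linear vector field, and the names `v` and `sample` are all hypothetical choices made so that the example is exactly solvable.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy paired data (assumption): the target is the source shifted by 2.
x0 = rng.normal(size=2000)
x1 = x0 + 2.0

# CFM regression data: a point on the straight path and its velocity.
t = rng.uniform(size=x0.shape)
xt = (1.0 - t) * x0 + t * x1   # x_t = (1 - t) * x0 + t * x1
u = x1 - x0                    # conditional target velocity

# Hypothetical linear field v(x, t) = w[0] * x + w[1] * t + w[2],
# fitted by least squares in place of a neural network.
features = np.stack([xt, t, np.ones_like(t)], axis=1)
w, *_ = np.linalg.lstsq(features, u, rcond=None)


def v(x, s):
    """Evaluate the fitted vector field at state x and time s."""
    return w[0] * x + w[1] * s + w[2]


def sample(x_start, n_steps=50):
    """Integrate dx/dt = v(x, t) from t = 0 to t = 1 with explicit Euler."""
    x = np.array(x_start, dtype=float)
    h = 1.0 / n_steps
    for k in range(n_steps):
        x = x + h * v(x, k * h)
    return x


generated = sample(rng.normal(size=1000))
print(float(generated.mean()), float(generated.std()))
```

In this toy case the target velocity is constant, so the least-squares fit is exact and Euler integration introduces no error. With a neural field and a nontrivial coupling, both the fit and the integration contribute error terms of the kind the referee report asks the authors to track.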

Where Pith is reading between the lines

- Reference couplings can act as data-driven proxies for inferring latent costs when direct cost access is unavailable.

- The covariance evolution equations may extend to other generative transport settings where plans must adapt to shifted marginals.

- The approach enables sampling of paired observations consistent with the transferred EOT plan without recomputing costs from scratch.

Load-bearing premise

A single latent cost function is shared between the reference coupling and the new marginals and can be recovered sufficiently well through the iterative path-wise tilting process to allow mass to move beyond the reference support.

What would settle it

Running the tilting algorithm on synthetic data with known ground-truth cost, then measuring whether the W1 distance between the generated coupling and the exact target EOT plan for new marginals decreases proportionally to the step-size parameter δ.
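A finite-space version of this check can be sketched in a few lines. The example below is not the paper's path-wise algorithm. It implements the static reweighting baseline that the abstract contrasts with: when the new marginals charge only points already in the reference support, rescaling the reference plan by iterative proportional fitting recovers the target EOT plan without ever reading the cost. The helper names `ipf` and `random_marginal`, the grid, the quadratic cost, and the value of `eps` are hypothetical.

```python
import numpy as np


def ipf(kernel, mu, nu, n_iter=2000):
    """Scale a positive matrix so its row sums are mu and column sums are nu."""
    u = np.ones_like(mu)
    v = np.ones_like(nu)
    for _ in range(n_iter):
        u = mu / (kernel @ v)
        v = nu / (kernel.T @ u)
    return u[:, None] * kernel * v[None, :]


rng = np.random.default_rng(0)
n, eps = 6, 0.5

# Ground-truth cost, known only to the experimenter.
x = np.linspace(0.0, 1.0, n)
cost = (x[:, None] - x[None, :]) ** 2


def random_marginal():
    """Draw a strictly positive probability vector on the grid."""
    p = rng.uniform(0.5, 1.5, size=n)
    return p / p.sum()


mu_ref, nu_ref = random_marginal(), random_marginal()
mu_new, nu_new = random_marginal(), random_marginal()

# Reference EOT plan: the only object the transfer step is allowed to see.
pi_ref = ipf(np.exp(-cost / eps), mu_ref, nu_ref)

# Transfer without the cost: rescale the reference plan to the new marginals.
pi_transfer = ipf(pi_ref, mu_new, nu_new)

# Oracle: solve the EOT problem directly with the true cost.
pi_oracle = ipf(np.exp(-cost / eps), mu_new, nu_new)

gap = np.abs(pi_transfer - pi_oracle).max()
print(gap)
```

The test proposed above would swap `pi_transfer` for the output of the path-wise tilting sampler, use new marginals that place mass outside the reference support, and track the W1 distance to `pi_oracle` as δ is reduced.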

Original abstract

This paper addresses the practical challenge in Entropic Optimal Transport (EOT) where the underlying ground cost function is typically latent and unobserved. Rather than assuming a fixed geometric cost, we adopt a data-driven approach where a shared cost is revealed only through samples from a reference optimal coupling. The question is then: given samples from a reference optimal coupling, can we recover the optimal coupling for new marginals under the same latent cost? We introduce a generative transfer framework that recovers the optimal coupling for new marginals by utilizing an iterative path-wise tilting algorithm. Unlike static importance reweighting, this method evolves the coupling jointly with a marginal transport path, allowing mass to move beyond the reference support. We derive sample-level learning rules for these infinitesimal updates, which yield covariance-type evolution equations for the associated transport vector fields. By integrating this dynamics with Conditional Flow Matching (CFM), we produce a practical sampler for paired data. Finally, we provide theoretical guarantees establishing a global convergence rate of $\mathcal{O}(\delta)$, ensuring the generated coupling converges to the target EOT plan in $W_1$ distance.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper addresses Entropic Optimal Transport (EOT) with unknown latent costs by proposing a generative transfer framework. Given samples from a reference optimal coupling, an iterative path-wise tilting algorithm evolves the coupling jointly with a marginal transport path to recover the optimal coupling for new marginals under the shared cost. Sample-level learning rules are derived to yield covariance-type evolution equations for the transport vector fields; these are integrated with Conditional Flow Matching (CFM) to produce a practical paired-data sampler. Theoretical guarantees are provided for an O(δ) global convergence rate in W1 distance of the generated coupling to the target EOT plan.

Significance. If the O(δ) rate holds for the full generative pipeline (including CFM approximation), the work would provide a practical, data-driven method for transferring EOT plans across marginals without explicit cost specification. The path-wise tilting combined with covariance evolution equations and CFM integration offers a novel sampler construction, and the explicit convergence guarantee is a strength if the error terms are fully controlled.

major comments (2)

- [theoretical guarantees (abstract and convergence theorem)] The abstract and theoretical guarantees claim an O(δ) W1 convergence rate for the generated coupling produced by integrating the tilting dynamics with CFM. However, the analysis appears to control only the ideal continuous tilting path (assuming exact vector fields), without propagating the CFM training error, discretization error, or extrapolation error beyond the reference support into the final W1 bound. This is load-bearing for the central claim that the practical sampler converges at the stated rate.

- [path-wise tilting algorithm and learning rules] The weakest assumption is that a single latent cost function is shared and can be recovered sufficiently well via the iterative path-wise tilting to permit mass transport beyond the reference support. It is stated but lacks explicit quantitative bounds or verification in the derivation of the evolution equations. This directly affects whether the sample-level learning rules remain valid for the new marginals.

minor comments (1)

- [abstract] The abstract could specify the dependence of δ on dimension, sample size, and training budget to clarify the practical scope of the O(δ) rate.

Simulated Author's Rebuttal

We thank the referee for the thorough review and constructive feedback on our manuscript. We value the positive remarks on the novelty of the path-wise tilting approach combined with CFM and the potential practical impact. We address each major comment below, clarifying the scope of our current analysis and outlining targeted revisions to strengthen the theoretical claims and assumptions.

Point-by-point responses

- Referee: [theoretical guarantees (abstract and convergence theorem)] The abstract and theoretical guarantees claim an O(δ) W1 convergence rate for the generated coupling produced by integrating the tilting dynamics with CFM. However, the analysis appears to control only the ideal continuous tilting path (assuming exact vector fields), without propagating the CFM training error, discretization error, or extrapolation error beyond the reference support into the final W1 bound. This is load-bearing for the central claim that the practical sampler converges at the stated rate.

  Authors: We appreciate the referee identifying this gap in the error propagation. Theorem 4.1 and the associated analysis establish the O(δ) rate specifically for the continuous-time tilting dynamics under exact vector fields obtained from the covariance evolution equations. The CFM component is introduced in Section 3.3 as a practical neural approximation to these fields, with the sampler in Algorithm 1 relying on it for implementation. We agree that the abstract and main claim would benefit from explicit control of approximation errors. In the revision, we will add a new corollary (Corollary 4.2) that decomposes the total W1 error into the ideal tilting term O(δ) plus additive terms for CFM training error (controlled via existing CFM generalization results by increasing the number of training samples or the network width), Euler discretization error (standard O(h) bound for step size h), and a support extrapolation term controlled by the tilting parameter δ. This preserves the core O(δ) rate for the dynamics while making the practical pipeline's convergence rigorous up to controllable terms. The abstract will be updated to reflect this decomposition.

  Revision: yes

- Referee: [path-wise tilting algorithm and learning rules] The weakest assumption is that a single latent cost function is shared and can be recovered sufficiently well via the iterative path-wise tilting to permit mass transport beyond the reference support. It is stated but lacks explicit quantitative bounds or verification in the derivation of the evolution equations. This directly affects whether the sample-level learning rules remain valid for the new marginals.

  Authors: The shared latent cost is the modeling foundation that allows the reference coupling samples to inform transport for new marginals; the path-wise tilting evolves the joint while enforcing the fixed-cost optimality condition at each step, and the covariance-type evolution equations (Eq. 3.4) are derived by differentiating the entropic OT dual along the marginal path without explicit cost estimation. The sample-level rules (Eq. 3.5) arise as Monte Carlo estimators of the resulting expectations. We concur that quantitative verification of implicit cost recovery would improve rigor, especially for mass transport outside the reference support. In the revised version, we will insert a new supporting lemma (Lemma 3.3) that bounds the deviation between the learned vector fields and the ideal fields in terms of reference sample size n and tilting increment δ, with high-probability guarantees under standard concentration assumptions on the reference samples. This lemma will be invoked to justify validity of the rules for new marginals and to tighten the extrapolation error term in the updated convergence analysis.

  Revision: yes
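The decomposition promised in the first response can be written schematically as follows. The symbols are assumptions introduced here: $\hat\pi$ is the coupling produced by the practical sampler, $\pi^\star$ the target EOT plan, $\varepsilon_{\mathrm{CFM}}$ the approximation error of the learned vector fields, $h$ the Euler step size, $\varepsilon_{\mathrm{ext}}(\delta)$ the support-extrapolation term, and $C_1, C_2, C_3$ unspecified constants. This is a sketch of the shape such a bound might take, not a result proved in the paper or on this page.

$$
W_1(\hat\pi, \pi^\star)
\;\le\;
\underbrace{C_1\,\delta}_{\text{ideal tilting}}
+ \underbrace{C_2\,\varepsilon_{\mathrm{CFM}}}_{\text{CFM training}}
+ \underbrace{C_3\,h}_{\text{Euler discretization}}
+ \underbrace{\varepsilon_{\mathrm{ext}}(\delta)}_{\text{extrapolation}}
$$

Under this reading, the practical sampler inherits the O(δ) rate only if the last three terms are themselves driven to O(δ), for example by taking $h \lesssim \delta$ and training until $\varepsilon_{\mathrm{CFM}} \lesssim \delta$.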

Circularity Check

No significant circularity; derivation builds from tilting dynamics to sampler and rate independently.

Full rationale

The paper derives sample-level rules from the iterative path-wise tilting process to obtain covariance-type evolution equations for transport vector fields, then integrates those dynamics with Conditional Flow Matching to produce the generative sampler, and separately states an O(δ) W1 convergence guarantee for the generated coupling to the target EOT plan. No equations or steps are shown that reduce the claimed convergence rate, the sampler, or the vector fields to a fitted parameter or input quantity defined inside the paper by construction. The central guarantee is presented as a derived bound on the tilting path rather than a tautology, and no load-bearing self-citation, ansatz smuggling, or renaming of known results is evident in the provided derivation outline. The chain remains self-contained against external benchmarks for the tilting and CFM components.

Axiom & Free-Parameter Ledger

axioms (2)

- Domain assumption: existence and uniqueness of entropic optimal couplings for given marginals.

- Domain assumption: the latent ground cost is identical for the reference and new marginals.

invented entities (1)

- Path-wise tilting algorithm: no independent evidence.

Lean theorems connected to this paper

- `IndisputableMonolith/Cost/FunctionalEquation.lean`, theorem `washburn_uniqueness_aczel` (relation: unclear). Matched passage: "We derive sample-level learning rules for these infinitesimal updates, which yield covariance-type evolution equations for the associated transport vector fields... global convergence rate of O(δ) ... in W1 distance (Theorem 6.2-6.3)."

- `IndisputableMonolith/Foundation/AlphaCoordinateFixation.lean`, theorem `J_uniquely_calibrated_via_higher_derivative` (relation: unclear). Matched passage: "$\pi^\star(x,y) \propto \pi'(x,y)\,\exp(\varphi(x)+\psi(y))$ ... $\dot\pi_t = \pi_t\,(a_t(X)+b_t(Y))$ ... $J_t(a,b) := \mathbb{E}_{\pi_t}\bigl[|a(X)+b(Y)|^2\bigr] - \dots$"

