
QDSB: Quantized Diffusion Schrödinger Bridges

Pith reviewed 2026-05-13 07:18 UTC · model grok-4.3

The pith

Anchor quantization yields stable regularized couplings for Schrödinger bridges whose error is bounded by approximation quality.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The regularized optimal coupling between two distributions remains stable under anchor quantization: the plan computed on the quantized marginals can be lifted cell-wise to the original points, and the resulting coupling's deviation from the true entropic optimum is bounded by the quality of the anchor approximation.

What carries the argument

Anchor quantization of the endpoint distributions followed by cell-wise lifting of the discrete optimal coupling plan.
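The quantize, solve, and lift steps named above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the anchors are chosen with plain Lloyd (k-means) iterations, the entropic OT problem on the anchors is solved with a basic Sinkhorn loop, and lifting samples a cell pair from the anchor plan and then a uniform point inside each cell. The function names `kmeans_anchors`, `sinkhorn`, and `sample_lifted_pairs`, the toy Gaussian data, and the choice of `eps` are all hypothetical.

```python
import numpy as np

def kmeans_anchors(x, k, iters=20, seed=0):
    """Assumed anchor choice: plain Lloyd iterations. Returns (anchors, labels)."""
    rng = np.random.default_rng(seed)
    anchors = x[rng.choice(len(x), size=k, replace=False)].copy()
    for _ in range(iters):
        d2 = ((x[:, None, :] - anchors[None, :, :]) ** 2).sum(-1)
        labels = d2.argmin(1)
        for c in range(k):
            members = x[labels == c]
            if len(members):
                anchors[c] = members.mean(0)
    # Recompute labels so they match the final anchor positions.
    d2 = ((x[:, None, :] - anchors[None, :, :]) ** 2).sum(-1)
    return anchors, d2.argmin(1)

def sinkhorn(a, b, cost, eps, iters=500):
    """Basic Sinkhorn scaling for entropic OT between weight vectors a and b."""
    K = np.exp(-cost / eps)
    u = np.ones_like(a)
    v = np.ones_like(b)
    for _ in range(iters):
        u = a / (K @ v)
        v = b / (K.T @ u)
    return u[:, None] * K * v[None, :]

def sample_lifted_pairs(plan, labels_x, labels_y, n_pairs, seed=0):
    """Cell-wise lifting: draw a cell pair from the anchor plan, then one
    uniformly random original point from each of the two cells."""
    rng = np.random.default_rng(seed)
    k_x, k_y = plan.shape
    flat = rng.choice(k_x * k_y, size=n_pairs, p=plan.ravel() / plan.sum())
    cx, cy = np.unravel_index(flat, plan.shape)
    members_x = [np.flatnonzero(labels_x == c) for c in range(k_x)]
    members_y = [np.flatnonzero(labels_y == c) for c in range(k_y)]
    i = np.array([rng.choice(members_x[c]) for c in cx])
    j = np.array([rng.choice(members_y[c]) for c in cy])
    return i, j

# Toy source and target samples (illustrative only).
rng = np.random.default_rng(0)
x = rng.normal(size=(400, 2))
y = rng.normal(size=(500, 2)) + np.array([4.0, 0.0])

k = 16
ax, lx = kmeans_anchors(x, k)
ay, ly = kmeans_anchors(y, k, seed=1)
a = np.bincount(lx, minlength=k) / len(x)   # anchor weights = cell masses
b = np.bincount(ly, minlength=k) / len(y)
cost = ((ax[:, None, :] - ay[None, :, :]) ** 2).sum(-1)
plan = sinkhorn(a, b, cost, eps=0.5 * cost.mean())

i, j = sample_lifted_pairs(plan, lx, ly, n_pairs=256)
pairs = np.stack([x[i], y[j]], axis=1)      # paired training samples
```

The Sinkhorn solve here touches a 16 x 16 cost matrix instead of a 400 x 500 one, which is the source of the claimed savings; the lifted pairs in `pairs` would then feed a simulation-free bridge-matching loss.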

If this is right

- Training time for simulation-free Schrödinger bridges drops because the entropic OT problem is solved only on the much smaller anchor set.

- Generated sample quality remains comparable to minibatch-based baselines on real data.

- The error introduced by quantization is explicitly controlled by the choice of anchors rather than by minibatch locality.

- The method extends to any setting that requires an entropic coupling between two distributions given as samples.

Where Pith is reading between the lines

- The same anchor-and-lift strategy could be applied to other regularized transport problems that currently rely on minibatch approximations.

- Adaptive anchor placement might further tighten the error bound without increasing the number of anchors.

- For very large datasets the approach opens a path to coupling computation that scales with the number of anchors rather than the number of samples.

Load-bearing premise

Anchor quantization must preserve enough of the global transport geometry so that the cell-wise lifted plan does not lose material quality relative to the unquantized solution.

What would settle it

An experiment in which, for a fixed quantization resolution, the Wasserstein-2 distance between the lifted QDSB coupling and the true entropic optimum exceeds the bound predicted by the anchor approximation error.
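A small-scale version of such an experiment could look like the sketch below, which is an assumption-laden illustration rather than the paper's protocol. On a problem small enough to solve the full entropic OT directly, it builds the lifted plan as a dense matrix and compares it with the full plan. Two simplifications are assumptions of this sketch: anchors are random data points with nearest-anchor cells, and the discrepancy is measured in total variation as a cheap proxy, not in the Wasserstein-2 distance named above. The anchor error is reported as the root-mean-square distance of points to their anchors, which upper-bounds the Wasserstein-2 distance between an empirical measure and its quantization.

```python
import numpy as np

def sq_dists(p, q):
    return ((p[:, None, :] - q[None, :, :]) ** 2).sum(-1)

def sinkhorn(a, b, cost, eps, iters=500):
    """Basic Sinkhorn scaling for entropic OT."""
    K = np.exp(-cost / eps)
    u = np.ones_like(a)
    v = np.ones_like(b)
    for _ in range(iters):
        u = a / (K @ v)
        v = b / (K.T @ u)
    return u[:, None] * K * v[None, :]

def quantize(x, k, seed):
    """Hypothetical anchor choice: k random data points, nearest-anchor cells."""
    rng = np.random.default_rng(seed)
    anchors = x[rng.choice(len(x), size=k, replace=False)]
    return anchors, sq_dists(x, anchors).argmin(1)

def rms_anchor_error(x, anchors, labels):
    """RMS distance to the assigned anchor (bounds W2 to the quantized measure)."""
    return np.sqrt(((x - anchors[labels]) ** 2).sum(-1).mean())

def lifted_dense_plan(plan, lx, ly):
    """Spread each cell pair's mass uniformly over the points in the two cells."""
    nx = np.bincount(lx, minlength=plan.shape[0])
    ny = np.bincount(ly, minlength=plan.shape[1])
    return plan[lx][:, ly] / (nx[lx][:, None] * ny[ly][None, :])

rng = np.random.default_rng(0)
n = 200
x = rng.normal(size=(n, 2))
y = rng.normal(size=(n, 2)) + np.array([3.0, 0.0])
eps = 0.5 * sq_dists(x, y).mean()
uniform = np.full(n, 1.0 / n)
full = sinkhorn(uniform, uniform, sq_dists(x, y), eps)  # reference plan

results = []
for k in (4, 16, 64):
    ax, lx = quantize(x, k, seed=1)
    ay, ly = quantize(y, k, seed=2)
    a = np.bincount(lx, minlength=k) / n
    b = np.bincount(ly, minlength=k) / n
    plan = sinkhorn(a, b, sq_dists(ax, ay), eps)
    lifted = lifted_dense_plan(plan, lx, ly)
    tv = 0.5 * np.abs(lifted - full).sum()              # proxy discrepancy
    q = max(rms_anchor_error(x, ax, lx), rms_anchor_error(y, ay, ly))
    results.append((k, q, tv))

for k, q, tv in results:
    print(f"k={k:3d}  anchor RMS error={q:.3f}  TV(lifted, full)={tv:.3f}")
```

Plotting the discrepancy against the anchor error across resolutions would show whether the two move together. A falsifying observation would be a discrepancy that stays above the paper's bound at a fixed resolution, which requires the bound's explicit constants from the manuscript.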

Original abstract

Learning generative models in settings where the source and target distributions are only specified through unpaired samples is gaining in importance. Here, one frequently used model class is the Schrödinger bridge (SB), which represents the most likely evolution between both endpoint distributions. To accelerate training, simulation-free SBs avoid the path simulation of the original SB models. However, learning simulation-free SBs requires paired data; a coupling of the source and target samples is obtained as the solution of the entropic optimal transport (OT) problem. As obtaining the optimal global coupling is infeasible in many practical cases, the entropic OT problem is iteratively solved on minibatches instead. Still, the repeated cost remains substantial and the locality can distort the global transport geometry. We propose quantized diffusion Schrödinger bridges (QDSB), which compute the endpoint coupling on anchor-quantized endpoint distributions and lift the resulting plan back to original data points through cell-wise sampling. We show that the regularized optimal coupling is stable w.r.t. anchor quantization, with an error controlled by the quality of the anchor approximation. In real-world experiments, QDSB matches the sample quality of existing baselines, requiring substantially less time. Code and data are available at github.com/mathefuchs/qdsb.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

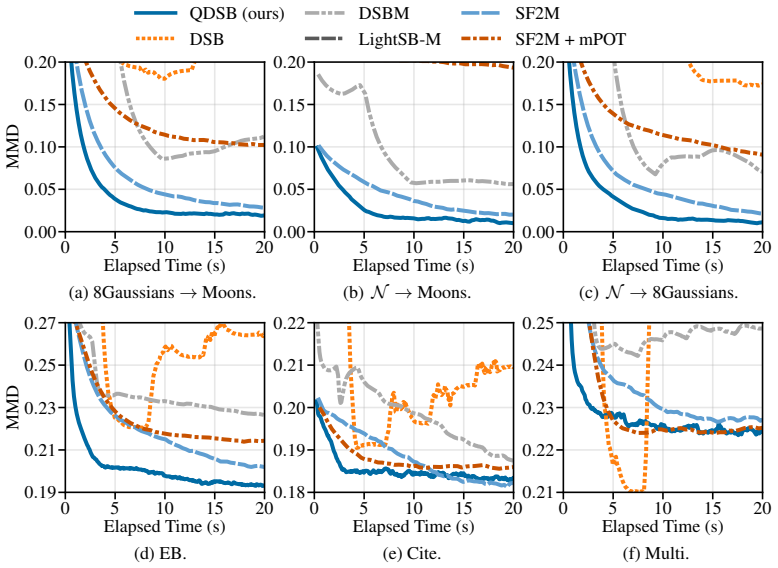

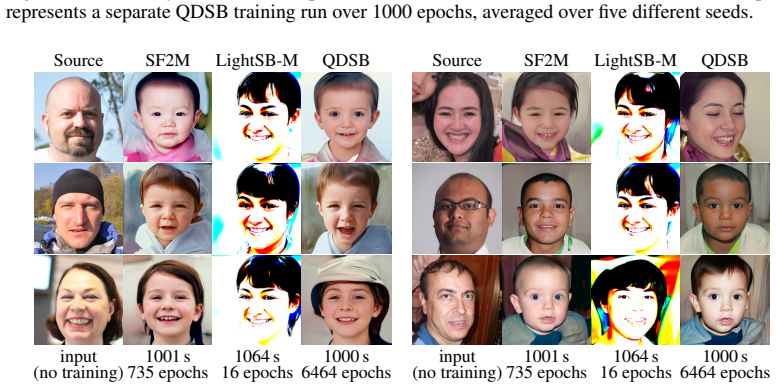

Summary. The manuscript proposes Quantized Diffusion Schrödinger Bridges (QDSB) to accelerate simulation-free Schrödinger bridge training from unpaired samples. It solves the entropic OT problem on anchor-quantized endpoint marginals rather than the full data, then lifts the resulting plan back to the original points via cell-wise sampling. The central theoretical claim is that the regularized optimal coupling remains stable under anchor quantization, with the approximation error controlled by anchor quality. Experiments report that QDSB matches baseline sample quality while requiring substantially less computation time.

Significance. If the stability result extends to the lifted coupling and the reported speed-ups hold without degradation in transport quality, QDSB would offer a practical route to scaling Schrödinger bridge models to large unpaired datasets by avoiding repeated full-batch entropic OT solves.

major comments (1)

- [Theoretical stability result] The stability result (abstract and theoretical section) is stated for the regularized optimal coupling between the anchor-quantized marginals. The deployed object, however, is the cell-wise lifted plan obtained by sampling original points inside each quantization cell. No derivation or bound is given for the additional discrepancy introduced by lifting (e.g., via cell diameter, intra-cell variance, or mismatch between intra-cell conditionals and the true transport map). This gap is load-bearing because the method's error-control claim rests on the lifted plan, not the quantized plan alone.

minor comments (2)

- [Abstract] The abstract states that QDSB 'matches the sample quality of existing baselines' but does not name the baselines, datasets, or quantitative metrics (FID, MMD, etc.). These details should be added for reproducibility.

- [Method] Notation for the quantization cells and the lifting operator is introduced without an explicit definition or diagram; a small illustrative figure would improve clarity.

Simulated Author's Rebuttal

We thank the referee for the detailed and constructive review. The major comment correctly identifies that our stability result is formulated for the quantized coupling, while the implemented procedure uses a lifted coupling. Below we provide a point-by-point response and commit to strengthening the theoretical section accordingly.


Referee: The stability result (abstract and theoretical section) is stated for the regularized optimal coupling between the anchor-quantized marginals. The deployed object, however, is the cell-wise lifted plan obtained by sampling original points inside each quantization cell. No derivation or bound is given for the additional discrepancy introduced by lifting (e.g., via cell diameter, intra-cell variance, or mismatch between intra-cell conditionals and the true transport map). This gap is load-bearing because the method's error-control claim rests on the lifted plan, not the quantized plan alone.

Authors: We agree that the current theorem bounds the entropic OT plan between the quantized marginals and that the practical output is the lifted plan. The lifting step samples original points from the empirical distribution inside each quantization cell. Because the cell diameter is governed by the quality of the anchor approximation (finer anchors yield smaller cells), the additional discrepancy between the quantized plan and the lifted plan is controlled by the same quantization error term already appearing in our stability result. Concretely, the Wasserstein distance between the two plans is at most the maximum cell radius, which vanishes as the anchor approximation improves. We will add a short lemma in the theoretical section that composes the existing stability bound with this cell-diameter term, thereby extending the error control directly to the lifted coupling used in the algorithm. This revision will be included in the next version of the manuscript.

Revision: yes
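The composition the authors describe amounts to a triangle inequality. The display below is a sketch of its shape only. All notation is assumed for illustration and is not taken from the paper: $\pi^{\star}$ is the true entropic optimum, $\pi^{Q}$ the optimum between the quantized marginals, $\pi^{\mathrm{lift}}$ the lifted plan, $r_{\max}$ the maximum cell radius, $\delta$ a placeholder for the paper's stability term, and $C$ a constant that depends on the metric chosen on the product space.

```latex
% Notation assumed for illustration; the paper's lemma may differ in form.
\[
W\bigl(\pi^{\mathrm{lift}}, \pi^{\star}\bigr)
\;\le\;
\underbrace{W\bigl(\pi^{\mathrm{lift}}, \pi^{Q}\bigr)}_{\le\; C\, r_{\max}}
\;+\;
\underbrace{W\bigl(\pi^{Q}, \pi^{\star}\bigr)}_{\le\; \delta(\text{anchor approximation error})}
\]
```

The first term is controlled by moving each lifted pair to its pair of anchors; with a Euclidean product metric this costs at most $\sqrt{2}\, r_{\max}$ per pair, so $C = 1$ holds only for particular choices of product metric.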

Circularity Check

No circularity: stability theorem is an independent result


The paper's central claim is a stability result for the regularized OT coupling under anchor quantization, with error controlled by anchor approximation quality. This is presented as a mathematical theorem derived from properties of entropic OT and quantization, not by fitting parameters to data or redefining quantities in terms of themselves. The quantization step and cell-wise lifting are algorithmic choices justified by the stability bound rather than presupposed by it. No equations reduce the claimed result to a fitted input or self-referential definition, and no load-bearing step relies on self-citation chains that collapse to unverified premises. The derivation remains self-contained against external OT theory.

Axiom & Free-Parameter Ledger

free parameters (1)

- number of anchors / quantization granularity

axioms (1)

- [standard math] Entropic optimal transport admits a unique regularized solution

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean, washburn_uniqueness_aczel (relation: unclear). Matched passage: "We show that the regularized optimal coupling is stable w.r.t. anchor quantization, with an error controlled by the quality of the anchor approximation."

- IndisputableMonolith/Foundation/BranchSelection.lean, branch_selection (relation: unclear). Matched passage: "the entropic OT problem is iteratively solved on minibatches instead... compute the endpoint coupling on anchor-quantized endpoint distributions"

