Recognition: no theorem link

LegalCheck: Retrieval- and Context-Augmented Generation for Drafting Municipal Legal Advice Letters

Pith reviewed 2026-05-13 04:58 UTC · model grok-4.3

The pith

A retrieval- and context-augmented system generates near-final municipal legal advice letters in minutes rather than hours.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

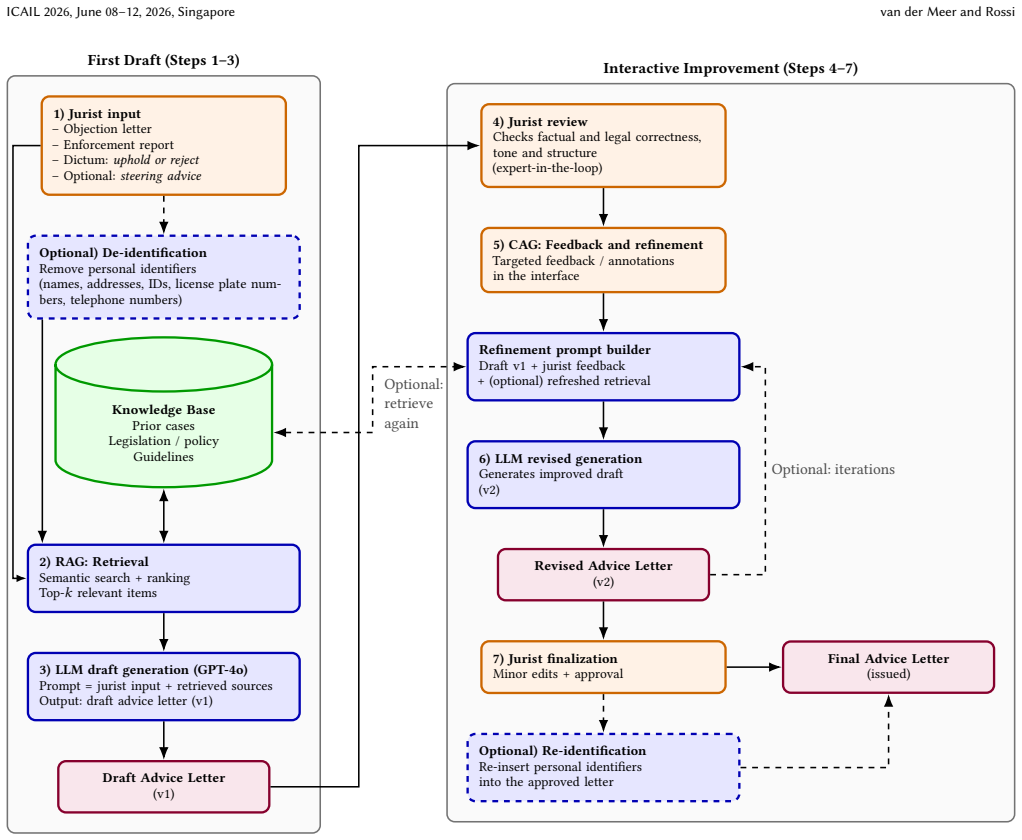

LegalCheck automates the drafting of objection response letters by retrieving relevant laws and precedents, using controlled prompting to incorporate external knowledge and case-specific details into a coherent draft, and relying on expert-in-the-loop review to confirm legal soundness. In the Amsterdam deployment, it produced near-final advice letters in minutes rather than hours, with outputs grounded in actual regulations and prior cases that included the vast majority of required legal reasoning.

What carries the argument

The LegalCheck pipeline, which pairs Retrieval-Augmented Generation to pull laws and precedents with Context-Augmented Generation to tailor content to each case, all wrapped in an expert-in-the-loop review.
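To make the division of labour concrete, here is a minimal Python sketch of a retrieve-then-contextualise pipeline of the kind described above. It is not the authors' implementation. The paper as summarised here does not specify its retriever, prompt template, or data model, so several pieces are hypothetical: the word-overlap ranking (a stand-in for BM25 or embedding search), the names `Source`, `retrieve`, `build_prompt`, and `draft_letter`, and the example knowledge-base entries. The LLM call itself is omitted. The sketch stops at the controlled prompt and leaves approval to the human reviewer.

```python
from dataclasses import dataclass


@dataclass
class Source:
    """One entry in a curated legal knowledge base (statute article or prior decision)."""
    ref: str   # citation label used in the draft (hypothetical format)
    text: str


@dataclass
class Draft:
    prompt: str             # controlled prompt that would be sent to the LLM
    cited_refs: list[str]   # sources the draft is allowed to rely on
    approved: bool = False  # set only by the human reviewer, never by the pipeline


def tokenize(s: str) -> set[str]:
    """Lower-case words with surrounding punctuation removed."""
    words = (w.strip(".,;:()").lower() for w in s.split())
    return {w for w in words if w}


def retrieve(query: str, kb: list[Source], k: int = 2) -> list[Source]:
    """Retrieval step (sketch): rank sources by word overlap with the query and
    keep the top k that share at least one word with it."""
    q = tokenize(query)
    ranked = sorted(kb, key=lambda s: len(q & tokenize(s.text)), reverse=True)
    return [s for s in ranked[:k] if q & tokenize(s.text)]


def build_prompt(objection: str, case_facts: dict[str, str], sources: list[Source]) -> str:
    """Context step (sketch): combine case-specific facts and retrieved sources
    into a prompt that instructs the model to cite only the listed material."""
    facts = "\n".join(f"- {key}: {value}" for key, value in case_facts.items())
    refs = "\n".join(f"[{s.ref}] {s.text}" for s in sources)
    return (
        "Draft a response to the objection below. Cite only the sources listed.\n\n"
        f"Objection:\n{objection}\n\nCase facts:\n{facts}\n\nSources:\n{refs}\n"
    )


def draft_letter(objection: str, case_facts: dict[str, str], kb: list[Source]) -> Draft:
    sources = retrieve(objection, kb)
    prompt = build_prompt(objection, case_facts, sources)
    # The LLM call is deliberately left out; its output would go to an expert reviewer.
    return Draft(prompt=prompt, cited_refs=[s.ref for s in sources])


# Invented example entries for illustration only; they are not real regulations.
EXAMPLE_KB = [
    Source("Art. A", "Parking permits are issued per household."),
    Source("Decision B", "An objection must be filed within six weeks."),
    Source("Art. C", "Noise limits apply to terraces."),
]
```

With an objection about a refused parking permit, only the parking entry shares words with the query, so it is the only source placed in the prompt. Keeping `approved` outside the pipeline's control mirrors the expert-in-the-loop design: nothing the code does can mark a draft as final.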

If this is right

- Substantial reduction in drafting time for objection letters.

- Improved consistency in how legal standards are applied across cases.

- Positive acceptance by legal professionals who retain final judgment.

- Explainable outputs that cite the underlying regulations and precedents.

- Demonstration that responsible AI deployment is possible in the legal domain through augmentation rather than full automation.

Where Pith is reading between the lines

- The same retrieval-plus-context approach could extend to other administrative law tasks such as permit reviews or compliance checks.

- Curated knowledge bases would need regular updates to track changes in regulations or new case law.

- If expert review becomes a bottleneck at higher volumes, the system might require additional automated checks for common error patterns.

- Deployment in other municipalities would depend on creating equivalent curated legal databases tailored to local rules.
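The automated checks for common error patterns suggested above could start with something as simple as the following Python sketch. It assumes, hypothetically, that drafts mark citations in square brackets and that each objection type has a checklist of required sources. It flags citations that do not appear among the retrieved sources (a possible hallucination) and required sources that the draft never cites (a possible omission). The names `check_citations` and `needs_attention` are illustrative. A check like this would only triage drafts for the reviewer; it would not replace review.

```python
import re

# Assumption: the draft marks citations in square brackets, e.g. "[Art. A]".
CITATION = re.compile(r"\[([^\[\]]+)\]")


def check_citations(draft_text: str, retrieved_refs: set[str],
                    required_refs: set[str]) -> dict[str, list[str]]:
    """Flag two error patterns before expert review:
    'ungrounded' = cited in the draft but not among the retrieved sources;
    'missing'    = required by the checklist but never cited in the draft."""
    cited = set(CITATION.findall(draft_text))
    return {
        "ungrounded": sorted(cited - retrieved_refs),
        "missing": sorted(required_refs - cited),
    }


def needs_attention(report: dict[str, list[str]]) -> bool:
    """True if any check fired; the reviewer still makes the final call."""
    return any(report.values())
```

For a draft that cites `[Art. A]` and an unretrieved `[Decision X]` but omits a required `Decision B`, the report lists `Decision X` as ungrounded and `Decision B` as missing, so the draft is flagged for closer reading.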

Load-bearing premise

Expert-in-the-loop review combined with retrieval from curated legal knowledge bases is enough to prevent legally significant errors or omissions in the generated drafts.

What would settle it

A generated letter that omits or misstates a key legal requirement or precedent, is approved by the expert reviewer, and later leads to an incorrect decision that is successfully challenged on appeal.


Original abstract

Public-sector legal departments in the Netherlands face acute staff shortages, increased case volumes, and increased pressure to meet regulatory compliance. This paper presents LegalCheck, a novel system that addresses these challenges by automating the drafting of objection response letters through a combination of Retrieval-Augmented Generation (RAG) and Context-Augmented Generation (CAG). Using a large language model (LLM) alongside curated legal knowledge bases, LegalCheck performs retrieval of relevant laws and precedents, and uses controlled prompting to incorporate both external knowledge and case-specific details into a coherent draft. An expert-in-the-loop review ensures that each generated letter is legally sound and contextually appropriate. In a real-world deployment within the Municipality of Amsterdam, LegalCheck produced near-final advice letters in minutes rather than hours, while maintaining high legal consistency and factual accuracy. The output is based on actual regulations and prior cases, providing explainable outputs that captured the vast majority of required legal reasoning (often 80% to 100% of essential content). Legal professionals found that the system reduced their workload and ensured a consistent application of legal standards, without replacing human judgment. These results demonstrate substantial efficiency gains, improved legal consistency, and positive user acceptance. More broadly, this work illustrates how responsible AI can be deployed in the legal domain by augmenting LLMs with domain knowledge and governance mechanisms.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper presents LegalCheck, a system that combines Retrieval-Augmented Generation (RAG) and Context-Augmented Generation (CAG) with LLMs and curated legal knowledge bases to draft municipal objection response letters. It incorporates controlled prompting for external knowledge and case details, followed by expert-in-the-loop review. The central claims concern a real-world deployment in the Municipality of Amsterdam, where the system produced near-final letters in minutes rather than hours, captured 80-100% of essential legal reasoning, maintained high consistency and accuracy, reduced workload, and ensured consistent legal standards without replacing human judgment.

Significance. If the deployment outcomes hold under rigorous scrutiny, the work would demonstrate a practical, governed application of LLMs in a high-stakes public-sector legal setting, addressing staff shortages through knowledge-augmented generation and human oversight. The emphasis on explainable, regulation-grounded outputs and retention of expert review offers a template for responsible AI deployment; however, the current lack of supporting data limits its immediate contribution to the literature on AI-assisted legal drafting.

major comments (2)

- [Abstract] The performance claims (near-final letters in minutes vs. hours; 80-100% capture of essential legal reasoning; high consistency and factual accuracy) are asserted without any reported sample size, quantitative metrics, baselines, error analysis, definition of 'essential content,' or inter-rater reliability measures. This absence directly undermines verification of the central efficiency and reliability assertions.

- [Deployment description] The expert-in-the-loop review is described as ensuring legal soundness, yet no protocol details, measurement of residual errors/omissions, comparison against unaided drafting, or assessment of whether retrieval/prompting failures are systematically caught are supplied. This leaves the sufficiency of the human safeguard untested and the workload-reduction claim unsupported.

minor comments (1)

- [Abstract] The acronym 'CAG' is introduced without an explicit definition or citation distinguishing it from standard RAG techniques.

Simulated Author's Rebuttal

We thank the referee for their constructive feedback on our manuscript describing the LegalCheck system. We appreciate the emphasis on the need for greater transparency in reporting the deployment outcomes. Below, we provide point-by-point responses to the major comments, outlining how we will revise the paper to address the concerns while maintaining the integrity of our reported experiences.

Point-by-point responses

- Referee: [Abstract] The performance claims (near-final letters in minutes vs. hours; 80-100% capture of essential legal reasoning; high consistency and factual accuracy) are asserted without any reported sample size, quantitative metrics, baselines, error analysis, definition of 'essential content,' or inter-rater reliability measures. This absence directly undermines verification of the central efficiency and reliability assertions.

Authors: The referee correctly identifies that the abstract makes strong claims without accompanying quantitative details. This is because the paper reports on a practical deployment rather than a laboratory-style evaluation with predefined metrics. The 80-100% figure and the time savings were observed by the legal team during use, but no formal count of cases and no error analysis were performed. We will revise the abstract to qualify these claims as 'observed in deployment' and add a new subsection detailing the evaluation approach used by the experts, including how 'essential content' was defined (the key legal arguments and references required for a complete response). We will also explicitly state the lack of baselines and inter-rater measures as a limitation of the current study. revision: yes

- Referee: [Deployment description] The expert-in-the-loop review is described as ensuring legal soundness, yet no protocol details, measurement of residual errors/omissions, comparison against unaided drafting, or assessment of whether retrieval/prompting failures are systematically caught are supplied. This leaves the sufficiency of the human safeguard untested and the workload-reduction claim unsupported.

Authors: We will provide more protocol details in the revised manuscript, describing the steps the experts followed in reviewing the drafts, such as verifying citations to laws and precedents, checking for logical consistency, and ensuring that the response addresses each objection point. However, we did not measure residual errors after review or compare against unaided drafting, as the deployment was not designed as an A/B test. The workload reduction is supported by anecdotal reports from users who noted faster turnaround. We will add this information and acknowledge that the effectiveness of the human safeguard is assumed on the basis of the reviewers' expertise rather than quantified. This is a genuine limitation we will highlight. revision: partial
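The rebuttal concedes that the 80-100% figure was observed rather than counted. Under the authors' stated definition of essential content (the key legal arguments and references required for a complete response), a per-letter figure could be computed from a reviewer checklist as the share of items present, and the reported range as the minimum and maximum over letters. The Python sketch below shows only that arithmetic. The checklist items and function names are hypothetical, and it does not address inter-rater reliability, which would require at least two reviewers scoring the same letters.

```python
from fractions import Fraction


def coverage(essential: list[str], found_in_draft: set[str]) -> Fraction:
    """Share of checklist items (key legal arguments and references) that the
    reviewer marked as present in one draft."""
    if not essential:
        raise ValueError("the checklist needs at least one essential item")
    hits = sum(1 for item in essential if item in found_in_draft)
    return Fraction(hits, len(essential))


def coverage_range(letters: list[tuple[list[str], set[str]]]) -> tuple[Fraction, Fraction]:
    """Lowest and highest coverage over a set of reviewed letters."""
    scores = [coverage(essential, found) for essential, found in letters]
    return min(scores), max(scores)


# Hypothetical checklist items for one objection type.
CHECKLIST = ["legal basis", "deadline check", "relevant precedent",
             "proportionality", "conclusion"]
```

For example, a letter in which the reviewer ticks four of the five checklist items scores 4/5, or 80%, and a fully covered letter scores 100%. Using `Fraction` keeps the ratios exact until they are reported.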

Circularity Check

No circularity: system description with no derivations or fitted parameters

full rationale

The paper is a descriptive account of a RAG/CAG system for drafting legal letters, augmented by expert review, with a high-level report of an Amsterdam deployment. No equations, parameters, or predictive derivations exist that could reduce to their own inputs by construction. The central claims rest on qualitative deployment outcomes rather than any self-referential fitting, uniqueness theorem, or ansatz smuggling. Absence of mathematical structure means none of the enumerated circularity patterns apply; the evaluation limitations noted in the referee report concern evidence strength, not internal circularity.


Reference graph

Works this paper leans on

- [1] AI4Citizens. 2025. Ethical leaflet: Get transparency about the moral implications of technology used. Interreg Europe – Good practices. https://www.interregeurope.eu/good-practices/ethical-leaflet-get-transparency-about-moral-implications-of-technology-used

- [2] Nikolaos Aletras, Dimitrios Tsarapatsanis, Daniel Preotiuc-Pietro, and Vasileios Lampos. 2016. Predicting Judicial Decisions of the European Court of Human Rights: A Natural Language Processing Perspective. PeerJ Computer Science 2 (2016), e93. doi:10.7717/peerj-cs.93

- [3] Saar Alon-Barkat and Madalina Busuioc. 2023. Human–AI Interactions in Public Sector Decision Making: “Automation Bias” and “Selective Adherence” to Algorithmic Advice. Journal of Public Administration Research and Theory 33, 1 (Jan. 2023), 153–169. doi:10.1093/jopart/muac007

- [4] Saleema Amershi, Daniel Weld, Mihaela Vorvoreanu, Adam Fourney, Besmira Nushi, Penny Collisson, Jina Suh, Shamsi Iqbal, Paul N. Bennett, Kori Inkpen, and Jaime Teevan. 2019. Guidelines for Human–AI Interaction. In Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems (CHI ’19). ACM, Article 3, 1–13. doi:10.1145/3290605.3300233

- [5] Alejandro Barredo Arrieta, Natalia Díaz-Rodríguez, Javier Del Ser, Adrien Bennetot, Siham Tabik, Alberto Barbado, Salvador García, Sergio Gil-López, Daniel Molina, Richard Benjamins, Raja Chatila, and Francisco Herrera. 2020. Explainable artificial intelligence (XAI): Concepts, taxonomies, opportunities and challenges toward responsible AI. Information Fus...

- [6] Kevin D. Ashley. 2017. Artificial Intelligence and Legal Analytics: New Tools for Law Practice in the Digital Age. Cambridge University Press.

- [7] Rishi Bommasani et al. 2021. On the Opportunities and Risks of Foundation Models. Technical Report. Stanford Institute for Human-Centered Artificial Intelligence. arXiv:2108.07258. https://arxiv.org/abs/2108.07258

- [8] Colleen V. Chien and M. Kim. 2024. Generative AI and Legal Aid: Results from a Field Study and 100 Use Cases to Bridge the Access to Justice Gap. SSRN Working Paper (UC Berkeley Public Law Research Paper; forthcoming in Loyola of Los Angeles Law Review). https://ssrn.com/abstract=4733061

- [9] Thomas H. Davenport and Rajeev Ronanki. 2018. Artificial Intelligence for the Real World. Harvard Business Review 96, 1 (2018), 108–116.

- [10] European Commission: Directorate-General for Communications Networks, Content and Technology and High-Level Expert Group on Artificial Intelligence. 2019. Ethics Guidelines for Trustworthy AI. doi:10.2759/346720

- [11] European Union. 2016. Regulation (EU) 2016/679 (General Data Protection Regulation). Official Journal of the European Union, L 119, 1–88. https://eur-lex.europa.eu/eli/reg/2016/679/oj

- [12] Samer Faraj, Stella Pachidi, and Karim Sayegh. 2018. Working and organizing in the age of the learning algorithm. Information and Organization 28, 1 (2018), 62–70. doi:10.1016/j.infoandorg.2018.02.005

- [13] Gemeente Amsterdam. 2024. Amsterdam’s vision on AI (English version). https://www.amsterdam.nl/innovatie/amsterdamse-visie-ai/

- [14] T. Haesevoets, B. Verschuere, and A. Roets. 2025. AI adoption in public administration: Perspectives of public sector managers and public sector non-managerial employees. Government Information Quarterly 42, 2 (2025), 102029. doi:10.1016/j.giq.2025.102029

- [15] Yousra Hashem, Jonathan Bright, Shreya Chakraborty, Kait Onslow, James Francis, A. Poletav, and S. Esnaashari. 2025. Mapping the Potential: Generative AI and Public Sector Work. Using time use data to identify opportunities for AI adoption in Great Britain’s public sector. https://www.turing.ac.uk/sites/default/files/2025-05/ons_tus_final_report.pdf

- [16] Kenneth Holstein, Jennifer Wortman Vaughan, Hal Daumé III, Miro Dudik, and Hanna Wallach. 2019. Improving Fairness in Machine Learning Systems: What Do Industry Practitioners Need? In Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems (CHI ’19). ACM, 1–16. doi:10.1145/3290605.3300830

- [17] Daniel Martin Katz, Michael J. Bommarito, and Josh Blackman. 2017. A General Approach for Predicting the Behavior of the Supreme Court of the United States. PLoS ONE 12, 4 (2017), e0174698. doi:10.1371/journal.pone.0174698

- [18] John P. Kotter. 1996. Leading Change. Harvard Business School Press.

- [19] Patrick Lewis, Ethan Perez, Aleksandra Piktus, Fabio Petroni, Vladimir Karpukhin, Naman Goyal, Heinrich Küttler, Mike Lewis, Wen-tau Yih, Tim Rocktäschel, Sebastian Riedel, and Douwe Kiela. 2020. Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks. In Advances in Neural Information Processing Systems 33. 9459–9474.

- [20] Chih-Hao Lin and Pei-Ju Cheng. 2024. Legal documents drafting with fine-tuned pre-trained large language model. In Proceedings of the 12th International Conference on Software Engineering & Trends (SE 2024). Copenhagen, Denmark. doi:10.48550/arXiv.2406.04202

- [21] Varun Magesh, F. Surani, M. Dahl, Mirac Suzgun, Christopher D. Manning, and Daniel E. Ho. 2024. Hallucination-free? Assessing the reliability of leading AI legal research tools. arXiv preprint. doi:10.48550/arXiv.2405.20362

- [22]

- [23] OpenAI. 2024. Hello GPT-4o. https://openai.com/nl-NL/index/hello-gpt-4o/

- [24] PwC. 2023. Half of Dutch jobs might be significantly changed by generative AI. PwC Netherlands. https://www.pwc.nl/en/insights-and-publications/themes/the-future-of-work/half-of-dutch-jobs-might-be-significantly-changed-by-generative-ai.html

- [25] Jirui Qi, Gabriele Sarti, Raquel Fernández, and Arianna Bisazza. 2024. Model Internals-based Answer Attribution for Trustworthy Retrieval-Augmented Generation. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing. Association for Computational Linguistics, Miami, Florida, USA. doi:10.18653/v1/2024.emnlp-main.347

- [26] Daniel Schwarcz, Sam Manning, Patrick J. Barry, David R. Cleveland, J. J. Prescott, and Beverly Rich. 2025. AI-Powered Lawyering: AI Reasoning Models, Retrieval Augmented Generation, and the Future of Legal Practice. Technical Report. Minnesota Legal Studies Research Paper No. 25-16 (SSRN). Available at SSRN: https://ssrn.com/abstract=5162111

- [27]

- [28] Peizhang Shao, Linrui Xu, Jinxi Wang, Wei Zhou, and Xingyu Wu. 2025. When Large Language Models Meet Law: Dual-Lens Taxonomy, Technical Advances, and Ethical Governance. doi:10.48550/arXiv.2507.07748

- [29] Benedict Sheehy and Yee-Fui Ng. 2024. The Challenges of AI-Decision-Making in Government and Administrative Law: A Proposal for Regulatory Design. Indiana Law Review 57, 3 (June 2024), 665–699. doi:10.18060/28360

- [30] Harry Surden. 2019. Artificial Intelligence and Law: An Overview. Georgia State University Law Review 35, 4 (2019), 1305–1337.

- [31] Richard Susskind. 2019. Tomorrow’s Lawyers: An Introduction to Your Future (2nd ed.). Oxford University Press.

- [32] Thomson Reuters. 2025. Less drudge, more expertise: How AI is redefining the future of legal professionals in Australia. The Guardian (Thomson Reuters AI Futures). https://www.theguardian.com/thomson-reuters-ai-futures/2025/jul/21/less-drudge-more-expertise-how-ai-is-redefining-the-future-of-legal-professionals-in-australia

- [33] European Union. 2024. Regulation (EU) 2024/1689 laying down harmonised rules on artificial intelligence (AI Act). Official Journal of the European Union (2024).

- [34] Vereniging van Nederlandse Gemeenten (VNG). 2024. Pilot big data & AI-tools voor efficiëntere afhandeling bezwaarschriften. VNG website (Oct 29, 2024). https://vng.nl/artikelen/pilot-big-data-ai-tools-voor-efficientere-afhandeling-bezwaarschriften

- [35] Andrei F. Vatamanu and M. Tofan. 2025. Integrating artificial intelligence into public administration: Challenges and vulnerabilities. Administrative Sciences 15, 4 (2025), 149. doi:10.3390/admsci15040149

- [36] Viswanath Venkatesh, Michael G. Morris, Gordon B. Davis, and Fred D. Davis. 2003. User Acceptance of Information Technology: Toward a Unified View. MIS Quarterly 27, 3 (2003), 425–478.

- [38] S. Weerts. 2025. Generative AI in public administration in light of the regulatory awakening in the US and EU. Cambridge Forum on AI: Law and Governance (2025), e3. doi:10.1017/cfl.2024.10

- [39] N. Wiratunga, R. Abeyratne, L. Jayawardena, K. Martin, S. Massie, I. Nkisi-Orji, R. Weerasinghe, A. Liret, and B. Fleisch. 2024. CBR-RAG: Case-Based reasoning for retrieval augmented generation in LLMs for legal question answering. arXiv preprint. doi:10.48550/arXiv.2404.04302

- [40] L. Wrzesniowska. 2023. Can AI make a case? AI vs. lawyer in the Dutch legal context. Master’s thesis, University of Amsterdam; later appearing in The International Journal of Law, Ethics, and Technology.

- [41] Liming Zhu, Qinghua Lu, Ding Ming, Sung Une Lee, and Chen Wang. 2025. Designing Meaningful Human Oversight in AI. SSRN working paper. doi:10.2139/ssrn.5501939

LegalCheck: Retrieval- and Context-Augmented Generation for Drafting Municipal Legal Advice Letters · ICAIL 2026, June 08–12, 2026, Singapore
