Recognition: no theorem link

Efficient TV regularization of large-scale linear inverse problems via the SCD semismooth* Newton method with applications in tomography

Pith reviewed 2026-05-13 04:28 UTC · model grok-4.3

The pith

The SCD semismooth* Newton method minimizes TV-regularized Tikhonov functionals efficiently for large-scale linear inverse problems.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The SCD semismooth* Newton method, employing a novel concept of graphical derivatives, can be tailored to the nonsmooth total-variation penalty so that the resulting algorithm solves large-scale Tikhonov functionals with locally superlinear convergence and strong mathematical guarantees, without resorting to smoothing approximations.

What carries the argument

The SCD semismooth* Newton method, which uses graphical derivatives to handle the nonsmooth TV penalty term while preserving local superlinear convergence.

If this is right

- TV-regularized reconstructions of large linear inverse problems become feasible without introducing smoothing error.

- The algorithm inherits local superlinear convergence from the semismooth* Newton framework.

- The method applies directly to tomographic imaging and other large-scale linear inverse problems.

- Strong convergence guarantees are available without additional regularization of the penalty term.

Where Pith is reading between the lines

- The approach may reduce the need for hand-tuned smoothing parameters in practical TV imaging pipelines.

- Similar semismooth* techniques could be developed for other nonsmooth penalties such as total generalized variation.

- If the method scales to three-dimensional volumes, it could support faster iterative reconstruction in clinical CT workflows.

- The graphical-derivative framework might be reused for related nonsmooth problems in optimal control or sparse recovery.

Load-bearing premise

The semismooth* Newton method can be applied to the nonsmooth TV penalty without requiring excessive computational resources or losing its superlinear convergence rate on large-scale problems.

What would settle it

Numerical runs on the two tomographic test problems that fail to exhibit superlinear convergence rates or that require more CPU time than standard smoothed or proximal-gradient TV solvers.

Figures

read the original abstract

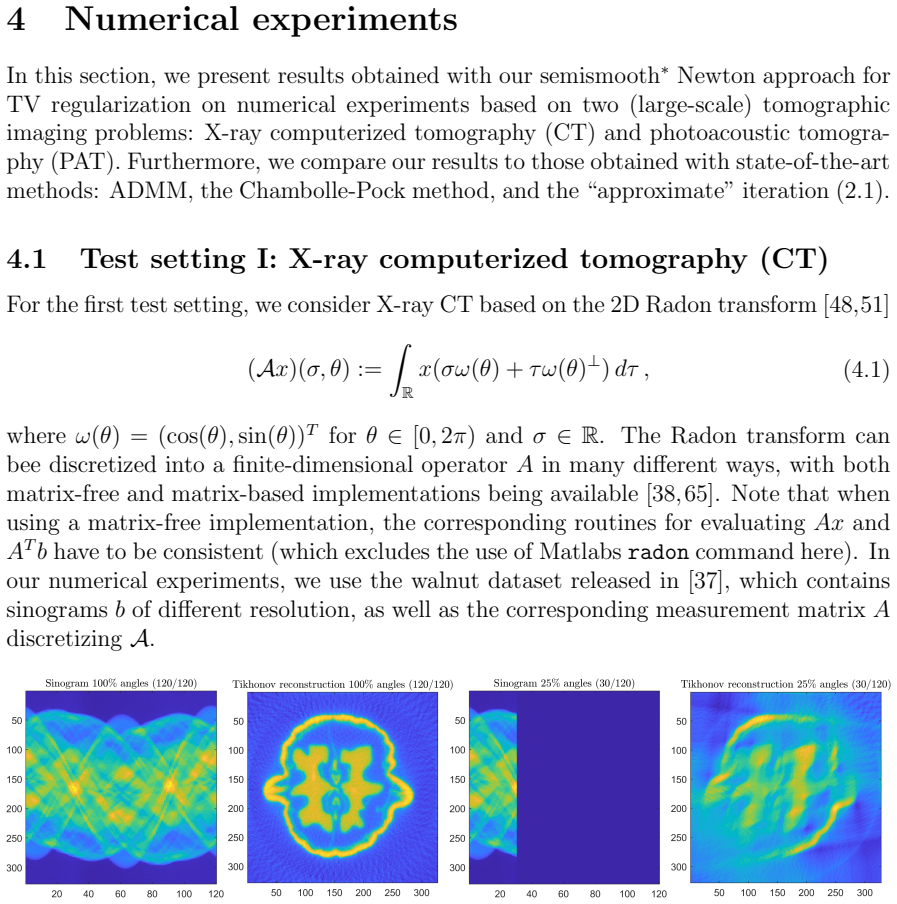

In this paper, we consider the efficient numerical minimization of Tikhonov functionals resulting from total-variation (TV) regularization of linear inverse problems. Since the TV penalty is non-smooth, this is typically done either via smooth approximations, which are inexact, or using non-smooth optimization techniques, which can often be numerically expensive, in particular for large-scale problems. Here, we present a numerically efficient minimization approach based on the recently proposed semismooth* Newton method, which employs a novel concept of graphical derivatives and exhibits locally superlinear convergence. The proposed approach is specifically tailored to TV regularization, suitable for large-scale inverse problems, and supported by strong mathematical convergence guarantees. Furthermore, we demonstrate its performance on two (large-scale) tomographic imaging problems and compare our results to those obtained via other state-of-the-art TV regularization approaches.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes an efficient numerical method for minimizing Tikhonov functionals with total-variation (TV) regularization for linear inverse problems. It introduces a tailored SCD semismooth* Newton approach based on graphical derivatives that is claimed to achieve locally superlinear convergence with strong theoretical guarantees, to be suitable for large-scale problems, and to outperform or match state-of-the-art methods when applied to tomographic imaging examples.

Significance. If the central claims on efficiency and observed superlinear rates hold for discrete TV on large grids, the work would provide a valuable, rigorously grounded alternative to smoothing or proximal methods for TV-regularized tomography and similar inverse problems, potentially reducing computational cost while preserving exact non-smooth handling.

major comments (2)

- [§3.2] §3.2 (SCD semismooth* Newton step for the TV term): the construction of the graphical derivative for the discrete anisotropic/isotropic TV seminorm must be shown to produce a linear system whose assembly and solve remain O(N) (or better) per iteration even when the active-set pattern changes across a 2-D or 3-D grid; without an explicit complexity argument or flop-count table, the efficiency claim for large-scale tomography cannot be verified.

- [§4] §4 (Numerical results on tomographic problems): the reported iteration counts and CPU times for the proposed method versus competing TV solvers do not include per-iteration breakdown of active-set identification cost or condition-number monitoring of the Newton systems; this leaves open whether the locally superlinear rate is realized in practice before the active-set pattern stabilizes on realistic grid sizes.

minor comments (2)

- [§2] The notation for the graphical derivative operator (e.g., the selection of the subdifferential elements) should be introduced with a short self-contained definition before its use in the Newton system, rather than relying solely on the external reference.

- [§4] Figure captions for the tomographic reconstructions should state the exact grid dimensions, noise level, and regularization parameter used, to allow direct reproduction of the timing comparisons.

Simulated Author's Rebuttal

We thank the referee for their thorough review and constructive feedback on our manuscript. We address each of the major comments below and will make the necessary revisions to strengthen the presentation of the method's efficiency and numerical performance.

read point-by-point responses

-

Referee: [§3.2] §3.2 (SCD semismooth* Newton step for the TV term): the construction of the graphical derivative for the discrete anisotropic/isotropic TV seminorm must be shown to produce a linear system whose assembly and solve remain O(N) (or better) per iteration even when the active-set pattern changes across a 2-D or 3-D grid; without an explicit complexity argument or flop-count table, the efficiency claim for large-scale tomography cannot be verified.

Authors: We agree that an explicit complexity analysis would enhance the clarity of §3.2. The graphical derivative for the TV term results in a sparse linear system where each row/column corresponds to local interactions on the grid, allowing assembly in O(N) time and solution via multigrid or iterative solvers that scale linearly for the structured systems arising in tomography. We will add a dedicated paragraph or subsection in the revised manuscript providing the flop-count estimates and confirming the O(N) per-iteration cost independent of the active-set changes, as the support of the derivative remains local. revision: yes

-

Referee: [§4] §4 (Numerical results on tomographic problems): the reported iteration counts and CPU times for the proposed method versus competing TV solvers do not include per-iteration breakdown of active-set identification cost or condition-number monitoring of the Newton systems; this leaves open whether the locally superlinear rate is realized in practice before the active-set pattern stabilizes on realistic grid sizes.

Authors: Thank you for this observation. While our current numerical results demonstrate overall efficiency through total iteration counts and CPU times, we acknowledge that detailed per-iteration metrics would better illustrate the practical realization of the superlinear convergence. In the revised version, we will include additional data or plots showing the evolution of active-set sizes, identification costs, and condition number estimates of the Newton systems across iterations for the tomographic examples. Our experiments indicate that the active-set stabilizes after a small number of iterations, after which the superlinear rate is observed, leading to the reported low total iteration counts. revision: yes

Circularity Check

No significant circularity detected in derivation chain

full rationale

The paper applies the externally proposed semismooth* Newton method (with graphical derivatives) to the TV-regularized inverse problem, tailoring the framework for discrete TV seminorms on grids and providing convergence analysis based on the general theory of the method. No step reduces a claimed result to a fitted parameter, self-definition, or load-bearing self-citation chain; the numerical examples on tomographic problems serve as validation rather than derivation. The central claims of efficiency and superlinear convergence rest on the independent properties of the SCD variant and the structure of the discrete gradient operator, without the result being equivalent to its inputs by construction.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

-

[1]

R. Acar and C. R. Vogel. Analysis of bounded variation penalty methods for ill-posed problems. Inverse Problems, 10(6):1217–1229, 1994

work page 1994

- [2]

-

[3]

Oxford Mathematical Monographs

Luigi Ambrosio, Nicola Fusco, and Diego Pallara.Functions of bounded variation and free dis- continuity problems. Oxford Mathematical Monographs. The Clarendon Press, Oxford University Press, New York, 2000

work page 2000

- [4]

-

[5]

S. R. Arridge, M. M. Betcke, B. T. Cox, F. Lucka, and B. E. Treeby. On the adjoint operator in photoacoustic tomography.Inverse Problems, 32(11):115012, 2016

work page 2016

-

[6]

J. Barzilai and J. M. Borwein. Two-Point Step Size Gradient Methods.IMA Journal of Numerical Analysis, 8:141–148, 1988

work page 1988

-

[7]

H. H. Bauschke and P. L. Combettes.Convex analysis and monotone operator theory in Hilbert spaces. Springer, 2017

work page 2017

-

[8]

P. Beard. Biomedical photoacoustic imaging.Interface Focus, 1:602–631, 2011

work page 2011

-

[9]

Beck.First-Order Methods in Optimization

A. Beck.First-Order Methods in Optimization. MOS-SIAM Series on Optimization. SIAM, Mathematical Optimization Society, Philadelphia, 2017

work page 2017

-

[10]

Amir Beck and Marc Teboulle. Fast gradient-based algorithms for constrained total variation image denoising and deblurring problems.IEEE Trans. Image Process., 18(11):2419–2434, 2009

work page 2009

-

[11]

A fast iterative shrinkage-thresholding algorithm for linear inverse problems.SIAM J

Amir Beck and Marc Teboulle. A fast iterative shrinkage-thresholding algorithm for linear inverse problems.SIAM J. Imaging Sci., 2(1):183–202, 2009

work page 2009

-

[12]

Stephen Boyd, Neal Parikh, Eric Chu, Borja Peleato, and Jonathan Eckstein. Distributed op- timization and statistical learning via the alternating direction method of multipliers.Found. Trends Mach. Learn., 3(1):1–122, January 2011. 35

work page 2011

-

[13]

K. Bredies and M. Holler. A pointwise characterization of the subdifferential of the total variation functional, 2016

work page 2016

-

[14]

Sparsity of solutions for variational inverse problems with finite-dimensional data.Calc

Kristian Bredies and Marcello Carioni. Sparsity of solutions for variational inverse problems with finite-dimensional data.Calc. Var. Partial Differential Equations, 59(1):Paper No. 14, 26, 2020

work page 2020

-

[15]

Regularization of linear inverse problems with total general- ized variation.J

Kristian Bredies and Martin Holler. Regularization of linear inverse problems with total general- ized variation.J. Inverse Ill-Posed Probl., 22(6):871–913, 2014

work page 2014

-

[16]

Convergence rates of convex variational regularization.Inverse Problems, 20(5):1411–1421, 2004

Martin Burger and Stanley Osher. Convergence rates of convex variational regularization.Inverse Problems, 20(5):1411–1421, 2004

work page 2004

-

[17]

An algorithm for total variation minimization and applications.J

Antonin Chambolle. An algorithm for total variation minimization and applications.J. Math. Imaging Vision, 20(1-2):89–97, 2004. Special issue on mathematics and image analysis

work page 2004

-

[18]

An introduction to total variation for image analysis

Antonin Chambolle, Vicent Caselles, Daniel Cremers, Matteo Novaga, and Thomas Pock. An introduction to total variation for image analysis. InTheoretical foundations and numerical methods for sparse recovery, volume 9 ofRadon Ser. Comput. Appl. Math., pages 263–340. Walter de Gruyter, Berlin, 2010

work page 2010

-

[19]

Image recovery via total variation minimization and related problems.Numer

Antonin Chambolle and Pierre-Louis Lions. Image recovery via total variation minimization and related problems.Numer. Math., 76(2):167–188, 1997

work page 1997

-

[20]

A first-order primal-dual algorithm for convex problems with applications to imaging.J

Antonin Chambolle and Thomas Pock. A first-order primal-dual algorithm for convex problems with applications to imaging.J. Math. Imaging Vision, 40(1):120–145, 2011

work page 2011

-

[21]

Tony F. Chan and Selim Esedo¯ glu. Aspects of total variation regularizedL 1 function approxima- tion.SIAM J. Appl. Math., 65(5):1817–1837, 2005

work page 2005

-

[22]

Tony F. Chan, Gene H. Golub, and Pep Mulet. A nonlinear primal-dual method for total variation- based image restoration.SIAM J. Sci. Comput., 20(6):1964–1977, 1999

work page 1964

-

[23]

Giacomo Cristinelli, Jos´ e A. Iglesias, and Daniel Walter. Conditional gradients for total variation regularization with PDE constraints: a graph cuts approach.Comput. Optim. Appl., 93(1):209– 265, 2026

work page 2026

-

[24]

R. Ellwood, O. Ogunlade, E. Zhang, P. Beard, and B. Cox. Photoacoustic tomography using orthogonal Fabry-Perot sensors.Journal of Biomedical Optics, 22(4), 2017

work page 2017

-

[25]

H. W. Engl, M. Hanke, and A. Neubauer.Regularization of inverse problems.Dordrecht: Kluwer Academic Publishers, 1996

work page 1996

-

[26]

Jens Flemming.Generalized Tikhonov regularization and modern convergence rate theory in Ba- nach spaces. Berichte aus der Mathematik. Shaker Verlag, Aachen, Mrz 2012

work page 2012

-

[27]

A new approach to source conditions in regularization with general residual term.Numer

Jens Flemming and Bernd Hofmann. A new approach to source conditions in regularization with general residual term.Numer. Funct. Anal. Optim., 31(1-3):254–284, 2010

work page 2010

-

[28]

Jens Flemming and Bernd Hofmann. Convergence rates in constrained Tikhonov regulariza- tion: equivalence of projected source conditions and variational inequalities.Inverse Problems, 27(8):085001, 11, 2011

work page 2011

-

[29]

H. Gfrerer. On a globally convergent semismooth ∗ Newton method in nonsmooth nonconvex optimization.Comput. Optim. Appl., 91:67–124, 2025

work page 2025

-

[30]

H. Gfrerer, S. Hubmer, and R. Ramlau. On SCD Semismooth ∗ Newton methods for the effi- cient minimization of Tikhonov functionals with non-smooth and non-convex penalties.Inverse Problems, 41(7):075002, 2025. Gold OA

work page 2025

-

[31]

H. Gfrerer and J. V. Outrata. On a semismooth* Newton method for solving generalized equations. SIAM J. Optim., 31(1):489–517, 2021. 36

work page 2021

-

[32]

H. Gfrerer and J. V. Outrata. On (local) analysis of multifunctions via subspaces contained in graphs of generalized derivatives.J. Math. Anal. Appl., 508:125895: 1–37, 2022

work page 2022

-

[33]

Nonlocal operators with applications to image processing.Mul- tiscale Model

Guy Gilboa and Stanley Osher. Nonlocal operators with applications to image processing.Mul- tiscale Model. Simul., 7(3):1005–1028, 2008

work page 2008

-

[34]

The split Bregman method forL1-regularized problems.SIAM J

Tom Goldstein and Stanley Osher. The split Bregman method forL1-regularized problems.SIAM J. Imaging Sci., 2(2):323–343, 2009

work page 2009

-

[35]

Markus Grasmair. Generalized Bregman distances and convergence rates for non-convex regular- ization methods.Inverse Problems, 26(11):115014, 16, 2010

work page 2010

-

[36]

Markus Grasmair. Variational inequalities and higher order convergence rates for Tikhonov reg- ularisation on Banach spaces.J. Inverse Ill-Posed Probl., 21(3):379–394, 2013

work page 2013

-

[37]

K. H¨ am¨ al¨ ainen, L. Harhanen, A. Kallonen, A. Kujanp¨ a¨ a, E. Niemi, and S. Siltanen. Tomographic X-ray data of a walnut.arXiv preprint arXiv:1502.04064, 2015

-

[38]

P. C. Hansen and J. S. Jørgensen. AIR Tools II: algebraic iterative reconstruction methods, improved implementation.Numerical Algorithms, 79(1):107–137, 2018

work page 2018

-

[39]

Torsten Hein. Convergence rates for regularization of ill-posed problems in Banach spaces by approximate source conditions.Inverse Problems, 24(4):045007, 10, 2008

work page 2008

-

[40]

M. Hinterm¨ uller and K. Kunisch. Total bounded variation regularization as a bilaterally con- strained optimization problem.SIAM J. Appl. Math., 64(4):1311–1333, 2004

work page 2004

-

[41]

B. Hofmann, B. Kaltenbacher, C. P¨ oschl, and O. Scherzer. A convergence rates result for Tikhonov regularization in Banach spaces with non-smooth operators.Inverse Problems, 23(3):987–1010, 2007

work page 2007

-

[42]

Iglesias, Gwenael Mercier, and Otmar Scherzer

Jos´ e A. Iglesias, Gwenael Mercier, and Otmar Scherzer. A note on convergence of solutions of total variation regularized linear inverse problems.Inverse Problems, 34(5):055011, 28, 2018

work page 2018

-

[43]

S. Kindermann and S. Hubmer. Norms in sinogram space and stability estimates for the Radon transform.Inverse Problems, 41(2):025008, 2025

work page 2025

-

[44]

Convex Tikhonov regularization in Banach spaces: new results on conver- gence rates.J

Stefan Kindermann. Convex Tikhonov regularization in Banach spaces: new results on conver- gence rates.J. Inverse Ill-Posed Probl., 24(3):341–350, 2016

work page 2016

-

[45]

Stefan Kindermann, Stanley Osher, and Peter W. Jones. Deblurring and denoising of images by nonlocal functionals.Multiscale Model. Simul., 4(4):1091–1115, 2005

work page 2005

-

[46]

P. Kuchment and L. Kunyansky. Mathematics of thermoacoustic tomography.European Journal of Applied Mathematics, 19(2):191–224, 2008

work page 2008

- [47]

-

[48]

A. K. Louis.Inverse und schlecht gestellte Probleme. Teubner Studienb¨ ucher Mathematik. Vieweg+Teubner Verlag, 1989

work page 1989

-

[49]

American Mathematical Society, Providence, RI, 2001

Yves Meyer.Oscillating patterns in image processing and nonlinear evolution equations, volume 22 ofUniversity Lecture Series. American Mathematical Society, Providence, RI, 2001. The fifteenth Dean Jacqueline B. Lewis memorial lectures

work page 2001

-

[50]

J. Mueller and S. Siltanen.Linear and Nonlinear Inverse Problems with Practical Applications. Society for Industrial and Applied Mathematics, Philadelphia, PA, 2012

work page 2012

-

[51]

Natterer.The Mathematics of Computerized Tomography

F. Natterer.The Mathematics of Computerized Tomography. Society for Industrial and Applied Mathematics, Philadelphia, PA, 2001

work page 2001

- [52]

-

[53]

Andreas Neubauer. On enhanced convergence rates for Tikhonov regularization of nonlinear ill-posed problems in Banach spaces.Inverse Problems, 25(6):065009, 10, 2009

work page 2009

-

[54]

Modified Tikhonov regularization for nonlinear ill-posed problems in Banach spaces.J

Andreas Neubauer. Modified Tikhonov regularization for nonlinear ill-posed problems in Banach spaces.J. Integral Equations Appl., 22(2):341–351, 2010

work page 2010

-

[55]

Andreas Neubauer, Torsten Hein, Bernd Hofmann, Stefan Kindermann, and Ulrich Tautenhahn. Improved and extended results for enhanced convergence rates of Tikhonov regularization in Banach spaces.Appl. Anal., 89(11):1729–1743, 2010

work page 2010

- [56]

-

[57]

Regularization of ill-posed problems in Banach spaces: convergence rates

Elena Resmerita. Regularization of ill-posed problems in Banach spaces: convergence rates. Inverse Problems, 21(4):1303–1314, 2005

work page 2005

-

[58]

Elena Resmerita and Otmar Scherzer. Error estimates for non-quadratic regularization and the relation to enhancement.Inverse Problems, 22(3):801–814, 2006

work page 2006

-

[59]

S. M. Robinson. Some continuity properties of polyhedral multifunctions. In H. K¨ onig, B. Korte, and K. Ritter, editors,Mathematical Programming at Oberwolfach, volume 14 ofMathematical Programming Study, pages 206–214. Springer, Berlin, Heidelberg, 1981

work page 1981

-

[60]

R. T. Rockafellar.Convex Analysis. Princeton University Press, Princeton, 1970

work page 1970

-

[61]

R. T. Rockafellar and R. J. B. Wets.Variational Analysis. Grundlehren der mathematischen Wissenschaften. Springer Berlin Heidelberg, 2009

work page 2009

-

[62]

Rudin, Stanley Osher, and Emad Fatemi

Leonid I. Rudin, Stanley Osher, and Emad Fatemi. Nonlinear total variation based noise removal algorithms.Phys. D, 60(1-4):259–268, 1992

work page 1992

-

[63]

O. Scherzer, M. Grasmair, H. Grossauer, M. Haltmeier, and F. Lenzen.Variational Methods in Imaging. Applied Mathematical Sciences. Springer New York, 2008

work page 2008

-

[64]

B. E. Treeby and B. T. Cox. k-Wave: MATLAB toolbox for the simulation and reconstruction of photoacoustic wave fields.J Biomed Opt, 15(2):021314, 2010

work page 2010

-

[65]

W. van Aarle, W. J. Palenstijn, J. Cant, E. Janssens, F. Bleichrodt, A. Dabravolski, J. D. Been- houwer, K. J. Batenburg, and J. Sijbers. Fast and flexible x-ray tomography using the astra toolbox.Opt. Express, 24(22):25129–25147, 2016

work page 2016

-

[66]

K. Wang and M. A. Anastasio. Photoacoustic and thermoacoustic tomography: Image formation principles. InHandbook of Mathematical Methods in Imaging, pages 781–815. Springer New York, New York, NY, 2011

work page 2011

-

[67]

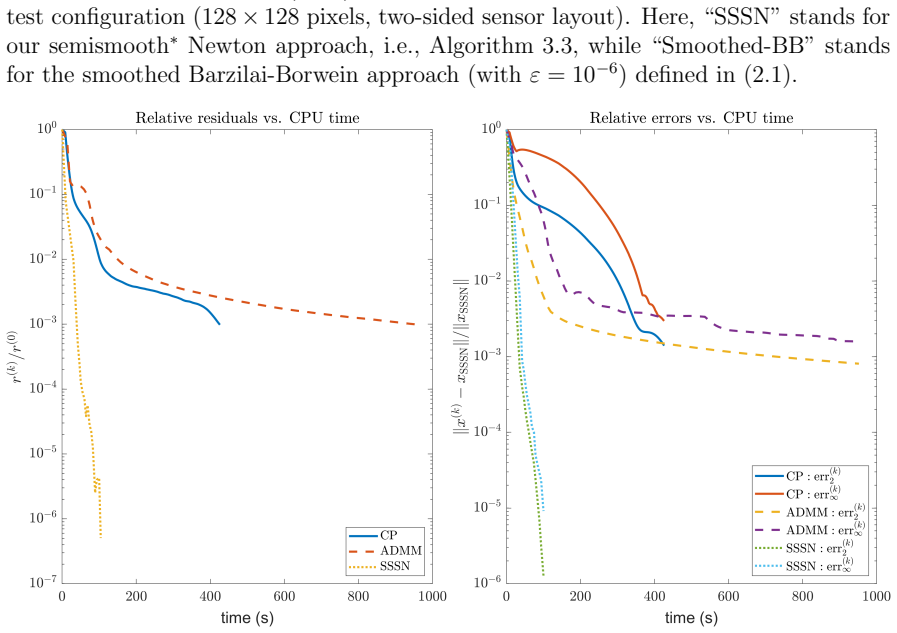

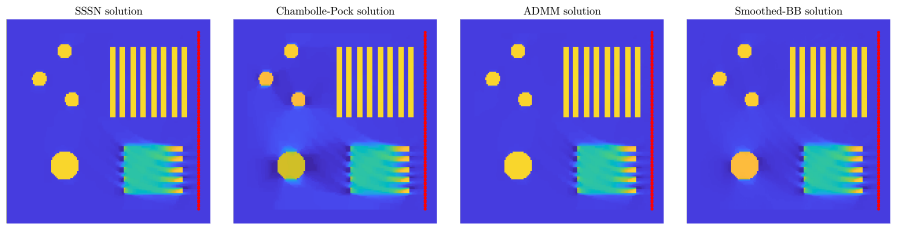

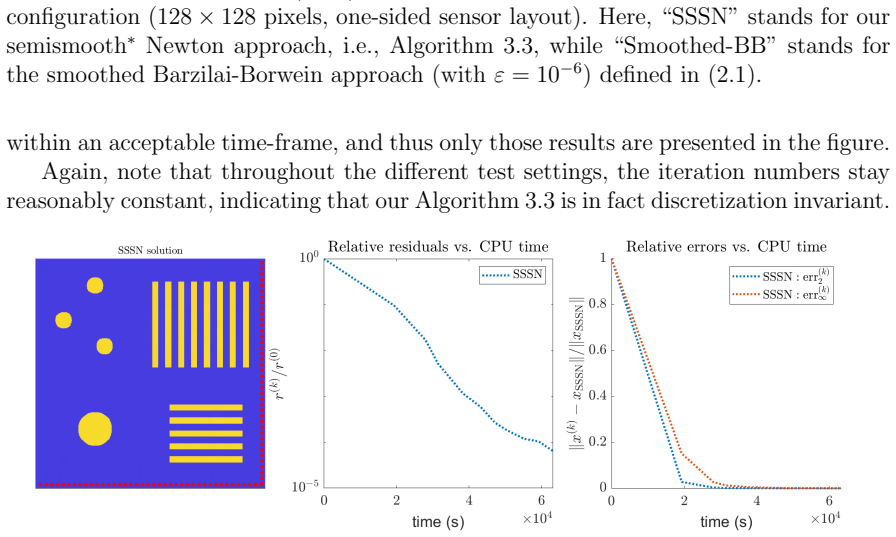

SSSN” stands for our semismooth ∗ Newton approach, i.e., Algorithm 3.3, and “CP

E. Zhang, J. Laufer, and P. Beard. Backward-mode multiwavelength photoacoustic scanner using a planar Fabry-Perot polymer film ultrasound sensor for high-resolution three-dimensional imaging of biological tissues.Appl. Opt., 47(4):561–577, 2008. A Supplemental figures of numerical results 38 Figure A.1: Test setting I (CT): Comparison of relative residual...

work page 2008

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.