Recognition: 2 theorem links

· Lean TheoremMemory Constrained Adversarial Hypothesis Testing

Pith reviewed 2026-05-13 04:45 UTC · model grok-4.3

The pith

Memory constraints in adversarial hypothesis testing lead to error bounds with identical exponential scaling in S.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The central discovery is that when the hypothesis tester is restricted to a time-invariant randomized finite-state machine with S states, and the adversary selects distributions from hypothesis-specific sets with knowledge of past observations and machine states, the minimax asymptotic error probability admits upper and lower bounds that exhibit identical exponential scaling in S and are tight for particular problem instances.

What carries the argument

A time-invariant randomized finite state machine with S states that processes samples sequentially to decide between two hypotheses, while the adversary adapts distribution choices based on the FSM's state history.

If this is right

- The asymptotic error probability improves exponentially as the number of states S increases.

- The derived bounds coincide exactly for a class of hypothesis testing problems.

- This result characterizes the fundamental performance loss due to memory limits in the presence of an adaptive adversary.

- For large S the guaranteed error rate approaches zero at a rate determined by the shared exponential term.

Where Pith is reading between the lines

- The same style of analysis might apply to hypothesis testing under other sequential constraints such as limited per-step computation.

- Numerical simulations for small fixed S and simple distribution families could check whether observed error rates follow the predicted scaling.

- The bounds may inform the design of streaming detectors in settings like network security where memory is scarce and attackers can adapt.

Load-bearing premise

The tester is forced to use a fixed, time-invariant randomized finite state machine with a limited number of states S.

What would settle it

A concrete counterexample consisting of two sets of distributions where the exponential rate of the minimax asymptotic error probability differs between the paper's upper and lower bounds, or where the bounds fail to match for the stated class of problems.

Figures

read the original abstract

We study adversarial binary hypothesis testing under memory constraints. The test is a time-invariant randomized finite state machine (FSM) with S states. Associated with each hypothesis is a set of distributions. Given the hypothesis, the distribution of each sample is chosen from the set associated with the hypothesis by an adversary who has access to past samples and the history of states of the FSM so far. We obtain upper and lower bounds on the minimax asymptotic probability of error as a function of S. The bounds have the same exponential behaviour in S and match for a class of problems.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper studies adversarial binary hypothesis testing in which the tester is a time-invariant randomized finite-state machine (FSM) with S states. Each hypothesis is associated with a set of distributions; at each step an adversary, who observes all past samples and the current FSM state history, selects the distribution from the appropriate set. The authors derive upper and lower bounds on the minimax asymptotic probability of error that exhibit identical exponential dependence on S and coincide exactly for a specified class of problems.

Significance. If the central claims hold, the work supplies a precise characterization of how finite memory constrains performance against an adaptive adversary in hypothesis testing. The matching exponential-in-S bounds constitute a clean information-theoretic result that quantifies the memory-error trade-off under worst-case adaptive attacks. Such results are useful for resource-limited detection systems in security applications and extend classical minimax hypothesis testing to explicitly memory-constrained, non-stationary adversarial models.

major comments (1)

- [Definition of asymptotic error probability and main theorem statement] The central claim concerns bounds on the 'minimax asymptotic probability of error' (abstract and main-result section). Because the adversary controls the distribution at each step while observing the FSM state, the controlled Markov chain need not be ergodic and Pe(n) need not converge; the manuscript must therefore state explicitly whether the bounds are on lim Pe(n), limsup Pe(n), or the exponential rate, and must supply conditions guaranteeing that the limit exists for the claimed matching to hold.

minor comments (2)

- [Model section] Notation for the FSM transition kernel and the adversary's policy should be introduced once in a dedicated model section rather than piecemeal.

- [Main results] The precise class of problems for which the upper and lower bounds coincide should be stated explicitly (e.g., via a theorem or corollary) rather than left as 'a class of problems'.

Simulated Author's Rebuttal

We thank the referee for the thoughtful review and for highlighting the need for greater precision in the definition of the asymptotic error probability. We address the major comment below and will incorporate the necessary clarifications in the revised manuscript.

read point-by-point responses

-

Referee: The central claim concerns bounds on the 'minimax asymptotic probability of error' (abstract and main-result section). Because the adversary controls the distribution at each step while observing the FSM state, the controlled Markov chain need not be ergodic and Pe(n) need not converge; the manuscript must therefore state explicitly whether the bounds are on lim Pe(n), limsup Pe(n), or the exponential rate, and must supply conditions guaranteeing that the limit exists for the claimed matching to hold.

Authors: We agree that explicit clarification is required. Our bounds are formulated for the lim sup_{n→∞} of the minimax error probability Pe(n), which is the natural quantity that remains well-defined even when the adaptive adversary renders the controlled process non-ergodic. The exponential dependence on S is obtained via this lim-sup characterization. We will revise the abstract, the introduction, and the statement of the main theorem to state this definition explicitly. We will also add a remark identifying sufficient conditions (e.g., when the adversary’s choice sets admit a common ergodic measure or when the FSM transition structure forces eventual mixing) under which the ordinary limit lim Pe(n) exists and the upper and lower bounds coincide exactly for the indicated class of problems. These additions do not alter the technical results but improve the rigor of the presentation. revision: yes

Circularity Check

No circularity: bounds derived from FSM-adversary model without self-referential reduction

full rationale

The paper analyzes minimax asymptotic error probability for a time-invariant randomized FSM tester against an adaptive adversary with full history access. Upper and lower bounds are obtained by examining the controlled Markov chain evolution under worst-case distribution choices from each hypothesis set. No step reduces a claimed result to a fitted input renamed as prediction, a self-citation chain, or a definitional tautology; the exponential matching in S for certain problem classes emerges from the state-transition analysis rather than being presupposed. The derivation remains self-contained against the stated model assumptions.

Axiom & Free-Parameter Ledger

axioms (1)

- standard math Standard assumptions of binary hypothesis testing and finite-state automata

Lean theorems connected to this paper

-

IndisputableMonolith/Cost/FunctionalEquation.leanwashburn_uniqueness_aczel; dAlembert_to_ODE_general echoes?

echoesECHOES: this paper passage has the same mathematical shape or conceptual pattern as the Recognition theorem, but is not a direct formal dependency.

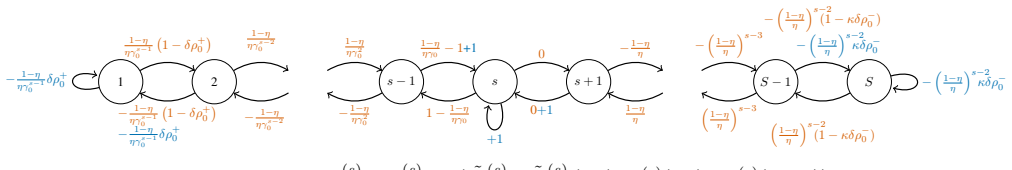

γ := sup_{f+,f-} min_{p∈P0,q∈P1} E_q[f+(X)] E_p[f-(X)] / (E_q[f-(X)] E_p[f+(X)]) (Eq. 10); P*_e(S) = 1/(1 + √(C γ) S^{-2}) (Thm 1); Γ = ½ log γ (Cor 1)

-

IndisputableMonolith/Foundation/ArithmeticFromLogic.leanembed_strictMono_of_one_lt; LogicNat recovery refines?

refinesRelation between the paper passage and the cited Recognition theorem.

Hellman-Cover saturable counter as birth-and-death chain on S states; martingale M_n = sum 1_{R_i=1} - κ C_0 γ^{S-2} 1_{R_i=S} (Claim 1)

What do these tags mean?

- matches

- The paper's claim is directly supported by a theorem in the formal canon.

- supports

- The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.

- extends

- The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.

- uses

- The paper appears to rely on the theorem as machinery.

- contradicts

- The paper's claim conflicts with a theorem or certificate in the canon.

- unclear

- Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.

Reference graph

Works this paper leans on

-

[1]

A robust version of the probability ratio test,

P. J. Huber, “A robust version of the probability ratio test,”Ann. Math. Statist., pp. 1753–1758, 1965

work page 1965

-

[2]

Minimax tests and the Neyman-Pearson lemma for capacities,

P. J. Huber and V . Strassen, “Minimax tests and the Neyman-Pearson lemma for capacities,”The Annals of Statistics, pp. 251–263, 1973

work page 1973

-

[3]

Robust hypothesis testing with a relative entropy tolerance,

B. C. Levy, “Robust hypothesis testing with a relative entropy tolerance,”IEEE Transactions on Information Theory, vol. 55, no. 1, pp. 413–421, 2009

work page 2009

-

[4]

Hypothesis testing for arbitrarily varying source,

F. Fang-Wei and S. Shi-Yi, “Hypothesis testing for arbitrarily varying source,”Acta Mathematica Sinica, vol. 12, no. 1, pp. 33–39, 1996

work page 1996

-

[5]

Hypothesis testing for arbitrarily varying source with exponential-type constraint,

——, “Hypothesis testing for arbitrarily varying source with exponential-type constraint,”IEEE Transactions on Information The- ory, vol. 44, no. 2, pp. 892–895, 1998

work page 1998

-

[6]

Adversarial hypothesis testing and a quantum Stein’s lemma for restricted mea- surements,

F. G. Brand ˜ao, A. W. Harrow, J. R. Lee, and Y . Peres, “Adversarial hypothesis testing and a quantum Stein’s lemma for restricted mea- surements,”IEEE Transactions on Information Theory, vol. 66, no. 8, pp. 5037–5054, 2020

work page 2020

-

[7]

On the adversarial robustness of hypothesis testing,

Y . Jin and L. Lai, “On the adversarial robustness of hypothesis testing,”IEEE Transactions on Signal Processing, vol. 69, pp. 515– 530, 2021

work page 2021

-

[8]

Generalized likelihood ratio test for adversarially robust hypothesis testing,

B. Puranik, U. Madhow, and R. Pedarsani, “Generalized likelihood ratio test for adversarially robust hypothesis testing,”IEEE Transac- tions on Signal Processing, vol. 70, pp. 4124–4139, 2022

work page 2022

-

[9]

Asymptotic Nash equilibrium for the M-ary sequential adversarial hypothesis testing game,

J. Pan, Y . Li, and V . Y . F. Tan, “Asymptotic Nash equilibrium for the M-ary sequential adversarial hypothesis testing game,”IEEE Transactions on Information Forensics and Security, vol. 18, pp. 831– 845, 2023

work page 2023

-

[10]

Error exponents for robust hypothesis testing with abstention,

M. Managoli, K. R. Sahasranand, and V . M. Prabhakaran, “Error exponents for robust hypothesis testing with abstention,” in2025 IEEE International Symposium on Information Theory (ISIT), 2025, arXiv: 2501.12938

-

[11]

I. Diakonikolas and D. M. Kane,Algorithmic High-Dimensional Robust Statistics. Cambridge University Press, 2023

work page 2023

-

[12]

Hypothesis testing with finite statistics,

T. M. Cover, “Hypothesis testing with finite statistics,”The Annals of Mathematical Statistics, vol. 40, no. 3, pp. 828–835, 1969

work page 1969

-

[13]

Necessary and sufficient memory size for M- hypothesis testing,

J. Koplowitz, “Necessary and sufficient memory size for M- hypothesis testing,”IEEE Transactions on Information Theory, vol. 21, no. 1, pp. 44–46, 1975

work page 1975

-

[14]

M. E. Hellman and T. M. Cover, “Learning with finite memory,” The Annals of Mathematical Statistics, vol. 41, no. 3, pp. 765 – 782, 1970

work page 1970

-

[15]

Binary hypothesis testing with deterministic finite-memory decision rules,

T. Berg, O. Ordentlich, and O. Shayevitz, “Binary hypothesis testing with deterministic finite-memory decision rules,” in2020 IEEE International Symposium on Information Theory (ISIT), 2020, pp. 1259–1264

work page 2020

-

[16]

Statistical inference with limited memory: A survey,

——, “Statistical inference with limited memory: A survey,”IEEE Journal on Selected Areas in Information Theory, vol. 5, pp. 623– 644, 2024

work page 2024

-

[17]

M. Sion, “On general minimax theorems.”Pacific Journal of Math- ematics, vol. 8, no. 1, pp. 171–176, 1958. APPENDIXA MISSING PROOFS FROMSECTIONIII Proof of Corollary 1.Firstly, (13) impliesΓ≤ 1 2 logγ. Furthermore, on the one hand, whenC >0, (14) implies Γ≥ 1 2 logγ. On the other hand, whenC= 0,Γ≥ 1 2 logγis vacuously true sinceC= 0 =⇒γ= 0. To see this, n...

work page 1958

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.