Autonomy and Agency in Agentic AI: Architectural Tactics for Regulated Contexts

Pith reviewed 2026-05-13 04:28 UTC · model grok-4.3

The pith

A two-dimensional design space with five levels each for agency and autonomy, plus six tactics, makes compliance an explicit part of agentic AI design rather than a retrofit.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

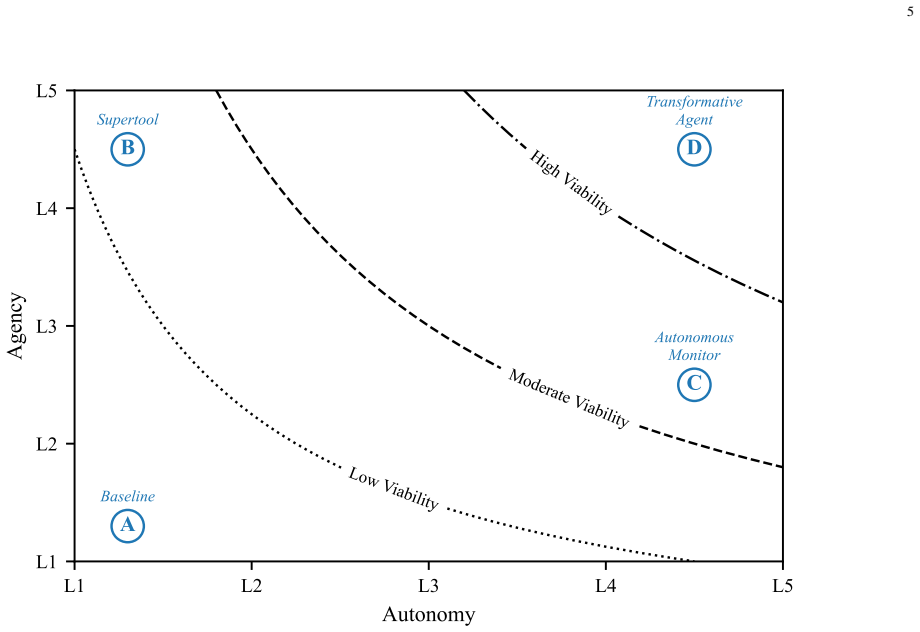

The central claim is that a two-dimensional design space, with five operational levels each for autonomy and agency, makes the necessary coupling between the two explicit. Six architectural tactics grounded in compliance constraints then allow principled navigation of the space, while five independent deployment parameters determine which configurations are achievable.

What carries the argument

The two-dimensional design space that places autonomy on one axis (L1 human-commanded to L5 fully autonomous monitoring) and agency on the other (L1 reasoning over supplied context to L5 committed writes to authoritative records), together with the six tactics that adjust a deployment's position within it.
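One way to make the design space concrete is to model each axis as an ordered enumeration and a deployment as a point on the resulting grid. The sketch below is illustrative only: the names `Autonomy`, `Agency`, `Position`, and `all_positions` are hypothetical, and only the L1 and L5 descriptions are taken from the abstract. The intermediate levels are left unlabeled because this summary does not describe them.

```python
from dataclasses import dataclass
from enum import IntEnum


class Autonomy(IntEnum):
    """Autonomy axis: how much the system acts without human involvement."""
    L1 = 1  # human-commanded operation (from the abstract)
    L2 = 2  # intermediate level, not described in this summary
    L3 = 3  # intermediate level, not described in this summary
    L4 = 4  # intermediate level, not described in this summary
    L5 = 5  # fully autonomous monitoring (from the abstract)


class Agency(IntEnum):
    """Agency axis: what the system can do."""
    L1 = 1  # reasoning over supplied context (from the abstract)
    L2 = 2  # intermediate level, not described in this summary
    L3 = 3  # intermediate level, not described in this summary
    L4 = 4  # intermediate level, not described in this summary
    L5 = 5  # committed writes to authoritative records (from the abstract)


@dataclass(frozen=True)
class Position:
    """A deployment's position in the two-dimensional design space."""
    autonomy: Autonomy
    agency: Agency


def all_positions() -> list[Position]:
    """Enumerate every cell of the five-by-five grid."""
    return [Position(a, g) for a in Autonomy for g in Agency]


if __name__ == "__main__":
    grid = all_positions()
    print(len(grid), "positions in the design space")
```

Using `IntEnum` keeps the levels ordered, so comparisons such as `Autonomy.L4 > Autonomy.L2` work directly when reasoning about moves within the space.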

If this is right

- At higher autonomy levels, agency must be constrained so that human error correction remains available.

- Compliance mandates for human involvement can be met by design choices rather than added after deployment.

- Oversight, action consequences, and error correction become jointly addressable through explicit positioning in the space.

- The five deployment parameters must be evaluated separately to determine whether a desired level pair is feasible.

- Public-sector deployments can use the tactics to satisfy realistic compliance constraints while retaining useful capability.
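The first and fourth implications above can be sketched as a simple feasibility check: a policy caps the agency level allowed at each autonomy level, and a candidate level pair is tested against it. The cap values in `EXAMPLE_AGENCY_CAP` are placeholders chosen purely for illustration; the paper summary does not give numeric caps, and a real policy would come from the applicable compliance requirements. The function names are likewise hypothetical.

```python
# Hypothetical policy: highest agency level permitted at each autonomy level.
# These numbers are illustrative assumptions, not values from the paper.
EXAMPLE_AGENCY_CAP = {1: 5, 2: 4, 3: 3, 4: 2, 5: 1}


def is_permitted(autonomy: int, agency: int, cap: dict[int, int] = EXAMPLE_AGENCY_CAP) -> bool:
    """Return True if the (autonomy, agency) pair respects the cap."""
    if autonomy not in cap:
        raise ValueError(f"unknown autonomy level: {autonomy}")
    if not 1 <= agency <= 5:
        raise ValueError(f"unknown agency level: {agency}")
    return agency <= cap[autonomy]


def permitted_positions(cap: dict[int, int] = EXAMPLE_AGENCY_CAP) -> list[tuple[int, int]]:
    """List every (autonomy, agency) pair allowed under the cap."""
    return [
        (autonomy, agency)
        for autonomy in sorted(cap)
        for agency in range(1, 6)
        if agency <= cap[autonomy]
    ]


if __name__ == "__main__":
    print(is_permitted(autonomy=1, agency=5))  # human-commanded, full write access
    print(is_permitted(autonomy=5, agency=5))  # fully autonomous, full write access
    print(len(permitted_positions()), "permitted level pairs")
```

A check like this only encodes the coupling between the two axes. Whether a permitted pair is actually achievable would still depend on the deployment parameters, which are evaluated separately.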

Where Pith is reading between the lines

- The same space and tactics could be tested in healthcare or financial regulation to check whether the five levels still align with domain-specific rules.

- Quantitative risk metrics could be attached to each level pair to turn qualitative navigation into measurable trade-off analysis.

- The framework could be combined with existing audit logging standards to produce automated compliance reports at each configuration.

- Simulation environments could be used to verify that the tactics preserve required reversibility before live deployment.

Load-bearing premise

The five-level structures for each dimension and the six listed tactics are sufficient to capture the necessary constraints and trade-offs under realistic regulatory requirements.

What would settle it

A concrete regulatory scenario or compliance requirement arising in a regulated context that cannot be satisfied by any placement within the five-by-five space or any combination of the six tactics.

Original abstract

Deploying agentic AI in regulated contexts requires principled reasoning about two design dimensions: agency (what the system can do) and autonomy (how much it acts without human involvement). Though often treated independently, they are coupled: at higher autonomy, human error correction is less available, so reliable operation requires constraining agency accordingly; compliance requirements reinforce this by mandating human involvement as action consequences grow. Yet no established approach addresses them jointly, leaving practitioners without a principled basis for reasoning about oversight, action consequences, and error correction. This work introduces a two-dimensional design space in which both dimensions are organised into five operational levels, making the coupling explicit and navigable. Autonomy ranges from human-commanded operation (L1) to fully autonomous monitoring (L5); agency ranges from reasoning over supplied context (L1) to committed writes to authoritative records (L5). Building on this space, we propose six architectural tactics (checkpoints, escalation, multi-agent delegation, tool provisioning, tool fencing, and write staging) for adjusting a deployment's position within it. The tactics are grounded in two worked examples from public-sector contexts, illustrating how they apply under realistic compliance constraints. We further examine five deployment parameters (model capability, agent architecture, tool fidelity, workflow bottlenecks, and evaluation) that shape what is achievable at any configuration independently of agency and autonomy. Together, the design space, tactics, and deployment parameters provide a shared vocabulary for principled, compliance-aware agentic AI design in which responsibility, auditability, and reversibility are explicit design considerations rather than properties that must be retrofitted after deployment.
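To illustrate how two of the named tactics might combine, the sketch below pairs write staging with a human checkpoint: the agent's proposed writes are held in a staging area, and only writes a reviewer has approved reach the authoritative records. This is one plausible reading of the tactic names, not the paper's definition, which this summary does not reproduce. `StagedWrite`, `StagedStore`, and the example keys are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class StagedWrite:
    """A write proposed by the agent, pending human review."""
    key: str
    value: str
    approved: bool = False


@dataclass
class StagedStore:
    """Authoritative records plus a staging area (illustrative assumption)."""
    records: dict[str, str] = field(default_factory=dict)
    staged: list[StagedWrite] = field(default_factory=list)

    def stage(self, key: str, value: str) -> StagedWrite:
        """Agent side: propose a write without touching the records."""
        write = StagedWrite(key, value)
        self.staged.append(write)
        return write

    def approve(self, write: StagedWrite) -> None:
        """Human checkpoint: mark a staged write as reviewed and accepted."""
        write.approved = True

    def commit(self) -> int:
        """Apply approved writes; unapproved ones stay staged. Returns the count applied."""
        approved = [w for w in self.staged if w.approved]
        for w in approved:
            self.records[w.key] = w.value
        self.staged = [w for w in self.staged if not w.approved]
        return len(approved)


if __name__ == "__main__":
    store = StagedStore()
    first = store.stage("case-001", "status=granted")
    store.stage("case-002", "status=denied")
    store.approve(first)  # reviewer accepts only the first proposal
    print(store.commit(), "write(s) committed")
    print(store.records)
    print(len(store.staged), "write(s) still awaiting review")
```

Keeping unapproved writes in the staging list, rather than discarding them, preserves reversibility: nothing reaches the authoritative records until a human has acted on it.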

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper claims that agentic AI deployments in regulated contexts require jointly reasoning about agency (what the system can do) and autonomy (how much it acts without human involvement), which are coupled because higher autonomy reduces opportunities for human error correction and compliance often mandates human involvement for higher-consequence actions. It introduces a two-dimensional design space with five operational levels for each dimension (autonomy: L1 human-commanded to L5 fully autonomous monitoring; agency: L1 reasoning over supplied context to L5 committed writes to authoritative records), proposes six architectural tactics (checkpoints, escalation, multi-agent delegation, tool provisioning, tool fencing, write staging) for navigating positions in the space, illustrates them via two public-sector worked examples, and identifies five deployment parameters (model capability, agent architecture, tool fidelity, workflow bottlenecks, evaluation) that shape achievable configurations. The central claim is that these elements together supply a shared vocabulary making responsibility, auditability, and reversibility explicit design considerations.

Significance. If the modest claim holds, the work supplies a timely, constructive shared vocabulary for compliance-aware agentic AI design that makes the autonomy-agency coupling explicit and navigable for practitioners. The clear definitions, motivating examples, and grounding in realistic public-sector constraints are strengths; the proposal is internally consistent and avoids overclaiming exhaustiveness or optimality. No machine-checked proofs or empirical validation are provided, which is proportionate to the conceptual scope.

minor comments (3)

- §3 (design space): the five-level discretization is presented as sufficient for navigability, but a brief justification or sensitivity discussion would help readers assess whether coarser or finer granularity might be needed for certain regulatory regimes.

- §4 (tactics): while the six tactics are well-motivated, a compact summary table mapping each tactic to its primary effects on autonomy and agency levels (and to the deployment parameters) would improve scannability and reduce repetition across the worked examples.

- §5 (deployment parameters): the interaction between 'evaluation' and the other parameters is described qualitatively; adding one concrete example of how an evaluation metric would shift a configuration from L3 to L4 would make the parameter more actionable.

Simulated Author's Rebuttal

We thank the referee for their positive and accurate summary of the manuscript, which correctly identifies the core contribution as a two-dimensional design space for agency and autonomy together with six architectural tactics. The recommendation for minor revision is noted with appreciation.

Circularity Check

No significant circularity: constructive conceptual framework

The paper advances a two-dimensional design space (autonomy and agency, each with five levels) plus six named architectural tactics and five deployment parameters, illustrated via public-sector examples. Its central claim is only that these elements supply a shared vocabulary making responsibility, auditability, and reversibility explicit design choices. No equations, fitted parameters, self-referential definitions, or load-bearing self-citations appear; the proposal is presented as a constructive navigational tool rather than a derivation that reduces to its inputs by construction. The modest claim remains self-contained against external benchmarks.

Axiom & Free-Parameter Ledger

axioms (2)

- Domain assumption: agency and autonomy are coupled such that higher autonomy requires correspondingly constrained agency to maintain reliable and compliant operation.

- Ad hoc to paper: five operational levels per dimension are sufficient to make the design space navigable for practitioners.

invented entities (2)

- Two-dimensional design space with L1-L5 autonomy and L1-L5 agency levels (no independent evidence)

- Six architectural tactics: checkpoints, escalation, multi-agent delegation, tool provisioning, tool fencing, write staging (no independent evidence)
