From Image Hashing to Scene Change Detection

Pith reviewed 2026-05-13 06:14 UTC · model grok-4.3

The pith

HashSCD turns patch-wise hashing into scene change detection and localization using XOR aggregation in Hamming space.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

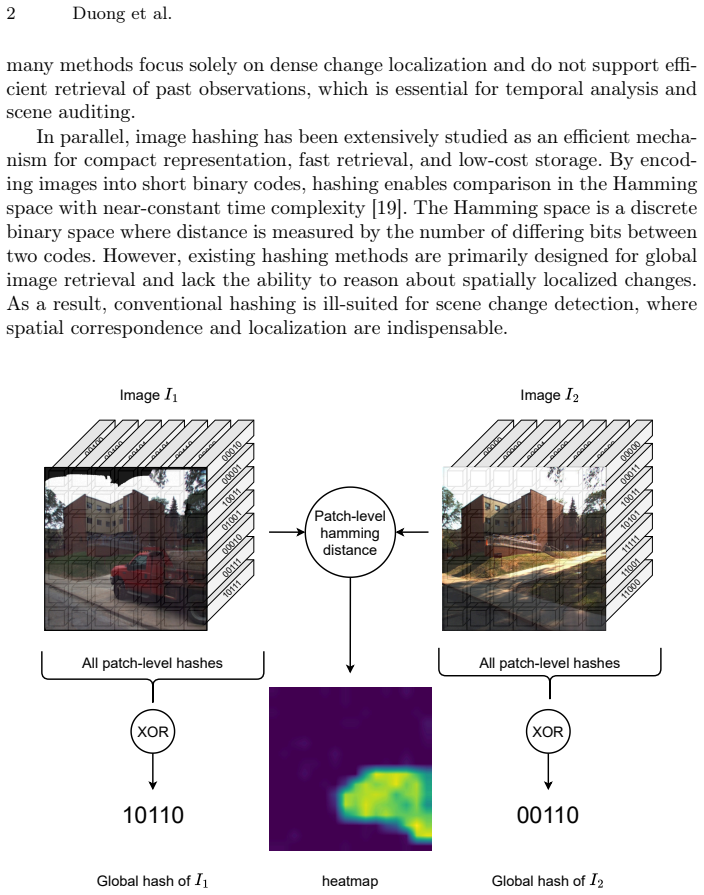

HashSCD encodes spatially aligned patches from an image into compact hash codes with a network trained by unsupervised contrastive learning at patch and global levels. These codes are aggregated by an XOR-like operation so that both the presence and the location of changes can be read directly from Hamming distances, without repeated inference on previous images.

What carries the argument

Patch-wise hash encoding followed by XOR-like aggregation that produces a change map directly in Hamming space.
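As a rough illustration of how a change map can fall out of Hamming-space arithmetic, the sketch below XORs two aligned grids of patch codes and counts the differing bits per patch. It is not the paper's implementation: the paper only describes an "XOR-like" operation, so the plain bitwise XOR, the 8-bit codes, the 2x2 grid, the thresholding rule, and the names `hamming_change_map`, `change_mask`, and `threshold` are all assumptions made for illustration.

```python
from typing import List

Grid = List[List[int]]  # each int holds one patch's hash code as a bit string


def hamming_change_map(prev: Grid, curr: Grid) -> Grid:
    """Per-patch Hamming distance between two aligned grids of hash codes."""
    return [
        [bin(p ^ c).count("1") for p, c in zip(prev_row, curr_row)]
        for prev_row, curr_row in zip(prev, curr)
    ]


def change_mask(dist_map: Grid, threshold: int) -> List[List[bool]]:
    """Mark a patch as changed when its distance exceeds the threshold.

    The thresholding rule is a hypothetical choice, not taken from the paper.
    """
    return [[d > threshold for d in row] for row in dist_map]


# Hypothetical 2x2 grids of 8-bit patch codes for a stored and a new image.
prev_codes = [[0b10110010, 0b01100110],
              [0b11110000, 0b00001111]]
curr_codes = [[0b10110010, 0b01100111],   # top-right differs in 1 bit
              [0b00001111, 0b00001111]]   # bottom-left differs in all 8 bits

dist_map = hamming_change_map(prev_codes, curr_codes)   # local evidence
mask = change_mask(dist_map, threshold=2)               # localization
global_score = sum(sum(row) for row in dist_map)        # image-level evidence
```

Only the stored codes of the earlier image are needed here, which is the property the summary above attributes to HashSCD; how the real method aggregates codes and sets its decision rule would have to be checked against the paper.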

If this is right

- Change detection runs using only stored hash codes instead of full previous images.

- Localization of changed regions occurs without extra model passes or heavy post-processing.

- Both storage footprint and inference cost drop compared with methods that store or re-process full images.

- No labeled change pairs are required because training relies on contrastive learning alone.

- Global and local decisions share the same compact representation.
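To make the storage point concrete, here is a back-of-the-envelope calculation. Every number in it is an assumption chosen for illustration (a 224x224 RGB image, non-overlapping 16x16 patches, 64-bit codes per patch); none is reported in the abstract, and the helper names are hypothetical.

```python
def raw_image_bytes(height: int, width: int, channels: int = 3) -> int:
    """Bytes needed for an uncompressed 8-bit-per-channel image."""
    return height * width * channels


def hash_grid_bytes(height: int, width: int, patch: int, bits_per_patch: int) -> int:
    """Bytes needed to store one hash code per non-overlapping patch."""
    n_patches = (height // patch) * (width // patch)
    return n_patches * bits_per_patch // 8


# Assumed sizes, for illustration only.
image_bytes = raw_image_bytes(224, 224)           # 224 * 224 * 3
code_bytes = hash_grid_bytes(224, 224, 16, 64)    # 14 * 14 patches, 8 bytes each
ratio = image_bytes / code_bytes
```

Under these assumptions the codes take 1,568 bytes against 150,528 bytes for the raw image, a 96x reduction. A compressed image or a stored feature map would shift the ratio, so the comparison that matters is the one the paper measures against its own baselines.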

Where Pith is reading between the lines

- The same patch-hash-plus-XOR pattern could be tested on video streams to track changes over time with minimal memory.

- If the aggregation step generalizes, hashing might become usable for other tasks that need spatial output such as anomaly localization.

- Replacing the contrastive objective with a different unsupervised signal could be checked to see whether localization quality improves.

- Deployment on edge devices becomes more feasible once only hash codes need to be transmitted or stored.

Load-bearing premise

Aggregating patch hash codes with an XOR-like operation still keeps enough spatial detail to localize changes accurately.

What would settle it

On a standard scene-change benchmark, measure whether HashSCD's change-localization F1 score falls substantially below pixel-level or feature-based unsupervised baselines while the claimed storage and compute reductions are verified.
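For reference, the localization F1 mentioned above can be computed from a predicted and a ground-truth change mask as in the sketch below. It uses the standard precision/recall/F1 definitions; the function name `precision_recall_f1` and the toy masks are hypothetical, and whether the paper scores at patch or pixel resolution is not stated in the abstract.

```python
from typing import List, Tuple

Mask = List[List[bool]]


def precision_recall_f1(pred: Mask, truth: Mask) -> Tuple[float, float, float]:
    """Precision, recall, and F1 for the 'changed' class of two aligned masks."""
    tp = fp = fn = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            if p and t:
                tp += 1          # changed, and predicted changed
            elif p and not t:
                fp += 1          # predicted changed, actually unchanged
            elif t and not p:
                fn += 1          # missed change
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Toy masks: one hit, one false alarm, one miss.
pred = [[True, True, False],
        [False, False, False]]
truth = [[True, False, False],
         [True, False, False]]

precision, recall, f1 = precision_recall_f1(pred, truth)
```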

Original abstract

Image hashing provides compact representations for efficient storage and retrieval but is inherently limited to global comparison and cannot reason about where changes occur. This limitation prevents hashing from being directly applicable to scene change detection, where spatial localization is essential. In this work, we revisit hashing from a scene change detection perspective and propose HashSCD, a patch-wise hashing framework that enables both efficient global change detection and localized change identification. HashSCD encodes spatially aligned patches into compact hash codes and aggregates them through an XOR-like operation, allowing change detection and localization to be performed directly in the Hamming space without repeated inference on previous images. The model is trained in an unsupervised manner using contrastive learning at both patch and global levels. Experiments demonstrate that HashSCD achieves competitive performance compared to state-of-the-art unsupervised hashing and scene change detection methods, while significantly reducing computational cost and storage requirements.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces HashSCD, a patch-wise hashing framework for scene change detection. It encodes spatially aligned image patches into compact hash codes, aggregates them via an XOR-like operation to perform both global change detection and localization directly in Hamming space without repeated full-image inference on prior frames, and trains the model unsupervised using contrastive learning at patch and global levels. The central claim is that HashSCD matches the performance of state-of-the-art unsupervised hashing and scene change detection methods while substantially lowering computational cost and storage requirements.

Significance. If the experimental claims hold, the work offers a practical bridge between compact hashing representations and spatially aware change detection. The efficiency gains from Hamming-space differencing and avoidance of repeated inference could be valuable for large-scale surveillance or archival monitoring tasks where storage and compute budgets are constrained. The unsupervised dual-level contrastive training removes the need for labeled change data, which is a common bottleneck, and the approach appears internally consistent without circular definitions.

major comments (1)

- Abstract: the assertion of 'competitive performance' and 'significantly reducing computational cost' is load-bearing for the central claim yet supplies no quantitative metrics, baselines, datasets, or error analysis, preventing verification of whether the data actually support the stated advantages.

minor comments (1)

- The phrase 'XOR-like operation' for aggregation is used without an explicit equation or pseudocode; a precise definition (e.g., bit-wise XOR followed by a distance metric) would improve reproducibility.
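One way such a definition could be written is sketched below. It is an assumption offered to show the level of precision the comment asks for, not the paper's actual formulation; the symbols $b_i$, $K$, $N$, $d_i$, $\tau$, and $D$ are introduced here for illustration.

```latex
% Assumed notation: b_i^{(t)} \in \{0,1\}^K is the K-bit code of patch i at time t,
% and N is the number of patches per image.
\begin{align}
  d_i &= \bigl\lVert\, b_i^{(t_0)} \oplus b_i^{(t_1)} \,\bigr\rVert_1
        && \text{(Hamming distance for patch } i\text{)} \\
  m_i &= \mathbb{1}\!\left[\, d_i > \tau \,\right]
        && \text{(patch } i \text{ flagged as changed)} \\
  D   &= \sum_{i=1}^{N} d_i
        && \text{(image-level change score)}
\end{align}
```

Here $\oplus$ is bitwise XOR and $\tau$ is a hypothetical threshold. An "XOR-like" operation in the paper may differ from this, for example by operating on relaxed, real-valued codes during training.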

Simulated Author's Rebuttal

We thank the referee for the constructive comment on the abstract. We agree that strengthening the abstract with quantitative details will better support the central claims and improve verifiability.


- Referee: Abstract: the assertion of 'competitive performance' and 'significantly reducing computational cost' is load-bearing for the central claim yet supplies no quantitative metrics, baselines, datasets, or error analysis, preventing verification of whether the data actually support the stated advantages.

  Authors: We agree that the abstract would benefit from explicit quantitative support. The full manuscript contains detailed experimental results (Tables 1–4 and Figures 3–5) comparing HashSCD against unsupervised hashing baselines (e.g., DeepHash, HashNet) and scene change detection methods (e.g., CDNet, CSCD) on standard datasets including VL-CMU-CD and PCD, reporting metrics such as F1-score, precision, and recall, along with runtime and storage measurements. In the revised manuscript we will update the abstract to include the key figures: e.g., “achieves competitive F1-scores (within 1–3% of state-of-the-art) while reducing inference time by 4–6× and storage by >90% compared to full-image feature methods.” This revision will directly address the concern without altering any experimental claims.

  Revision: yes

Circularity Check

No significant circularity detected


The paper introduces HashSCD as a patch-wise hashing framework trained via dual-level unsupervised contrastive learning, with XOR-style aggregation for Hamming-space change detection. No derivation step reduces by construction to a fitted parameter or self-defined quantity; the method is presented as a direct construction from established hashing and contrastive learning primitives, with performance claims evaluated against external baselines rather than internal fits. The provided description and abstract show no load-bearing self-citations, uniqueness theorems, or smuggled ansatzes. The central result remains independent of its own outputs.

Axiom & Free-Parameter Ledger

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean, theorem washburn_uniqueness_aczel (link status: unclear). Matched passage: "patch-wise hashing and XOR-like aggregation scheme... Hamming space"

- IndisputableMonolith/Foundation/AbsoluteFloorClosure.lean, theorem absolute_floor_iff_bare_distinguishability (link status: unclear). Matched passage: "contrastive learning at both patch and global levels"

