Real-Time Whole-Body Teleoperation of a Humanoid Robot Using IMU-Based Motion Capture with Sim2Sim and Sim2Real Validation

Pith reviewed 2026-05-13 03:59 UTC · model grok-4.3

The pith

A direct real-time pipeline from IMU motion capture enables stable whole-body teleoperation of a humanoid robot from simulation to physical hardware.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

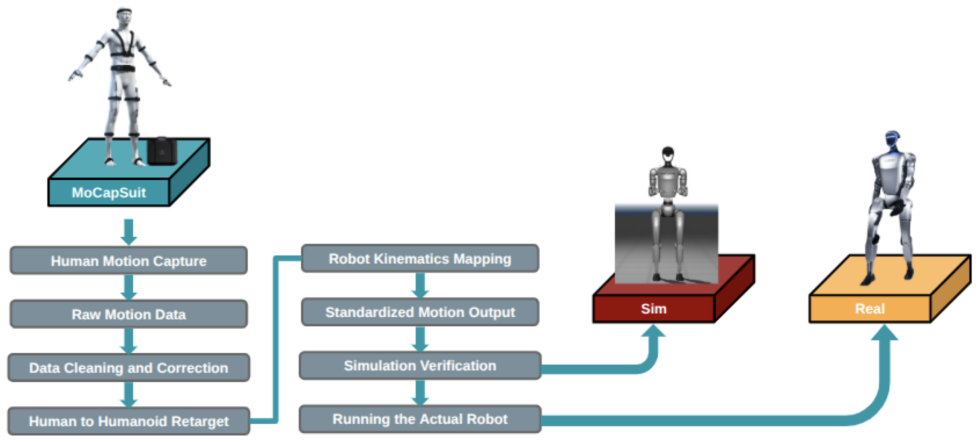

The central contribution is a complete real-time whole-body teleoperation system that maps data from a Virdyn IMU suit to the Unitree G1 humanoid through a custom motion-processing, kinematic retargeting, and control pipeline. The pipeline runs continuously at low latency, with no offline buffering and no learning-based components. The paper reports stable, synchronized reproduction of walking, standing, sitting, turning, bowing, and expressive gestures, and validates the system first in MuJoCo simulation and then, unmodified, on the physical robot.

What carries the argument

The custom motion-processing, kinematic retargeting, and control pipeline engineered for continuous low-latency operation.

If this is right

- Stable synchronized reproduction of walking, standing, sitting, turning, bowing, and full-body gestures is possible.

- Validation in simulation transfers directly to the physical robot without any modifications to the pipeline.

- The system handles accumulated IMU noise and kinematic mismatches sufficiently for stability.

- No offline buffering or learning-based compensation is required for low-latency performance.

Where Pith is reading between the lines

- This method could extend to other humanoid platforms by adjusting the retargeting parameters for different morphologies.

- Real-time teleoperation might enable more intuitive control in remote or hazardous environments where direct human presence is not feasible.

- Further testing could explore integration with additional sensors for improved accuracy in dynamic settings.

Load-bearing premise

The kinematic retargeting and control pipeline can sufficiently manage IMU noise, body mismatches, and latency to keep the robot stable without any buffering or learned adjustments.
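To see why this premise is delicate, consider the per-joint exponential moving average (EMA) filter mentioned in the paper excerpt further down this page. The following is a worked sketch under assumptions: the smoothing factor $\alpha$, the sample index $k$, and the example rate are illustrative symbols and values, not figures taken from the paper.

$$
y_k = \alpha\, x_k + (1 - \alpha)\, y_{k-1}, \qquad 0 < \alpha \le 1
$$

The impulse response of this filter is $h_n = \alpha (1-\alpha)^n$, so its mean delay in samples is

$$
\bar{d} = \frac{\sum_{n \ge 0} n\, \alpha (1-\alpha)^n}{\sum_{n \ge 0} \alpha (1-\alpha)^n} = \frac{1 - \alpha}{\alpha}
$$

For instance, a hypothetical $\alpha = 0.2$ at a 100 Hz update rate would add about 4 samples, or roughly 40 ms, of lag to slow motions. Stronger smoothing of IMU noise therefore trades directly against the low-latency goal, even with no explicit buffering.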

What would settle it

A demonstration of the physical robot losing balance or failing to match human motion timing during a sequence of walking and gesturing that succeeds in the MuJoCo simulation would disprove the claim of successful sim-to-real transfer.

Original abstract

Stable, low-latency whole-body teleoperation of humanoid robots is an open research challenge, complicated by kinematic mismatches between human and robot morphologies, accumulated inertial sensor noise, non-trivial control latency, and persistent sim-to-real transfer gaps. This paper presents a complete real-time whole-body teleoperation system that maps human motion, recorded with a Virdyn IMU-based full-body motion capture suit, directly onto a Unitree G1 humanoid robot. We introduce a custom motion-processing, kinematic retargeting, and control pipeline engineered for continuous, low-latency operation without any offline buffering or learning-based components. The system is first validated in simulation using the MuJoCo physics model of the Unitree G1 (sim2sim), and then deployed without modification on the physical platform (sim2real). Experimental results demonstrate stable, synchronized reproduction of a broad motion repertoire, including walking, standing, sitting, turning, bowing, and coordinated expressive full-body gestures. This work establishes a practical, scalable framework for whole-body humanoid teleoperation using commodity wearable motion capture hardware.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper presents a real-time whole-body teleoperation system for the Unitree G1 humanoid that maps motion from a Virdyn IMU-based motion capture suit via a custom kinematic retargeting and control pipeline. The pipeline operates continuously without offline buffering or learning-based components, using explicit low-pass filtering, joint-limit clamping, and proportional-derivative tracking. Validation proceeds first in MuJoCo simulation of the G1 (sim2sim) and then without modification on the physical robot (sim2real), with results showing synchronized reproduction of walking, standing, sitting, turning, bowing, and expressive full-body gestures.
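The three steps named in the summary (low-pass filtering, joint-limit clamping, proportional-derivative tracking) can be sketched for a single joint as follows. This is a minimal illustration, not the authors' implementation: the step ordering, the choice of an EMA as the low-pass filter, the zero desired velocity in the PD law, and every name and gain here (`JointConfig`, `control_step`, `alpha`, `kp`, `kd`) are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class JointConfig:
    """Hypothetical per-joint parameters (not taken from the paper)."""
    lower: float  # lower joint limit [rad]
    upper: float  # upper joint limit [rad]
    kp: float     # proportional gain
    kd: float     # derivative gain


def ema_filter(prev: float, sample: float, alpha: float) -> float:
    """First-order low-pass: blend the new sample with the previous output."""
    return alpha * sample + (1.0 - alpha) * prev


def clamp(value: float, lower: float, upper: float) -> float:
    """Restrict a target angle to the joint's allowed range."""
    return max(lower, min(upper, value))


def pd_torque(q_des: float, q: float, dq: float, kp: float, kd: float) -> float:
    """PD tracking law, assuming a desired joint velocity of zero."""
    return kp * (q_des - q) - kd * dq


def control_step(raw_target, filtered_prev, q, dq, cfg, alpha=0.3):
    """One control tick for one joint: filter, then clamp, then PD.

    Returns the new filter state, the clamped target, and the torque command.
    """
    filtered = ema_filter(filtered_prev, raw_target, alpha)
    q_des = clamp(filtered, cfg.lower, cfg.upper)
    tau = pd_torque(q_des, q, dq, cfg.kp, cfg.kd)
    return filtered, q_des, tau


if __name__ == "__main__":
    cfg = JointConfig(lower=-1.0, upper=1.0, kp=10.0, kd=1.0)
    state = 0.0
    for target in (0.2, 0.4, 2.5):  # the last retargeted angle exceeds the limit
        state, q_des, tau = control_step(target, state, q=0.0, dq=0.0, cfg=cfg)
        print(f"target={target:.2f} filtered={state:.3f} q_des={q_des:.3f} tau={tau:.3f}")
```

Keeping the unclamped filter state separate from the clamped target is one possible design choice: it lets the filter continue tracking the operator while the command saturates at the joint limit.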

Significance. If the central claim holds, the work supplies a practical, scalable baseline for whole-body humanoid teleoperation using only commodity wearable hardware and a purely kinematic pipeline. Explicit credit is due for the reproducible description of the filtering, clamping, and PD control steps, the successful sim2sim-to-sim2real transfer without retraining, and the breadth of the tested motion repertoire. This approach could lower barriers for human-robot interaction research by avoiding data-driven compensation methods.

Major comments (1)

- [Abstract] Abstract and experimental validation section: the claim that the kinematic pipeline 'suffices' for stable reproduction despite IMU noise, kinematic mismatch, and latency is supported only by qualitative descriptions of synchronized motion. No quantitative metrics (joint-angle RMSE, end-effector tracking error, measured end-to-end latency, or balance/stability indicators) or error bars are reported, leaving the evidence for robustness descriptive rather than measured. This is load-bearing for the central claim.

Minor comments (1)

- [Methods] A block diagram or pseudocode listing the exact sequence of retargeting, filtering, and control steps would improve clarity of the pipeline.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback on our manuscript. We address the major comment below and will incorporate revisions to strengthen the quantitative support for our claims.

- Referee: [Abstract] Abstract and experimental validation section: the claim that the kinematic pipeline 'suffices' for stable reproduction despite IMU noise, kinematic mismatch, and latency is supported only by qualitative descriptions of synchronized motion. No quantitative metrics (joint-angle RMSE, end-effector tracking error, measured end-to-end latency, or balance/stability indicators) or error bars are reported, leaving the evidence for robustness descriptive rather than measured. This is load-bearing for the central claim.

  Authors: We agree that the current presentation relies primarily on qualitative descriptions and video demonstrations of synchronized motion across the tested repertoire. This leaves the robustness claims open to the critique that they are descriptive rather than measured. In the revised manuscript we will add quantitative metrics computed from the existing experimental data: joint-angle RMSE between retargeted human poses and robot joint trajectories, end-effector position/orientation tracking error, measured end-to-end system latency (from IMU capture to actuator command), and stability indicators such as center-of-mass deviation and number of balance interventions or falls. These will be reported with means, standard deviations, and error bars across repeated trials for each motion class (walking, gestures, sitting, etc.). The added analysis will directly support the claim that the purely kinematic pipeline suffices for stable reproduction.

  Revision: yes
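Two of the promised metrics, joint-angle RMSE and end-to-end latency, are simple to compute once trajectories and timestamps are logged. The sketch below shows one plausible way to do so; the function names, the log layout (parallel lists of samples and of timestamps in seconds), and the per-trial aggregation are assumptions for illustration, not the authors' evaluation code.

```python
import math
import statistics


def joint_rmse(reference, measured):
    """Root-mean-square error between two equal-length joint-angle series [rad]."""
    if len(reference) != len(measured) or not reference:
        raise ValueError("series must be non-empty and of equal length")
    squared = [(r - m) ** 2 for r, m in zip(reference, measured)]
    return math.sqrt(sum(squared) / len(squared))


def latencies_ms(capture_times, command_times):
    """Per-sample latency in milliseconds from IMU capture to actuator command.

    Both inputs are timestamps in seconds taken from the same clock.
    """
    return [(cmd - cap) * 1000.0 for cap, cmd in zip(capture_times, command_times)]


def summarize(values):
    """Mean and sample standard deviation across repeated trials."""
    mean = statistics.fmean(values)
    std = statistics.stdev(values) if len(values) > 1 else 0.0
    return mean, std


if __name__ == "__main__":
    # Hypothetical per-trial RMSE values for one motion class.
    trial_rmse = [
        joint_rmse([0.0, 0.1, 0.2], [0.01, 0.12, 0.18]),
        joint_rmse([0.0, 0.1, 0.2], [0.02, 0.09, 0.23]),
    ]
    print("RMSE mean/std [rad]:", summarize(trial_rmse))
    print("latency mean/std [ms]:", summarize(latencies_ms([0.0, 1.0], [0.02, 1.03])))
```

Reporting the mean and standard deviation per motion class, as the rebuttal proposes, would then amount to calling `summarize` once for each class.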

Circularity Check

No circularity: empirical system description with no derivations or fitted predictions

The paper is a system description and experimental demonstration of a real-time whole-body teleoperation pipeline using IMU motion capture, kinematic retargeting, low-pass filtering, joint-limit clamping, and PD control on the Unitree G1 robot. It reports sim2sim and sim2real results for motions like walking and gestures but contains no equations, parameter fitting presented as prediction, uniqueness theorems, or self-citations that reduce the central claim to its own inputs. The results are direct outcomes of the explicitly described pipeline without any load-bearing circular step.

Axiom & Free-Parameter Ledger

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean, washburn_uniqueness_aczel (unclear): "custom motion-processing, kinematic retargeting, and control pipeline engineered for continuous, imperceptibly-low-latency operation without any offline buffering or learning-based components"

- IndisputableMonolith/Foundation/ArithmeticFromLogic.lean, LogicNat (unclear): "lightweight exponential moving average (EMA) filter per joint"

