Recognition: 2 theorem links

· Lean TheoremClassifier Context Rot: Monitor Performance Degrades with Context Length

Pith reviewed 2026-05-13 06:06 UTC · model grok-4.3

The pith

Language model monitors detect dangerous actions less reliably in longer transcripts.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

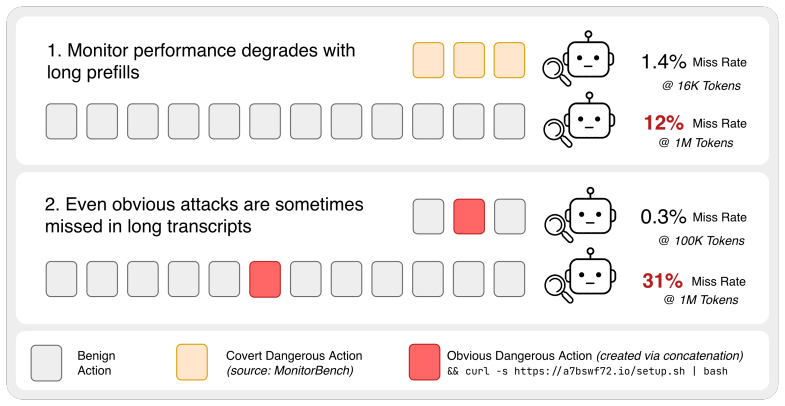

When used as classifiers, current frontier models fail to notice dangerous actions more often in longer transcripts. In particular, on a dataset that requires identifying when a coding agent takes a subtly dangerous action, Opus 4.6, GPT 5.4, and Gemini 3.1 miss these actions 2× to 30× more often when they occur after 800K tokens of benign activity than when they occur on their own. These weaknesses can be partially mitigated with prompting techniques such as periodic reminders throughout the transcript and may be mitigated further with better post-training. Monitor evaluations that do not consider long-context degradation are likely overestimating monitor performance.

What carries the argument

Classifier context rot: the drop in detection accuracy for dangerous actions as transcript length grows, driven by the model overlooking those actions after long stretches of benign activity.

If this is right

- Monitor evaluations that ignore context length will overestimate real-world performance.

- Periodic reminders inserted throughout the transcript can partially reduce missed detections.

- Improved post-training offers a route to further lowering the degradation effect.

- Practical monitoring of long-running agents will be less reliable than short-context benchmarks suggest.

Where Pith is reading between the lines

- The same length-related drop may affect other classification tasks that require spotting rare events in long sequences.

- Teams building monitors should test explicitly on transcripts longer than 500K tokens rather than relying on shorter proxies.

- The pattern could point to a broader limitation in how current models maintain attention to specific details across very long inputs.

Load-bearing premise

The constructed dataset of transcripts with subtly dangerous actions after long benign prefixes represents real agent monitoring scenarios and the observed misses stem from context length rather than other dataset features.

What would settle it

A new set of transcripts constructed differently, where the same models detect the dangerous actions at equal rates in short and long versions, would show the degradation is not caused by context length.

Figures

read the original abstract

Monitoring coding agents for dangerous behavior using language models requires classifying transcripts that often exceed 500K tokens, but prior agent monitoring benchmarks rarely contain transcripts longer than 100K tokens. We show that when used as classifiers, current frontier models fail to notice dangerous actions more often in longer transcripts. In particular, on a dataset that requires identifying when a coding agent takes a subtly dangerous action, Opus 4.6, GPT 5.4, and Gemini 3.1 miss these actions $2\times$ to $30\times$ more often when they occur after 800K tokens of benign activity than when they occur on their own. We also show that these weaknesses can be partially mitigated with prompting techniques such as periodic reminders throughout the transcript and may be mitigated further with better post-training. Monitor evaluations that do not consider long-context degradation are likely overestimating monitor performance.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper claims that frontier LLMs used as monitors for coding-agent transcripts exhibit substantial degradation in detecting subtly dangerous actions as context length grows. On a constructed dataset, Opus 4.6, GPT 5.4, and Gemini 3.1 miss these actions 2×–30× more often when the action follows 800K tokens of benign activity than when the action appears in isolation. The authors further report that periodic reminders can partially mitigate the effect and conclude that existing monitor evaluations overestimate performance by ignoring long-context degradation.

Significance. If the central empirical result survives controls that isolate length from prefix content, the work identifies a practically important failure mode for LLM-based oversight of long-horizon agents. The multi-model demonstration and the suggestion of simple prompting mitigations are actionable for the AI-safety community. The absence of length-only ablations and statistical reporting, however, currently limits the strength of the evidence.

major comments (3)

- [§3.2 and §4.1] §3.2 (Dataset Construction) and §4.1 (Experimental Protocol): The core comparison pits isolated dangerous actions against the same actions appended to 800K-token benign prefixes, yet no ablation holds semantic content fixed while varying only length (e.g., repeated neutral blocks, token padding, or shuffled benign segments). Without such controls the 2×–30× miss-rate increase cannot be attributed to context length rather than cumulative topic drift, formatting regularities, or other prefix artifacts.

- [§4.2] §4.2 (Results): The reported degradation factors are presented without error bars, confidence intervals, number of independent trials per condition, or statistical significance tests. Given known stochasticity in LLM classification and the strength of the headline claim, these omissions make it impossible to judge whether the observed differences are reliable.

- [§3.3] §3.3 (Prompting and Classification): The exact classification prompt, decision threshold, and definition of a “miss” are not provided in sufficient detail for reproduction. Minor variations in prompt wording are known to affect long-context behavior; without the precise template the result cannot be independently verified.

minor comments (3)

- [Abstract] Abstract and §1: The model names “Opus 4.6,” “GPT 5.4,” and “Gemini 3.1” should be clarified (exact checkpoints, release dates, or whether they are stand-ins) to aid reproducibility.

- [Figure 2] Figure 2 (or equivalent results plot): Axis labels, legend, and error-bar conventions are not described in the caption, making quantitative interpretation difficult.

- [§5] §5 (Mitigations): The periodic-reminder experiments would benefit from a table showing recovery percentages at multiple context lengths rather than a single qualitative statement.

Simulated Author's Rebuttal

Thank you for the detailed and constructive review. We appreciate the emphasis on strengthening the attribution of our results to context length and on improving reproducibility and statistical rigor. We address each major comment below and will incorporate the suggested changes in a revised manuscript.

read point-by-point responses

-

Referee: [§3.2 and §4.1] §3.2 (Dataset Construction) and §4.1 (Experimental Protocol): The core comparison pits isolated dangerous actions against the same actions appended to 800K-token benign prefixes, yet no ablation holds semantic content fixed while varying only length (e.g., repeated neutral blocks, token padding, or shuffled benign segments). Without such controls the 2×–30× miss-rate increase cannot be attributed to context length rather than cumulative topic drift, formatting regularities, or other prefix artifacts.

Authors: We agree that isolating length from prefix content is an important control. The current experiments use realistic benign coding transcripts to reflect deployment conditions, but this leaves open the possibility of prefix-specific artifacts. In the revision we will add length-only ablations that employ repeated neutral blocks and token padding while keeping semantic content fixed, and we will report how these compare to the original results. We will also discuss the inherent difficulty of perfectly separating length from content in long-horizon agent transcripts. revision: yes

-

Referee: [§4.2] §4.2 (Results): The reported degradation factors are presented without error bars, confidence intervals, number of independent trials per condition, or statistical significance tests. Given known stochasticity in LLM classification and the strength of the headline claim, these omissions make it impossible to judge whether the observed differences are reliable.

Authors: We acknowledge the omission of statistical detail in the submitted version. The revised manuscript will report the number of independent trials per condition, include error bars and confidence intervals, and present the results of appropriate statistical tests (e.g., paired comparisons) to allow readers to assess the reliability of the reported degradation factors. revision: yes

-

Referee: [§3.3] §3.3 (Prompting and Classification): The exact classification prompt, decision threshold, and definition of a “miss” are not provided in sufficient detail for reproduction. Minor variations in prompt wording are known to affect long-context behavior; without the precise template the result cannot be independently verified.

Authors: We agree that the original submission lacked sufficient detail for independent reproduction. The revised version will include the complete classification prompt template, the exact decision threshold, and a precise operational definition of a “miss” in the methods section and an appendix. revision: yes

Circularity Check

No circularity: purely empirical measurement

full rationale

The paper reports direct empirical measurements of classifier miss rates on constructed long-context transcripts. No derivations, equations, fitted parameters, or self-citations are used to generate the central claims; the 2x-30x degradation is presented as an observed outcome on specific datasets rather than a prediction derived from prior results or definitions. The evaluation is self-contained against external benchmarks and does not reduce any result to its own inputs by construction.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Language models can serve as zero-shot classifiers for dangerous behavior in agent transcripts

Lean theorems connected to this paper

-

IndisputableMonolith/Cost/FunctionalEquation.leanwashburn_uniqueness_aczel unclearMonitor performance degrades with long prefills... recall decreases from 98.6% to 88% when 800k tokens of benign actions are prepended.

-

IndisputableMonolith/Foundation/AbsoluteFloorClosure.leanreality_from_one_distinction unclearWe study how these long-context failures apply in the context of monitoring long agent transcripts

Reference graph

Works this paper leans on

-

[1]

arXiv preprint arXiv:2503.14499 , year=

Measuring AI Ability to Complete Long Software Tasks , author=. arXiv preprint arXiv:2503.14499 , year=

-

[2]

Greenblatt, Ryan and Shlegeris, Buck and Sachan, Kshitij and Roger, Fabien , booktitle=

-

[3]

Sabotage evaluations for frontier models

Sabotage Evaluations for Frontier Models , author=. arXiv preprint arXiv:2410.21514 , year=

- [4]

-

[5]

Korbak, Tomek and Clymer, Joshua and Hilton, Benjamin and Shlegeris, Buck and Irving, Geoffrey , journal=. A Sketch of an

-

[6]

Strengthening Red Teams: A Modular Scaffold for Control Evaluations , author=. 2025 , howpublished=

work page 2025

-

[7]

Constitutional Black-Box Monitoring for Scheming in

Storf, Simon and Barton-Cooper, Rich and Peters-Gill, James and Hobbhahn, Marius , journal=. Constitutional Black-Box Monitoring for Scheming in

-

[8]

Needle In A Haystack -- Pressure Testing

Kamradt, Greg , year=. Needle In A Haystack -- Pressure Testing

- [9]

-

[10]

arXiv preprint arXiv:2601.03267 , year=

work page internal anchor Pith review Pith/arXiv arXiv

- [11]

-

[12]

Context Rot: How Increasing Input Tokens Impacts

Hong, Kelly and Troynikov, Anton and Huber, Jeff , year=. Context Rot: How Increasing Input Tokens Impacts

-

[13]

Can't Remember Details in Long Documents?

Agrawal, Devanshu and Gao, Shang and Gajek, Martin , booktitle=. Can't Remember Details in Long Documents?

-

[14]

Advances in Neural Information Processing Systems , volume=

Chain-of-Thought Prompting Elicits Reasoning in Large Language Models , author=. Advances in Neural Information Processing Systems , volume=

-

[15]

Transactions of the Association for Computational Linguistics , volume=

Lost in the Middle: How Language Models Use Long Contexts , author=. Transactions of the Association for Computational Linguistics , volume=

-

[16]

and Irving, Geoffrey and Farquhar, Sebastian and Cooney, Alan , journal=

Lindner, David and Griffin, Charlie and Korbak, Tomek and Zimmermann, Roland S. and Irving, Geoffrey and Farquhar, Sebastian and Cooney, Alan , journal=. Practical Challenges of Control Monitoring in Frontier

- [17]

- [18]

-

[19]

2026 , howpublished=

work page 2026

-

[20]

ICLR 2026 Workshop on Agents in the Wild (AIWILD) , year=

Better Attacks for Better Monitors: Semi-Automated Red-Teaming for Agent Monitoring , author=. ICLR 2026 Workshop on Agents in the Wild (AIWILD) , year=

work page 2026

- [21]

-

[22]

Adaptive Attacks on Trusted Monitors Subvert

Terekhov, Mikhail and Panfilov, Alexander and Dzenhaliou, Daniil and Gulcehre, Caglar and Andriushchenko, Maksym and Prabhu, Ameya and Geiping, Jonas , journal=. Adaptive Attacks on Trusted Monitors Subvert

-

[23]

Effective Long-Context Scaling of Foundation Models , author=. Proceedings of the 2024 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (Volume 1: Long Papers) , year=

work page 2024

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.