Environment-Adaptive Preference Optimization for Wildfire Prediction

Pith reviewed 2026-05-13 06:06 UTC · model grok-4.3

The pith

Environment-Adaptive Preference Optimization adapts wildfire prediction models to new environments by aligning data with nearest neighbors and combining supervised learning with preference optimization on rare events.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

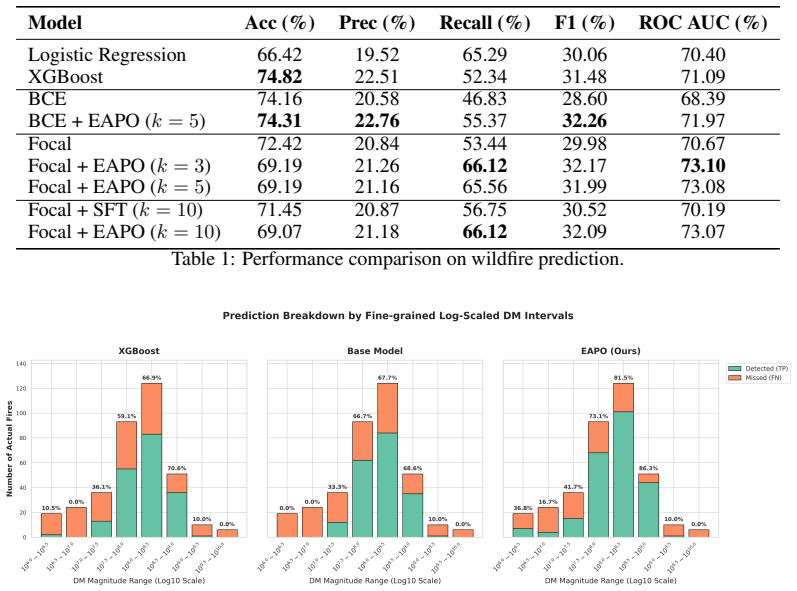

EAPO first constructs distribution-aligned datasets via k-nearest neighbor retrieval on the new input distribution and then applies hybrid fine-tuning that combines supervised learning with preference optimization while emphasizing rare extreme events. This process refines decision boundaries and avoids conflicting signals from heterogeneous historical data, yielding robust performance with ROC-AUC of 0.7310 and better detection in extreme regimes on real-world wildfire tasks that include environmental shifts.
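
The retrieval step described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes Euclidean distance on already-scaled features and takes the union of each target sample's k nearest historical samples. The function name `knn_retrieve` and the toy feature values are hypothetical.

```python
import math

def knn_retrieve(target_points, historical_points, k):
    """Return sorted indices of historical samples that are among the
    k nearest neighbors (Euclidean) of at least one target sample."""
    selected = set()
    for t in target_points:
        # Rank historical rows by distance to this target sample.
        ranked = sorted(
            range(len(historical_points)),
            key=lambda i: math.dist(t, historical_points[i]),
        )
        selected.update(ranked[:k])
    return sorted(selected)

# Toy data with two hypothetical, pre-scaled features per sample.
historical = [(0.0, 0.0), (0.1, 0.2), (5.0, 5.0), (5.2, 4.9), (9.0, 9.0)]
target = [(5.1, 5.0), (4.8, 5.3)]

local_indices = knn_retrieve(target, historical, k=2)
print(local_indices)  # [2, 3]
```

Only the two historical samples near the target cluster are kept, which is the sense in which the retrieved set is "aligned" with the new environment. The brute-force sort is fine for a toy example; a real dataset would likely need an indexed nearest-neighbor search.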

What carries the argument

The k-nearest neighbor retrieval that builds local manifolds aligned to the target environment, paired with the hybrid fine-tuning procedure of supervised learning plus preference optimization that prioritizes the long-tailed fire class.
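
The page quotes the combined objective as L_EAPO = L_SFT + λ1 · L_DPO-local + λ2 · L_DPO-extreme, but does not state the form of each term. The sketch below fills them in under loudly stated assumptions: binary cross-entropy stands in for the supervised term, and a pairwise logistic loss on (fire logit, non-fire logit) pairs stands in for both preference terms, with no reference model. All function names, `beta`, and the default weights are hypothetical.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def bce(logits, labels):
    """Mean binary cross-entropy (assumed form of the supervised term)."""
    eps = 1e-12
    total = 0.0
    for z, y in zip(logits, labels):
        p = sigmoid(z)
        total -= y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps)
    return total / len(labels)

def pairwise_preference(pairs, beta=1.0):
    """Mean of -log sigmoid(beta * (preferred - rejected)) over logit pairs
    (assumed form of each preference term)."""
    return sum(-math.log(sigmoid(beta * (w - l))) for w, l in pairs) / len(pairs)

def hybrid_loss(logits, labels, local_pairs, extreme_pairs, lam1=0.5, lam2=0.5):
    """Supervised term plus two weighted preference terms."""
    return (bce(logits, labels)
            + lam1 * pairwise_preference(local_pairs)
            + lam2 * pairwise_preference(extreme_pairs))

# A model that ranks fire above non-fire ...
loss_good = hybrid_loss([2.0, -1.0, 0.5, -2.0], [1, 0, 1, 0],
                        local_pairs=[(2.0, -1.0), (0.5, -2.0)],
                        extreme_pairs=[(2.0, -2.0)])
# ... versus one with every logit sign flipped.
loss_bad = hybrid_loss([-2.0, 1.0, -0.5, 2.0], [1, 0, 1, 0],
                       local_pairs=[(-2.0, 1.0), (-0.5, 2.0)],
                       extreme_pairs=[(-2.0, 2.0)])
print(loss_good < loss_bad)  # True
```

Separating the "local" and "extreme" pair sets lets λ2 raise the weight on rare fire events without changing the supervised term, which is one plausible reading of "prioritizes the long-tailed fire class".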

If this is right

- Prediction reliability holds across evolving environmental conditions rather than degrading on new data.

- Detection of rare fire events improves specifically in extreme regimes while overall metrics stay stable.

- Decision boundaries become cleaner because the method sidesteps mixed signals from mismatched historical data.

- The framework supports dynamic wildfire prediction systems that must update to current conditions.

Where Pith is reading between the lines

- The same alignment-plus-optimization steps could be applied to other rare-event tasks such as flood or storm prediction.

- If the nearest-neighbor step generalizes, models could be updated quickly for new regions without full retraining.

- Preference optimization focused on minority classes may transfer to other imbalanced classification settings outside wildfire data.

- Testing the method on additional shift-heavy datasets would show whether the reported gains hold beyond the evaluated cases.

Load-bearing premise

That k-nearest neighbor retrieval from a new input distribution builds datasets that accurately match the target environment's structure without selection bias or missing important shifts.

What would settle it

Running EAPO on a new wildfire dataset with a documented environmental shift and finding no improvement in ROC-AUC or extreme-event detection compared with standard fine-tuning would falsify the claim of effective adaptation.
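
One way such a comparison could be scored is sketched below: a rank-based ROC-AUC and a paired t statistic over per-seed scores for two methods. The helper names are hypothetical, and the per-seed numbers are placeholders chosen for illustration, not results from the paper.

```python
import math
from statistics import mean, stdev

def roc_auc(scores, labels):
    """Probability that a random positive outranks a random negative
    (ties count as 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def paired_t_statistic(a, b):
    """t statistic for paired samples a and b, with len(a) - 1 degrees of freedom."""
    diffs = [x - y for x, y in zip(a, b)]
    return mean(diffs) / (stdev(diffs) / math.sqrt(len(diffs)))

# Imbalanced toy labels: two fire samples among six.
labels = [0, 0, 0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8, 0.7, 0.9]
auc = roc_auc(scores, labels)
print(auc)  # 0.875

# Placeholder per-seed ROC-AUC values for two methods (not from the paper).
method_a = [0.60, 0.62, 0.61, 0.63, 0.59]
method_b = [0.58, 0.59, 0.60, 0.60, 0.58]
t_stat = paired_t_statistic(method_a, method_b)
print(round(t_stat, 2))
```

Turning the t statistic into a p-value requires the Student t distribution with four degrees of freedom, which the standard library does not provide; a statistics package or a t table would be needed for that step.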

Original abstract

Predicting rare extreme events such as wildfires from meteorological data requires models that remain reliable under evolving environmental conditions. This problem is inherently long-tailed: wildfire events are rare but high-impact, while most observations correspond to non-fire conditions, causing standard learning objectives to underemphasize the minority class (fire) that matters most. In addition, models trained on historical distributions often fail under distribution shifts, exhibiting degraded performance in new environments. To this end, we propose Environment-Adaptive Preference Optimization (EAPO), a framework that adapts prediction to a target environment with a long-tailed distribution. Given a new input distribution, we first construct distribution-aligned datasets via $k$-nearest neighbor retrieval. We then perform a hybrid fine-tuning procedure on this local manifold, combining supervised learning with preference optimization while emphasizing rare extreme events. EAPO refines decision boundaries while avoiding conflicting signals from heterogeneous training data. We evaluate EAPO on a real-world wildfire prediction task with environmental shifts. EAPO achieves robust performance (ROC-AUC 0.7310) and improves detection in extreme regimes, demonstrating its effectiveness in dynamic wildfire prediction systems.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes Environment-Adaptive Preference Optimization (EAPO) for wildfire prediction under environmental distribution shifts. Given a new input distribution, it constructs a local dataset via k-nearest neighbor retrieval and then applies hybrid fine-tuning that combines supervised learning with preference optimization while emphasizing rare extreme (fire) events. The central empirical claim is that this yields robust performance with ROC-AUC 0.7310 and improved detection in extreme regimes compared to standard approaches.

Significance. If the reported gains are shown to be robust and attributable to the hybrid procedure rather than retrieval artifacts, EAPO would offer a practical recipe for adapting long-tailed predictors to shifting meteorological conditions. The approach addresses a real operational need in environmental ML, but its significance cannot be assessed without comparative evidence.

major comments (2)

- [Abstract] Abstract: The single reported ROC-AUC value of 0.7310 is presented without baselines (e.g., standard supervised learning on the full historical data, vanilla preference optimization, or other domain-adaptation methods), ablation studies on the kNN step versus the preference-optimization step, error bars, or statistical tests. This absence prevents any determination of whether EAPO improves extreme-regime detection beyond existing methods.

- [Method (kNN retrieval and dataset construction)] Method description of kNN retrieval: The claim that k-nearest-neighbor retrieval produces a distribution-aligned local manifold rests on an untested assumption that the retrieved neighbors faithfully represent the target environment's support and label distribution. In high-dimensional meteorological space with extreme class imbalance, this step is prone to oversampling dense non-fire regions and missing isolated fire events; no analysis, sensitivity study on k, or coverage metric is supplied to address selection bias or distribution-shift effects.

minor comments (1)

- [Abstract / Method] The manuscript would benefit from explicit statements of the preference-optimization loss, the exact definition of 'extreme regimes,' and the value of k used in retrieval.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback highlighting the need for stronger empirical validation. We address each major comment below and have revised the manuscript to incorporate additional baselines, ablations, statistical tests, and methodological analysis on the kNN step.

Point-by-point responses

- Referee: [Abstract] Abstract: The single reported ROC-AUC value of 0.7310 is presented without baselines (e.g., standard supervised learning on the full historical data, vanilla preference optimization, or other domain-adaptation methods), ablation studies on the kNN step versus the preference-optimization step, error bars, or statistical tests. This absence prevents any determination of whether EAPO improves extreme-regime detection beyond existing methods.

  Authors: We agree that comparative evidence is required to substantiate the gains. In the revised manuscript we have added explicit baselines (standard supervised learning on full historical data, vanilla preference optimization, and a domain-adaptation method) with quantitative improvements reported in both the abstract and Section 4. Ablation studies isolating the kNN retrieval versus the hybrid preference-optimization component appear in a new subsection 4.3. We now report mean ROC-AUC with standard deviation over five random seeds and include paired t-tests confirming statistical significance of the improvements in extreme-regime detection.

  Revision: yes

- Referee: [Method (kNN retrieval and dataset construction)] Method description of kNN retrieval: The claim that k-nearest-neighbor retrieval produces a distribution-aligned local manifold rests on an untested assumption that the retrieved neighbors faithfully represent the target environment's support and label distribution. In high-dimensional meteorological space with extreme class imbalance, this step is prone to oversampling dense non-fire regions and missing isolated fire events; no analysis, sensitivity study on k, or coverage metric is supplied to address selection bias or distribution-shift effects.

  Authors: We acknowledge that the original submission lacked explicit validation of the kNN step. We have added a sensitivity study varying k from 10 to 100 and measuring impact on ROC-AUC and extreme-event recall. We also introduce two new metrics: (i) coverage, defined as the fraction of target-environment samples whose nearest neighbors fall within the retrieved set, and (ii) label-distribution alignment measured by KL divergence between the retrieved and target label distributions. These results, now in Section 3.2, show that moderate k values achieve reasonable coverage of rare fire events while mitigating oversampling of non-fire regions.

  Revision: yes
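
The two diagnostics named in the authors' response could be computed roughly as follows. This is a sketch under assumptions the page does not settle: coverage is read as "all k nearest historical neighbors of a target sample lie in the retrieved set", the KL direction is taken as KL(retrieved || target), and labels are binary (1 = fire). Function names and toy data are hypothetical.

```python
import math

def coverage(target_points, historical_points, retrieved_indices, k):
    """Fraction of target samples whose k nearest historical neighbors
    all lie in the retrieved set (one reading of the coverage metric)."""
    retrieved = set(retrieved_indices)
    covered = 0
    for t in target_points:
        ranked = sorted(range(len(historical_points)),
                        key=lambda i: math.dist(t, historical_points[i]))
        if set(ranked[:k]) <= retrieved:
            covered += 1
    return covered / len(target_points)

def label_kl(retrieved_labels, target_labels, eps=1e-9):
    """KL(retrieved || target) between binary fire / non-fire label distributions."""
    def distribution(labels):
        p_fire = sum(labels) / len(labels)
        # Clip away from 0 and 1 so the logarithm stays finite.
        p_fire = min(max(p_fire, eps), 1 - eps)
        return (1 - p_fire, p_fire)
    p, q = distribution(retrieved_labels), distribution(target_labels)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))

historical = [(0.0, 0.0), (0.1, 0.2), (5.0, 5.0), (5.2, 4.9), (9.0, 9.0)]
target = [(5.1, 5.0), (4.8, 5.3)]

full_cov = coverage(target, historical, retrieved_indices=[2, 3], k=2)
partial_cov = coverage(target, historical, retrieved_indices=[2], k=2)
print(full_cov, partial_cov)  # 1.0 0.0

kl_same = label_kl([0, 0, 0, 1], [0, 0, 0, 1])
kl_shifted = label_kl([0, 0, 1, 1], [0, 0, 0, 1])
print(kl_same, kl_shifted > 0)  # 0.0 True
```

A sensitivity study on k would repeat both measurements across the k range and track how they move alongside ROC-AUC and extreme-event recall.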

Circularity Check

No significant circularity; empirical procedure is self-contained

The paper describes EAPO as a two-stage empirical procedure: kNN retrieval to build a distribution-aligned local dataset from a new input distribution, followed by hybrid supervised fine-tuning plus preference optimization that emphasizes rare events. No equations, derivations, or parameter-fitting steps are presented that reduce by construction to the inputs (e.g., no fitted quantities renamed as independent predictions, no self-referential definitions, and no load-bearing self-citations whose validity depends on the current work). Performance numbers such as ROC-AUC 0.7310 are reported as measured outcomes on real data rather than tautological consequences of the method definition. The derivation chain therefore remains independent of its own outputs.

Axiom & Free-Parameter Ledger

free parameters (1)

- k (number of neighbors)

axioms (1)

- domain assumption: k-nearest neighbor retrieval yields a distribution-aligned local dataset for the target environment

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel · unclear

  "we first construct distribution-aligned datasets via k-nearest neighbor retrieval... $L_{\mathrm{EAPO}} = L_{\mathrm{SFT}} + \lambda_1 \cdot L_{\mathrm{DPO\text{-}local}} + \lambda_2 \cdot L_{\mathrm{DPO\text{-}extreme}}$"

- IndisputableMonolith/Foundation/ArithmeticFromLogic.lean · reality_from_one_distinction · unclear

  "EAPO achieves robust performance (ROC-AUC 0.7310) and improves detection in extreme regimes"

