Recognition: 2 theorem links

· Lean TheoremFuTCR: Future-Targeted Contrast and Repulsion for Continual Panoptic Segmentation

Pith reviewed 2026-05-13 06:17 UTC · model grok-4.3

The pith

FuTCR identifies future-like regions in background pixels and uses contrast plus repulsion to reserve space for new classes in continual panoptic segmentation.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

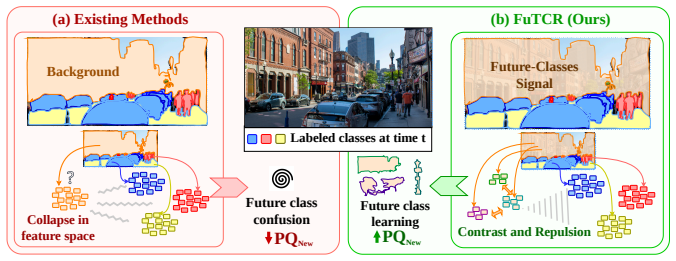

FuTCR discovers confident future-like regions by grouping model-predicted masks whose pixels are consistently classified as background but exhibit non-background logits, builds coherent prototypes from these unlabeled regions via pixel-to-region contrast, and simultaneously repels background features from known-class prototypes to reserve representational space, thereby improving adaptation when new categories are introduced.

What carries the argument

Future-targeted contrastive and repulsive (FuTCR) mechanism that groups background pixels with non-background logits into prototypes and pushes background features away from existing class centers.

If this is right

- New categories can be added with less interference from prior background training signals.

- Base-class performance is preserved or slightly improved while new-class quality rises substantially.

- The approach scales across different dataset sizes and multiple continual learning protocols.

- Representation space is proactively prepared for unknown future objects instead of being overwritten.

Where Pith is reading between the lines

- The same region-discovery plus repulsion pattern could be tested in continual semantic segmentation or instance segmentation without panoptic heads.

- Varying the logit threshold used to flag future-like pixels would reveal how sensitive performance is to the discovery step.

- The method might reduce forgetting in other dense-prediction continual tasks where unlabeled content is common.

Load-bearing premise

Grouping pixels that the model labels background yet assigns non-background logits will reliably produce coherent regions that match actual future classes rather than noise or misclassified known objects.

What would settle it

If ablating the future-region grouping step or the repulsion term produces no gain in new-class panoptic quality, or if the grouped regions show low overlap with ground-truth future objects across multiple datasets, the central claim would be falsified.

Figures

read the original abstract

Continual Panoptic Segmentation (CPS) requires methods that can quickly adapt to new categories over time. The nature of this dense prediction task means that training images may contain a mix of labeled and unlabeled objects. As nothing is known about these unlabeled objects a priori, existing methods often simply group any unlabeled pixel into a single "background" class during training. In effect, during training, they repeatedly tell the model that all the different background categories are the same (even when they aren't). This makes learning to identify different background categories as they are added challenging since these new categories may require using information the model was previously told was unimportant and ignored. Thus, we propose a Future-Targeted Contrastive and Repulsive (FuTCR) framework that addresses this limitation by restructuring representations before new classes are introduced. FuTCR first discovers confident future-like regions by grouping model-predicted masks whose pixels are consistently classified as background but exhibit non-background logits. Next, FuTCR applies pixel-to-region contrast to build coherent prototypes from these unlabeled regions, while simultaneously repelling background features away from known-class prototypes to explicitly reserve representational space for future categories. Experiments across six CPS settings and a range of dataset sizes show FuTCR improves relative new-class panoptic quality over the state-of-the-art by up to 28%, while preserving or improving base-class performance with gains up to 4%.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes FuTCR, a Future-Targeted Contrast and Repulsion framework for Continual Panoptic Segmentation. It first discovers future-like regions by grouping model-predicted masks classified as background yet exhibiting non-background logits, then applies pixel-to-region contrastive learning to form prototypes from these regions while repelling background features from known-class prototypes to reserve space for future categories. Experiments across six CPS settings and varying dataset sizes report relative improvements in new-class panoptic quality of up to 28% over state-of-the-art methods, with base-class performance preserved or improved by up to 4%.

Significance. If the gains are robust, the work would advance continual learning for dense prediction by proactively structuring representations around unknown future objects rather than collapsing them into a single background class. The approach could influence incremental segmentation pipelines in robotics and autonomous systems where new object categories emerge over time.

major comments (3)

- [§3.2] §3.2 (Region Discovery): The grouping of background-classified masks with elevated non-background logits is presented as reliably producing coherent future-like regions, yet no ablation, quantitative coherence metric, or visualization against ground-truth future objects is provided to rule out noise or over-grouping; this step is load-bearing for the subsequent contrastive and repulsive objectives and the reported 28% new-class gains.

- [§4] §4 (Experiments): The abstract and results claim consistent gains across six settings, but the manuscript omits exact baseline implementations, statistical significance tests, error bars or standard deviations over multiple runs, and isolated ablations of the region-grouping threshold and the contrast/repulsion terms; without these, the magnitude and reliability of the improvements cannot be verified.

- [§3.3] §3.3 (Repulsion Loss): The claim that repelling background features from known-class prototypes reserves space for future categories lacks supporting analysis of feature-space geometry, t-SNE visualizations, or a controlled study showing reduced interference with new-class learning; this mechanism is central to the base-class preservation result.

minor comments (3)

- [Abstract] Abstract: Replace the vague 'up to 28%' and 'up to 4%' with the specific dataset/setting and baseline for each reported maximum.

- [§3.3] Notation: Explicitly define all symbols in the contrastive and repulsion loss equations in the main text rather than deferring to the supplement.

- [Figures] Figures: Add side-by-side comparisons of discovered future-like regions against ground-truth annotations in at least one figure to illustrate coherence.

Simulated Author's Rebuttal

We thank the referee for the thorough and constructive review. We address each major comment below and will incorporate the requested additions and clarifications in the revised manuscript.

read point-by-point responses

-

Referee: [§3.2] §3.2 (Region Discovery): The grouping of background-classified masks with elevated non-background logits is presented as reliably producing coherent future-like regions, yet no ablation, quantitative coherence metric, or visualization against ground-truth future objects is provided to rule out noise or over-grouping; this step is load-bearing for the subsequent contrastive and repulsive objectives and the reported 28% new-class gains.

Authors: We agree that the region discovery step is central and would benefit from stronger empirical support. In the revision we will add an ablation on the non-background logit threshold used for grouping, a quantitative coherence metric (average IoU between discovered regions and ground-truth future-class pixels where available), and visualizations that overlay the grouped regions on future-object annotations to show they capture coherent structures rather than noise. revision: yes

-

Referee: [§4] §4 (Experiments): The abstract and results claim consistent gains across six settings, but the manuscript omits exact baseline implementations, statistical significance tests, error bars or standard deviations over multiple runs, and isolated ablations of the region-grouping threshold and the contrast/repulsion terms; without these, the magnitude and reliability of the improvements cannot be verified.

Authors: We acknowledge these omissions limit verifiability. In the revised manuscript we will (i) provide precise reproduction details for all baselines, (ii) report mean and standard deviation over three random seeds together with paired t-test significance results, and (iii) present isolated ablations that separately disable the region-grouping threshold, the contrast term, and the repulsion term to quantify each component's contribution. revision: yes

-

Referee: [§3.3] §3.3 (Repulsion Loss): The claim that repelling background features from known-class prototypes reserves space for future categories lacks supporting analysis of feature-space geometry, t-SNE visualizations, or a controlled study showing reduced interference with new-class learning; this mechanism is central to the base-class preservation result.

Authors: We agree that direct evidence for the geometric effect of the repulsion loss would strengthen the central claim. In the revision we will include t-SNE plots of feature embeddings before and after repulsion, quantitative measurements of the distance between background features and known-class prototypes, and a controlled ablation that isolates the repulsion term to demonstrate its role in preserving base-class performance while facilitating new-class learning. revision: yes

Circularity Check

No significant circularity; method is self-contained

full rationale

The paper defines FuTCR as a new framework that first groups model-predicted background masks with non-background logits to form future-like regions, then applies standard pixel-to-region contrastive and repulsive losses on those regions. No equations, parameters, or self-citations reduce the reported performance gains to quantities defined by construction within the same paper. The central steps rely on externally standard contrastive objectives applied to newly introduced region definitions, with no load-bearing self-citation chains or fitted-input renamings. The derivation chain is independent of its own outputs.

Axiom & Free-Parameter Ledger

free parameters (1)

- region grouping threshold

axioms (1)

- domain assumption Model logits on background pixels can indicate latent future classes

Lean theorems connected to this paper

-

IndisputableMonolith/Cost/FunctionalEquation.leanwashburn_uniqueness_aczel (J uniqueness) unclearFuTCR first discovers confident future-like regions by grouping model-predicted masks whose pixels are consistently classified as background but exhibit non-background logits. Next, FuTCR applies pixel-to-region contrast to build coherent prototypes... while simultaneously repelling background features away from known-class prototypes

-

IndisputableMonolith/Foundation/BranchSelection.leanbranch_selection (coupling combiner forces bilinear branch) unclearLreg = −1/N ∑ log[exp(sim(fn,pr(n))/τ) / ∑ exp(sim(fn,pk)/τ)] (InfoNCE-style)

Reference graph

Works this paper leans on

-

[1]

Ferret: An efficient online continual learning framework under varying memory constraints

Yuhao Zhou, Yuxin Tian, Jindi Lv, Mingjia Shi, Yuanxi Li, Qing Ye, Shuhao Zhang, and Jiancheng Lv. Ferret: An efficient online continual learning framework under varying memory constraints. InProceedings of the Computer Vision and Pattern Recognition Conference, pages 4850–4861, 2025

work page 2025

-

[2]

Do your best and get enough rest for continual learning

Hankyul Kang, Gregor Seifer, Donghyun Lee, and Jongbin Ryu. Do your best and get enough rest for continual learning. InProceedings of the Computer Vision and Pattern Recognition Conference, pages 10077–10086, 2025

work page 2025

-

[3]

End-to-end incremental learning

Francisco M Castro, Manuel J Marín-Jiménez, Nicolás Guil, Cordelia Schmid, and Karteek Alahari. End-to-end incremental learning. InProceedings of the European conference on computer vision (ECCV), pages 233–248, 2018

work page 2018

-

[4]

icarl: Incremental classifier and representation learning

Sylvestre-Alvise Rebuffi, Alexander Kolesnikov, Georg Sperl, and Christoph H Lampert. icarl: Incremental classifier and representation learning. InProceedings of the IEEE conference on Computer Vision and Pattern Recognition, pages 2001–2010, 2017

work page 2001

-

[5]

Riemannian walk for incremental learning: Understanding forgetting and intransigence

Arslan Chaudhry, Puneet K Dokania, Thalaiyasingam Ajanthan, and Philip HS Torr. Riemannian walk for incremental learning: Understanding forgetting and intransigence. InProceedings of the European conference on computer vision (ECCV), pages 532–547, 2018

work page 2018

-

[6]

Hanul Shin, Jung Kwon Lee, Jaehong Kim, and Jiwon Kim. Continual learning with deep generative replay.Advances in neural information processing systems, 30, 2017

work page 2017

-

[7]

Der: Dynamically expandable representation for class incremental learning

Shipeng Yan, Jiangwei Xie, and Xuming He. Der: Dynamically expandable representation for class incremental learning. InProceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 3014–3023, 2021

work page 2021

-

[8]

Few-shot class-incremental learning

Xiaoyu Tao, Xiaopeng Hong, Xinyuan Chang, Songlin Dong, Xing Wei, and Yihong Gong. Few-shot class-incremental learning. InProceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 12183–12192, 2020

work page 2020

-

[9]

Vector quantization prompting for continual learning

Li Jiao, Qiuxia Lai, Yu Li, and Qiang Xu. Vector quantization prompting for continual learning. Advances in Neural Information Processing Systems, 37:34056–34076, 2024

work page 2024

-

[10]

Liyuan Wang, Kuo Yang, Chongxuan Li, Lanqing Hong, Zhenguo Li, and Jun Zhu. Ordisco: Effective and efficient usage of incremental unlabeled data for semi-supervised continual learning. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 5383–5392, 2021

work page 2021

-

[11]

PAC-bayes bounds for cumulative loss in continual learning

Lior Friedman and Ron Meir. PAC-bayes bounds for cumulative loss in continual learning. In The Fourteenth International Conference on Learning Representations, 2026. URL https: //openreview.net/forum?id=hWw269fPov

work page 2026

-

[12]

Bors, Jingling Sun, Rongyao Hu, and Shijie Zhou

Fei Ye, Yulong Zhao, Qihe Liu, Junlin Chen, Adrian G. Bors, Jingling Sun, Rongyao Hu, and Shijie Zhou. Dynamic siamese expansion framework for improving robustness in online continual learning. InAdvances in Neural Information Processing Systems, 2025

work page 2025

-

[13]

Exploiting task relationships in continual learning via transferability-aware task embeddings

Yanru Wu, Jianning Wang, Xiangyu Chen, Enming Zhang, Yang Tan, Hanbing Liu, and Yang Li. Exploiting task relationships in continual learning via transferability-aware task embeddings. InAdvances in Neural Information Processing Systems, 2025. URL https: //openreview.net/forum?id=V8FnYzDX35. 10

work page 2025

-

[14]

Anacp: Toward upper-bound continual learning via analytic contrastive projection

Saleh Momeni, Changnan Xiao, and Bing Liu. Anacp: Toward upper-bound continual learning via analytic contrastive projection. InAdvances in Neural Information Processing Systems,

-

[15]

URLhttps://openreview.net/forum?id=qQbvLU34F1

-

[16]

Hippotune: A hippocampal associative loop–inspired fine-tuning method for continual learning

chenyanxi, Xiuxing Li, Han Yuyang, Zhuo Wang, Qing Li, Ziyu Li, Xiang Li, Chen Wei, and Xia Wu. Hippotune: A hippocampal associative loop–inspired fine-tuning method for continual learning. InThe Fourteenth International Conference on Learning Representations, 2026. URL https://openreview.net/forum?id=MtDiLnnYgm

work page 2026

-

[17]

Ze Yang, Shichao Dong, Ruibo Li, Nan Song, and Guosheng Lin. ADAPT: Attentive self- distillation and dual-decoder prediction fusion for continual panoptic segmentation. InThe Thirteenth International Conference on Learning Representations, 2025. URL https:// openreview.net/forum?id=HF1UmIVv6a

work page 2025

-

[18]

Beyond background shift: Rethinking instance replay in continual semantic segmentation

Hongmei Yin, Tingliang Feng, Fan Lyu, Fanhua Shang, Hongying Liu, Wei Feng, and Liang Wan. Beyond background shift: Rethinking instance replay in continual semantic segmentation. InProceedings of the Computer Vision and Pattern Recognition Conference, pages 9839–9848, 2025

work page 2025

-

[19]

Thanh-Dat Truong, Utsav Prabhu, Bhiksha Raj, Jackson Cothren, and Khoa Luu. Falcon: Fairness learning via contrastive attention approach to continual semantic scene understanding. InProceedings of the Computer Vision and Pattern Recognition Conference, pages 15065– 15075, 2025

work page 2025

-

[20]

Continual gaussian mixture distribution modeling for class incremental semantic segmentation

Guilin Zhu, Runmin Wang, Yuanjie Shao, Wei dong Yang, Nong Sang, and Changxin Gao. Continual gaussian mixture distribution modeling for class incremental semantic segmentation. InThe Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025. URL https://openreview.net/forum?id=dtYKDOBkc7

work page 2025

-

[21]

Parameter release and knowledge reuse for class-incremental semantic segmentation, 2025

Xinyue Zhang, Xu Zou, Liqun Chen, Jiahuan Zhou, Guodong Wang, Sheng Zhong, and Luxin Yan. Parameter release and knowledge reuse for class-incremental semantic segmentation, 2025. URLhttps://openreview.net/forum?id=9qbKOaF8YJ

work page 2025

-

[22]

Combo: Conflict mitigation via branched optimization for class incremental segmentation

Kai Fang, Anqi Zhang, Guangyu Gao, Jianbo Jiao, Chi Harold Liu, and Yunchao Wei. Combo: Conflict mitigation via branched optimization for class incremental segmentation. InPro- ceedings of the Computer Vision and Pattern Recognition Conference, pages 25667–25676, 2025

work page 2025

-

[23]

Continual semantic segmentation with automatic memory sample selection

Lanyun Zhu, Tianrun Chen, Jianxiong Yin, Simon See, and Jun Liu. Continual semantic segmentation with automatic memory sample selection. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 3082–3092, 2023

work page 2023

-

[24]

Rethinking query-based transformer for continual image segmentation

Yuchen Zhu, Cheng Shi, Dingyou Wang, Jiajin Tang, Zhengxuan Wei, Yu Wu, Guanbin Li, and Sibei Yang. Rethinking query-based transformer for continual image segmentation. In Proceedings of the Computer Vision and Pattern Recognition Conference, pages 4595–4606, 2025

work page 2025

-

[25]

Contrastive grouping with transformer for referring image segmentation

Jiajin Tang, Ge Zheng, Cheng Shi, and Sibei Yang. Contrastive grouping with transformer for referring image segmentation. InProceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 23570–23580, 2023

work page 2023

-

[26]

Cheng Shi and Sibei Yang. The devil is in the object boundary: Towards annotation-free instance segmentation using foundation models.arXiv preprint arXiv:2404.11957, 2024

-

[27]

Coinseg: Contrast inter-and intra-class representations for incremental segmentation

Zekang Zhang, Guangyu Gao, Jianbo Jiao, Chi Harold Liu, and Yunchao Wei. Coinseg: Contrast inter-and intra-class representations for incremental segmentation. InProceedings of the IEEE/CVF International Conference on Computer Vision, pages 843–853, 2023

work page 2023

-

[28]

Towards continual universal segmentation

Zihan Lin, Zilei Wang, and Xu Wang. Towards continual universal segmentation. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 29417–29427, June 2025. 11

work page 2025

-

[29]

Eclipse: Efficient continual learning in panoptic segmentation with visual prompt tuning

Beomyoung Kim, Joonsang Yu, and Sung Ju Hwang. Eclipse: Efficient continual learning in panoptic segmentation with visual prompt tuning. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 3346–3356, 2024

work page 2024

-

[30]

Comformer: Continual learning in semantic and panoptic segmentation

Fabio Cermelli, Matthieu Cord, and Arthur Douillard. Comformer: Continual learning in semantic and panoptic segmentation. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 3010–3020, 2023

work page 2023

-

[31]

Strike a balance in continual panoptic segmentation

Jinpeng Chen, Runmin Cong, Yuxuan Luo, Horace Ho Shing Ip, and Sam Kwong. Strike a balance in continual panoptic segmentation. InEuropean Conference on Computer Vision, pages 126–142. Springer, 2024

work page 2024

-

[32]

Kihyuk Sohn, David Berthelot, Nicholas Carlini, Zizhao Zhang, Han Zhang, Colin A Raf- fel, Ekin Dogus Cubuk, Alexey Kurakin, and Chun-Liang Li. Fixmatch: Simplifying semi- supervised learning with consistency and confidence.Advances in neural information processing systems, 33:596–608, 2020

work page 2020

-

[33]

A simple framework for contrastive learning of visual representations

Ting Chen, Simon Kornblith, Mohammad Norouzi, and Geoffrey Hinton. A simple framework for contrastive learning of visual representations. InInternational conference on machine learning, pages 1597–1607. PmLR, 2020

work page 2020

-

[34]

Momentum contrast for unsupervised visual representation learning

Kaiming He, Haoqi Fan, Yuxin Wu, Saining Xie, and Ross Girshick. Momentum contrast for unsupervised visual representation learning. InProceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 9729–9738, 2020

work page 2020

-

[35]

Classmix: Segmentation-based data augmentation for semi-supervised learning

Viktor Olsson, Wilhelm Tranheden, Juliano Pinto, and Lennart Svensson. Classmix: Segmentation-based data augmentation for semi-supervised learning. InProceedings of the IEEE/CVF winter conference on applications of computer vision, pages 1369–1378, 2021

work page 2021

-

[36]

Preparing the future for continual semantic segmenta- tion

Zihan Lin, Zilei Wang, and Yixin Zhang. Preparing the future for continual semantic segmenta- tion. InProceedings of the IEEE/CVF International Conference on Computer Vision, pages 11910–11920, 2023

work page 2023

-

[37]

Bo Yuan, Danpei Zhao, Zhuoran Liu, Wentao Li, and Tian Li. Continual panoptic perception: Towards multi-modal incremental interpretation of remote sensing images. InProceedings of the 32nd ACM International Conference on Multimedia, pages 2117–2126, 2024

work page 2024

-

[38]

Sungmin Cha, YoungJoon Yoo, Taesup Moon, et al. Ssul: Semantic segmentation with unknown label for exemplar-based class-incremental learning.Advances in neural information processing systems, 34:10919–10930, 2021

work page 2021

-

[39]

Vista-clip: Visual incre- mental self-tuned adaptation for efficient continual panoptic segmentation

D Manjunath, Shrikar Madhu, Aniruddh Sikdar, and Suresh Sundaram. Vista-clip: Visual incre- mental self-tuned adaptation for efficient continual panoptic segmentation. In2025 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), pages 6557–

-

[40]

Catastrophic forgetting in connectionist networks.Trends in cognitive sciences, 3(4):128–135, 1999

Robert M French. Catastrophic forgetting in connectionist networks.Trends in cognitive sciences, 3(4):128–135, 1999

work page 1999

-

[41]

Catastrophic forgetting, rehearsal and pseudorehearsal.Connection Science, 7 (2):123–146, 1995

Anthony Robins. Catastrophic forgetting, rehearsal and pseudorehearsal.Connection Science, 7 (2):123–146, 1995

work page 1995

-

[42]

Sebastian Thrun. Lifelong learning algorithms. InLearning to learn, pages 181–209. Springer, 1998

work page 1998

-

[43]

Bo Yuan and Danpei Zhao. A survey on continual semantic segmentation: Theory, challenge, method and application.IEEE Transactions on Pattern Analysis and Machine Intelligence, 2024

work page 2024

-

[44]

Mitigating background shift in class-incremental semantic segmentation

Gilhan Park, WonJun Moon, SuBeen Lee, Tae-Young Kim, and Jae-Pil Heo. Mitigating background shift in class-incremental semantic segmentation. InEuropean Conference on Computer Vision, pages 71–88. Springer, 2024. 12

work page 2024

-

[45]

Modeling the background for incremental learning in semantic segmentation

Fabio Cermelli, Massimiliano Mancini, Samuel Rota Bulo, Elisa Ricci, and Barbara Caputo. Modeling the background for incremental learning in semantic segmentation. InProceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 9233–9242, 2020

work page 2020

-

[46]

KIT Scientific Publishing, 2024

Tobias Michael Kalb.Principles of Catastrophic Forgetting for Continual Semantic Segmenta- tion in Automated Driving. KIT Scientific Publishing, 2024

work page 2024

-

[47]

Continual semantic segmentation via structure preserving and projected feature alignment

Zihan Lin, Zilei Wang, and Yixin Zhang. Continual semantic segmentation via structure preserving and projected feature alignment. InEuropean Conference on Computer Vision, pages 345–361. Springer, 2022

work page 2022

-

[48]

Zichen Song, Xiaoliang Zhang, and Zhaofeng Shi. Scale-hybrid group distillation with knowl- edge disentangling for continual semantic segmentation.Sensors, 23(18):7820, 2023

work page 2023

-

[49]

Bacs: Background aware continual semantic segmentation.arXiv preprint arXiv:2404.13148, 2024

Mostafa ElAraby, Ali Harakeh, and Liam Paull. Bacs: Background aware continual semantic segmentation.arXiv preprint arXiv:2404.13148, 2024

-

[50]

Yuxuan Luo, Jinpeng Chen, Runmin Cong, Horace Ho Shing Ip, and Sam Kwong. Trace back and go ahead: Completing partial annotation for continual semantic segmentation.Pattern Recognition, 165:111613, 2025

work page 2025

-

[51]

Exemplar-based open- set panoptic segmentation network

Jaedong Hwang, Seoung Wug Oh, Joon-Young Lee, and Bohyung Han. Exemplar-based open- set panoptic segmentation network. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 1175–1184, 2021

work page 2021

-

[52]

Revis- iting open-set panoptic segmentation

Yufei Yin, Hao Chen, Wengang Zhou, Jiajun Deng, Haiming Xu, and Houqiang Li. Revis- iting open-set panoptic segmentation. InProceedings of the AAAI Conference on Artificial Intelligence, volume 38, pages 6747–6754, 2024

work page 2024

-

[53]

Hexin Dong, Zifan Chen, Mingze Yuan, Yutong Xie, Jie Zhao, Fei Yu, Bin Dong, and Li Zhang. Region-aware metric learning for open world semantic segmentation via meta-channel aggrega- tion.arXiv preprint arXiv:2205.08083, 2022

-

[54]

Dual decision improves open-set panoptic segmentation.arXiv preprint arXiv:2207.02504, 2022

Hai-Ming Xu, Hao Chen, Lingqiao Liu, and Yufei Yin. Dual decision improves open-set panoptic segmentation.arXiv preprint arXiv:2207.02504, 2022

-

[55]

Alexander Kirillov, Kaiming He, Ross Girshick, Carsten Rother, and Piotr Dollár. Panoptic segmentation. InProceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 9404–9413, 2019

work page 2019

-

[56]

Huangjie Zheng, Xu Chen, Jiangchao Yao, Hongxia Yang, Chunyuan Li, Ya Zhang, Hao Zhang, Ivor Tsang, Jingren Zhou, and Mingyuan Zhou. Contrastive attraction and contrastive repulsion for representation learning.arXiv preprint arXiv:2105.03746, 2021

-

[57]

Jan Niklas Böhm, Philipp Berens, and Dmitry Kobak. Attraction-repulsion spectrum in neighbor embeddings.Journal of Machine Learning Research, 23(95):1–32, 2022

work page 2022

-

[58]

Stella X. Yu and Jianbo Shi. Segmentation with pairwise attraction and repulsion. InProceedings Eighth IEEE International Conference on Computer Vision. ICCV 2001, volume 1, pages 52–58. IEEE, 2001

work page 2001

-

[59]

Scene parsing through ade20k dataset

Bolei Zhou, Hang Zhao, Xavier Puig, Sanja Fidler, Adela Barriuso, and Antonio Torralba. Scene parsing through ade20k dataset. InProceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2017

work page 2017

-

[60]

Masked-attention mask transformer for universal image segmentation

Bowen Cheng, Ishan Misra, Alexander G Schwing, Alexander Kirillov, and Rohit Girdhar. Masked-attention mask transformer for universal image segmentation. InProceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 1290–1299, 2022

work page 2022

-

[61]

Deep residual learning for image recognition

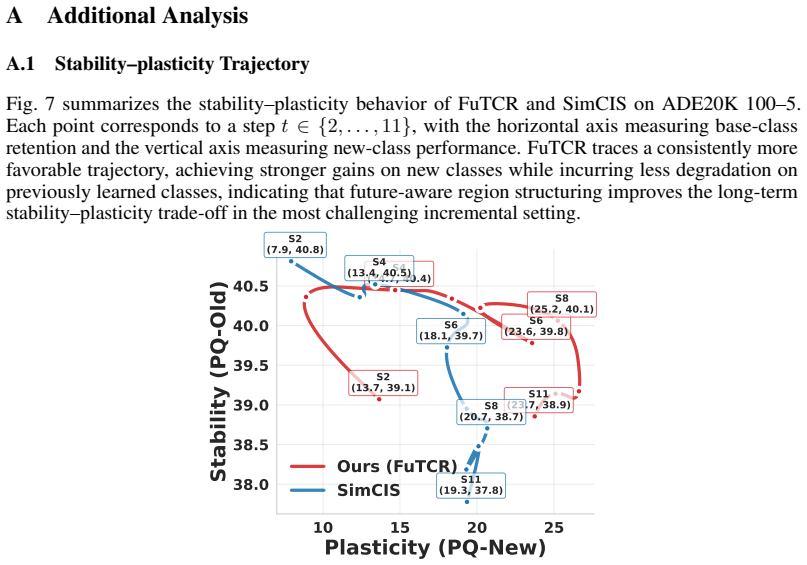

Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. Deep residual learning for image recognition. InProceedings of the IEEE conference on computer vision and pattern recognition, pages 770–778, 2016. 13 A Additional Analysis A.1 Stability–plasticity Trajectory Fig. 7 summarizes the stability–plasticity behavior of FuTCR and SimCIS on ADE20K 100–5. Each...

work page 2016

-

[62]

The two panels depict diverse scenes where FuTCR recovers more accurate panoptic masks, particularly on newly introduced classes. and a balance term: Laux = 1 |Rfut| X r CE(gr, ℓr) +λ bal KL ¯p∥u ,(5) where ¯p is the mean predicted distribution over clusters and u is the uniform distribution. This head is intended to encourage diverse usage of latent slot...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.