Recognition: 2 theorem links

· Lean TheoremPion: A Spectrum-Preserving Optimizer via Orthogonal Equivalence Transformation

Pith reviewed 2026-05-13 04:48 UTC · model grok-4.3

The pith

Pion updates each weight matrix through left and right orthogonal transformations, preserving its singular values throughout training.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Pion is a spectrum-preserving optimizer for LLM training based on orthogonal equivalence transformation. Unlike additive optimizers such as Adam and Muon, Pion updates each weight matrix through left and right orthogonal transformations, preserving its singular values throughout training. This yields an optimization mechanism that modulates the geometry of weight matrices while keeping their spectral norm fixed. The authors derive the Pion update rule, systematically examine its design choices, and analyze its convergence behavior along with several key properties. Empirical results show that Pion offers a stable and competitive alternative to standard optimizers for both LLM pretraining and

What carries the argument

The orthogonal equivalence transformation: a weight matrix W is replaced by U W V^T where U and V are orthogonal matrices chosen to reduce the loss; this operation leaves the singular values of W exactly the same.

If this is right

- The spectral norm of every weight matrix remains constant for the entire run, removing one source of training instability.

- Geometry of the weight matrices can still evolve because only their orientation and aspect ratios are allowed to change.

- The same update rule applies without modification to both pretraining and finetuning stages.

- Design parameters inside the orthogonal step (step size, choice of how to compute U and V) can be varied independently of the spectrum constraint.

Where Pith is reading between the lines

- The fixed-spectrum property may reduce the need for separate weight-decay or norm-clipping heuristics that are otherwise used to control scale.

- Because the update never changes the rank or the set of singular values, the method could be useful in settings where one wants to preserve the initial conditioning of the network.

- Extending the same left-right orthogonal step to attention or MLP blocks in other architectures might yield analogous spectrum control without extra regularization terms.

Load-bearing premise

That updates performed solely through orthogonal equivalence transformations can steer the loss downward to competitive minima without requiring additive changes to the weights.

What would settle it

Train a standard transformer with Pion on a common pretraining corpus and measure whether final perplexity is materially worse than the same run with Adam or whether the loss fails to decrease after the first few epochs.

Figures

read the original abstract

We introduce Pion, a spectrum-preserving optimizer for large language model (LLM) training based on orthogonal equivalence transformation. Unlike additive optimizers such as Adam and Muon, Pion updates each weight matrix through left and right orthogonal transformations, preserving its singular values throughout training. This yields an optimization mechanism that modulates the geometry of weight matrices while keeping their spectral norm fixed. We derive the Pion update rule, systematically examine its design choices, and analyze its convergence behavior along with several key properties. Empirical results show that Pion offers a stable and competitive alternative to standard optimizers for both LLM pretraining and finetuning.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

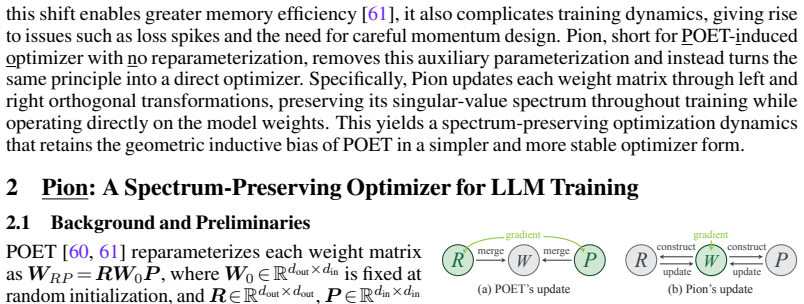

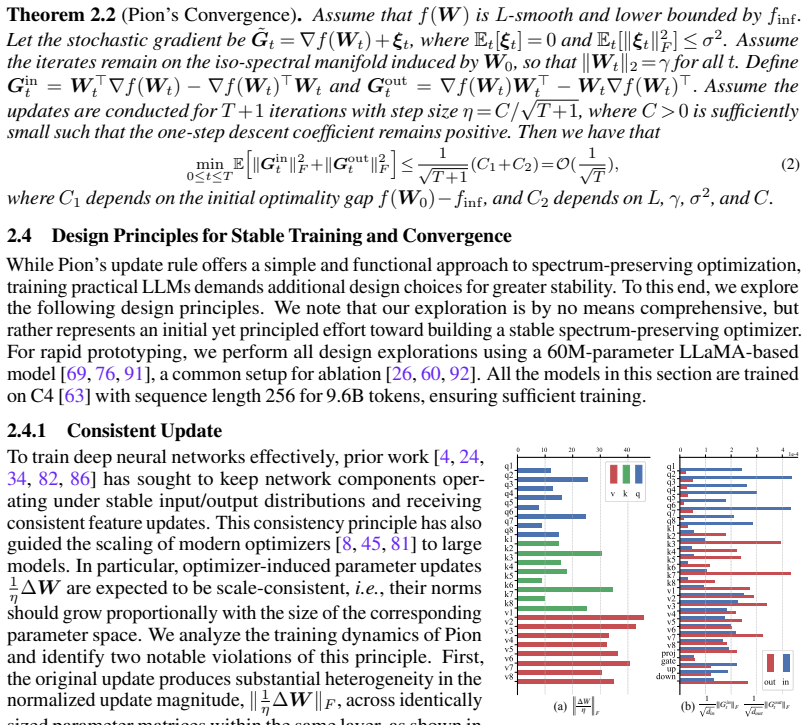

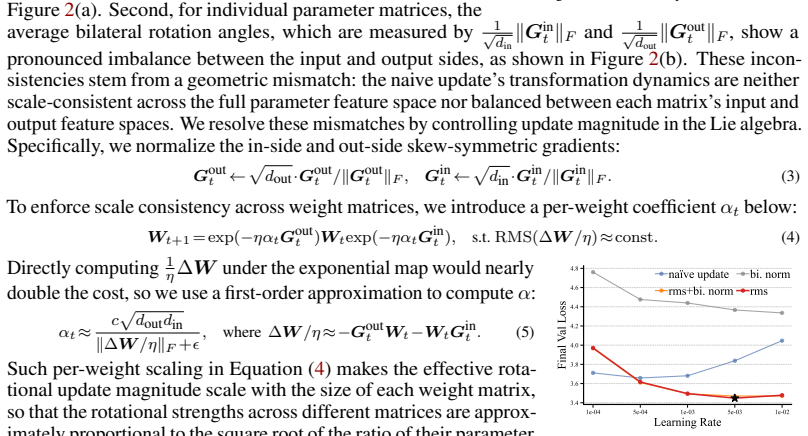

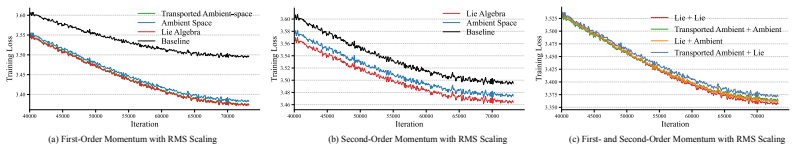

Summary. The manuscript introduces Pion, a spectrum-preserving optimizer for LLM training. It updates each weight matrix W via left and right orthogonal transformations (W ← Q_l W Q_r) that leave the singular values unchanged, derives the corresponding update rule, examines design choices, analyzes convergence properties, and reports empirical competitiveness with Adam and Muon on both pretraining and finetuning tasks.

Significance. If the convergence analysis and empirical claims hold, the approach offers a distinct optimization paradigm that decouples geometric modulation from spectral scaling. This could improve training stability for large models by enforcing fixed spectral norms, and the explicit derivation plus convergence study would constitute a useful theoretical contribution in the space of manifold-constrained optimizers.

major comments (3)

- [Convergence analysis section] Convergence analysis section: the claimed convergence behavior rests on the assumption that every useful gradient direction can be realized by an orthogonal-equivalence update without loss of progress. The analysis must explicitly address whether the tangent-space projection onto the fixed-singular-value manifold can still guarantee descent when the loss landscape requires rescaling of singular values (as occurs routinely with Adam/Muon). Without a supporting lemma or counter-example discussion, the competitiveness claim is not yet load-bearing.

- [Update rule derivation] Update rule derivation: the paper states that the Pion rule is derived from standard orthogonal transformations, yet it is unclear how the left and right orthogonal matrices Q_l and Q_r are chosen from the gradient (e.g., via polar decomposition, SVD-based projection, or another mechanism). If this choice is not parameter-free and requires additional hyperparameters, the “spectrum-preserving” advantage over additive methods is reduced.

- [Empirical results] Empirical section: the competitiveness claim for LLM pretraining and finetuning is central, but the reported results must include ablation on model scale, sequence length, and whether singular-value histograms remain exactly constant across training steps. If any run shows drift in the singular-value spectrum, the core invariance is violated and the comparison to Adam/Muon is undermined.

minor comments (2)

- [Introduction] Notation for the orthogonal factors Q_l and Q_r should be introduced with an explicit equation immediately after the first mention of the update.

- [Abstract] The abstract claims “several key properties” are analyzed; these should be enumerated in a dedicated subsection or table for clarity.

Simulated Author's Rebuttal

We thank the referee for the constructive and insightful comments on our manuscript. We address each major point below and will revise the paper to incorporate clarifications and additional material where needed.

read point-by-point responses

-

Referee: Convergence analysis section: the claimed convergence behavior rests on the assumption that every useful gradient direction can be realized by an orthogonal-equivalence update without loss of progress. The analysis must explicitly address whether the tangent-space projection onto the fixed-singular-value manifold can still guarantee descent when the loss landscape requires rescaling of singular values (as occurs routinely with Adam/Muon). Without a supporting lemma or counter-example discussion, the competitiveness claim is not yet load-bearing.

Authors: We agree that the convergence section would benefit from greater explicitness on this point. In the revision we will add a lemma establishing that the tangent-space projection of the orthogonal-equivalence update still guarantees sufficient descent for gradient directions that do not require singular-value rescaling, together with a short discussion of the complementary role of spectral rescaling in other optimizers. This directly addresses the concern while preserving the manuscript's core claims. revision: yes

-

Referee: Update rule derivation: the paper states that the Pion rule is derived from standard orthogonal transformations, yet it is unclear how the left and right orthogonal matrices Q_l and Q_r are chosen from the gradient (e.g., via polar decomposition, SVD-based projection, or another mechanism). If this choice is not parameter-free and requires additional hyperparameters, the “spectrum-preserving” advantage over additive methods is reduced.

Authors: The choice of Q_l and Q_r is obtained via polar decomposition of the projected gradient components, which is a deterministic, parameter-free operation. We will expand Section 3 with an explicit algorithmic description and pseudocode to remove any ambiguity and confirm that no additional hyperparameters are introduced. revision: yes

-

Referee: Empirical section: the competitiveness claim for LLM pretraining and finetuning is central, but the reported results must include ablation on model scale, sequence length, and whether singular-value histograms remain exactly constant across training steps. If any run shows drift in the singular-value spectrum, the core invariance is violated and the comparison to Adam/Muon is undermined.

Authors: We will augment the empirical section with ablations across additional model scales and sequence lengths. We will also add singular-value histogram plots at multiple training checkpoints to document that the spectrum remains exactly constant, as required by the orthogonal equivalence construction; our existing runs already exhibit this invariance with no drift. revision: yes

Circularity Check

No circularity: derivation rests on standard SVD properties

full rationale

The paper defines Pion via left/right orthogonal multiplications on weight matrices and states that this preserves singular values. This follows immediately from the SVD definition (W = U Σ V^T yields Q_l W Q_r = (Q_l U) Σ (V^T Q_r) with the new factors orthogonal), which is an external linear-algebra fact rather than a self-referential construction or fitted input renamed as prediction. No equations in the abstract or description reduce the update rule, convergence claim, or empirical performance to the paper's own outputs or self-citations; the design choices and analysis are presented as independent examinations of the resulting optimizer.

Axiom & Free-Parameter Ledger

Lean theorems connected to this paper

-

IndisputableMonolith/Cost/FunctionalEquation.leanwashburn_uniqueness_aczel unclearPion updates each weight matrix through left and right orthogonal transformations, preserving its singular values throughout training... Wt+1 = exp(−η G_out_t) W_t exp(−η G_in_t)

-

IndisputableMonolith/Foundation/AlexanderDuality.leanalexander_duality_circle_linking unclearPion’s update preserves the singular values of Wt and only changes its row and column subspaces through orthogonal transformations

Reference graph

Works this paper leans on

-

[1]

gpt-oss-120b & gpt-oss-20b Model Card

Sandhini Agarwal, Lama Ahmad, Jason Ai, Sam Altman, Andy Applebaum, Edwin Arbus, Rahul K Arora, Yu Bai, Bowen Baker, Haiming Bao, et al. gpt-oss-120b & gpt-oss-20b model card.arXiv preprint arXiv:2508.10925, 2025. 7

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[2]

The Polar Express: Optimal Matrix Sign Methods and Their Application to the Muon Algorithm

Noah Amsel, David Persson, Christopher Musco, and Robert M Gower. The polar express: Optimal matrix sign methods and their application to the muon algorithm.arXiv preprint arXiv:2505.16932, 2025. 9

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[3]

Chenxin An, Zhihui Xie, Xiaonan Li, Lei Li, Jun Zhang, Shansan Gong, Ming Zhong, Jingjing Xu, Xipeng Qiu, Mingxuan Wang, and Lingpeng Kong. Polaris: A post-training recipe for scaling reinforcement learning on advanced reasoning models, 2025. 9, 23

work page 2025

-

[4]

Jimmy Lei Ba, Jamie Ryan Kiros, and Geoffrey E Hinton. Layer normalization.arXiv preprint arXiv:1607.06450, 2016. 3, 8, 9

work page internal anchor Pith review Pith/arXiv arXiv 2016

-

[5]

Spectrally-normalized margin bounds for neural networks

Peter L Bartlett, Dylan J Foster, and Matus J Telgarsky. Spectrally-normalized margin bounds for neural networks. InNIPS, 2017. 1

work page 2017

-

[6]

Gary Bécigneul and Octavian-Eugen Ganea. Riemannian adaptive optimization methods.arXiv preprint arXiv:1810.00760, 2018. 4

-

[7]

Modular duality in deep learning.arXiv preprint arXiv:2410.21265, 2024

Jeremy Bernstein and Laker Newhouse. Modular duality in deep learning.arXiv preprint arXiv:2410.21265, 2024. 9

-

[8]

Old optimizer, new norm: An anthology

Jeremy Bernstein and Laker Newhouse. Old optimizer, new norm: An anthology.arXiv preprint arXiv:2409.20325, 2024. 3, 9

-

[9]

Lora learns less and forgets less.arXiv preprint arXiv:2405.09673, 2024

Dan Biderman, Jacob Portes, Jose Javier Gonzalez Ortiz, Mansheej Paul, Philip Greengard, Connor Jennings, Daniel King, Sam Havens, Vitaliy Chiley, Jonathan Frankle, et al. Lora learns less and forgets less.arXiv preprint arXiv:2405.09673, 2024. 8

-

[10]

Piqa: Reasoning about physical commonsense in natural language

Yonatan Bisk, Rowan Zellers, Ronan Le Bras, Jianfeng Gao, and Yejin Choi. Piqa: Reasoning about physical commonsense in natural language. InAAAI, 2020. 9

work page 2020

-

[11]

Optimization methods for large-scale machine learning.SIAM review, 60(2):223–311, 2018

Léon Bottou, Frank E Curtis, and Jorge Nocedal. Optimization methods for large-scale machine learning.SIAM review, 60(2):223–311, 2018. 22

work page 2018

-

[12]

Cambridge University Press, 2023

Nicolas Boumal.An introduction to optimization on smooth manifolds. Cambridge University Press, 2023. 2

work page 2023

-

[13]

Stochastic spectral descent for restricted boltzmann machines

David Carlson, V olkan Cevher, and Lawrence Carin. Stochastic spectral descent for restricted boltzmann machines. InAISTATS, 2015. 9

work page 2015

-

[14]

David Carlson, Ya-Ping Hsieh, Edo Collins, Lawrence Carin, and V olkan Cevher. Stochastic spectral descent for discrete graphical models.IEEE Journal of Selected Topics in Signal Processing, 10(2):296–311, 2016. 9

work page 2016

-

[15]

Muon optimizes under spectral norm constraints

Lizhang Chen, Jonathan Li, and Qiang Liu. Muon optimizes under spectral norm constraints. arXiv preprint arXiv:2506.15054, 2025. 9

-

[16]

Evaluating Large Language Models Trained on Code

Mark Chen, Jerry Tworek, Heewoo Jun, Qiming Yuan, Henrique Ponde De Oliveira Pinto, Jared Kaplan, Harri Edwards, Yuri Burda, Nicholas Joseph, Greg Brockman, et al. Evaluating large language models trained on code.arXiv preprint arXiv:2107.03374, 2021. 8, 23

work page internal anchor Pith review Pith/arXiv arXiv 2021

-

[17]

Think you have Solved Question Answering? Try ARC, the AI2 Reasoning Challenge

Peter Clark, Isaac Cowhey, Oren Etzioni, Tushar Khot, Ashish Sabharwal, Carissa Schoenick, and Oyvind Tafjord. Think you have solved question answering? try arc, the ai2 reasoning challenge. arXiv preprint arXiv:1803.05457, 2018. 9

work page internal anchor Pith review Pith/arXiv arXiv 2018

-

[18]

Training Verifiers to Solve Math Word Problems

Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, et al. Training verifiers to solve math word problems.arXiv preprint arXiv:2110.14168, 2021. 8, 23

work page internal anchor Pith review Pith/arXiv arXiv 2021

-

[19]

Deepseek-v4: Towards highly efficient million-token context intelligence, 2026

DeepSeek-AI. Deepseek-v4: Towards highly efficient million-token context intelligence, 2026. 7 10

work page 2026

-

[20]

Scaling vision transformers to 22 billion parameters

Mostafa Dehghani, Josip Djolonga, Basil Mustafa, Piotr Padlewski, Jonathan Heek, Justin Gilmer, Andreas Peter Steiner, Mathilde Caron, Robert Geirhos, Ibrahim Alabdulmohsin, et al. Scaling vision transformers to 22 billion parameters. InICML, 2023. 7

work page 2023

-

[21]

Cogview: Mastering text-to-image generation via transformers

Ming Ding, Zhuoyi Yang, Wenyi Hong, Wendi Zheng, Chang Zhou, Da Yin, Junyang Lin, Xu Zou, Zhou Shao, Hongxia Yang, et al. Cogview: Mastering text-to-image generation via transformers. InNeurIPS, 2021. 1

work page 2021

-

[22]

John Duchi, Elad Hazan, and Yoram Singer. Adaptive subgradient methods for online learning and stochastic optimization.Journal of machine learning research, 12(7), 2011. 4

work page 2011

-

[23]

The language model evaluation harness, 07 2024

Leo Gao, Jonathan Tow, Baber Abbasi, Stella Biderman, Sid Black, Anthony DiPofi, Charles Foster, Laurence Golding, Jeffrey Hsu, Alain Le Noac’h, Haonan Li, Kyle McDonell, Niklas Muennighoff, Chris Ociepa, Jason Phang, Laria Reynolds, Hailey Schoelkopf, Aviya Skowron, Lintang Sutawika, Eric Tang, Anish Thite, Ben Wang, Kevin Wang, and Andy Zou. The languag...

work page 2024

-

[24]

Understanding the difficulty of training deep feedforward neural networks

Xavier Glorot and Yoshua Bengio. Understanding the difficulty of training deep feedforward neural networks. InAISTATS, 2010. 3

work page 2010

-

[25]

Aaron Grattafiori, Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Alex Vaughan, et al. The llama 3 herd of models.arXiv preprint arXiv:2407.21783, 2024. 8, 22

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[26]

Mano: Restriking manifold optimization for llm training.arXiv preprint arXiv:2601.23000, 2026

Yufei Gu and Zeke Xie. Mano: Restriking manifold optimization for llm training.arXiv preprint arXiv:2601.23000, 2026. 3, 7

-

[27]

DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning

Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Peiyi Wang, Qihao Zhu, Runxin Xu, Ruoyu Zhang, Shirong Ma, Xiao Bi, et al. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning.arXiv preprint arXiv:2501.12948, 2025. 9, 23

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[28]

Shampoo: Preconditioned stochastic tensor optimization

Vineet Gupta, Tomer Koren, and Yoram Singer. Shampoo: Preconditioned stochastic tensor optimization. InICML, 2018. 9

work page 2018

-

[29]

Chaoqun He, Renjie Luo, Yuzhuo Bai, Shengding Hu, Zhen Thai, Junhao Shen, Jinyi Hu, Xu Han, Yujie Huang, Yuxiang Zhang, et al. Olympiadbench: A challenging benchmark for promoting agi with olympiad-level bilingual multimodal scientific problems. InProceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Paper...

work page 2024

-

[30]

Zhiwei He, Tian Liang, Jiahao Xu, Qiuzhi Liu, Xingyu Chen, Yue Wang, Linfeng Song, Dian Yu, Zhenwen Liang, Wenxuan Wang, et al. Deepmath-103k: A large-scale, challenging, de- contaminated, and verifiable mathematical dataset for advancing reasoning.arXiv preprint arXiv:2504.11456, 2025. 9, 23

-

[31]

Orthogonal recurrent neural networks with scaled cayley transform

Kyle Helfrich, Devin Willmott, and Qiang Ye. Orthogonal recurrent neural networks with scaled cayley transform. InICML, 2018. 5

work page 2018

-

[32]

Query-key normalization for transformers

Alex Henry, Prudhvi Raj Dachapally, Shubham Shantaram Pawar, and Yuxuan Chen. Query-key normalization for transformers. InFindings of EMNLP, 2020. 1, 9

work page 2020

-

[33]

Training Compute-Optimal Large Language Models

Jordan Hoffmann, Sebastian Borgeaud, Arthur Mensch, Elena Buchatskaya, Trevor Cai, Eliza Rutherford, DDL Casas, Lisa Anne Hendricks, Johannes Welbl, Aidan Clark, et al. Training compute-optimal large language models.arXiv preprint arXiv:2203.15556, 10, 2022. 7

work page internal anchor Pith review Pith/arXiv arXiv 2022

-

[34]

Batch normalization: Accelerating deep network training by reducing internal covariate shift

Sergey Ioffe and Christian Szegedy. Batch normalization: Accelerating deep network training by reducing internal covariate shift. InICML, 2015. 3

work page 2015

-

[35]

On computation and generalization of generative adversarial networks under spectrum control

Haoming Jiang, Zhehui Chen, Minshuo Chen, Feng Liu, Dingding Wang, and Tuo Zhao. On computation and generalization of generative adversarial networks under spectrum control. In ICLR, 2019. 1

work page 2019

-

[36]

Muon: An optimizer for hidden layers in neural networks, 2024

Keller Jordan, Yuchen Jin, Vlado Boza, Jiacheng You, Franz Cesista, Laker Newhouse, and Jeremy Bernstein. Muon: An optimizer for hidden layers in neural networks, 2024. 1, 7, 9 11

work page 2024

-

[37]

Adam: A Method for Stochastic Optimization

Diederik P Kingma and Jimmy Ba. Adam: A method for stochastic optimization.arXiv preprint arXiv:1412.6980, 2014. 1, 4, 9

work page internal anchor Pith review Pith/arXiv arXiv 2014

-

[38]

Efficient memory management for large language model serving with pagedattention

Woosuk Kwon, Zhuohan Li, Siyuan Zhuang, Ying Sheng, Lianmin Zheng, Cody Hao Yu, Joseph Gonzalez, Hao Zhang, and Ion Stoica. Efficient memory management for large language model serving with pagedattention. InProceedings of the 29th symposium on operating systems principles, pages 611–626, 2023. 23

work page 2023

-

[39]

Aitor Lewkowycz, Anders Andreassen, David Dohan, Ethan Dyer, Henryk Michalewski, Vinay Ramasesh, Ambrose Slone, Cem Anil, Imanol Schlag, Theo Gutman-Solo, et al. Solving quanti- tative reasoning problems with language models.Advances in neural information processing systems, 35:3843–3857, 2022. 9, 23

work page 2022

-

[40]

Mario Lezcano-Casado and David Martınez-Rubio. Cheap orthogonal constraints in neural networks: A simple parametrization of the orthogonal and unitary group. InICML, 2019. 2, 9

work page 2019

-

[41]

Jun Li, Li Fuxin, and Sinisa Todorovic. Efficient riemannian optimization on the stiefel manifold via the cayley transform.arXiv preprint arXiv:2002.01113, 2020. 5

-

[42]

Normuon: Making muon more efficient and scalable.arXiv preprint arXiv:2510.05491, 2025

Zichong Li, Liming Liu, Chen Liang, Weizhu Chen, and Tuo Zhao. Normuon: Making muon more efficient and scalable.arXiv preprint arXiv:2510.05491, 2025. 1, 9

-

[43]

Rongmei Lin, Weiyang Liu, Zhen Liu, Chen Feng, Zhiding Yu, James M. Rehg, Li Xiong, and Le Song. Regularizing neural networks via minimizing hyperspherical energy. InCVPR, 2020. 1

work page 2020

-

[44]

Aixin Liu, Bei Feng, Bing Xue, Bingxuan Wang, Bochao Wu, Chengda Lu, Chenggang Zhao, Chengqi Deng, Chenyu Zhang, Chong Ruan, et al. Deepseek-v3 technical report.arXiv preprint arXiv:2412.19437, 2024. 7

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[45]

Muon is Scalable for LLM Training

Jingyuan Liu, Jianlin Su, Xingcheng Yao, Zhejun Jiang, Guokun Lai, Yulun Du, Yidao Qin, Weixin Xu, Enzhe Lu, Junjie Yan, et al. Muon is scalable for llm training.arXiv preprint arXiv:2502.16982, 2025. 3, 6, 7, 9

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[46]

Learning towards minimum hyperspherical energy

Weiyang Liu, Rongmei Lin, Zhen Liu, Lixin Liu, Zhiding Yu, Bo Dai, and Le Song. Learning towards minimum hyperspherical energy. InNeurIPS, 2018. 1, 9

work page 2018

-

[47]

Rehg, Liam Paull, Li Xiong, Le Song, and Adrian Weller

Weiyang Liu, Rongmei Lin, Zhen Liu, James M. Rehg, Liam Paull, Li Xiong, Le Song, and Adrian Weller. Orthogonal over-parameterized training. InCVPR, 2021. 1, 5

work page 2021

-

[48]

Learning with hyperspherical uniformity

Weiyang Liu, Rongmei Lin, Zhen Liu, Li Xiong, Bernhard Schölkopf, and Adrian Weller. Learning with hyperspherical uniformity. InAISTATS, 2021. 1, 9

work page 2021

-

[49]

Decoupled weight decay regularization

Ilya Loshchilov and Frank Hutter. Decoupled weight decay regularization. InICLR, 2019. 1, 7

work page 2019

-

[50]

American mathematics contest 12 (amc 12), November 2023

MAA. American mathematics contest 12 (amc 12), November 2023. 9, 23

work page 2023

-

[51]

American invitational mathematics examination (aime), February 2024

MAA. American invitational mathematics examination (aime), February 2024. 9, 23

work page 2024

-

[52]

American invitational mathematics examination (aime), February 2025

MAA. American invitational mathematics examination (aime), February 2025. 9, 23

work page 2025

-

[53]

Martial Mermillod, Aurélia Bugaiska, and Patrick Bonin. The stability-plasticity dilemma: Investigating the continuum from catastrophic forgetting to age-limited learning effects.Frontiers in psychology, 4:504, 2013. 8

work page 2013

-

[54]

Efficient orthogonal parametrisation of recurrent neural networks using householder reflections

Zakaria Mhammedi, Andrew Hellicar, Ashfaqur Rahman, and James Bailey. Efficient orthogonal parametrisation of recurrent neural networks using householder reflections. InICML, 2017. 2, 9

work page 2017

-

[55]

arXiv preprint arXiv:1802.05957

Takeru Miyato, Toshiki Kataoka, Masanori Koyama, and Yuichi Yoshida. Spectral normalization for generative adversarial networks.arXiv preprint arXiv:1802.05957, 2018. 1

-

[56]

When does sparsity mitigate the curse of depth in llms.arXiv preprint arXiv:2603.15389, 2026

Dilxat Muhtar, Xinyuan Song, Sebastian Pokutta, Max Zimmer, Nico Pelleriti, Thomas Hof- mann, and Shiwei Liu. When does sparsity mitigate the curse of depth in llms.arXiv preprint arXiv:2603.15389, 2026. 8 12

-

[57]

A method for solving the convex programming problem with convergence rate o (1/k2)

Yurii Nesterov. A method for solving the convex programming problem with convergence rate o (1/k2). InDokl akad nauk Sssr, volume 269, page 543, 1983. 4

work page 1983

-

[58]

Thomas Pethick, Wanyun Xie, Kimon Antonakopoulos, Zhenyu Zhu, Antonio Silveti-Falls, and V olkan Cevher. Training deep learning models with norm-constrained lmos.arXiv preprint arXiv:2502.07529, 2025. 9

-

[59]

Boris T Polyak. Some methods of speeding up the convergence of iteration methods.Ussr computational mathematics and mathematical physics, 4(5):1–17, 1964. 4

work page 1964

-

[60]

Reparameterized llm training via orthogonal equivalence transformation

Zeju Qiu, Simon Buchholz, Tim Z Xiao, Maximilian Dax, Bernhard Schölkopf, and Weiyang Liu. Reparameterized llm training via orthogonal equivalence transformation. InNeurIPS, 2025. 1, 2, 3, 5, 7, 9

work page 2025

-

[61]

Poet-x: Memory-efficient llm training by scaling orthogonal transformation

Zeju Qiu, Lixin Liu, Adrian Weller, Han Shi, and Weiyang Liu. Poet-x: Memory-efficient llm training by scaling orthogonal transformation. InICML, 2026. 1, 2, 9

work page 2026

-

[62]

Controlling text-to-image diffusion by orthogonal finetuning

Zeju Qiu, Weiyang Liu, Haiwen Feng, Yuxuan Xue, Yao Feng, Zhen Liu, Dan Zhang, Adrian Weller, and Bernhard Schölkopf. Controlling text-to-image diffusion by orthogonal finetuning. InNeurIPS, 2023. 5

work page 2023

-

[63]

Colin Raffel, Noam Shazeer, Adam Roberts, Katherine Lee, Sharan Narang, Michael Matena, Yanqi Zhou, Wei Li, and Peter J Liu. Exploring the limits of transfer learning with a unified text-to-text transformer.Journal of machine learning research, 21(140):1–67, 2020. 3, 7

work page 2020

-

[64]

On the convergence of adam and beyond.arXiv preprint arXiv:1904.09237, 2019

Sashank J Reddi, Satyen Kale, and Sanjiv Kumar. On the convergence of adam and beyond.arXiv preprint arXiv:1904.09237, 2019. 4

-

[65]

A stochastic approximation method.The annals of mathe- matical statistics, pages 400–407, 1951

Herbert Robbins and Sutton Monro. A stochastic approximation method.The annals of mathe- matical statistics, pages 400–407, 1951. 22

work page 1951

-

[66]

Methods of improving llm training stability.arXiv preprint arXiv:2410.16682, 2024

Oleg Rybakov, Mike Chrzanowski, Peter Dykas, Jinze Xue, and Ben Lanir. Methods of improving llm training stability.arXiv preprint arXiv:2410.16682, 2024. 7

-

[67]

Keisuke Sakaguchi, Ronan Le Bras, Chandra Bhagavatula, and Yejin Choi. Winogrande: An adversarial winograd schema challenge at scale.Communications of the ACM, 64(9):99–106,

-

[68]

DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models

Zhihong Shao, Peiyi Wang, Qihao Zhu, Runxin Xu, Junxiao Song, Xiao Bi, Haowei Zhang, Mingchuan Zhang, YK Li, Yang Wu, et al. Deepseekmath: Pushing the limits of mathematical reasoning in open language models.arXiv preprint arXiv:2402.03300, 2024. 9, 23

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[69]

GLU Variants Improve Transformer

Noam Shazeer. Glu variants improve transformer.arXiv preprint arXiv:2002.05202, 2020. 3

work page internal anchor Pith review Pith/arXiv arXiv 2002

-

[70]

HybridFlow: A Flexible and Efficient RLHF Framework

Guangming Sheng, Chi Zhang, Zilingfeng Ye, Xibin Wu, Wang Zhang, Ru Zhang, Yanghua Peng, Haibin Lin, and Chuan Wu. Hybridflow: A flexible and efficient rlhf framework.arXiv preprint arXiv: 2409.19256, 2024. 9, 23

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[71]

Optimization techniques on riemannian manifolds.arXiv preprint arXiv:1407.5965, 2014

Steven Thomas Smith. Optimization techniques on riemannian manifolds.arXiv preprint arXiv:1407.5965, 2014. 4

-

[72]

The curse of depth in large language models.arXiv preprint arXiv:2502.05795, 2025

Wenfang Sun, Xinyuan Song, Pengxiang Li, Lu Yin, Yefeng Zheng, and Shiwei Liu. The curse of depth in large language models.arXiv preprint arXiv:2502.05795, 2025. 8

-

[73]

Gemma 2: Improving Open Language Models at a Practical Size

Gemma Team, Morgane Riviere, Shreya Pathak, Pier Giuseppe Sessa, Cassidy Hardin, Surya Bhupatiraju, Léonard Hussenot, Thomas Mesnard, Bobak Shahriari, Alexandre Ramé, et al. Gemma 2: Improving open language models at a practical size.arXiv preprint arXiv:2408.00118,

work page internal anchor Pith review Pith/arXiv arXiv

-

[74]

Kimi K2: Open Agentic Intelligence

Kimi Team, Yifan Bai, Yiping Bao, Y Charles, Cheng Chen, Guanduo Chen, Haiting Chen, Huarong Chen, Jiahao Chen, Ningxin Chen, et al. Kimi k2: Open agentic intelligence.arXiv preprint arXiv:2507.20534, 2025. 1, 6, 9

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[75]

Qwen2.5: A party of foundation models, 2024

Qwen Team. Qwen2.5: A party of foundation models, 2024. 8, 22 13

work page 2024

-

[76]

Llama 2: Open Foundation and Fine-Tuned Chat Models

Hugo Touvron, Louis Martin, Kevin Stone, Peter Albert, Amjad Almahairi, Yasmine Babaei, Nikolay Bashlykov, Soumya Batra, Prajjwal Bhargava, Shruti Bhosale, et al. Llama 2: Open foundation and fine-tuned chat models.arXiv preprint arXiv:2307.09288, 2023. 3

work page internal anchor Pith review Pith/arXiv arXiv 2023

-

[77]

Mark Tuddenham, Adam Prügel-Bennett, and Jonathan Hare. Orthogonalising gradients to speed up neural network optimisation.arXiv preprint arXiv:2202.07052, 2022. 9

-

[78]

Soap: Improving and stabilizing shampoo using adam.arXiv preprint arXiv:2409.11321, 2024

Nikhil Vyas, Depen Morwani, Rosie Zhao, Mujin Kwun, Itai Shapira, David Brandfonbrener, Lucas Janson, and Sham Kakade. Soap: Improving and stabilizing shampoo using adam.arXiv preprint arXiv:2409.11321, 2024. 9

-

[79]

Magicoder: Empowering code generation with oss-instruct

Yuxiang Wei, Zhe Wang, Jiawei Liu, Yifeng Ding, and Lingming Zhang. Magicoder: Empowering code generation with oss-instruct. InICML, 2024. 8, 22

work page 2024

-

[80]

Small-scale proxies for large-scale transformer training instabilities

Mitchell Wortsman, Peter J Liu, Lechao Xiao, Katie Everett, Alex Alemi, Ben Adlam, John D Co- Reyes, Izzeddin Gur, Abhishek Kumar, Roman Novak, et al. Small-scale proxies for large-scale transformer training instabilities.arXiv preprint arXiv:2309.14322, 2023. 7

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.