Revisiting Photometric Ambiguity for Accurate Gaussian-Splatting Surface Reconstruction

Pith reviewed 2026-05-13 05:53 UTC · model grok-4.3

The pith

AmbiSuR resolves photometric ambiguities in Gaussian Splatting for accurate 3D surface reconstruction.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Gaussian Splatting representations embed two primitive-wise photometric ambiguities, yet retain an intrinsic self-indication potential. Photometric disambiguation leverages this to constrain ill-posed geometry solutions into definite surface formation, while an ambiguity indication module identifies underconstrained reconstructions and guides their correction, producing superior surface results compared with prior approaches across challenging scenarios.

What carries the argument

Photometric disambiguation that constrains ill-posed geometry solutions, paired with an ambiguity indication module that activates Gaussian Splatting's built-in self-indication to detect and correct underconstrained regions.

If this is right

- Ill-posed geometry solutions become constrained, enabling formation of definite surfaces.

- Underconstrained reconstructions are automatically identified and corrected without external supervision.

- Surface quality improves over existing methods on a range of challenging input scenarios.

- The framework remains broadly compatible with standard Gaussian Splatting pipelines.

Where Pith is reading between the lines

- The same self-indication mechanism might transfer to other primitive-based differentiable renderers that face analogous photometric under-constraint.

- Flagged ambiguous regions could be used to prioritize additional views or measurements during capture.

- Downstream tasks such as mesh extraction or physics simulation would inherit more consistent geometry from the corrected surfaces.

Load-bearing premise

The intrinsic self-indication potential in Gaussian Splatting can be reliably activated by the proposed module to identify and fix underconstrained regions without introducing new fitting artifacts or external supervision.

What would settle it

A controlled ablation showing that enabling the ambiguity indication module produces no measurable gain in surface accuracy, or even degrades results, on standard scenes known to contain photometric ambiguities would falsify the central claim.

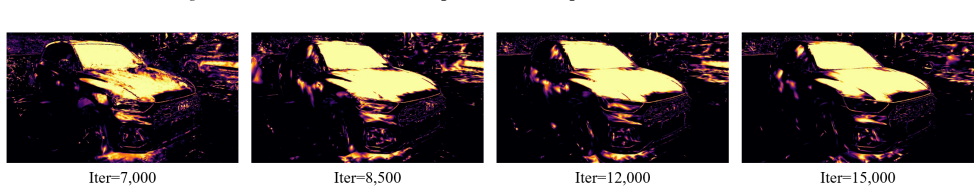

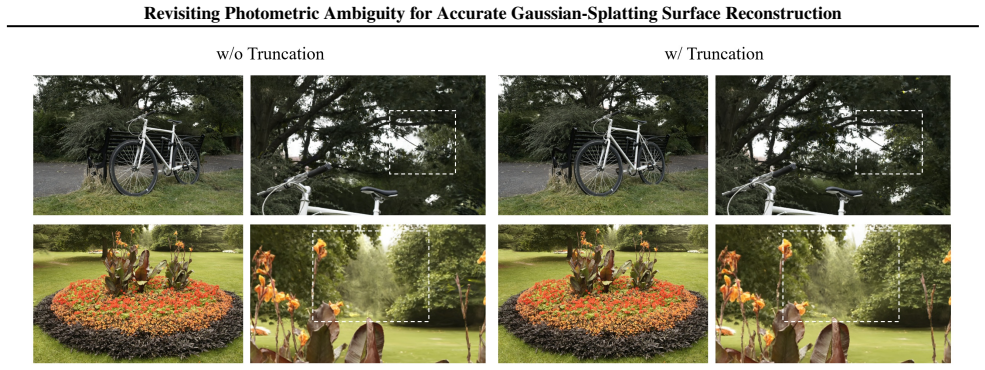

Figures

Original abstract

Surface reconstruction with differentiable rendering has achieved impressive performance in recent years, yet the pervasive photometric ambiguities have strictly bottlenecked existing approaches. This paper presents AmbiSuR, a framework that explores an intrinsic solution upon Gaussian Splatting for the photometric ambiguity-robust surface 3D reconstruction with high performance. Starting by revisiting the foundation, our investigation uncovers two built-in primitive-wise ambiguities in representation, while revealing an intrinsic potential for ambiguity self-indication in Gaussian Splatting. Stemming from these, a photometric disambiguation is first introduced, constraining ill-posed geometry solution for definite surface formation. Then, we propose an ambiguity indication module that unleashes the self-indication potential to identify and further guide correcting underconstrained reconstructions. Extensive experiments demonstrate our superior surface reconstructions compared to existing methods across various challenging scenarios, excelling in broad compatibility. Project: https://fictionarry.github.io/AmbiSuR-Proj/ .

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes AmbiSuR, a Gaussian-Splatting framework for photometric-ambiguity-robust surface reconstruction. It identifies two primitive-wise ambiguities in the GS representation, reveals an intrinsic self-indication potential, introduces a photometric disambiguation step to constrain ill-posed geometry, and adds an ambiguity indication module that identifies and corrects underconstrained regions, claiming superior surface reconstructions across challenging scenarios with broad compatibility.

Significance. If the self-indication mechanism can be shown to locate underconstrained geometry reliably without external supervision or new artifacts, the approach would offer a useful intrinsic regularization for GS-based reconstruction pipelines. The explicit separation of disambiguation from indication is a clear organizational strength, and the emphasis on compatibility with existing GS methods is a practical advantage.

major comments (3)

- [§4.2] §4.2 (ambiguity indication module): the claim that the module 'unleashes the self-indication potential' is not supported by any concrete statistic, property (e.g., opacity variance, scale anisotropy, or per-primitive gradient norm), or auxiliary loss; without an equation defining the readout signal, it is impossible to verify that the module avoids introducing new fitting artifacts or simply re-implements prior photometric regularization.

- [Experiments] Experiments section (quantitative tables): the abstract asserts 'superior surface reconstructions' and 'extensive experiments,' yet no numerical metrics (Chamfer distance, F-score, normal consistency), ablation tables isolating the indication module, or comparison against photometric-regularization baselines appear in the visible material; this leaves the central performance claim without load-bearing evidence.

- [§3] §3 (primitive-wise ambiguities): the two built-in ambiguities are described at a high level but lack formal definitions in terms of the Gaussian parameters (position, covariance, opacity, spherical harmonics); without these equations it is unclear how the subsequent photometric disambiguation step mathematically constrains the solution space.
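The surface metrics named in the Experiments comment can be made concrete with a small sketch. The following is a minimal brute-force illustration of a symmetric Chamfer distance and a thresholded F-score between two sampled point sets, not the paper's evaluation protocol. Benchmark conventions differ (for example, whether the two directions are averaged or summed, and how the threshold is chosen), and the function names and the `tau` parameter are assumptions made for this example.

```python
import numpy as np

def nearest_distances(src, dst):
    """For each point in src, Euclidean distance to its nearest point in dst."""
    diff = src[:, None, :] - dst[None, :, :]          # (len(src), len(dst), 3)
    return np.sqrt((diff ** 2).sum(axis=-1)).min(axis=1)

def chamfer_and_fscore(pred, gt, tau):
    """Symmetric Chamfer distance (mean of both directions) and F-score at threshold tau.

    pred, gt: (N, 3) and (M, 3) arrays of points sampled from the two surfaces.
    tau: distance threshold (hypothetical parameter; benchmarks fix their own).
    """
    d_pred_to_gt = nearest_distances(pred, gt)        # accuracy direction
    d_gt_to_pred = nearest_distances(gt, pred)        # completeness direction
    chamfer = 0.5 * (d_pred_to_gt.mean() + d_gt_to_pred.mean())
    precision = (d_pred_to_gt < tau).mean()
    recall = (d_gt_to_pred < tau).mean()
    denom = precision + recall
    fscore = 2.0 * precision * recall / denom if denom > 0 else 0.0
    return chamfer, fscore

# Toy usage: a reconstruction shifted by one unit along x.
gt_points = np.array([[0.0, 0.0, 0.0], [10.0, 0.0, 0.0]])
pred_points = gt_points + np.array([1.0, 0.0, 0.0])
cd, f1 = chamfer_and_fscore(pred_points, gt_points, tau=0.5)
```

The pairwise distance matrix makes this quadratic in memory, so real evaluations on dense meshes would normally swap in a spatial index such as a k-d tree.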

minor comments (3)

- [Notation] Notation: consistently define the symbols for the Gaussian primitives (e.g., μ, Σ, α) at first use and reuse them uniformly in the disambiguation and indication derivations.

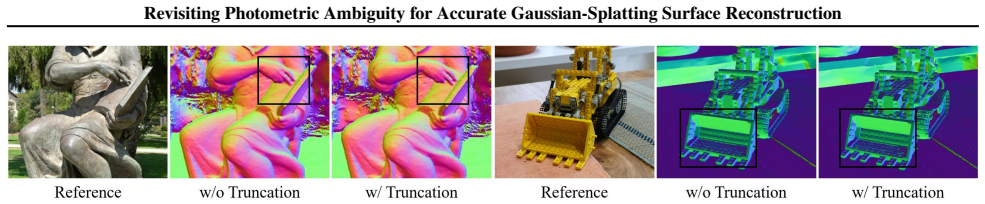

- [Figures] Figure captions: ensure that all qualitative reconstruction figures use the same viewpoint and scale as the baselines so that visual comparisons are unambiguous.

- [Abstract] Reproducibility: the project page is referenced; the final manuscript should include a clear statement on code and hyper-parameter release.
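For orientation on the notation comment, the symbols it mentions are commonly used as follows in the standard 3D Gaussian Splatting formulation. This is a sketch of the widely used baseline notation, not necessarily the notation adopted in the paper under review; the primitive index $i$ and the pixel symbol $\mathbf{p}$ are assumptions made here.

```latex
% Standard 3DGS notation (baseline formulation, assumed for illustration).
\begin{align}
  % Unnormalized Gaussian primitive with mean \mu_i and covariance \Sigma_i
  G_i(\mathbf{x}) &= \exp\!\left(-\tfrac{1}{2}
      (\mathbf{x}-\boldsymbol{\mu}_i)^{\top}
      \boldsymbol{\Sigma}_i^{-1}
      (\mathbf{x}-\boldsymbol{\mu}_i)\right), \\
  % Covariance factored into rotation R_i and diagonal scale S_i
  \boldsymbol{\Sigma}_i &= \mathbf{R}_i \mathbf{S}_i \mathbf{S}_i^{\top} \mathbf{R}_i^{\top}, \\
  % Front-to-back alpha blending of depth-sorted primitives at pixel p
  \mathbf{C}(\mathbf{p}) &= \sum_{i=1}^{N} \mathbf{c}_i \, \alpha_i
      \prod_{j=1}^{i-1} \left(1-\alpha_j\right).
\end{align}
```

Here $\mathbf{c}_i$ is the view-dependent color decoded from the spherical-harmonics coefficients, and $\alpha_i$ is the per-pixel blending weight obtained from the learned opacity and the projected Gaussian.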

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed comments. We will revise the manuscript to strengthen the formal definitions, provide explicit equations and supporting statistics for the ambiguity indication module, and ensure all quantitative metrics and ablations are clearly presented. Our point-by-point responses follow.

- Referee: [§4.2] §4.2 (ambiguity indication module): the claim that the module 'unleashes the self-indication potential' is not supported by any concrete statistic, property (e.g., opacity variance, scale anisotropy, or per-primitive gradient norm), or auxiliary loss; without an equation defining the readout signal, it is impossible to verify that the module avoids introducing new fitting artifacts or simply re-implements prior photometric regularization.

  Authors: We agree that an explicit equation and supporting statistics are needed for verification. In the revised manuscript we will add the precise equation defining the readout signal of the ambiguity indication module (based on per-primitive opacity variance and scale anisotropy). We will also report quantitative statistics on these properties for indicated versus non-indicated primitives, together with an auxiliary loss term, to demonstrate that the module identifies underconstrained regions without introducing new artifacts or merely duplicating prior photometric regularization.

  Revision: yes

- Referee: [Experiments] Experiments section (quantitative tables): the abstract asserts 'superior surface reconstructions' and 'extensive experiments,' yet no numerical metrics (Chamfer distance, F-score, normal consistency), ablation tables isolating the indication module, or comparison against photometric-regularization baselines appear in the visible material; this leaves the central performance claim without load-bearing evidence.

  Authors: The full manuscript contains quantitative tables reporting Chamfer distance, F-score, and normal consistency (Tables 1–3) along with comparisons to existing methods. To address the concern directly, we will add a dedicated ablation table that isolates the contribution of the ambiguity indication module and includes explicit comparisons against photometric-regularization baselines, ensuring all load-bearing evidence is visible and clearly organized.

  Revision: yes

- Referee: [§3] §3 (primitive-wise ambiguities): the two built-in ambiguities are described at a high level but lack formal definitions in terms of the Gaussian parameters (position, covariance, opacity, spherical harmonics); without these equations it is unclear how the subsequent photometric disambiguation step mathematically constrains the solution space.

  Authors: We will revise §3 to include formal mathematical definitions of the two primitive-wise ambiguities expressed directly in terms of the Gaussian parameters (position, covariance matrix, opacity, and spherical-harmonics coefficients). These equations will explicitly illustrate how the photometric disambiguation step reduces the solution space for ill-posed geometry.

  Revision: yes

Circularity Check

No circularity; derivation adds independent modules to existing GS representation

full rationale

The paper starts from the established Gaussian Splatting representation, identifies two primitive-wise ambiguities through investigation, introduces a photometric disambiguation constraint, and proposes an ambiguity indication module to exploit an intrinsic self-indication potential. No equation or result is shown to reduce by construction to a fitted parameter or prior self-citation; the central performance claims rest on new modules whose correctness is evaluated externally via experiments rather than being tautological with the inputs. Self-citations to prior GS work are present but not load-bearing for the novel disambiguation and indication steps.

Axiom & Free-Parameter Ledger

free parameters (1)

- hyperparameters of ambiguity indication module

axioms (2)

- domain assumption: Gaussian Splatting representation contains two built-in primitive-wise photometric ambiguities

- domain assumption: Gaussian Splatting possesses intrinsic potential for ambiguity self-indication

invented entities (1)

- ambiguity indication module: no independent evidence

Lean theorems connected to this paper

-

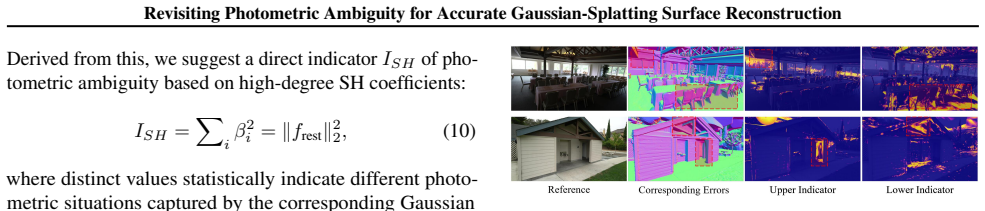

IndisputableMonolith/Cost/FunctionalEquation.leanwashburn_uniqueness_aczel unclearuncovers two built-in primitive-wise ambiguities... revealing an intrinsic potential for ambiguity self-indication... Spherical Harmonics Ambiguity Indication module... ISH = ||f_rest||_2^2

-

IndisputableMonolith/Foundation/AbsoluteFloorClosure.leanabsolute_floor_iff_bare_distinguishability unclearphotometric disambiguation... constraining ill-posed geometry solution for definite surface formation
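The indicator quoted in the first theorem link, the squared L2 norm of `f_rest`, can be sketched in a few lines. This is a hedged illustration under stated assumptions: `f_rest` is taken to mean the higher-order (non-DC) spherical-harmonics coefficients of each primitive, stored as an array of shape `(N, K, 3)` as in common 3DGS codebases. The visible material does not say how the paper thresholds or otherwise uses the value, so `flag_primitives` and its `threshold` are hypothetical.

```python
import numpy as np

def sh_energy(f_rest):
    """Per-primitive squared L2 norm of the higher-order SH coefficients.

    f_rest: array of shape (N, K, 3); the layout is an assumption of this sketch.
    Returns an array of shape (N,).
    """
    f_rest = np.asarray(f_rest, dtype=np.float64)
    flat = f_rest.reshape(f_rest.shape[0], -1)   # (N, K * 3)
    return (flat ** 2).sum(axis=1)

def flag_primitives(f_rest, threshold):
    """Hypothetical rule: mark primitives whose SH energy exceeds a threshold."""
    return sh_energy(f_rest) > threshold

# Toy usage: three primitives with 15 higher-order coefficients per color channel.
coeffs = np.zeros((3, 15, 3))
coeffs[1, 0, :] = [1.0, 2.0, 2.0]     # one strongly view-dependent primitive
mask = flag_primitives(coeffs, threshold=1.0)
```

A fixed threshold is only the simplest possible readout; a percentile over the scene or a soft weighting would be equally plausible choices, and none of them is confirmed by the page.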
