FiTS: Interpretable Spiking Neurons via Frequency Selectivity and Temporal Shaping

Pith reviewed 2026-05-14 02:15 UTC · model grok-4.3

The pith

Spiking neurons improve in simple feedforward networks when each one separately selects a target frequency and then adjusts its timing contribution through group delay.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

FiTS factorizes temporal computation inside each spiking neuron into a Frequency Selectivity module that defines a target frequency as the maximizer of subthreshold magnitude response and a Temporal Shaping module that modulates group delay to reshape when frequency components contribute to membrane voltage accumulation. In simple feedforward spiking networks this factorization produces consistent accuracy gains over plain LIF neurons on auditory benchmarks while remaining competitive with stronger temporal baselines, and the learned parameters directly summarize the frequency and timing structure acquired by the network.

What carries the argument

The FiTS neuron, whose Frequency Selectivity module sets a target frequency that maximizes subthreshold magnitude response and whose Temporal Shaping module applies group-delay modulation to control voltage accumulation timing.
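The two quantities named above, the maximizer of a magnitude response and the group delay, can be computed for any linear filter. The sketch below does so for a generic two-pole digital resonator, which is an assumption chosen for illustration and not the paper's FS/TS parameterization (the FiTS equations are not reproduced on this page). The names `resonator_response`, `target_frequency`, `group_delay`, and the values `f0_hz`, `r`, `fs_hz` are all hypothetical.

```python
import cmath
import math


def resonator_response(f_hz, f0_hz, r, fs_hz):
    """Frequency response of y[n] = x[n] + 2*r*cos(w0)*y[n-1] - r**2*y[n-2]."""
    w = 2.0 * math.pi * f_hz / fs_hz      # evaluation frequency, rad/sample
    w0 = 2.0 * math.pi * f0_hz / fs_hz    # pole angle, rad/sample
    z_inv = cmath.exp(-1j * w)
    return 1.0 / (1.0 - 2.0 * r * math.cos(w0) * z_inv + r * r * z_inv * z_inv)


def target_frequency(f0_hz, r, fs_hz, grid_hz):
    """Grid frequency at which the magnitude response is largest."""
    return max(grid_hz, key=lambda f: abs(resonator_response(f, f0_hz, r, fs_hz)))


def group_delay(f_hz, f0_hz, r, fs_hz, df_hz=0.5):
    """Group delay in samples, -d(phase)/d(omega), by central difference."""
    h_lo = resonator_response(f_hz - df_hz, f0_hz, r, fs_hz)
    h_hi = resonator_response(f_hz + df_hz, f0_hz, r, fs_hz)
    dphase = cmath.phase(h_hi / h_lo)     # phase change across the small step
    domega = 2.0 * math.pi * (2.0 * df_hz) / fs_hz
    return -dphase / domega


# Illustrative values only: 100 Hz pole angle, 1 kHz sampling rate.
grid = [float(f) for f in range(1, 500)]  # 1 Hz steps below Nyquist
peak = target_frequency(100.0, 0.95, 1000.0, grid)
print("target frequency (Hz):", peak)
print("group delay at peak, r=0.95 (samples):", group_delay(peak, 100.0, 0.95, 1000.0))
print("group delay at peak, r=0.80 (samples):", group_delay(peak, 100.0, 0.80, 1000.0))
```

In this stand-in filter the pole radius `r` changes both the sharpness of the peak and the delay, so selectivity and timing are coupled. The factorization described above is aimed at controlling the two separately.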

If this is right

- Simple feedforward SNNs using FiTS neurons outperform standard LIF baselines on tasks where frequency and timing structure matter.

- Learned target frequencies and group-delay values supply neuron-level summaries of the frequency and timing organization inside the network.

- The factorization keeps performance competitive with more complex temporal SNNs that use recurrence or network delays.

- Individual neurons can specialize in distinct frequency bands and timing offsets without requiring post-training analysis.

- The approach works in networks that lack recurrence or explicit delay lines.

Where Pith is reading between the lines

- The same split might reduce reliance on network-level delays when building temporal models for other sensory streams.

- Readable neuron summaries could make it easier to inspect what a trained SNN has extracted in non-auditory domains.

- Mapping the frequency and delay parameters directly to hardware filters might lower the cost of event-driven chips.

Load-bearing premise

Factorizing each neuron's temporal work into a frequency selector and a separate group-delay shaper is enough to produce useful specialization and performance gains without extra network mechanisms.

What would settle it

Running the same auditory benchmarks with either the frequency-selection or the group-delay module removed from FiTS and finding that accuracy falls back to or below the plain LIF level.

Original abstract

Spiking Neural Networks (SNNs) are a promising framework for event-driven temporal processing. Prior work has improved temporal modeling through richer neuron dynamics and network-level mechanisms such as recurrence and delays, but it remains unclear how individual spiking neurons should specialize within a network. In this work, we introduce FiTS, a spiking neuron that factorizes temporal computation within each neuron into Frequency Selectivity (FS) and Temporal Shaping (TS). The FS module parameterizes each neuron's target frequency as the maximizer of its subthreshold magnitude response, while the TS module reshapes when frequency components contribute to membrane voltage accumulation through group-delay modulation. On auditory benchmarks where frequency selectivity and timing are central to the input structure, FiTS consistently improves over a plain Leaky Integrate-and-Fire (LIF) baseline in simple feedforward SNNs without recurrence or network-level delays, while remaining competitive with strong temporal SNN baselines. Beyond accuracy, the learned target frequencies and group-delay shifts provide interpretable neuron-level summaries of the frequency and timing organization learned within the network.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

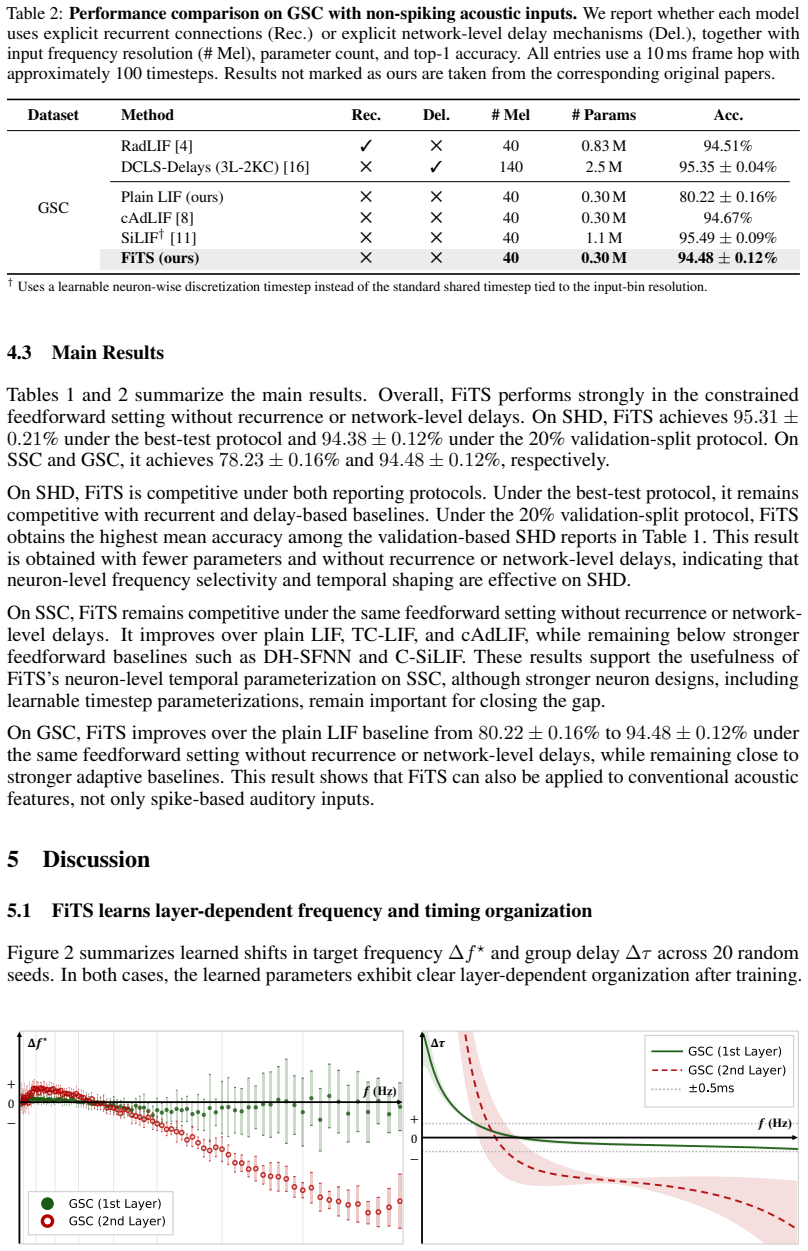

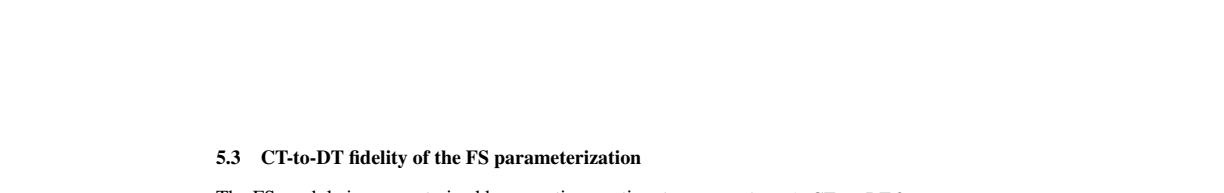

Summary. The manuscript introduces FiTS, a spiking neuron that factorizes temporal computation into a Frequency Selectivity (FS) module—setting each neuron's target frequency as the maximizer of its subthreshold magnitude response—and a Temporal Shaping (TS) module that applies group-delay modulation to reshape membrane voltage accumulation. It claims consistent accuracy gains over plain LIF baselines in simple feedforward SNNs on auditory benchmarks, competitiveness with strong temporal SNN baselines, and that the learned target frequencies and group-delay shifts yield interpretable neuron-level summaries of frequency and timing organization.

Significance. If the reported gains hold and the learned parameters align with actual spiking behavior, the work demonstrates that neuron-level factorization of frequency selectivity and timing can improve temporal processing in feedforward SNNs without recurrence or network delays, while adding interpretability that prior neuron models lack.

major comments (1)

- [FS module definition (abstract and methods)] The central claim that FS sets interpretable target frequencies controlling spiking behavior rests on subthreshold magnitude maximization, but the manuscript provides no analysis of how threshold crossing, reset, and refractory dynamics alter the effective frequency response (see skeptic concern on subthreshold vs. spiking regime). This is load-bearing for both the performance improvement and interpretability claims.

minor comments (1)

- [Abstract] The abstract does not name the specific auditory benchmarks or report quantitative deltas versus baselines; adding these would strengthen the summary.

Simulated Author's Rebuttal

We thank the referee for their constructive review and for identifying a key point regarding the relationship between the subthreshold definition of the FS module and the full spiking dynamics. We address this comment below and will incorporate additional analysis in the revision.

Referee: [FS module definition (abstract and methods)] The central claim that FS sets interpretable target frequencies controlling spiking behavior rests on subthreshold magnitude maximization, but the manuscript provides no analysis of how threshold crossing, reset, and refractory dynamics alter the effective frequency response (see skeptic concern on subthreshold vs. spiking regime). This is load-bearing for both the performance improvement and interpretability claims.

Authors: We agree that the manuscript would benefit from explicit analysis of how the spiking regime (threshold crossing, reset, and refractory dynamics) modifies the effective frequency response relative to the subthreshold magnitude maximization used to define the target frequency. The FS parameterization is intentionally grounded in the linear subthreshold response to yield an interpretable, closed-form target frequency per neuron; the TS module then modulates accumulation timing. While these nonlinearities are present, our empirical results across auditory benchmarks show that the learned parameters produce both accuracy gains over LIF and neuron-level behaviors consistent with the designed selectivity. To directly address the concern, we will add a new analysis subsection that quantifies the effective frequency response by driving each trained FiTS neuron with sinusoidal inputs across a frequency grid and measuring spike-rate tuning curves (or vector strength for phase locking). This will demonstrate the degree to which the subthreshold peak is preserved or shifted under realistic spiking conditions and will be included in the revised manuscript.

Revision: yes
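The measurement proposed in the rebuttal can be sketched as follows. This is a minimal illustration that uses a plain LIF neuron with hard reset as a stand-in, since the trained FiTS neurons and their equations are not available on this page. The functions `simulate_lif`, `vector_strength`, and `tuning_curve`, and every parameter value, are assumptions. Vector strength is computed with the standard definition: the length of the mean resultant of the spike phases at the stimulus frequency.

```python
import cmath
import math


def simulate_lif(freq_hz, amp=1.5, tau=0.02, v_th=1.0, dt=1e-4, t_end=2.0):
    """Euler-integrate tau*dV/dt = -V + amp*sin(2*pi*f*t); return spike times (s)."""
    v = 0.0
    spikes = []
    for k in range(int(round(t_end / dt))):
        t = k * dt
        drive = amp * math.sin(2.0 * math.pi * freq_hz * t)
        v += (dt / tau) * (drive - v)
        if v >= v_th:          # threshold crossing
            spikes.append(t)
            v = 0.0            # hard reset, no refractory period
    return spikes


def vector_strength(spike_times, freq_hz):
    """Phase locking in [0, 1]: 1 means every spike falls at the same phase."""
    if not spike_times:
        return 0.0
    resultant = sum(cmath.exp(2j * math.pi * freq_hz * t) for t in spike_times)
    return abs(resultant) / len(spike_times)


def tuning_curve(freqs_hz, t_end=2.0):
    """Map each stimulus frequency to (spike rate in spikes/s, vector strength)."""
    curve = {}
    for f in freqs_hz:
        spikes = simulate_lif(f, t_end=t_end)
        curve[f] = (len(spikes) / t_end, vector_strength(spikes, f))
    return curve


curve = tuning_curve([2.0, 5.0, 10.0, 20.0, 50.0])
for f, (rate, vs) in curve.items():
    print(f"{f:5.1f} Hz  rate={rate:6.2f} spikes/s  vector strength={vs:.3f}")
```

For a trained FiTS neuron, the frequency at which the spike-rate curve peaks could then be compared with the learned target frequency to see how far threshold and reset shift it.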

Circularity Check

No significant circularity in FiTS neuron model derivation

The paper defines the FiTS neuron by introducing FS (target frequency as maximizer of subthreshold magnitude response) and TS (group-delay modulation) modules as explicit design choices. Parameters are learned from data during training on auditory benchmarks, and performance gains are shown via empirical comparison to LIF and other baselines in feedforward SNNs. No equations reduce a claimed prediction or result to a fitted input by construction, no self-citations are invoked as load-bearing uniqueness theorems, and no ansatz is smuggled via prior work. The interpretability claim follows directly from the learned parameters without tautological redefinition. The derivation chain is self-contained against external benchmarks.

Axiom & Free-Parameter Ledger

free parameters (2)

- target frequency

- group-delay shifts

axioms (1)

- Domain assumption: subthreshold membrane voltage follows dynamics similar to leaky integrate-and-fire neurons.
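For orientation, the textbook leaky integrator without threshold or reset has the response below. This is an assumption about what "similar to leaky integrate-and-fire" means here, not the paper's FiTS equations. The symbol \tau_m is a hypothetical membrane time constant, and the input resistance is normalized to 1.

```latex
\begin{aligned}
\tau_m \frac{dV}{dt} &= -V(t) + I(t), \\
H(j\omega) &= \frac{1}{1 + j\omega\tau_m}, \\
|H(j\omega)| &= \frac{1}{\sqrt{1 + \omega^2\tau_m^2}}, \\
\tau_g(\omega) &= -\frac{d}{d\omega}\arg H(j\omega) = \frac{\tau_m}{1 + \omega^2\tau_m^2}.
\end{aligned}
```

The magnitude decreases monotonically in \omega, so its maximizer is \omega = 0. A plain leaky integrator therefore has no nonzero target frequency, and its group delay is fixed by the same \tau_m that sets its bandwidth.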

invented entities (2)

- FS module: no independent evidence

- TS module: no independent evidence
