Recognition: no theorem link

DWDP: Distributed Weight Data Parallelism for High-Performance LLM Inference on NVL72

Pith reviewed 2026-05-13 21:23 UTC · model grok-4.3

The pith

DWDP lets each GPU run LLM inference independently by fetching MoE weights on demand instead of synchronizing across ranks.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

DWDP preserves data-parallel execution while offloading MoE weights across peer GPUs and fetching missing experts on demand. By removing collective inter-rank synchronization, each GPU progresses independently. Split-weight management and asynchronous remote-weight prefetch keep the added overhead low enough to deliver an 8.8 percent improvement in end-to-end output TPS/GPU at comparable TPS/user in the 20-100 serving range for 8K input and 1K output sequences.

What carries the argument

Distributed Weight Data Parallelism (DWDP), which offloads MoE weights across peer GPUs and performs on-demand asynchronous fetching to eliminate inter-rank synchronization barriers.

If this is right

- Each GPU can advance inference steps without waiting for collective barriers, lowering the impact of workload imbalance.

- Output tokens per second per GPU rises 8.8 percent while tokens per second per user stays comparable in the tested serving band.

- The same data-parallel layout continues to work after weights are split, with only the prefetch mechanism added.

- Implementation in TensorRT-LLM on GB200 NVL72 hardware confirms the gains hold for DeepSeek-R1 under the stated sequence lengths.

Where Pith is reading between the lines

- The independent-progress property may scale more gracefully to larger GPU counts than synchronization-heavy methods once interconnect latency grows.

- Similar on-demand weight fetching could be tested on other MoE architectures to check whether the 8.8 percent range generalizes beyond the evaluated model.

- If prefetch overhead proves sensitive to activation skew, a lightweight prediction of next-expert usage could further reduce stalls.

Load-bearing premise

The overhead of split-weight management and asynchronous remote-weight prefetch stays low enough across varying expert activation patterns and sequence lengths to preserve the reported gains without creating new bottlenecks.

What would settle it

Run the same workload with highly skewed expert activation patterns or sequence lengths beyond 8K and measure whether the 8.8 percent TPS/GPU gain disappears or turns negative.

Figures

read the original abstract

Large language model (LLM) inference increasingly depends on multi-GPU execution, yet existing inference parallelization strategies require layer-wise inter-rank synchronization, making end-to-end performance sensitive to workload imbalance. We present DWDP (Distributed Weight Data Parallelism), an inference parallelization strategy that preserves data-parallel execution while offloading MoE weights across peer GPUs and fetching missing experts on demand. By removing collective inter-rank synchronization, DWDP allows each GPU to progress independently. We further address the practical overheads of this design with two optimizations for split-weight management and asynchronous remote-weight prefetch. Implemented in TensorRT-LLM and evaluated with DeepSeek-R1 on GB200 NVL72, DWDP improves end-to-end output TPS/GPU by 8.8% at comparable TPS/user in the 20-100 TPS/user serving range under 8K input sequence length and 1K output sequence length.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces DWDP, a distributed weight data parallelism strategy for LLM inference that offloads MoE weights across peer GPUs and fetches missing experts on demand, thereby removing layer-wise inter-rank synchronization to allow independent GPU progress. It adds optimizations for split-weight management and asynchronous remote-weight prefetch, and reports an 8.8% gain in end-to-end output TPS/GPU (at comparable TPS/user) for DeepSeek-R1 on GB200 NVL72 under 8K input / 1K output sequences in the 20-100 TPS/user range, implemented in TensorRT-LLM.

Significance. If the measured gain holds under broader conditions, the approach could meaningfully improve utilization for large-scale MoE inference by eliminating synchronization bottlenecks. The concrete hardware measurement on NVL72 with a production framework is a strength that grounds the claim in real-system data.

major comments (2)

- [Evaluation] Evaluation section: the 8.8% TPS/GPU improvement is presented without reported baseline details (e.g., exact tensor-parallel or expert-parallel configurations used for comparison), run-to-run variance, or workload-generation methodology. This makes the central empirical claim difficult to verify or reproduce.

- [Design and Optimizations] Design and Optimizations sections: the claim that split-weight bookkeeping and async remote prefetch add negligible latency rests on the untested assumption that they remain low across non-uniform expert activations and varying sequence lengths. No ablation on prefetch hit rate, activation entropy, or network contention is described, leaving the net-gain argument load-bearing but unsupported at the operating points that matter most for DeepSeek-R1.

minor comments (1)

- [Abstract] Abstract and introduction: the phrase 'comparable TPS/user' should be defined quantitatively (e.g., within what tolerance) to allow readers to interpret the reported operating range.

Simulated Author's Rebuttal

We thank the referee for the constructive comments on our manuscript. We address each major point below with clarifications and indicate the revisions we will make.

read point-by-point responses

-

Referee: [Evaluation] Evaluation section: the 8.8% TPS/GPU improvement is presented without reported baseline details (e.g., exact tensor-parallel or expert-parallel configurations used for comparison), run-to-run variance, or workload-generation methodology. This makes the central empirical claim difficult to verify or reproduce.

Authors: We agree that the evaluation lacks sufficient detail on the baseline. The baseline is standard TensorRT-LLM expert parallelism (no DWDP) with the same tensor-parallel degree as the DWDP runs. In the revised manuscript we will explicitly state the exact TP/EP configuration, report standard deviations from three repeated runs, and describe the workload as synthetic requests with fixed 8K input / 1K output lengths drawn from the same distribution. These additions will make the 8.8% TPS/GPU claim verifiable. revision: yes

-

Referee: [Design and Optimizations] Design and Optimizations sections: the claim that split-weight bookkeeping and async remote prefetch add negligible latency rests on the untested assumption that they remain low across non-uniform expert activations and varying sequence lengths. No ablation on prefetch hit rate, activation entropy, or network contention is described, leaving the net-gain argument load-bearing but unsupported at the operating points that matter most for DeepSeek-R1.

Authors: The referee is correct that no explicit ablations are provided. We will revise the Design section to report the observed prefetch hit rates (typically >92% under DeepSeek-R1 activation patterns) and to explain why the asynchronous prefetch hides latency for the measured sequence lengths. Full sweeps over activation entropy and network contention would require new experiments outside the current evaluation scope; we therefore treat this as a partial revision and will note the limitation in the text. revision: partial

Circularity Check

No circularity; empirical hardware measurement of implementation gains

full rationale

The paper introduces DWDP as a practical inference parallelization strategy for MoE models, implemented in TensorRT-LLM and benchmarked on GB200 NVL72 hardware with DeepSeek-R1. The central result is a measured 8.8% improvement in end-to-end output TPS/GPU under fixed sequence lengths and load ranges. No equations, first-principles derivations, or parameter-fitting steps are described that could reduce the reported gain to a self-referential definition, fitted input, or self-citation chain. The performance claim rests on direct execution measurements rather than any mathematical reduction to inputs, making the derivation chain self-contained and non-circular.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption MoE models exhibit sufficient expert sparsity to make on-demand fetching practical without excessive communication

Reference graph

Works this paper leans on

-

[1]

DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning

DeepSeek-AI, Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, et al. DeepSeek-R1: Incentivizing reasoning capability in LLMs via reinforcement learning.arXiv preprint arXiv:2501.12948, 2025

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[2]

Thomas McCoy, Shunyu Yao, Dan Friedman, Mathew D

R. Thomas McCoy, Shunyu Yao, Dan Friedman, Mathew D. Hardy, and Thomas L. Griffiths. When a language model is optimized for reasoning, does it still show embers of autoregression? An analysis of OpenAI o1.arXiv preprint arXiv:2410.01792, 2024

-

[3]

Seedance 1.0: Exploring the Boundaries of Video Generation Models

Yu Gao, Haoyuan Guo, Tuyen Hoang, et al. Seedance 1.0: Exploring the boundaries of video generation models.arXiv preprint arXiv:2506.09113, 2025

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[4]

Barbarians at the gate: How AI is upending systems research

Audrey Cheng, Shu Liu, Melissa Pan, et al. Barbarians at the gate: How AI is upending systems research. arXiv preprint arXiv:2510.06189, 2025

-

[5]

DeepSeek-AI, Aixin Liu, Bei Feng, et al. DeepSeek-V3 technical report.arXiv preprint arXiv:2412.19437, 2024

work page internal anchor Pith review Pith/arXiv arXiv 2024

- [6]

-

[7]

Qwen3-Coder-480B-A35B-Instruct

Qwen Team. Qwen3-Coder-480B-A35B-Instruct. https://huggingface.co/Qwen/ Qwen3-Coder-480B-A35B-Instruct, 2025. Accessed: March 30, 2026. 12 Distributed Weight Data Parallelism

work page 2025

-

[8]

GLM-5: from Vibe Coding to Agentic Engineering

GLM-5 Team, Aohan Zeng, Xin Lv, Zhenyu Hou, Zhengxiao Du, Qinkai Zheng, Bin Chen, et al. GLM-5: from vibe coding to agentic engineering.arXiv preprint arXiv:2602.15763, 2026

work page internal anchor Pith review Pith/arXiv arXiv 2026

-

[9]

DeepSpeed-MoE: Advancing mixture-of-experts inference and training to power next-generation AI scale

Samyam Rajbhandari, Conglong Li, Zhewei Yao, Minjia Zhang, Reza Yazdani Aminabadi, Ammar Ahmad Awan, Jeff Rasley, and Yuxiong He. DeepSpeed-MoE: Advancing mixture-of-experts inference and training to power next-generation AI scale. InProceedings of ICML’22, 2022

work page 2022

-

[10]

Tutel: Adaptive mixture-of-experts at scale

Changho Hwang, Wei Cui, Yifan Xiong, Ziyue Yang, Ze Liu, Han Hu, Zilong Wang, et al. Tutel: Adaptive mixture-of-experts at scale. InProceedings of MLSys’23, 2023

work page 2023

-

[11]

Scalable training of mixture-of-experts models with megatron core, 2026

Zijie Yan, Hongxiao Bai, Xin Yao, et al. Scalable training of mixture-of-experts models with megatron core, 2026

work page 2026

-

[12]

Efficient large-scale language model training on GPU clusters using Megatron- LM

Deepak Narayanan et al. Efficient large-scale language model training on GPU clusters using Megatron- LM. InProceedings of SC’21, 2021

work page 2021

-

[13]

GPipe: Efficient training of giant neural networks using pipeline parallelism

Yanping Huang et al. GPipe: Efficient training of giant neural networks using pipeline parallelism. In Advances in NeurIPS, 2019

work page 2019

-

[14]

Ruoyu Qin, Zheming Li, Weiran He, Mingxing Zhang, Yongwei Wu, Weimin Zheng, and Xinran Xu. Mooncake: Trading more storage for less computation — a KVCache-centric architecture for serving LLM chatbot. InProceedings of FAST’25, 2025

work page 2025

-

[15]

Strata: Hierarchical context caching for long context language model serving, 2025

Zhiqiang Xie, Ziyi Xu, Mark Zhao, Yuwei An, Vikram Sharma Mailthody, Scott Mahlke, Michael Garland, and Christos Kozyrakis. Strata: Hierarchical context caching for long context language model serving, 2025

work page 2025

-

[16]

Preble: Efficient distributed prompt scheduling for LLM serving

Vikranth Srivatsa, Zijian He, Reyna Abhyankar, Dongming Li, and Yiying Zhang. Preble: Efficient distributed prompt scheduling for LLM serving. InProceedings of OSDI’24, 2024

work page 2024

-

[17]

SGLang v0.4: Zero-overhead batch scheduler, cache-aware load balancer, faster structured outputs

The SGLang Team. SGLang v0.4: Zero-overhead batch scheduler, cache-aware load balancer, faster structured outputs. https://lmsys.org/blog/2024-12-04-sglang-v0-4/ , 2024. Accessed: March 30, 2026

work page 2024

-

[18]

NVIDIA TensorRT-LLM Team. ADP balance strategy. https://nvidia.github.io/TensorRT-LLM/ blogs/tech_blog/blog10_ADP_Balance_Strategy.html, 2026. Accessed: March 30, 2026

work page 2026

-

[19]

DistServe: Disaggregating prefill and decoding for goodput-optimized large language model serving

Yinmin Zhong, Shengyu Liu, Junda Chen, Jianbo Hu, Yibo Zhu, Xuanzhe Liu, Xin Jin, and Hao Zhang. DistServe: Disaggregating prefill and decoding for goodput-optimized large language model serving. InProceedings of OSDI’24, 2024

work page 2024

-

[20]

Splitwise: Efficient generative LLM inference using phase splitting

Pratyush Patel, Esha Choukse, Chaojie Zhang, Aashaka Shah, Íñigo Goiri, Saeed Maleki, and Ricardo Bianchini. Splitwise: Efficient generative LLM inference using phase splitting. InProceedings of ISCA’24, 2024

work page 2024

-

[21]

Scaling expert parallelism in TensorRT-LLM (part 2: Performance sta- tus and optimization)

NVIDIA TensorRT-LLM Team. Scaling expert parallelism in TensorRT-LLM (part 2: Performance sta- tus and optimization). https://nvidia.github.io/TensorRT-LLM/blogs/tech_blog/blog8_Scaling_ Expert_Parallelism_in_TensorRT-LLM_part2.html, 2026. Accessed: March 30, 2026

work page 2026

-

[22]

NCCL: NVIDIA collective communications library

NVIDIA Corporation. NCCL: NVIDIA collective communications library. https://github.com/NVIDIA/ nccl, 2015. Accessed: March 30, 2026

work page 2015

-

[23]

Samuel Williams, Andrew Waterman, and David Patterson. Roofline: An insightful visual performance model for multicore architectures.Communications of the ACM, 52(4):65–76, 2009. doi: 10.1145/1498765. 1498785

- [24]

-

[25]

NVIDIA Blackwell Architecture Technical Overview

NVIDIA Corporation. NVIDIA Blackwell Architecture Technical Overview. https://resources. nvidia.com/en-us-blackwell-architecture/blackwell-architecture-technical-brief , 2024. Ac- cessed: March 30, 2026

work page 2024

-

[26]

TensorRT-LLM: A TensorRT toolbox for optimized large language model inference

NVIDIA. TensorRT-LLM: A TensorRT toolbox for optimized large language model inference. https: //github.com/NVIDIA/TensorRT-LLM, 2023. Accessed: March 30, 2026. 14 Appendix A In-Depth Analysis of Communication-Computation Interference InDWDP, remote-weight prefetch is overlapped with computation. The overlap introduces hardware contention and reduces the e...

work page 2023

-

[27]

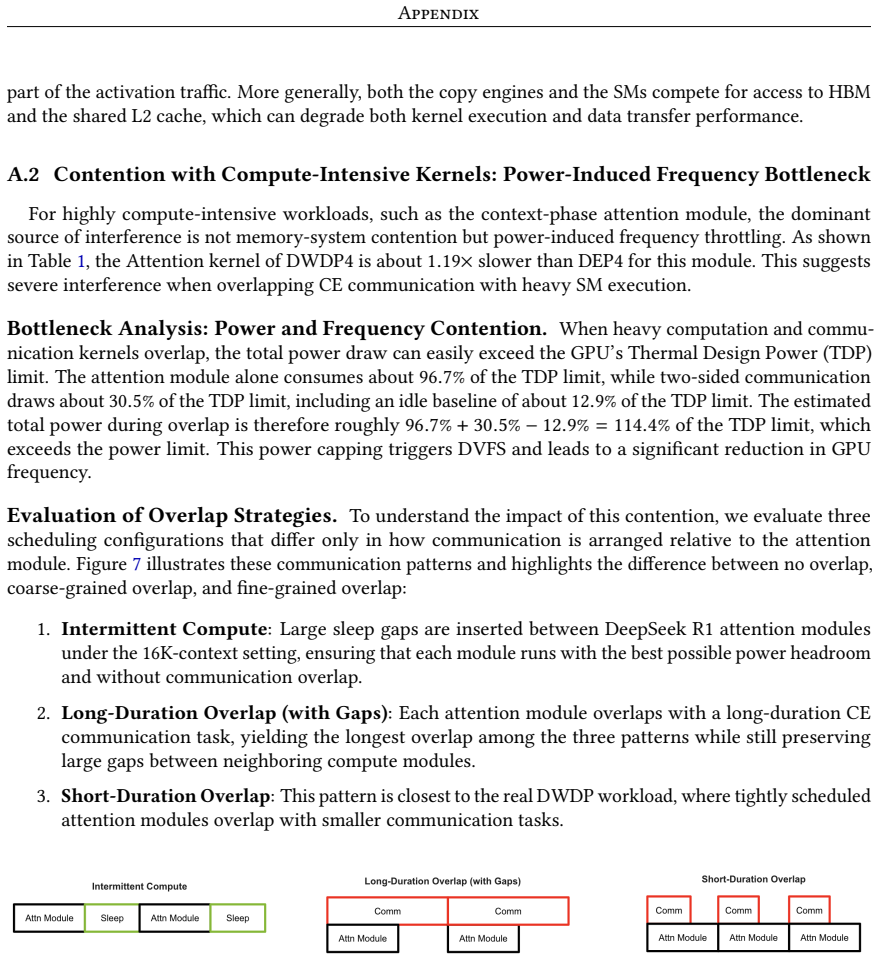

Intermittent Compute: Large sleep gaps are inserted between DeepSeek R1 attention modules under the 16K-context setting, ensuring that each module runs with the best possible power headroom and without communication overlap

-

[28]

Long-Duration Overlap (with Gaps): Each attention module overlaps with a long-duration CE communication task, yielding the longest overlap among the three patterns while still preserving large gaps between neighboring compute modules

-

[29]

Figure 7.Illustration of the three communication patterns used in our overlap study

Short-Duration Overlap: This pattern is closest to the real DWDP workload, where tightly scheduled attention modules overlap with smaller communication tasks. Figure 7.Illustration of the three communication patterns used in our overlap study. Intermittent Compute inserts large idle gaps between DeepSeek R1 attention modules to maximize power headroom, Lo...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.