PoM: A Linear-Time Replacement for Attention with the Polynomial Mixer

Pith reviewed 2026-05-10 19:16 UTC · model grok-4.3

The pith

A learned polynomial aggregates tokens into a compact representation from which each token retrieves context, replacing self-attention at linear cost.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

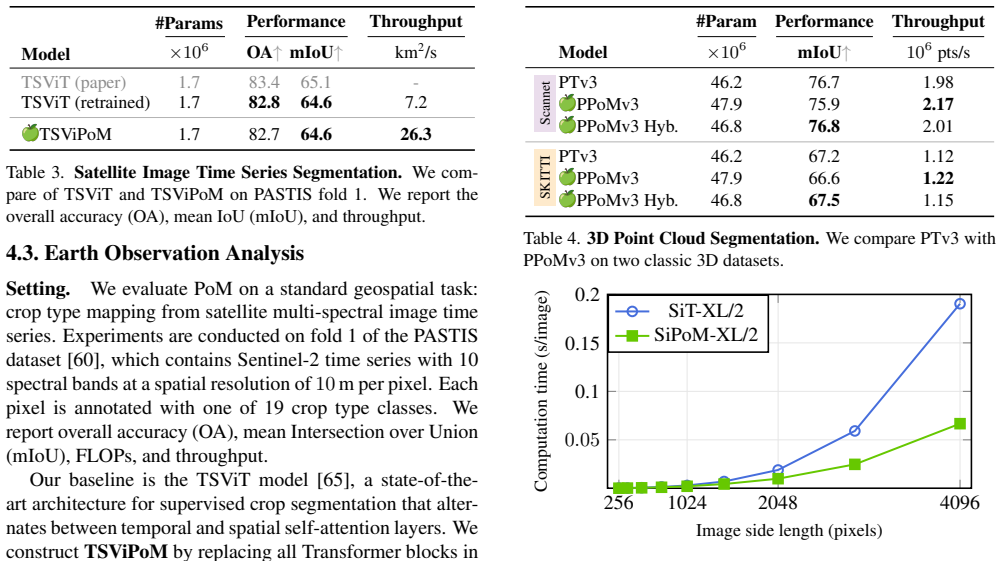

PoM aggregates input tokens into a compact representation through a learned polynomial function, from which each token retrieves contextual information. This satisfies the contextual mapping property and therefore preserves the universal approximation capability of the transformer. When substituted for self-attention, PoM produces models that match attention-based performance across five domains while running in linear time.

What carries the argument

The Polynomial Mixer, a token-mixing operation that aggregates inputs via a learned polynomial into a compact representation and lets tokens retrieve context from it.
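To make the aggregate-then-retrieve idea concrete, here is a minimal NumPy sketch. It is one possible reading of the description above, not the paper's exact formulation: the weight names `w_h`, `w_s`, and `w_o`, the element-wise powers, the mean pooling, and the sigmoid gate are all assumptions chosen for illustration. The authors' released code remains the reference for the actual operator.

```python
import numpy as np

def polynomial_mixer(x, w_h, w_s, w_o, degree=2):
    """Illustrative token mixer (hypothetical, not the authors' implementation).

    x:   (n_tokens, d_model) input tokens
    w_h: (d_model, d_hidden) projection applied before the polynomial
    w_s: (d_model, degree * d_hidden) projection for the per-token selector
    w_o: (degree * d_hidden, d_model) output projection
    """
    h = x @ w_h  # (n_tokens, d_hidden)
    # Element-wise powers 1..degree, concatenated: a simple polynomial feature map.
    feats = np.concatenate([h ** k for k in range(1, degree + 1)], axis=-1)
    # Aggregate all tokens into one compact summary vector (computed once).
    state = feats.mean(axis=0)  # (degree * d_hidden,)
    # Each token retrieves context by gating the shared summary.
    gate = 1.0 / (1.0 + np.exp(-(x @ w_s)))  # (n_tokens, degree * d_hidden)
    return (gate * state) @ w_o  # (n_tokens, d_model)
```

Because the summary is computed once and then shared, the work in this sketch grows linearly with the number of tokens; no token-by-token score matrix is ever formed.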

If this is right

- Transformers can process longer sequences without the quadratic cost of attention.

- The same model architectures and training procedures remain valid when attention is replaced by PoM.

- Performance parity holds across all five evaluated domains: text generation, handwritten text recognition, image generation, 3D modeling, and Earth observation.

- The universality guarantee means no fundamental loss of modeling capacity.
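The first bullet rests on a rough cost comparison, sketched here under stated assumptions: $n$ is the sequence length, $d$ the model width, and $D$ the width of the compact representation. The symbol $D$ is introduced here for illustration and is assumed not to grow with $n$; it does not come from the paper.

```latex
\underbrace{\mathcal{O}(n^{2} d)}_{\text{self-attention: scores for all token pairs}}
\qquad \text{vs.} \qquad
\underbrace{\mathcal{O}(n\, d\, D)}_{\text{aggregate once, then retrieve per token}}
```

Under these assumptions, doubling $n$ roughly quadruples the first term and only doubles the second.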

Where Pith is reading between the lines

- The same aggregation idea could be tested in other sequence models that currently rely on attention for mixing.

- If the polynomial degree can be kept small while retaining performance, further speed-ups become possible on hardware optimized for dense operations.

- Replacing only the mixing step leaves the rest of the transformer unchanged, so existing scaling recipes and optimizers transfer directly.

Load-bearing premise

The learned polynomial can aggregate and retrieve the necessary contextual information across tasks without losing the expressive power that the universality proof assumes.

What would settle it

A sequence-to-sequence task where a transformer equipped with PoM fails to approximate the target mapping that a standard attention transformer can learn, or a long-sequence benchmark where PoM runtime grows quadratically instead of linearly.
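The runtime half of this test can be checked by fitting a scaling exponent to measured timings. The sketch below is an illustrative helper, not something from the paper: the function name `scaling_exponent` is hypothetical, and the timings are synthetic placeholders rather than measurements of PoM or attention. A fitted slope near 1 would indicate linear growth, and a slope near 2 would indicate quadratic growth.

```python
import math

def scaling_exponent(lengths, runtimes):
    """Least-squares slope of log(runtime) versus log(sequence length)."""
    xs = [math.log(n) for n in lengths]
    ys = [math.log(t) for t in runtimes]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    return cov / var

# Synthetic timings for illustration only (not real benchmark results).
lengths = [1024, 2048, 4096, 8192]
linear_like = [2e-6 * n for n in lengths]
quadratic_like = [5e-10 * n * n for n in lengths]

linear_slope = scaling_exponent(lengths, linear_like)
quadratic_slope = scaling_exponent(lengths, quadratic_like)
```

With real measurements, the slope should be fitted over sequence lengths large enough that fixed overheads no longer dominate the timings.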

Original abstract

This paper introduces the Polynomial Mixer (PoM), a novel token mixing mechanism with linear complexity that serves as a drop-in replacement for self-attention. PoM aggregates input tokens into a compact representation through a learned polynomial function, from which each token retrieves contextual information. We prove that PoM satisfies the contextual mapping property, ensuring that transformers equipped with PoM remain universal sequence-to-sequence approximators. We replace standard self-attention with PoM across five diverse domains: text generation, handwritten text recognition, image generation, 3D modeling, and Earth observation. PoM matches the performance of attention-based models while drastically reducing computational cost when working with long sequences. The code is available at https://github.com/davidpicard/pom.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces the Polynomial Mixer (PoM) as a linear-complexity drop-in replacement for self-attention. PoM aggregates input tokens into a compact representation via a learned polynomial function, from which each token retrieves contextual information. The authors prove that PoM satisfies the contextual mapping property, ensuring transformers using PoM remain universal sequence-to-sequence approximators. They evaluate PoM by replacing attention in five domains (text generation, handwritten text recognition, image generation, 3D modeling, Earth observation), reporting performance parity with attention models at linear cost for long sequences. Code is released.

Significance. If the proof and results hold, this is a significant contribution to efficient transformer design, offering a scalable alternative for long-sequence tasks while preserving theoretical universality. The explicit proof of the contextual mapping property and multi-domain validation are notable strengths; open-sourced code aids reproducibility.

major comments (2)

- [Theoretical analysis / contextual mapping proof] Proof of contextual mapping property: the central universality claim depends on this property holding for the learned polynomial aggregation and retrieval. The manuscript should provide an explicit derivation or bounds showing that finite-degree polynomials do not restrict the representable contextual mappings, particularly addressing how coefficient learning interacts with the property.

- [Experiments / results across domains] Empirical evaluation sections: performance is reported to match attention baselines across five domains, but without details on run counts, variance, hyperparameter search, or ablations on polynomial degree, it is difficult to confirm that the linear-cost replacement incurs no hidden accuracy loss, undermining the 'matches performance' claim.

minor comments (3)

- [Related work] Related work: add explicit positioning against other linear-time attention variants (e.g., Performer, Linformer) to clarify novelty.

- [Method / PoM definition] Notation and definitions: the polynomial function and aggregation/retrieval steps should be formalized with explicit equations early in the main text for reader clarity.

- [Figures] Figure captions: ensure visual diagrams of the PoM mechanism include labels for polynomial degree and token flow.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback. We address each major comment below and describe the planned revisions to strengthen the manuscript.

Referee: [Theoretical analysis / contextual mapping proof] Proof of contextual mapping property: the central universality claim depends on this property holding for the learned polynomial aggregation and retrieval. The manuscript should provide an explicit derivation or bounds showing that finite-degree polynomials do not restrict the representable contextual mappings, particularly addressing how coefficient learning interacts with the property.

Authors: We appreciate the referee's emphasis on making the theoretical guarantees fully explicit. Section 3.2 of the manuscript already contains a proof that PoM satisfies the contextual mapping property for any finite polynomial degree, relying on the fact that polynomials are dense in continuous functions on compact sets (via Stone-Weierstrass) and that coefficient learning allows the aggregation and retrieval steps to realize arbitrary mappings. To address the request for explicit derivation and bounds, we will add a corollary with approximation-error bounds and a step-by-step derivation of how learned coefficients interact with the property in the revised version.

Revision: yes

Referee: [Experiments / results across domains] Empirical evaluation sections: performance is reported to match attention baselines across five domains, but without details on run counts, variance, hyperparameter search, or ablations on polynomial degree, it is difficult to confirm that the linear-cost replacement incurs no hidden accuracy loss, undermining the 'matches performance' claim.

Authors: We agree that additional experimental details are necessary to fully substantiate the performance-parity claim. The revised manuscript will report the number of independent runs per experiment, include standard deviations or confidence intervals, describe the hyperparameter search protocol, and add an ablation study on polynomial degree (in an appendix) to demonstrate that accuracy remains stable across reasonable degrees without hidden costs.

Revision: yes

Circularity Check

No significant circularity; derivation is self-contained

The paper introduces PoM as a new token-mixing mechanism defined via a learned polynomial aggregation followed by per-token retrieval. It supplies an explicit proof that this construction satisfies the contextual mapping property, from which universality of the resulting transformer follows directly. No step reduces a claimed prediction or first-principles result to a fitted parameter, self-citation, or definitional renaming of the target quantity. The five-domain empirical replacements are presented as independent validation rather than forced outputs of the same fit. The derivation chain therefore remains non-circular.

Axiom & Free-Parameter Ledger

free parameters (1)

- polynomial coefficients

axioms (1)

- Domain assumption: a polynomial function can encode sufficient contextual mappings for sequence-to-sequence tasks.
