The Theorems of Dr. David Blackwell and Their Contributions to Artificial Intelligence

Pith reviewed 2026-05-10 18:28 UTC · model grok-4.3

The pith

Blackwell's three theorems from the 1940s and 1950s provide a unified framework for the information and decision problems at the center of modern AI.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

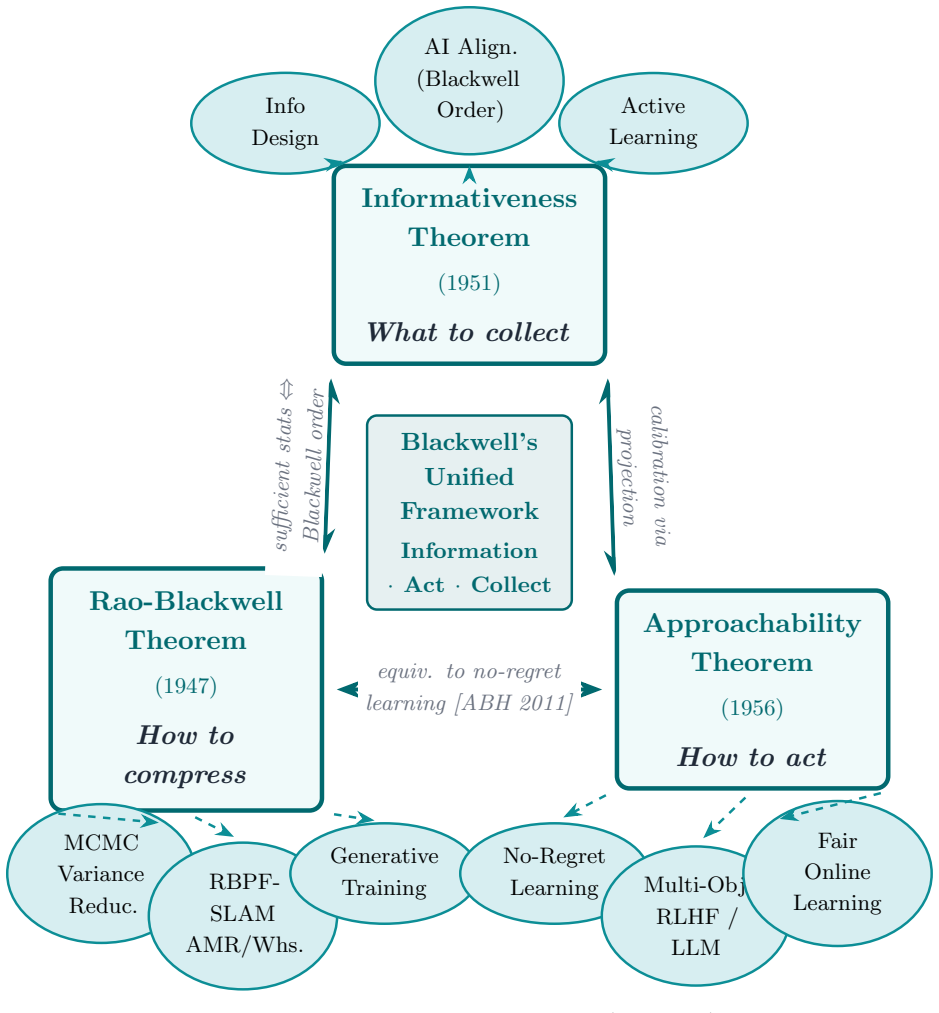

The author claims that the Rao-Blackwell theorem, the Blackwell approachability theorem, and the Blackwell informativeness theorem together form a unified framework addressing information compression, sequential decision making under uncertainty, and the comparison of information sources, which are the precise problems at the core of modern artificial intelligence.

What carries the argument

Three theorems carry the argument: Rao-Blackwell reduces the variance of an estimate by conditioning, Blackwell approachability gives guarantees in repeated games that underpin no-regret play, and Blackwell informativeness ranks statistical experiments by their usefulness for decisions.
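The first of these mechanisms, variance reduction by conditioning, can be checked numerically. The sketch below is an illustration added here, not code from the paper. It assumes an i.i.d. Poisson sample, the crude unbiased estimator S = 1{X1 = 0} of exp(-lam), and the sufficient statistic T = sum of the sample, for which E[S | T] = ((n - 1) / n) ** T in closed form. The function names, the Poisson setting, and the parameter values are all illustrative assumptions.

```python
import math
import random
import statistics


def sample_poisson(lam, rng):
    """Draw one Poisson(lam) variate with Knuth's multiplication method."""
    threshold = math.exp(-lam)
    count, product = 0, rng.random()
    while product > threshold:
        count += 1
        product *= rng.random()
    return count


def compare_estimators(lam=2.0, n=10, trials=5000, seed=0):
    """Compare a crude estimator of exp(-lam) with its Rao-Blackwellized version.

    Hypothetical setup chosen for illustration only:
      S  = 1 if the first observation is zero, else 0   (unbiased, noisy)
      T  = sum of all n observations                    (sufficient statistic)
      S* = E[S | T] = ((n - 1) / n) ** T                (conditioned estimator)
    """
    rng = random.Random(seed)
    crude, conditioned = [], []
    for _ in range(trials):
        xs = [sample_poisson(lam, rng) for _ in range(n)]
        crude.append(1.0 if xs[0] == 0 else 0.0)
        conditioned.append(((n - 1) / n) ** sum(xs))
    return {
        "target": math.exp(-lam),
        "mean_crude": statistics.fmean(crude),
        "mean_conditioned": statistics.fmean(conditioned),
        "var_crude": statistics.pvariance(crude),
        "var_conditioned": statistics.pvariance(conditioned),
    }


if __name__ == "__main__":
    for name, value in compare_estimators().items():
        print(f"{name}: {value:.5f}")
```

Both estimators should average close to the target, while the conditioned one should show a much smaller empirical variance. That gap is the content of the inequality Var(S*) ≤ Var(S).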

Load-bearing premise

The listed AI subfields draw direct technical influence from these specific theorems rather than loose historical or analogical connections.

What would settle it

A review of recent papers on RLHF pipelines or LLM alignment that finds no references to, or implementations of, Blackwell's theorems would show that the claimed direct influence is absent in those areas.

Original abstract

Dr. David Blackwell was a mathematician and statistician of the first rank, whose contributions to statistical theory, game theory, and decision theory predated many of the algorithmic breakthroughs that define modern artificial intelligence. This survey examines three of his most consequential theoretical results, the Rao-Blackwell theorem, the Blackwell Approachability theorem, and the Blackwell Informativeness theorem (comparison of experiments), and traces their direct influence on contemporary AI and machine learning. We show that these results, developed primarily in the 1940s and 1950s, remain technically live across modern subfields including Markov Chain Monte Carlo inference, autonomous mobile robot navigation (SLAM), generative model training, no-regret online learning, reinforcement learning from human feedback (RLHF), large language model alignment, and information design. NVIDIAs 2024 decision to name their flagship GPU architecture (Blackwell) provides vivid testament to his enduring relevance. We also document an emerging frontier: explicit Rao-Blackwellized variance reduction in LLM RLHF pipelines, recently proposed but not yet standard practice. Together, Blackwell theorems form a unified framework addressing information compression, sequential decision making under uncertainty, and the comparison of information sources, precisely the problems at the core of modern AI.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. This survey examines three theorems by David Blackwell—the Rao-Blackwell theorem, the Blackwell Approachability theorem, and the Blackwell Informativeness (comparison of experiments) theorem—and traces their direct influence on modern AI and machine learning. It claims these 1940s-1950s results remain technically live in MCMC inference, SLAM navigation, generative model training, no-regret online learning, RLHF, LLM alignment, and information design, forming a unified framework for information compression, sequential decision-making under uncertainty, and comparing information sources. NVIDIA's 2024 Blackwell GPU architecture is cited as evidence of relevance, along with an emerging (but not yet standard) application of Rao-Blackwellization in RLHF pipelines.

Significance. If the direct technical influences and unified framework were substantiated with explicit citations, derivations, and mappings from the theorems to current algorithms, the paper would usefully highlight historical roots of core AI problems and encourage cross-field awareness. As presented, the significance is modest because the narrative rests on shared problem statements rather than documented usage, limiting its value for advancing research or pedagogy in statistics or AI.

major comments (3)

- [Abstract] Abstract: The assertion that the theorems 'remain technically live across modern subfields including ... reinforcement learning from human feedback (RLHF), large language model alignment' is contradicted by the paper's own qualification that 'explicit Rao-Blackwellized variance reduction in LLM RLHF pipelines' is 'recently proposed but not yet standard practice.' This admission indicates the technique is not currently part of live, standard practice, undermining the central claim of direct, ongoing influence.

- [Abstract] Abstract: The claim that 'Together, Blackwell theorems form a unified framework addressing information compression, sequential decision making under uncertainty, and the comparison of information sources' is not supported by any derivation, common mathematical structure, or theorem that interconnects the three results in an AI context; the text only lists overlapping problem areas without demonstrating unification.

- [Abstract] Abstract and sections on applications: For the asserted direct influence on MCMC variance reduction, SLAM filtering, no-regret learning, and RLHF, the paper must supply specific citations to modern algorithms or papers that explicitly invoke or derive from Blackwell (1951) or the other theorems, rather than relying on conceptual parallels to information compression and decisions under uncertainty.

minor comments (2)

- [Abstract] Abstract: 'NVIDIAs' is missing the possessive apostrophe and should read 'NVIDIA's'.

- The manuscript would be clearer with a summary table mapping each theorem to its claimed AI applications, including key references.

Simulated Author's Rebuttal

We thank the referee for their constructive comments, which identify opportunities to clarify claims and provide stronger evidence of influence. We address each major comment point by point below and will implement revisions to improve the manuscript's precision and substantiation.

Point-by-point responses

- Referee: [Abstract] Abstract: The assertion that the theorems 'remain technically live across modern subfields including ... reinforcement learning from human feedback (RLHF), large language model alignment' is contradicted by the paper's own qualification that 'explicit Rao-Blackwellized variance reduction in LLM RLHF pipelines' is 'recently proposed but not yet standard practice.' This admission indicates the technique is not currently part of live, standard practice, undermining the central claim of direct, ongoing influence.

  Authors: We agree the abstract phrasing risks overstating the current status of explicit Rao-Blackwellization in RLHF. The qualification correctly notes that this specific technique is emerging rather than standard. The broader intent was to highlight that principles such as variance reduction from the Rao-Blackwell theorem continue to inform related methods in RLHF and alignment. We will revise the abstract to distinguish ongoing conceptual relevance from the non-standard status of explicit applications, ensuring the claim accurately reflects documented practice.

  Revision: yes

- Referee: [Abstract] Abstract: The claim that 'Together, Blackwell theorems form a unified framework addressing information compression, sequential decision making under uncertainty, and the comparison of information sources' is not supported by any derivation, common mathematical structure, or theorem that interconnects the three results in an AI context; the text only lists overlapping problem areas without demonstrating unification.

  Authors: The manuscript emphasizes complementary roles of the three theorems in addressing core AI challenges but does not present a formal derivation or single interconnecting theorem. We accept that the 'unified framework' language requires qualification. We will revise the claim to state that the theorems collectively address these problems and add a new discussion subsection that explicitly maps each theorem to its AI applications while outlining thematic links, without asserting a single mathematical unification.

  Revision: yes

- Referee: [Abstract] Abstract and sections on applications: For the asserted direct influence on MCMC variance reduction, SLAM filtering, no-regret learning, and RLHF, the paper must supply specific citations to modern algorithms or papers that explicitly invoke or derive from Blackwell (1951) or the other theorems, rather than relying on conceptual parallels to information compression and decisions under uncertainty.

  Authors: We will add explicit citations to modern papers and algorithms that directly invoke or extend Blackwell's results where such references exist, including Rao-Blackwellized MCMC methods, approachability-based no-regret algorithms, and any documented links in RLHF. For domains such as SLAM or certain generative modeling aspects where the connection is more foundational or thematic, we will qualify the language to reflect conceptual influence rather than direct invocation. These changes will replace reliance on parallels with documented usage and citations.

  Revision: partial
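The "approachability-based no-regret algorithms" named in the last response can be made concrete with regret matching, a procedure usually analysed through approachability: the vector payoff is the per-action regret, and the target set is the nonpositive orthant. The sketch below is an illustration added here, not code from the paper. The rock-paper-scissors payoff matrix, the fixed opponent distribution, and all function names are assumptions chosen for the example.

```python
import random

# Row player's payoff, indexed as PAYOFF[own_action][opponent_action].
# Actions: 0 = rock, 1 = paper, 2 = scissors (illustrative game).
PAYOFF = [
    [0, -1, 1],
    [1, 0, -1],
    [-1, 1, 0],
]


def regret_matching_strategy(cum_regret):
    """Play each action with probability proportional to its positive regret."""
    positive = [max(r, 0.0) for r in cum_regret]
    total = sum(positive)
    if total == 0.0:
        # No action has positive regret yet: fall back to uniform play.
        return [1.0 / len(cum_regret)] * len(cum_regret)
    return [p / total for p in positive]


def average_max_regret(opponent_probs, rounds=20000, seed=0):
    """Run regret matching against a fixed mixed opponent.

    Returns the largest cumulative regret divided by the number of rounds.
    Approaching the nonpositive orthant means this quantity tends to <= 0.
    """
    rng = random.Random(seed)
    n = len(PAYOFF)
    cum_regret = [0.0] * n
    for _ in range(rounds):
        strategy = regret_matching_strategy(cum_regret)
        own = rng.choices(range(n), weights=strategy)[0]
        opp = rng.choices(range(n), weights=opponent_probs)[0]
        realized = PAYOFF[own][opp]
        for action in range(n):
            # Regret: what `action` would have earned minus what was earned.
            cum_regret[action] += PAYOFF[action][opp] - realized
    return max(cum_regret) / rounds


if __name__ == "__main__":
    print(average_max_regret([0.5, 0.3, 0.2]))
```

With a fixed opponent the average regret should shrink toward zero as the number of rounds grows. The same update applies unchanged against an adaptive opponent, which is the case the approachability argument is designed to cover.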

Circularity Check

No circularity: historical survey with no derivations or self-referential fits

The paper is a survey tracing the historical influence of Blackwell's independent theorems (Rao-Blackwell, Approachability, Informativeness) on AI subfields. It contains no mathematical derivations, equations, fitted parameters, or predictions that reduce to the paper's own inputs by construction. Its central claims rest on conceptual mappings to external historical results and modern applications, with no load-bearing self-citations by the author (Paxton) and no ansatz smuggled in. The argument can therefore be checked against external sources such as Blackwell's original papers and the cited AI literature.

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean: washburn_uniqueness_aczel (unclear)

  Relation between the paper passage and the cited Recognition theorem: unclear.

  Paper passage: The Rao-Blackwell theorem... S* = E[S|T]... Var(S*) ≤ Var(S)

- IndisputableMonolith/Foundation/RealityFromDistinction.lean: reality_from_one_distinction (unclear)

  Relation between the paper passage and the cited Recognition theorem: unclear.

  Paper passage: Blackwell Approachability Theorem... closed convex set S is approachable
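Both quoted passages are elided. For orientation, the standard textbook statements they abbreviate can be written out as below. This is a sketch of the usual formulations, not a transcription of the paper's own text. The symbols θ, r, p, q, Δ(A), Δ(B) and the set name C are notation assumed here; C is used for the target set because the quoted passage reuses S, which would clash with the estimator S.

```latex
% Rao-Blackwell. Assumptions: S is an estimator of \theta with finite variance
% and T is a sufficient statistic, so S^{*} does not depend on \theta.
\[
  S^{*} = \mathbb{E}[S \mid T], \qquad \mathbb{E}[S^{*}] = \mathbb{E}[S]
\]
% Law of total variance: the first term is nonnegative, which gives the bound.
\[
  \operatorname{Var}(S)
    = \mathbb{E}\bigl[\operatorname{Var}(S \mid T)\bigr]
    + \operatorname{Var}\bigl(\mathbb{E}[S \mid T]\bigr)
    \ge \operatorname{Var}(S^{*})
\]

% Approachability. Assumptions: a repeated game with bilinear vector payoff
% r(p, q) \in \mathbb{R}^{d}, mixed actions p \in \Delta(A), q \in \Delta(B),
% and a closed convex target set C \subseteq \mathbb{R}^{d}.
\[
  C \text{ is approachable}
  \iff
  \forall q \in \Delta(B)\ \ \exists p \in \Delta(A) :\ r(p, q) \in C
\]
```

Here "approachable" means the player has a strategy under which the distance from the running average payoff to C tends to zero whatever the opponent does.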

What do these tags mean?

- matches: The paper's claim is directly supported by a theorem in the formal canon.
- supports: The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.
- extends: The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.
- uses: The paper appears to rely on the theorem as machinery.
- contradicts: The paper's claim conflicts with a theorem or certificate in the canon.
- unclear: Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.

Reference graph

Works this paper leans on

- [1] Abernethy, J., Bartlett, P., & Hazan, E. (2011). Blackwell approachability and no-regret learning are equivalent. In Proceedings of the 24th COLT, 2011. http://proceedings.mlr.press/v19/abernethy11b/abernethy11b.pdf
- [2] ABI Research. (2024). Mobile robots set to reach 2.8 million shipments by 2030. https://www.abiresearch.com/press/mobile-robots-set-to-reach-28-million-shipments-by-2030-as-applications-expand-across-industries/
- [3] Alignment Forum. (2023). The Blackwell order as a formalization of knowledge. https://www.alignmentforum.org/posts/wEjozSY9rhkpAaABt/the-blackwell-order-as-a-formalization-of-knowledge
- [4] Andrieu, C., de Freitas, N., Doucet, A., & Jordan, M. I. (2003). An introduction to MCMC for machine learning. Machine Learning, 50, 5–43. https://link.springer.com/article/10.1023/A:1020281327116
- [5] Bergemann, D., & Morris, S. (2019). Information design: A unified perspective. Journal of Economic Literature, 57(1), 44–95. https://www.aeaweb.org/articles?id=10.1257/jel.20181489
- [6] Blackwell, D. (1947). Conditional expectation and unbiased sequential estimation. Annals of Mathematical Statistics, 18(1), 105–110.
- [7] Blackwell, D. (1951). The comparison of experiments. Proc. Second Berkeley Symp. Math. Statist. Probab., 93–102.
- [8] Blackwell, D. (1953). Equivalent comparisons of experiments. Annals of Mathematical Statistics, 24(2), 265–272.
- [9] Blackwell, D. (1956). An analog of the minimax theorem for vector payoffs. Pacific Journal of Mathematics, 6(1), 1–8.
- [10] Blackwell, D. (1965). Discounted dynamic programming. Annals of Mathematical Statistics, 36(1), 226–235. https://projecteuclid.org/journals/annals-of-mathematical-statistics/volume-36/issue-1/Discounted-Dynamic-Programming/10.1214/aoms/1177700285.full
- [11] Blackwell, D., & Girshick, M. A. (1954). Theory of Games and Statistical Decisions. John Wiley & Sons.
- [12] Casper, S., et al. (2023). Open problems and fundamental limitations of reinforcement learning from human feedback. arXiv preprint arXiv:2307.15217. https://arxiv.org/abs/2307.15217
- [13] Cesa-Bianchi, N., & Lugosi, G. (2006). Prediction, Learning, and Games. Cambridge University Press. https://www.cambridge.org/core/books/prediction-learning-and-games/D5FFDBE0D58EEC0DE6DC6A2B04B4B68D
- [14] Chakraborty, S., et al. (2024). MaxMin-RLHF: Alignment with diverse human preferences. In Proceedings of the 41st ICML, 2024. https://proceedings.mlr.press/v235/chakraborty24b.html
- [15]
- [16] Doucet, A., de Freitas, N., Murphy, K., & Russell, S. (2001). Rao-Blackwellised particle filtering for dynamic Bayesian networks. In Proceedings of the 16th Conference on Uncertainty in Artificial Intelligence (UAI), 2000.
- [17] Foster, D. P. (1999). A proof of calibration via Blackwell's approachability theorem. Games and Economic Behavior, 29(1–2), 73–78. https://repository.upenn.edu/bitstreams/899602a7-f134-4ecd-bd3d-9b8f7ea0be25/download
- [18] Foster, D. P., & Vohra, R. V. (1998). Asymptotic calibration. Biometrika, 85(2), 379–390.
- [19] Grand View Research. (2024). Mobile Robotics Market Size & Forecast. https://www.grandviewresearch.com/industry-analysis/mobile-robotics-market
- [20] Grisetti, G., Stachniss, C., & Burgard, W. (2007). Improved techniques for grid mapping with Rao-Blackwellized particle filters. IEEE Transactions on Robotics, 23(1), 34–46. https://doi.org/10.1109/TRO.2006.889486
- [21]
- [22]
- [23] Lattimore, T., & Szepesvári, C. (2020). Bandit Algorithms. Cambridge University Press. https://tor-lattimore.com/downloads/book/book.pdf
- [24] Liu, J. S., Wong, W. H., & Kong, A. (1994). Covariance structure of the Gibbs sampler with applications to the comparisons of estimators and augmentation schemes. Biometrika, 81(1), 27–40.
- [25] Liu, C., Maddison, C. J., & Mnih, A. (2019). Rao-Blackwellized stochastic gradients for discrete distributions. In Proceedings of the 36th ICML, 2019. https://arxiv.org/abs/1810.04777
- [26] LogisticsIQ. (2025). Warehouse Automation Market. https://www.thelogisticsiq.com/research/warehouse-automation-market
- [27] Market Research Future. (2025). Indoor Robots Market: Industry Analysis and Forecast to 2035. https://www.marketresearchfuture.com/reports/indoor-robots-market-6915
- [28] MarketsandMarkets. (2025). Autonomous Mobile Robots (AMR) Market Worth $4.56 Billion by 2030. https://www.prnewswire.com/news-releases/autonomous-mobile-robots-amr-market-worth-4-56-billion-in-2030---exclusive-report-by-marketsandmarkets-302342746.html
- [29]
- [30] NVIDIA Corporation. (2024). NVIDIA Blackwell platform arrives to power a new era of computing. GTC 2024 Press Release. https://nvidianews.nvidia.com/news/nvidia-blackwell-platform-arrives-to-power-a-new-era-of-computing
- [31]
- [32]
- [33] Rao, C. R. (1945). Information and the accuracy attainable in the estimation of statistical parameters. Bulletin of the Calcutta Mathematical Society, 37, 81–91.
- [34]
- [35] SellersCommerce. (2025). Warehouse Automation Statistics 2025. https://www.sellerscommerce.com/blog/warehouse-automation-statistics/
- [36] Settles, B. (2009). Active learning literature survey. Computer Sciences Technical Report 1648, University of Wisconsin-Madison.
- [37] Sutton, R. S., & Barto, A. G. (2018). Reinforcement Learning: An Introduction (2nd ed.). MIT Press.
- [38] Tucker, G., Mnih, A., Maddison, C. J., Lawson, J., & Sohl-Dickstein, J. (2017). REBAR: Low-variance, unbiased gradient estimates for discrete latent variable models. In Advances in Neural Information Processing Systems (NeurIPS), 2017. https://arxiv.org/abs/1703.07370
- [39] Wirth, C., Fürnkranz, J., & Hüllermeier, E. (2017). A survey of preference-based reinforcement learning methods. Journal of Machine Learning Research, 18(136), 1–46. https://www.jmlr.org/papers/v18/16-634.html
- [40]
- [41]
- [42]
