CloudMamba: An Uncertainty-Guided Dual-Scale Mamba Network for Cloud Detection in Remote Sensing Imagery

Pith reviewed 2026-05-10 19:23 UTC · model grok-4.3

The pith

An uncertainty-guided two-stage dual-scale Mamba network improves cloud detection accuracy for thin and fragmented regions in remote sensing images.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

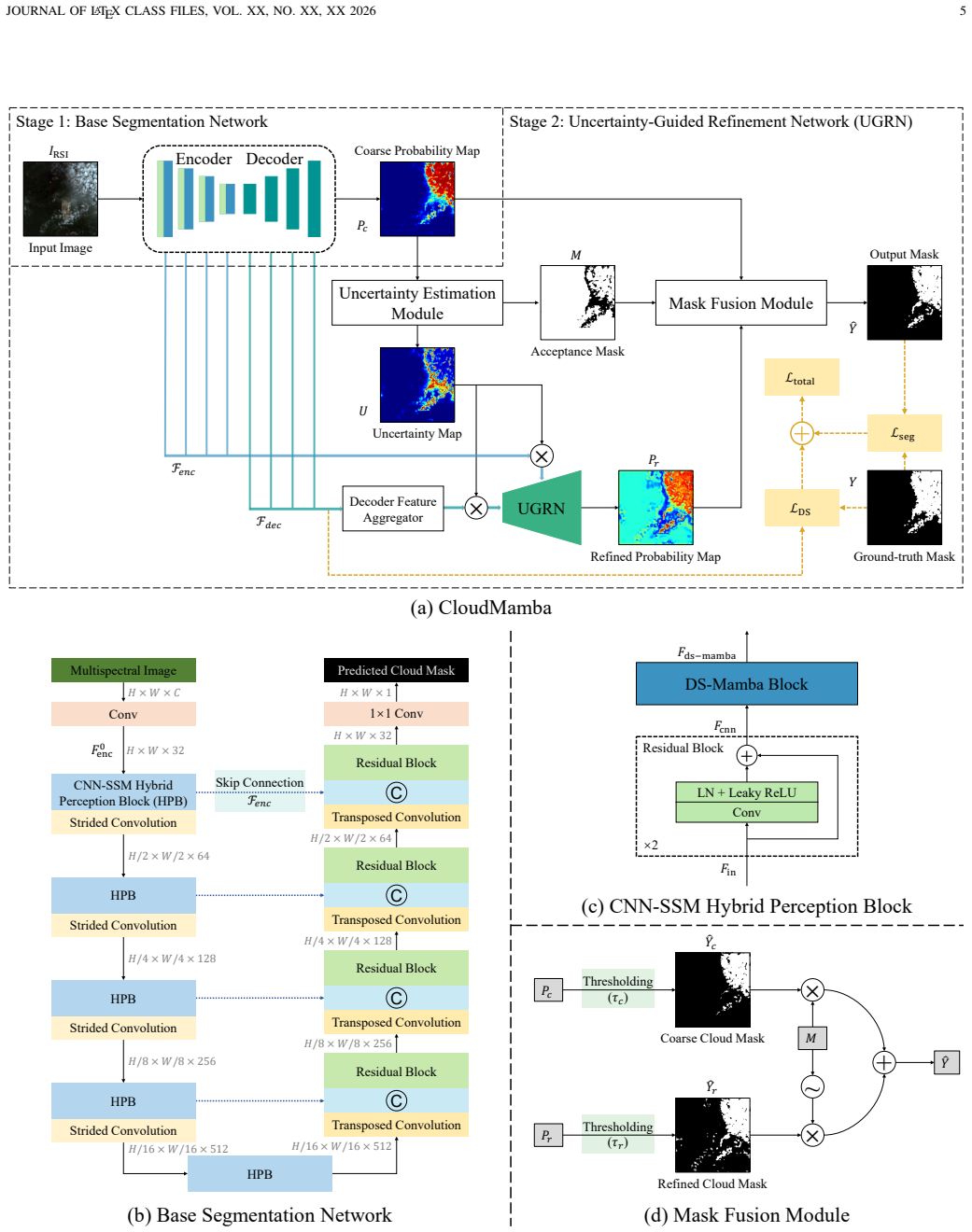

CloudMamba shows that embedding an uncertainty estimation module into a dual-scale CNN-Mamba architecture enables automatic detection of ambiguous thin-cloud and boundary regions, followed by targeted second-stage refinement that raises segmentation accuracy while preserving linear computational complexity.

What carries the argument

The uncertainty estimation module that identifies low-confidence thin-cloud and boundary pixels for a second-stage refinement inside a dual-scale CNN-Mamba hybrid network.
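The review does not say how the uncertainty estimation module scores confidence or how the refinement output is merged with the first pass. As a minimal sketch under two assumptions (per-pixel binary entropy as the confidence score, and a hypothetical entropy cutoff `threshold`), uncertainty-guided refinement could route only low-confidence pixels to a second-stage prediction:

```python
import numpy as np

def binary_entropy(p, eps=1e-7):
    """Per-pixel entropy (in bits) of a Bernoulli cloud-probability map.

    Assumption: entropy stands in for the paper's uncertainty score.
    Maximum is 1 bit at p = 0.5 (most ambiguous), near 0 for confident pixels.
    """
    p = np.clip(p, eps, 1.0 - eps)
    return -(p * np.log2(p) + (1.0 - p) * np.log2(1.0 - p))

def uncertainty_guided_merge(prob_stage1, prob_stage2, threshold=0.5):
    """Keep stage-1 labels where confident; take stage-2 labels elsewhere.

    prob_stage1, prob_stage2: cloud probabilities in [0, 1], same shape.
    threshold: hypothetical entropy cutoff (bits) defining "low confidence".
    Returns (binary labels, low-confidence mask).
    """
    uncertainty = binary_entropy(prob_stage1)
    low_conf = uncertainty > threshold
    # Confident pixels keep the first-pass decision; ambiguous ones are refined.
    labels = np.where(low_conf, prob_stage2 > 0.5, prob_stage1 > 0.5)
    return labels.astype(np.uint8), low_conf
```

The function names are illustrative; the paper's second stage may operate on features rather than only swapping final labels.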

If this is right

- Segmentation accuracy rises on standard remote sensing benchmarks for both overall cloud morphology and fine boundary details.

- Linear computational complexity supports faster processing of large satellite scenes compared with quadratic Transformer alternatives.

- Uncertainty maps provide built-in transparency by highlighting regions where the model is least confident.

- The two-stage design directly targets the ambiguity that single-pass methods leave unresolved in fragmented or thin clouds.
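The linear-versus-quadratic contrast can be made concrete with a simplified diagonal state-space recurrence of the kind used in Mamba-style selective scans. This is a toy sketch, not CloudMamba's block: real Mamba layers add input-dependent discretization and a hardware-aware parallel scan, and 2-D feature maps must first be flattened into 1-D sequences. What it shows is that each token triggers one fixed-size state update, whereas full self-attention scores every pair of tokens.

```python
import numpy as np

def diagonal_ssm_scan(x, a, b, c):
    """Simplified diagonal state-space recurrence over a 1-D sequence.

    x: (L,) inputs; a, b, c: (L, N) per-step parameters (input-dependent
    in selective SSMs, simply given here).
      h_t = a_t * h_{t-1} + b_t * x_t
      y_t = <c_t, h_t>
    Cost is O(L * N): one state update per token, no pairwise interactions.
    """
    L, N = a.shape
    h = np.zeros(N)
    y = np.empty(L)
    for t in range(L):
        h = a[t] * h + b[t] * x[t]
        y[t] = c[t] @ h
    return y

def attention_pair_count(num_tokens):
    """Number of query-key score entries in full self-attention (quadratic)."""
    return num_tokens * num_tokens
```

For example, a 512 × 512 tile split into 4 × 4 patches (a hypothetical setting) gives 128 × 128 = 16,384 tokens: the scan performs 16,384 state updates of size N, while full attention builds 16,384² ≈ 2.7 × 10⁸ score entries.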

Where Pith is reading between the lines

- The same uncertainty-plus-refinement pattern could be tested on other ambiguous segmentation problems, such as shadow or haze detection in aerial imagery.

- Linear scaling might allow on-board cloud detection on satellites with limited compute, reducing the need to downlink raw data.

- Combining the dual-scale Mamba backbone with additional state-space layers could further extend the range of captured spatial dependencies while keeping computational cost linear in the number of pixels.

Load-bearing premise

The uncertainty estimation module reliably flags thin-cloud and boundary regions as low-confidence so that the second-stage refinement improves accuracy rather than introducing new errors.
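One way to probe this premise, sketched here as an assumption rather than as the authors' protocol, is to measure how much of stage 1's error falls inside the low-confidence mask, and how many masked pixels the second stage fixes versus breaks. Inputs are binary arrays of equal shape; the function names are hypothetical.

```python
import numpy as np

def uncertainty_error_overlap(pred_stage1, target, low_conf):
    """How well a low-confidence mask lines up with stage-1 mistakes.

    Returns (error_recall, mask_precision):
      error_recall   = share of stage-1 errors that fall inside the mask
      mask_precision = share of masked pixels that stage 1 got wrong
    """
    errors = pred_stage1.astype(bool) != target.astype(bool)
    low_conf = low_conf.astype(bool)
    hits = np.logical_and(errors, low_conf).sum()
    error_recall = hits / errors.sum() if errors.sum() else float("nan")
    mask_precision = hits / low_conf.sum() if low_conf.sum() else float("nan")
    return float(error_recall), float(mask_precision)

def refinement_flips(pred_stage1, pred_stage2, target, low_conf):
    """Count masked pixels that stage 2 fixes vs. breaks relative to stage 1."""
    s1_ok = pred_stage1.astype(bool) == target.astype(bool)
    s2_ok = pred_stage2.astype(bool) == target.astype(bool)
    m = low_conf.astype(bool)
    fixed = int(np.sum(m & ~s1_ok & s2_ok))   # wrong before, right after
    broken = int(np.sum(m & s1_ok & ~s2_ok))  # right before, wrong after
    return fixed, broken
```

If the premise holds, the mask should show high error recall, and `fixed` should clearly exceed `broken` on thin-cloud and boundary pixels.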

What would settle it

If adding the second-stage refinement produces no gain or a loss in accuracy metrics specifically on thin-cloud and boundary subsets of the GF1_WHU or Levir_CS datasets, the central claim would be falsified.
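This test needs metrics that can be restricted to a pixel subset. A minimal sketch, assuming binary cloud masks and an optional boolean `region` (for example, pixels from a manually curated thin-cloud subset); the exact metric definitions used for GF1_WHU and Levir_CS in the paper may differ:

```python
import numpy as np

def cloud_metrics(pred, target, region=None):
    """OA, cloud IoU, mean IoU over {clear, cloud}, and cloud F1 for binary masks.

    region: optional boolean mask restricting evaluation to a pixel subset
    (e.g. thin-cloud or boundary pixels). Empty denominators yield NaN.
    """
    pred = pred.astype(bool)
    target = target.astype(bool)
    if region is not None:
        region = region.astype(bool)
        pred, target = pred[region], target[region]
    tp = np.sum(pred & target)
    fp = np.sum(pred & ~target)
    fn = np.sum(~pred & target)
    tn = np.sum(~pred & ~target)

    def safe(n, d):
        return float(n) / float(d) if d else float("nan")

    iou_cloud = safe(tp, tp + fp + fn)
    iou_clear = safe(tn, tn + fp + fn)
    return {
        "OA": safe(tp + tn, tp + fp + fn + tn),
        "IoU_cloud": iou_cloud,
        "mIoU": (iou_cloud + iou_clear) / 2.0,
        "F1_cloud": safe(2 * tp, 2 * tp + fp + fn),
    }
```

Running it once on stage-1 predictions and once on refined predictions with the same `region` gives the before/after comparison the test calls for.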

Original abstract

Cloud detection in remote sensing imagery is a fundamental, critical, and highly challenging problem. Existing deep learning-based cloud detection methods generally formulate it as a single-stage pixel-wise binary segmentation task with one forward pass. However, such single-stage approaches exhibit ambiguity and uncertainty in thin-cloud regions and struggle to accurately handle fragmented clouds and boundary details. In this paper, we propose a novel deep learning framework termed CloudMamba. To address the ambiguity in thin-cloud regions, we introduce an uncertainty-guided two-stage cloud detection strategy. An embedded uncertainty estimation module is proposed to automatically quantify the confidence of thin-cloud segmentation, and a second-stage refinement segmentation is introduced to improve the accuracy in low-confidence hard regions. To better handle fragmented clouds and fine-grained boundary details, we design a dual-scale Mamba network based on a CNN-Mamba hybrid architecture. Compared with Transformer-based models with quadratic computational complexity, the proposed method maintains linear computational complexity while effectively capturing both large-scale structural characteristics and small-scale boundary details of clouds, enabling accurate delineation of overall cloud morphology and precise boundary segmentation. Extensive experiments conducted on the GF1_WHU and Levir_CS public datasets demonstrate that the proposed method outperforms existing approaches across multiple segmentation accuracy metrics, while offering high efficiency and process transparency. Our code is available at https://github.com/jayoungo/CloudMamba.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes CloudMamba, a two-stage uncertainty-guided dual-scale Mamba network for cloud detection in remote sensing imagery. It introduces an embedded uncertainty estimation module to quantify confidence in thin-cloud regions followed by a refinement segmentation stage, combined with a CNN-Mamba hybrid architecture that captures large-scale cloud structures and fine boundary details at linear computational complexity. The authors claim that experiments on the GF1_WHU and Levir_CS datasets demonstrate outperformance over existing methods across segmentation metrics while providing high efficiency and process transparency, with code released publicly.

Significance. If the performance gains are substantiated and the uncertainty module delivers net-positive refinement on hard cases, the work would provide an efficient, linear-complexity alternative to Transformer-based cloud segmentation models. The emphasis on handling thin clouds and boundaries addresses a practical challenge in remote sensing, and the public code release supports reproducibility.

major comments (2)

- [Experimental evaluation] The central claim depends on the uncertainty-guided refinement stage improving accuracy in thin-cloud and boundary regions rather than introducing errors. However, only aggregate metrics (overall accuracy, mIoU, etc.) are reported on GF1_WHU and Levir_CS; no per-region breakdown for thin clouds, no thin-cloud-specific IoU/F1 scores, and no ablation isolating the second-stage refinement on the low-confidence mask are described. This leaves open whether the reported gains arise from the dual-scale backbone alone.

- [Abstract and Experimental Results] The abstract states that the method 'outperforms existing approaches across multiple segmentation accuracy metrics' but provides no quantitative tables, specific metric values, or error analysis. Without these in the main experimental section, the soundness of the outperformance claim cannot be verified from the provided summary.
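For the boundary breakdown requested in the first major comment, a common choice (assumed here; the report does not prescribe one) is a band of pixels within a fixed distance of cloud/clear transitions in the reference mask. A numpy-only sketch with a hypothetical `radius` parameter:

```python
import numpy as np

def _dilate(mask, radius):
    """Binary dilation with a (2r+1) x (2r+1) square window, numpy only."""
    h, w = mask.shape
    padded = np.pad(mask, radius, mode="constant", constant_values=False)
    out = np.zeros_like(mask, dtype=bool)
    for dy in range(2 * radius + 1):
        for dx in range(2 * radius + 1):
            out |= padded[dy:dy + h, dx:dx + w]
    return out

def boundary_band(target, radius=2):
    """Pixels within `radius` of a cloud/clear transition in the reference mask.

    Band = dilation(cloud) minus erosion(cloud), with erosion computed as the
    complement of the dilated clear-sky mask. Image borders are not treated
    as cloud boundaries.
    """
    target = target.astype(bool)
    dilated = _dilate(target, radius)
    eroded = ~_dilate(~target, radius)
    return dilated & ~eroded
```

The returned mask can restrict any per-pixel metric to boundary pixels, so boundary IoU or F1 can be reported alongside the aggregate numbers.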

minor comments (2)

- [Abstract] The phrase 'process transparency' is invoked in the abstract but is not defined or demonstrated (e.g., via visualization of uncertainty maps or intermediate outputs).

- [Method] Minor notation or figure clarity issues may exist in the dual-scale Mamba description; ensure all architectural diagrams clearly label the uncertainty module and refinement pathway.

Simulated Author's Rebuttal

We thank the referee for the constructive comments. We address each major point below and indicate the changes we will incorporate.

Referee: [Experimental evaluation] The central claim depends on the uncertainty-guided refinement stage improving accuracy in thin-cloud and boundary regions rather than introducing errors. However, only aggregate metrics (overall accuracy, mIoU, etc.) are reported on GF1_WHU and Levir_CS; no per-region breakdown for thin clouds, no thin-cloud-specific IoU/F1 scores, and no ablation isolating the second-stage refinement on the low-confidence mask are described. This leaves open whether the reported gains arise from the dual-scale backbone alone.

Authors: We agree that isolating the refinement stage's contribution is necessary. The manuscript already contains ablations comparing the dual-scale backbone to the full model (Section 4.3), but we will add a dedicated ablation that applies the second-stage refinement only on the low-confidence mask. We will also include thin-cloud-specific IoU and F1 scores computed on a manually curated subset of thin-cloud images from both datasets. These additions will clarify that the uncertainty-guided stage yields net-positive gains on hard regions. revision: yes

Referee: [Abstract and Experimental Results] The abstract states that the method 'outperforms existing approaches across multiple segmentation accuracy metrics' but provides no quantitative tables, specific metric values, or error analysis. Without these in the main experimental section, the soundness of the outperformance claim cannot be verified from the provided summary.

Authors: The experimental section of the full manuscript includes quantitative tables (Tables 1 and 2) reporting specific metric values (mIoU, F1, OA, etc.) and direct comparisons against prior methods on GF1_WHU and Levir_CS, together with runtime and parameter counts. Error analysis appears in the accompanying discussion. To improve clarity we will insert a short summary of the key metric improvements into the abstract while retaining its conventional length. revision: partial

Circularity Check

No significant circularity in derivation chain

The paper is an empirical architecture proposal for a CNN-Mamba hybrid network with an added uncertainty module and two-stage refinement. No equations, derivations, or first-principles results are presented that reduce to fitted parameters or self-citations by construction. Design choices are motivated by addressing limitations of prior single-stage methods, and claims rest on aggregate metrics from external public datasets (GF1_WHU, Levir_CS). No load-bearing self-citation chains, ansatzes smuggled via citation, or renaming of known results appear in the text.
