Recognition: unknown

InstrAct: Towards Action-Centric Understanding in Instructional Videos

Pith reviewed 2026-05-10 17:03 UTC · model grok-4.3

The pith

Filtering noisy captions and generating action-centric hard negatives lets video models prioritize motion over objects in instructional videos.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

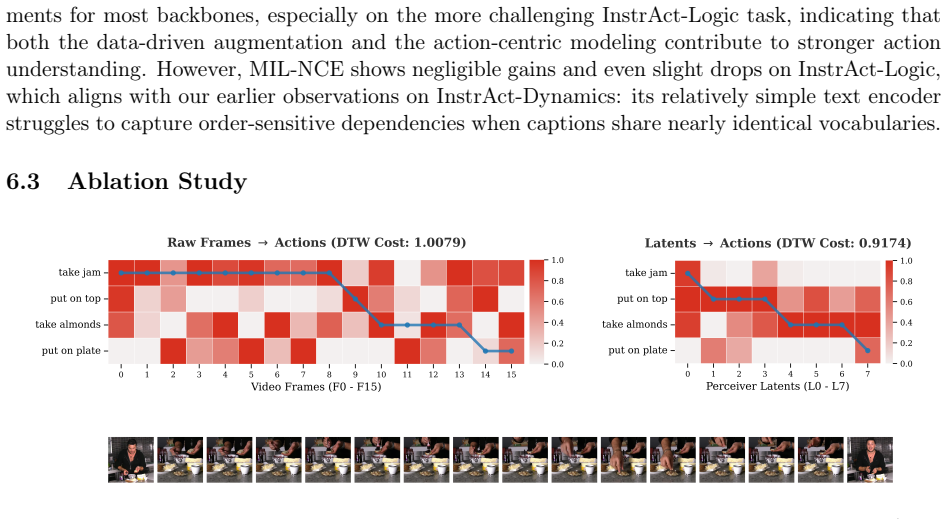

InstrAction establishes that a data-driven caption filter combined with action-centric hard negative generation, an Action Perceiver for motion tokens, Dynamic Time Warping alignment, and Masked Action Modeling produces representations that capture fine-grained actions and their temporal relations more effectively than prior video pretraining approaches.

What carries the argument

The Action Perceiver, which selects motion-relevant tokens from redundant video encodings to reduce static bias during contrastive pretraining with action-focused hard negatives.

Load-bearing premise

Filtering noisy captions and generating action-centric hard negatives will disentangle actions from objects without introducing new biases or discarding critical information.

What would settle it

Measure whether performance gains disappear on a controlled set of instructional videos that share the same objects and scenes but differ only in the performed actions.

Figures

read the original abstract

Understanding instructional videos requires recognizing fine-grained actions and modeling their temporal relations, which remains challenging for current Video Foundation Models (VFMs). This difficulty stems from noisy web supervision and a pervasive "static bias", where models rely on objects rather than motion cues. To address this, we propose InstrAction, a pretraining framework for instructional videos' action-centric representations. We first introduce a data-driven strategy, which filters noisy captions and generates action-centric hard negatives to disentangle actions from objects during contrastive learning. At the visual feature level, an Action Perceiver extracts motion-relevant tokens from redundant video encodings. Beyond contrastive learning, we introduce two auxiliary objectives: Dynamic Time Warping alignment (DTW-Align) for modeling sequential temporal structure, and Masked Action Modeling (MAM) for strengthening cross-modal grounding. Finally, we introduce the InstrAct Bench to evaluate action-centric understanding, where our method consistently outperforms state-of-the-art VFMs on semantic reasoning, procedural logic, and fine-grained retrieval tasks.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes InstrAct, a pretraining framework for action-centric representations in instructional videos to mitigate static bias in Video Foundation Models (VFMs). It describes a data-driven pipeline that filters noisy captions and generates action-centric hard negatives for contrastive learning, introduces an Action Perceiver module to extract motion-relevant tokens, and adds two auxiliary objectives: Dynamic Time Warping alignment (DTW-Align) for temporal structure and Masked Action Modeling (MAM) for cross-modal grounding. The work also introduces the InstrAct Bench and claims consistent outperformance over state-of-the-art VFMs on semantic reasoning, procedural logic, and fine-grained retrieval tasks.

Significance. If the reported outperformance is substantiated with rigorous controls, the framework's combination of caption filtering, hard-negative generation, and auxiliary temporal/cross-modal objectives could meaningfully advance action-centric video understanding beyond current VFMs. The new InstrAct Bench and explicitly introduced modules (Action Perceiver, DTW-Align, MAM) represent concrete contributions that could be adopted or extended by the community, particularly for instructional video applications.

major comments (1)

- [Abstract and Experimental Evaluation] The central claim of consistent outperformance on InstrAct Bench tasks rests on experimental validation, yet the abstract provides no quantitative results, baseline implementations, statistical significance tests, ablation studies, or details on data filtering criteria and hard-negative generation. This absence makes it impossible to determine whether gains arise from the proposed components or from unexamined biases in the pipeline.

minor comments (1)

- [Abstract] The abstract introduces several new terms (Action Perceiver, DTW-Align, MAM, InstrAct Bench) without brief definitions or high-level equations; adding one-sentence characterizations would improve immediate readability.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback. We address the major comment on the abstract and experimental evaluation below, agreeing that enhancements to the abstract will improve transparency.

read point-by-point responses

-

Referee: [Abstract and Experimental Evaluation] The central claim of consistent outperformance on InstrAct Bench tasks rests on experimental validation, yet the abstract provides no quantitative results, baseline implementations, statistical significance tests, ablation studies, or details on data filtering criteria and hard-negative generation. This absence makes it impossible to determine whether gains arise from the proposed components or from unexamined biases in the pipeline.

Authors: We agree that the abstract would benefit from including key quantitative results to better substantiate the claims of outperformance. In the revised manuscript, we will update the abstract to incorporate concise highlights of our main results on the InstrAct Bench (e.g., specific gains over prior VFMs on semantic reasoning, procedural logic, and retrieval tasks). The full paper already provides the requested details: baseline implementations and comparisons in Section 4.1, statistical significance testing in the results tables of Section 4, comprehensive ablation studies in Section 4.3, and explicit descriptions of the data filtering criteria and hard-negative generation process in Section 3.1. Adding these quantitative elements to the abstract will directly address the concern and make the central claims more immediately verifiable, while the body of the paper remains unchanged in its rigor. revision: yes

Circularity Check

No significant circularity detected

full rationale

The paper's core claims rest on a pipeline of explicitly introduced components (caption filtering for hard negatives, Action Perceiver, DTW-Align, MAM) applied to contrastive pretraining and evaluated on a newly constructed InstrAct Bench. No equations, fitted parameters, or self-citations are shown to reduce any prediction or uniqueness claim back to the inputs by construction. The outperformance statements are framed as empirical results on held-out tasks rather than tautological re-statements of the training objectives or prior self-work.

Axiom & Free-Parameter Ledger

free parameters (1)

- Hyperparameters for contrastive loss, DTW-Align, and MAM

axioms (2)

- domain assumption Action-centric hard negatives generated from captions can disentangle actions from objects during contrastive learning

- domain assumption DTW-Align and MAM strengthen temporal structure and cross-modal grounding

invented entities (2)

-

Action Perceiver

no independent evidence

-

InstrAct Bench

no independent evidence

Reference graph

Works this paper leans on

-

[1]

Flamingo: a visual language model for few-shot learning

Jean-Baptiste Alayrac, Jeff Donahue, Pauline Luc, Antoine Miech, Iain Barr, Yana Hasson, KarelLenc, ArthurMensch, KatieMillican, MatthewReynolds, RomanRing, ElizaRutherford, SerkanCabi, TengdaHan, ZhaohanGong, DavidMolyneaux, MishaDenil, OriolVinyals, Karen Simonyan, and Andrew Zisserman. Flamingo: a visual language model for few-shot learning. InAdvances...

2022

-

[2]

Frozen in time: A joint video and image encoder for end-to-end retrieval

Max Bain, Arsha Nagrani, Gül Varol, and Andrew Zisserman. Frozen in time: A joint video and image encoder for end-to-end retrieval. InProceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2021

2021

-

[3]

arXiv preprint arXiv:2504.13180 , year=

Jang Hyun Cho et al. Perceptionlm: Open-access data and models for detailed visual under- standing.arXiv preprint arXiv:2504.13180, 2025

-

[4]

Soft-dtw: a differentiable loss function for time-series

Marco Cuturi and Mathieu Blondel. Soft-dtw: a differentiable loss function for time-series. In Proceedings of the 34th International Conference on Machine Learning - Volume 70, ICML’17, page 894–903. JMLR.org, 2017

2017

-

[5]

Scaling egocentric vision: The epic-kitchens dataset

Dima Damen, Hazel Doughty, Giovanni Maria Farinella, Sanja Fidler, Antonino Furnari, Evan- gelos Kazakos, Davide Moltisanti, Jonathan Munro, Toby Perrett, Will Price, and Michael Wray. Scaling egocentric vision: The epic-kitchens dataset. InEuropean Conference on Com- puter Vision (ECCV), 2018. 13

2018

-

[6]

BERT: Pre-training of deepbidirectionaltransformersforlanguageunderstanding

Jacob Devlin, Ming-Wei Chang, Kenton Lee, and Kristina Toutanova. BERT: Pre-training of deepbidirectionaltransformersforlanguageunderstanding. InJillBurstein, ChristyDoran, and Thamar Solorio, editors,Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Lo...

2019

-

[7]

Unsupervised procedure learning via joint dynamic sum- marization

Ehsan Elhamifar and Zwe Naing. Unsupervised procedure learning via joint dynamic sum- marization. InProceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2019

2019

-

[8]

Albar: Adversarial learning approach to mitigate biases in action recognition

Joseph Fioresi, Ishan Rajendrakumar Dave, and Mubarak Shah. Albar: Adversarial learning approach to mitigate biases in action recognition. InThe Thirteenth International Conference on Learning Representations, 2025

2025

-

[9]

Something Some- thing

Raghav Goyal, Samira Ebrahimi Kahou, Vincent Michalski, Joanna Materzynska, Susanne Westphal, Heuna Kim, Valentin Haenel, Ingo Fruend, Peter Yianilos, Moritz Mueller-Freitag, Florian Hoppe, Christian Thurau, Ingo Bax, and Roland Memisevic. The “Something Some- thing” Video Database for Learning and Evaluating Visual Common Sense . In2017 IEEE Internationa...

2017

-

[10]

Ego4d: Around the world in 3,000 hours of egocentric video

Kristen Grauman, Andrew Westbury, Eugene Byrne, Zachary Chavis, Antonino Furnari, Rohit Girdhar, Jackson Hamburger, Hao Jiang, Miao Liu, Xingyu Liu, et al. Ego4d: Around the world in 3,000 hours of egocentric video. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 18995–19012, 2022

2022

-

[11]

Probing image-language transformers for verb un- derstanding

Lisa Anne Hendricks and Aida Nematzadeh. Probing image-language transformers for verb un- derstanding. In Chengqing Zong, Fei Xia, Wenjie Li, and Roberto Navigli, editors,Findings of the Association for Computational Linguistics: ACL-IJCNLP 2021, pages 3635–3644, Online, August 2021. Association for Computational Linguistics

2021

-

[12]

Perceiver: General perception with iterative attention

Andrew Jaegle, Felix Gimeno, Andy Brock, Oriol Vinyals, Andrew Zisserman, and Joao Car- reira. Perceiver: General perception with iterative attention. In Marina Meila and Tong Zhang, editors,Proceedings of the 38th International Conference on Machine Learning, volume 139 of Proceedings of Machine Learning Research, pages 4651–4664. PMLR, 18–24 Jul 2021

2021

-

[13]

The Kinetics Human Action Video Dataset

Will Kay, João Carreira, Karen Simonyan, Brian Zhang, Chloe Hillier, Sudheendra Vijaya- narasimhan, Fabio Viola, Tim Green, Amir Back, Paul Natsev, Mustafa Suleyman, and An- drew Zisserman. The kinetics human action video dataset. InarXiv preprint arXiv:1705.06950, 2017

work page internal anchor Pith review arXiv 2017

-

[14]

Kuehne, H

H. Kuehne, H. Jhuang, E. Garrote, T. Poggio, and T. Serre. Hmdb: A large video database for human motion recognition. In2011 International Conference on Computer Vision, pages 2556–2563, 2011

2011

-

[15]

Disentangled concepts speak louder than words: Explainable video action recognition

Jongseo Lee, Wooil Lee, Gyeong-Moon Park, Seong Tae Kim, and Jinwoo Choi. Disentangled concepts speak louder than words: Explainable video action recognition. InNeurIPS, 2025

2025

-

[16]

Mitigating and evaluating static bias of action representations in the background and the foreground

Haoxin Li, Yuan Liu, Hanwang Zhang, and Boyang Li. Mitigating and evaluating static bias of action representations in the background and the foreground. InInternational Conference on Computer Vision (ICCV), 2023. 14

2023

-

[17]

Junnan Li, Dongxu Li, Caiming Xiong, and Steven C. H. Hoi. Align before fuse: Vision and lan- guage representation learning with momentum distillation. InAdvances in Neural Information Processing Systems (NeurIPS), 2021

2021

-

[18]

ArXiv preprint abs/2002.06353 (2020)

Huaishao Luo, Lei Ji, Botian Shi, Haoyang Huang, Nan Duan, Tianrui Li, Jason Li, Taroon Bharti, and Ming Zhou. Univl: A unified video and language pre-training model for multimodal understanding and generation.arXiv preprint arXiv:2002.06353, 2020

-

[19]

Clip4clip: An empirical study of clip for end to end video clip retrieval and captioning.Neurocomputing, 508:293–304, 2022

Huaishao Luo, Lei Ji, Ming Zhong, Yang Chen, Wen Lei, Nan Duan, and Tianrui Li. Clip4clip: An empirical study of clip for end to end video clip retrieval and captioning.Neurocomputing, 508:293–304, 2022

2022

-

[20]

End-to-End Learning of Visual Representations from Uncurated Instructional Videos

AntoineMiech, Jean-BaptisteAlayrac, LucasSmaira, IvanLaptev, JosefSivic, andAndrewZis- serman. End-to-End Learning of Visual Representations from Uncurated Instructional Videos. InCVPR, 2020

2020

-

[21]

HowTo100M: Learning a Text-Video Embedding by Watching Hundred Million Narrated Video Clips

Antoine Miech, Dimitri Zhukov, Jean-Baptiste Alayrac, Makarand Tapaswi, Ivan Laptev, and Josef Sivic. HowTo100M: Learning a Text-Video Embedding by Watching Hundred Million Narrated Video Clips. InICCV, 2019

2019

-

[22]

Verbs in action: Improving verb understanding in video-language models

Liliane Momeni, Mathilde Caron, Arsha Nagrani, Andrew Zisserman, and Cordelia Schmid. Verbs in action: Improving verb understanding in video-language models. InInternational Conference on Computer Vision (ICCV), 2023

2023

-

[23]

Spoken Moments: Learning Joint Audio-Visual Representations from Video Descriptions

Mathew Monfort, SouYoung Jin, Alexander Liu, David Harwath, Rogerio Feris, James Glass, and Aude Oliva. Spoken Moments: Learning Joint Audio-Visual Representations from Video Descriptions . In2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 14866–14876, Los Alamitos, CA, USA, June 2021. IEEE Computer Society

2021

-

[24]

Exposing the limits of video-text models through contrast sets

Jae Sung Park, Sheng Shen, Ali Farhadi, Trevor Darrell, Yejin Choi, and Anna Rohrbach. Exposing the limits of video-text models through contrast sets. In Marine Carpuat, Marie- Catherine de Marneffe, and Ivan Vladimir Meza Ruiz, editors,Proceedings of the 2022 Confer- ence of the North American Chapter of the Association for Computational Linguistics: Hum...

2022

-

[25]

Learning transferable visual models from natural language supervision

Alec Radford, Jong Wook Kim, Chris Hallacy, Aditya Ramesh, Gabriel Goh, Sandhini Agarwal, Girish Sastry, Amanda Askell, Pamela Mishkin, Jack Clark, et al. Learning transferable visual models from natural language supervision. InInternational Conference on Machine Learning, pages 8748–8763. PMLR, 2021

2021

-

[26]

Recognizing human actions: A local svm approach

Christian Schuldt, Ivan Laptev, and Barbara Caputo. Recognizing human actions: A local svm approach. InProceedings of the 17th International Conference on Pattern Recognition (ICPR), pages 32–36, 2004

2004

-

[27]

UCF101: A Dataset of 101 Human Actions Classes From Videos in The Wild

Khurram Soomro, Amir Roshan Zamir, and Mubarak Shah. Ucf101: A dataset of 101 human actions classes from videos in the wild. InarXiv preprint arXiv:1212.0402, 2012

work page internal anchor Pith review arXiv 2012

-

[28]

Videobert: A joint model for video and language representation learning

Chen Sun, Fabien Baradel, Kevin Murphy, and Cordelia Schmid. Videobert: A joint model for video and language representation learning. InICCV, 2019. 15

2019

-

[29]

Coin: A large-scale dataset for comprehensive instructional video analysis

Yansong Tang, Dajun Ding, Yongming Rao, Yu Zheng, Danyang Zhang, Lili Zhao, Jiwen Lu, and Jie Zhou. Coin: A large-scale dataset for comprehensive instructional video analysis. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2019

2019

-

[30]

arXiv preprint arXiv:2307.06942 (2023)

Yi Wang, Yinan He, Yizhuo Li, Kunchang Li, Jiashuo Yu, Xin Ma, Xinyuan Chen, Yao- hui Wang, Ping Luo, Ziwei Liu, Yali Wang, Limin Wang, and Yu Qiao. Internvid: A large-scale video-text dataset for multimodal understanding and generation.arXiv preprint arXiv:2307.06942, 2023

-

[31]

Internvideo: General video foundation models via generative and discriminative learning

Yi Wang, Kunchang Li, Yizhuo Li, Yinan He, Bingkun Huang, Zhiyu Zhao, Hongjie Zhang, Jilan Xu, Yi Liu, Zun Wang, Sen Xing, Guo Chen, Junting Pan, Jiashuo Yu, Yali Wang, Limin Wang, and Yu Qiao. Internvideo: General video foundation models via generative and discriminative learning.arXiv preprint arXiv:2212.03191, 2022

-

[32]

Paxion: Patching action knowledge in video-language foundation models

ZhenhailongWang, AnselBlume, ShaLi, GenglinLiu, JaeminCho, ZinengTang, MohitBansal, and Heng Ji. Paxion: Patching action knowledge in video-language foundation models. In Advances in Neural Information Processing Systems, volume 36, 2023

2023

-

[33]

Videoclip: Contrastive pre-training for zero-shot video-text understanding

Hu Xu, Xiaolong Yang, Xiaoyu Tian, Guangyu Wang, Chen Deng, et al. Videoclip: Contrastive pre-training for zero-shot video-text understanding. InEMNLP, 2021

2021

-

[34]

Clip-vip: Adapting pre-trained image-text model to video-language representation alignment

Hongwei Xue, Yuchong Sun, Bei Fu, et al. Clip-vip: Adapting pre-trained image-text model to video-language representation alignment. InThe Eleventh International Conference on Learn- ing Representations (ICLR), 2023

2023

-

[35]

Learning fine-grained view-invariant representations from unpaired ego-exo videos via temporal alignment

Zihui Xue and Kristen Grauman. Learning fine-grained view-invariant representations from unpaired ego-exo videos via temporal alignment. InAdvances in Neural Information Processing Systems, volume 36, pages 25725–25740, 2023

2023

-

[36]

Coca: Contrastive captioners are image- text foundation models

Jiahui Yu, Zirui Wang, Vijay Vasudevan, Legg Yeung, Mojtaba Seyedhosseini, and Yonghui Wu. Coca: Contrastive captioners are image-text foundation models.arXiv preprint arXiv:2205.01917, 2022

-

[37]

When and why vision-language models behave like bags-of-words, and what to do about it? In International Conference on Learning Representations, 2023

Mert Yuksekgonul, Federico Bianchi, Pratyusha Kalluri, Dan Jurafsky, and James Zou. When and why vision-language models behave like bags-of-words, and what to do about it? In International Conference on Learning Representations, 2023

2023

-

[38]

Videoprism: A foundational visual encoder for video understanding

Long Zhao, Nitesh B Gundavarapu, Liangzhe Yuan, Hao Zhou, Shen Yan, Jennifer J Sun, Luke Friedman, Rui Qian, Tobias Weyand, Yue Zhao, et al. Videoprism: A foundational visual encoder for video understanding. InInternational Conference on Machine Learning (ICML), 2024

2024

-

[39]

Towards automatic learning of procedures from web instructional videos

Luowei Zhou, Chenliang Xu, and Jason J Corso. Towards automatic learning of procedures from web instructional videos. InAAAI, 2018

2018

-

[40]

Cross-task weakly supervised learning from instructional videos

Dimitri Zhukov, Jean-Baptiste Alayrac, Ramazan Gokberk Cinbis, David Fouhey, Ivan Laptev, and Josef Sivic. Cross-task weakly supervised learning from instructional videos. In2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 3532– 3540, 2019. 16

2019

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.