Recognition: unknown

CIR+CVN: Bridging LLM Semantic Understanding and Petri-Net Verification for Concurrent Programs

Pith reviewed 2026-05-10 16:49 UTC · model grok-4.3

The pith

LLMs synthesize an alias-free concurrency model from specifications that Petri nets can verify and repair

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The authors formalize the Concurrency Intermediate Representation as a statement-level alias-free model and the Concurrency Verification Net as a weighted place/transition Petri net with a finite global store and three-valued guards. They prove translation-correspondence results for deadlock and signal-loss analysis and define a two-layer checking architecture consisting of 61 static rules and 5 analysis predicates. Evaluation on nine representative bounded-concurrency patterns shows that the pipeline supports iterative bug detection and repair on the generated artifacts, with the goal-reachability check helping to filter semantically incomplete repairs. The trust boundary remains the g

What carries the argument

The Concurrency Intermediate Representation (CIR) and its mechanical translation to the Concurrency Verification Net (CVN), which together allow LLM-generated models to undergo Petri-net based exhaustive checking and counterexample-guided repair

If this is right

- Counterexamples identified in the CVN can be mapped back to statement identifiers in the CIR to direct targeted repairs

- The goal-reachability check over designated critical outcomes filters repairs that would otherwise drop required behaviors

- Correspondence proofs ensure that deadlock and signal-loss results from the net apply to the CIR model

- The combination of static rules and dynamic predicates provides layered coverage of concurrency properties

- The architecture enables iterative refinement on bounded-concurrency patterns

Where Pith is reading between the lines

- This model-first strategy could reduce the semantic gaps that arise when LLMs attempt to write concurrent code directly

- The explicit structure of the CIR might make it easier to integrate with other formal tools beyond Petri nets

- If the synthesis step is reliable, similar pipelines could apply to other domains where intermediate representations simplify verification

Load-bearing premise

The large language model can reliably produce a valid alias-free Concurrency Intermediate Representation from natural language that captures the intended concurrency behavior without undetectable semantic gaps

What would settle it

An experiment that finds an LLM-generated CIR passing all verification checks and the goal-reachability test, but whose corresponding source-code implementation still contains a deadlock or signal-loss bug not reflected in the model

Figures

read the original abstract

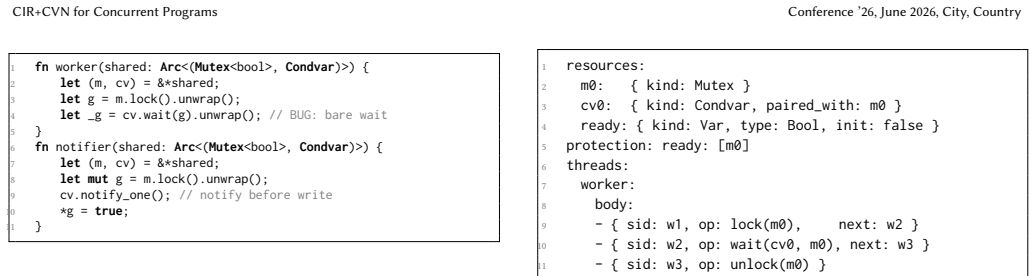

Recovering concurrency structure directly from source code is difficult because shared-resource identity and protection relations are often obscured by aliasing, ownership, and API-specific idioms. We therefore study a specification-driven, model-first verification architecture for LLM-assisted concurrent program construction. Instead of verifying arbitrary source code, a large language model first synthesizes a verification-oriented concurrency artifact from a natural-language requirement or system specification. The first formalism, the Concurrency Intermediate Representation (Cir), is a statement-level, alias-free model in which shared resources are globally named, protection relations are explicit, and each statement carries a stable identifier. The second formalism, the Concurrency Verification Net (Cvn), is a weighted place/transition Petri net with a finite global store and three-valued guards for data-dependent branching. A validated Cir artifact is translated mechanically to Cvn, explored exhaustively, and any counterexample is mapped back to statement identifiers to guide targeted repair. To reduce the risk of bug-free but behavior-dropping repairs, acceptance additionally applies a lightweight goal-reachability check over designated critical outcomes. We formalize both representations, prove translation-correspondence results for deadlock and signal-loss analysis, define a two-layer checking architecture with 61 static rules and 5 analysis predicates, and evaluate the pipeline on 9 representative bounded-concurrency patterns. The results show that the method supports iterative bug detection and repair on Cir artifacts and that goal reachability helps filter semantically incomplete repairs. The trust boundary of the present work is the generated Cir artifact rather than arbitrary source code.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces a specification-driven verification pipeline for concurrent programs that uses large language models to synthesize a Concurrency Intermediate Representation (CIR) from natural-language requirements. CIR is formalized as an alias-free, statement-level model with explicit global naming of shared resources and protection relations. This CIR is validated against 61 static rules and 5 predicates before mechanical translation to a Concurrency Verification Net (CVN), a weighted Petri net with finite store and three-valued guards. Translation correspondence is proved for deadlock and signal-loss properties. Counterexamples from CVN exploration are mapped back to CIR statements for targeted repair, augmented by goal-reachability checks to avoid behavior-dropping fixes. The pipeline is evaluated on 9 bounded-concurrency patterns, demonstrating iterative detection and repair capabilities within the generated CIR artifacts.

Significance. If the central claims hold, the work provides a novel bridge between LLM-based semantic synthesis and formal Petri-net verification for concurrency, addressing the challenges of aliasing and API idioms in direct source-code verification. The formal definitions, correspondence proofs, and two-layer checking architecture represent a substantive contribution to model-driven concurrent program construction. The evaluation illustrates practical benefits of goal reachability in filtering repairs. The approach's significance is currently bounded by its reliance on the quality of LLM-generated CIR rather than direct applicability to arbitrary codebases.

major comments (2)

- The results presuppose that LLM-synthesized CIR artifacts are faithful to the input natural-language specifications. However, the evaluation is limited to 9 patterns in which the LLM produced usable artifacts; no data is provided on the frequency of undetected semantic gaps (e.g., incorrect protection relations or missing data dependencies) that might survive the static rules and yield an internally consistent but semantically incorrect CVN.

- No formal completeness result or additional empirical validation is given to show that the 61 static rules and 5 predicates are sufficient to detect all possible semantic mismatches introduced during LLM synthesis from natural language, which is critical for the trust boundary claimed in the abstract.

minor comments (1)

- The notation for three-valued guards and the finite global store in the CVN definition would benefit from a concrete small example to illustrate data-dependent branching.

Simulated Author's Rebuttal

We thank the referee for the constructive comments and the recognition of the novel aspects of the CIR+CVN pipeline. We address each major comment point by point below, maintaining the manuscript's stated trust boundary at the generated CIR artifact.

read point-by-point responses

-

Referee: The results presuppose that LLM-synthesized CIR artifacts are faithful to the input natural-language specifications. However, the evaluation is limited to 9 patterns in which the LLM produced usable artifacts; no data is provided on the frequency of undetected semantic gaps (e.g., incorrect protection relations or missing data dependencies) that might survive the static rules and yield an internally consistent but semantically incorrect CVN.

Authors: The manuscript explicitly positions the trust boundary at the generated CIR artifact rather than arbitrary source code or LLM synthesis fidelity (abstract and introduction). The evaluation on the 9 patterns demonstrates the verification, counterexample mapping, goal-reachability filtering, and repair capabilities assuming a usable CIR input, as the pipeline is specification-driven. We do not provide frequency data on undetected semantic gaps because such gaps fall outside the claimed scope; the static rules and predicates enforce only the internal well-formedness of CIR (alias-freeness, global naming, explicit protections) before CVN translation. Semantic alignment with natural language is an orthogonal LLM concern not addressed here. This limitation is already noted in the referee's significance summary. No revision is required. revision: no

-

Referee: No formal completeness result or additional empirical validation is given to show that the 61 static rules and 5 predicates are sufficient to detect all possible semantic mismatches introduced during LLM synthesis from natural language, which is critical for the trust boundary claimed in the abstract.

Authors: The 61 rules and 5 predicates implement a two-layer check for CIR conformance to its formal definition (alias-free statements, explicit resource protections, consistency predicates), enabling the proved translation correspondence to CVN for deadlock and signal-loss properties. They are not claimed to be complete detectors of every possible semantic mismatch with natural-language input, as the space of LLM synthesis deviations is unbounded and a general completeness result is not feasible. The trust boundary remains the CIR, so the rules provide practical internal validation sufficient for the pipeline's soundness claims. We can partially revise the discussion section to further emphasize this distinction and the rules' design rationale. revision: partial

Circularity Check

No significant circularity in derivation chain

full rationale

The paper defines two new formalisms (Cir as alias-free statement-level model and CVN as weighted Petri net), proves mechanical translation correspondence for deadlock and signal-loss, specifies 61 static rules plus 5 predicates, and evaluates the pipeline empirically on 9 bounded patterns. No derivation reduces a claimed result to a fitted parameter, self-referential definition, or load-bearing self-citation. The central claims about iterative detection/repair and goal-reachability filtering rest on the introduced representations and external evaluation rather than on quantities defined in terms of themselves. The trust boundary is explicitly stated as the generated Cir artifact.

Axiom & Free-Parameter Ledger

invented entities (2)

-

Concurrency Intermediate Representation (CIR)

no independent evidence

-

Concurrency Verification Net (CVN)

no independent evidence

Reference graph

Works this paper leans on

-

[1]

Rajamani

Thomas Ball and Sriram K. Rajamani. 2002. The SLAM project: debugging system software via static analysis. InProceedings of the 29th ACM SIGPLAN- SIGACT Symposium on Principles of Programming Languages (POPL). ACM, 1–3. https://dblp.org/rec/conf/popl/BallR02

2002

-

[2]

Erkan Keremoglu

Dirk Beyer and M. Erkan Keremoglu. 2011. CPAchecker: A Tool for Config- urable Software Verification. InComputer Aided Verification, 23rd International Conference (CA V). Springer, 184–190. https://dblp.org/rec/conf/cav/BeyerK11

2011

-

[3]

Devanbu, and Michael Pradel

Islem Bouzenia, Premkumar T. Devanbu, and Michael Pradel. 2025. RepairAgent: An Autonomous, LLM-Based Agent for Program Repair. InProceedings of the 47th IEEE/ACM International Conference on Software Engineering (ICSE). IEEE/ACM, 2188–2200. https://dblp.org/rec/conf/icse/BouzeniaDP25

2025

-

[4]

Chandrasekhar Boyapati, Robert Lee, and Martin C. Rinard. 2002. Ownership types for safe programming: preventing data races and deadlocks. InProceedings of the 17th ACM SIGPLAN Conference on Object-Oriented Programming, Systems, Languages, and Applications (OOPSLA). ACM, 211–230. https://dblp.org/rec/ conf/oopsla/BoyapatiLR02

2002

-

[5]

Sebastian Burckhardt, Pravesh Kothari, Madanlal Musuvathi, and Santosh Na- garakatte. 2010. A randomized scheduler with probabilistic guarantees of finding bugs. InProceedings of the 15th International Conference on Architectural Support for Programming Languages and Operating Systems (ASPLOS). ACM, 167–178. https://dblp.org/rec/conf/asplos/BurckhardtKMN10

2010

-

[6]

Mark Chen, Jerry Tworek, Heewoo Jun, et al. 2021. Evaluating Large Language Models Trained on Code.CoRRabs/2107.03374 (2021). https://dblp.org/rec/ journals/corr/abs-2107-03374

work page internal anchor Pith review Pith/arXiv arXiv 2021

-

[7]

In Computer Aided Verification (Lecture Notes in Computer Science, Vol

Edmund M. Clarke, Orna Grumberg, Somesh Jha, Yuan Lu, and Helmut Veith. 2000. Counterexample-Guided Abstraction Refinement. InComputer Aided Verification, 12th International Conference (CA V). Springer, 154–169. doi:10.1007/10722167_15

-

[8]

Dawson R. Engler and Ken Ashcraft. 2003. RacerX: effective, static detection of race conditions and deadlocks. InProceedings of the 19th ACM Symposium on Conference ’26, June 2026, City, Country Kaiwen Zhang and Guanjun Liu Table 12:Cirerror categories (61 rules total). Code Category Examples E0xx Structural Missing fields, invalid format E1xx Name resolu...

-

[9]

2008.Unfoldings - A Partial-Order Approach to Model Checking

Javier Esparza and Keijo Heljanko. 2008.Unfoldings - A Partial-Order Approach to Model Checking. Springer. https://dblp.org/rec/series/eatcs/EsparzaH08

2008

-

[10]

Javier Esparza, Stefan Römer, and Walter Vogler. 2002. An Improvement of McMillan’s Unfolding Algorithm.Formal Methods in System Design20, 3 (2002), 285–310. https://dblp.org/rec/journals/fmsd/EsparzaRV02

2002

-

[11]

Zhiyu Fan, Xiang Gao, Martin Mirchev, Abhik Roychoudhury, and Shin Hwei Tan

-

[12]

Automated program repair in the era of large pre-trained language models

Automated Repair of Programs from Large Language Models. InProceedings of the 45th IEEE/ACM International Conference on Software Engineering (ICSE). IEEE/ACM, 1469–1481. doi:10.1109/ICSE48619.2023.00128

-

[13]

Cormac Flanagan and Stephen N. Freund. 2000. Type-based race detection for Java. InProceedings of the ACM SIGPLAN 2000 Conference on Programming Language Design and Implementation (PLDI). ACM, 219–232. https://dblp.org/ rec/conf/pldi/FlanaganF00

2000

-

[14]

Cormac Flanagan and Patrice Godefroid. 2005. Dynamic partial-order reduction for model checking software. InProceedings of the 32nd ACM SIGPLAN-SIGACT Symposium on Principles of Programming Languages (POPL). ACM, 110–121. https: //dblp.org/rec/conf/popl/FlanaganG05

2005

-

[15]

Cormac Flanagan and Shaz Qadeer. 2003. A type and effect system for atomicity. InProceedings of the ACM SIGPLAN 2003 Conference on Programming Language Design and Implementation (PLDI). ACM, 338–349. https://dblp.org/rec/conf/ pldi/FlanaganQ03

2003

-

[16]

Patrice Godefroid. 1997. VeriSoft: A Tool for the Automatic Analysis of Concur- rent Reactive Software. InComputer Aided Verification, 9th International Confer- ence (CA V). Springer, 476–479. https://dblp.org/rec/conf/cav/Godefroid97

1997

-

[17]

Henzinger, Ranjit Jhala, Rupak Majumdar, and Grégoire Sutre

Thomas A. Henzinger, Ranjit Jhala, Rupak Majumdar, and Grégoire Sutre. 2003. Software Verification with BLAST. InModel Checking Software, 10th Inter- national SPIN Workshop. Springer, 235–239. https://dblp.org/rec/conf/spin/ HenzingerJMS03

2003

-

[18]

Gerard J. Holzmann. 1997. The Model Checker SPIN.IEEE Transactions on Software Engineering23, 5 (1997), 279–295. doi:10.1109/32.588521

-

[19]

Kurt Jensen, Lars Michael Kristensen, and Lisa Wells. 2007. Coloured Petri Nets and CPN Tools for modelling and validation of concurrent systems.International Journal on Software Tools for Technology Transfer9, 3–4 (2007), 213–254. https: //dblp.org/rec/journals/sttt/JensenKW07

2007

-

[20]

Yingling Li, Muxin Cai, Junjie Chen, Yang Xu, Lei Huang, and Jianping Li. 2025. Context-aware prompting for LLM-based program repair.Automated Software Engineering32, 2 (2025), 42. doi:10.1007/S10515-025-00512-W

-

[21]

Tadao Murata. 1989. Petri nets: Properties, analysis and applications.Proc. IEEE 77, 4 (1989), 541–580. https://dblp.org/rec/journals/pieee/Murata89

1989

-

[22]

Madanlal Musuvathi and Shaz Qadeer. 2008. Fair stateless model checking. In Proceedings of the ACM SIGPLAN 2008 Conference on Programming Language Design and Implementation (PLDI). ACM, 362–371. https://dblp.org/rec/conf/ pldi/MusuvathiQ08

2008

-

[23]

Madanlal Musuvathi, Shaz Qadeer, Thomas Ball, Gérard Basler, Pira- manayagam Arumuga Nainar, and Iulian Neamtiu. 2008. Finding and Reproducing Heisenbugs in Concurrent Programs. InProceedings of the 8th USENIX Symposium on Operating Systems Design and Implementation (OSDI). USENIX Association, 267–280. https://dblp.org/rec/conf/osdi/MusuvathiQBBNN08

2008

-

[24]

Mayur Naik, Alex Aiken, and John Whaley. 2006. Effective static race detection for Java. InProceedings of the ACM SIGPLAN 2006 Conference on Programming Language Design and Implementation (PLDI). ACM, 308–319. doi:10.1145/1133981. 1134018

-

[25]

George C. Necula. 2000. Translation validation for an optimizing compiler. In Proceedings of the ACM SIGPLAN 2000 Conference on Programming Language Design and Implementation (PLDI). ACM, 83–94. https://dblp.org/rec/conf/pldi/ Necula00

2000

-

[26]

Foster, and Michael W

Polyvios Pratikakis, Jeffrey S. Foster, and Michael W. Hicks. 2006. LOCKSMITH: context-sensitive correlation analysis for race detection. InProceedings of the ACM SIGPLAN 2006 Conference on Programming Language Design and Implementation (PLDI). ACM, 320–331. https://dblp.org/rec/conf/pldi/PratikakisFH06

2006

-

[27]

Chunqiu Steven Xia, Yuxiang Wei, and Lingming Zhang. 2023. Automated Program Repair in the Era of Large Pre-trained Language Models. InProceedings of the 45th IEEE/ACM International Conference on Software Engineering (ICSE). IEEE/ACM, 1482–1494. doi:10.1109/ICSE48619.2023.00129 A Reference Tables CIR+CVN for Concurrent Programs Conference ’26, June 2026, ...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.