Recognition: 2 theorem links

· Lean TheoremBiologically-Grounded Multi-Encoder Architectures as Developability Oracles for Antibody Design

Pith reviewed 2026-05-10 16:11 UTC · model grok-4.3

The pith

CrossAbSense oracles improve antibody developability predictions by 12-20% on three of five assays using property-specific attention decoders.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The central claim is that decoder architecture should be chosen according to the biological dependency of each developability property: self-attention suffices when the relevant sequence features reside within one chain and are already resolved by the 6B-scale encoder, while bidirectional cross-attention is necessary when the property requires assessing compatibility between heavy and light chains. This inversion of the initial hypothesis is confirmed both by the performance differentials on the 242-IgG benchmark and by the independently learned chain-fusion weights (heavy-chain weight 0.62 for aggregation versus 0.51 for stability). The resulting oracles are then applied to 100 sequences, 0

What carries the argument

CrossAbSense oracles that combine a frozen protein language model encoder with a configurable attention decoder; the decoder switches between self-attention for single-chain properties and bidirectional cross-attention for inter-chain properties.

If this is right

- Self-attention decoders are sufficient and optimal for aggregation-related assays because the necessary hydrophobic patches are already encoded in single-chain representations.

- Bidirectional cross-attention is required for accurate prediction of expression yield and thermal stability because these properties depend on heavy-light chain compatibility.

- Learned fusion weights independently corroborate heavy-chain dominance (weight 0.62) for aggregation versus balanced contributions (weight 0.51) for stability.

- Deployment on 100 generative-model designs shows a concrete route to ranking candidates before expensive biophysical assays.

Where Pith is reading between the lines

- The same encoder-decoder selection logic could be tested on other multi-domain or multi-chain proteins where intra- versus inter-domain signals differ.

- Generative antibody design loops could embed these oracles to reject low-developability sequences during proposal rather than after synthesis.

- The observed encoder capacity effect suggests that similar architecture searches on even larger frozen models might further reduce the need for cross-attention on some properties.

Load-bearing premise

The GDPa1 benchmark of 242 therapeutic IgGs together with the 100 IgLM-generated designs are representative of the de novo sequences that future generative models will produce.

What would settle it

A new test set of antibody sequences drawn from additional generative models or from a wider range of therapeutic and non-therapeutic IgGs on which the reported accuracy gains disappear or the preferred decoder architectures reverse would falsify the central claim.

Figures

read the original abstract

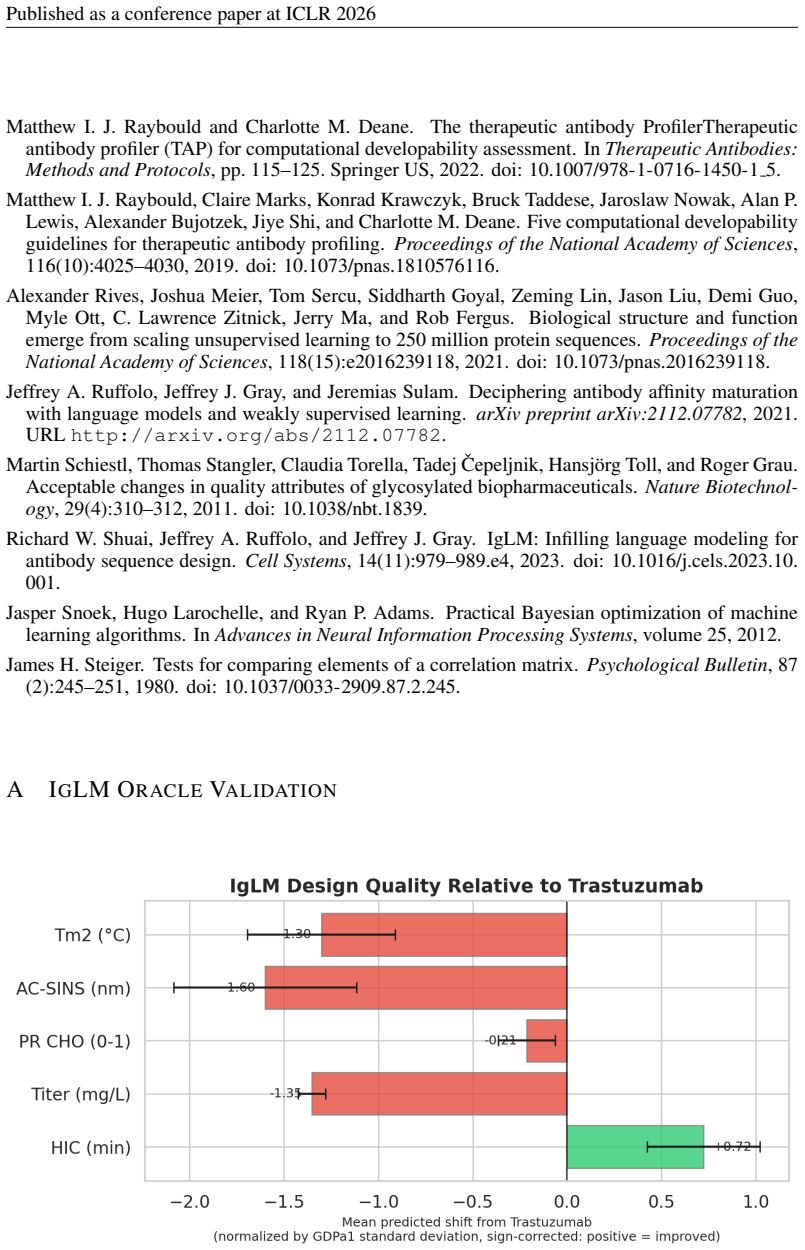

Generative models can now propose thousands of \emph{de novo} antibody sequences, yet translating these designs into viable therapeutics remains constrained by the cost of biophysical characterization. Here we present CrossAbSense, a framework of property-specific neural oracles that combine frozen protein language model encoders with configurable attention decoders, identified through a systematic hyperparameter campaign totaling over 200 runs per property. On the GDPa1 benchmark of 242 therapeutic IgGs, our oracles achieve notable improvements of 12--20\% over established baselines on three of five developability assays and competitive performance on the remaining two. The central finding is that optimal decoder architectures \emph{invert} our initial biological hypotheses: self-attention alone suffices for aggregation-related properties (hydrophobic interaction chromatography, polyreactivity), where the relevant sequence signatures -- such as CDR-H3 hydrophobic patches -- are already fully resolved within single-chain embeddings by the high-capacity 6B encoder. Bidirectional cross-attention, by contrast, is required for expression yield and thermal stability -- properties that inherently depend on the compatibility between heavy and light chains. Learned chain fusion weights independently confirm heavy-chain dominance in aggregation ($w_H = 0.62$) versus balanced contributions for stability ($w_H = 0.51$). We demonstrate practical utility by deploying CrossAbSense on 100 IgLM-generated antibody designs, illustrating a path toward substantial reduction in experimental screening costs.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper presents CrossAbSense, a set of property-specific neural oracles for antibody developability that pair frozen protein language model encoders with configurable attention-based decoders. A hyperparameter search exceeding 200 runs per property identifies optimal architectures on the GDPa1 benchmark of 242 therapeutic IgGs, yielding reported improvements of 12-20% over baselines on three of five assays (hydrophobic interaction chromatography, polyreactivity, and others) with competitive results on the remaining two. The central claim is that decoder architectures invert initial biological hypotheses: self-attention suffices for aggregation properties while bidirectional cross-attention is required for expression yield and thermal stability, corroborated by learned heavy-chain fusion weights (w_H = 0.62 for aggregation vs. 0.51 for stability). Practical utility is shown by applying the oracles to 100 IgLM-generated designs.

Significance. If the empirical gains prove robust, the framework could meaningfully reduce experimental screening burden for de novo antibody candidates by providing fast, property-specific filters. The systematic decoder ablation and the observation that high-capacity single-chain encoders already capture aggregation signals are potentially useful contributions to multi-chain modeling. The work also supplies a concrete demonstration on generated sequences, which aligns with current needs in generative antibody design.

major comments (2)

- [GDPa1 benchmark evaluation and hyperparameter search description] The reported 12-20% improvements and the architecture-inversion conclusion both rest on selecting the best decoder configuration after >200 runs per assay directly on the 242-IgG GDPa1 set. The manuscript provides no information on whether a strictly held-out test partition was reserved for final reporting or whether nested cross-validation was used (see the GDPa1 evaluation and hyperparameter campaign sections). Without this separation, the selected models can exploit benchmark-specific noise, directly undermining the central performance numbers and the biological interpretation of the fusion weights.

- [Abstract and GDPa1 results] Abstract and Results: the percentage gains are presented without error bars, explicit baseline definitions, or statistical tests for significance. This makes it impossible to determine whether the lifts are robust to data splits or choice of baselines, which is load-bearing for the claim that the oracles outperform established methods on three of five assays.

minor comments (2)

- [Abstract] The abstract refers to 'established baselines' without naming them or citing their original sources in the main text; adding a clear table of baseline methods and references would improve reproducibility.

- [Methods] Notation for the chain fusion weights (w_H) is introduced without an explicit equation; including a short methods equation for the weighted aggregation would clarify how the reported values (0.62 vs 0.51) are computed.

Simulated Author's Rebuttal

Thank you for the constructive feedback on our manuscript. We address each major comment below and describe the revisions we will make to improve the rigor of our evaluation protocol and statistical reporting.

read point-by-point responses

-

Referee: [GDPa1 benchmark evaluation and hyperparameter search description] The reported 12-20% improvements and the architecture-inversion conclusion both rest on selecting the best decoder configuration after >200 runs per assay directly on the 242-IgG GDPa1 set. The manuscript provides no information on whether a strictly held-out test partition was reserved for final reporting or whether nested cross-validation was used (see the GDPa1 evaluation and hyperparameter campaign sections). Without this separation, the selected models can exploit benchmark-specific noise, directly undermining the central performance numbers and the biological interpretation of the fusion weights.

Authors: We acknowledge that the current manuscript does not specify the use of a held-out test set or nested cross-validation for the hyperparameter search, which was performed on the full GDPa1 benchmark of 242 samples to identify optimal decoder architectures per property. Given the modest dataset size, this approach maximized data utilization for architecture selection, but we agree it leaves open the possibility of overfitting to benchmark-specific characteristics. In the revised manuscript, we will implement nested cross-validation (outer loop for unbiased performance estimation, inner loop for hyperparameter tuning across the >200 runs per assay) and re-report all metrics, including the 12-20% improvements and learned fusion weights (w_H), under this protocol. This will also allow us to confirm whether the architecture-inversion finding (self-attention for aggregation vs. cross-attention for stability) holds under more robust evaluation. revision: yes

-

Referee: [Abstract and GDPa1 results] Abstract and Results: the percentage gains are presented without error bars, explicit baseline definitions, or statistical tests for significance. This makes it impossible to determine whether the lifts are robust to data splits or choice of baselines, which is load-bearing for the claim that the oracles outperform established methods on three of five assays.

Authors: We agree that the absence of error bars, explicit baseline specifications, and significance testing weakens the presentation of the performance claims. In the revised version, we will: (1) explicitly define all baseline models and their training details in the Methods and Results sections; (2) report all metrics with standard deviations derived from the nested cross-validation folds; and (3) include appropriate statistical tests (e.g., paired t-tests across folds) to evaluate the significance of the 12-20% gains on the three assays. The abstract will be updated to reflect these additions while preserving the core claims, ensuring the results are presented with full transparency and robustness. revision: yes

Circularity Check

No circularity: empirical benchmark results with no derivation reducing to inputs by construction

full rationale

The paper presents an empirical ML framework evaluated on the external GDPa1 benchmark of 242 therapeutic IgGs, reporting performance improvements after hyperparameter tuning. No mathematical derivation, first-principles prediction, or self-citation chain is described in the provided text that reduces to fitted parameters or prior author results by construction. The central claims are performance numbers and architectural observations on held-out or benchmark data, which remain independent of the inputs even if hyperparameter search risks overfitting. This is the standard case of a self-contained empirical study against external benchmarks.

Axiom & Free-Parameter Ledger

free parameters (2)

- decoder hyperparameters

- chain fusion weights

axioms (2)

- domain assumption Frozen 6B PLM encoder already encodes all relevant single-chain signals for aggregation properties

- domain assumption GDPa1 242-IgG set is representative of developability for de novo designs

Lean theorems connected to this paper

-

IndisputableMonolith/Cost/FunctionalEquation.leanwashburn_uniqueness_aczel unclear?

unclearRelation between the paper passage and the cited Recognition theorem.

CrossAbSense framework of property-specific neural oracles that combine frozen protein language model encoders with configurable attention decoders

-

IndisputableMonolith/Foundation/AbsoluteFloorClosure.leanabsolute_floor_iff_bare_distinguishability unclear?

unclearRelation between the paper passage and the cited Recognition theorem.

self-attention alone suffices for aggregation-related properties... bidirectional cross-attention required for expression yield and thermal stability

What do these tags mean?

- matches

- The paper's claim is directly supported by a theorem in the formal canon.

- supports

- The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.

- extends

- The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.

- uses

- The paper appears to rely on the theorem as machinery.

- contradicts

- The paper's claim conflicts with a theorem or certificate in the canon.

- unclear

- Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.

Reference graph

Works this paper leans on

-

[1]

write newline

" write newline "" before.all 'output.state := FUNCTION n.dashify 't := "" t empty not t #1 #1 substring "-" = t #1 #2 substring "--" = not "--" * t #2 global.max substring 't := t #1 #1 substring "-" = "-" * t #2 global.max substring 't := while if t #1 #1 substring * t #2 global.max substring 't := if while FUNCTION format.date year duplicate empty "emp...

-

[2]

Ammar Arsiwala, Rebecca Bhatt, Lood van Niekerk, Porfirio Quintero-Cadena, Xiang Ao, Adam Rosenbaum, Aanal Bhatt, Alexander Smith, Yaoyu Yang, KC Anderson, Lucia Grippo, Xing Cao, Rich Cohen, Jay Patel, Joshua Moller, Olga Allen, Ali Faraj, Anisha Nandy, Jason Hocking, Ayla Ergun, Berk Tural, Sara Salvador, Joe Jacobowitz, Kristin Schaven, Mark Sherman, S...

-

[3]

Galson, and Jinwoo Leem

Justin Barton, Jacob D. Galson, and Jinwoo Leem. Enhancing antibody language models with structural information, 2024

2024

-

[4]

Zarraga, Sandeep Yadav, Thomas W

Anuj Chaudhri, Isidro E. Zarraga, Sandeep Yadav, Thomas W. Patapoff, Steven J. Shire, and Gregory A. Voth. The role of amino acid sequence in the self-association of therapeutic monoclonal antibodies: Insights from coarse-grained modeling. The Journal of Physical Chemistry B, 117 0 (5): 0 1269--1279, 2013. doi:10.1021/jp3108396

-

[5]

Claire L. Dobson et al. Engineering the surface properties of a human monoclonal antibody prevents self-association and rapid clearance in vivo. Scientific Reports, 6 0 (1): 0 38644, 2016. doi:10.1038/srep38644

-

[6]

Frédéric A. Dreyer et al. Computational design of therapeutic antibodies with improved developability: efficient traversal of binder landscapes and rescue of escape mutations. mAbs, 17 0 (1): 0 2511220, 2025. doi:10.1080/19420862.2025.2511220

-

[7]

ProtTrans : Toward understanding the language of life through self-supervised learning

Ahmed Elnaggar, Michael Heinzinger, Christian Dallago, Ghalia Rehber, Yu Wang, Llion Jones, Tom Gibbs, Tamas Feher, Christoph Angerer, Martin Steinegger, Debsindhu Bhowmik, and Burkhard Rost. ProtTrans : Toward understanding the language of life through self-supervised learning. IEEE Transactions on Pattern Analysis and Machine Intelligence, 44 0 (10): 0 ...

-

[8]

Gordon, João Gervasio, Colby Souders, and Charlotte M

Gemma L. Gordon, João Gervasio, Colby Souders, and Charlotte M. Deane. Characterising nanobody developability to improve therapeutic design using the therapeutic nanobody profiler. Communications Biology, 2026. doi:10.1038/s42003-026-09594-y

-

[9]

Jing Guo and Giorgio Carta. Unfolding and aggregation of monoclonal antibodies on cation exchange columns: Effects of resin type, load buffer, and protein stability. Journal of Chromatography A, 1388: 0 184--194, 2015. doi:10.1016/j.chroma.2015.02.047

-

[10]

Tom Hayes et al. Simulating 500 million years of evolution with a language model. Science, 2024. doi:10.1126/science.ads0018. URL https://www.science.org/doi/10.1126/science.ads0018

-

[11]

Ionescu, Josef Vlasak, Colleen Price, and Marc Kirchmeier

Roxana M. Ionescu, Josef Vlasak, Colleen Price, and Marc Kirchmeier. Contribution of variable domains to the stability of humanized IgG1 monoclonal antibodies. Journal of Pharmaceutical Sciences, 97 0 (4): 0 1414--1426, 2008. doi:10.1002/jps.21104

-

[12]

Averaging Weights Leads to Wider Optima and Better Generalization

Pavel Izmailov, Dmitrii Podoprikhin, Timur Garipov, Dmitry Vetrov, and Andrew Gordon Wilson. Averaging weights leads to wider optima and better generalization. Proceedings of the 34th Conference on Uncertainty in Artificial Intelligence, 2018. URL http://arxiv.org/abs/1803.05407

work page Pith review arXiv 2018

-

[13]

Biophysical properties of the clinical-stage antibody landscape

Tushar Jain et al. Biophysical properties of the clinical-stage antibody landscape. Proceedings of the National Academy of Sciences, 114 0 (5): 0 944--949, 2017. doi:10.1073/pnas.1616408114

-

[14]

Narayan Jayaram, Pallab Bhowmick, and Andrew C. R. Martin. Germline VH / VL pairing in antibodies. Protein Engineering, Design and Selection, 25 0 (10): 0 523--530, 2012. doi:10.1093/protein/gzs043

-

[15]

Effects on interaction kinetics of mutations at the VH -- VL interface of Fabs depend on the structural context

Myriam Ben Khalifa, Marianne Weidenhaupt, Laurence Choulier, Jean Chatellier, Nathalie Rauffer-Bruy \`e re, Dani \`e le Altschuh, and Thierry Vernet. Effects on interaction kinetics of mutations at the VH -- VL interface of Fabs depend on the structural context. Journal of Molecular Recognition, 13 0 (3): 0 127--139, 2000

2000

-

[16]

Christine C. Lee, Joseph M. Perchiacca, and Peter M. Tessier. Toward aggregation-resistant antibodies by design. Trends in Biotechnology, 31 0 (11): 0 612--620, 2013. doi:10.1016/j.tibtech.2013.07.002

-

[17]

Hyperband: A novel bandit-based approach to hyperparameter optimization

Lisha Li, Kevin Jamieson, Giulia DeSalvo, Afshin Rostamizadeh, and Ameet Talwalkar. Hyperband: A novel bandit-based approach to hyperparameter optimization. Journal of Machine Learning Research, 18 0 (185): 0 1--52, 2018

2018

-

[18]

Emily K. Makowski et al. Optimization of therapeutic antibodies for reduced self-association and non-specific binding via interpretable machine learning. Nature Biomedical Engineering, 8 0 (1): 0 45--56, 2024. doi:10.1038/s41551-023-01074-6

-

[19]

Tobias H. Olsen et al. Addressing the antibody germline bias and its effect on language models for improved antibody design. Bioinformatics, 40 0 (11): 0 btae618, 2024. doi:10.1093/bioinformatics/btae618

-

[20]

Matthew I. J. Raybould and Charlotte M. Deane. The therapeutic antibody ProfilerTherapeutic antibody profiler ( TAP ) for computational developability assessment. In Therapeutic Antibodies: Methods and Protocols, pp.\ 115--125. Springer US, 2022. doi:10.1007/978-1-0716-1450-1_5

-

[21]

Matthew I. J. Raybould, Claire Marks, Konrad Krawczyk, Bruck Taddese, Jaroslaw Nowak, Alan P. Lewis, Alexander Bujotzek, Jiye Shi, and Charlotte M. Deane. Five computational developability guidelines for therapeutic antibody profiling. Proceedings of the National Academy of Sciences, 116 0 (10): 0 4025--4030, 2019. doi:10.1073/pnas.1810576116

-

[22]

Lawrence Zitnick, Jerry Ma, and Rob Fergus

Alexander Rives, Joshua Meier, Tom Sercu, Siddharth Goyal, Zeming Lin, Jason Liu, Demi Guo, Myle Ott, C. Lawrence Zitnick, Jerry Ma, and Rob Fergus. Biological structure and function emerge from scaling unsupervised learning to 250 million protein sequences. Proceedings of the National Academy of Sciences, 118 0 (15): 0 e2016239118, 2021. doi:10.1073/pnas...

-

[23]

Jeffrey A. Ruffolo, Jeffrey J. Gray, and Jeremias Sulam. Deciphering antibody affinity maturation with language models and weakly supervised learning. arXiv preprint arXiv:2112.07782, 2021. URL http://arxiv.org/abs/2112.07782

-

[24]

Acceptable changes in quality attributes of glycosylated biopharmaceuticals

Martin Schiestl, Thomas Stangler, Claudia Torella, Tadej Čepeljnik, Hansjörg Toll, and Roger Grau. Acceptable changes in quality attributes of glycosylated biopharmaceuticals. Nature Biotechnology, 29 0 (4): 0 310--312, 2011. doi:10.1038/nbt.1839

-

[25]

Richard W. Shuai, Jeffrey A. Ruffolo, and Jeffrey J. Gray. IgLM : Infilling language modeling for antibody sequence design. Cell Systems, 14 0 (11): 0 979--989.e4, 2023. doi:10.1016/j.cels.2023.10.001

-

[26]

Jasper Snoek, Hugo Larochelle, and Ryan P. Adams. Practical Bayesian optimization of machine learning algorithms. In Advances in Neural Information Processing Systems, volume 25, 2012

2012

-

[27]

James H. Steiger. Tests for comparing elements of a correlation matrix. Psychological Bulletin, 87 0 (2): 0 245--251, 1980. doi:10.1037/0033-2909.87.2.245

-

[28]

@esa (Ref

\@ifxundefined[1] #1\@undefined \@firstoftwo \@secondoftwo \@ifnum[1] #1 \@firstoftwo \@secondoftwo \@ifx[1] #1 \@firstoftwo \@secondoftwo [2] @ #1 \@temptokena #2 #1 @ \@temptokena \@ifclassloaded agu2001 natbib The agu2001 class already includes natbib coding, so you should not add it explicitly Type <Return> for now, but then later remove the command n...

-

[29]

\@lbibitem[] @bibitem@first@sw\@secondoftwo \@lbibitem[#1]#2 \@extra@b@citeb \@ifundefined br@#2\@extra@b@citeb \@namedef br@#2 \@nameuse br@#2\@extra@b@citeb \@ifundefined b@#2\@extra@b@citeb @num @parse #2 @tmp #1 NAT@b@open@#2 NAT@b@shut@#2 \@ifnum @merge>\@ne @bibitem@first@sw \@firstoftwo \@ifundefined NAT@b*@#2 \@firstoftwo @num @NAT@ctr \@secondoft...

-

[30]

@open @close @open @close and [1] URL: #1 \@ifundefined chapter * \@mkboth \@ifxundefined @sectionbib * \@mkboth * \@mkboth\@gobbletwo \@ifclassloaded amsart * \@ifclassloaded amsbook * \@ifxundefined @heading @heading NAT@ctr thebibliography [1] @ \@biblabel @NAT@ctr \@bibsetup #1 @NAT@ctr @ @openbib .11em \@plus.33em \@minus.07em 4000 4000 `\.\@m @bibit...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.