Envisioning the Future, One Step at a Time

Pith reviewed 2026-05-10 17:27 UTC · model grok-4.3

The pith

Sparse point trajectories advanced by autoregressive diffusion enable fast generation of thousands of plausible scene futures from one image.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Open-set future scene dynamics can be formulated as step-wise inference over sparse point trajectories, where an autoregressive diffusion model advances the points through short locally predictable transitions while explicitly modeling the growth of uncertainty over time; this representation supports rapid rollout of thousands of diverse futures from a single image, optionally guided by motion constraints, and achieves predictive accuracy comparable to dense simulators at orders-of-magnitude higher sampling speed.

What carries the argument

An autoregressive diffusion model that performs step-wise inference on sparse point trajectories to simulate dynamics and uncertainty growth.
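To make the step-wise idea concrete, here is a minimal toy sketch, not the paper's model. It assumes a hypothetical `step` function in which a constant-velocity update plus Gaussian noise stands in for one sampled transition of the learned diffusion model, and it treats points independently, whereas the described model advances all points jointly. All names and parameter values (`step`, `rollout`, `sample_futures`, `noise_std`) are illustrative assumptions.

```python
import random
import statistics

def step(points, velocities, rng, dt=1.0, noise_std=0.05):
    """Advance every point by one short transition.

    Toy stand-in for one sampled transition: the velocity is perturbed by
    Gaussian noise, then the position is updated. A learned diffusion
    sampler would take the place of this function.
    """
    new_points, new_velocities = [], []
    for (x, y), (vx, vy) in zip(points, velocities):
        vx += rng.gauss(0.0, noise_std)
        vy += rng.gauss(0.0, noise_std)
        new_points.append((x + vx * dt, y + vy * dt))
        new_velocities.append((vx, vy))
    return new_points, new_velocities

def rollout(points, velocities, horizon, rng):
    """Chain `horizon` short transitions autoregressively."""
    trajectory = [points]
    for _ in range(horizon):
        points, velocities = step(points, velocities, rng)
        trajectory.append(points)
    return trajectory

def sample_futures(points, velocities, horizon, num_samples, seed=0):
    """Draw many independent futures from the same initial state."""
    rng = random.Random(seed)
    return [rollout(points, velocities, horizon, rng) for _ in range(num_samples)]

def spread_at(futures, t, point_index=0):
    """Standard deviation of one point's x-coordinate across futures at step t."""
    return statistics.pstdev(f[t][point_index][0] for f in futures)

futures = sample_futures(
    points=[(0.0, 0.0), (1.0, 0.5)],
    velocities=[(0.1, 0.0), (0.0, -0.1)],
    horizon=20,
    num_samples=1000,
)
early, late = spread_at(futures, 1), spread_at(futures, 20)
print(f"spread after 1 step: {early:.3f}, after 20 steps: {late:.3f}")
```

Because each step feeds on the previous sample, the spread across futures widens with the horizon, which is the qualitative behavior the core claim describes as uncertainty growth.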

If this is right

- Thousands of diverse futures can be rolled out from one image in a fraction of the time required by dense video predictors.

- Initial motion constraints can be incorporated to guide the distribution of predicted trajectories.

- Physical plausibility and long-range coherence are maintained without explicit appearance modeling.

- Predictive accuracy matches or exceeds that of dense simulators on open-set motion tasks.

- A new benchmark dataset enables standardized evaluation of trajectory distribution accuracy and variability on in-the-wild videos.

Where Pith is reading between the lines

- The trajectory-based representation could be combined with existing video synthesis networks to produce full appearance videos conditioned on sampled futures.

- Real-time robotics planners could use the fast sampling to evaluate many possible outcomes before choosing actions.

- The same step-wise uncertainty modeling might apply to other sparse dynamic systems such as particle simulations or traffic flow.

- Extending the model to include object identities or semantic labels on the points could improve handling of interactions between distinct scene elements.

Load-bearing premise

Short locally predictable transitions on sparse point trajectories alone can capture complex scene dynamics and keep predictions physically plausible and coherent over long horizons without dense appearance information.

What would settle it

Controlled experiments on videos with known rigid-body physics where the model's long-horizon trajectory distributions diverge from ground-truth motions or produce physically impossible paths at rates higher than dense baselines.
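One way such a test could be scored is sketched below. This is an assumption of this write-up, not a protocol from the paper: it supposes all tracked points lie on a single rigid body moving parallel to the image plane, so that pairwise 2D distances should stay constant, and it flags a rollout as implausible when any distance drifts by more than a hypothetical tolerance `tol`. The function names are illustrative.

```python
import math
from itertools import combinations

def rigidity_violation(trajectory):
    """Largest relative change in any pairwise distance over a trajectory.

    trajectory[t][i] is the (x, y) position of point i at step t. All points
    are assumed to sit on one rigid body and to start at distinct positions.
    """
    first = trajectory[0]
    worst = 0.0
    for i, j in combinations(range(len(first)), 2):
        d0 = math.dist(first[i], first[j])
        for frame in trajectory[1:]:
            drift = abs(math.dist(frame[i], frame[j]) - d0) / d0
            worst = max(worst, drift)
    return worst

def implausible_rate(trajectories, tol=0.05):
    """Fraction of rollouts whose rigidity violation exceeds the tolerance."""
    flagged = sum(rigidity_violation(t) > tol for t in trajectories)
    return flagged / len(trajectories)

# Two hand-made rollouts of a two-point body: one translates, one stretches.
rigid = [[(0.0, 0.0), (1.0, 0.0)], [(1.0, 0.0), (2.0, 0.0)]]
stretched = [[(0.0, 0.0), (1.0, 0.0)], [(0.0, 0.0), (1.5, 0.0)]]
print(implausible_rate([rigid, stretched]))
```

Comparing this rate between the sparse model and a dense baseline on the same scenes would give the kind of evidence described above; under perspective projection or out-of-plane rotation the constant-distance assumption no longer holds and a different check would be needed.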

Original abstract

Accurately anticipating how complex, diverse scenes will evolve requires models that represent uncertainty, simulate along extended interaction chains, and efficiently explore many plausible futures. Yet most existing approaches rely on dense video or latent-space prediction, expending substantial capacity on dense appearance rather than on the underlying sparse trajectories of points in the scene. This makes large-scale exploration of future hypotheses costly and limits performance when long-horizon, multi-modal motion is essential. We address this by formulating the prediction of open-set future scene dynamics as step-wise inference over sparse point trajectories. Our autoregressive diffusion model advances these trajectories through short, locally predictable transitions, explicitly modeling the growth of uncertainty over time. This dynamics-centric representation enables fast rollout of thousands of diverse futures from a single image, optionally guided by initial constraints on motion, while maintaining physical plausibility and long-range coherence. We further introduce OWM, a benchmark for open-set motion prediction based on diverse in-the-wild videos, to evaluate accuracy and variability of predicted trajectory distributions under real-world uncertainty. Our method matches or surpasses dense simulators in predictive accuracy while achieving orders-of-magnitude higher sampling speed, making open-set future prediction both scalable and practical. Project page: http://compvis.github.io/myriad.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes formulating open-set future scene dynamics prediction as step-wise inference over sparse point trajectories using an autoregressive diffusion model. The model advances trajectories via short, locally predictable transitions that explicitly model uncertainty growth, enabling fast rollout of thousands of diverse futures from a single image (optionally with motion constraints) while claiming to maintain physical plausibility and long-range coherence. It introduces the OWM benchmark based on diverse in-the-wild videos to evaluate trajectory distribution accuracy and variability, and reports matching or surpassing dense simulators in accuracy at orders-of-magnitude higher sampling speed.

Significance. If the empirical claims hold under rigorous verification, the work could meaningfully advance efficient, scalable future prediction in computer vision by prioritizing sparse dynamics over dense appearance modeling. The OWM benchmark is a constructive addition for evaluating open-set motion under real-world uncertainty. The claimed speed advantage would be particularly valuable for applications requiring large-scale hypothesis exploration, such as robotics or video analysis, provided the physical plausibility and coherence results are robustly demonstrated.

Major comments (2)

- [§4] §4 (Method): The central modeling assumption (that conditioning the autoregressive diffusion solely on sparse point positions and velocities suffices to implicitly encode inter-object interactions, contacts, and force propagation for long-horizon physical plausibility) is load-bearing for the coherence claims. No explicit mechanism for global scene structure is described, and the skeptic's concern that local transition kernels may compound errors in multi-object or collision scenarios is not directly addressed via targeted ablations on such cases.

- [§5.2, Table 3] §5.2 and Table 3: The quantitative comparison to dense simulators reports superior accuracy and speed on OWM, but provides insufficient detail on how physical plausibility was measured (e.g., no error bars, no breakdown by scene complexity, and no statistical tests). This weakens the abstract's claim of matching or surpassing dense methods without full verification of the metrics.

Minor comments (2)

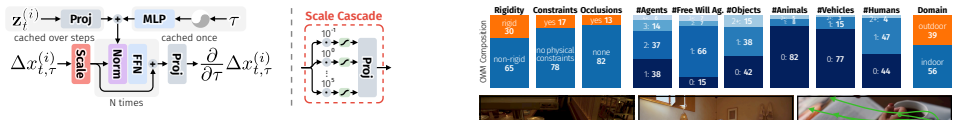

- [§3.2] §3.2: The description of the OWM benchmark construction would benefit from explicit details on video selection criteria and ground-truth trajectory annotation process to allow reproducibility.

- [Figure 4] Figure 4: The rollout visualizations are helpful but could include quantitative uncertainty overlays or failure-case examples to better illustrate the growth of uncertainty over time.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback and detailed comments. We have reviewed the concerns regarding the modeling assumptions and evaluation details. Below we respond point-by-point and will revise the manuscript to incorporate clarifications and additional analyses.

- Referee: [§4] §4 (Method): The central modeling assumption (that conditioning the autoregressive diffusion solely on sparse point positions and velocities suffices to implicitly encode inter-object interactions, contacts, and force propagation for long-horizon physical plausibility) is load-bearing for the coherence claims. No explicit mechanism for global scene structure is described, and the skeptic's concern that local transition kernels may compound errors in multi-object or collision scenarios is not directly addressed via targeted ablations on such cases.

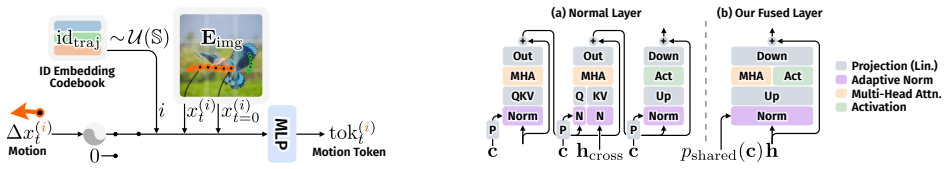

Authors: We agree that the implicit capture of interactions via conditioning on the full set of sparse point states is central to the coherence claims. The autoregressive diffusion is applied jointly to all points in the scene at each step, with the network (a transformer-based denoiser) learning to model relationships across points through attention over the trajectory tokens. This provides an implicit mechanism for encoding contacts and force propagation without an explicit global graph. To directly address the concern about error compounding in multi-object and collision scenarios, we will add a new subsection with targeted ablations: (1) quantitative results on OWM subsets filtered for high interaction density, and (2) qualitative rollout visualizations highlighting collision handling. These will demonstrate that the short-step formulation with explicit uncertainty modeling limits compounding compared to longer-horizon baselines.

Revision: yes

- Referee: [§5.2, Table 3] §5.2 and Table 3: The quantitative comparison to dense simulators reports superior accuracy and speed on OWM, but provides insufficient detail on how physical plausibility was measured (e.g., no error bars, no breakdown by scene complexity, and no statistical tests). This weakens the abstract's claim of matching or surpassing dense methods without full verification of the metrics.

Authors: We acknowledge that the current presentation of physical plausibility evaluation lacks sufficient statistical rigor and detail. Physical plausibility is primarily quantified through distribution-level trajectory metrics (ADE, FDE, and diversity measures) on the OWM benchmark, supplemented by qualitative inspection of rollouts. In the revised manuscript we will expand Section 5.2 to include: error bars (mean ± std over 5 random seeds), a breakdown of results stratified by scene complexity (e.g., low vs. high object count and interaction presence), and statistical significance tests (paired t-tests with p-values) against the dense simulator baselines. Updated Table 3 will reflect these additions, providing stronger verification of the accuracy claims.

Revision: yes
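For readers unfamiliar with the metrics named in this response, the sketch below shows the standard definitions of ADE and FDE, a best-of-K variant for sampled futures, and the statistic behind a paired t-test. It is a generic illustration, not the paper's evaluation code. The per-seed scores are made-up placeholders chosen only to exercise the functions, and the helper names (`min_ade`, `paired_t_statistic`) are assumptions.

```python
import math
import statistics

def ade(pred, truth):
    """Average displacement error: mean Euclidean distance over all time steps."""
    return statistics.fmean([math.dist(p, q) for p, q in zip(pred, truth)])

def fde(pred, truth):
    """Final displacement error: Euclidean distance at the last time step."""
    return math.dist(pred[-1], truth[-1])

def min_ade(samples, truth):
    """Best-of-K ADE: the lowest ADE among K sampled futures."""
    return min(ade(s, truth) for s in samples)

def paired_t_statistic(a, b):
    """t statistic of a paired t-test on matched score lists a and b."""
    diffs = [x - y for x, y in zip(a, b)]
    return statistics.fmean(diffs) / (statistics.stdev(diffs) / math.sqrt(len(diffs)))

truth = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
pred = [(0.0, 0.0), (1.0, 1.0), (2.0, 2.0)]
print(ade(pred, truth), fde(pred, truth))  # per-step distances are 0, 1, 2

# Placeholder per-seed ADE values (NOT results from the paper).
sparse_model = [0.50, 0.52, 0.49, 0.51, 0.50]
dense_baseline = [0.55, 0.56, 0.55, 0.57, 0.54]
mean, std = statistics.fmean(sparse_model), statistics.stdev(sparse_model)
t = paired_t_statistic(sparse_model, dense_baseline)
print(f"mean ± std: {mean:.3f} ± {std:.3f}, paired t: {t:.2f}")
```

Turning the t statistic into a p-value requires the Student t distribution with n − 1 degrees of freedom; `scipy.stats.ttest_rel` computes both in one call if SciPy is available.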

Circularity Check

No circularity: new modeling choice for trajectory prediction is independent of inputs.

The paper's core contribution is a design decision to model open-set future dynamics via autoregressive diffusion on sparse point trajectories rather than dense video or latents. This is presented as a formulation choice that enables fast rollout and uncertainty modeling, with the OWM benchmark introduced separately for evaluation. No equations or claims reduce by construction to fitted parameters, self-citations, or renamed known results; the approach relies on training the diffusion model on data and comparing outputs to dense simulators. The derivation chain is self-contained against external benchmarks and does not invoke load-bearing self-citations or uniqueness theorems from prior author work.

Reference graph

Works this paper leans on

-

[1]

Technical report, Google DeepMind, 2025

Veo: a text-to-video generation system (veo 3 tech report). Technical report, Google DeepMind, 2025. Technical report. 2

2025

-

[2]

So- cial lstm: Human trajectory prediction in crowded spaces

Alexandre Alahi, Kratarth Goel, Vignesh Ramanathan, Alexandre Robicquet, Li Fei-Fei, and Silvio Savarese. So- cial lstm: Human trajectory prediction in crowded spaces. InProceedings of the IEEE conference on computer vision and pattern recognition, pages 961–971, 2016. 3, 5

2016

-

[3]

Diffusion for world modeling: Visual details matter in atari

Eloi Alonso, Adam Jelley, Vincent Micheli, Anssi Kan- ervisto, Amos J Storkey, Tim Pearce, and François Fleuret. Diffusion for world modeling: Visual details matter in atari. Advances in Neural Information Processing Systems, 37: 58757–58791, 2024. 2

2024

-

[4]

Jimmy Lei Ba, Jamie Ryan Kiros, and Geoffrey E Hin- ton. Layer normalization.arXiv preprint arXiv:1607.06450,

work page internal anchor Pith review Pith/arXiv arXiv

-

[5]

Back to the features: Dino as a foundation for video world models, 2025

Federico Baldassarre, Marc Szafraniec, Basile Terver, Vasil Khalidov, Francisco Massa, Yann LeCun, Patrick Labatut, Maximilian Seitzer, and Piotr Bojanowski. Back to the features: Dino as a foundation for video world models, 2025. 3

2025

-

[6]

Philip J. Ball, Jakob Bauer, Frank Belletti, Bethanie Brown- field, Ariel Ephrat, Shlomi Fruchter, Agrim Gupta, Kris- tian Holsheimer, Aleksander Holynski, Jiri Hron, Christos Kaplanis, Marjorie Limont, Matt McGill, Yanko Oliveira, Jack Parker-Holder, Frank Perbet, Guy Scully, Jeremy Shar, Stephen Spencer, Omer Tov, Ruben Villegas, Emma Wang, Jessica Yung...

2025

-

[7]

Round and round we go! what makes rotary positional encodings useful? In The Thirteenth International Conference on Learning Rep- resentations, 2025

Federico Barbero, Alex Vitvitskyi, Christos Perivolaropou- los, Razvan Pascanu, and Petar Veliˇckovi´c. Round and round we go! what makes rotary positional encodings useful? In The Thirteenth International Conference on Learning Rep- resentations, 2025. 4

2025

-

[8]

Vavim and vavam: Autonomous driving through video generative modeling, 2025

Florent Bartoccioni, Elias Ramzi, Victor Besnier, Shashanka Venkataramanan, Tuan-Hung Vu, Yihong Xu, Loick Cham- bon, Spyros Gidaris, Serkan Odabas, David Hurych, Renaud Marlet, Alexandre Boulch, Mickael Chen, Éloi Zablocki, Andrei Bursuc, Eduardo Valle, and Matthieu Cord. Vavim and vavam: Autonomous driving through video generative modeling, 2025. 2

2025

-

[9]

Simulation as an engine of physical scene understand- ing.Proceedings of the national academy of sciences, 110 (45):18327–18332, 2013

Peter W Battaglia, Jessica B Hamrick, and Joshua B Tenen- baum. Simulation as an engine of physical scene understand- ing.Proceedings of the national academy of sciences, 110 (45):18327–18332, 2013. 1, 3, 4

2013

-

[10]

What if: Understanding motion through sparse interactions

Stefan Andreas Baumann, Nick Stracke, Timy Phan, and Björn Ommer. What if: Understanding motion through sparse interactions. InProceedings of the IEEE/CVF Inter- national Conference on Computer Vision (ICCV), 2025. 2, 3, 4, 5, 7, 8, 1

2025

-

[11]

Smith, Fan-Yun Sun, Li Fei-Fei, Nancy Kanwisher, Joshua B

Daniel Bear, Elias Wang, Damian Mrowca, Felix Jedidja Binder, Hsiao-Yu Tung, RT Pramod, Cameron Holdaway, Sirui Tao, Kevin A. Smith, Fan-Yun Sun, Li Fei-Fei, Nancy Kanwisher, Joshua B. Tenenbaum, Daniel LK Yamins, and Judith E Fan. Physion: Evaluating physical prediction from vision in humans and machines. InThirty-fifth Conference on Neural Information P...

2021

-

[12]

Track2act: Predicting point tracks from internet videos enables generalizable robot manipula- tion, 2024

Homanga Bharadhwaj, Roozbeh Mottaghi, Abhinav Gupta, and Shubham Tulsiani. Track2act: Predicting point tracks from internet videos enables generalizable robot manipula- tion, 2024. 3, 7

2024

-

[13]

Perception of human motion.Annu

Randolph Blake and Maggie Shiffrar. Perception of human motion.Annu. Rev. Psychol., 58(1):47–73, 2007. 2

2007

-

[14]

ipoke: Poking a still image for controlled stochastic video synthesis

Andreas Blattmann, Timo Milbich, Michael Dorkenwald, and Björn Ommer. ipoke: Poking a still image for controlled stochastic video synthesis. InProceedings of the IEEE/CVF International Conference on Computer Vision, pages 14707– 14717, 2021. 2

2021

-

[15]

Understanding object dynamics for in- teractive image-to-video synthesis

Andreas Blattmann, Timo Milbich, Michael Dorkenwald, and Bjorn Ommer. Understanding object dynamics for in- teractive image-to-video synthesis. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 5171–5181, 2021. 2

2021

-

[16]

Stable Video Diffusion: Scaling Latent Video Diffusion Models to Large Datasets

Andreas Blattmann, Tim Dockhorn, Sumith Kulal, Daniel Mendelevitch, Maciej Kilian, Dominik Lorenz, Yam Levi, Zion English, Vikram V oleti, Adam Letts, et al. Stable video diffusion: Scaling latent video diffusion models to large datasets.arXiv preprint arXiv:2311.15127, 2023. 2, 7, 5

work page internal anchor Pith review arXiv 2023

-

[17]

What happens next? antici- pating future motion by generating point trajectories

Gabrijel Boduljak, Laurynas Karazija, Iro Laina, Christian Rupprecht, and Andrea Vedaldi. What happens next? antici- pating future motion by generating point trajectories. InThe Fourteenth International Conference on Learning Represen- tations, 2026. 3

2026

-

[18]

Mdmp: Multi-modal diffusion for supervised motion predictions with uncertainty

Leo Bringer, Joey Wilson, Kira Barton, and Maani Ghaf- fari. Mdmp: Multi-modal diffusion for supervised motion predictions with uncertainty. InProceedings of the Com- puter Vision and Pattern Recognition Conference, pages 2889–2899, 2025. 3

2025

-

[19]

Video generation models as world simulators, 2024

Tim Brooks, Bill Peebles, Connor Holmes, Will DePue, Yufei Guo, Li Jing, David Schnurr, Joe Taylor, Troy Luh- man, Eric Luhman, Clarence Ng, Ricky Wang, and Aditya Ramesh. Video generation models as world simulators, 2024. 2, 7

2024

-

[20]

Genie: Generative interactive environments

Jake Bruce, Michael D Dennis, Ashley Edwards, Jack Parker-Holder, Yuge Shi, Edward Hughes, Matthew Lai, Aditi Mavalankar, Richie Steigerwald, Chris Apps, et al. Genie: Generative interactive environments. InForty-first International Conference on Machine Learning, 2024. 3

2024

-

[21]

Multipath: Multiple probabilistic an- chor trajectory hypotheses for behavior prediction

Yuning Chai, Benjamin Sapp, Mayank Bansal, and Dragomir Anguelov. Multipath: Multiple probabilistic an- chor trajectory hypotheses for behavior prediction. InCon- ference on Robot Learning, pages 86–99. PMLR, 2020. 3

2020

-

[22]

Diffusion forcing: Next-token prediction meets full-sequence diffu- sion.Advances in Neural Information Processing Systems, 37:24081–24125, 2024

Boyuan Chen, Diego Martí Monsó, Yilun Du, Max Sim- chowitz, Russ Tedrake, and Vincent Sitzmann. Diffusion forcing: Next-token prediction meets full-sequence diffu- sion.Advances in Neural Information Processing Systems, 37:24081–24125, 2024. 7

2024

-

[23]

Physgen3d: Crafting a miniature interactive world from a single image

Boyuan Chen, Hanxiao Jiang, Shaowei Liu, Saurabh Gupta, Yunzhu Li, Hao Zhao, and Shenlong Wang. Physgen3d: Crafting a miniature interactive world from a single image. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 6178–6189, 2025. 3

2025

-

[24]

Skyreels- v2: Infinite-length film generative model, 2025

Guibin Chen, Dixuan Lin, Jiangping Yang, Chunze Lin, Junchen Zhu, Mingyuan Fan, Hao Zhang, Sheng Chen, Zheng Chen, Chengcheng Ma, Weiming Xiong, Wei Wang, Nuo Pang, Kang Kang, Zhiheng Xu, Yuzhe Jin, Yupeng Liang, Yubing Song, Peng Zhao, Boyuan Xu, Di Qiu, De- bang Li, Zhengcong Fei, Yang Li, and Yahui Zhou. Skyreels- v2: Infinite-length film generative mo...

2025

-

[25]

Pixart-$\alpha$: Fast training of diffusion transformer for photorealistic text-to-image syn- thesis

Junsong Chen, Jincheng YU, Chongjian GE, Lewei Yao, Enze Xie, Zhongdao Wang, James Kwok, Ping Luo, Huchuan Lu, and Zhenguo Li. Pixart-$\alpha$: Fast training of diffusion transformer for photorealistic text-to-image syn- thesis. InThe Twelfth International Conference on Learning Representations, 2024. 4

2024

-

[26]

Playing with transformer at 30+ fps via next- frame diffusion.arXiv preprint arXiv:2506.01380, 2025

Xinle Cheng, Tianyu He, Jiayi Xu, Junliang Guo, Di He, and Jiang Bian. Playing with transformer at 30+ fps via next- frame diffusion.arXiv preprint arXiv:2506.01380, 2025. 2

-

[27]

Scalable high-resolution pixel-space image syn- thesis with hourglass diffusion transformers

Katherine Crowson, Stefan Andreas Baumann, Alex Birch, Tanishq Mathew Abraham, Daniel Z Kaplan, and Enrico Shippole. Scalable high-resolution pixel-space image syn- thesis with hourglass diffusion transformers. InProceedings of the 41st International Conference on Machine Learning, pages 9550–9575. PMLR, 2024. 4, 1

2024

-

[28]

Oasis: A universe in a trans- former.URL: https://oasis-model.github.io, 2024

Etched Decart, Quinn McIntyre, Spruce Campbell, Xinlei Chen, and Robert Wachen. Oasis: A universe in a trans- former.URL: https://oasis-model.github.io, 2024. 2

2024

-

[29]

Stochastic image-to-video synthesis using cinns

Michael Dorkenwald, Timo Milbich, Andreas Blattmann, Robin Rombach, Konstantinos G Derpanis, and Bjorn Om- mer. Stochastic image-to-video synthesis using cinns. In Proceedings of the IEEE/CVF Conference on Computer Vi- sion and Pattern Recognition, pages 3742–3753, 2021. 2

2021

-

[30]

An image is worth 16x16 words: Transformers for image recognition at scale

Alexey Dosovitskiy, Lucas Beyer, Alexander Kolesnikov, Dirk Weissenborn, Xiaohua Zhai, Thomas Unterthiner, Mostafa Dehghani, Matthias Minderer, Georg Heigold, Syl- vain Gelly, Jakob Uszkoreit, and Neil Houlsby. An image is worth 16x16 words: Transformers for image recognition at scale. InInternational Conference on Learning Representa- tions, 2021. 4, 1

2021

-

[31]

python-billiards

Markus Ebke. python-billiards. https://github.com/markus- ebke/python-billiards, 2025. 6, 7, 1, 2

2025

-

[32]

Scaling rectified flow transformers for high-resolution image synthesis

Patrick Esser, Sumith Kulal, Andreas Blattmann, Rahim Entezari, Jonas Müller, Harry Saini, Yam Levi, Dominik Lorenz, Axel Sauer, Frederic Boesel, et al. Scaling rectified flow transformers for high-resolution image synthesis. In Forty-first international conference on machine learning,

-

[33]

Fluid: Scaling autoregressive text-to-image generative models with continuous tokens

Lijie Fan, Tianhong Li, Siyang Qin, Yuanzhen Li, Chen Sun, Michael Rubinstein, Deqing Sun, Kaiming He, and Yonglong Tian. Fluid: Scaling autoregressive text-to-image generative models with continuous tokens. InThe Thir- teenth International Conference on Learning Representa- tions, 2025. 5

2025

-

[34]

Sequential sampling models in cognitive neuroscience: Ad- vantages, applications, and extensions.Annual review of psychology, 67(1):641–666, 2016

Birte U Forstmann, Roger Ratcliff, and E-J Wagenmakers. Sequential sampling models in cognitive neuroscience: Ad- vantages, applications, and extensions.Annual review of psychology, 67(1):641–666, 2016. 4

2016

-

[35]

Learning visual predictive models of physics for playing billiards, 2016

Katerina Fragkiadaki, Pulkit Agrawal, Sergey Levine, and Jitendra Malik. Learning visual predictive models of physics for playing billiards, 2016. 3

2016

-

[36]

Vectornet: Encoding hd maps and agent dynamics from vectorized representation

Jiyang Gao, Chen Sun, Hang Zhao, Yi Shen, Dragomir Anguelov, Congcong Li, and Cordelia Schmid. Vectornet: Encoding hd maps and agent dynamics from vectorized representation. InProceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 11525– 11533, 2020. 3

2020

-

[37]

Im2flow: Motion hallucination from static images for action recogni- tion

Ruohan Gao, Bo Xiong, and Kristen Grauman. Im2flow: Motion hallucination from static images for action recogni- tion. InProceedings of the IEEE conference on computer vision and pattern recognition, pages 5937–5947, 2018. 3

2018

-

[38]

Mineworld: a real-time and open-source interactive world model on minecraft,

Junliang Guo, Yang Ye, Tianyu He, Haoyu Wu, Yushu Jiang, Tim Pearce, and Jiang Bian. Mineworld: a real-time and open-source interactive world model on minecraft.arXiv preprint arXiv:2504.08388, 2025. 2

-

[39]

Social gan: Socially acceptable trajec- tories with generative adversarial networks

Agrim Gupta, Justin Johnson, Li Fei-Fei, Silvio Savarese, and Alexandre Alahi. Social gan: Socially acceptable trajec- tories with generative adversarial networks. InProceedings of the IEEE conference on computer vision and pattern recognition, pages 2255–2264, 2018. 3, 5

2018

-

[40]

World models

David Ha and Jürgen Schmidhuber. World models. InAd- vances in Neural Information Processing Systems 31, pages 2451–2463. Curran Associates, Inc., 2018. 3

2018

-

[41]

Learning latent dynamics for planning from pixels

Danijar Hafner, Timothy Lillicrap, Ian Fischer, Ruben Ville- gas, David Ha, Honglak Lee, and James Davidson. Learning latent dynamics for planning from pixels. InInternational conference on machine learning, pages 2555–2565. PMLR, 2019

2019

-

[42]

Dream to control: Learning behaviors by latent imagination

Danijar Hafner, Timothy Lillicrap, Jimmy Ba, and Moham- mad Norouzi. Dream to control: Learning behaviors by latent imagination. InInternational Conference on Learning Representations, 2020

2020

-

[43]

Mastering atari with discrete world models

Danijar Hafner, Timothy P Lillicrap, Mohammad Norouzi, and Jimmy Ba. Mastering atari with discrete world models. InInternational Conference on Learning Representations, 2021

2021

-

[44]

Mastering diverse control tasks through world models.Nature, 640(8059):647–653, 2025

Danijar Hafner, Jurgis Pasukonis, Jimmy Ba, and Timothy Lillicrap. Mastering diverse control tasks through world models.Nature, 640(8059):647–653, 2025. 3

2025

-

[45]

Training agents inside of scalable world models.arXiv preprint arXiv:2509.24527, 2025

Danijar Hafner, Wilson Yan, and Timothy Lillicrap. Train- ing agents inside of scalable world models.arXiv preprint arXiv:2509.24527, 2025. 2

-

[46]

Gaussian error linear units (gelus), 2023

Dan Hendrycks and Kevin Gimpel. Gaussian error linear units (gelus), 2023. 1

2023

-

[47]

Denoising diffu- sion probabilistic models.Advances in neural information processing systems, 33:6840–6851, 2020

Jonathan Ho, Ajay Jain, and Pieter Abbeel. Denoising diffu- sion probabilistic models.Advances in neural information processing systems, 33:6840–6851, 2020. 1

2020

-

[48]

Arbitrary style transfer in real-time with adaptive instance normalization

Xun Huang and Serge Belongie. Arbitrary style transfer in real-time with adaptive instance normalization. InPro- ceedings of the IEEE international conference on computer vision, pages 1501–1510, 2017. 4, 5, 2, 3

2017

-

[49]

The trajectron: Proba- bilistic multi-agent trajectory modeling with dynamic spa- tiotemporal graphs

Boris Ivanovic and Marco Pavone. The trajectron: Proba- bilistic multi-agent trajectory modeling with dynamic spa- tiotemporal graphs. InProceedings of the IEEE/CVF inter- national conference on computer vision, pages 2375–2384,

-

[50]

Physics-as-inverse-graphics: Unsupervised physical param- eter estimation from video

Miguel Jaques, Michael Burke, and Timothy Hospedales. Physics-as-inverse-graphics: Unsupervised physical param- eter estimation from video. InInternational Conference on Learning Representations, 2020. 3

2020

-

[51]

Enerverse-AC: Envisioning embodied environments with action condition

Yuxin Jiang, Shengcong Chen, Siyuan Huang, Liliang Chen, Pengfei Zhou, Yue Liao, Xindong HE, Chiming Liu, Hong- sheng Li, Maoqing Yao, and Guanghui Ren. Enerverse-AC: Envisioning embodied environments with action condition

-

[52]

Visual perception of biological motion and a model for its analysis.Perception & psychophysics, 14(2):201–211, 1973

Gunnar Johansson. Visual perception of biological motion and a model for its analysis.Perception & psychophysics, 14(2):201–211, 1973. 2

1973

-

[53]

Co- tracker3: Simpler and better point tracking by pseudo- labelling real videos

Nikita Karaev, Yuri Makarov, Jianyuan Wang, Natalia Neverova, Andrea Vedaldi, and Christian Rupprecht. Co- tracker3: Simpler and better point tracking by pseudo- labelling real videos. InProceedings of the IEEE/CVF Inter- national Conference on Computer Vision, pages 6013–6022,

-

[54]

DINO-foresight: Looking into the future with DINO

Efstathios Karypidis, Ioannis Kakogeorgiou, Spyros Gidaris, and Nikos Komodakis. DINO-foresight: Looking into the future with DINO. InThe Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025. 2, 3

2025

-

[55]

Predictive processing: a canonical cortical computation.Neuron, 100 (2):424–435, 2018

Georg B Keller and Thomas D Mrsic-Flogel. Predictive processing: a canonical cortical computation.Neuron, 100 (2):424–435, 2018. 1

2018

-

[56]

Kingma and Jimmy Ba

Diederik P. Kingma and Jimmy Ba. Adam: A method for stochastic optimization. InICLR, 2015. 6

2015

-

[57]

Kling: Kuaishou’s propri- etary text–to–video generation model

Kuaishou Technology. Kling: Kuaishou’s propri- etary text–to–video generation model. https : / / ir . kuaishou . com / news - releases / news - release - details / kuaishou - unveils - proprietary - video - generation - model - kling, 2024. Press release. 2

2024

-

[58]

Crowds by example

Alon Lerner, Yiorgos Chrysanthou, and Dani Lischinski. Crowds by example. InComputer graphics forum, pages 655–664. Wiley Online Library, 2007. 4, 5

2007

-

[59]

Puppet-master: Scaling interactive video generation as a motion prior for part-level dynamics

Ruining Li, Chuanxia Zheng, Christian Rupprecht, and An- drea Vedaldi. Puppet-master: Scaling interactive video generation as a motion prior for part-level dynamics. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pages 13405–13415, 2025. 2

2025

-

[60]

Autoregressive image generation without vec- tor quantization.NeurIPS 2024, 2024

Tianhong Li, Yonglong Tian, He Li, Mingyang Deng, and Kaiming He. Autoregressive image generation without vec- tor quantization.NeurIPS 2024, 2024. 4, 5, 1

2024

-

[61]

Generative image dynamics

Zhengqi Li, Richard Tucker, Noah Snavely, and Aleksander Holynski. Generative image dynamics. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 24142–24153, 2024. 3

2024

-

[62]

Wonderplay: Dy- namic 3d scene generation from a single image and actions

Zizhang Li, Hong-Xing Yu, Wei Liu, Yin Yang, Charles Her- rmann, Gordon Wetzstein, and Jiajun Wu. Wonderplay: Dy- namic 3d scene generation from a single image and actions. InProceedings of the IEEE/CVF International Conference on Computer Vision, pages 9080–9090, 2025. 3

2025

-

[63]

Movideo: Motion-aware video generation with diffusion models

Jingyun Liang, Yuchen Fan, Kai Zhang, Radu Timofte, Luc Van Gool, and Rakesh Ranjan. Movideo: Motion-aware video generation with diffusion models. InEuropean Con- ference on Computer Vision, 2024. 3, 7

2024

-

[64]

Learning lane graph repre- sentations for motion forecasting

Ming Liang, Bin Yang, Rui Hu, Yun Chen, Renjie Liao, Song Feng, and Raquel Urtasun. Learning lane graph repre- sentations for motion forecasting. InEuropean Conference on Computer Vision, pages 541–556. Springer, 2020. 3

2020

-

[65]

Yaron Lipman, Ricky T. Q. Chen, Heli Ben-Hamu, Maxim- ilian Nickel, and Matthew Le. Flow matching for generative modeling. InThe Eleventh International Conference on Learning Representations, 2023. 4, 5, 1

2023

-

[66]

Physgen: Rigid-body physics-grounded image- to-video generation

Shaowei Liu, Zhongzheng Ren, Saurabh Gupta, and Shen- long Wang. Physgen: Rigid-body physics-grounded image- to-video generation. InEuropean Conference on Computer Vision (ECCV), 2024. 3

2024

-

[67]

Decoupled weight decay regularization

Ilya Loshchilov and Frank Hutter. Decoupled weight decay regularization. InInternational Conference on Learning Representations, 2019. 6, 2

2019

-

[68] Ben Mildenhall, Pratul P Srinivasan, Matthew Tancik, Jonathan T Barron, Ravi Ramamoorthi, and Ren Ng. Nerf: Representing scenes as neural radiance fields for view synthesis. In European Conference on Computer Vision, pages 405–421, 2020. 4

[69] Saman Motamed, Laura Culp, Kevin Swersky, Priyank Jaini, and Robert Geirhos. Do generative video models understand physical principles? In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, pages 948–958, 2026. 5, 6, 7, 2, 4

[70] Roozbeh Mottaghi, Hessam Bagherinezhad, Mohammad Rastegari, and Ali Farhadi. Newtonian scene understanding: Unfolding the dynamics of objects in static images. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 3521–3529, 2016. 3

[71] Roozbeh Mottaghi, Mohammad Rastegari, Abhinav Gupta, and Ali Farhadi. “what happens if...” learning to predict the effect of forces in images. In Computer Vision–ECCV 2016: 14th European Conference, Amsterdam, The Netherlands, October 11–14, 2016, Proceedings, Part IV 14, pages 269–

[72] Nigamaa Nayakanti, Rami Al-Rfou, Aurick Zhou, Kratarth Goel, Khaled S. Refaat, and Benjamin Sapp. Wayformer: Motion forecasting via simple & efficient attention networks. 2023 IEEE International Conference on Robotics and Automation (ICRA), pages 2980–2987, 2022. 3

[73] Jiquan Ngiam, Vijay Vasudevan, Benjamin Caine, Zhengdong Zhang, Hao-Tien Lewis Chiang, Jeffrey Ling, Rebecca Roelofs, Alex Bewley, Chenxi Liu, Ashish Venugopal, David J Weiss, Benjamin Sapp, Zhifeng Chen, and Jonathon Shlens. Scene transformer: A unified architecture for predicting future trajectories of multiple agents. In International Conference o... 2022

[74] OpenAI. Sora 2 system card. https://cdn.openai.com/pdf/50d5973c-c4ff-4c2d-986f-c72b5d0ff069/sora_2_system_card.pdf, 2025. System card. 2

[75] Jack Parker-Holder, Philip Ball, Jake Bruce, Vibhavari Dasagi, Kristian Holsheimer, Christos Kaplanis, Alexandre Moufarek, Guy Scully, Jeremy Shar, Jimmy Shi, Stephen Spencer, Jessica Yung, Michael Dennis, Sultan Kenjeyev, Shangbang Long, Vlad Mnih, Harris Chan, Maxime Gazeau, Bonnie Li, Fabio Pardo, Luyu Wang, Lei Zhang, Frederic Besse, Tim Harley, Anna ... Genie 2: A large-scale foundation world model. 2024

[76] William Peebles and Saining Xie. Scalable diffusion models with transformers. In Proceedings of the IEEE/CVF international conference on computer vision, pages 4195–4205,

[77] Stefano Pellegrini, Andreas Ess, Konrad Schindler, and Luc Van Gool. You’ll never walk alone: Modeling social behavior for multi-target tracking. In 2009 IEEE 12th International Conference on Computer Vision, pages 261–268. IEEE, 2009. 4, 5

[78] Pika Labs. Pika 2.1. https://pika.art/faq, 2025. Product documentation/FAQ. 2

[79] Silvia L Pintea, Jan C van Gemert, and Arnold WM Smeulders. Déja vu: Motion prediction in static images. In European Conference on Computer Vision, pages 172–187. Springer, 2014. 3

[80] Dustin Podell, Zion English, Kyle Lacey, Andreas Blattmann, Tim Dockhorn, Jonas Müller, Joe Penna, and Robin Rombach. SDXL: Improving latent diffusion models for high-resolution image synthesis. In The Twelfth International Conference on Learning Representations, 2024. 3
