Recognition: unknown

Nanvix: A Multikernel OS Design for High-Density Serverless Deployments

Pith reviewed 2026-05-10 16:32 UTC · model grok-4.3

The pith

Nanvix separates ephemeral per-invocation state from shared tenant state to run far more serverless applications per server.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Nanvix disaggregates ephemeral execution state, unique per application invocation, from long-lived persistent state shared among invocations from the same tenant. Applications execute inside a lightweight user VM running a micro-kernel that implements threads and memory and forwards all I/O requests to a system VM. The system VM runs a macro-kernel with device drivers and is shared among all invocations from the same tenant. The split design achieves strong hypervisor isolation across tenants without sacrificing application performance and reduces same-tenant contention by multiplexing all I/O requests to the system VM, yielding order-of-magnitude lower startup times and the ability to serve

What carries the argument

The per-invocation user VM with micro-kernel paired with a per-tenant system VM with macro-kernel that receives all forwarded I/O.

If this is right

- Application startup times drop by roughly an order of magnitude with only moderate I/O overhead.

- A production trace replay requires 20-100 times fewer host servers than current systems.

- Strong isolation between tenants is preserved through the hypervisor layer.

- Same-tenant contention is lowered by centralizing I/O in the shared system VM.

- Overall serverless deployment density increases without added hardware.

Where Pith is reading between the lines

- Similar state disaggregation could be applied to other cloud execution models where isolation and density trade off.

- If the I/O multiplexing scales, it opens a path to denser multi-tenant runtimes beyond serverless functions.

- An experiment running identical workloads on both Nanvix and conventional container or VM stacks would quantify the density gain directly.

- The design may reduce the total cost of ownership for serverless platforms by allowing more work per physical machine.

Load-bearing premise

That routing all I/O from many concurrent invocations of the same tenant through one shared system VM will avoid both performance collapse and new side-channel vulnerabilities.

What would settle it

Measure I/O latency and side-channel leakage when running a high-concurrency trace from a single tenant on Nanvix versus an equivalent number of fully isolated per-invocation VMs; a large degradation or new leaks would falsify the density claim.

Figures

read the original abstract

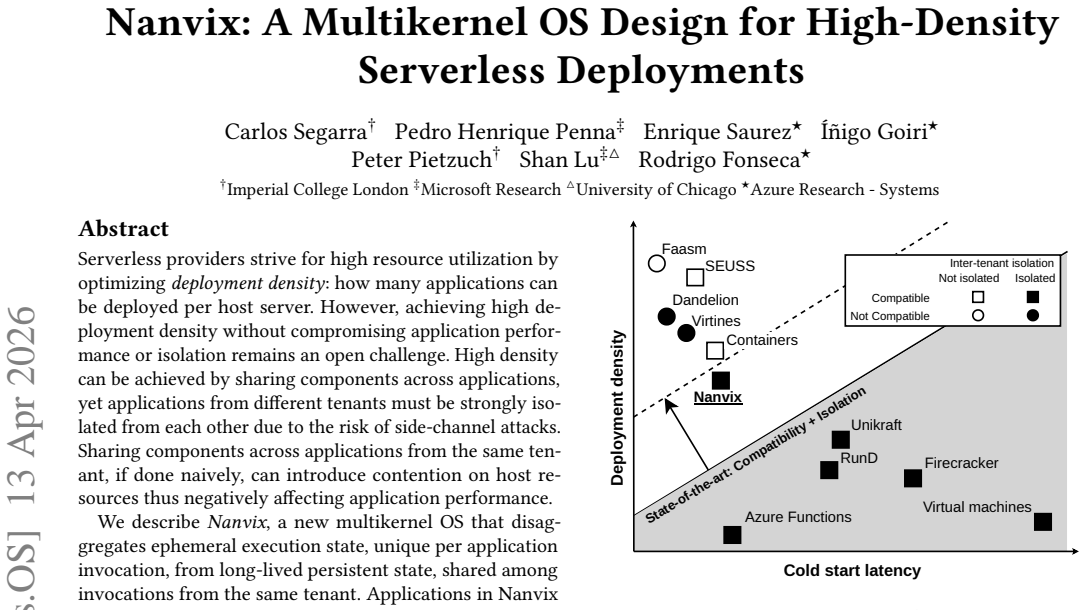

Serverless providers strive for high resource utilization by optimizing deployment density: how many applications can be deployed per host server. However, achieving high deployment density without compromising application performance or isolation remains an open challenge. High density can be achieved by sharing components across applications, yet applications from different tenants must be strongly isolated from each other due to the risk of side-channel attacks. Sharing components across applications from the same tenant, if done naively, can introduce contention on host resources thus negatively affecting application performance. We describe Nanvix, a new multikernel OS that disaggregates ephemeral execution state, unique per application invocation, from long-lived persistent state, shared among invocations from the same tenant. Applications in Nanvix execute inside a lightweight user VM running a micro-kernel that implements threads and memory, and forwards all I/O requests to a system VM. The system VM runs a macro-kernel with a rich set of device drivers and is shared among all invocations from the same tenant. Nanvix' split design achieves strong hypervisor isolation across tenants without sacrificing application performance, and reduces same-tenant contention by multiplexing all I/O requests to the system VM. Thanks to a system-wide co-design, Nanvix achieves order-of-magnitude lower application start up times with moderate I/O overheads. When replaying a production trace, Nanvix needs 20-100x fewer host servers compared to state-of-the-art systems, improving deployment density

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper describes Nanvix, a multikernel OS for high-density serverless deployments. It separates ephemeral execution state per application invocation into lightweight user VMs running micro-kernels from persistent state shared among same-tenant invocations in a system VM running a macro-kernel. All I/O is forwarded to the system VM to maintain isolation across tenants while aiming to reduce intra-tenant contention. The design is claimed to achieve order-of-magnitude lower startup times with moderate I/O overheads and 20-100x higher deployment density on production trace replays compared to state-of-the-art systems.

Significance. Should the claims be supported by detailed and reproducible evaluations, this work could have substantial impact on serverless platforms by significantly increasing deployment density while upholding strong isolation. The multikernel disaggregation tailored to serverless invocation patterns is a novel contribution, and the use of production traces for validation is commendable.

major comments (3)

- Abstract: Performance claims such as 'order-of-magnitude lower application start up times' and '20-100x fewer host servers' are made without reference to specific evaluation methodologies, baselines, or data, which are critical for assessing the central claims of improved density.

- §3 (Architecture): The architecture forwards every I/O request to a single shared system VM per tenant; however, there is no quantitative evaluation of potential contention or latency under high concurrency scenarios from the same tenant, which underpins the assertion that this reduces contention and enables the reported performance gains.

- §5 (Evaluation): The production trace replay experiment does not detail the state-of-the-art systems compared against, the specific metrics for density (e.g., how server count is determined), or any error analysis, making it hard to verify the 20-100x improvement.

minor comments (2)

- Abstract: Consider expanding the abstract to include a sentence on the specific hypervisor or kernel implementations used for better context.

- Throughout the manuscript: Clarify the distinction between 'lightweight user VM' and 'system VM' with consistent definitions early on.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed review. We address each major comment below and will revise the manuscript accordingly to improve clarity and completeness.

read point-by-point responses

-

Referee: Abstract: Performance claims such as 'order-of-magnitude lower application start up times' and '20-100x fewer host servers' are made without reference to specific evaluation methodologies, baselines, or data, which are critical for assessing the central claims of improved density.

Authors: We agree that the abstract would benefit from brief references to the supporting evaluation. In the revised manuscript we will update the abstract to note that startup-time claims are based on microbenchmarks and that the density improvement is measured via replay of a production trace against state-of-the-art serverless runtimes. Full methodologies, baselines, and quantitative data remain in Sections 4 and 5. revision: yes

-

Referee: §3 (Architecture): The architecture forwards every I/O request to a single shared system VM per tenant; however, there is no quantitative evaluation of potential contention or latency under high concurrency scenarios from the same tenant, which underpins the assertion that this reduces contention and enables the reported performance gains.

Authors: The design forwards I/O to the tenant-shared system VM to enable centralized multiplexing and lower per-invocation overhead. We acknowledge the absence of quantitative contention measurements under high same-tenant concurrency. We will add targeted experiments in the evaluation section that vary the number of concurrent invocations per tenant and report I/O latency and throughput to demonstrate the contention-reduction benefit. revision: yes

-

Referee: §5 (Evaluation): The production trace replay experiment does not detail the state-of-the-art systems compared against, the specific metrics for density (e.g., how server count is determined), or any error analysis, making it hard to verify the 20-100x improvement.

Authors: We will expand Section 5 to explicitly name the compared systems and their configurations, define the density metric as the minimum number of host servers needed to replay the trace while keeping tail latencies within SLA bounds, and include error analysis with standard deviations from repeated runs. These additions will make the 20-100x claim directly verifiable. revision: yes

Circularity Check

No circularity: claims rest on architecture description and empirical trace replay

full rationale

The paper describes a multikernel OS design that disaggregates per-invocation state from per-tenant persistent state, with I/O forwarded to a shared system VM. Performance claims (order-of-magnitude lower startup times, 20-100x fewer servers on production trace replay) are presented as outcomes of this co-design and evaluated via replay rather than derived from equations or parameters. No self-definitional loops, fitted inputs renamed as predictions, or load-bearing self-citations appear in the provided text. The central results are externally falsifiable through the described evaluation methodology and do not reduce to quantities defined by the authors' own prior results.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption Strong hypervisor isolation is required between tenants because of side-channel attack risks.

- ad hoc to paper Multiplexing I/O through a shared per-tenant system VM avoids contention that would otherwise degrade performance.

invented entities (2)

-

Lightweight user VM running a micro-kernel

no independent evidence

-

Shared system VM running a macro-kernel

no independent evidence

Reference graph

Works this paper leans on

-

[1]

Fire- cracker: Lightweight Virtualization for Serverless Applications

Alexandru Agache, Marc Brooker, Alexandra Iordache, Anthony Liguori, Rolf Neugebauer, Phil Piwonka, and Diana-Maria Popa. Fire- cracker: Lightweight Virtualization for Serverless Applications. In17th 12 USENIX Symposium on Networked Systems Design and Implementation, NSDI, 2020

2020

-

[2]

Drops: Managing serverless resource pools in microsoft azure functions

Ahmed Alquraan, Abdelrahman Baba, Rafael Mendes, Sameh Elnikety, Paul Batum, Yan Chen, Seth Safi, Hamid Henry Fine, and Samer Al- Kiswany. Drops: Managing serverless resource pools in microsoft azure functions. InProceedings of the Twentyfirst European Conference on Computer Systems, EuroSys ’26, 2026

2026

-

[3]

Improving startup performance with Lambda SnapStart.https: //docs.aws.amazon.com/lambda/latest/dg/snapstart.html, 2026

AWS. Improving startup performance with Lambda SnapStart.https: //docs.aws.amazon.com/lambda/latest/dg/snapstart.html, 2026

2026

-

[4]

Lambda - Provisioned Concurrency.https://docs.aws.amazon

AWS. Lambda - Provisioned Concurrency.https://docs.aws.amazon. com/lambda/latest/dg/provisioned-concurrency.html, 2026

2026

-

[5]

Lambda - Runtime Environment.https://docs.aws.amazon.com/ lambda/latest/dg/lambda-runtime-environment.html, 2026

AWS. Lambda - Runtime Environment.https://docs.aws.amazon.com/ lambda/latest/dg/lambda-runtime-environment.html, 2026

2026

-

[6]

Wei Bai, Shanim Sainul Abdeen, Ankit Agrawal, Krishan Kumar Attre, Paramvir Bahl, Ameya Bhagat, Gowri Bhaskara, Tanya Brokhman, Lei Cao, Ahmad Cheema, Rebecca Chow, Jeff Cohen, Mahmoud Elhaddad, Vivek Ette, Igal Figlin, Daniel Firestone, Mathew George, Ilya German, Lakhmeet Ghai, Eric Green, Albert Greenberg, Manish Gupta, Randy Haagens, Matthew Hendel, R...

2023

-

[7]

The multikernel: a new os architecture for scalable multicore systems

Andrew Baumann, Paul Barham, Pierre-Evariste Dagand, Tim Harris, Rebecca Isaacs, Simon Peter, Timothy Roscoe, Adrian Schüpbach, and Akhilesh Singhania. The multikernel: a new os architecture for scalable multicore systems. InProceedings of the ACM SIGOPS 22nd Symposium on Operating Systems Principles, SOSP ’09, 2009

2009

-

[8]

Dedup est machina: Memory deduplication as an advanced exploita- tion vector

Erik Bosman, Kaveh Razavi, Herbert Bos, and Cristiano Giuffrida. Dedup est machina: Memory deduplication as an advanced exploita- tion vector. In2016 IEEE Symposium on Security and Privacy, SP ’16, 2016

2016

-

[9]

SEUSS: skip redundant paths to make server- less fast

James Cadden, Thomas Unger, Yara Awad, Han Dong, Orran Krieger, and Jonathan Appavoo. SEUSS: skip redundant paths to make server- less fast. InEuroSys, 2020

2020

-

[10]

Fork in the road: reflections and optimizations for cold start latency in production serverless systems

Xiaohu Chai, Tianyu Zhou, Keyang Hu, Jianfeng Tan, Tiwei Bie, Anqi Shen, Dawei Shen, Qi Xing, Shun Song, Tongkai Yang, Le Gao, Feng Yu, Zhengyu He, Dong Du, Yubin Xia, Kang Chen, and Yu Chen. Fork in the road: reflections and optimizations for cold start latency in production serverless systems. InProceedings of the 19th USENIX Conference on Operating Sys...

2025

-

[11]

Run.https://cloud.google.com/run, 2026

Google Cloud. Run.https://cloud.google.com/run, 2026

2026

-

[12]

The cloud that works for developers, not the other way around.https://workers.cloudflare.com/, 2026

CloudFlare. The cloud that works for developers, not the other way around.https://workers.cloudflare.com/, 2026

2026

-

[13]

ACPI.https://wiki.osdev.org/ACPI, 2026

OS Dev. ACPI.https://wiki.osdev.org/ACPI, 2026

2026

-

[14]

VirtIO.https://wiki.osdev.org/Virtio, 2026

OS Dev. VirtIO.https://wiki.osdev.org/Virtio, 2026

2026

-

[15]

Handling uniqueness with Lambda SnapStart.https://docs

AWS Docs. Handling uniqueness with Lambda SnapStart.https://docs. aws.amazon.com/lambda/latest/dg/snapstart-uniqueness.html, 2026

2026

-

[16]

Catalyzer: Sub-millisecond startup for serverless computing with initialization-less booting

Dong Du, Tianyi Yu, Yubin Xia, Binyu Zang, Guanglu Yan, Chenggang Qin, Qixuan Wu, and Haibo Chen. Catalyzer: Sub-millisecond startup for serverless computing with initialization-less booting. InProceedings of the Twenty-Fifth International Conference on Architectural Support for Programming Languages and Operating Systems, ASPLOS ’20, 2020

2020

-

[17]

Open source Kubernetes-native Serverless Framework.https: //fission.io, 2026

Fission. Open source Kubernetes-native Serverless Framework.https: //fission.io, 2026

2026

-

[18]

Making kernel bypass practical for the cloud with Junction

Joshua Fried, Gohar Irfan Chaudhry, Enrique Saurez, Esha Choukse, Íñigo Goiri, Sameh Elnikety, Rodrigo Fonseca, and Adam Belay. Making kernel bypass practical for the cloud with Junction. InNSDI, 2024

2024

-

[19]

Mem- ory matters: Load-time deduplication for unikernels

Gaulthier Gain, Benoît Knott, Cyril Soldani, and Laurent Mathy. Mem- ory matters: Load-time deduplication for unikernels. InProceedings of the 2025 ACM Symposium on Cloud Computing, SoCC ’25, 2026

2025

-

[20]

Run Cloud Virtual Machines Securely and Effi- ciently.https://www.cloudhypervisor.org/, 2026

Cloud Hypervisor. Run Cloud Virtual Machines Securely and Effi- ciently.https://www.cloudhypervisor.org/, 2026

2026

-

[21]

Serverless cold starts and where to find them

Artjom Joosen, Ahmed Hassan, Martin Asenov, Rajkarn Singh, Luke Darlow, Jianfeng Wang, Qiwen Deng, and Adam Barker. Serverless cold starts and where to find them. InProceedings of the Twentieth European Conference on Computer Systems, EuroSys ’25, 2025

2025

-

[22]

Linefs: Efficient smartnic offload of a distributed file system with pipeline parallelism

Jongyul Kim, Insu Jang, Waleed Reda, Jaeseong Im, Marco Canini, De- jan Kostić, Youngjin Kwon, Simon Peter, and Emmett Witchel. Linefs: Efficient smartnic offload of a distributed file system with pipeline parallelism. In28th Symposium on Operating Systems Principles, SOSP ’21, 2021

2021

-

[23]

sel4: formal verification of an os kernel

Gerwin Klein, Kevin Elphinstone, Gernot Heiser, June Andronick, David Cock, Philip Derrin, Dhammika Elkaduwe, Kai Engelhardt, Rafal Kolanski, Michael Norrish, Thomas Sewell, Harvey Tuch, and Simon Winwood. sel4: formal verification of an os kernel. InProceedings of the ACM SIGOPS 22nd Symposium on Operating Systems Principles, SOSP ’09, 2009

2009

-

[24]

Spectre attacks: Exploit- ing speculative execution

Paul Kocher, Jann Horn, Anders Fogh, , Daniel Genkin, Daniel Gruss, Werner Haas, Mike Hamburg, Moritz Lipp, Stefan Mangard, Thomas Prescher, Michael Schwarz, and Yuval Yarom. Spectre attacks: Exploit- ing speculative execution. In40th IEEE Symposium on Security and Privacy, S&P’19, 2019

2019

-

[25]

Pronghorn: Effective checkpoint orchestration for serverless hot-starts

Sumer Kohli, Shreyas Kharbanda, Rodrigo Bruno, Joao Carreira, and Pedro Fonseca. Pronghorn: Effective checkpoint orchestration for serverless hot-starts. InProceedings of the Nineteenth European Con- ference on Computer Systems, EuroSys ’24, 2024

2024

-

[26]

Leonid Kondrashov, Lazar Cvetković, Hancheng Wang, Boxi Zhou, and Dmitrii Ustiugov. Melding the serverless control plane with the conventional cluster manager for speed and resource efficiency.https: //arxiv.org/abs/2505.24551, 2026

-

[27]

ufork: Sup- porting posix fork within a single-address-space os

John Alistair Kressel, Hugo Lefeuvre, and Pierre Olivier. ufork: Sup- porting posix fork within a single-address-space os. InProceedings of the ACM SIGOPS 31st Symposium on Operating Systems Principles, SOSP ’25, 2025

2025

-

[28]

Unlocking true elasticity for the cloud-native era with dandelion

Tom Kuchler, Pinghe Li, Yazhuo Zhang, Lazar Cvetković, Boris Gora- nov, Tobias Stocker, Leon Thomm, Simone Kalbermatter, Tim Notter, Andrea Lattuada, and Ana Klimovic. Unlocking true elasticity for the cloud-native era with dandelion. InProceedings of the ACM SIGOPS 31st Symposium on Operating Systems Principles, SOSP ’25, 2025

2025

-

[29]

Unikraft: Fast, specialized unikernels the easy way

Simon Kuenzer, Vlad-Andrei Bădoiu, Hugo Lefeuvre, Sharan San- thanam, Alexander Jung, Gaulthier Gain, Cyril Soldani, Costin Lupu, Ştefan Teodorescu, Costi Răducanu, et al. Unikraft: Fast, specialized unikernels the easy way. InEuroSys, 2021

2021

-

[30]

Squeezy: Rapid vm memory reclama- tion for serverless functions

Orestis Lagkas Nikolos, Clhoe Alverti, Stratos Psomadakis, Georgios Goumas, and Nectarios Koziris. Squeezy: Rapid vm memory reclama- tion for serverless functions. InProceedings of the Twentyfirst European Conference on Computer Systems, EuroSys ’26, 2026

2026

-

[31]

Lorch, Oded Padon, and Bryan Parno

Andrea Lattuada, Travis Hance, Jay Bosamiya, Matthias Brun, Chanhee Cho, Hayley LeBlanc, Pranav Srinivasan, Reto Achermann, Tej Chajed, Chris Hawblitzel, Jon Howell, Jacob R. Lorch, Oded Padon, and Bryan Parno. Verus: A practical foundation for systems verification. In Proceedings of the ACM SIGOPS 30th Symposium on Operating Systems Principles, SOSP ’24, 2024

2024

-

[32]

Loupe: Driving the development of os compatibility layers

Hugo Lefeuvre, Gaulthier Gain, Vlad-Andrei Bădoiu, Daniel Dinca, Vlad-Radu Schiller, Costin Raiciu, Felipe Huici, and Pierre Olivier. Loupe: Driving the development of os compatibility layers. InProceed- ings of the 29th ACM International Conference on Architectural Support for Programming Languages and Operating Systems, Volume 1, ASPLOS 13 ’24, 2024

2024

-

[33]

Lightweight and holistic-scalable serverless secure container runtime for high- density deployment and high-concurrency startup.IEEE Transactions on Computers, 2025

Zijun Li, Chenyang Wu, Chuhao Xu, Quan Chen, Shuo Quan, Bin Zha, Qiang Wang, Weidong Han, Jie Wu, and Minyi Guo. Lightweight and holistic-scalable serverless secure container runtime for high- density deployment and high-concurrency startup.IEEE Transactions on Computers, 2025

2025

-

[34]

Improving ipc by kernel design

Jochen Liedtke. Improving ipc by kernel design. InProceedings of the Fourteenth ACM Symposium on Operating Systems Principles, SOSP ’93, 1993

1993

-

[35]

Meltdown: Reading kernel memory from user space

Moritz Lipp, Michael Schwarz, Daniel Gruss, Thomas Prescher, Werner Haas, Anders Fogh, Jann Horn, Stefan Mangard, Paul Kocher, Daniel Genkin, Yuval Yarom, and Mike Hamburg. Meltdown: Reading kernel memory from user space. In27th USENIX Security Symposium, USENIX Security 18, 2018

2018

-

[36]

Unikernels: library operating systems for the cloud

Anil Madhavapeddy, Richard Mortier, Charalampos Rotsos, David Scott, Balraj Singh, Thomas Gazagnaire, Steven Smith, Steven Hand, and Jon Crowcroft. Unikernels: library operating systems for the cloud. InProceedings of the Eighteenth International Conference on Ar- chitectural Support for Programming Languages and Operating Systems, ASPLOS ’13, 2013

2013

-

[37]

Entropy for Clones.https: //github.com/firecracker-microvm/firecracker/blob/main/docs/ snapshotting/random-for-clones.md, 2026

Firecracker Maintainers. Entropy for Clones.https: //github.com/firecracker-microvm/firecracker/blob/main/docs/ snapshotting/random-for-clones.md, 2026

2026

-

[38]

Introducing Hyperlight: Virtual machine-based security for functions at scale.https://opensource.microsoft.com/blog/2024/11/ 07/introducing-hyperlight/, 2024

Microsoft. Introducing Hyperlight: Virtual machine-based security for functions at scale.https://opensource.microsoft.com/blog/2024/11/ 07/introducing-hyperlight/, 2024

2024

-

[39]

Metteagle: costs and benefits of implementing containers on microkernels

Till Miemietz, Viktor Reusch, Matthias Hille, Lars Wrenger, Jana Eisoldt, Jan Klötzke, Max Kurze, Adam Lackorzynski, Michael Roitzsch, and Hermann Härtig. Metteagle: costs and benefits of implementing containers on microkernels. InProceedings of the 19th USENIX Con- ference on Operating Systems Design and Implementation, OSDI ’25, 2025

2025

-

[40]

Nightingale, Orion Hodson, Ross McIlroy, Chris Hawblitzel, and Galen Hunt

Edmund B. Nightingale, Orion Hodson, Ross McIlroy, Chris Hawblitzel, and Galen Hunt. Helios: heterogeneous multiprocessing with satel- lite kernels. InProceedings of the ACM SIGOPS 22nd Symposium on Operating Systems Principles, SOSP ’09, 2009

2009

-

[41]

Narappa, and K

Federico Parola, Sisu Qi, Akhilesh B. Narappa, and K. K. Ramakrishnan. Sure: Secure unikernels make serverless computing rapid and efficient. InProceedings of the 2024 ACM Symposium on Cloud Computing, SoCC ’24, 2024

2024

-

[42]

Inter-VM Shared Memory device.https://www.qemu.org/ docs/master/system/devices/ivshmem.html, 2026

QEMU. Inter-VM Shared Memory device.https://www.qemu.org/ docs/master/system/devices/ivshmem.html, 2026

2026

-

[43]

Dirigent: Lightweight serverless orchestration

Lazar Sahraei, Dmitrii Ustiugov, Mahdi Mohammadi Amiri, and An- toni Wolnikowski. Dirigent: Lightweight serverless orchestration. In Proceedings of the ACM SIGOPS 30th Symposium on Operating Systems Principles, SOSP, 2024

2024

-

[44]

Sartakov, Lluís Vilanova, Munir Geden, David Eyers, Takahiro Shinagawa, and Peter Pietzuch

Vasily A. Sartakov, Lluís Vilanova, Munir Geden, David Eyers, Takahiro Shinagawa, and Peter Pietzuch. ORC: Increasing cloud memory density via object reuse with capabilities. In17th USENIX Symposium on Operating Systems Design and Implementation, OSDI ’23, 2023

2023

-

[45]

Sartakov, Lluís Vilanova, and Peter Pietzuch

Vasily A. Sartakov, Lluís Vilanova, and Peter Pietzuch. Cubicleos: a library os with software componentisation for practical isolation. In Proceedings of the 26th ACM International Conference on Architectural Support for Programming Languages and Operating Systems, ASPLOS ’21, 2021

2021

-

[46]

Serverless in the wild: Character- izing and optimizing the serverless workload at a large cloud provider

Mohammad Shahrad, Rodrigo Fonseca, Íñigo Goiri, Gohar Chaudhry, Paul Batum, Jason Cooke, Eduardo Laureano, Colby Tresness, Mark Russinovich, and Ricardo Bianchini. Serverless in the wild: Character- izing and optimizing the serverless workload at a large cloud provider. InProceedings of the 2020 USENIX Annual Technical Conference, ATC ’20, 2020

2020

-

[47]

Legoos: a disseminated, distributed os for hardware resource disaggregation

Yizhou Shan, Yutong Huang, Yilun Chen, and Yiying Zhang. Legoos: a disseminated, distributed os for hardware resource disaggregation. InProceedings of the 13th USENIX Conference on Operating Systems Design and Implementation, OSDI’18, 2018

2018

-

[48]

Faasm: Lightweight isolation for efficient stateful serverless computing

Simon Shillaker and Peter Pietzuch. Faasm: Lightweight isolation for efficient stateful serverless computing. InUSENIX ATC, 2020

2020

-

[49]

Frans Kaashoek

Ariel Szekely, Adam Belay, Robert Morris, and M. Frans Kaashoek. Unifying serverless and microservice workloads with sigmaos. In Proceedings of the ACM SIGOPS 30th Symposium on Operating Systems Principles, SOSP ’24, 2024

2024

-

[50]

Benchmarking, analysis, and optimization of serverless function snapshots

Dmitrii Ustiugov, Plamen Petrov, Marios Katebzadeh, Michal Sherr, and Boris Grot. Benchmarking, analysis, and optimization of serverless function snapshots. InProceedings of the Twenty-Sixth International Conference on Architectural Support for Programming Languages and Operating Systems, ASPLOS ’21, 2021

2021

-

[51]

Duarte, Michael Sammler, Peter Druschel, and Deepak Garg

Anjo Vahldiek-Oberwagner, Eslam Elnikety, Nuno O. Duarte, Michael Sammler, Peter Druschel, and Deepak Garg. ERIM: Secure, efficient in- process isolation with protection keys (MPK). In28th USENIX Security Symposium, USENIX Security 19, 2019

2019

-

[52]

Slashing the disaggregation tax in heterogeneous data cen- ters with fractos

Lluís Vilanova, Lina Maudlej, Shai Bergman, Till Miemietz, Matthias Hille, Nils Asmussen, Michael Roitzsch, Hermann Härtig, and Mark Silberstein. Slashing the disaggregation tax in heterogeneous data cen- ters with fractos. InProceedings of the Seventeenth European Conference on Computer Systems, EuroSys ’22, 2022

2022

-

[53]

Peeking behind the curtains of serverless platforms

Liang Wang, Mengyuan Li, Yinqian Zhang, Thomas Ristenpart, and Michael Swift. Peeking behind the curtains of serverless platforms. In 2018 USENIX Annual Technical Conference, ATC’18, 2018

2018

-

[54]

Isolating functions at the Hardware Limit with Virtines

Nicholas C Wanninger, Joshua J Bowden, Kirtankumar Shetty, Ayush Garg, and Kyle C Hale. Isolating functions at the Hardware Limit with Virtines. InEuroSys, 2022

2022

-

[55]

WebAssembly System Interface.https://wasi.dev/, 2026

WASI. WebAssembly System Interface.https://wasi.dev/, 2026

2026

-

[56]

Security implications of memory deduplication in a virtualized environment

Jidong Xiao, Zhang Xu, Hai Huang, and Haining Wang. Security implications of memory deduplication in a virtualized environment. In2013 43rd Annual IEEE/IFIP International Conference on Dependable Systems and Networks, DSN ’13, 2013

2013

-

[57]

The true cost of containing: A gVisor case study

Ethan G Young, Pengfei Zhu, Tyler Caraza-Harter, Andrea C Arpaci- Dusseau, and Remzi H Arpaci-Dusseau. The true cost of containing: A gVisor case study. InHotCloud, 2019

2019

-

[58]

Navarro Leija, Ashlie Martinez, Jing Liu, Anna Korn- feld Simpson, Sujay Jayakar, Pedro Henrique Penna, Max Demoulin, Piali Choudhury, and Anirudh Badam

Irene Zhang, Amanda Raybuck, Pratyush Patel, Kirk Olynyk, Jacob Nelson, Omar S. Navarro Leija, Ashlie Martinez, Jing Liu, Anna Korn- feld Simpson, Sujay Jayakar, Pedro Henrique Penna, Max Demoulin, Piali Choudhury, and Anirudh Badam. The demikernel datapath os architecture for microsecond-scale datacenter systems. InProceedings of the ACM SIGOPS 28th Symp...

2021

-

[59]

Characteri- zation and reclamation of frozen garbage in managed faas workloads

Ziming Zhao, Mingyu Wu, Haibo Chen, and Binyu Zang. Characteri- zation and reclamation of frozen garbage in managed faas workloads. InProceedings of the Nineteenth European Conference on Computer Systems, EuroSys ’24, 2024. 14

2024

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.